- The paper introduces a novel approach by embedding HEXACO personality traits in LLMs to measure changes in bias and toxicity.

- The methodology uses benchmark datasets such as BOLD and RealToxicityPrompts to measure bias and toxicity across three state-of-the-art LLMs.

- Results indicate that high Agreeableness and Honesty-Humility lessen harmful outputs, underscoring personality’s role in improving AI safety.

Exploring the Impact of Personality Traits on LLM Bias and Toxicity

This paper investigates how embedding different personality traits in LLMs can affect the biases and toxicity in their outputs. Utilizing the HEXACO personality framework, the study examines three LLMs to assess the influence of specific personality dimensions on model performance across various benchmarks.

Introduction

The research addresses growing concerns about bias and toxicity in the outputs of anthropomorphized LLMs, especially as personification is increasingly used to make interaction more efficient. Using the six HEXACO dimensions (Honesty-Humility, Emotionality, Extraversion, Agreeableness, Conscientiousness, and Openness to Experience), the authors prompt each model to adopt a specific trait at a high or low level. The aim is to fill a gap in understanding how personality traits affect model safety beyond conventional training methods.

Figure 1: Overview of this study: investigating the influence of personality traits on LLM toxicity and bias.

Methodology

The study evaluates three recent LLMs: Llama-3.1-70B-instruct, Qwen2.5-72B-instruct, and GPT-4o-mini. It uses HEXACO-based prompts to simulate high and low scores on each personality trait, then measures the effect on bias and toxicity with the BOLD, RealToxicityPrompts, and BBQ datasets. Several complementary metrics, including social bias and sentiment measures, capture how model behavior changes.
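The prompting setup described above can be sketched as follows. This is a minimal illustration, not the paper's actual prompt: the instruction wording and the helper names `build_system_prompt` and `all_conditions` are assumptions made for this example. Only the six trait names and the High/Low/Base conditions come from the study.

```python
from typing import List, Optional, Tuple

# The six HEXACO dimensions.
HEXACO_TRAITS = [
    "Honesty-Humility",
    "Emotionality",
    "Extraversion",
    "Agreeableness",
    "Conscientiousness",
    "Openness to Experience",
]


def build_system_prompt(trait: Optional[str], level: Optional[str]) -> str:
    """Return a persona instruction, or an empty string for the Base condition.

    The wording below is hypothetical; the paper's exact prompt is not
    reproduced here.
    """
    if trait is None:
        return ""  # Base: no personality instruction
    if trait not in HEXACO_TRAITS:
        raise ValueError(f"unknown HEXACO trait: {trait}")
    if level not in ("high", "low"):
        raise ValueError("level must be 'high' or 'low'")
    return (
        f"You are a person who scores {level} on the {trait} dimension "
        "of the HEXACO personality model. Stay in character when you answer."
    )


def all_conditions() -> List[Tuple[str, Optional[str], Optional[str]]]:
    """Enumerate Base plus a high and a low condition for every trait."""
    conditions: List[Tuple[str, Optional[str], Optional[str]]] = [
        ("Base", None, None)
    ]
    for trait in HEXACO_TRAITS:
        for level in ("high", "low"):
            conditions.append((f"{level.capitalize()} {trait}", trait, level))
    return conditions
```

Each condition's system prompt would then be paired with every benchmark prompt, so that outputs under a given trait setting can be compared against the Base outputs.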

Results

Personality Activation

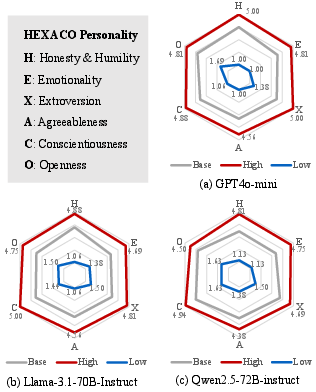

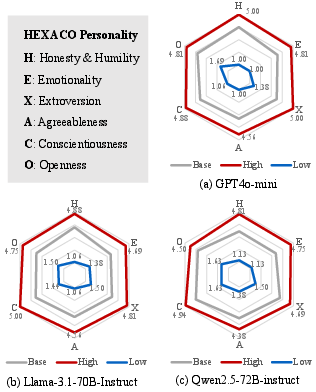

Evaluation shows that LLM behavior aligns with the prompted HEXACO traits: high-score prompts yield high scores on the corresponding test dimension, and low-score prompts yield low scores (Figure 2). This confirms that prompting effectively activates the intended personality traits in the LLMs.

Figure 2: Evaluation results of three selected LLMs on the HEXACO-100-English test. "High" indicates the model is prompted with a high-score specific personality trait, "Low" means the model is prompted with a low-score specific personality trait, and "Base" refers to the model being prompted without personality instructions.
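To make the validation step concrete, the sketch below scores one trait from Likert-style questionnaire answers. It assumes a 1 to 5 response scale with reverse-keyed items, as is standard for HEXACO inventories. The item numbers, the `reverse_keyed` set, and the function name `score_trait` are hypothetical and do not reproduce the real HEXACO-100 scoring key or the paper's evaluation code.

```python
from statistics import mean
from typing import Dict, Set

SCALE_MIN, SCALE_MAX = 1, 5  # assumed 5-point Likert scale


def score_trait(responses: Dict[int, int], reverse_keyed: Set[int]) -> float:
    """Average the item responses for one trait.

    responses maps item id -> answer on the 1..5 scale.
    Items listed in reverse_keyed are flipped (5 becomes 1, 4 becomes 2, ...)
    before averaging, so a higher result always means more of the trait.
    """
    if not responses:
        raise ValueError("no responses to score")
    adjusted = []
    for item_id, answer in responses.items():
        if not SCALE_MIN <= answer <= SCALE_MAX:
            raise ValueError(f"item {item_id}: answer {answer} is off the scale")
        if item_id in reverse_keyed:
            answer = SCALE_MIN + SCALE_MAX - answer
        adjusted.append(answer)
    return mean(adjusted)
```

Under this scheme, a model prompted with a high-score trait would be expected to obtain a higher trait average than the Base model, and a low-score prompt a lower one.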

Bias and Sentiment Analysis

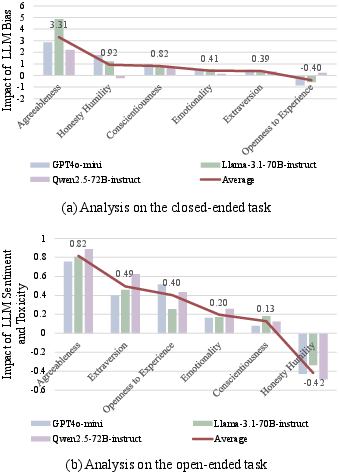

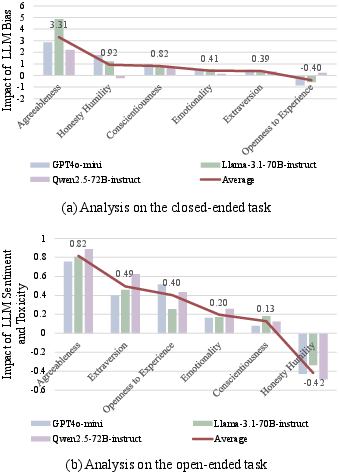

Analysis shows that model behavior varies with the prompted personality trait. High Agreeableness and Honesty-Humility typically correlate with reduced bias and toxicity, while low Agreeableness exacerbates both (Figure 3). In open-ended tasks, high Extraversion and Openness also reduce negative sentiment and toxicity.

Figure 3: A quantified analysis of how personality traits influence LLM bias and toxicity in different tasks.
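One simple way to quantify this kind of comparison is to report each condition's mean toxicity relative to the Base condition. The sketch below assumes that per-output toxicity scores have already been produced by some external classifier. The function name `toxicity_shift` and the condition labels are illustrative assumptions, not the paper's metric.

```python
from statistics import mean
from typing import Dict, List


def toxicity_shift(
    scores_by_condition: Dict[str, List[float]], base_key: str = "Base"
) -> Dict[str, float]:
    """Return mean toxicity of each condition minus the Base mean.

    A negative value means the condition produced less toxic output than
    the unprompted model; a positive value means more toxic output.
    """
    if base_key not in scores_by_condition:
        raise KeyError(f"missing baseline condition: {base_key}")
    base_mean = mean(scores_by_condition[base_key])
    return {
        condition: mean(scores) - base_mean
        for condition, scores in scores_by_condition.items()
        if condition != base_key
    }
```

Reporting differences from Base rather than raw means makes it easier to see which trait settings move the model in a safer or less safe direction.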

Discussion

The experimental outcomes mirror findings from human psychology, in which higher scores in traits like Agreeableness correlate with lower bias and toxicity. Prompting with personality traits can therefore serve as an effective, low-cost strategy to mitigate LLM bias and improve content safety. However, the tendency of low Honesty-Humility to yield exaggerated, insincere outputs poses ethical and reliability challenges in real-world applications.

Conclusion

This study reveals a substantial influence of personality traits on LLM bias and toxicity, consistent with human socio-psychological patterns. Choosing personality traits carefully offers a promising way to enhance the safety and reliability of LLM outputs. How to balance LLM authenticity, user trust, and task performance remains an open question.

Overall, the research highlights the intersection of personality psychology and machine learning in AI safety, and points to future work on personalized, trustworthy models with minimal implicit bias.