- The paper demonstrates that integrating Retrieval-Augmented Generation with a fine-tuned LLaMA 3.1 significantly improves smart contract vulnerability detection, achieving 100% recall.

- The methodology uses QLoRA, which fine-tunes low-rank adapters on top of a 4-bit quantized base model, making fine-tuning feasible in hardware-constrained environments.

- Experimental results show SmartLLM outperforms static analysis tools such as Mythril and Slither, which the paper attributes to drawing on ERC standards as retrieved context rather than on predefined patterns.

SmartLLM: Smart Contract Auditing using Custom Generative AI

Smart contracts, integral to blockchain and decentralized finance, pose significant security challenges because of vulnerabilities such as re-entrancy and access control flaws. The paper "SmartLLM: Smart Contract Auditing using Custom Generative AI" presents an approach that combines a fine-tuned version of LLaMA 3.1 with Retrieval-Augmented Generation (RAG) to improve vulnerability detection in smart contracts.

Methodology

Retrieval-Augmented Generation and LLaMA 3.1

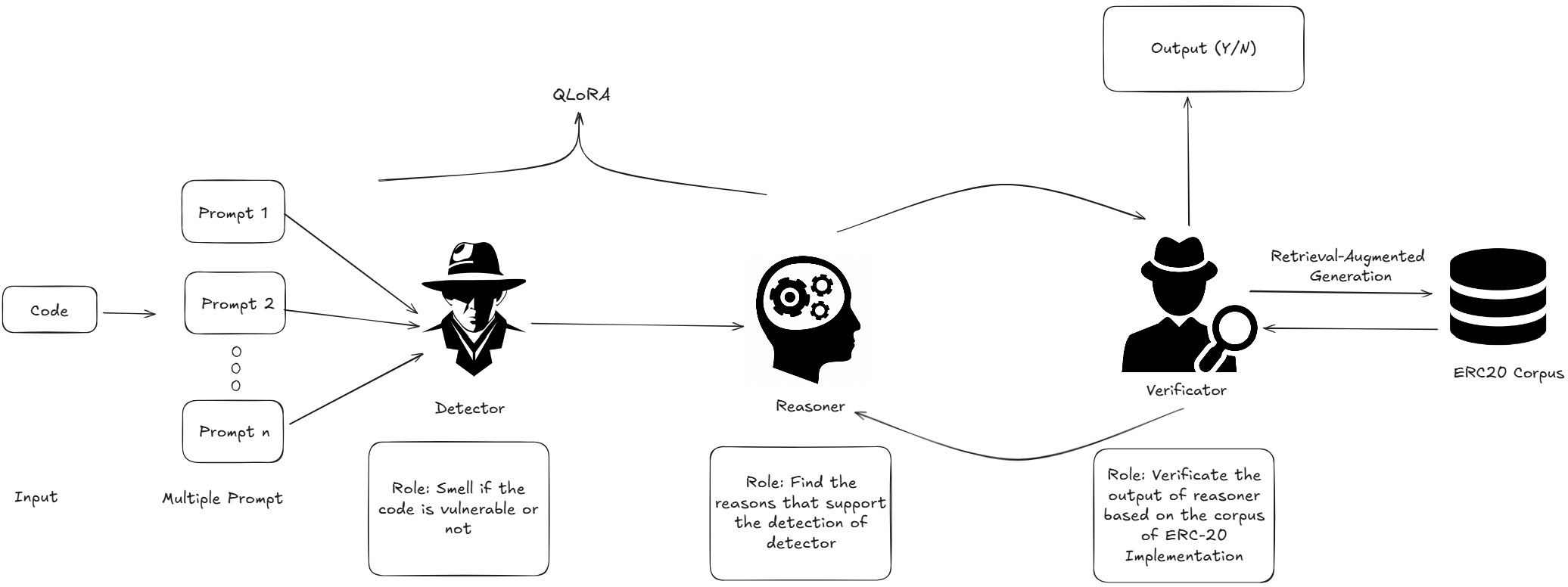

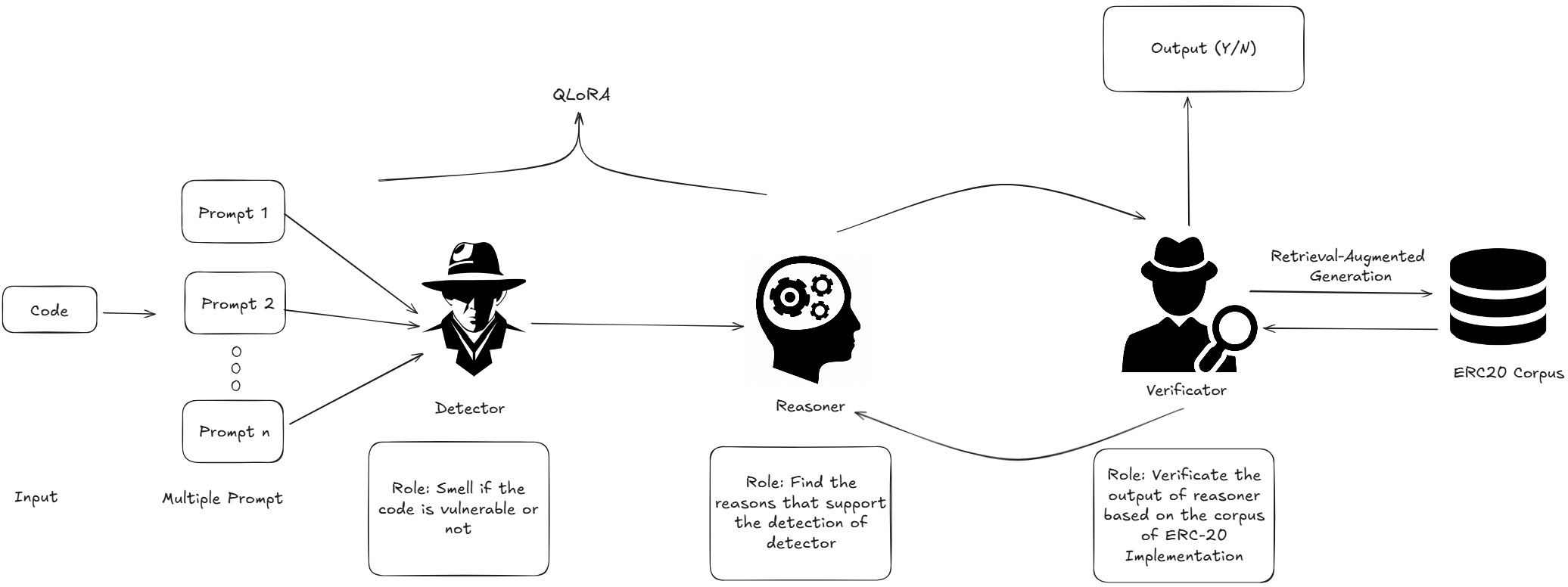

The core of the methodology is the integration of RAG with the fine-tuned LLaMA 3.1 model. RAG retrieves relevant passages from ERC standards and supplies them to the model as context, which lets it detect vulnerabilities more accurately than traditional static analysis tools and zero-shot LLMs.
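The retrieval step can be pictured with the minimal sketch below. It assumes a simple bag-of-words overlap score as a stand-in for whatever retriever the paper actually uses, and `ERC_SNIPPETS`, `retrieve`, and `build_prompt` are hypothetical names with a toy, abbreviated knowledge base; the point is only the shape of RAG: score the standard's passages against the contract, then prepend the best matches to the prompt.

```python
import re
from collections import Counter

# Hypothetical knowledge base: short paraphrases of ERC requirements.
ERC_SNIPPETS = {
    "ERC-20 transfer": "transfer must fire the Transfer event and should throw if the balance is insufficient",
    "ERC-20 approve": "approve sets the allowance of spender and must fire the Approval event",
    "ERC-721 safeTransferFrom": "safeTransferFrom must call onERC721Received on the recipient if it is a contract",
}


def tokenize(text: str) -> Counter:
    """Lowercased alphanumeric word counts (a crude stand-in for embeddings)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(contract_src: str, k: int = 2) -> list[str]:
    """Return the names of the k snippets sharing the most tokens with the contract."""
    query = tokenize(contract_src)

    def score(name: str) -> int:
        doc = tokenize(name + " " + ERC_SNIPPETS[name])
        return sum((query & doc).values())  # size of the multiset intersection

    return sorted(ERC_SNIPPETS, key=score, reverse=True)[:k]


def build_prompt(contract_src: str, k: int = 2) -> str:
    """Prepend the retrieved ERC context to the contract before querying the model."""
    context = "\n".join(f"- {n}: {ERC_SNIPPETS[n]}" for n in retrieve(contract_src, k))
    return f"Relevant ERC requirements:\n{context}\n\nAudit this contract:\n{contract_src}"
```

For an `approve` function that writes `allowance` and emits `Approval`, `retrieve(src, 1)` returns `["ERC-20 approve"]`, so the model sees that requirement next to the code. A production retriever would typically use dense embeddings over chunked ERC documents instead of raw token overlap.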

The pipeline is organized around three roles:

- Detector: Responsible for identifying vulnerable contracts.

- Reasoner: Explains the logic behind identified vulnerabilities.

- Verificator: Ensures the outputs align with ERC standards using RAG.
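A minimal sketch of how the three roles could be chained is shown below. It assumes a generic text-in/text-out model interface (`LLM`), hypothetical prompt wording, and that the Reasoner and Verificator run only when the Detector flags a contract; none of these details come from the paper. `stub_llm` is a hypothetical stand-in so the flow can be exercised without a real model.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical interface: any function that maps a prompt string to a reply.
LLM = Callable[[str], str]


@dataclass
class AuditResult:
    vulnerable: bool
    reasoning: str
    verified: Optional[bool]  # None when the Verificator was not run


def _yes(reply: str) -> bool:
    """Interpret a free-text reply as a YES/NO answer."""
    return reply.strip().upper().startswith("YES")


def audit(contract_src: str, llm: LLM, erc_context: str) -> AuditResult:
    # Detector: decide whether the contract is vulnerable at all.
    if not _yes(llm(f"DETECT: Is this contract vulnerable? Answer YES or NO.\n{contract_src}")):
        return AuditResult(vulnerable=False, reasoning="", verified=None)
    # Reasoner: explain the logic behind the flagged vulnerability.
    reasoning = llm(f"REASON: Explain the vulnerability in this contract.\n{contract_src}")
    # Verificator: check the explanation against retrieved ERC requirements.
    verdict = llm(
        f"VERIFY: Given these ERC requirements:\n{erc_context}\n"
        f"Is this explanation consistent with them? Answer YES or NO.\n{reasoning}"
    )
    return AuditResult(vulnerable=True, reasoning=reasoning, verified=_yes(verdict))


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for the fine-tuned model, for demonstration only."""
    if prompt.startswith("DETECT"):
        # Naive heuristic: a low-level call suggests possible re-entrancy.
        return "YES" if ".call{" in prompt else "NO"
    if prompt.startswith("REASON"):
        return "External call happens before the balance is zeroed (re-entrancy)."
    return "YES"
```

Keeping the roles as separate calls makes each stage's output inspectable on its own, which is what lets the Verificator check the Reasoner's explanation rather than the raw contract.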

Data Collection and Preprocessing

Data was collected from the Etherscan platform: 300 smart contracts, split evenly between vulnerable and non-vulnerable samples (150 each). The contracts were preprocessed to remove irrelevant components and normalize formatting, then tokenized with the LLaMA tokenizer to prepare them for fine-tuning.
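The paper does not spell out which components were removed, so the sketch below assumes that "irrelevant components" means comments and blank lines, and that normalizing formatting means trimming and collapsing whitespace. `strip_comments` and `normalize` are hypothetical helpers. The tokenization step is omitted; with Hugging Face Transformers it would typically go through `AutoTokenizer.from_pretrained` on the chosen LLaMA 3.1 checkpoint.

```python
import re


def strip_comments(src: str) -> str:
    """Remove Solidity block (/* ... */) and line (// ...) comments.

    Caveat: this naive regex also strips '//' inside string literals (e.g. URLs).
    """
    src = re.sub(r"/\*.*?\*/", "", src, flags=re.DOTALL)
    return re.sub(r"//[^\n]*", "", src)


def normalize(src: str) -> str:
    """Drop comments and blank lines, trim each line, collapse runs of spaces/tabs."""
    lines = (re.sub(r"[ \t]+", " ", ln.strip()) for ln in strip_comments(src).splitlines())
    return "\n".join(ln for ln in lines if ln)
```

Removing comments also shortens inputs, which matters given the token limits discussed later.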

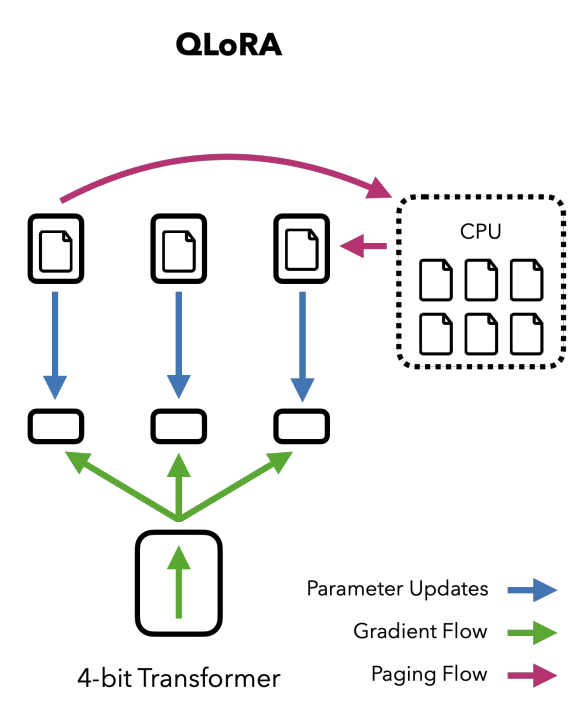

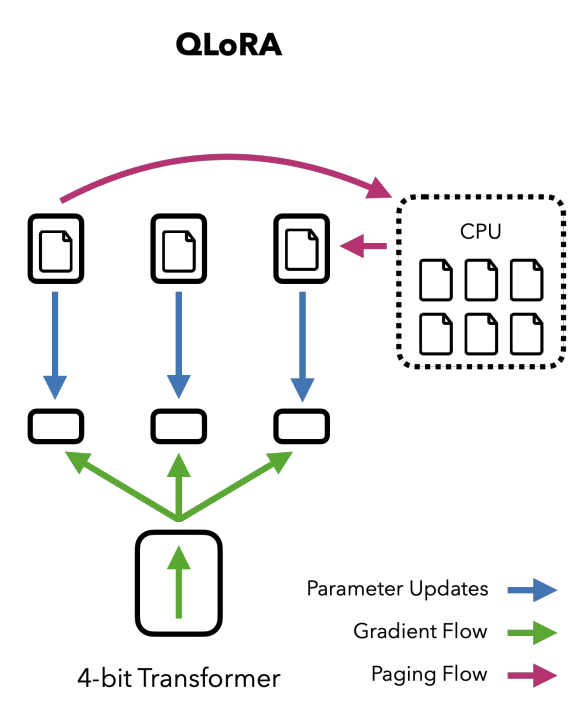

QLoRA for Efficient Finetuning

The paper employs QLoRA (Quantized Low-Rank Adaptation) to fine-tune LLaMA 3.1 efficiently. QLoRA keeps the base model frozen in 4-bit precision and trains only small low-rank adapter matrices, which cuts memory requirements enough to fine-tune in hardware-constrained environments without significant performance loss.

Figure 1: How QLoRA quantizes the model to 4-bit precision and uses paged optimizers to handle memory spikes.
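The two ideas QLoRA combines can be illustrated with the simplified sketch below. It swaps in plain absmax INT4 block quantization for QLoRA's actual 4-bit NormalFloat (NF4) data type and omits double quantization and paged optimizers; the function names and matrix sizes are hypothetical and do not describe the paper's configuration.

```python
def quantize_block(block: list[float]) -> tuple[list[int], float]:
    """Map floats to signed 4-bit integer levels in [-7, 7] using the block's absmax."""
    scale = max(abs(v) for v in block) or 1.0  # avoid division by zero for all-zero blocks
    return [round(v / scale * 7) for v in block], scale


def dequantize_block(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats; the error is at most scale / 14 per entry."""
    return [v * scale / 7 for v in q]


def matvec(m: list[list[float]], x: list[float]) -> list[float]:
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def qlora_forward(w_q, scales, a, b, x, alpha, rank):
    """y = dequant(W) x + (alpha / rank) * B (A x); W stays frozen in 4-bit form.

    Here each row of W is treated as one quantization block; only A and B are trained.
    """
    w = [dequantize_block(row, s) for row, s in zip(w_q, scales)]
    base = matvec(w, x)
    delta = matvec(b, matvec(a, x))
    return [y0 + (alpha / rank) * d for y0, d in zip(base, delta)]


def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA adds B (d_out x rank) and A (rank x d_in) per adapted matrix."""
    return rank * (d_out + d_in)


def weight_bytes(n_params: int, bits: int) -> float:
    """Storage for n_params weights at the given bit width (ignores scale overhead)."""
    return n_params * bits / 8
```

For a hypothetical 4096 x 4096 projection, fp16 weights take 32 MiB versus 8 MiB at 4 bits, and a rank-16 adapter adds 131,072 trainable parameters, about 0.8% of the 16.8M frozen weights. Because LoRA initializes `B` to zeros, the adapted layer starts out identical to the quantized base layer.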

Experimental Setup and Results

Evaluation Metrics

Performance was evaluated using accuracy, recall, precision, and F1 score. The model achieved a perfect recall of 100%, meaning it flagged every vulnerable contract in the test set, along with an accuracy of 70.0% and an F1 score of 76.9%. Because recall is perfect, all of its errors are false positives: safe contracts incorrectly flagged as vulnerable.
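The reported figures can be checked against each other with the sketch below. The test-set size and precision are not given in this summary, so the example assumes a hypothetical balanced test set of 20 contracts (10 vulnerable, 10 safe). Under that assumption, 100% recall means 10 true positives and 0 false negatives, and 70% accuracy then forces 4 true negatives and 6 false positives; any balanced split gives the same ratios.

```python
def metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Standard binary-classification metrics from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical balanced test set: 10 vulnerable, 10 safe contracts.
m = metrics(tp=10, fp=6, tn=4, fn=0)
print({k: round(v, 3) for k, v in m.items()})
# → {'accuracy': 0.7, 'precision': 0.625, 'recall': 1.0, 'f1': 0.769}
```

Under this assumption the implied precision is 62.5%, which reproduces the reported 76.9% F1 and is consistent with the paper naming precision as the main target for improvement.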

Figure 2: Workflow diagram of SmartLLM, illustrating the roles of Detector, Reasoner, and Verificator in vulnerability detection using Retrieval-Augmented Generation.

Comparative Analysis

SmartLLM outperformed both state-of-the-art static analysis tools (Mythril and Slither) and zero-shot prompting of general-purpose models (ChatGPT-3.5 and GPT-4). The static tools were limited in recall and precision because they match predefined patterns, whereas SmartLLM draws on contextual knowledge retrieved from ERC documentation.

Discussion

The paper identifies several opportunities to improve SmartLLM, such as raising precision by training on more diverse datasets and incorporating dynamic execution scenarios. Key challenges include token limits when processing long contracts and the cost of deploying the models, given their high computational demands.
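One common workaround for the token-limit problem, not something the paper reports using, is to split a long contract into overlapping windows that each fit the model's budget. The sketch below assumes whitespace-split words as a stand-in for LLaMA tokens (real token counts will differ) and uses a hypothetical `chunk_tokens` helper.

```python
def chunk_tokens(tokens: list[str], max_len: int, overlap: int) -> list[list[str]]:
    """Split tokens into windows of at most max_len, each sharing `overlap` tokens with the previous one."""
    if not 0 <= overlap < max_len:
        raise ValueError("overlap must be in [0, max_len)")
    step = max_len - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + max_len])
        if start + max_len >= len(tokens):  # last window already reaches the end
            break
    return chunks


def chunk_contract(src: str, max_len: int = 512, overlap: int = 64) -> list[str]:
    """Whitespace tokenization stands in for the LLaMA tokenizer (an assumption)."""
    return [" ".join(c) for c in chunk_tokens(src.split(), max_len, overlap)]
```

Chunking has a cost: a vulnerability such as re-entrancy can span a function and the state it modifies, which may land in different windows. Overlap reduces but does not eliminate this, which is one reason long contracts remain a challenge.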

Conclusion

SmartLLM demonstrates a substantial advancement in smart contract vulnerability detection, offering higher accuracy and recall than existing tools. The integration of domain-specific knowledge with LLaMA 3.1 and RAG bridges gaps left by traditional auditing tools, ultimately supporting the secure deployment of decentralized applications. Future work is directed at incorporating more complex vulnerabilities, broadening dataset coverage, and optimizing precision without sacrificing recall.