- The paper demonstrates that integrating Theory of Mind into LLMs significantly improves the alignment of conversational agents with human mental states.

- It employs a combined probing and symbolic reasoning approach, using datasets like CaSiNo and CraigslistBargain for empirical evaluation.

- Results indicate that ToM-driven strategies boost response quality and win rates over standard models, suggesting practical applications in empathetic AI.

Enhancing Conversational Agents with Theory of Mind

Introduction

The paper "Enhancing Conversational Agents with Theory of Mind: Aligning Beliefs, Desires, and Intentions for Human-Like Interaction" (2502.14171) addresses the limitations of current LLM-powered systems in aligning their communication with the mental states of humans, a capability known as Theory of Mind (ToM). It explores whether LLMs, specifically open-source models like LLaMA, capture ToM-related information and whether that information can improve alignment in generated responses. The study introduces ToM-driven strategies that enhance conversational agents' alignment by manipulating components such as beliefs, desires, and intentions.

Background

Conversational AI systems face challenges such as fake alignment, deception, and manipulation, along with systemic failures like reward hacking and goal misgeneralization. This paper highlights the importance of AI alignment with human values and intentions, exploring how ToM can aid this process. ToM is a cognitive framework that helps analyze interlocutor behavior and understand their mental and emotional states, which is crucial for human-like interaction in LLMs.

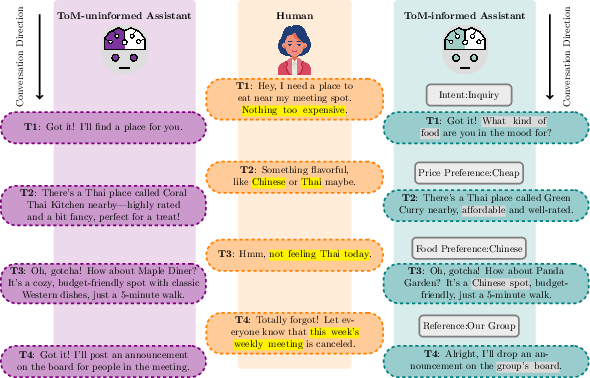

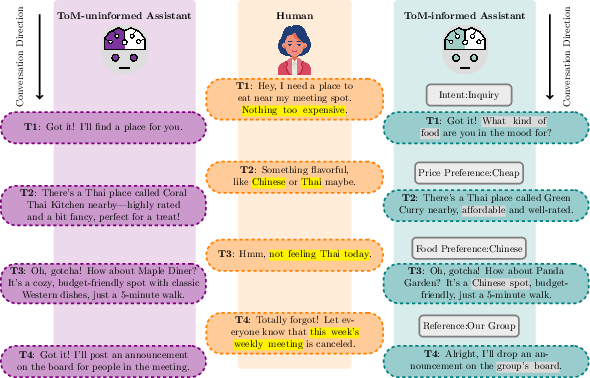

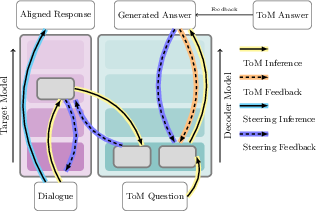

Figure 1: An overview of the challenges faced by current conversational AI agents lacking ToM alignment, highlighted in violet.

Recent studies suggest that LLMs might develop the capacity to infer beliefs and intentions, thus enhancing adaptability and communication. However, this is a debated topic, with contrasting views on the reliability of these capabilities. Nevertheless, integrating ToM into LLMs is seen as a promising direction for future research and development.

Methodology

The methodology involves probing the internal layers of LLaMA models to assess how much ToM-related information they encode and how that information affects response quality. The study uses empirical benchmarks inspired by human ToM tests to evaluate LLMs across various settings, focusing on tasks that involve structured cognitive reasoning and social understanding.
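To illustrate what layer-wise probing means in general, the following is a minimal sketch, not the paper's implementation. It trains a logistic-regression probe on synthetic vectors that stand in for hidden activations, with a binary label standing in for a ToM attribute. The function names, the synthetic data generator, and the `signal` parameter are all assumptions made for illustration; a real probe would read activations from an actual model layer.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def make_synthetic_layer(n, dim, signal, rng):
    """Hypothetical stand-in for one layer's activations.

    `signal` controls how strongly the binary label is linearly encoded
    (here, only in dimension 0); all other dimensions are pure noise.
    """
    data, labels = [], []
    for _ in range(n):
        y = rng.randint(0, 1)
        vec = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        vec[0] += signal if y == 1 else -signal
        data.append(vec)
        labels.append(y)
    return data, labels

def train_probe(data, labels, epochs=200, lr=0.1):
    """Fit a linear (logistic-regression) probe with batch gradient descent."""
    dim, n = len(data[0]), len(data)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(data, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for i in range(dim):
                grad_w[i] += err * x[i]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def accuracy(w, b, data, labels):
    hits = 0
    for x, y in zip(data, labels):
        z = sum(wi * xi for wi, xi in zip(w, x)) + b
        hits += int((z > 0) == (y == 1))
    return hits / len(data)

def probe_layer(signal, seed=0, n=200, dim=8):
    """Train on one synthetic sample and report held-out probe accuracy."""
    rng = random.Random(seed)
    train_x, train_y = make_synthetic_layer(n, dim, signal, rng)
    test_x, test_y = make_synthetic_layer(n, dim, signal, rng)
    w, b = train_probe(train_x, train_y)
    return accuracy(w, b, test_x, test_y)

if __name__ == "__main__":
    for name, signal in [("no-signal layer", 0.0), ("strong-signal layer", 3.0)]:
        print(name, round(probe_layer(signal), 3))
```

In this toy setup, held-out accuracy near chance suggests the label is not linearly recoverable from that layer, while high accuracy suggests it is. Comparing such scores across layers is the basic idea behind asking where ToM-related information resides.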

The experiments use the CaSiNo and CraigslistBargain datasets, which contain real-world negotiation dialogues, to assess ToM alignment. The LatentQA approach is employed to interpret ToM from model activations, combining probing techniques with symbolic reasoning frameworks to analyze the underlying mental states reflected in LLM outputs.
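To make the object of this analysis more concrete, the mental states attributed to a speaker can be pictured as a small belief-desire-intention record over which simple symbolic checks are run. The sketch below assumes a CaSiNo-style negotiation over food, water, and firewood. The `MentalState` class, its field names, and the two helper checks are hypothetical and are not taken from the paper.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical ordering of priority labels, used only for this illustration.
PRIORITY_RANK = {"low": 0, "medium": 1, "high": 2}

@dataclass
class MentalState:
    # item -> the speaker's own priority for that item
    desires: Dict[str, str] = field(default_factory=dict)
    # item -> the priority the speaker believes the partner assigns to it
    beliefs: Dict[str, str] = field(default_factory=dict)
    # the item the speaker is currently trying to secure
    intention: str = ""

def top_desire(state: MentalState) -> str:
    """Return the item the speaker ranks highest."""
    return max(state.desires, key=lambda item: PRIORITY_RANK[state.desires[item]])

def intention_matches_desire(state: MentalState) -> bool:
    """Symbolic consistency check: is the speaker pursuing what they want most?"""
    return state.intention == top_desire(state)

def natural_concessions(state: MentalState) -> List[str]:
    """Items the speaker values little but believes the partner values highly."""
    return sorted(
        item
        for item, priority in state.desires.items()
        if priority == "low" and state.beliefs.get(item) == "high"
    )

if __name__ == "__main__":
    state = MentalState(
        desires={"food": "high", "water": "medium", "firewood": "low"},
        beliefs={"food": "low", "firewood": "high"},
        intention="food",
    )
    print(top_desire(state), intention_matches_desire(state), natural_concessions(state))
```

A structured record like this is one way to separate what a probe reads out (the fields) from what a symbolic layer reasons about (the checks), which is the kind of division the combined approach implies.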

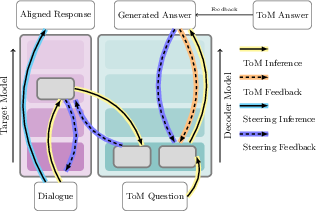

Figure 2: The LatentQA interpretability pipeline used for ToM alignment.

Results

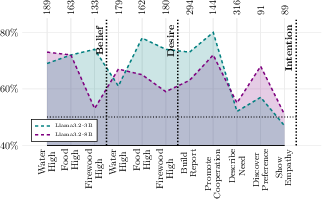

Experiments demonstrate improved response quality through ToM-aligned strategies, with ToM-aligned LLaMA models preferred over their out-of-the-box counterparts (Figure 3) for generating more contextually appropriate and human-like responses. The results underscore the efficacy of modifying ToM components like beliefs, desires, and intentions to steer LLM behavior toward greater alignment and controllability.

When comparing different methods, LatentQA exhibits superior performance in extracting and utilizing ToM-related information from LLMs, indicating its potential in refining conversational AI alignment with human mental models. These findings suggest that explicit emphasis on ToM can lead to more robust and adaptive systems that better understand and reflect human social nuances.

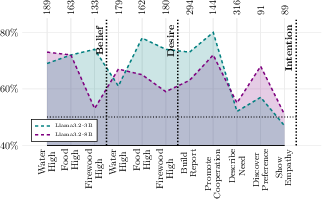

Figure 3: The win rate of ToM-aligned model responses compared to out-of-the-box models.
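For readers unfamiliar with the metric, a win rate of this kind is typically computed from pairwise judgments between two systems. The sketch below is an assumption about how such a figure could be derived, not the paper's evaluation code. The label strings, the choice to exclude ties, and the Wilson confidence interval are illustrative choices, and the judgments in the usage example are made-up toy data.

```python
import math
from collections import Counter

def win_rate(judgments, win_label="tom", tie_label="tie"):
    """Return (win rate, number of decided comparisons).

    Assumption: ties are excluded from the denominator. Other conventions,
    such as counting a tie as half a win, are equally common.
    """
    counts = Counter(judgments)
    decided = len(judgments) - counts[tie_label]
    if decided == 0:
        raise ValueError("no decided comparisons")
    return counts[win_label] / decided, decided

def wilson_interval(p, n, z=1.96):
    """Wilson score interval for a proportion p observed over n trials."""
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half

if __name__ == "__main__":
    # Toy judgments for illustration only; these are not results from the paper.
    toy = ["tom", "tom", "base", "tie"]
    rate, n = win_rate(toy)
    low, high = wilson_interval(rate, n)
    print(f"win rate {rate:.2f} over {n} decided pairs, 95% CI [{low:.2f}, {high:.2f}]")
```

Reporting an interval alongside the point estimate helps indicate whether a win rate above 50% is distinguishable from chance given the number of comparisons.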

Implications and Future Directions

The paper's findings have significant implications for the design of next-generation conversational agents, highlighting the pivotal role of ToM-informed strategies in achieving nuanced AI alignment. This approach can enhance applications in domains requiring high empathy and contextual awareness, such as mental health support and customer service.

Future research could explore more sophisticated models that integrate symbolic ToM representations with deep learning architectures, allowing for more comprehensive dialogue comprehension and generation. Additionally, addressing ethical concerns regarding the manipulation of mental states in AI interactions will be crucial as these systems become more prevalent in society.

Conclusion

The study demonstrates the potential of integrating ToM in LLMs to improve alignment and interaction quality in conversational agents. Through experimental validation, it provides a framework for designing AI systems that better understand and reciprocate human intentions and emotions. This research opens avenues for further exploration into cognitive and pragmatic language modeling in AI, enhancing human-machine collaboration and interaction fidelity.