- The paper introduces a risk framework partitioning AI tasks into sub-events, using baseline and AI-modified distributions to capture cumulative risk.

- It employs statistical methods such as Markov chains, copulas, and Bayesian updates to model dependencies and account for extreme outcomes.

- A logistics simulation benchmarks AI versus non-AI processes, providing quantifiable insights into operational risk metrics.

Statistical Scenario Modelling and Lookalike Distributions for Multi-Variate AI Risk

The paper presents a framework for assessing AI risk in multi-stage scenarios, focusing on the impact of AI components integrated into existing systems. It introduces scenario modelling techniques and lookalike distributions to objectively evaluate cumulative risk metrics when AI components substitute or augment traditional processes.

Overview and Contributions

The paper addresses gaps in current AI safety protocols by introducing a statistically grounded method for evaluating AI risk across interdependent systems. The main contributions include:

- Scenario Modelling: The paper proposes partitioning AI-related tasks into sub-events, each modelled as a random variable drawn from a baseline distribution. When AI is integrated into a sub-event, its baseline distribution is replaced by an AI-modified one, so the effect on risk metrics can be assessed module by module.

- Statistical Methods: The paper employs Markov chains and copulas to model dependencies among components, facilitating the analysis of risk propagation across stages.

- Data Scarcity Solutions: It suggests using lookalike distributions derived from analogous processes to approximate AI-specific impacts until sufficient empirical AI data becomes available.

- Benchmarking AI Integration: A framework for comparing AI and non-AI processes is provided, allowing practitioners to discern differences in probability distributions, total loss, mean performance, and catastrophic events.

- Example Application: The paper illustrates its framework via a logistics simulation, demonstrating how AI risk can be empirically assessed.

Implementation Strategies

Scenario Partitioning

Breaking down processes into sequential sub-events E1,E2,…,En allows detailed analysis of AI components. Each is linked to a distribution, such as Gamma or Weibull, characterizing the event’s cost, time, or failure frequency. When AI substitutes an event Ei, the distribution shifts to reflect performance changes and potential tail risks.
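The partitioning idea can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the three sub-events, their Gamma and Weibull parameters, the independence between sub-events, and the helper name `simulate_total` are all assumptions made for the example.

```python
import random

def simulate_total(samplers, n_draws=10_000, seed=0):
    """Draw n_draws realisations of the total cost, summing independent sub-event costs."""
    rng = random.Random(seed)
    return [sum(draw(rng) for draw in samplers) for _ in range(n_draws)]

# Hypothetical baseline marginals; all parameter values are illustrative only.
baseline = [
    lambda rng: rng.gammavariate(2.0, 1.5),    # E1: Gamma(shape=2, scale=1.5)
    lambda rng: rng.weibullvariate(3.0, 1.2),  # E2: Weibull(scale=3, shape=1.2)
    lambda rng: rng.gammavariate(4.0, 0.5),    # E3: Gamma(shape=4, scale=0.5)
]

# AI substitutes E2: swap in an assumed AI-modified distribution with a
# lower scale (better typical cost) but shape < 1 (heavier tail).
ai_modified = list(baseline)
ai_modified[1] = lambda rng: rng.weibullvariate(2.0, 0.9)

base_totals = simulate_total(baseline)
ai_totals = simulate_total(ai_modified)

print("baseline mean:", sum(base_totals) / len(base_totals))
print("AI-modified mean:", sum(ai_totals) / len(ai_totals))
```

Only the sampler for the substituted event changes between the two runs, which is what makes the comparison modular.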

Dependency Modelling

Copulas

Copulas link multivariate AI risk factors by capturing the dependence between them. By Sklar's theorem, a joint distribution can be decomposed into its marginals and a copula, such as a Gaussian or Archimedean copula, that describes the dependence structure. This allows interaction effects between AI-modified events to be assessed alongside each event's own distribution.
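As a rough sketch of how this decomposition is used in practice, the snippet below samples two dependent sub-event costs through a Gaussian copula: correlated normals are mapped to uniforms, and each uniform is pushed through the inverse CDF of its marginal. The correlation of 0.6, the Weibull and exponential marginals, and all function names are assumptions chosen for illustration.

```python
import math
import random
from statistics import NormalDist

def gaussian_copula_pairs(rho, n, seed=0):
    """Return n pairs of uniforms whose dependence follows a Gaussian copula with correlation rho."""
    rng = random.Random(seed)
    std_normal = NormalDist()
    eps = 1e-12  # keep uniforms strictly inside (0, 1) so inverse CDFs stay finite
    pairs = []
    for _ in range(n):
        z1 = rng.gauss(0.0, 1.0)
        z2 = rho * z1 + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)
        u1 = min(max(std_normal.cdf(z1), eps), 1.0 - eps)
        u2 = min(max(std_normal.cdf(z2), eps), 1.0 - eps)
        pairs.append((u1, u2))
    return pairs

def weibull_ppf(u, scale, shape):
    """Inverse CDF of a Weibull marginal."""
    return scale * (-math.log(1.0 - u)) ** (1.0 / shape)

def exponential_ppf(u, mean):
    """Inverse CDF of an exponential marginal."""
    return -mean * math.log(1.0 - u)

# Hypothetical marginals for two dependent sub-events.
uniforms = gaussian_copula_pairs(rho=0.6, n=5_000)
samples = [(weibull_ppf(u1, 3.0, 1.2), exponential_ppf(u2, 2.0)) for u1, u2 in uniforms]
```

The marginals can be swapped without touching the copula, and vice versa, which is the practical benefit of separating the two.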

Markov Chains

For discrete scenarios, Markov chains model transitions between states of operation, failure, and repair. AI affects these transitions by altering probabilities or rates in a transition matrix, reflecting stochastic shifts that might be induced by AI.
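A small example may help make this concrete. The sketch below compares the long-run share of time spent in each state under a baseline and an AI-modified transition matrix. The three states follow the text, but every transition probability is hypothetical, as is the assumption that AI lowers the failure rate while slowing the move from failure to repair.

```python
def stationary_distribution(P, n_iter=1_000):
    """Approximate the stationary distribution of a row-stochastic matrix P by power iteration."""
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(n_iter):
        pi = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
    return pi

STATES = ("operating", "failed", "repair")

# Hypothetical one-step transition probabilities (each row sums to 1).
P_baseline = [
    [0.95, 0.05, 0.00],  # operating -> operating / failed
    [0.00, 0.20, 0.80],  # failed    -> failed / repair
    [0.70, 0.00, 0.30],  # repair    -> operating / repair
]

# Assumed AI effect: fewer failures, but failures take longer to reach repair.
P_ai = [
    [0.98, 0.02, 0.00],
    [0.00, 0.50, 0.50],
    [0.70, 0.00, 0.30],
]

pi_baseline = stationary_distribution(P_baseline)
pi_ai = stationary_distribution(P_ai)

for name, base, ai in zip(STATES, pi_baseline, pi_ai):
    print(f"{name:10s} baseline={base:.4f} ai={ai:.4f}")
```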

Lookalike Distribution Adjustment

Tail Adjustments

Incorporating heavy-tailed distributions, such as Pareto or Generalized Pareto, into AI-modified event models allows extreme outliers to be handled. Combining a light-tailed body with a heavy-tailed component in a mixture model ensures that rare but impactful failures are appropriately represented.
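One way to picture such a mixture is sketched below: most draws come from a light-tailed Gamma body, and with a small probability the draw comes from a Generalized Pareto tail instead. The 1% tail weight, the threshold of 10, and the body and tail parameters are assumptions for illustration, not values from the paper.

```python
import random

def gpd_sample(rng, threshold, scale, shape):
    """Inverse-CDF draw from a Generalized Pareto distribution (requires shape != 0)."""
    u = rng.random()  # u lies in [0, 1), so 1 - u is never zero
    return threshold + scale / shape * ((1.0 - u) ** (-shape) - 1.0)

def mixture_sample(rng, p_tail, body, tail):
    """Draw from the tail component with probability p_tail, else from the body."""
    return tail(rng) if rng.random() < p_tail else body(rng)

# Hypothetical components: a Gamma body for routine outcomes and a GPD tail
# for rare severe failures starting at a cost of 10.
def body(rng):
    return rng.gammavariate(2.0, 1.0)

def tail(rng):
    return gpd_sample(rng, threshold=10.0, scale=5.0, shape=0.3)

rng = random.Random(0)
draws = [mixture_sample(rng, 0.01, body, tail) for _ in range(20_000)]

extreme_share = sum(d >= 10.0 for d in draws) / len(draws)
print("share of draws at or above the tail threshold:", extreme_share)
```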

Bayesian Updates

Using Bayesian inference techniques, the paper suggests ongoing updates to parameters of lookalike distributions based on incoming AI performance data, enabling dynamic risk assessment and improved fit with observed realities.
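The simplest instance of such an update is a conjugate one. The sketch below assumes the quantity of interest is the failure probability of an AI-substituted event, uses a Beta prior standing in for the lookalike distribution, and updates it with batches of observed failures. The prior, the batch counts, and the function names are hypothetical; the paper does not prescribe this particular model.

```python
def update_beta(alpha, beta, failures, trials):
    """Conjugate Beta-Binomial update: add failures to alpha and successes to beta."""
    return alpha + failures, beta + (trials - failures)

def beta_mean(alpha, beta):
    """Posterior mean of the failure probability."""
    return alpha / (alpha + beta)

# Hypothetical lookalike prior: roughly a 2% failure rate, worth about 100 trials.
prior = (2.0, 98.0)

# Hypothetical incoming AI performance data as (failures, trials) per batch.
batches = [(1, 50), (0, 50), (4, 100)]

posterior = prior
for failures, trials in batches:
    posterior = update_beta(*posterior, failures, trials)
    print(f"after {trials} more trials: mean failure rate = {beta_mean(*posterior):.4f}")
```

As more AI-specific data arrives, the observations outweigh the lookalike prior, so the estimate shifts from the analogous process toward what is actually observed.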

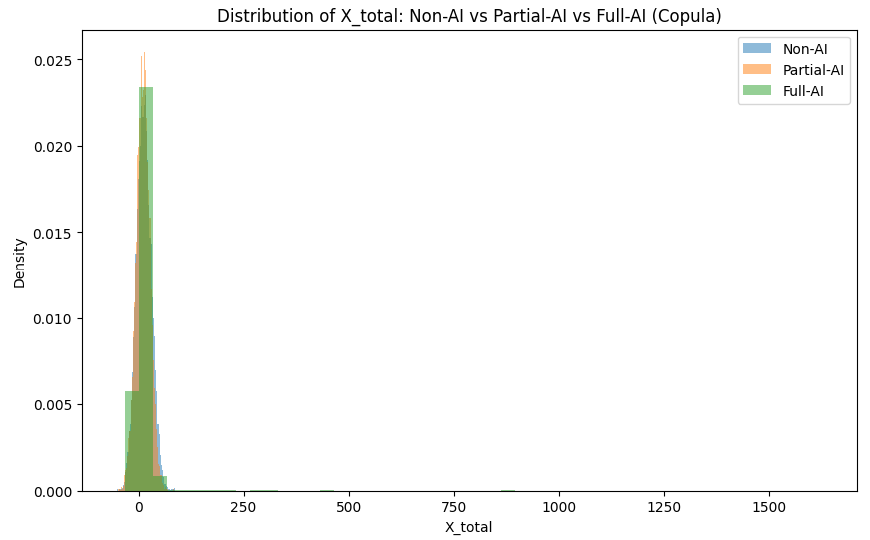

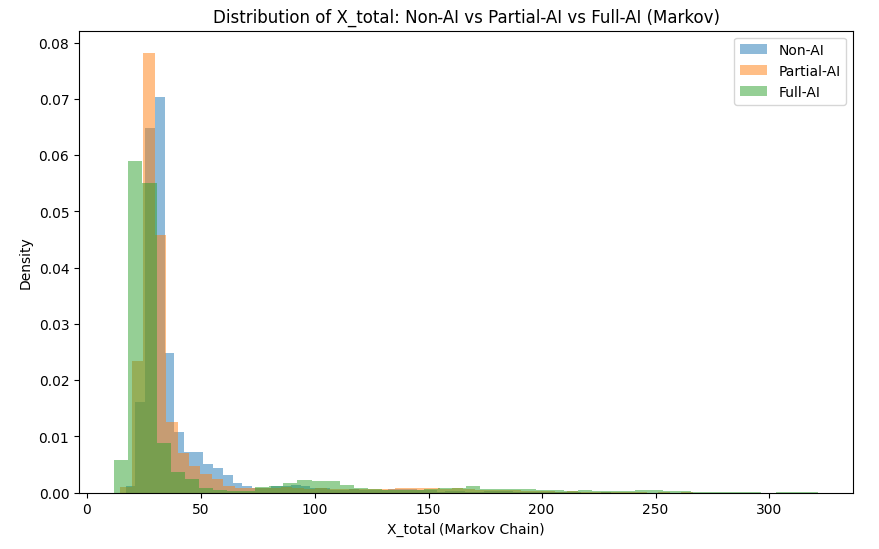

Sensitivity Analysis

Comparisons across scenarios—no AI, partial AI, and full AI—allow for sensitivity analysis that reveals the net effect of AI integration on the total risk profile. Monte Carlo simulations facilitate these assessments by generating empirical distributions from which risk metrics like Value at Risk (VaR) and Expected Shortfall (ES) can be computed.
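A minimal Monte Carlo sketch of this comparison follows. It assumes a three-step process with independent steps, a hypothetical manual cost distribution, and a hypothetical AI cost distribution that is cheaper on average but fails badly 1% of the time. VaR is taken as an empirical quantile and ES as the mean of losses at or above it, which is one common estimator among several.

```python
import math
import random

def value_at_risk(losses, level):
    """Empirical VaR: smallest observed loss with at least `level` of the sample at or below it."""
    ordered = sorted(losses)
    index = max(0, math.ceil(level * len(ordered)) - 1)
    return ordered[index]

def expected_shortfall(losses, level):
    """Empirical ES: mean of the losses at or above the VaR at the same level."""
    var = value_at_risk(losses, level)
    tail = [x for x in losses if x >= var]
    return sum(tail) / len(tail)

def manual_step(rng):
    # Hypothetical baseline cost of one step.
    return rng.gammavariate(2.0, 1.5)

def ai_step(rng):
    # Hypothetical AI-modified cost: cheaper typically, with a 1% heavy-tailed failure.
    if rng.random() < 0.01:
        return 8.0 * rng.paretovariate(2.5)
    return rng.gammavariate(2.0, 1.0)

def simulate(steps, n, seed):
    rng = random.Random(seed)
    return [sum(step(rng) for step in steps) for _ in range(n)]

scenarios = {
    "no_ai": [manual_step, manual_step, manual_step],
    "partial_ai": [ai_step, manual_step, manual_step],
    "full_ai": [ai_step, ai_step, ai_step],
}

report = {}
for name, steps in scenarios.items():
    losses = simulate(steps, n=20_000, seed=1)
    report[name] = (value_at_risk(losses, 0.99), expected_shortfall(losses, 0.99))
    print(f"{name:10s} VaR99={report[name][0]:.2f} ES99={report[name][1]:.2f}")
```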

Figure 1: Distribution of $X_{\mathrm{total}}$ under different AI scenarios, showcasing risk shifts with AI integration.

Example Scenario: Logistics Pipeline

Using a logistics pipeline as an example, the paper demonstrates scenario modelling across three stages: demand forecasting, warehouse picking, and last-mile delivery.

- Demand Forecasting utilizes AI-driven models that enhance average accuracy but may lead to rare severe misjudgments.

- Warehouse Picking transitions from manual processes to AI-driven systems, reducing time and error rates but introducing the risk of catastrophic events.

- Last-Mile Delivery shifts to autonomous vehicles or drones, expediting delivery yet posing new regulatory and navigation challenges.
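The three stages above can be wired into a toy simulation to show the trade-off between typical performance and catastrophic events. Everything numeric here is invented for illustration: the stage cost distributions, the 0.5% failure probability, the penalty distribution, and the catastrophe threshold of 25 are assumptions, and the paper's own simulation may be set up differently.

```python
import random
from dataclasses import dataclass
from typing import Callable

Sampler = Callable[[random.Random], float]

@dataclass(frozen=True)
class Stage:
    name: str
    baseline: Sampler
    with_ai: Sampler

def rare_failure(typical: Sampler, p_fail: float, penalty: Sampler) -> Sampler:
    """Build a sampler that returns a penalty draw with probability p_fail."""
    def draw(rng: random.Random) -> float:
        return penalty(rng) if rng.random() < p_fail else typical(rng)
    return draw

def severe(rng: random.Random) -> float:
    # Hypothetical cost of a rare severe failure (heavy-tailed, at least 20).
    return 20.0 * rng.paretovariate(2.5)

# Hypothetical stage models: AI cuts typical cost by 40% but adds rare failures.
PIPELINE = [
    Stage("demand_forecasting", lambda r: r.gammavariate(3.0, 1.0),
          rare_failure(lambda r: r.gammavariate(3.0, 0.6), 0.005, severe)),
    Stage("warehouse_picking", lambda r: r.gammavariate(4.0, 1.0),
          rare_failure(lambda r: r.gammavariate(4.0, 0.6), 0.005, severe)),
    Stage("last_mile_delivery", lambda r: r.gammavariate(5.0, 1.0),
          rare_failure(lambda r: r.gammavariate(5.0, 0.6), 0.005, severe)),
]

def run(pipeline, use_ai, n=20_000, seed=0):
    """Simulate total cost with AI enabled only for the stage names in use_ai."""
    rng = random.Random(seed)
    return [
        sum((s.with_ai if s.name in use_ai else s.baseline)(rng) for s in pipeline)
        for _ in range(n)
    ]

def summarise(totals, catastrophe=25.0):
    return {
        "mean": sum(totals) / len(totals),
        "p_catastrophe": sum(t > catastrophe for t in totals) / len(totals),
    }

no_ai = summarise(run(PIPELINE, use_ai=set()))
full_ai = summarise(run(PIPELINE, use_ai={s.name for s in PIPELINE}))
print("no AI:  ", no_ai)
print("full AI:", full_ai)
```

Under these assumed parameters the full-AI configuration has a lower mean cost but a higher probability of exceeding the catastrophe threshold, which is the pattern the stage descriptions suggest.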

Practical Implications

The framework offers quantifiable insights into AI's systemic risks, aiding operational decisions about AI adoption in workflows. It weighs typical performance improvements against potential catastrophic failures. Future work might explore computational methods for refining real-time AI failure models and adopt more advanced statistical or machine learning techniques to improve risk evaluation.

Conclusion

This paper bridges the gap between theoretical AI safety and practical risk assessment by demonstrating the application of well-established statistical methodologies to AI risks. It introduces scenario modelling as a tool for understanding multi-component systems affected by AI integration, presenting a pathway for ongoing refinement and validation of AI risk profiles as empirical data accumulates. This framework sets a foundational template for industry practitioners aiming to systematically integrate AI while managing emerging risks.