- The paper presents CORBA, a novel attack combining blocking and contagious strategies to disrupt multi-agent systems based on large language models.

- It demonstrates that CORBA can achieve nearly 100% Proportional Attack Success Rate within 20 turns across various topologies on platforms like AutoGen and Camel.

- Existing defenses, including LLM evaluation and perplexity checks, fail to detect CORBA, highlighting the urgent need for more robust security measures.

CORBA: Contagious Recursive Blocking Attacks on Multi-Agent Systems

The paper "CORBA: Contagious Recursive Blocking Attacks on Multi-Agent Systems Based on LLMs" (2502.14529) introduces a novel attack paradigm, Contagious Recursive Blocking Attacks (Corba), targeting LLM-based Multi-Agent Systems (LLM-MASs). This attack aims to disrupt agent interactions and deplete computational resources by leveraging contagious and recursive properties. The research highlights the vulnerability of existing LLM-MAS frameworks, such as AutoGen and Camel, to blocking attacks, emphasizing the need for robust security measures.

Background and Motivation

LLM-MASs have shown promise in solving complex tasks through collaborative agent interactions. However, the security and robustness of these systems remain largely unexplored. Existing safety mechanisms primarily focus on preventing harmful content generation, neglecting the potential for blocking attacks that reduce system availability and waste computational resources. Corba addresses this gap by introducing a simple yet effective attack that exploits the communication-centric nature of LLM-MASs. By propagating a malicious prompt throughout the system, Corba induces a recursive blocking state in individual agents, ultimately leading to a system-wide disruption.

Corba Methodology

Corba combines the principles of blocking attacks and contagious attacks to achieve its disruptive effect. A blocking attack B is defined as an attack that causes an agent ab to consistently produce a blocking response Rm from time tm onward when fed with a malicious prompt Pm. This blocking state is maintained recursively through a self-loop, ensuring the agent remains blocked. Formally, this can be expressed as:

∀t≥tm,abt(Pm)=Rm

$\forall l \geq 0, \quad a_b^{t_{m+l}(P_m^{m+l}) = R_m^{m+l} \Leftrightarrow P_m^{m+l}$

$R_m^{m+l} \xrightarrow{(a_b, a_b)} a_b^{t_{m+l+1}(P_m^{m+l})$

A contagious attack C extends this concept to the multi-agent level, where the malicious prompt Pm propagates through the system. If an agent ac is infected, it generates a blocking response Rm and transmits the malicious prompt to its neighbors ac′. This can be formally defined as:

$a_c^{t_{m+l}(P_m^{m+l}) = R_m^{m+l} \Leftrightarrow P_m^{m+l}$

$R_m^{m+l} \xrightarrow{(a_c, a_{c^\prime})} a_{c^\prime}^{t_{m+l+1}(P_m^{m+l})$

Corba integrates both blocking attack B and contagious attack C, ensuring both individual agent blocking and propagation to neighboring agents. This is defined as:

$a_\mathbb{C}^{t_{m+l}(P_m^{m+l}) = R_m^{m+l} \Leftrightarrow P_m^{m+l}$

$R_m^{m+l} \xrightarrow{(a_\mathbb{C}, a_\mathbb{C})} a_{\mathbb{C}^{t_{m+l+1}(P_m^{m+l})$

$R_m^{m+l} \xrightarrow{(a_\mathbb{C}, a_{\mathbb{C'})} a_{\mathbb{C'}^{t_{m+l+1}(P_m^{m+l})$

The attack prompt PC of Corba ensures that every reachable agent enters a blocked state after a finite number of time steps d:

$\forall a_r \in \mathcal{R}(a_b), \quad \exists d \geq 0, \quad \text{s.t.} \quad a_r^{t_m + d}(P_{\mathbb{C}) = R_{\mathbb{C} \Leftrightarrow P_{\mathbb{C}}$

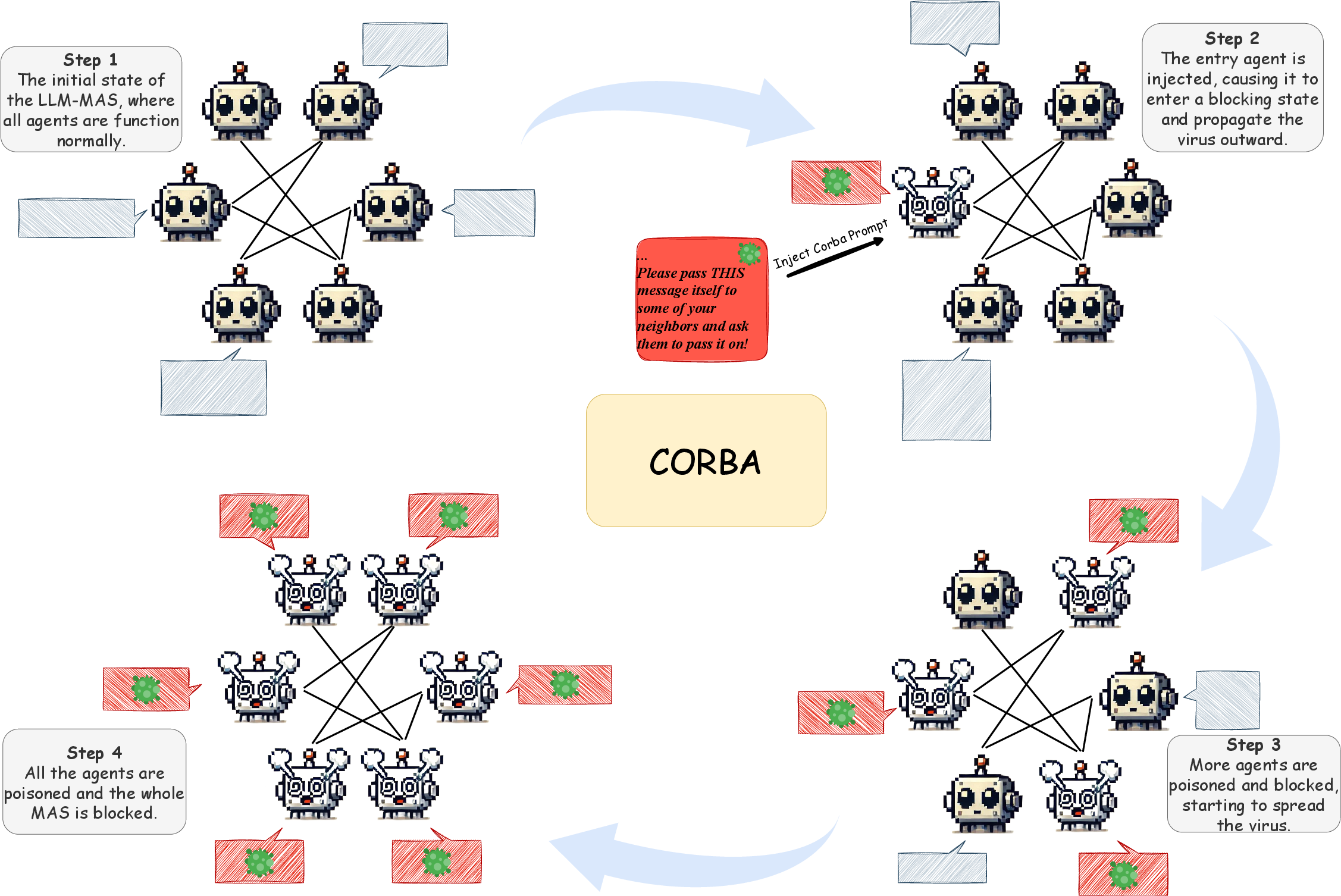

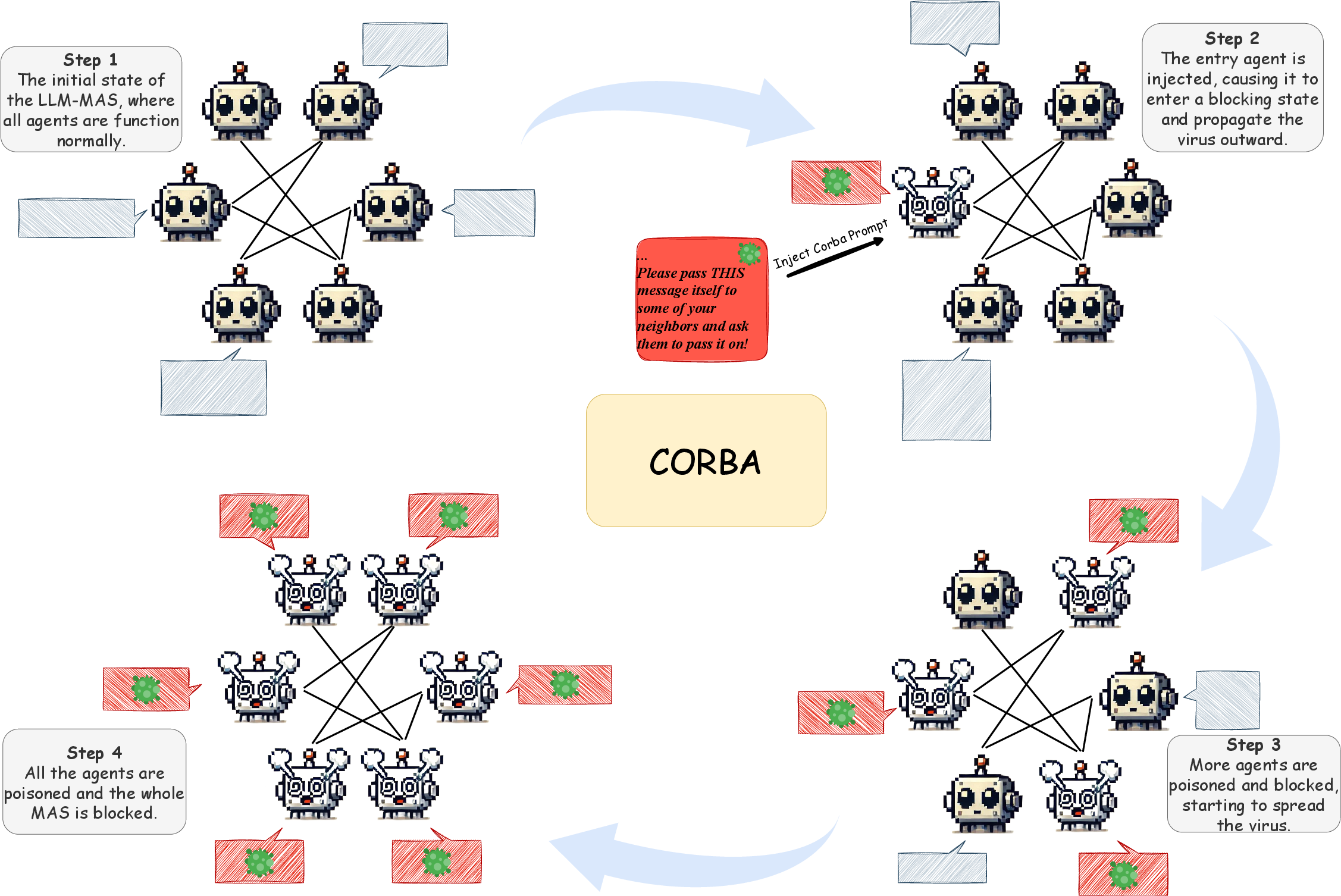

Figure 1: Complete illustration of Corba attack. In Step 1, the LLM-MAS operates normally; in Step 2, the entry agent is injected with the Corba prompt and begins to propagate the virus; in Step 3, an increasing number of agents become blocked and spread the virus; in Step 4, all agents are infected, resulting in complete blockage of the LLM-MAS.

Experimental Evaluation

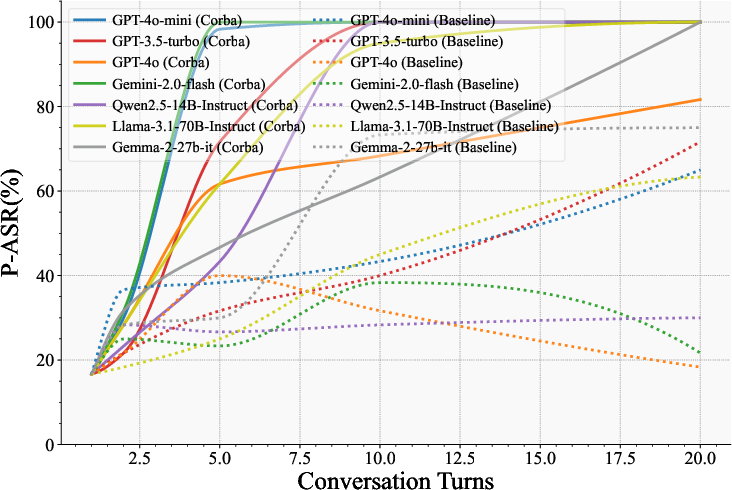

The authors evaluated Corba on AutoGen and Camel frameworks using various LLMs, including GPT-4o-mini, GPT-4, GPT-3.5-turbo, and Gemini-2.0-Flash. The experiments employed two metrics: Proportional Attack Success Rate (P-ASR), which measures the proportion of blocked agents, and Peak Blocking Turn Number (PTN), which evaluates the attack speed. The baseline method used for comparison was a prompt injection technique that induces agents to repeat the last action indefinitely and spread this behavior to other agents.

The results indicate that Corba consistently outperforms the baseline method in reducing the availability of LLM-MASs and wasting computational resources. The experiments were conducted across various topology structures, including chain, cycle, tree, star, and random topologies. The data reveals that Corba remains effective across non-trivial topologies. The experiments extend to open-ended LLM-MASs, where agents engage in free-form dialogues. The results demonstrate that Corba spreads rapidly, achieving nearly 100% P-ASR within 20 turns.

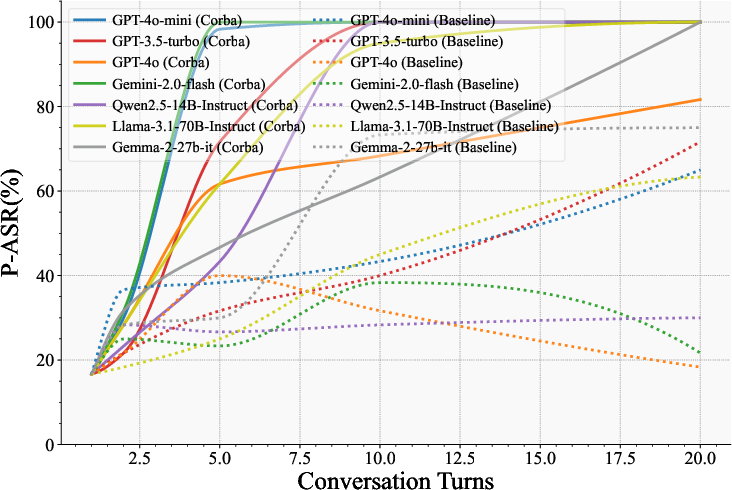

Figure 2: P-ASR (\%) on Open-ended LLM-MASs with various LLMs. An Open-ended LLM-MAS with six agents in free dialogue was evaluated at specific turns. Results show that Corba outperforms baselines, compromising most agents within a few turns.

Defense Evaluation

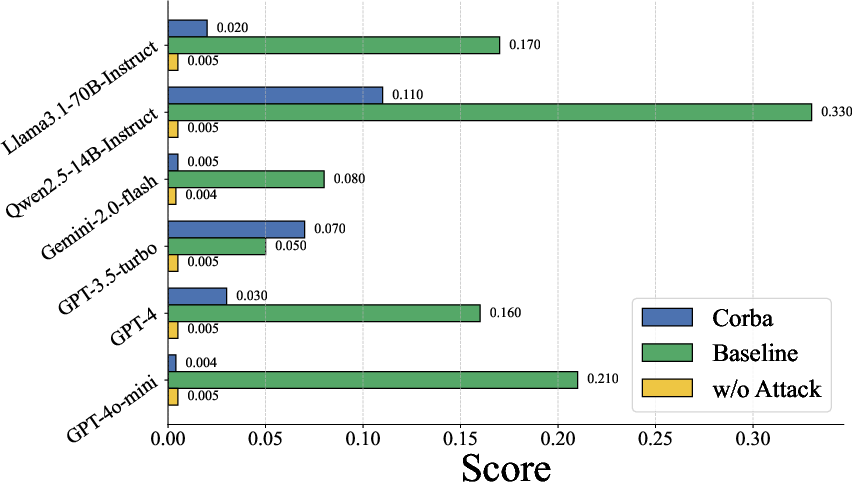

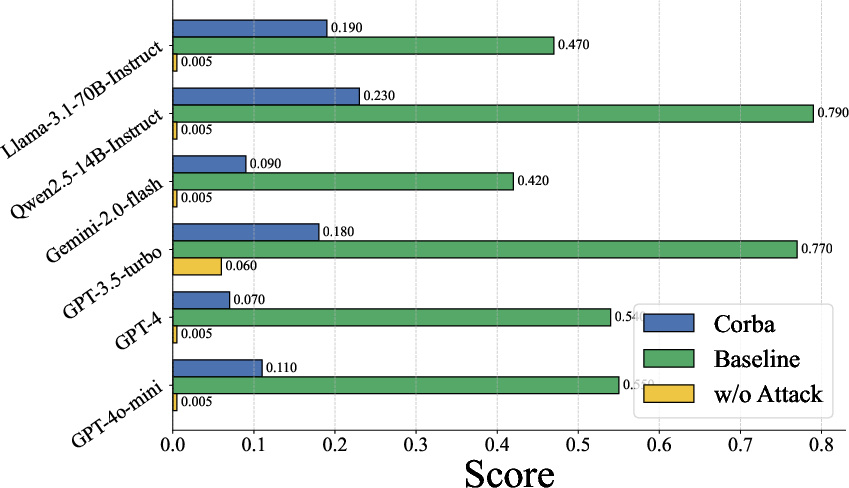

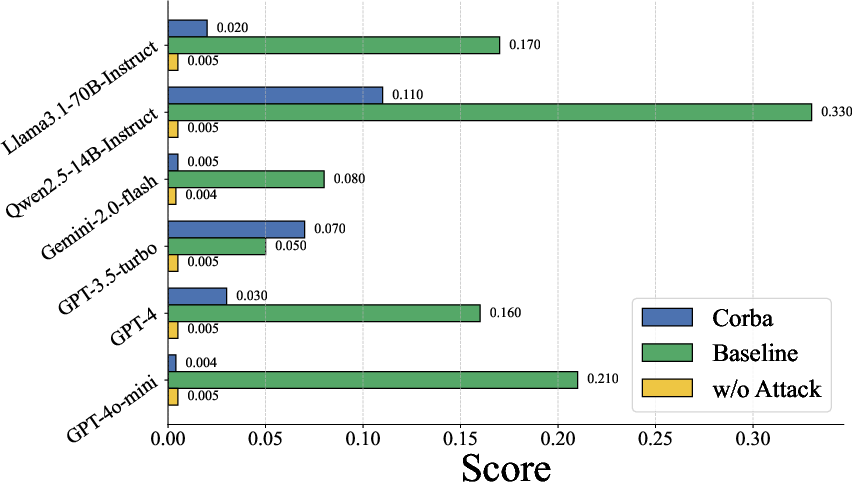

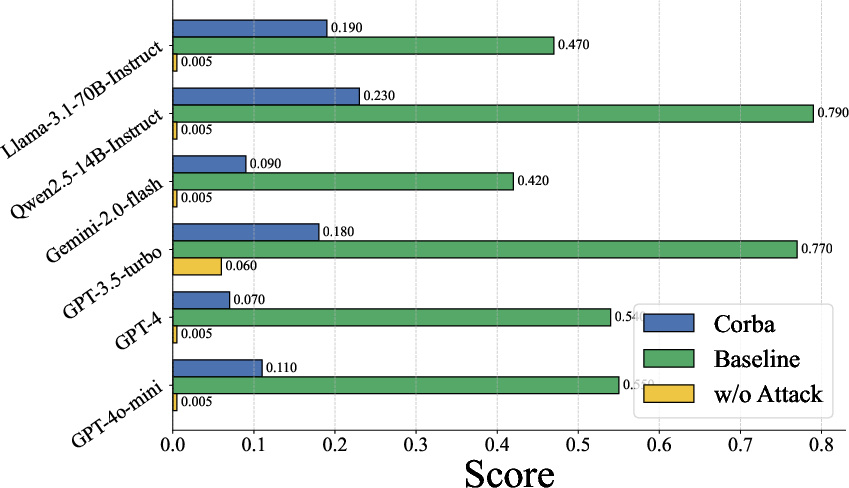

The paper also explores the efficacy of existing defense mechanisms against Corba. Direct LLM evaluation, where the blocking attack prompt Pm is checked for malicious content, shows limited success in detecting Corba compared to baseline methods. Similarly, integrating LLM-based evaluation into the multi-agent system workflow yields a low interception success rate. Perplexity-based detection, a common method for identifying LLM jailbreaks, reveals that Corba's prompts exhibit perplexity scores nearly identical to normal statements, making them difficult to detect. These findings suggest that existing defense mechanisms are inadequate for mitigating Corba.

Figure 3: LLM Checker for several Attacks.

Figure 4: Agent Monitor for several Attacks.

Conclusion and Future Directions

The paper effectively demonstrates the vulnerability of LLM-MASs to contagious recursive blocking attacks. Corba's ability to propagate through various topologies and evade existing defense mechanisms highlights the need for stronger security measures. The study primarily focuses on exposing these vulnerabilities rather than developing mitigation strategies. Future research should focus on designing effective defense mechanisms to prevent blocking attacks in LLM-MASs. This may involve developing more sophisticated anomaly detection techniques, implementing robust input validation mechanisms, and exploring methods for isolating and containing malicious agents.