- The paper introduces TutorLLM, a novel framework that combines KT with RAG to offer dynamic, personalized learning recommendations.

- The paper details a methodology integrating a Scraper model, a BERT-based KT model, and GPT-4 to reduce hallucinations and enhance content relevance.

- The paper's user study shows a 10% boost in satisfaction and a 5% improvement in academic performance, underscoring its potential in education.

TutorLLM: Customizing Learning Recommendations with Knowledge Tracing and Retrieval-Augmented Generation

Introduction

The paper "TutorLLM: Customizing Learning Recommendations with Knowledge Tracing and Retrieval-Augmented Generation" introduces the TutorLLM framework aimed at personalizing educational experiences through the integration of Knowledge Tracing (KT) and Retrieval-Augmented Generation (RAG) techniques with LLMs. This framework addresses several limitations inherent in current LLM-based educational systems such as ChatGPT, focusing primarily on personalization and accuracy in responses. By leveraging a combination of dynamic context retrieval and personalized learning state assessments, TutorLLM offers tailored educational interactions.

The integration of KT techniques provides insights into a student's learning trajectory, enabling the dynamic adjustment of learning recommendations based on individual progress. Complementing this, RAG techniques improve response accuracy by grounding the LLM's outputs in context-specific background knowledge, which mitigates the inaccuracies commonly referred to as "hallucinations".

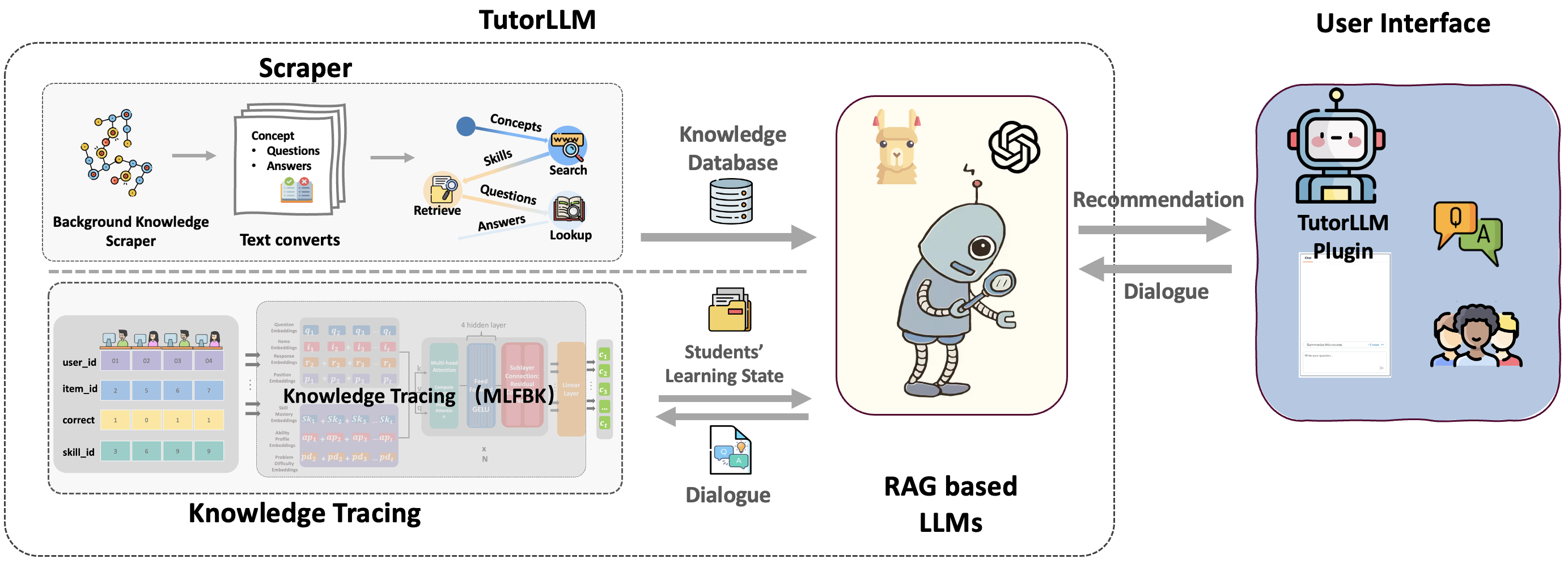

Figure 1: Overall architecture of TutorLLM.

The foundation of TutorLLM builds on advancements in educational recommender systems and the capabilities of LLMs. Traditional systems rely on KT methodologies such as Bayesian Knowledge Tracing (BKT) to track student progress and make informed recommendations, but they are limited in their ability to adapt dynamically to the content a student is currently studying. The paper addresses the linguistic limitations of these systems by incorporating the versatility of LLMs.
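To make the BKT baseline mentioned above concrete, here is a minimal sketch of the standard BKT update for a single skill. It is not taken from the paper: the class and function names and all parameter values are illustrative assumptions, and TutorLLM itself uses a BERT-based KT model rather than BKT.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BKTParams:
    """Standard four-parameter BKT model (values used here are illustrative)."""
    p_init: float   # P(L0): prior probability the skill is already mastered
    p_learn: float  # P(T): probability of acquiring the skill after an attempt
    p_slip: float   # P(S): probability of a wrong answer despite mastery
    p_guess: float  # P(G): probability of a right answer without mastery


def bkt_update(p_mastery: float, correct: bool, params: BKTParams) -> float:
    """Return the mastery estimate after observing one response."""
    if correct:
        num = p_mastery * (1 - params.p_slip)
        den = num + (1 - p_mastery) * params.p_guess
    else:
        num = p_mastery * params.p_slip
        den = num + (1 - p_mastery) * (1 - params.p_guess)
    posterior = num / den  # Bayes' rule given the observed response
    # Account for the chance of learning the skill during this attempt.
    return posterior + (1 - posterior) * params.p_learn


def trace(responses: list[bool], params: BKTParams) -> list[float]:
    """Run the update over a response sequence, starting from the prior."""
    p = params.p_init
    history = []
    for correct in responses:
        p = bkt_update(p, correct, params)
        history.append(p)
    return history


if __name__ == "__main__":
    params = BKTParams(p_init=0.3, p_learn=0.1, p_slip=0.1, p_guess=0.2)
    for step, p in enumerate(trace([True, False, True, True], params), start=1):
        print(f"attempt {step}: estimated mastery = {p:.3f}")
```

The estimate depends only on the correctness of past answers for one skill, which illustrates why such a tracker cannot by itself account for the content a student is viewing.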

Recent innovations in the field have highlighted the potential for deep-learning models to enhance educational outcomes through personalized recommendations. By integrating KT methods with the generative capabilities of LLMs, TutorLLM introduces a significant step forward, addressing content relevance and enhancing interaction dynamics.

Implementation of TutorLLM

TutorLLM is composed of three principal components:

- Scraper Model: This component dynamically scrapes contextual information from active online learning platforms, providing course-specific text inputs to the LLM, thereby enriching the background knowledge base. The model ensures content relevance by consistently updating the knowledge base with captions, subtitles, and other related text extracted from educational websites scanned during student interaction.

- Knowledge Tracing (KT) Model: Utilizing the Multi-Features with Latent Relations BERT Knowledge Tracing (MLFBK) model, this component predicts a student's aptitude, skill mastery, and next possible actions by analyzing previous interactions. The BERT-based framework allows for detailed sequence predictions, integrating Bayesian approaches to model skill mastery and problem difficulty.

- RAG Enhanced LLM: The LLM leverages GPT-4 to provide tailored responses and learning recommendations. By integrating insights from the KT model and dynamically retrieved background content from the Scraper, the model mitigates hallucinations and optimizes learning experiences. Students receive personalized recommendations based on their learning progress and predicted needs.
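The way the three components could fit together can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's implementation: the function names (`retrieve`, `build_prompt`), the bag-of-words cosine retrieval, and the prompt wording are all hypothetical, and the actual call to GPT-4 is deliberately left out.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase a string and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k scraped chunks most similar to the query.

    Stand-in for the retrieval step; a real system would likely use
    dense embeddings instead of word counts.
    """
    q = Counter(tokenize(query))
    ranked = sorted(
        chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True
    )
    return ranked[:k]


def build_prompt(
    question: str, chunks: list[str], mastery: dict[str, float], k: int = 2
) -> str:
    """Combine retrieved course text and KT estimates into one LLM prompt."""
    context = retrieve(question, chunks, k)
    weakest = min(mastery, key=mastery.get)  # skill with the lowest estimate
    lines = ["Answer using only the course context below.", "", "Course context:"]
    lines += [f"- {c}" for c in context]
    lines += ["", "Estimated skill mastery (from the KT model):"]
    lines += [f"- {skill}: {p:.2f}" for skill, p in sorted(mastery.items())]
    lines += ["", f"Recommend one next exercise targeting: {weakest}"]
    lines += ["", f"Student question: {question}"]
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical scraped course text and KT output, for illustration only.
    scraped = [
        "Recursion solves a problem by calling the same function on smaller inputs.",
        "A hash table maps keys to values using a hash function.",
    ]
    mastery = {"recursion": 0.35, "hashing": 0.80}
    print(build_prompt("How does recursion work on smaller inputs?", scraped, mastery, k=1))
```

The resulting string would be sent to the LLM as the prompt. Keeping retrieval and mastery estimates as separate inputs mirrors the division of labor between the Scraper and the KT model described above.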

Figure 2: User Interface of the TutorLLM.

User Study

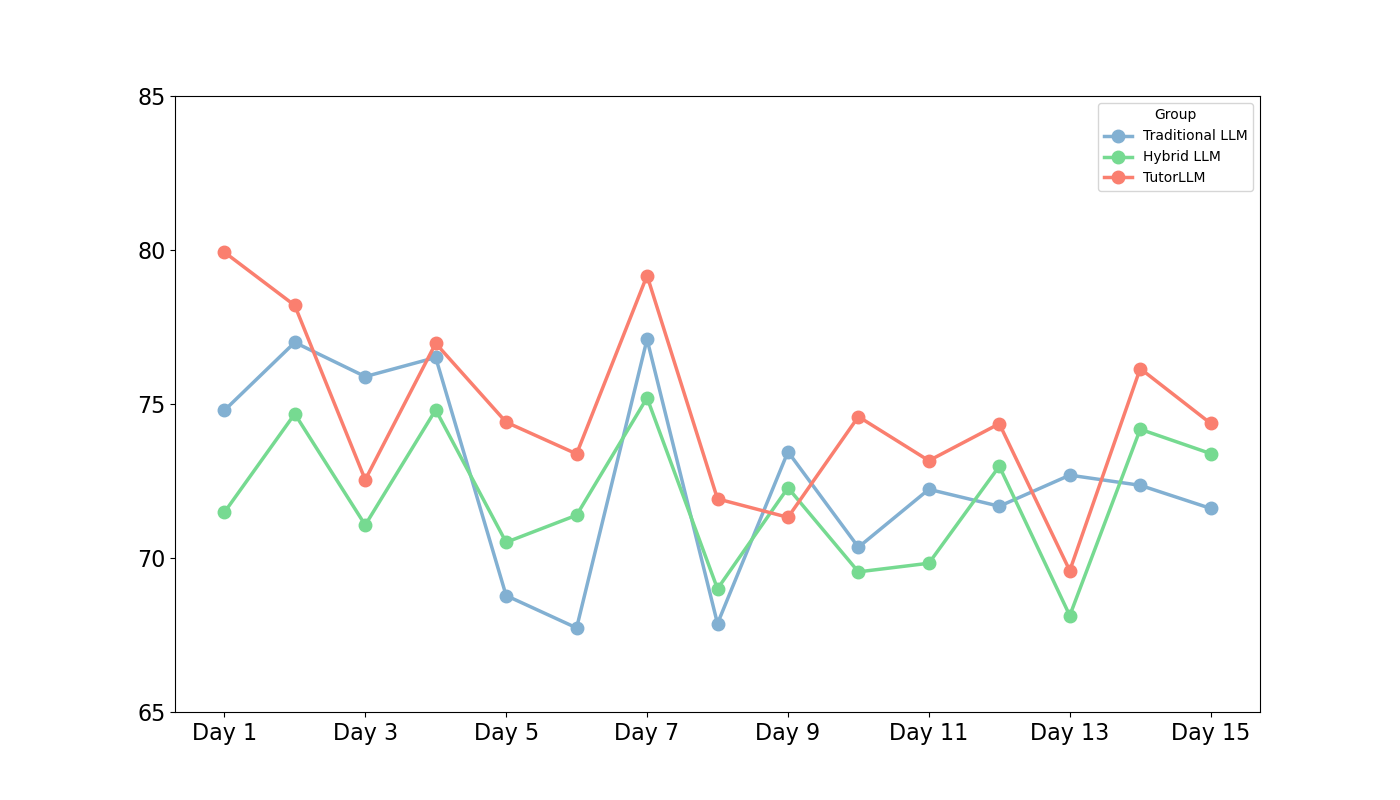

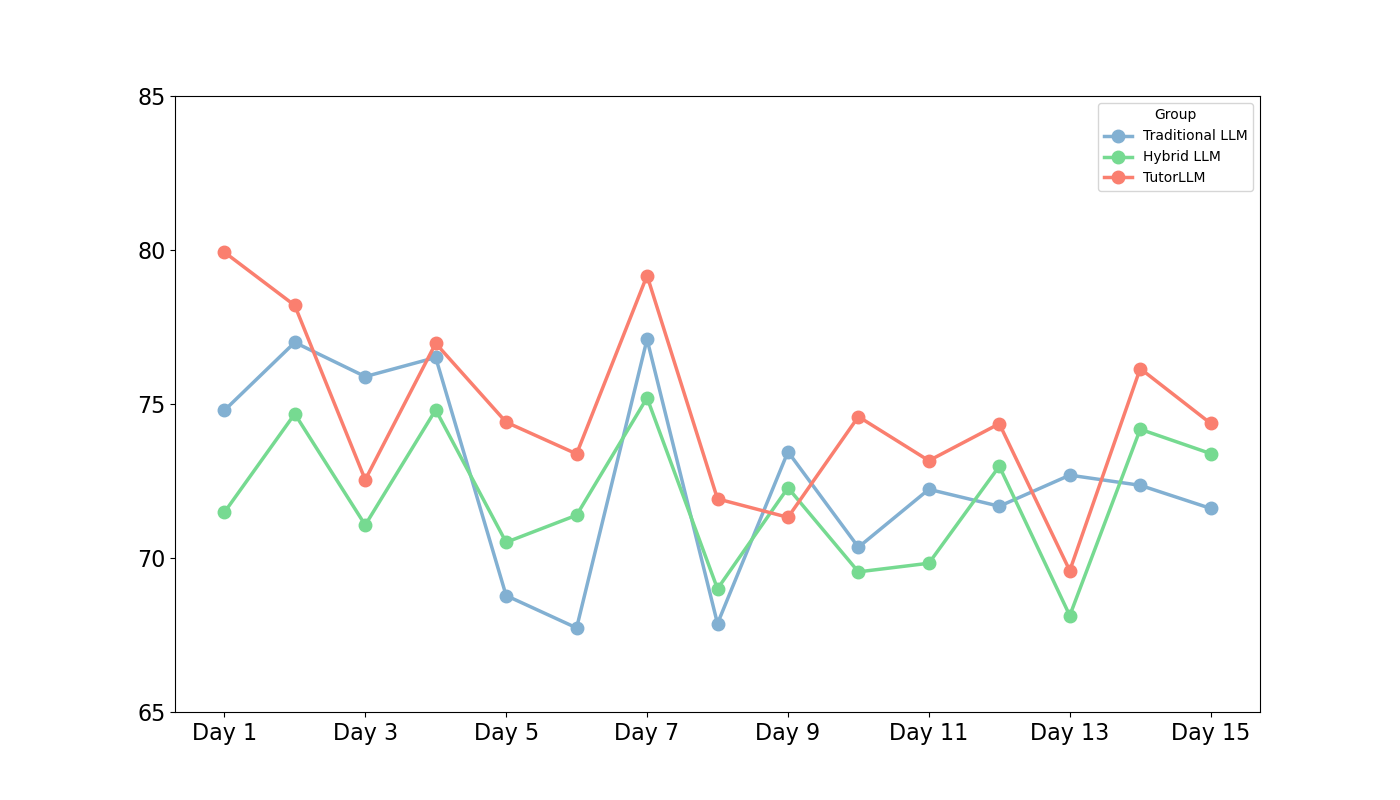

A controlled experiment involving 30 undergraduate students was conducted to evaluate TutorLLM's impact on learning efficacy. Students were divided into groups to compare outcomes between TutorLLM users and general LLM users. TutorLLM users reported greater satisfaction, as measured by the System Usability Scale (SUS), and achieved higher quiz scores. Relative to general LLM usage, TutorLLM showed a 10% improvement in user satisfaction and a 5% improvement in academic performance.

These findings point to TutorLLM's potential to enhance learning engagement and educational outcomes. However, the differences did not reach statistical significance, so the results are preliminary and warrant further research.
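For readers unfamiliar with the SUS, the standard scoring rule can be sketched as below. The function name `sus_score` is an assumption, and the example responses are made up for illustration; they are not data from the study.

```python
def sus_score(responses: list[int]) -> float:
    """Score one System Usability Scale questionnaire on a 0-100 scale.

    `responses` holds the ten item ratings in order, each from 1
    (strongly disagree) to 5 (strongly agree).
    """
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("SUS needs exactly ten ratings between 1 and 5")
    total = 0
    for item, rating in enumerate(responses, start=1):
        if item % 2 == 1:
            total += rating - 1   # odd items are positively worded
        else:
            total += 5 - rating   # even items are negatively worded
    return total * 2.5            # rescale the 0-40 sum to 0-100


if __name__ == "__main__":
    # Made-up questionnaire from one hypothetical participant.
    print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

Per-participant scores computed this way are typically averaged within each group before the groups are compared.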

Figure 3: Daily Mean Scores of User Performance Across Different Study Groups.

Discussion

TutorLLM showed increased engagement, with students spending noticeably more time using the system than those using a general LLM. Although the differences were not statistically significant, the higher engagement and incremental performance improvements are consistent with the tailored approach being effective. These insights suggest that TutorLLM could help reshape personalized learning through intelligent interaction systems, and they underscore the need for continued refinement and adaptation across broader educational domains.

Conclusion

The TutorLLM framework represents a pioneering approach to integrating KT and RAG with LLMs to advance personalized learning systems. Although further development is needed to optimize personalization features and evaluate effectiveness across diverse educational settings, the system is designed to improve the precision and personalization of educational interactions. Future research should explore scaling TutorLLM's applications and address challenges such as data privacy and educator training to further its adoption in educational contexts.