- The paper introduces the Dynamic Parallel Tree Search (DPTS) framework that improves LLM reasoning by dynamically managing parallel inference paths.

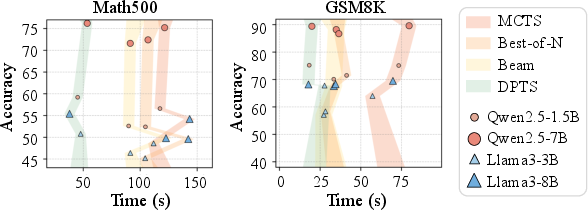

- It optimizes node processing with adaptive KV cache handling and parallel generation, reducing inference time by a factor of 2-4 compared to traditional methods.

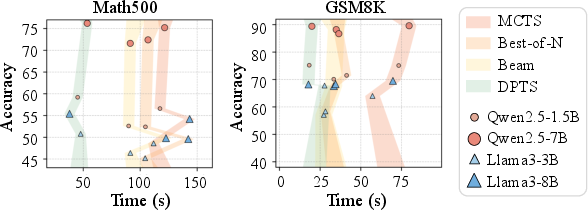

- Experimental evaluations on datasets such as Math500 and GSM8K show that DPTS matches or exceeds the accuracy of existing methods while lowering computational cost.

Dynamic Parallel Tree Search for Efficient LLM Reasoning

The paper "Dynamic Parallel Tree Search for Efficient LLM Reasoning" presents the Dynamic Parallel Tree Search (DPTS) framework, addressing computational inefficiencies in Tree of Thoughts (ToT)-based LLM reasoning. By leveraging dynamic parallelism and strategic path optimizations, the framework improves both computational efficiency and reasoning accuracy. Below, I provide a detailed, technical summary of the paper's contributions and implications.

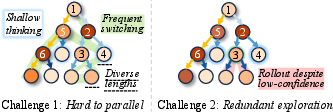

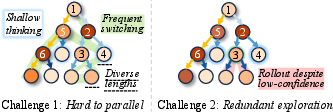

Parallelism Challenges in Tree-Based Reasoning

Tree of Thoughts (ToT) structures LLM reasoning as a tree search, using algorithms such as Monte Carlo Tree Search (MCTS) to explore multiple reasoning paths. The main challenges are the frequent focus shifts and redundant exploration inherent to tree structures, which are especially costly in parallel computing environments.

Figure 1: Challenges of implementing parallelism in reasoning tasks.

Irregular computational trajectories and frequent context switching complicate parallel execution, leading to inefficient memory usage and shallow exploration. This prevents traditional methods from making effective use of GPU parallelism.
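To make the memory cost of irregular trajectories concrete, here is a minimal sketch. It assumes that parallel paths are batched by padding every path to the length of the longest one; that batching scheme and the function name `padding_waste` are illustrative assumptions, not details taken from the paper.

```python
from typing import Sequence


def padding_waste(path_lengths: Sequence[int]) -> float:
    """Fraction of batch slots filled with padding.

    Assumption (not from the paper): all paths in a batch are padded
    to the length of the longest path.
    """
    if not path_lengths:
        return 0.0
    longest = max(path_lengths)
    total_slots = longest * len(path_lengths)  # slots allocated for the batch
    used_slots = sum(path_lengths)             # slots holding real tokens
    return 1 - used_slots / total_slots


# Equal-length paths waste nothing; uneven paths waste a growing share.
even = padding_waste([10, 10, 10])
uneven = padding_waste([2, 8])
```

Under this assumption, the more the path lengths diverge, the larger the share of the batch that holds no useful tokens.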

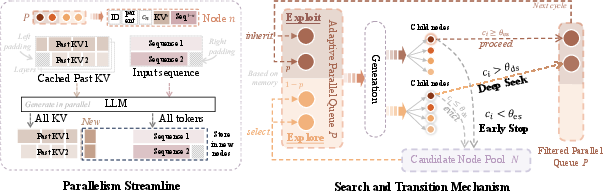

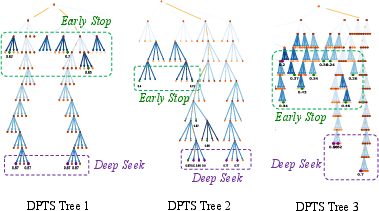

The DPTS Framework

The DPTS framework dynamically manages and optimizes reasoning paths during inference. It has two primary components: Parallelism Streamline and the Search and Transition Mechanism.

Parallelism Streamline

The Parallelism Streamline targets efficient node parallelization: it combines adaptive KV cache handling with parallel generation so that tree nodes can be processed at a fine granularity.
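The idea of expanding several tree nodes in one batched call can be sketched as follows. This is an illustration under stated assumptions, not the paper's implementation: `Node`, `expand_parallel`, `shared_prefix_len`, and the `step_fn` callback (a stand-in for a batched LLM generation call) are all hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Sequence, Tuple

# Hypothetical type: for each input path, a list of (continuation tokens, score).
StepFn = Callable[[List[List[int]]], List[List[Tuple[List[int], float]]]]


@dataclass
class Node:
    tokens: List[int]                 # token path from the root to this node
    score: float = 0.0                # hypothetical value estimate for the path
    children: List["Node"] = field(default_factory=list)


def shared_prefix_len(a: Sequence[int], b: Sequence[int]) -> int:
    """Number of leading tokens two paths share.

    A cache for these tokens could in principle be reused across both paths.
    """
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def expand_parallel(frontier: List[Node], width: int, step_fn: StepFn) -> List[Node]:
    """Expand the `width` highest-scoring frontier nodes in one batched call."""
    batch = sorted(frontier, key=lambda n: n.score, reverse=True)[:width]
    results = step_fn([n.tokens for n in batch])  # one call for the whole batch
    new_nodes: List[Node] = []
    for node, continuations in zip(batch, results):
        for cont, score in continuations:
            child = Node(tokens=node.tokens + cont, score=score)
            node.children.append(child)
            new_nodes.append(child)
    return new_nodes
```

In a real system `step_fn` would wrap the model's batched decoding, and the shared-prefix length would decide how much of the KV cache sibling paths can reuse; both are left abstract here.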

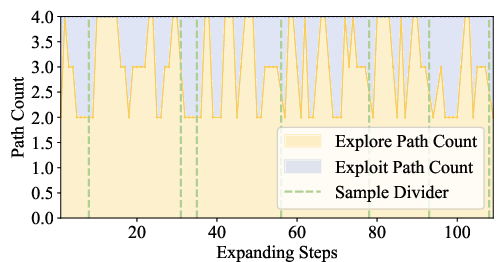

Search and Transition Mechanism

The Search and Transition Mechanism balances exploration and exploitation through dynamic node management and bidirectional transitions between the two.
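One way to picture transitions in both directions is a rebalancing step over two sets of paths. The sketch below is a simplified illustration and not the paper's actual rule: the `rebalance` function, the `margin` threshold, and the `(name, score)` representation are assumptions made for this example.

```python
from typing import List, Tuple

Path = Tuple[str, float]  # (hypothetical path id, hypothetical score)


def rebalance(
    exploit: List[Path], explore: List[Path], width: int, margin: float = 0.2
) -> Tuple[List[Path], List[Path]]:
    """Move paths between an exploitation set and an exploration pool.

    Assumed rule (for illustration only): a path leaves the exploitation
    set when its score falls more than `margin` below the best score seen,
    and free slots are refilled with the best paths from the pool.
    """
    best = max((s for _, s in exploit + explore), default=0.0)

    # Exploitation -> exploration: demote paths that lag too far behind.
    keep = [p for p in exploit if p[1] >= best - margin]
    demoted = [p for p in exploit if p[1] < best - margin]

    # Exploration -> exploitation: promote the most promising pooled paths.
    pool = sorted(explore + demoted, key=lambda p: p[1], reverse=True)
    free = max(0, width - len(keep))
    promoted, pool = pool[:free], pool[free:]
    return keep + promoted, pool


active, pool = rebalance(
    exploit=[("a", 0.9), ("b", 0.3)],
    explore=[("c", 0.8), ("d", 0.1)],
    width=2,
)
```

Here path `b` is demoted because it trails the best score by more than the margin, and path `c` is promoted into the freed slot.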

Experimental Evaluation

Tested across several LLMs (such as Qwen-2.5 and Llama-3) on the Math500 and GSM8K reasoning datasets, DPTS reduces inference time by a factor of 2-4 while matching or exceeding the accuracy of existing methods.

Implications and Future Developments

The DPTS framework addresses core computational challenges of LLM reasoning by making more effective use of parallel computing architectures. The approach has implications both for practical applications, such as real-time decision making in complex problem-solving tasks, and for theory, where it improves the scalability of reasoning models. Future work could extend DPTS to other domains such as coding and scientific problem solving, and integrate hardware-level optimizations for further efficiency gains.