Modularization is Better: Effective Code Generation with Modular Prompting

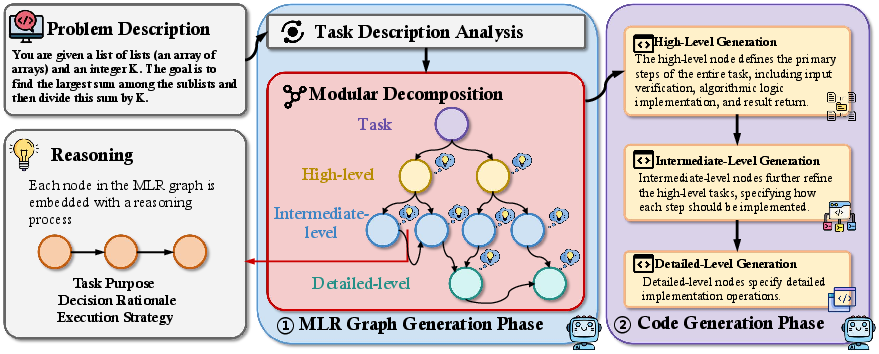

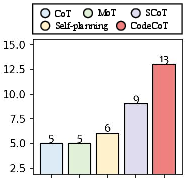

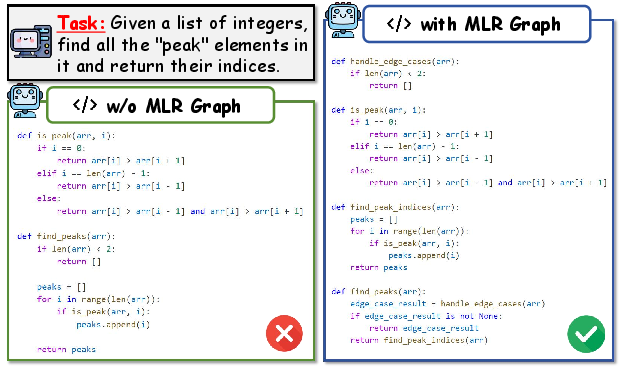

Abstract: LLMs are transforming software development by automatically generating code. Current prompting techniques such as Chain-of-Thought (CoT) suggest tasks step by step and the reasoning process follows a linear structure, which hampers the understanding of complex programming problems, particularly those requiring hierarchical solutions. Inspired by the principle of modularization in software development, in this work, we propose a novel prompting technique, called MoT, to enhance the code generation performance of LLMs. At first, MoT exploits modularization principles to decompose complex programming problems into smaller, independent reasoning steps, enabling a more structured and interpretable problem-solving process. This hierarchical structure improves the LLM's ability to comprehend complex programming problems. Then, it structures the reasoning process using an MLR Graph (Multi-Level Reasoning Graph), which hierarchically organizes reasoning steps. This approach enhances modular understanding and ensures better alignment between reasoning steps and the generated code, significantly improving code generation performance. Our experiments on two advanced LLMs (GPT-4o-mini and DeepSeek-R1), comparing MoT to six baseline prompting techniques across six widely used datasets, HumanEval, HumanEval-ET, HumanEval+, MBPP, MBPP-ET, and MBPP+, demonstrate that MoT significantly outperforms existing baselines (e.g., CoT and SCoT), achieving Pass@1 scores ranging from 58.1% to 95.1%. The experimental results confirm that MoT significantly enhances the performance of LLM-based code generation.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Collections

Sign up for free to add this paper to one or more collections.