- The paper introduces a novel procedural pipeline that generates diverse, high-fidelity articulated objects using probabilistic programming.

- It leverages a tree-growing strategy for articulation, procedural mesh and material generation via Blender API, and systematic joint configuration.

- Experimental evaluations show improved geometric accuracy, faster generation, and stable, collision-free articulation compared to prior methods.

Infinite Mobility: Procedural Generation of Articulated Objects

Infinite Mobility introduces a pipeline for procedural generation of high-fidelity articulated objects, addressing the limitations found in previous data-driven and simulation-based approaches.

Introduction and Motivation

Meeting the demand for large-scale, high-quality articulated objects is a significant challenge in embodied AI, because existing datasets are small and creating these objects by hand is labor-intensive. Existing methods depend on limited datasets or simulated environments and struggle with both scale and realism. Infinite Mobility takes a different route: it uses probabilistic programming to procedurally generate diverse, high-fidelity articulated objects, offering an alternative to traditional data-driven approaches.

Methodology

Procedural Pipeline

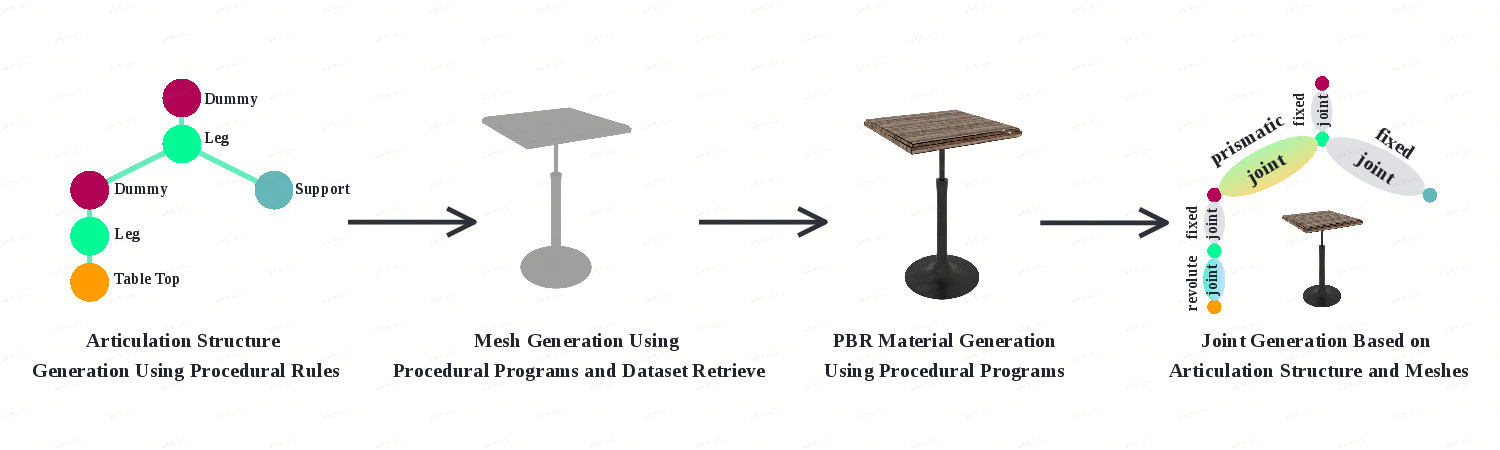

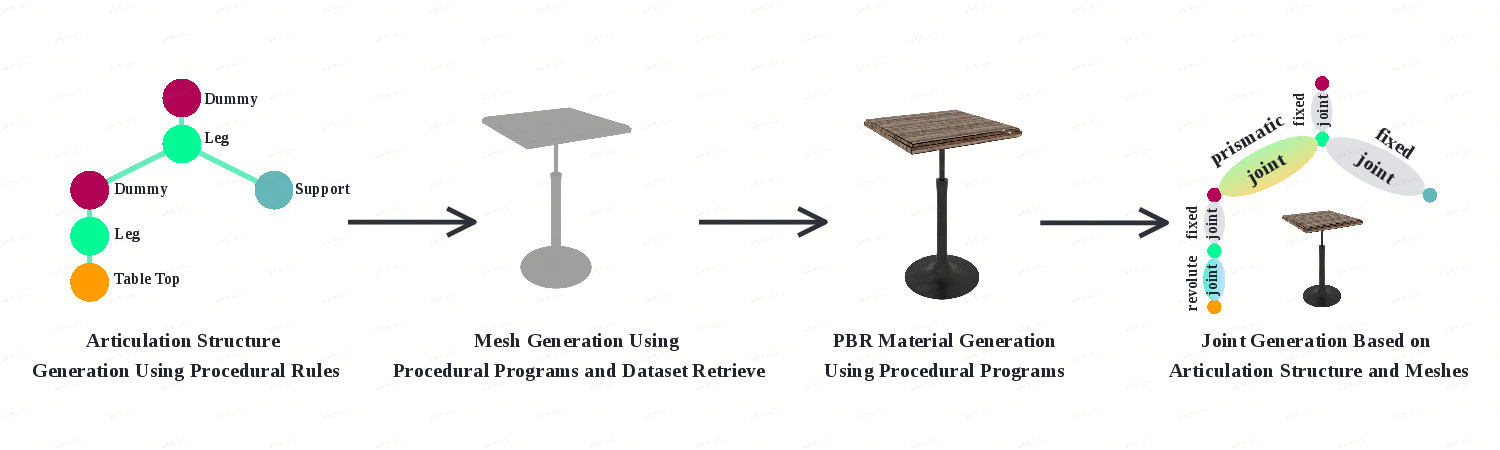

The pipeline can be divided into four main components:

- Articulation Tree Structure Generation: This step employs a tree-growing strategy to define the articulation structure of objects, ensuring semantic coherence and diversity by adhering to category-specific growth rules.

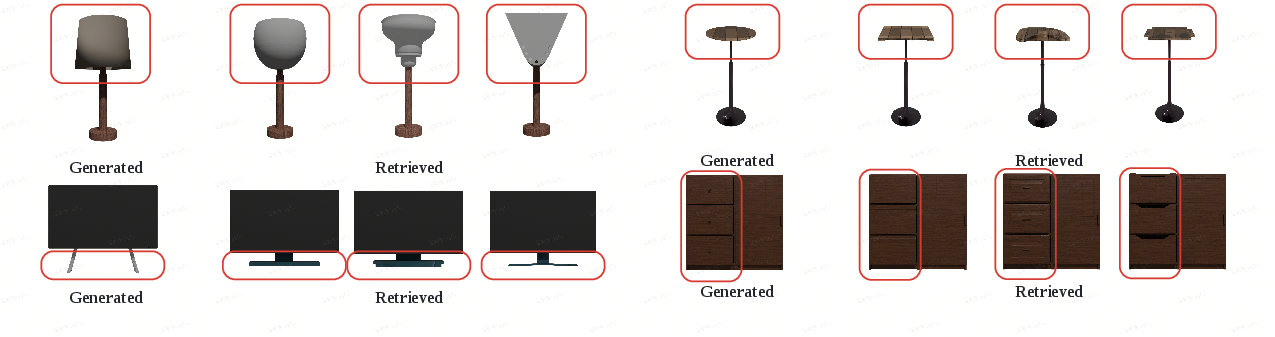

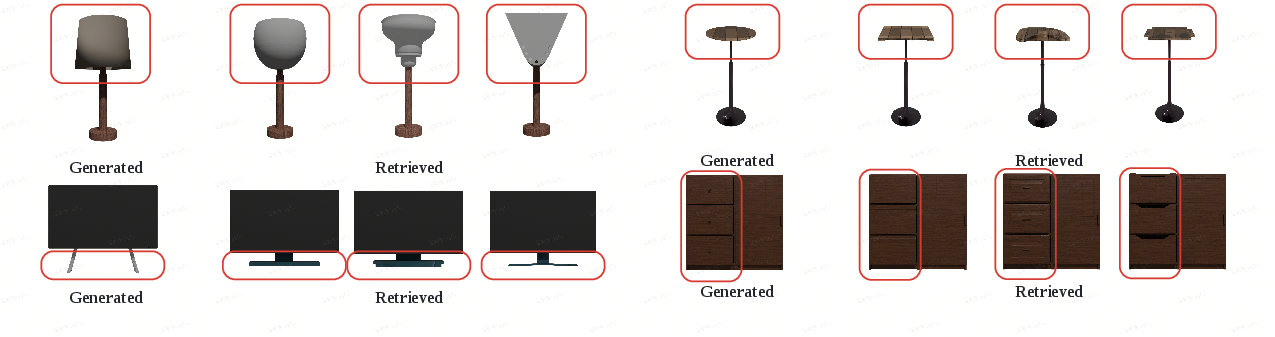

- Geometry Generation: Geometric shape synthesis is accomplished through procedural mesh generation using Blender's API and part retrieval from curated datasets, improving shape diversity.

- Material Generation: The material properties are procedurally generated and varied using Blender's shader node system, adding randomness in diffuse, specular, and normal maps.

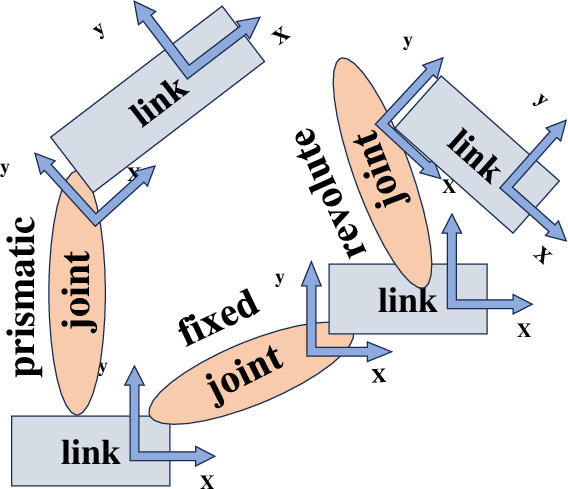

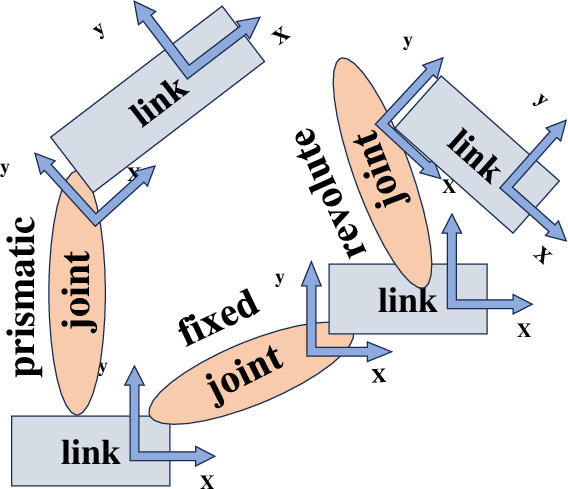

- Joint Generation: Joint properties such as type, position, and motion range are determined systematically from the articulated parts. The pipeline covers six kinds of joints, ranging from simple to compound.
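The tree-growing step in the list above can be pictured with a small sketch. This is a minimal illustration under stated assumptions, not the paper's implementation: the `GROWTH_RULES` table, the part names, and the child-count ranges are all hypothetical, chosen only to show how category-specific rules plus random sampling yield varied but semantically coherent articulation trees.

```python
import random
from dataclasses import dataclass, field

# Hypothetical growth rules (an assumption for illustration only):
# parent part -> list of (child part, min count, max count).
GROWTH_RULES = {
    "cabinet_body": [("door", 0, 2), ("drawer", 0, 3)],
    "door": [("handle", 1, 1)],
    "drawer": [("handle", 1, 1)],
    "handle": [],
}


@dataclass
class Node:
    part: str
    children: list = field(default_factory=list)


def grow(part, rng):
    """Recursively grow an articulation tree by sampling child counts from the rules."""
    node = Node(part)
    for child_part, lo, hi in GROWTH_RULES[part]:
        for _ in range(rng.randint(lo, hi)):
            node.children.append(grow(child_part, rng))
    return node


def count_parts(node):
    """Total number of parts (nodes) in the tree."""
    return 1 + sum(count_parts(child) for child in node.children)


def show(node, depth=0):
    """Print the tree with one part per line, indented by depth."""
    print("  " * depth + node.part)
    for child in node.children:
        show(child, depth + 1)


# Different seeds give different trees that all obey the same rules.
tree = grow("cabinet_body", random.Random(0))
show(tree)
```

Because the rules constrain which parts may attach to which parents, every sampled tree stays valid for its category, while the random counts supply the diversity.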

Figure 1: The whole pipeline is divided into four parts: articulation tree structure generation, geometry generation, material generation, and joint generation.

Figure 2: Structure of the URDF file. Each link is a part of the object, represented as a textured mesh. Each joint connects two links, describing the articulation structure.
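To make the link and joint structure described in the Figure 2 caption concrete, here is a minimal sketch that writes a small URDF document with Python's standard `xml.etree.ElementTree` module. The element and attribute names (`robot`, `link`, `joint`, `parent`, `child`, `origin`, `axis`, `limit`) follow the URDF format; the object, mesh paths, joint origins, and limit values are hypothetical placeholders and do not come from the paper.

```python
import xml.etree.ElementTree as ET


def add_link(robot, name, mesh_file):
    """Append a <link> whose visual geometry is a mesh file."""
    link = ET.SubElement(robot, "link", name=name)
    visual = ET.SubElement(link, "visual")
    geometry = ET.SubElement(visual, "geometry")
    ET.SubElement(geometry, "mesh", filename=mesh_file)
    return link


def add_joint(robot, name, joint_type, parent, child, origin, axis, lower, upper):
    """Append a <joint> connecting a parent link to a child link."""
    joint = ET.SubElement(robot, "joint", name=name, type=joint_type)
    ET.SubElement(joint, "parent", link=parent)
    ET.SubElement(joint, "child", link=child)
    ET.SubElement(joint, "origin", xyz=" ".join(map(str, origin)), rpy="0 0 0")
    ET.SubElement(joint, "axis", xyz=" ".join(map(str, axis)))
    # effort and velocity are placeholder values chosen for this sketch.
    ET.SubElement(joint, "limit", lower=str(lower), upper=str(upper),
                  effort="10", velocity="1")
    return joint


# Hypothetical cabinet: one static body, one hinged door, one sliding drawer.
robot = ET.Element("robot", name="cabinet")
add_link(robot, "body", "meshes/body.obj")
add_link(robot, "door", "meshes/door.obj")
add_link(robot, "drawer", "meshes/drawer.obj")
add_joint(robot, "door_hinge", "revolute", "body", "door",
          (0.3, 0.0, 0.5), (0, 0, 1), 0.0, 1.57)
add_joint(robot, "drawer_slide", "prismatic", "body", "drawer",
          (0.0, 0.0, 0.2), (0, 1, 0), 0.0, 0.35)

urdf_text = ET.tostring(robot, encoding="unicode")
print(urdf_text)
```

Each node of the articulation tree maps to one `link`, and each parent-child edge maps to one `joint`, which is why a tree representation converts to URDF so directly.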

Enhancing Mesh Quality and Articulation

Procedural Mesh Retrieval

The pipeline retrieves part meshes from a curated dataset and refines them procedurally, addressing the inconsistency and quality issues common in existing datasets such as ShapeNet and PartNet.

Figure 3: Geometry of each part is obtained via Blender's API or from a curated dataset. Both methods ensure diverse shape generation.

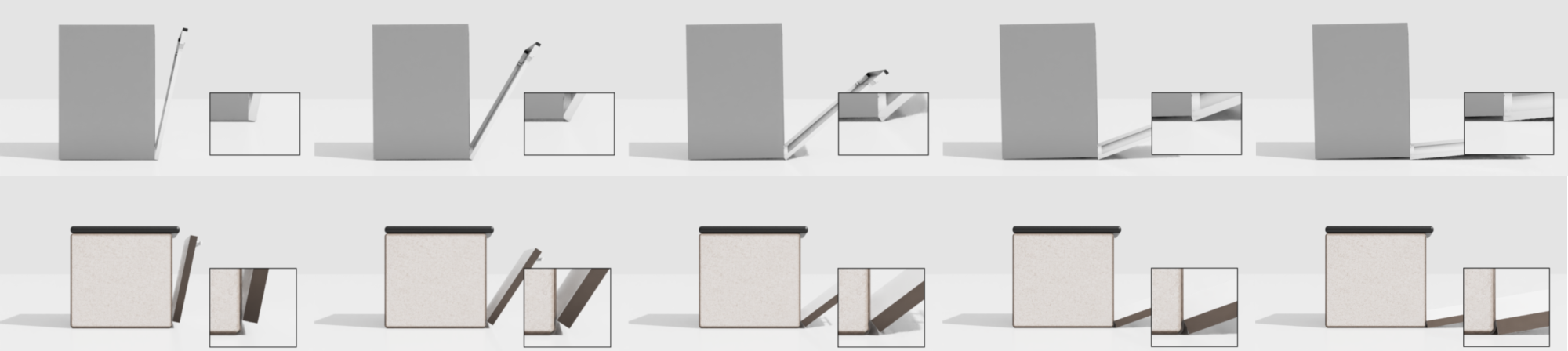

Collision and Physical Plausibility Adjustments

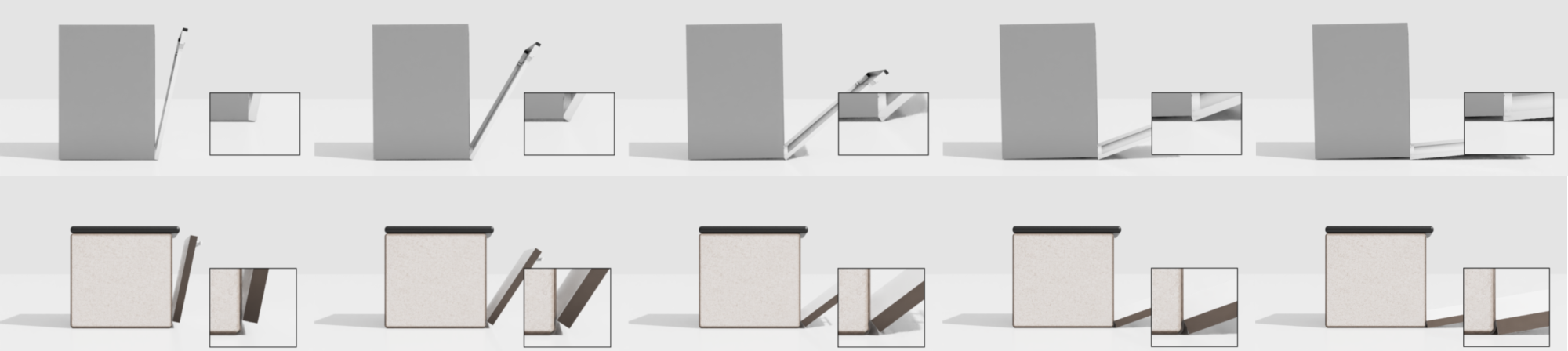

The pipeline adjusts mesh placement and joint configuration to ensure physical plausibility. For example, it widens the gaps between moving parts so that articulation stays stable and free of collisions.

Figure 4: Adjustments ensure no collisions and maintain stability. Unlike objects with collision issues, those generated by our method exhibit stable articulation.
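One way to picture the gap adjustment is a bounding-box check swept over a joint's motion range. The sketch below is an illustration under simplifying assumptions, not the paper's procedure: parts are approximated by axis-aligned boxes, the joint is a pure translation, and the gap is widened by uniformly shrinking the moving part. The `Box` class, the dimensions, and the 0.01 margin are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box given by its min and max corners."""
    lo: tuple
    hi: tuple

    def translated(self, offset):
        return Box(tuple(l + o for l, o in zip(self.lo, offset)),
                   tuple(h + o for h, o in zip(self.hi, offset)))

    def shrunk(self, margin):
        """Shrink the box by `margin` on every side, widening gaps to neighbors."""
        return Box(tuple(l + margin for l in self.lo),
                   tuple(h - margin for h in self.hi))


def overlaps(a, b):
    """True if the two boxes overlap with positive volume."""
    return all(a.lo[i] < b.hi[i] and b.lo[i] < a.hi[i] for i in range(3))


def collides_along_travel(moving, static_parts, axis, lower, upper, steps=20):
    """Sample the prismatic joint range and test the moving box at each position."""
    for k in range(steps + 1):
        d = lower + (upper - lower) * k / steps
        moved = moving.translated(tuple(d * a for a in axis))
        if any(overlaps(moved, s) for s in static_parts):
            return True
    return False


# Hypothetical drawer whose left side slightly intersects the cabinet wall.
drawer = Box((0.0, 0.0, 0.0), (0.4, 0.5, 0.2))
left_wall = Box((-0.02, 0.0, 0.0), (0.005, 0.5, 0.2))
slide_axis = (0.0, -1.0, 0.0)

before = collides_along_travel(drawer, [left_wall], slide_axis, 0.0, 0.35)
after = collides_along_travel(drawer.shrunk(0.01), [left_wall], slide_axis, 0.0, 0.35)
print(before, after)
```

Checking the whole motion range, rather than only the rest pose, matters because a part can clear its neighbors when closed and still hit them partway through its travel.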

Experimental Evaluations

Comparative Analysis

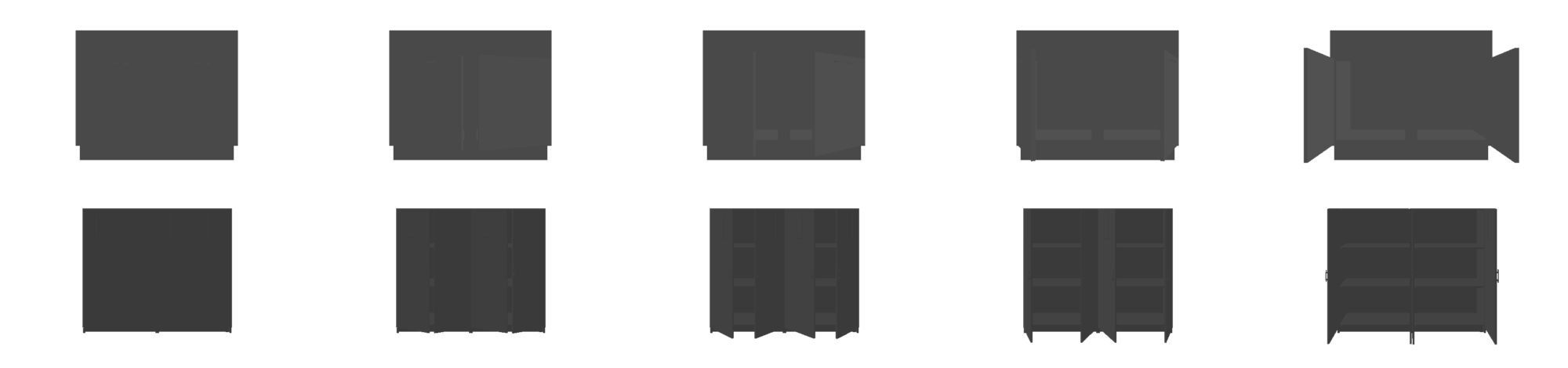

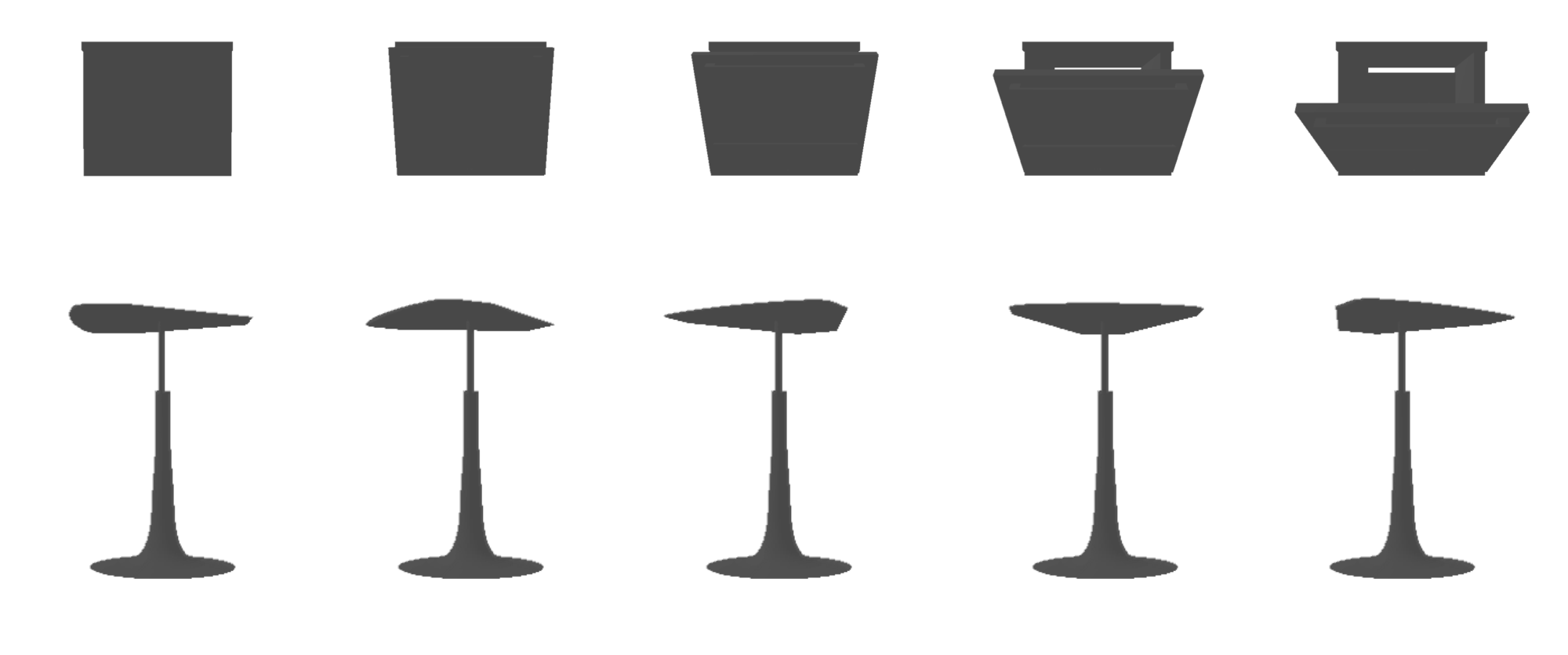

Infinite Mobility's procedurally generated data outperforms existing generative models such as NAP and CAGE across multiple evaluation metrics, including geometric accuracy and articulation fidelity. It also compares favorably to human-annotated datasets such as PartNet-Mobility.

Figure 5: Human evaluators judge which motion structure is superior by watching textureless videos of articulated objects.

Figure 6: Articulated objects generated by CAGE trained on datasets from our pipeline, demonstrating accurate geometry and motion structure.

Infinite Mobility also generates objects faster than existing generative models, and its outputs show higher diversity and joint complexity.

Conclusion

Infinite Mobility provides a robust solution for synthesizing articulated objects, combining procedural and probabilistic generation techniques to deliver high-fidelity, diverse, and physically plausible outputs. Because the framework integrates easily with simulation environments, it offers a promising way to scale articulated object generation for AI-driven applications.

In future work, the automation of parameter tuning and the expansion of object categories could further enhance this framework's applicability and ease of use.