- The paper introduces the EAP framework that replaces verbose natural language prompts with labeled examples, significantly reducing manual prompt crafting.

- The paper employs unsupervised clustering for global example selection and cosine similarity for local examples, ensuring balanced and representative data input.

- The paper demonstrates that the examples-only variant, EAP_lite, can reduce LLM inference latency by up to 70% in data labeling tasks while maintaining performance on e-commerce tasks.

Overview of the Paper

The paper, "Examples as the Prompt: A Scalable Approach for Efficient LLM Adaptation in E-Commerce" (2503.13518), addresses the challenges of prompt engineering for LLMs in the e-commerce domain. It introduces a framework named "Examples as the Prompt" (EAP), which uses labeled data to compose prompts for LLM applications, improving performance while reducing manual crafting effort. The framework selects examples with an adaptive, unsupervised method and shows advantages in efficiency, flexibility, and performance across several e-commerce tasks. A variant, EAP_lite, replaces the natural language components of the prompt with labeled examples entirely, which significantly speeds up LLM inference.

Prompt Design and Challenges in E-Commerce

Prompt-based applications are prevalent in e-commerce for tasks like query understanding, recommendation systems, and customer support. Compared to traditional fine-tuning, prompt-based methods are more flexible and allow rapid adaptation to changing customer needs. However, crafting and maintaining prompts requires significant domain expertise and frequent updates, and hand-written prompts raise further challenges around bias and runtime constraints. EAP seeks to address these limitations by emphasizing the selection of representative examples rather than relying solely on natural language definitions.

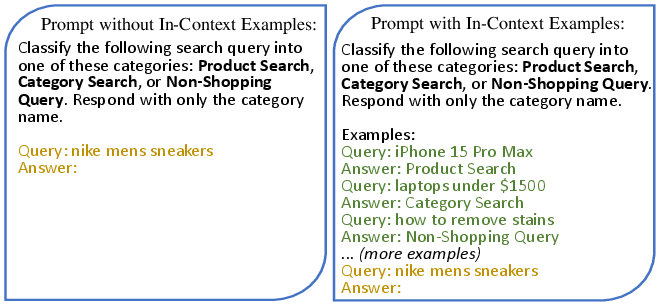

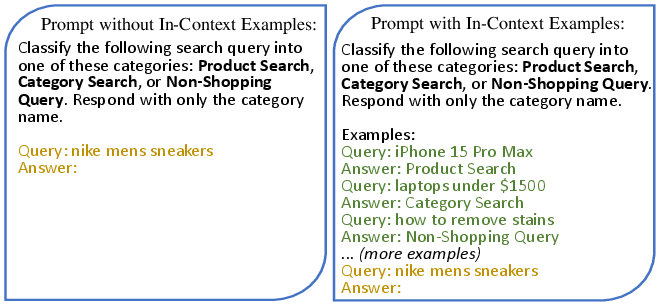

Figure 1: Prompt examples for search query classification. The green text represents in-context examples, while the yellow text denotes the input q.

Methodology of EAP

Global and Local Example Selection

Global Examples: These examples represent the entire data distribution for a given task, giving the LLM a broad view of the problem space. They are selected with unsupervised methods such as K-means clustering, so that the chosen set covers the diversity of the dataset, and class imbalance is mitigated with techniques such as subsampling.
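The clustering-based selection of global examples can be sketched as follows. This is a minimal illustration, not the paper's implementation: it assumes each labeled example already has an embedding vector, uses a small pure-Python K-means with a deterministic farthest-point initialization (chosen here for reproducibility), and picks the example nearest each centroid. The function names and the toy data are hypothetical.

```python
import math

def kmeans(points, k, iters=20):
    """Lloyd's K-means over lists of floats; returns k centroids."""
    # Deterministic farthest-point initialization (an assumption made for
    # reproducibility; random or k-means++ seeding is more common).
    centroids = [list(points[0])]
    while len(centroids) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        centroids.append(list(farthest))
    for _ in range(iters):
        # Assignment step: attach every point to its nearest centroid.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda c: math.dist(p, centroids[c]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its members.
        for c, members in enumerate(clusters):
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids

def select_global_examples(embeddings, labeled_examples, k):
    """Return, for each cluster, the labeled example closest to its centroid."""
    chosen = []
    for centroid in kmeans(embeddings, k):
        idx = min(range(len(embeddings)),
                  key=lambda i: math.dist(embeddings[i], centroid))
        if idx not in chosen:  # avoid picking the same example twice
            chosen.append(idx)
    return [labeled_examples[i] for i in chosen]

# Toy data: two well-separated groups of 2-D "embeddings" (hypothetical).
embeddings = [[0.0, 0.1], [0.1, 0.0], [0.05, 0.05],
              [5.0, 5.1], [5.1, 5.0], [5.05, 5.05]]
examples = [("brand a official site", "navigational"),
            ("brand b login", "navigational"),
            ("brand c store", "navigational"),
            ("running shoes", "non-navigational"),
            ("wireless earbuds", "non-navigational"),
            ("coffee maker", "non-navigational")]

for text, label in select_global_examples(embeddings, examples, k=2):
    print(f"{text} -> {label}")
```

Choosing the example nearest each centroid is one simple way to get one representative per region of the embedding space; subsampling for class balance, as mentioned above, would be a separate step applied before or after clustering.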

Local Examples: To address corner cases and prevent overconfidence in LLM predictions, local examples are selected based on similarity to specific inputs using embeddings and similarity measures such as cosine similarity. This approach balances the need for general understanding with precision in edge cases.
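A minimal sketch of similarity-based local selection is shown below. It assumes that the input and every candidate example have already been embedded by some model (not specified here), and it ranks candidates by cosine similarity to the input. The helper names and toy vectors are hypothetical.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors; 0.0 if either has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)

def select_local_examples(query_embedding, pool_embeddings, pool_examples, top_n):
    """Return the top_n labeled examples most similar to the query embedding."""
    ranked = sorted(
        range(len(pool_embeddings)),
        key=lambda i: cosine_similarity(query_embedding, pool_embeddings[i]),
        reverse=True,
    )
    return [pool_examples[i] for i in ranked[:top_n]]

# Toy pool of labeled examples with 2-D embeddings (hypothetical values).
pool_embeddings = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
pool_examples = [("brand a login", "navigational"),
                 ("cheap laptops", "non-navigational"),
                 ("brand a account page", "navigational"),
                 ("garden hose", "non-navigational")]

nearest = select_local_examples([1.0, 0.0], pool_embeddings, pool_examples, top_n=2)
print(nearest)
```

Because the ranking depends on the specific input, this step runs per query, whereas the global examples can be computed once per task.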

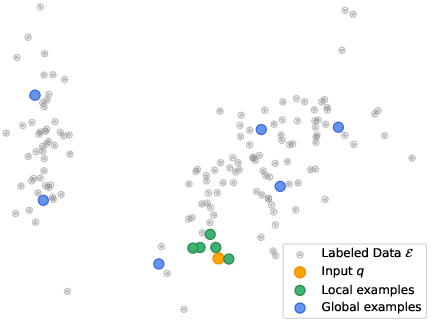

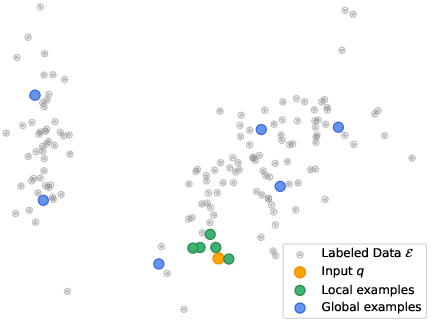

Figure 2: Visualization of global and local examples selected by EAP. While global examples represent the overall data distribution, local examples help characterize the specific input.

By substituting verbose prompt definitions with succinct examples-only prompts, EAP significantly reduces LLM inference latency, maintaining performance while enhancing system efficiency. This is further validated in tasks where runtime constraints are critical, showcasing potential for both online and offline improvements.
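To make the difference concrete, the sketch below builds an examples-only prompt and an instruction-plus-examples prompt for the same input and compares their lengths. The `Query:`/`Label:` layout, the instruction text, and the function names are assumptions made for illustration; the paper's actual prompt format is shown in Figure 1. Character count is used only as a rough proxy for the input size that drives latency.

```python
def build_examples_only_prompt(examples, query):
    """Compose a prompt from labeled examples alone, ending with the open query."""
    blocks = [f"Query: {text}\nLabel: {label}" for text, label in examples]
    blocks.append(f"Query: {query}\nLabel:")
    return "\n\n".join(blocks)

def build_instruction_prompt(instructions, examples, query):
    """Prepend a natural language task definition to the same examples."""
    return instructions.strip() + "\n\n" + build_examples_only_prompt(examples, query)

# Hypothetical task definition and labeled examples.
instructions = (
    "You are a search query classifier. A query is navigational when the "
    "customer wants to reach a specific page or brand destination, and "
    "non-navigational otherwise. Answer with exactly one label."
)
examples = [("brand a login", "navigational"),
            ("running shoes", "non-navigational")]

lite_prompt = build_examples_only_prompt(examples, "brand b order history")
full_prompt = build_instruction_prompt(instructions, examples, "brand b order history")

print(len(lite_prompt), len(full_prompt))
print(lite_prompt)
```

The examples-only prompt carries the task definition implicitly through its labels, which is why the quality and coverage of the selected examples matter more once the instructions are removed.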

Experimental Validation

The paper evaluates EAP on four e-commerce tasks (Navigation, Order, Deal, and Question2Query (Q2Q)), comparing it against traditional hand-crafted prompts. EAP shows strong improvements in detecting navigational intent and comparable results on the other tasks, while its shorter prompts deliver runtime savings.

Global and Local Tuning

The balance between global and local examples is crucial for maximizing EAP's performance, as shown in the experiments on navigational intent detection. Shifting this balance changes precision and recall, particularly for minority classes.
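One simple way to expose this balance as a tunable knob is a fixed example budget split by a ratio, sketched below. The `budget` and `local_fraction` parameters, the de-duplication rule, and the ordering (global examples first, local examples closest to the input) are all assumptions for illustration and are not taken from the paper.

```python
def mix_examples(global_examples, local_examples, budget, local_fraction):
    """Fill a fixed example budget with a chosen share of local examples."""
    if not 0.0 <= local_fraction <= 1.0:
        raise ValueError("local_fraction must be between 0 and 1")
    # Reserve a share of the budget for local examples (rounded down).
    n_local = min(int(budget * local_fraction), len(local_examples))
    local_picks = list(local_examples[:n_local])
    # Give the remaining slots to global examples, skipping duplicates.
    global_picks = []
    for example in global_examples:
        if len(global_picks) >= budget - n_local:
            break
        if example not in local_picks:
            global_picks.append(example)
    return global_picks + local_picks

# Hypothetical pre-ranked candidates from the two selection steps.
global_examples = ["g1", "g2", "g3"]
local_examples = ["l1", "l2", "l3"]

for fraction in (0.0, 0.5, 1.0):
    print(fraction, mix_examples(global_examples, local_examples, 4, fraction))
```

Sweeping `local_fraction` on a held-out labeled set and tracking per-class precision and recall is one way to pick a setting for a given task.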

Implications for Production

The streamlined, example-based approach of EAP supports efficient data labeling and auditing and substantially speeds up LLM-based pipelines. EAP_lite reduces inference latency by up to 70% in data labeling tasks, which makes it practical to label more data; the larger labeled datasets can in turn improve downstream model performance, with potential for increased revenue.

Conclusion

EAP represents a significant advance in prompt engineering for LLMs in e-commerce, improving adaptability and reducing the manual effort that prompts require. By making example selection part of prompt design, EAP offers a scalable, efficient alternative to human-crafted prompts that matches or improves on their performance with reduced latency. The approach could apply to other domains as well, supporting faster development of LLM solutions in industry.