- The paper presents a framework that combines Optimization by Prompting (OPRO) with physics-based simulations to optimize nuclear fuel assembly designs using LLMs.

- It iterates meta-prompts in which the LLM proposes candidate solutions that are scored by simulation and then refined, with results competitive with traditional genetic algorithms.

- The study highlights how prompt engineering, especially supplying detailed contextual information, improves model performance.

Optimization through In-Context Learning and Iterative LLM Prompting for Nuclear Engineering Design Problems

Introduction

The paper introduces a novel application of large language models (LLMs) to nuclear engineering design optimization. Although LLMs are traditionally used for text-based tasks, here they are applied to numerical optimization, specifically to the complex problem of configuring nuclear fuel assemblies. The approach, Optimization by Prompting (OPRO), couples the LLM's reasoning capabilities with high-fidelity physics-based simulations to iteratively refine engineering design solutions.

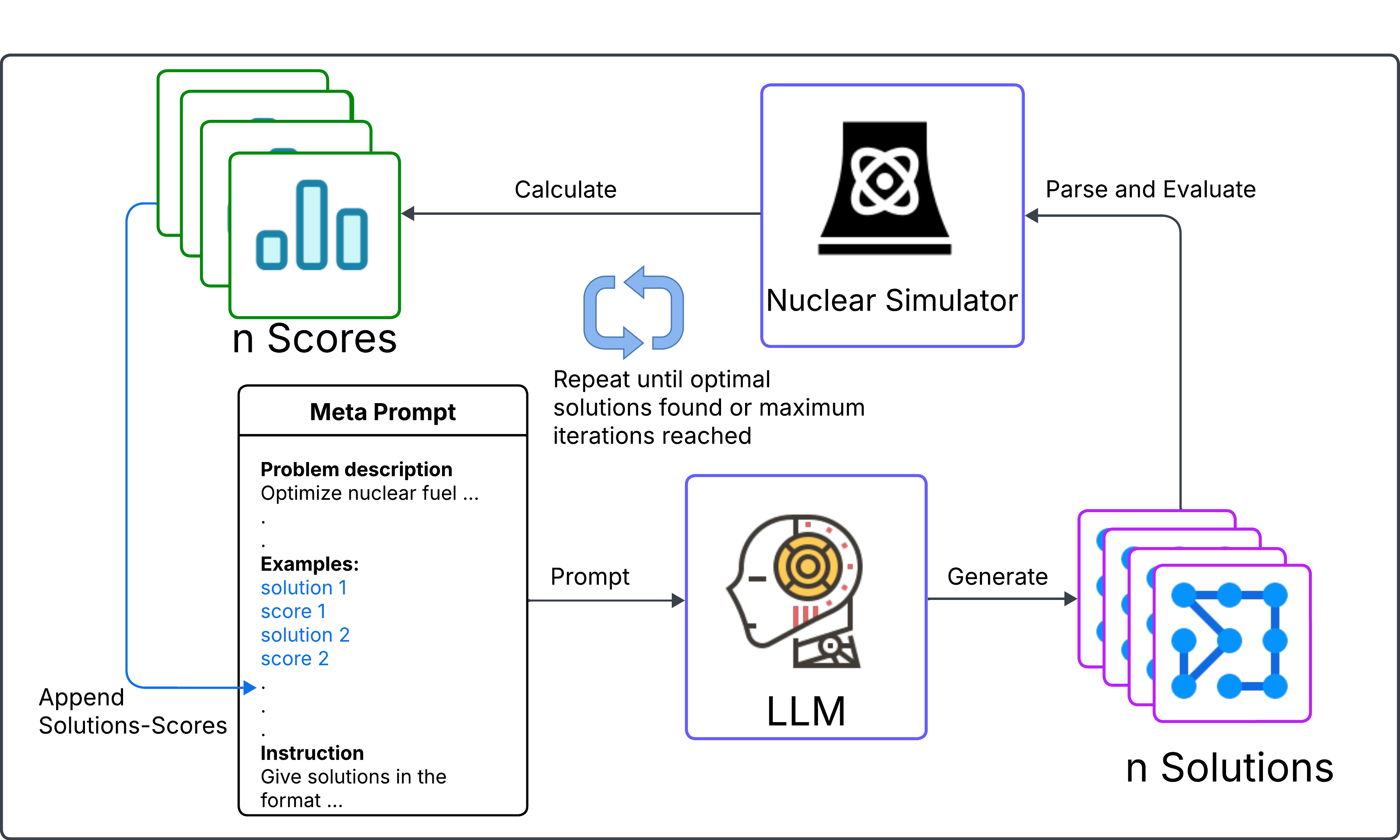

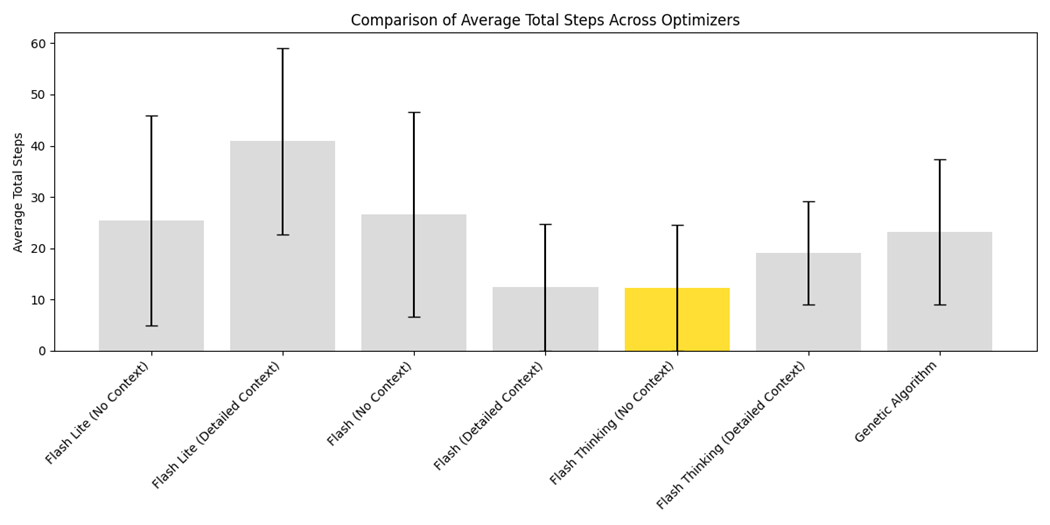

Figure 1: Iterative Optimization Framework Using LLM and Nuclear Simulation.

Optimization by Prompting (OPRO)

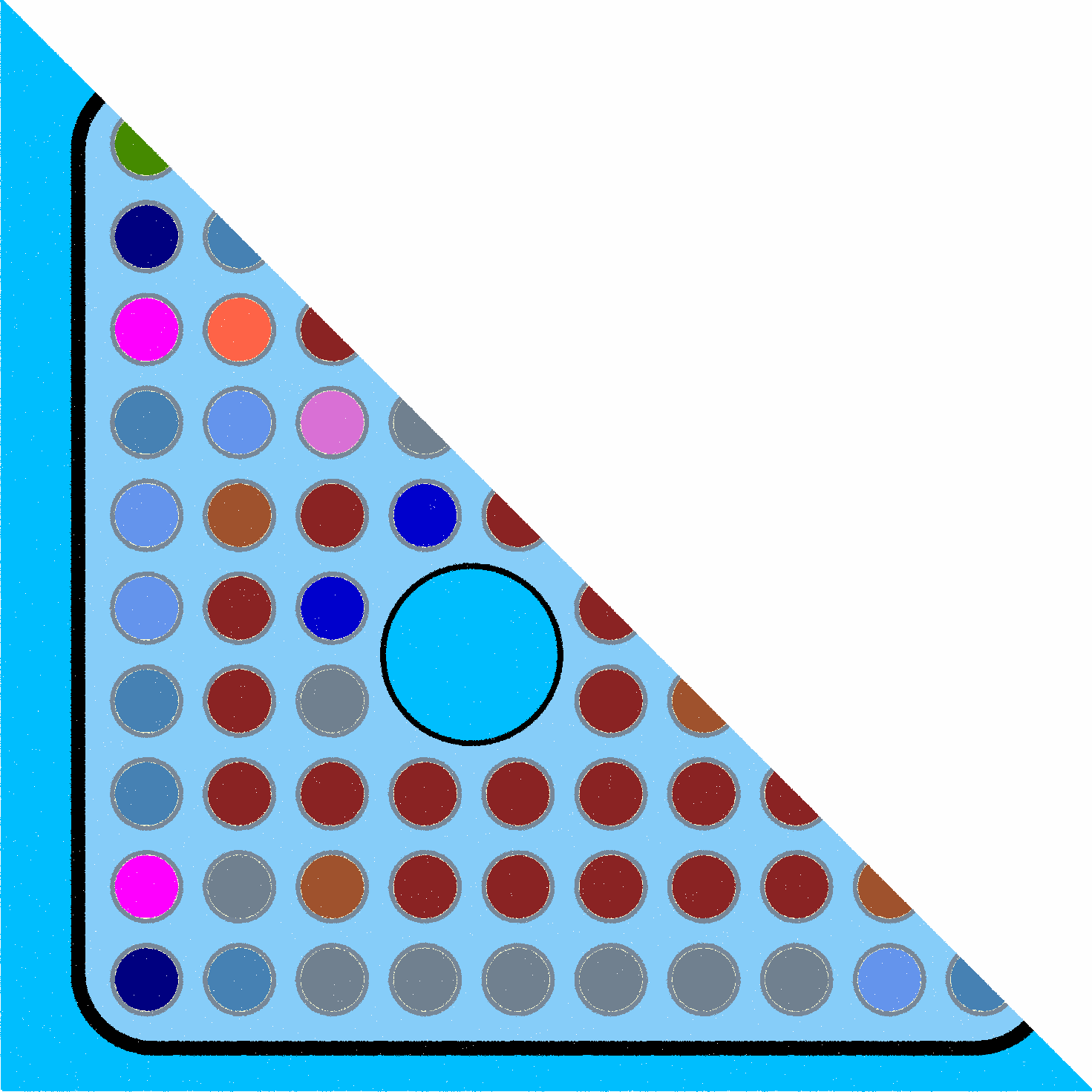

In OPRO, LLMs serve not merely as language tools but as optimizers. Practitioners describe the optimization problem in natural language, without needing an intricate mathematical formulation. The core of the method is the meta-prompt. It combines the problem description, previously generated solutions with their scores, and specific examples, and it is used iteratively to generate and evaluate new solutions.
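A meta-prompt of this kind can be sketched as a plain string builder. This is a minimal illustration, not the authors' prompt: the function name `build_meta_prompt`, the `top_k` cutoff, and the instruction wording are all hypothetical. It follows the pattern used in the original OPRO work, which lists previous solutions with their scores in ascending order so that the strongest examples sit closest to the final instruction.

```python
from typing import List, Sequence, Tuple


def build_meta_prompt(problem: str,
                      history: Sequence[Tuple[str, float]],
                      top_k: int = 20) -> str:
    """Assemble a meta-prompt from a problem description and scored past solutions.

    Higher scores are assumed to be better. Only the top_k best solutions are
    shown, in ascending order of score, so the strongest examples appear last.
    """
    best = sorted(history, key=lambda pair: pair[1])[-top_k:] if top_k > 0 else []
    lines: List[str] = [problem.strip(), "",
                        "Previous solutions and their scores (higher is better):"]
    for solution, score in best:
        lines.append(f"solution: {solution}")
        lines.append(f"score: {score:.4f}")
    lines += ["",
              "Propose one new solution that differs from all solutions above "
              "and achieves a higher score. Return only the solution."]
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical placeholder solutions; in the paper these would encode lattice designs.
    history = [("SOL_A", 1.0), ("SOL_B", 3.0), ("SOL_C", 2.0)]
    print(build_meta_prompt("Maximize the score of a hypothetical design.", history, top_k=2))
```

Limiting the prompt to the best `top_k` entries keeps its length bounded as the history grows.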

The algorithm underlying OPRO forms a feedback loop expressed in natural language. A scoring function evaluates each candidate solution the LLM generates, and the scored solutions are fed back into the next meta-prompt to steer subsequent proposals. The key idea is to replace numerical gradient descent with a language-driven analogue: the LLM infers promising search directions from the scored history rather than from computed gradients.
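If the LLM and the simulator are treated as black-box functions, the feedback loop reduces to a few lines. The sketch below is an illustration built on assumptions. `opro_loop`, `mock_propose`, and `mock_evaluate` are hypothetical names. `mock_propose` is a random perturbation that stands in for the LLM, so running this exact code gives a simple stochastic hill climber, not OPRO itself. In a real setup, `propose` would render the history into a meta-prompt, call the LLM, and parse its reply, and `evaluate` would run the physics simulation.

```python
import random
from typing import Callable, List, Tuple

Candidate = List[float]
History = List[Tuple[Candidate, float]]


def opro_loop(propose: Callable[[History], Candidate],
              evaluate: Callable[[Candidate], float],
              initial: List[Candidate],
              n_steps: int) -> History:
    """Generic propose-evaluate-feed-back loop (higher score is better)."""
    history: History = [(c, evaluate(c)) for c in initial]
    for _ in range(n_steps):
        candidate = propose(history)                       # in OPRO: an LLM call on the meta-prompt
        history.append((candidate, evaluate(candidate)))  # in the paper: a CASMO-5 evaluation
    return history


# Hypothetical stand-ins so the sketch runs without an LLM or a simulator.
rng = random.Random(0)


def mock_propose(history: History) -> Candidate:
    """Perturb the best solution so far (a placeholder for the LLM's proposal)."""
    best, _ = max(history, key=lambda pair: pair[1])
    return [x + rng.gauss(0.0, 0.5) for x in best]


def mock_evaluate(candidate: Candidate) -> float:
    """Toy score whose maximum (0.0) is reached when every coordinate equals 2.5."""
    return -sum((x - 2.5) ** 2 for x in candidate)


if __name__ == "__main__":
    result = opro_loop(mock_propose, mock_evaluate, initial=[[0.0, 0.0]], n_steps=100)
    print(max(score for _, score in result))
```

The full scored history is returned on purpose. In OPRO, that history, not a gradient, is what informs the next proposal.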

Application in BWR Fuel Lattice Optimization

The paper applies OPRO to a Boiling Water Reactor (BWR) fuel lattice optimization problem based on the GE-14 assembly configuration. The optimizer adjusts fuel pin enrichment and gadolinium content within specified constraints. The goal is a lattice that meets a target infinite multiplication factor (k∞) while minimizing the power peaking factor.

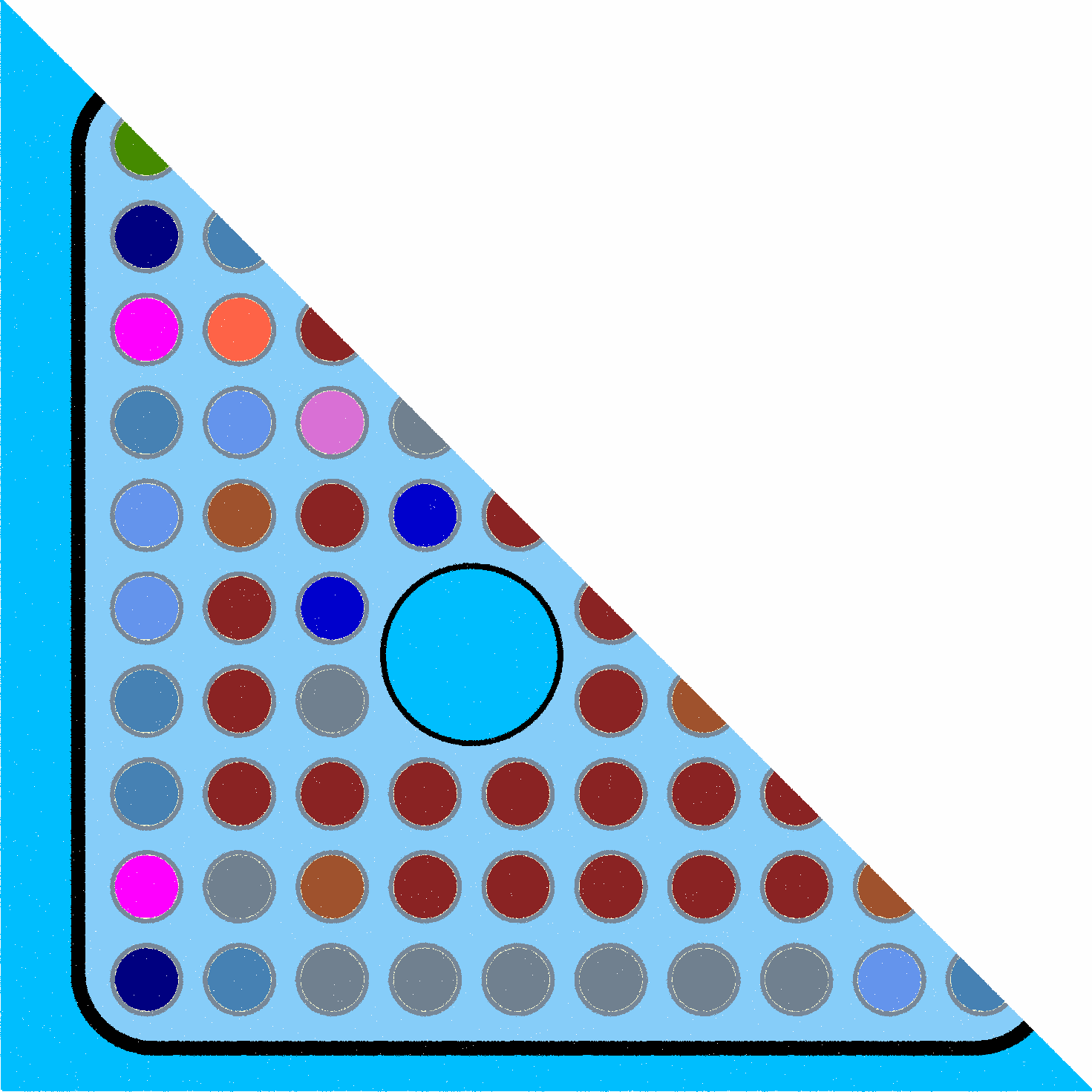

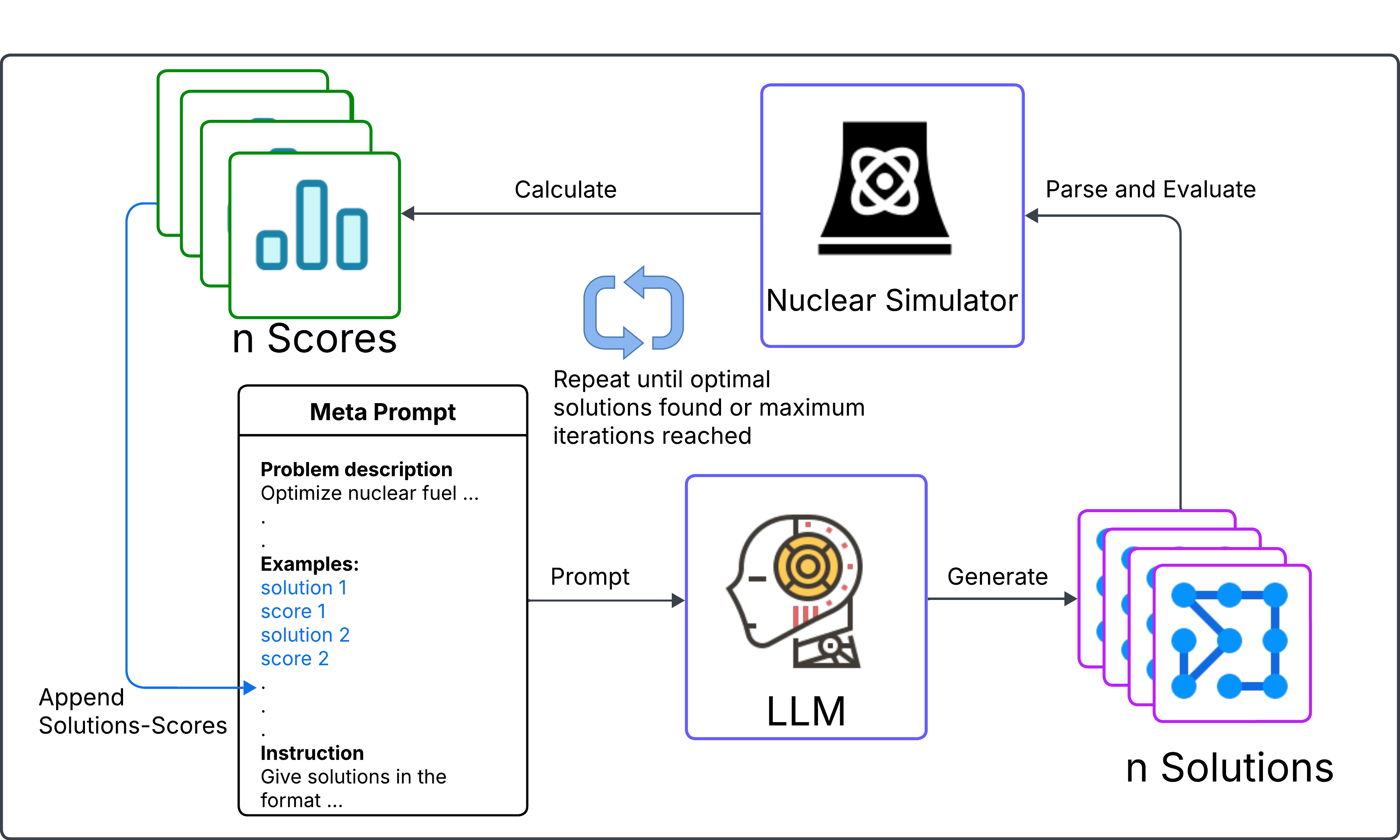

Figure 2: Half-symmetry view of the GE-14 Dominant (DOM) fuel lattice. Image generated by the authors using CASMO-5.
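Figure 2 shows only half of the lattice, presumably because the lattice is treated as symmetric about a diagonal. In that case an optimizer only needs to specify the pins on one side of it. A minimal sketch of that bookkeeping follows. It assumes the symmetry line is the main diagonal of a square pin map. `expand_half_symmetry` and `n_free_positions` are hypothetical helpers, and real GE-14 details such as water-rod positions are not modeled.

```python
from typing import Any, List, Sequence


def expand_half_symmetry(lower: Sequence[Sequence[Any]]) -> List[List[Any]]:
    """Mirror a lower-triangular pin map (diagonal included) into a full square map.

    lower[i] must hold the values for columns 0..i of row i. The symmetry line is
    assumed to be the main diagonal, so pin (i, j) equals pin (j, i).
    """
    n = len(lower)
    full: List[List[Any]] = [[None] * n for _ in range(n)]
    for i, row in enumerate(lower):
        if len(row) != i + 1:
            raise ValueError(f"row {i} has {len(row)} entries, expected {i + 1}")
        for j, value in enumerate(row):
            full[i][j] = value  # position given explicitly
            full[j][i] = value  # its mirror image across the diagonal
    return full


def n_free_positions(n: int) -> int:
    """Number of independent positions in an n x n lattice with half symmetry."""
    return n * (n + 1) // 2


if __name__ == "__main__":
    print(expand_half_symmetry([["A"], ["B", "C"]]))  # [['A', 'B'], ['B', 'C']]
    print(n_free_positions(10), "of", 10 * 10)        # 55 of 100
```

For a 10 x 10 map, the symmetry roughly halves the number of design variables the LLM has to propose.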

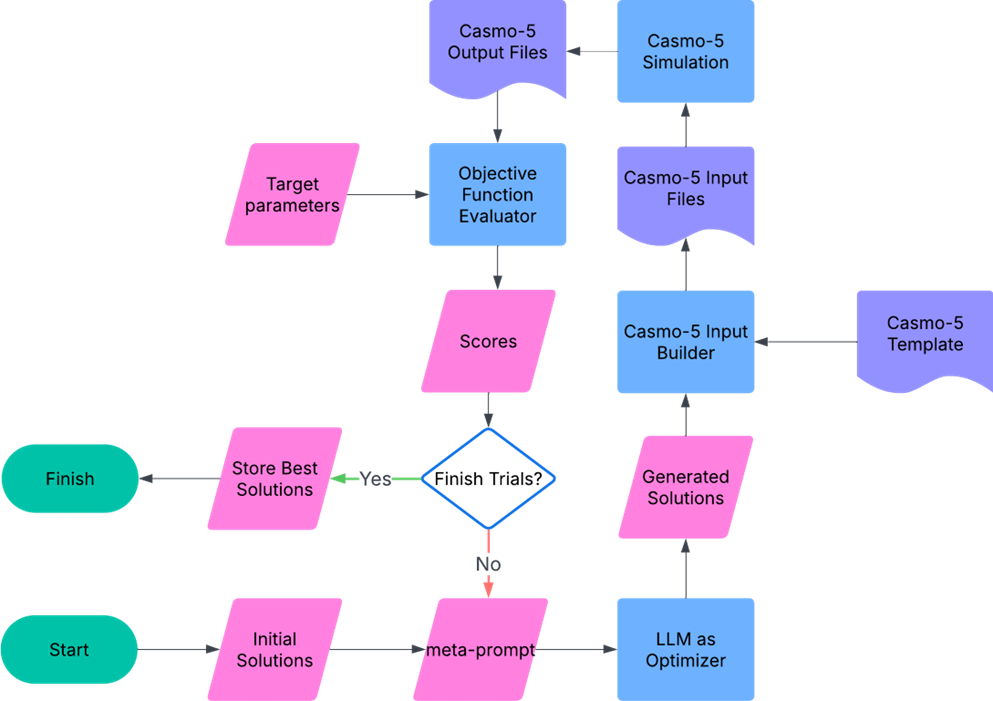

The objective function penalizes deviations from the desired performance metrics, so solutions that violate the reactivity or power-peaking constraints receive worse scores. Each LLM-generated candidate is evaluated with CASMO-5, and the resulting scores guide the iterative exploration and refinement of the parameter space.
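One common way to encode such a penalized objective is sketched below. The paper's exact functional form, targets, and weights are not reproduced here. `objective`, the k∞ target of 1.05, the tolerance band `k_tol`, the peaking limit of 1.35, and the weights `w_k` and `w_ppf` are all hypothetical values, chosen only to make the example concrete.

```python
def objective(k_inf: float, ppf: float,
              k_target: float = 1.05, k_tol: float = 0.01,
              ppf_limit: float = 1.35,
              w_k: float = 100.0, w_ppf: float = 10.0) -> float:
    """Score a lattice from its k-infinity and power peaking factor (higher is better).

    All targets, tolerances, and weights are hypothetical placeholders.
    """
    score = -ppf                          # lower peaking is always rewarded
    k_dev = abs(k_inf - k_target)
    if k_dev > k_tol:                     # reactivity outside the allowed band
        score -= w_k * (k_dev - k_tol)
    if ppf > ppf_limit:                   # peaking above the limit
        score -= w_ppf * (ppf - ppf_limit)
    return score


if __name__ == "__main__":
    print(objective(1.050, 1.30))  # feasible: the score is just -PPF
    print(objective(1.070, 1.30))  # penalized for missing the k-infinity band
```

The large weight on the reactivity term makes meeting the k∞ target dominate the score. Within the feasible region, lower peaking then breaks ties.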

Comparison of Optimization Strategies

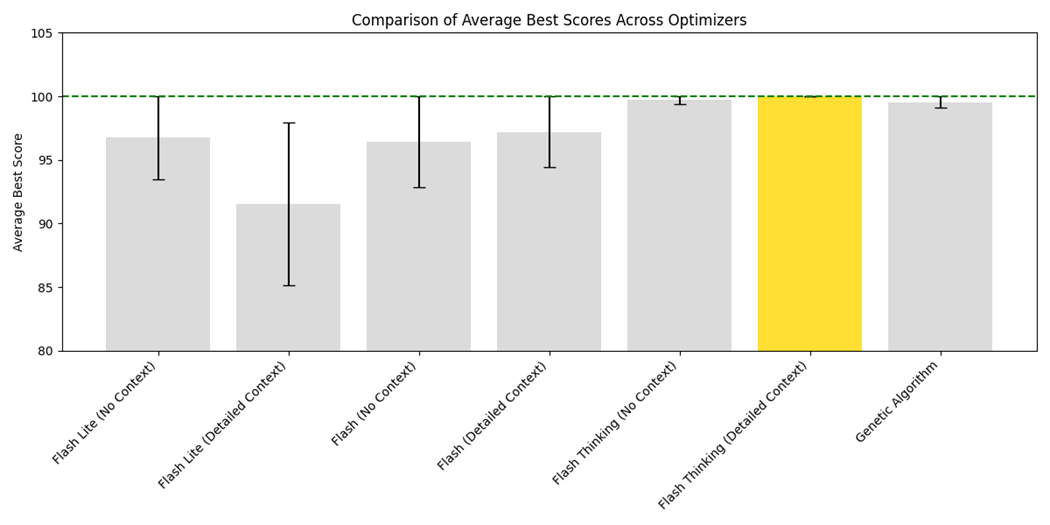

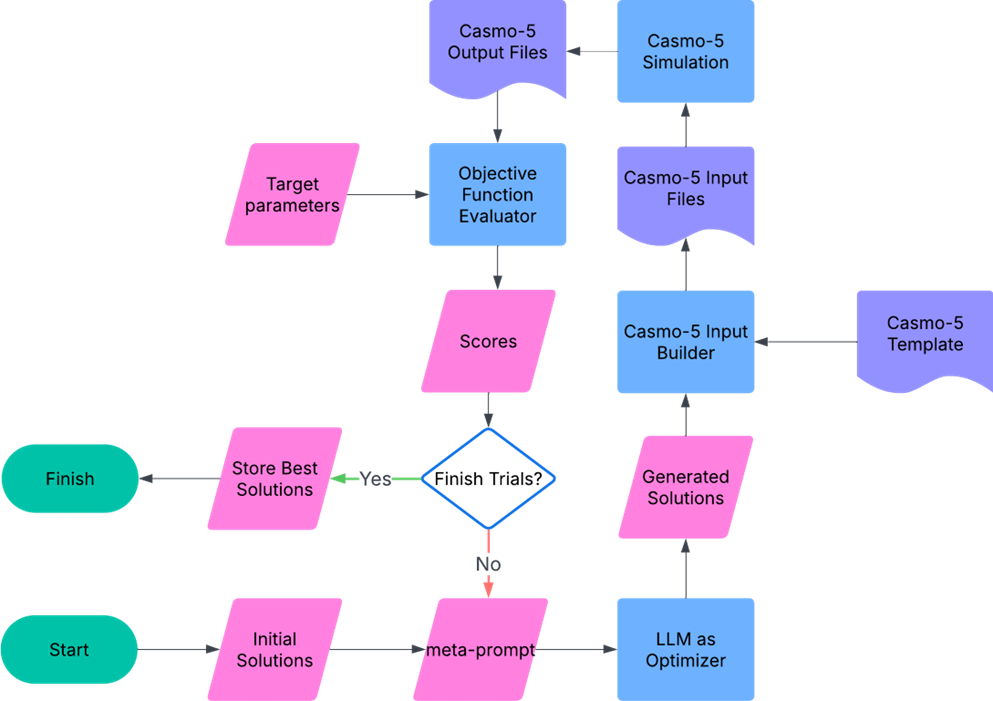

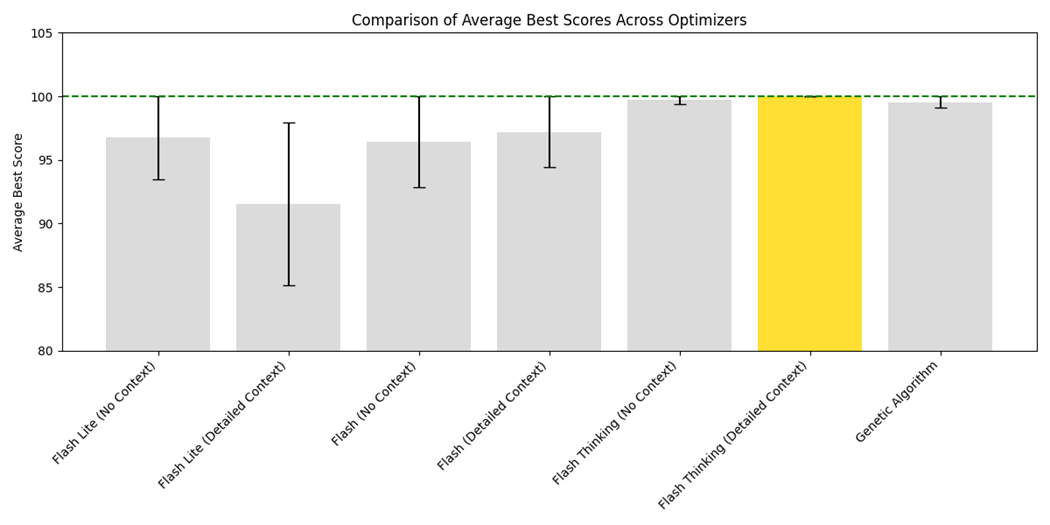

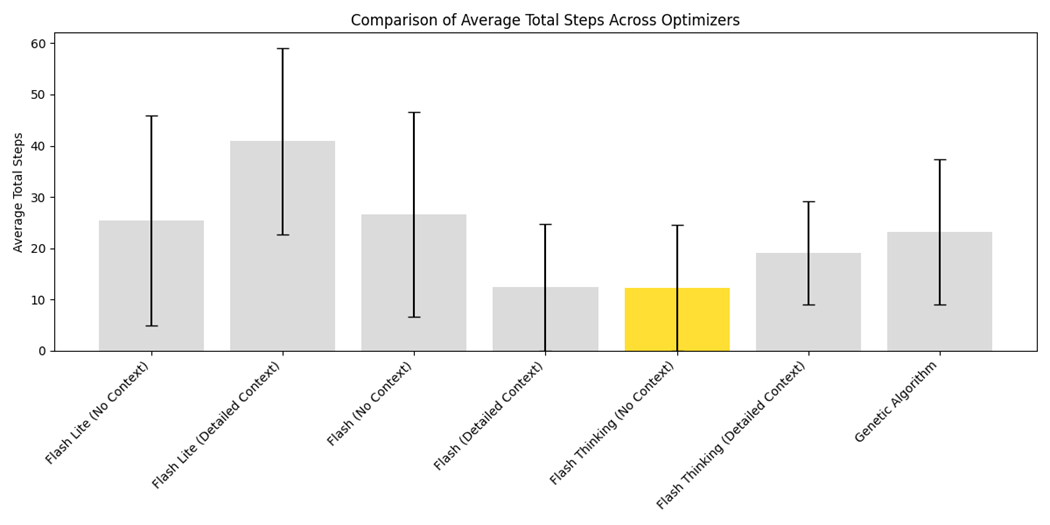

The study compares three variants of Google Gemini 2.0 (Flash Lite, Flash, and Flash Thinking) under two prompting strategies: detailed context and no context. The Flash Thinking model consistently achieves the best scores, particularly when given detailed contextual information, underscoring the importance of prompt engineering for LLM performance.

Figure 3: Flowchart of optimization by LLM prompting with CASMO-5 evaluations.

Figure 4: Comparison of Average Best Scores Across Optimizers for Different Prompting Strategies. The yellow bar highlights the optimal approach.

Figure 5: Average Steps Required for Convergence Across Optimizers and Prompting Strategies. The yellow bar highlights the optimal approach.
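The two metrics in Figures 4 and 5 can be computed from per-run score traces roughly as follows. This sketch rests on assumptions. Each run is taken to be a list of scores in evaluation order, with higher scores better. "Steps to convergence" is taken to mean the number of evaluations a run needs to first reach its own final best score. The paper may define convergence differently, and the function names are hypothetical.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def best_so_far(scores: Sequence[float]) -> List[float]:
    """Running maximum of a run's scores."""
    curve: List[float] = []
    best = float("-inf")
    for s in scores:
        best = max(best, s)
        curve.append(best)
    return curve


def steps_to_convergence(scores: Sequence[float], tol: float = 1e-9) -> int:
    """Evaluations needed to first come within tol of the run's final best score."""
    if not scores:
        raise ValueError("empty run")
    curve = best_so_far(scores)
    final = curve[-1]
    for step, value in enumerate(curve, start=1):
        if final - value <= tol:
            return step
    return len(curve)  # not reached in practice: the last entry always equals final


def summarize(runs: Sequence[Sequence[float]]) -> Tuple[float, float]:
    """Average best score and average steps to convergence across repeated runs."""
    return (mean(max(run) for run in runs),
            mean(steps_to_convergence(run) for run in runs))


if __name__ == "__main__":
    runs = [[1.0, 3.0, 2.0, 3.0], [0.0, 0.5, 4.0]]
    print(summarize(runs))  # (3.5, 2.5)
```

Averaging over repeated runs matters here because LLM sampling is stochastic, so a single run says little about an optimizer's typical behavior.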

The LLM-based approaches perform competitively with traditional genetic algorithms. This suggests that LLMs can navigate complex optimization landscapes efficiently without manual tuning or precise mathematical formulations.
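For context, a genetic-algorithm baseline of the general kind referred to above can be sketched in a few dozen lines. This summary does not describe the paper's GA configuration, so the sketch is a generic textbook variant with hypothetical settings. It uses real-valued genes, binary tournament selection, uniform crossover, Gaussian mutation, and elitism. Unlike the prompt-driven approach, it exposes tuning parameters (`pop_size`, `mutation_sigma`, and so on) that often need adjustment per problem.

```python
import random
from typing import Callable, List, Tuple

Genome = List[float]


def genetic_algorithm(fitness: Callable[[Genome], float],
                      n_genes: int,
                      bounds: Tuple[float, float],
                      pop_size: int = 20,
                      generations: int = 50,
                      mutation_sigma: float = 0.3,
                      seed: int = 0) -> Tuple[Genome, float, List[float]]:
    """Maximize fitness with a simple elitist GA; returns (best, best_score, best_per_generation)."""
    rng = random.Random(seed)
    lo, hi = bounds

    def clip(x: float) -> float:
        return min(hi, max(lo, x))

    pop = [[rng.uniform(lo, hi) for _ in range(n_genes)] for _ in range(pop_size)]
    best_curve: List[float] = []
    for _ in range(generations):
        scored = sorted(((fitness(g), g) for g in pop), key=lambda p: p[0], reverse=True)
        best_curve.append(scored[0][0])

        def tournament() -> Genome:
            a, b = rng.sample(scored, 2)              # binary tournament
            return a[1] if a[0] >= b[0] else b[1]

        children = [scored[0][1]]                     # elitism: carry the best over unchanged
        while len(children) < pop_size:
            p1, p2 = tournament(), tournament()
            child = [g1 if rng.random() < 0.5 else g2 for g1, g2 in zip(p1, p2)]  # uniform crossover
            child = [clip(g + rng.gauss(0.0, mutation_sigma)) for g in child]     # Gaussian mutation
            children.append(child)
        pop = children
    best_score, best = max(((fitness(g), g) for g in pop), key=lambda p: p[0])
    return best, best_score, best_curve


if __name__ == "__main__":
    def toy(g: Genome) -> float:
        """Hypothetical toy objective with its maximum at every gene equal to 2.5."""
        return -sum((x - 2.5) ** 2 for x in g)

    best, score, _ = genetic_algorithm(toy, n_genes=2, bounds=(0.0, 5.0))
    print(best, score)
```

Elitism guarantees that the best score never decreases from one generation to the next.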

Implementation and Challenges

Implementing OPRO involves crafting meta-prompts tailored to the domain, using an LLM to generate candidate solutions, and evaluating them with high-fidelity simulations. The paper reports that the method is straightforward to implement. It requires few resources beyond the LLM itself and a suitable evaluation script.
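The LLM returns free text, so the evaluation script has to extract and check each candidate before spending a simulation on it. The sketch below makes a hypothetical assumption: the meta-prompt asks for a JSON array with one object per pin position, each carrying an `enrichment` field (wt% U-235) and a `gd` field (wt% Gd2O3). The field names, units, and bounds in `BOUNDS` are placeholders, not the paper's constraints. A validated candidate would then be written into a CASMO-5 input by the authors' own tooling, which is not shown here.

```python
import json
import re
from typing import Dict, List, Tuple

# Hypothetical per-pin bounds: enrichment in wt% U-235, gd in wt% Gd2O3.
BOUNDS: Dict[str, Tuple[float, float]] = {"enrichment": (0.0, 5.0), "gd": (0.0, 8.0)}


def parse_candidate(response: str, n_pins: int) -> List[Dict[str, float]]:
    """Extract a JSON array of pins from an LLM reply and reject invalid candidates."""
    match = re.search(r"\[.*\]", response, re.DOTALL)  # from the first '[' to the last ']'
    if match is None:
        raise ValueError("no JSON array found in LLM response")
    pins = json.loads(match.group(0))                  # JSONDecodeError is a ValueError
    if not isinstance(pins, list) or len(pins) != n_pins:
        raise ValueError(f"expected a list of {n_pins} pins")
    for i, pin in enumerate(pins):
        if not isinstance(pin, dict):
            raise ValueError(f"pin {i} is not an object")
        for key, (lo, hi) in BOUNDS.items():
            value = pin.get(key)
            # bool is a subclass of int in Python, so exclude it explicitly
            if isinstance(value, bool) or not isinstance(value, (int, float)) or not lo <= value <= hi:
                raise ValueError(f"pin {i}: {key}={value!r} is missing or outside [{lo}, {hi}]")
    return pins


if __name__ == "__main__":
    reply = 'Here is my proposal: [{"enrichment": 4.4, "gd": 0}, {"enrichment": 3.95, "gd": 5.0}]'
    print(parse_candidate(reply, n_pins=2))
```

Rejecting a malformed or out-of-bounds reply costs almost nothing, whereas a wasted simulation does not. Rejected replies can also be reported back to the LLM in the next prompt.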

However, scalability becomes a challenge as problem complexity grows. In higher-dimensional problems, encoding candidate solutions and their history as text produces longer prompts that may exceed the LLM's context window. The risk of model hallucination also rises with complexity, which can lead to suboptimal or erroneous solutions.
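One mitigation for the context-window problem, in the spirit of OPRO's practice of showing only top-scoring solutions, is to include only as many of the best past solutions as fit a size budget. In the sketch below, `trim_history`, `render`, and the budget are hypothetical, and character count is a crude stand-in for a real tokenizer. The sketch also shows why dimensionality hurts: as each rendered solution grows longer, fewer past solutions fit in the same budget.

```python
from typing import Callable, List, Sequence, Tuple, TypeVar

S = TypeVar("S")


def trim_history(history: Sequence[Tuple[S, float]],
                 budget_chars: int,
                 render: Callable[[S, float], str]) -> List[Tuple[S, float]]:
    """Keep the highest-scoring entries whose rendered lines fit in budget_chars.

    Character count is a rough proxy for tokens. The result is returned in
    ascending order of score, ready to be placed in a meta-prompt.
    """
    kept: List[Tuple[S, float]] = []
    used = 0
    for solution, score in sorted(history, key=lambda p: p[1], reverse=True):
        cost = len(render(solution, score)) + 1  # +1 for the newline separator
        if used + cost > budget_chars:
            break                                # the next-best entry no longer fits
        kept.append((solution, score))
        used += cost
    return sorted(kept, key=lambda p: p[1])


if __name__ == "__main__":
    def line(s: str, sc: float) -> str:
        return f"{s} -> {sc}"

    history = [("aaaa", 1.0), ("bbbb", 3.0), ("cccc", 2.0)]
    print(trim_history(history, budget_chars=24, render=line))  # [('cccc', 2.0), ('bbbb', 3.0)]
```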

Conclusion

The research demonstrates how LLMs can be applied to engineering optimization, with the potential to simplify and accelerate solution generation for complex design problems. While promising, the approach requires careful prompt design and model selection to mitigate shortcomings related to complexity and scalability. These findings open avenues for further research into domain-specific LLM applications, particularly in fields that demand sophisticated optimization techniques.