Reconstructing Humans with a Biomechanically Accurate Skeleton

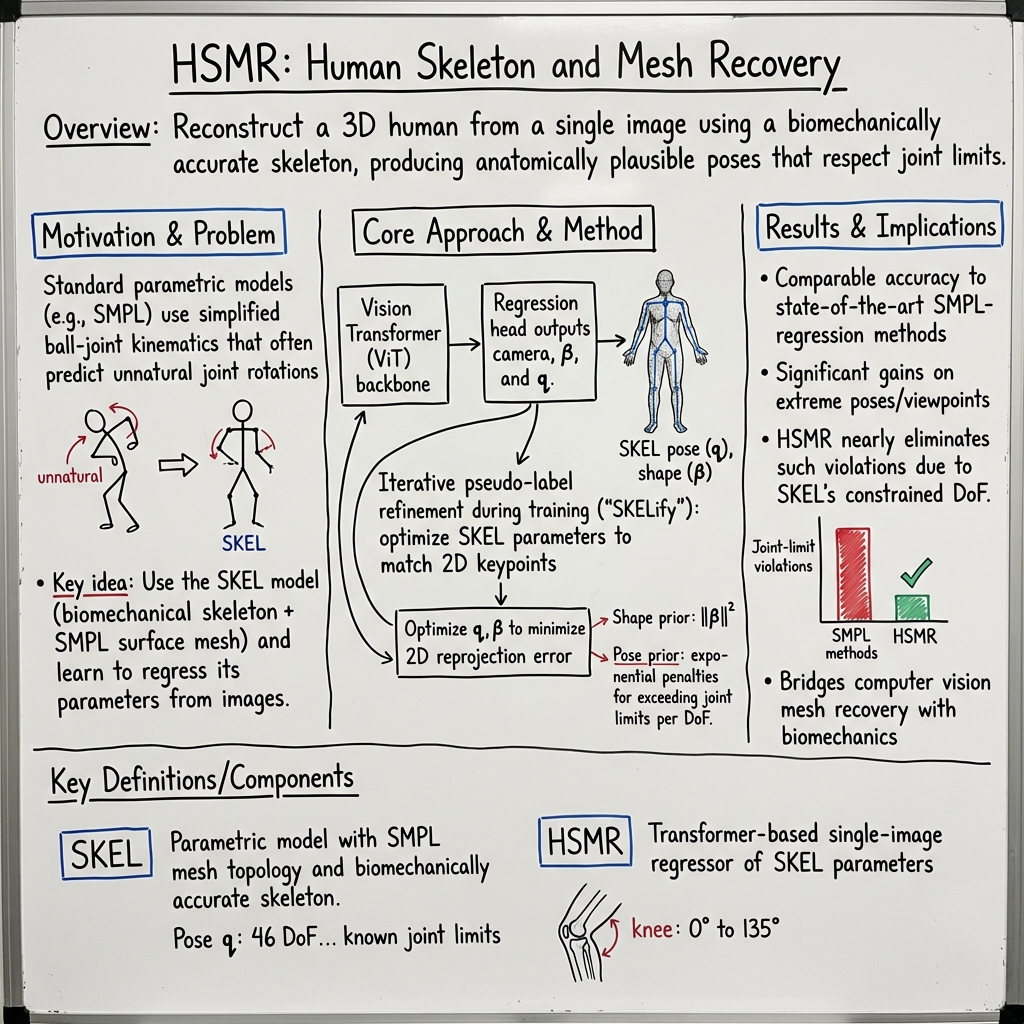

Abstract: In this paper, we introduce a method for reconstructing 3D humans from a single image using a biomechanically accurate skeleton model. To achieve this, we train a transformer that takes an image as input and estimates the parameters of the model. Due to the lack of training data for this task, we build a pipeline to produce pseudo ground truth model parameters for single images and implement a training procedure that iteratively refines these pseudo labels. Compared to state-of-the-art methods for 3D human mesh recovery, our model achieves competitive performance on standard benchmarks, while it significantly outperforms them in settings with extreme 3D poses and viewpoints. Additionally, we show that previous reconstruction methods frequently violate joint angle limits, leading to unnatural rotations. In contrast, our approach leverages the biomechanically plausible degrees of freedom making more realistic joint rotation estimates. We validate our approach across multiple human pose estimation benchmarks. We make the code, models and data available at: https://isshikihugh.github.io/HSMR/

Explain it Like I'm 14

What is this paper about?

This paper shows how to turn a single photo of a person into a full 3D “digital human” that has both a surface (the skin you see) and a skeleton inside that moves like a real human body. The key idea is to use a more realistic skeleton model—called SKEL—that follows how real joints actually work, so the results look natural and are useful for science and sports.

What were the authors trying to do?

In simple terms, they wanted to:

- Build a system that can look at one image of a person and guess their 3D body shape and pose with a skeleton that bends only in natural ways.

- Avoid common fake-looking poses, like knees twisting in directions they can’t in real life.

- Work even on tricky photos with unusual poses (like yoga) or odd camera angles.

- Do this fast, without needing special motion-capture gear.

How did they do it?

Think of a 3D character as two parts: a “mesh” (the skin or outer shape) and a “skeleton” (the bones and joints inside). Many past systems got the mesh looking good but used a simple, unrealistic skeleton that let joints spin in ways humans can’t. This paper uses SKEL, a skeleton designed with real biomechanical rules (for example, a knee bends like a hinge and has clear angle limits).

Here’s their approach, explained with everyday ideas:

- The smart reader (Transformer): They use a vision transformer (a powerful image-recognizing neural network) that looks at the input photo and predicts:

  - the person’s body shape (how tall, slim, muscular, etc.),

  - the pose (how each joint is bent),

  - and the camera view (so the 3D body lines up with the picture).

- Fewer, more realistic knobs to turn: In many models, each joint can rotate freely in three directions. SKEL is more realistic, so it uses fewer “knobs” (46 instead of 72) and each knob has a natural range (for example, a knee can flex up to about 135°, but not twist sideways like a ball joint).

- Teaching without perfect answer keys: There was no big dataset of photos labeled with SKEL’s exact settings. So the authors created “pseudo labels” (best-guess answers) in two steps:

  1. Convert from older models: Use existing datasets that had labels for a simpler model (called SMPL) and fit SKEL to match them. This gives OK starting labels but isn’t perfect.

  2. Iterate and improve (“SKELify”): During training, they repeatedly fine-tune these labels by fitting the 3D skeleton so its projected 2D joints line up with the 2D keypoints on the image (like aligning a mannequin to a photo). They also add penalties if any joint tries to bend beyond its real-life limits. Over time, the labels get better, and the network learns more accurately.

- Smoother way to learn rotations: Instead of directly predicting angles (which can be tricky because angles “wrap around”), the network predicts a smooth rotation representation and then converts it back to the angles SKEL expects. This makes learning more stable and accurate.
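
To make the last bullet concrete, here is a minimal Python sketch of one widely used continuous representation: six numbers are turned into a proper rotation matrix by Gram–Schmidt orthonormalization, and an angle is then read back out. The function names, the column layout of the matrix, and the "hinge about the z axis" angle extraction are illustrative assumptions. The summary above does not spell out SKEL's per-joint axis conventions, so this is a sketch of the idea, not the authors' code.

```python
import math

def normalize(v):
    """Scale a 3-vector to unit length."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def rotation_from_6d(r6):
    """Map 6 unconstrained numbers to a 3x3 rotation matrix.

    The two input 3-vectors are orthonormalized (Gram-Schmidt) to give the
    first two columns; the third column is their cross product.
    """
    a1, a2 = r6[:3], r6[3:]
    b1 = normalize(a1)
    proj = dot(b1, a2)  # component of a2 along b1
    b2 = normalize([y - proj * x for x, y in zip(b1, a2)])
    b3 = cross(b1, b2)
    # Assemble row by row, with b1, b2, b3 as columns.
    return [[b1[i], b2[i], b3[i]] for i in range(3)]

def hinge_angle_about_z(R):
    """Angle (radians) of a rotation assumed to be purely about the z axis."""
    return math.atan2(R[1][0], R[0][0])

# A noisy, unnormalized encoding of a 30-degree rotation about z.
theta = math.radians(30)
r6 = [2 * math.cos(theta), 2 * math.sin(theta), 0.0,
      -math.sin(theta) + 0.5 * math.cos(theta),
      math.cos(theta) + 0.5 * math.sin(theta), 0.0]
R = rotation_from_6d(r6)
recovered = hinge_angle_about_z(R)
```

Because any six numbers with two linearly independent halves map to a valid rotation, the network never has to deal with the jump where an angle wraps around; the angle is only recovered at the end.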

What did they find, and why is it important?

Here are the main results, explained simply:

- Matches the best on regular poses: On common benchmarks (standard photos and everyday poses), their method performs about as well as the top systems that use simpler skeletons.

- Better on hard poses: On a challenging dataset with yoga and unusual views (MOYO), their method is clearly better—errors drop by more than a centimeter on average. The realistic skeleton helps the system avoid strange guesses when the pose is extreme.

- More natural joints: Older methods often produced elbows and knees that bent in impossible ways. The authors measured how often joint limits were broken and found that SKEL-based predictions almost never violate natural angles, while many older methods did so surprisingly often.

- Faster than optimization-only approaches: A baseline that fits SKEL by heavy optimization takes minutes per image. Their learned model produces results quickly, which is practical for real use.
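
As a rough illustration of how "how often joint limits were broken" can be counted, the sketch below computes the fraction of predicted angles that fall outside an allowed range. The function name, the tolerance parameter, the lower bound of 0°, and the sample angles are all hypothetical; only the approximate 135° knee flexion limit comes from the text above, and the paper's exact measurement protocol is not reproduced here.

```python
def violation_rate(angles_deg, lower_deg, upper_deg, tolerance_deg=0.0):
    """Fraction of angles outside [lower - tolerance, upper + tolerance]."""
    if not angles_deg:
        raise ValueError("need at least one angle")
    violations = sum(
        1 for a in angles_deg
        if a < lower_deg - tolerance_deg or a > upper_deg + tolerance_deg
    )
    return violations / len(angles_deg)

# Upper limit taken from the text (about 135 degrees); lower limit is assumed.
KNEE_LIMITS_DEG = (0.0, 135.0)

# Made-up knee flexion predictions, in degrees, for five images.
predicted_knee_flexion = [10.0, 95.0, 140.0, -20.0, 134.0]

strict_rate = violation_rate(predicted_knee_flexion, *KNEE_LIMITS_DEG)
lenient_rate = violation_rate(predicted_knee_flexion, *KNEE_LIMITS_DEG,
                              tolerance_deg=5.0)
```

A small tolerance is a design choice: it keeps predictions that sit barely past a limit from being counted the same as a knee bent backwards.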

Why this matters:

- Real-world science and sports need realism: Biomechanics, physical therapy, sports analysis, and robotics need bodies that move like real humans. This method provides that, directly from a single photo.

- Fewer strange artifacts: The results look more natural and are more trustworthy for simulations and training.

What could this lead to?

- Better tools for biomechanics: Researchers and clinicians could analyze movement more accurately without lab gear, opening doors for remote assessments or large-scale studies.

- More believable animation and AR/VR: Games, movies, and virtual worlds can get more realistic motions from simple inputs.

- Safer robots and wearables: Using human-like constraints helps robots and assistive devices interact more safely and effectively with people.

- Future improvements: With true 3D ground-truth data and video training, the method could get even smoother and more accurate over time, and it could be plugged into physics simulations to ensure motions are not just anatomically valid but also physically consistent.

Final takeaway

The paper introduces a way to turn a single picture into a 3D human with a skeleton that obeys real joint limits. It learns this despite not having perfect labels by creating and refining its own training data. The result is a system that’s competitive on everyday photos, better on extreme poses, and much more realistic in how joints bend—making it a strong step toward practical, science-ready 3D human reconstruction.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a concise list of what remains missing, uncertain, or unexplored in the paper, framed to enable concrete follow-up studies.

- Lack of ground-truth SKEL-labeled images: No dataset exists with images paired to validated SKEL parameters. Collect multi-view motion-capture data and derive SKEL parameters via OpenSim to quantify how much pseudo-label noise limits performance.

- Unquantified pseudo-label error: The SMPL-to-SKEL conversion and SKELify refinement are error-prone, but their error statistics and impact on downstream metrics are not measured. Benchmark conversion error (mesh, joint angles, bone lengths) against motion-capture-based SKEL fits.

- Potential confirmation bias in iterative refinement: The model’s own predictions seed SKELify, risking self-reinforcement of errors. Study convergence, stability, and bias by varying initialization strategies and refinement schedules.

- Absence of a learned SKEL pose prior: Only joint-limit penalties are used in SKELify; there is no learned prior for anatomically plausible pose. Train and evaluate a learned SKEL pose prior (e.g., from AddBiomechanics/AMASS-like resources) and measure its effect on accuracy and violations.

- Joint-limit constraints not integrated in end-to-end training: Limits are only enforced during SKELify but not as a differentiable loss during network training. Incorporate differentiable joint-limit penalties or bounded angle parameterizations and assess gains.

- Limited biomechanical validation beyond joint-angle limits: The model is not evaluated on biomechanical metrics like inverse kinematics/dynamics consistency, joint moments, or muscle-feasible kinematics. Integrate into OpenSim and quantify downstream biomechanical fidelity.

- Scale ambiguity and camera modeling: The paper does not evaluate absolute scale accuracy or camera parameter reliability. Measure absolute joint/vertex error (not PA metrics) under known intrinsics and known subject heights.

- Subject-specific skeletal scaling and bone-length accuracy: SKEL shares SMPL’s shape parameters, but the fidelity of resulting bone lengths is not validated. Evaluate bone-length errors and subject-specific scaling (including children, elderly, and atypical proportions).

- Generalization to clinically and athletically relevant motions: Evaluation focuses on standard pose benchmarks and yoga (MOYO). Test on clinical gait, sport motions, and rehabilitation datasets; measure accuracy and biomechanical plausibility.

- Robustness under occlusion, truncation, and heavy clothing: No targeted evaluation under severe occlusion or foreshortening. Benchmark on occlusion-heavy subsets (e.g., CrowdPose, OCHuman) with SKEL metrics.

- Limited joint coverage in biomechanical checks: The violation analysis covers only elbows and knees. Extend it to hips, shoulders, wrists, ankles, spine, and scapulothoracic articulation; include foot/ankle DOFs and evaluate contact-consistent poses.

- Absence of contact and physical constraints: No modeling of foot–ground contact, balance, or self-collision. Add contact losses and collision penalties and analyze improvements in plausibility and error, especially for standing/squatting tasks.

- Single-image setting only: Temporal jitter is noted, but no temporal model is provided. Develop and assess video-based SKEL recovery with temporal consistency, contact, and dynamics regularization.

- Uncertainty quantification: The model outputs deterministic parameters without confidence measures. Provide calibrated uncertainties for joint angles and bone lengths and study their usefulness for clinical or robotics applications.

- Inference speed and deployment readiness: Only the SKEL-fit baseline’s runtime is reported; HSMR’s real-time feasibility is not. Report and optimize inference latency on GPUs/edge devices for practical use.

- Euler angle conversion and potential singularities: The approach converts from continuous rotations to Euler angles for SKEL. Investigate failure modes (e.g., gimbal singularities) and test bounded reparameterizations (e.g., tanh-mapped angles) against limits.

- Comparative analysis vs. SMPL with joint-limit regularization: It remains unclear whether adding joint-limit losses to SMPL methods narrows the gap to SKEL. Run controlled ablations that endow SMPL baselines with joint-limit penalties for a fair comparison.

- Self-penetration and mesh–skeleton consistency: The system does not check self-collisions or skin–skeleton consistency. Quantify self-penetrations and assess whether adding collision terms improves anatomical validity.

- Evaluation on OpenCapBench and other biomechanics benchmarks: The paper acknowledges OpenCapBench but does not evaluate on it. Re-test HSMR on OpenCapBench and AddBiomechanics-derived benchmarks when available.

- Multi-person and crowded-scene handling: The system assumes single-person crops. Extend detection, association, and SKEL regression to multi-person scenes and measure performance under crowding.

- Demographic and shape diversity: Training uses HMR2.0 data with unknown demographic balance. Assess fairness and performance across age groups, body mass index ranges, and populations (including pathological gait).

- Hands and face integration: Only the body is modeled. Explore integrating anatomical hand and face models with joint-limit-aware priors for full-body applications.

- Failure case characterization and mitigation: Motion blur, rare viewpoints, and extreme poses remain challenging. Quantify error by failure type and evaluate data augmentation or synthetic training (e.g., domain randomized renders) as remedies.

- Reproducibility of iterative refinement: Implementation details of the periodic batch SKELify procedure are deferred to supplementary material. Provide explicit schedules, hyperparameters, stopping criteria, and sensitivity analyses.
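
Two of the follow-ups listed above (a differentiable joint-limit penalty and a tanh-mapped bounded angle) are simple enough to sketch. The Python below is a hypothetical illustration of those suggestions, not something the paper is stated to implement; the function names and the 0° to 135° example range are assumptions.

```python
import math

def bounded_angle(raw, lower, upper):
    """Map an unconstrained value to an angle within [lower, upper] via tanh."""
    mid = 0.5 * (upper + lower)
    half_range = 0.5 * (upper - lower)
    return mid + half_range * math.tanh(raw)

def limit_penalty(angle, lower, upper):
    """Quadratic penalty that is zero inside the limits and grows outside."""
    excess = max(0.0, lower - angle) + max(0.0, angle - upper)
    return excess ** 2

LOWER_DEG, UPPER_DEG = 0.0, 135.0  # assumed example range for a hinge joint

# Whatever the raw network output is, the mapped angle stays within the range.
mapped = [bounded_angle(raw, LOWER_DEG, UPPER_DEG)
          for raw in (-50.0, -1.0, 0.0, 1.0, 50.0)]

inside_cost = limit_penalty(50.0, LOWER_DEG, UPPER_DEG)    # within limits
outside_cost = limit_penalty(140.0, LOWER_DEG, UPPER_DEG)  # 5 degrees past
```

The two options trade off differently: the tanh mapping makes violations impossible by construction but flattens gradients near the limits, while the penalty leaves the angle free and only discourages violations.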

Practical Applications

Immediate Applications

Below is a concise set of practical, deployable applications that can leverage the paper’s findings and tools now. Each item notes sectors, likely tools/workflows, and key assumptions or dependencies.

- Biomechanics lab workflows for markerless kinematics

- Sectors: healthcare research, sports science, academia

- Tools/workflows: use HSMR to extract SKEL joint angles from single images or per-frame videos; export to OpenSim for inverse kinematics/dynamics; apply joint-limit-aware analytics (e.g., knee flexion, elbow extension range)

- Assumptions/dependencies: reliable 2D keypoint detector for SKELify-like refinement when needed; camera parameters and subject scale estimation; single-person capture; GPU for near-real-time; not yet clinically validated for diagnostic use

- Quality assurance for SMPL-based pipelines

- Sectors: computer vision, animation/VFX, software tooling

- Tools/workflows: run a joint-limit violation checker on SMPL outputs; auto-convert problematic frames to SKEL via HSMR or SKELify to correct unnatural rotations; integrate as a CI step for datasets or production pipelines

- Assumptions/dependencies: access to SMPL meshes; availability of HSMR model and conversion scripts; tolerance for occasional failure cases due to occlusion or motion blur

- AR/VR avatar calibration from a single photo

- Sectors: AR/VR, gaming, consumer apps

- Tools/workflows: infer SKEL parameters to rig avatars with anatomically constrained joints; reduce pose artifacts in in-app screenshots, profile pictures, and quick rig setups; plug-ins for Unity/Unreal/Blender/Maya

- Assumptions/dependencies: single-view capture, good lighting and minimal occlusion; shape estimation within SMPL/SKEL shape space; not yet optimized for real-time full-body tracking

- Sports coaching and yoga guidance

- Sectors: fitness tech, consumer wellness

- Tools/workflows: smartphone apps compute joint angles (e.g., hip, knee, shoulder) from single images; provide pose feedback consistent with biomechanical limits; MOYO-like extreme pose robustness helps yoga/form coaching

- Assumptions/dependencies: camera placement guidance; non-medical claims; calibration for scale if absolute angles/ROM are needed

- Ergonomics screening from workplace photos

- Sectors: occupational health, EHS (environment, health & safety)

- Tools/workflows: assess joint angles against ergonomic guidelines (e.g., OSHA/NIOSH-referenced ranges) from snapshot imagery; flag risky postures with joint-limit-aware kinematics

- Assumptions/dependencies: privacy compliance; controlled viewpoints; trained staff to interpret outputs; not a substitute for multi-view measurement in high-stakes scenarios

- Dataset curation and enrichment in academia

- Sectors: computer vision research, biomechanics research

- Tools/workflows: use HSMR + SKELify to convert SMPL-labeled datasets to SKEL parameters; iteratively refine pseudo labels; evaluate under physiological constraints (e.g., OpenCapBench)

- Assumptions/dependencies: adoption of the provided code/models; sufficient computational resources; acceptance of pseudo-label imperfections

- Education in anatomy/kinesiology

- Sectors: education, edtech

- Tools/workflows: interactive tools for demonstrating joint DoFs and rotation limits; classroom exercises turning single photos into SKEL visualizations; visual comparisons of SMPL vs SKEL for pedagogy

- Assumptions/dependencies: simplified setups (single subject, clean background); non-clinical use

- Rapid rigging for VFX/animation

- Sectors: media/entertainment, 3D content creation

- Tools/workflows: single reference image to anatomically constrained rig + surface mesh; reduce manual fix-ups due to unrealistic joint rotations in reference-based character builds

- Assumptions/dependencies: standard graphics toolchain integration; artist review for final tweaks; non-temporal (static pose) focus

- Tele-wellness range-of-motion checks (non-diagnostic)

- Sectors: consumer health, wellness apps

- Tools/workflows: use SKEL angles from single images to approximate ROM (e.g., elbow flexion, knee extension) and give non-medical feedback; track progress over time from repeated snapshots

- Assumptions/dependencies: disclaimers (not medical advice); consistent camera placement; scale calibration if absolute measures are required

- Joint-limit-aware retargeting for mocap cleanup

- Sectors: motion capture, animation

- Tools/workflows: identify frames with violated joint limits; constrain retargeted motion to SKEL’s DoFs; reduce artifacts in retargeting pipelines

- Assumptions/dependencies: motion data access; batch processing; temporal smoothing handled by downstream tools

Long-Term Applications

These applications are promising but need further research, scaling, validation, or engineering (e.g., temporal modeling, robustness, regulatory clearance, multi-person handling, real-time performance).

- Clinical gait and movement analysis from monocular video

- Sectors: healthcare, rehabilitation

- Tools/workflows: end-to-end pipelines integrating HSMR with temporal smoothing and OpenSim; derive clinically relevant metrics (kinematics, kinetics) from commodity cameras; remote assessments

- Assumptions/dependencies: rigorous clinical validation; measurement standardization; camera calibration; regulatory approval (medical device compliance)

- Real-time full-body tracking in consumer VR with biomechanical constraints

- Sectors: AR/VR hardware/software

- Tools/workflows: on-device HSMR and temporal networks for real-time tracking; ensure joint-limit consistency to improve comfort and prevent “uncanny” motion

- Assumptions/dependencies: hardware acceleration; low-latency inference; multi-view or IMU fusion for occlusion robustness

- Human–robot collaboration safety monitoring

- Sectors: robotics, industrial automation

- Tools/workflows: single-camera monitoring of human postures in factories; joint-limit-aware intent estimation to update robot safe zones; integration with safety controllers

- Assumptions/dependencies: robust to occlusions, PPE, varied lighting; certification for safety-critical deployment; privacy and compliance frameworks

- Broadcast-scale sports performance analytics

- Sectors: sports tech, media

- Tools/workflows: analyze athletes’ joint angles and form at scale from broadcast feeds; biomechanical constraint regularization to stabilize estimates under extreme poses

- Assumptions/dependencies: multi-view/video pipelines; temporal smoothing; athlete identification and per-subject scaling; legal rights to process video

- Personalized orthotics and brace pre-design

- Sectors: medtech, biomechanics-driven product design

- Tools/workflows: pipeline from real-world imagery to SKEL-based FEM/differentiable biomechanics (referencing “Differentiable Biomechanics”/brace optimizer); simulate fit and ROM constraints

- Assumptions/dependencies: integration with differentiable simulation; accurate subject-specific scaling; clinical validation and regulatory approval

- Low-cost motion capture replacement for training and film

- Sectors: training simulation, media/entertainment

- Tools/workflows: replace multi-camera rigs with monocular/video + biomechanical constraints; temporal HSMR models to produce production-quality motion

- Assumptions/dependencies: sequence modeling (reduce jitter); occlusion handling; artist QA; hardware optimization

- Policy and standards for biomechanically valid pose estimation

- Sectors: policy, standards bodies

- Tools/workflows: develop benchmarks and compliance criteria emphasizing joint-limit adherence (e.g., extend OpenCapBench); require joint-limit metrics alongside MPJPE/PA-MPJPE for public datasets and tools

- Assumptions/dependencies: consensus among stakeholders; reproducible evaluation tooling; sector-specific acceptance

- Ergonomic risk modeling for insurance and workforce analytics

- Sectors: insurance, HR analytics, EHS

- Tools/workflows: large-scale posture risk scoring from workplace imagery/videos; integrate with injury prevention/claims models

- Assumptions/dependencies: privacy, consent and governance; bias and fairness audits; robust video analytics in varied environments

- Intent prediction and pedestrian modeling for autonomous systems

- Sectors: autonomous vehicles, robotics

- Tools/workflows: biomechanically constrained kinematics as a prior for predicting human motion; reduce “physically impossible” forecasts

- Assumptions/dependencies: temporal forecasting models; diverse dataset coverage; real-time constraints

- Large-scale SKEL-labeled dataset creation and pose priors

- Sectors: academia, industry R&D

- Tools/workflows: use the pseudo-label refinement pipeline to build SKEL ground truth at scale; learn SKEL-specific pose/motion priors for robust downstream models

- Assumptions/dependencies: high-quality pseudo-label refinement; diverse data; open licensing; community adoption

Notes on cross-cutting assumptions and dependencies:

- Single-image limitations: failures may occur under heavy occlusion, motion blur, rare viewpoints; video and temporal models are needed for smooth motions.

- Camera calibration and subject scaling: accurate absolute angle/ROM often requires controlled capture or auxiliary scale reference.

- Hardware: GPU or accelerator improves throughput; mobile deployment requires further optimization.

- Data and validation: current training relies on pseudo labels; clinical/industrial use requires rigorous validation and, in some sectors, regulatory approval.

- Privacy and governance: workplace and healthcare applications necessitate strong privacy, consent, and compliance programs.

Glossary

- Ball (socket) joint: A joint model that allows three independent rotational degrees of freedom at a joint. "the joints are represented as ball (socket) joints, introducing additional degrees of freedom."

- Biomechanically accurate skeleton: A skeletal model whose structure and joint motions adhere to human anatomical constraints. "using a biomechanically accurate skeleton model."

- BSM: The biomechanical skeleton model used within SKEL to provide anatomically realistic joint structures. "a biomechanical skeleton model, BSM."

- Continuous rotation representation: A smooth, over-parameterized rotation encoding (e.g., 6D) used to regress rotations and then map to valid rotation matrices. "we adopt the continuous rotation representation~\cite{zhou2019continuity}"

- Degrees of freedom: Independent parameters that define the allowable motions (e.g., rotations) at a joint. "only models the realistic degrees of freedom."

- Euler angles: A three-parameter orientation representation using ordered axis rotations; in SKEL, each DoF is represented by a single Euler angle. "SKEL represents the pose parameters with Euler angles."

- Gram–Schmidt: An orthonormalization procedure used here to convert continuous rotation representations into proper rotation matrices. "using Gram–Schmidt~\cite{zhou2019continuity}"

- Joint limits: Anatomically plausible bounds on joint rotations that should not be exceeded. "explicit joint rotation limits."

- Kinematic parameters: The parameters (e.g., joint angles) that specify a body’s configuration within a kinematic chain. "SKEL carefully designs the kinematic parameters according to the real human biomechanical structure"

- Kinematic tree: The hierarchical graph of joints and bones describing parent–child relationships in a skeleton. "the kinematic tree does not align with the actual skeletal structure of the human body"

- Mean Per Joint Position Error (MPJPE): A 3D accuracy metric measuring the average Euclidean distance between predicted and ground-truth joint locations. "Mean Per Joint Position Error (MPJPE)"

- Mean Per Vertex Position Error (MPVPE): A 3D mesh accuracy metric measuring the average distance between predicted and ground-truth mesh vertices. "Mean Per Vertex Position Error (MPVPE)"

- Percentage of Correct Keypoints (PCK): A 2D accuracy metric reporting the fraction of keypoints within a normalized distance threshold from ground truth. "Percentage of Correct Keypoints (PCK)"

- Procrustes Alignment: A similarity-transform alignment (rotation, translation, scale) applied before error computation to factor out global pose and scale differences. "Procrustes Alignment version PA-MPJPE"

- Pose prior: A regularization term or model capturing typical, plausible joint configurations to constrain pose estimation. "we do not have an existing pose prior for SKEL."

- Pseudo ground truth: Supervisory labels derived from optimization or fitting rather than true annotations, used to train models. "pseudo ground truth SKEL parameters"

- Reprojection objective: A loss that aligns projected 3D joints with observed 2D keypoints in the image. "we introduce a reprojection objective, "

- Robustifier: A robust loss function that reduces the influence of outliers during optimization. "with the addition of a robustifier~\cite{geman1987statistical}"

- Shape prior: A regularization on shape parameters (e.g., SMPL’s β) that discourages implausible body shapes. "The shape prior is inherited from SMPL"

- SKEL model: A parametric body model combining the SMPL surface with a biomechanical skeleton to enforce anatomically realistic kinematics. "the SKEL model~\cite{keller2023skin}"

- SMPL: A widely used skinned parametric human body model with a fixed mesh topology and simple joint parameterization. "the SMPL model~\cite{loper2015smpl}"

- SMPL-X: An extension of SMPL that includes articulated hands and facial expressions for more expressive body modeling. "SMPL-X~\cite{pavlakos2019expressive}"

- Triangulation: The process of reconstructing 3D points from multiple 2D observations across different camera views. "after triangulation from multiple views"

- Vision Transformer (ViT): A transformer-based neural network architecture for image processing that operates on patch embeddings. "We start with a ViT backbone"
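
The "reprojection objective" and "robustifier" entries above fit together naturally, so here is a minimal Python sketch of how they might combine. It assumes a simple pinhole camera and one common form of the Geman-McClure function; the function names, the focal length, the image center, the `sigma` value, and the sample points are all hypothetical and are not taken from the paper.

```python
def project(point3d, focal, center):
    """Pinhole projection of a 3D point (camera coordinates) to pixels."""
    x, y, z = point3d
    return (focal * x / z + center[0], focal * y / z + center[1])

def geman_mcclure(residual_sq, sigma):
    """Robustifier: roughly quadratic for small errors, capped near sigma**2."""
    return (sigma ** 2) * residual_sq / (sigma ** 2 + residual_sq)

def reprojection_loss(joints3d, keypoints2d, confidences, focal, center,
                      sigma=100.0):
    """Confidence-weighted robust distance between projected 3D joints
    and detected 2D keypoints."""
    total = 0.0
    for p3, k2, conf in zip(joints3d, keypoints2d, confidences):
        u, v = project(p3, focal, center)
        residual_sq = (u - k2[0]) ** 2 + (v - k2[1]) ** 2
        total += conf * geman_mcclure(residual_sq, sigma)
    return total

# Two made-up joints, two metres in front of an assumed camera.
joints3d = [(0.0, 0.0, 2.0), (0.5, 0.0, 2.0)]
focal, center = 1000.0, (500.0, 500.0)
confidences = [1.0, 1.0]

# Keypoints that match the projections exactly, then one gross outlier.
perfect = [(500.0, 500.0), (750.0, 500.0)]
with_outlier = [(500.0, 500.0), (10000.0, 500.0)]

loss_perfect = reprojection_loss(joints3d, perfect, confidences, focal, center)
loss_outlier = reprojection_loss(joints3d, with_outlier, confidences,
                                 focal, center)
```

With a plain squared error, the single bad keypoint would contribute tens of millions to the loss; with the robustifier its contribution stays below `sigma ** 2`, so one wrong detection cannot drag the whole fit.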
