- The paper introduces a co-training strategy that merges simulation and real-world data to significantly enhance robotic manipulation performance.

- It examines the integration of task-aware and task-agnostic simulation data, emphasizing the importance of co-training ratios and data alignment.

- Co-training with simulation data improved task performance by 38% on average over training on real-world data alone, with better policy generalization and robustness.

Sim-and-Real Co-Training: A Simple Recipe for Vision-Based Robotic Manipulation

This paper presents a systematic approach to improving vision-based robotic manipulation by combining simulation and real-world data. Sim-and-real co-training is explored as a way to raise policy performance on real-world tasks while easing the data collection burden in robotics. The authors propose a framework that examines how simulation data should be integrated into training for real-world tasks to improve generalization and task performance.

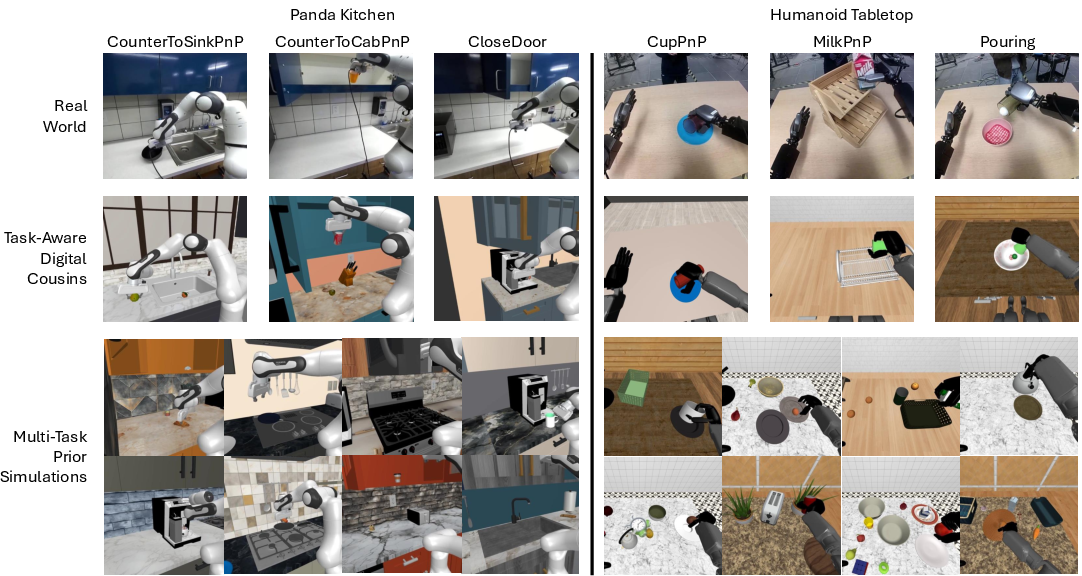

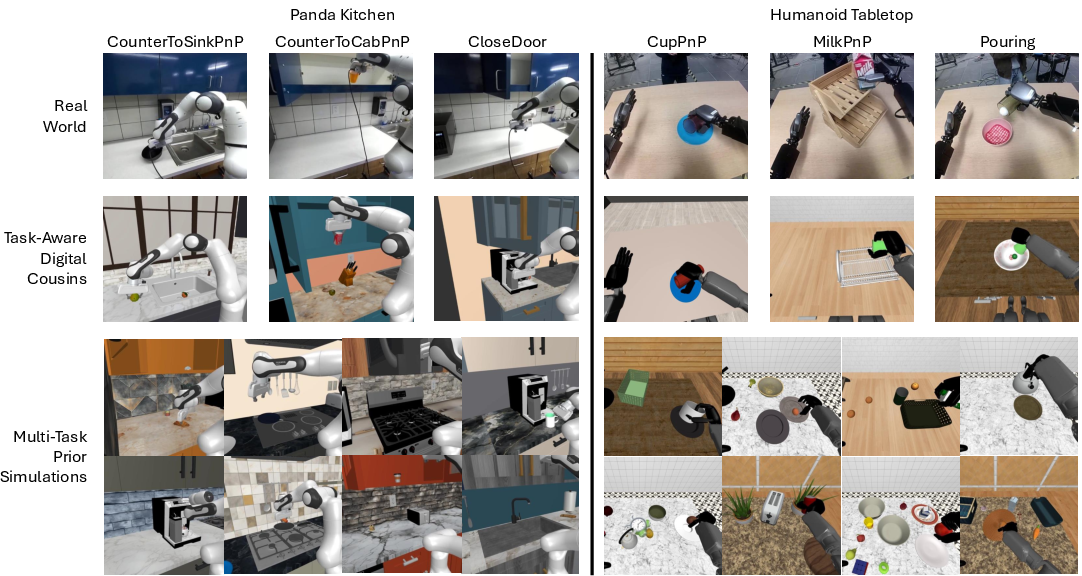

The authors detail a co-training strategy that leverages both real-world robotic demonstration data and synthetic data from simulation environments. The simulation environments are categorized into task-aware digital cousins and task-agnostic prior datasets, each contributing to the learning process in distinct ways.

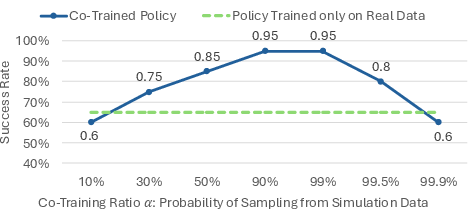

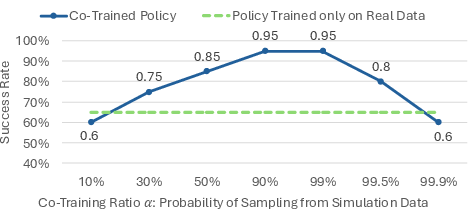

Key insights concern how dataset factors such as task composition, object variation, and camera alignment affect training. The approach emphasizes co-training on diverse data compositions and choosing the co-training ratio carefully, since this ratio balances the influence of simulation and real-world data during training.
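To make the role of the co-training ratio concrete, here is a minimal sketch of ratio-controlled batch sampling. It assumes the ratio (called `alpha` here) is the probability that a training sample is drawn from simulation rather than from real data; the summary above does not spell out the definition, so treat that as an assumption. The function name `sample_cotraining_batch` and the string placeholders standing in for demonstrations are hypothetical and not taken from the paper.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def sample_cotraining_batch(
    real_data: Sequence[T],
    sim_data: Sequence[T],
    alpha: float,
    batch_size: int,
    rng: random.Random,
) -> List[Tuple[str, T]]:
    """Draw one batch; each element comes from simulation with probability alpha.

    Assumption: alpha is the simulation sampling probability, so alpha = 0
    means real-only training and alpha = 1 means simulation-only training.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    batch: List[Tuple[str, T]] = []
    for _ in range(batch_size):
        if rng.random() < alpha:
            # Simulation sample, drawn uniformly from the simulation pool.
            batch.append(("sim", rng.choice(sim_data)))
        else:
            # Real sample, drawn uniformly from the (much smaller) real pool.
            batch.append(("real", rng.choice(real_data)))
    return batch


# Placeholder datasets: strings stand in for demonstration samples.
rng = random.Random(0)
real = [f"real_{i}" for i in range(20)]
sim = [f"sim_{i}" for i in range(1000)]

batch = sample_cotraining_batch(real, sim, alpha=0.9, batch_size=1000, rng=rng)
sim_fraction = sum(1 for source, _ in batch if source == "sim") / len(batch)
print(f"simulation fraction in batch: {sim_fraction:.2f}")
```

Sampling by source first and by item second keeps the mixture independent of the dataset sizes, so adding more simulation data does not silently drown out the real demonstrations.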

Figure 1: Real-World and Simulation Tasks. Shows the three data sources in the Kitchen Panda and Humanoid Tabletop domains: real-world tasks, task-aware digital cousin environments, and prior multi-task simulation data.

Experimental Setup

The study employs two robotic domains: a Panda manipulator in a kitchen environment and a GR-1 humanoid robot in a tabletop setting. Various tasks are defined for each, covering a range of manipulation actions from pick-and-place to complex bimanual tasks.

Co-training experiments show that blending real and simulated data improves policy performance, even when the two data sources differ significantly. Effectiveness is measured by task success rate, generalization to unseen scenarios, and robustness to variations in position and objects.

Figure 2: Effect of different co-training ratios. Highlights the importance of the co-training ratio for policy performance on the CupPnP task with 20 real demos and 1,000 simulation demos.
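The dataset sizes in this caption show why the ratio matters. The short calculation below is a sketch under two assumptions that the summary does not confirm: the co-training ratio `alpha` is the probability of sampling from simulation, and all demos are equally long. It computes how often a single real demo is sampled relative to a single simulation demo. The helper `per_demo_weights` is hypothetical.

```python
from typing import Tuple


def per_demo_weights(n_real: int, n_sim: int, alpha: float) -> Tuple[float, float]:
    """Return (sampling weight per real demo, sampling weight per sim demo).

    Assumption: alpha is the probability of drawing from simulation, and
    demos within each source are drawn uniformly.
    """
    real_w = (1.0 - alpha) / n_real
    sim_w = alpha / n_sim
    return real_w, sim_w


n_real, n_sim = 20, 1000  # dataset sizes from the Figure 2 caption

# Ratio implied by simply pooling both datasets and sampling uniformly.
pooled_alpha = n_sim / (n_real + n_sim)

for alpha in (0.5, 0.9, pooled_alpha):
    real_w, sim_w = per_demo_weights(n_real, n_sim, alpha)
    print(
        f"alpha={alpha:.3f}: a real demo is sampled "
        f"{real_w / sim_w:.1f}x as often as a simulation demo"
    )
```

Under these assumptions, naive pooling corresponds to a ratio of about 0.98, where real data makes up under 2% of each batch, while a ratio of 0.5 samples each real demo 50 times as often as each simulation demo. Which setting works best is an empirical question that the figure addresses.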

Results and Analysis

The co-training approach achieved an average task performance improvement of 38% compared to training solely on real-world data. Task-aware digital cousins provided noticeable gains by offering semantically similar data, while task-agnostic data also contributed when aligned properly, particularly with automatic camera pose adjustments.

Key factors influencing co-training success include the amount of simulation data, the co-training ratio, and the visual alignment between simulation and real-world environments. The study finds that diverse simulation data improves the generalization of learned policies, and it offers practical guidelines for data composition.

Figure 3: Examples of Video2Video model outputs at different noise strengths. Shows how video diffusion models can enhance visual realism, with the output varying by noise strength.

Limitations and Future Work

The authors acknowledge that the study focuses primarily on pick-and-place manipulation tasks and suggest future work on simulating complex dynamics such as deformation and fluid interaction. The paper also points to advanced generative AI models as a way to create more realistic simulation environments. Further studies could cover broader domains and task types to test how well the proposed co-training recipe generalizes.

Conclusion

In summary, this paper presents a methodology for using simulation data to augment real-world robot training. Sim-and-real co-training offers a viable way to overcome data collection limits, improve task performance, and learn more robust policies. Its findings on data composition, co-training ratio, and visual alignment give practitioners concrete guidelines for blending real and synthetic data in robot learning.