- The paper introduces a novel continual learning framework that incrementally integrates unannotated modalities using the Continual Mixture of Experts (CMoE) Adapter.

- It employs a Pseudo-Modality Replay mechanism to stabilize semantic alignment and mitigate catastrophic forgetting in cross-modal tasks.

- Ablation studies demonstrate improved zero-shot performance and robust generalization across paired and unseen modality configurations.

Continual Cross-Modal Generalization

The paper "Continual Cross-Modal Generalization" addresses the challenge of unifying disparate modalities in a shared semantic space so that knowledge can transfer to unannotated modalities. This goal, termed cross-modal generalization, is difficult because data paired across all required modalities is scarce. To work around this limitation, the paper applies continual learning to integrate new modalities into an existing semantic space incrementally, relying on bimodal data, which is more readily available.

Introduction and Background

The growth of multimodal data has prompted numerous efforts to map diverse modalities into a common semantic space. Methods such as CLIP and ImageBind have made progress by learning inter-modal alignment through paired data. These efforts typically employ contrastive learning with modality-agnostic encoders to reduce the semantic gap between paired modalities, though a significant domain gap often remains.
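The contrastive objective mentioned above can be illustrated with a minimal sketch of a symmetric InfoNCE-style loss. This is a generic formulation rather than the exact loss of CLIP, ImageBind, or the paper; the function names, the temperature value, and the toy features are assumptions chosen for illustration.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def normalize(v):
    # Scale to unit length; fall back to 1.0 to avoid dividing by zero.
    norm = math.sqrt(dot(v, v)) or 1.0
    return [a / norm for a in v]

def info_nce(anchors, positives, temperature=0.07):
    """Mean cross-entropy of matching anchors[i] to positives[i] among all positives."""
    a = [normalize(v) for v in anchors]
    p = [normalize(v) for v in positives]
    total = 0.0
    for i, ai in enumerate(a):
        logits = [dot(ai, pj) / temperature for pj in p]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_denominator = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_denominator - logits[i]
    return total / len(a)

def symmetric_contrastive_loss(x_feats, y_feats, temperature=0.07):
    # Average the two retrieval directions (x -> y and y -> x).
    return 0.5 * (info_nce(x_feats, y_feats, temperature)
                  + info_nce(y_feats, x_feats, temperature))

# Toy paired features (hypothetical): row i of each list describes the same sample.
image_feats = [[1.0, 0.0], [0.0, 1.0]]
text_aligned = [[0.9, 0.1], [0.1, 0.9]]
text_swapped = [[0.1, 0.9], [0.9, 0.1]]

loss_aligned = symmetric_contrastive_loss(image_feats, text_aligned)
loss_swapped = symmetric_contrastive_loss(image_feats, text_swapped)
print(loss_aligned < loss_swapped)
```

Correctly paired features yield a much lower loss than mismatched ones, which is the signal that pulls paired modalities together during training.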

Recent work uses explicit vector quantization or prototypes to better align semantic features across modalities. However, these methods compress multimodal features into single vectors, which hinders fine-grained alignment.
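As a rough illustration of the vector quantization idea, the sketch below maps features to their nearest entry in a shared codebook. The codebook values, the feature vectors, and the helper names are hypothetical; real systems learn the codebook jointly with the encoders.

```python
def squared_distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def quantize(feature, codebook):
    """Return (index, code) of the codebook entry nearest to `feature`."""
    index = min(range(len(codebook)),
                key=lambda k: squared_distance(feature, codebook[k]))
    return index, codebook[index]

# A tiny shared codebook (hypothetical values).
codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]]

# Features from two modalities that describe the same event (hypothetical).
audio_frame = [0.9, 1.2]
video_frame = [1.1, 0.8]

audio_index, _ = quantize(audio_frame, codebook)
video_index, _ = quantize(video_frame, codebook)
print(audio_index, video_index)
```

When alignment works, semantically matching features from different modalities land on the same discrete code, so a model trained on one modality's codes can be applied to another.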

The continual cross-modal generalization task addresses these issues by allowing new modalities to be added after initial training. It extends the existing Cross-Modal Generalization (CMG) framework to support continual learning, which sidesteps the difficulty of aligning modalities that could not be paired at the outset. In essence, the continual setting maintains an expandable discrete codebook that adapts to new semantic features as new modalities are integrated.

Continual Mixture of Experts Adapter

The primary innovation presented in this paper is the Continual Mixture of Experts Adapter (CMoE-Adapter). This module is designed to map various modalities into a unified space while retaining previously learned knowledge. It employs a multimodal universal adapter comprising a common layer and modality-specific layers, activated according to the modality being processed.
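The adapter layout described above can be sketched as one shared layer plus a per-modality layer selected by name. This is a simplified illustration, not the paper's implementation: the class name `UniversalAdapter`, the linear layers, and the residual sum used to combine the two branches are all assumptions.

```python
import random

def make_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

class UniversalAdapter:
    """One layer shared by all modalities plus one layer per modality (hypothetical design)."""

    def __init__(self, dim, seed=0):
        self.dim = dim
        self.rng = random.Random(seed)
        self.common = make_matrix(dim, dim, self.rng)
        self.specific = {}

    def add_modality(self, name):
        # A new modality gets its own layer; existing layers are left untouched.
        self.specific[name] = make_matrix(self.dim, self.dim, self.rng)

    def forward(self, features, modality):
        shared = matvec(self.common, features)
        private = matvec(self.specific[modality], features)  # only this modality's layer runs
        # Residual combination of both branches (an assumption of this sketch).
        return [x + s + p for x, s, p in zip(features, shared, private)]

adapter = UniversalAdapter(dim=4)
adapter.add_modality("audio")
adapter.add_modality("video")

frame = [0.5, -0.2, 0.1, 0.3]
audio_before = adapter.forward(frame, "audio")

adapter.add_modality("text")  # a later continual-learning stage
audio_after = adapter.forward(frame, "audio")
print(audio_before == audio_after)
```

In this sketch, adding a modality changes nothing for the existing ones. In actual training the shared layer would still be updated, which is where forgetting can occur.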

The CMoE architecture augments the adapter's encoding capacity by leveraging a mixture-of-experts framework. Here, different combinations of experts are utilized to handle diverse scenarios. This is especially crucial in a continual learning context, where the model must balance expanding its encoding capacity with preserving existing knowledge. An adaptive Elastic Weight Consolidation (EWC) loss is introduced to mitigate the catastrophic forgetting of prior knowledge by penalizing significant changes to the model parameters deemed critical for previous tasks.
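The two ingredients in this paragraph can be sketched separately: top-k expert routing and a quadratic EWC penalty. The routing rule, the toy experts, and the gate weights are assumptions. The penalty follows the standard EWC form, half the strength times the Fisher-weighted squared parameter change; the summary does not specify how the paper makes it adaptive, so `strength` is a fixed constant here.

```python
import math

def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(x, experts, gate_weights, top_k=2):
    """Route x to the top_k experts by gate score and mix their outputs."""
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    mix = softmax([scores[i] for i in chosen])  # renormalize over the selected experts
    outputs = [experts[i](x) for i in chosen]
    mixed = [sum(g * out[d] for g, out in zip(mix, outputs)) for d in range(len(x))]
    return mixed, chosen

def ewc_penalty(params, old_params, fisher, strength=1.0):
    """Standard EWC penalty: 0.5 * strength * sum_i F_i * (theta_i - theta_old_i)^2."""
    return 0.5 * strength * sum(
        f * (p - q) ** 2 for p, q, f in zip(params, old_params, fisher)
    )

# Three toy experts and a linear gate (all hypothetical).
experts = [
    lambda v: [2.0 * a for a in v],
    lambda v: [-a for a in v],
    lambda v: [a + 1.0 for a in v],
]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
mixed, chosen = moe_forward([3.0, 1.0], experts, gate_weights, top_k=2)

# The same parameter shift costs more on a parameter with high Fisher importance.
old_params = [1.0, 1.0]
fisher = [10.0, 0.1]
penalty_important = ewc_penalty([1.5, 1.0], old_params, fisher)
penalty_unimportant = ewc_penalty([1.0, 1.5], old_params, fisher)
print(chosen, penalty_important, penalty_unimportant)
```

The penalty makes the trade-off explicit: parameters that mattered for earlier modalities are expensive to move, while the rest stay free to absorb new knowledge.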

Pseudo-Modality Replay Mechanism

To complement the CMoE-Adapter, the paper introduces a Pseudo-Modality Replay (PMR) mechanism. This mechanism facilitates semantic alignment across stages by employing a dynamically expanding codebook. The replay mechanism constructs pseudo-modalities using learned knowledge from intermediary modalities as guidance. These pseudo-modalities help stabilize the training of new modalities by using sequences selected from the current codebook based on their proximity to known semantic features.
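One plausible reading of the replay step is sketched below: each feature from an intermediary modality is replaced by its nearest code in the current codebook, and the resulting code sequence serves as a stand-in for earlier modalities. The summary does not give the paper's exact selection rule, so the nearest-neighbor criterion, the function name `build_pseudo_modality`, and the toy values are assumptions.

```python
def squared_distance(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def build_pseudo_modality(intermediary_features, codebook):
    """Replace each intermediary feature with its nearest existing code.

    Returns the selected code indices and the pseudo-modality sequence
    (copies of the selected code vectors).
    """
    indices = [
        min(range(len(codebook)), key=lambda k: squared_distance(f, codebook[k]))
        for f in intermediary_features
    ]
    return indices, [list(codebook[k]) for k in indices]

# Codebook learned in earlier stages (hypothetical values).
codebook = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]

# Features of the intermediary modality for one three-step sequence (hypothetical).
intermediary = [[0.9, 0.1], [0.1, 0.8], [0.05, -0.1]]

indices, pseudo_sequence = build_pseudo_modality(intermediary, codebook)
print(indices)
```

The pseudo sequence can then be used as an additional alignment target when a new modality is trained, so the new encoder is anchored to codes that earlier modalities already use.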

This dynamic approach lets the model maintain a stable, expandable discrete representation space, accommodating newly introduced semantic features without disturbing the previously established code mappings.
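A codebook that grows without disturbing existing entries could look like the sketch below. Freezing all earlier codes at each expansion is an assumption made for illustration, as are the class name `ExpandableCodebook` and the simple update rule; the paper may protect old codes differently.

```python
class ExpandableCodebook:
    """Codebook whose earlier entries are frozen whenever it is expanded (hypothetical)."""

    def __init__(self, codes):
        self.codes = [list(c) for c in codes]
        self.frozen = 0  # number of leading codes that may no longer change

    def expand(self, new_codes):
        """Freeze every current code, append new ones, and return the new indices."""
        self.frozen = len(self.codes)
        self.codes.extend(list(c) for c in new_codes)
        return list(range(self.frozen, len(self.codes)))

    def update(self, index, target, rate=0.5):
        """Move a trainable code toward `target`; frozen codes are left as they are."""
        if index < self.frozen:
            return False
        self.codes[index] = [c + rate * (t - c) for c, t in zip(self.codes[index], target)]
        return True

stage_one = ExpandableCodebook([[0.0, 0.0], [1.0, 1.0]])
new_indices = stage_one.expand([[5.0, 5.0]])            # a new modality arrives

old_update_applied = stage_one.update(0, [9.0, 9.0])     # ignored: code 0 is frozen
new_update_applied = stage_one.update(2, [7.0, 7.0])     # applied: code 2 is new
print(stage_one.codes)
```

Because old indices keep their vectors, any model that consumes the earlier codes continues to see the same representation after expansion.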

Experiments and Observations

The experimental results demonstrate the effectiveness of the proposed CMoE-Adapter and PMR mechanism. The model was evaluated on several datasets under different paired-modality configurations and performed robustly on both seen and unseen modality pairs.

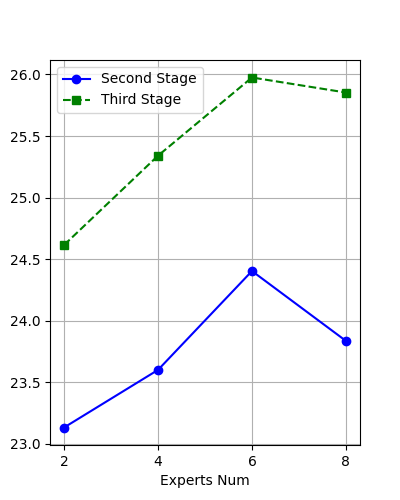

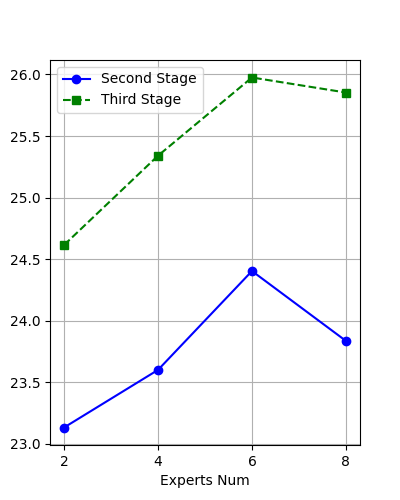

Figure 1: Ablation study on the number of experts, showing how performance changes as the expert count varies.

Key observations include the model's improved capability to generalize across multiple modalities, evidenced by superior performance in zero-shot cross-modal generalization tasks. Furthermore, ablation studies reveal the significant impact of the CMoE-Adapter's architecture and the PMR mechanism on the model's ability to retain and utilize previously learned knowledge across new and existing modalities.

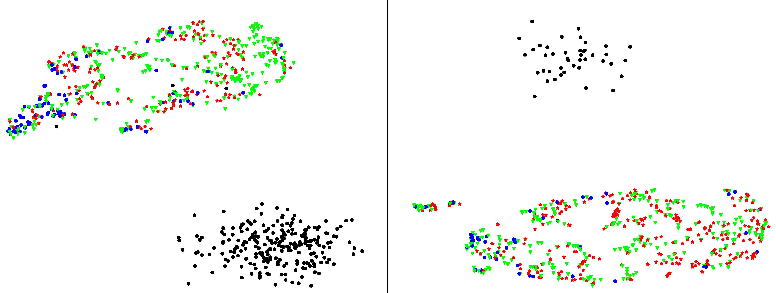

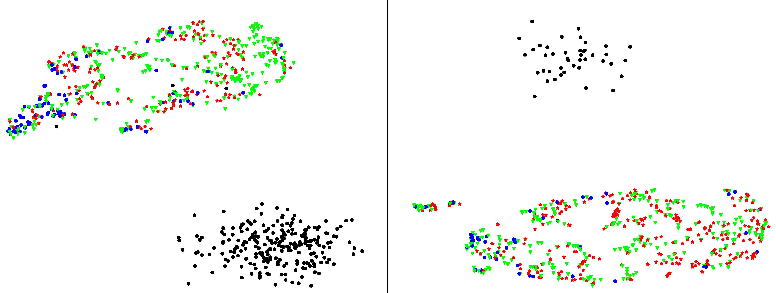

Figure 2: Visualization of the discrete codes, showing that they are effectively activated by different modalities.

Conclusion

The proposed framework for continual cross-modal generalization effectively overcomes the constraints of acquiring extensively paired data and offers a scalable solution for integrating new modalities into a shared semantic space. The CMoE-Adapter and PMR mechanism introduce a robust methodology for managing and leveraging heterogeneous multimodal data. These contributions pave the way for more flexible and comprehensive multimodal representation learning, with potential applications in various cross-disciplinary fields requiring integrated multimodal reasoning and understanding.