- The paper demonstrates that GUI grounding models, particularly UGround, are highly sensitive to adversarial perturbations and low-resolution challenges.

- It employs natural noise and both untargeted and targeted adversarial attacks to quantify model performance using success rate metrics.

- The research highlights the need for advanced defense strategies to bolster reliability across mobile, desktop, and web interfaces.

On the Robustness of GUI Grounding Models Against Image Attacks

Introduction

Graphical User Interface (GUI) grounding models are integral to enabling intelligent systems to navigate and interact with visual interfaces. This paper addresses the underexplored area of robustness in GUI grounding models when faced with adversarial conditions. The authors evaluate these models, specifically UGround, under natural noise, untargeted adversarial attacks, and targeted adversarial attacks across various GUI environments such as mobile, desktop, and web interfaces.

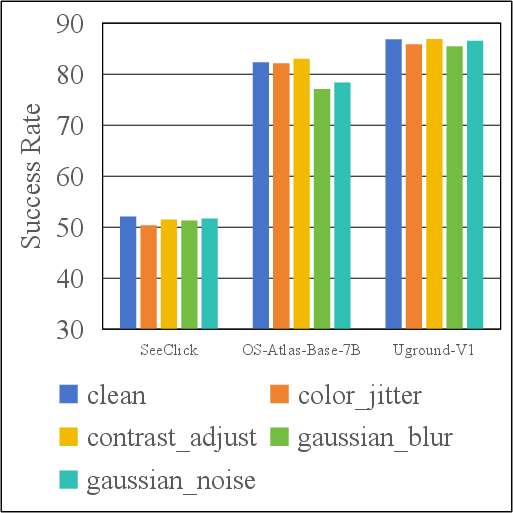

Examples of natural noise include color jitter, while adversarial strategies perturb the visual input to mislead the grounding model. The findings show that GUI grounding models are notably sensitive to adversarial perturbations and low-resolution settings, exposing vulnerabilities that must be addressed before these models can be relied on in practical applications.

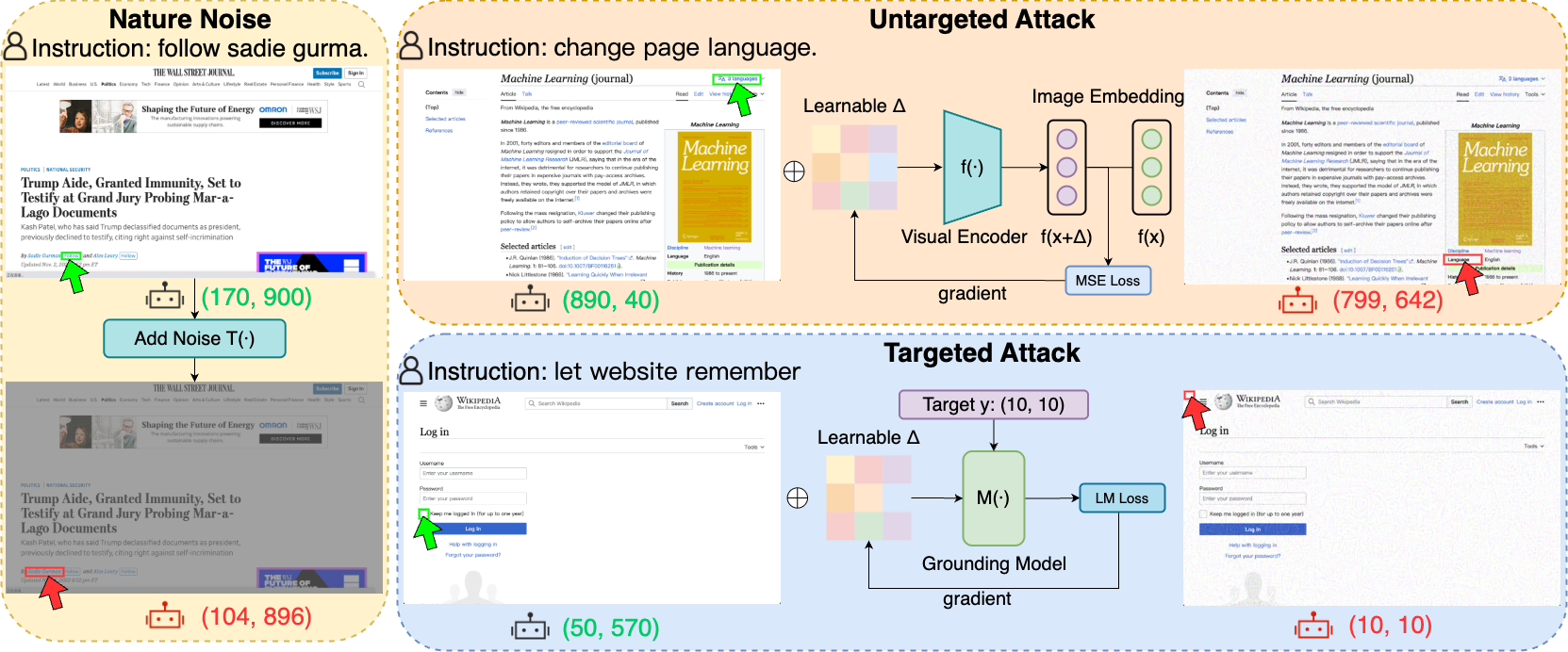

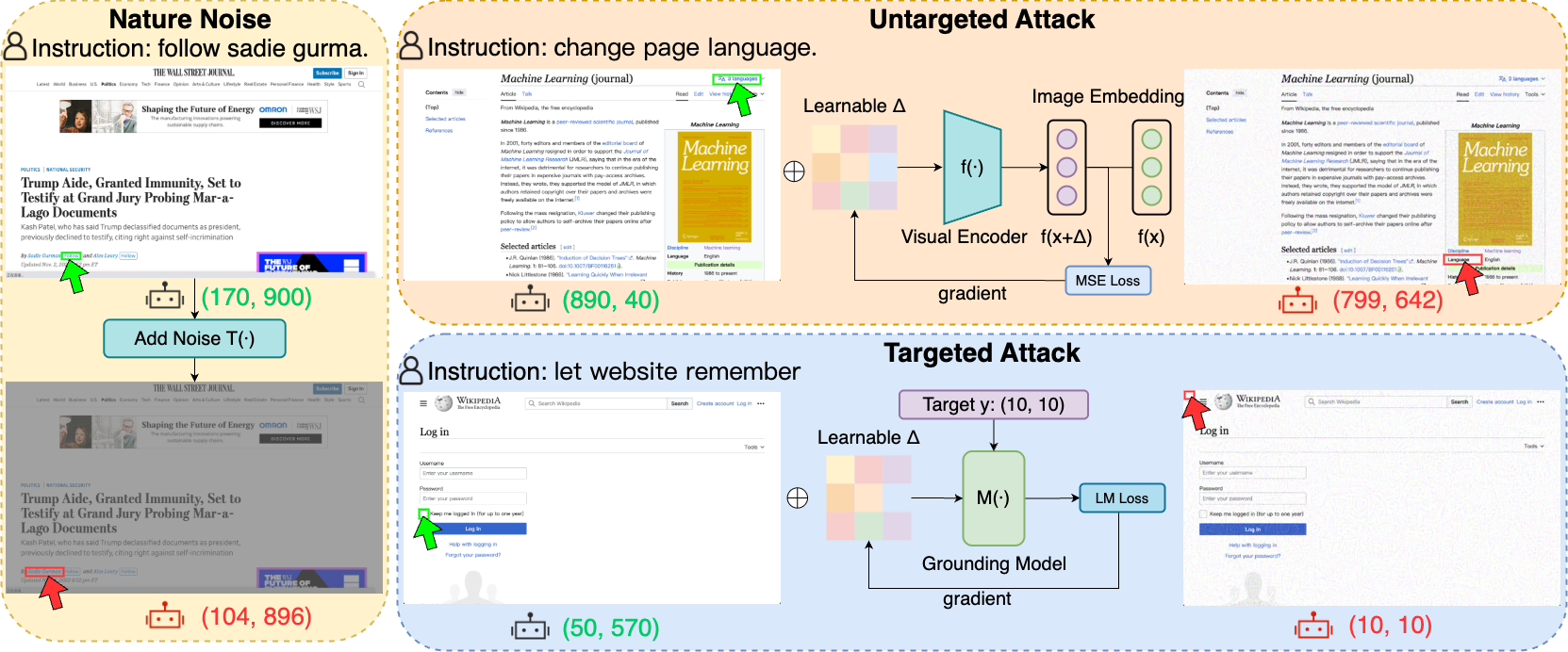

Figure 1: Examples of natural noise (color jitter), untargeted attack, and targeted attack results on the UGround-V1 model.

Methodology

Robustness Under Natural Noise

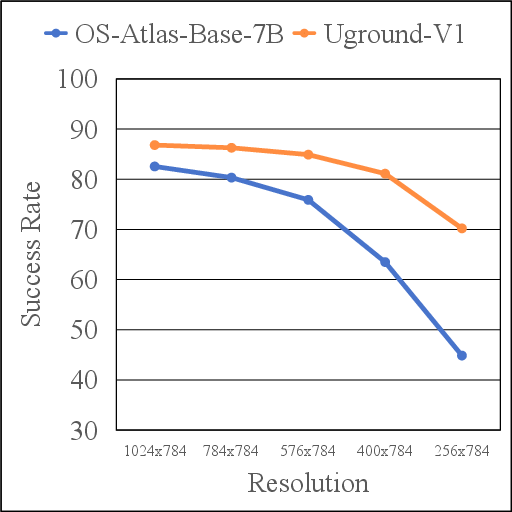

The study evaluates robustness by applying natural noise transformations such as Gaussian noise and blur to GUI screenshots. The model's ability to predict element locations correctly under these disturbances is quantified by the Success Rate (SR). The same metric is used to compare grounding accuracy across other perturbations, including resolution changes.
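As a minimal sketch of how a success rate of this kind is commonly computed for grounding, the snippet below counts a prediction as correct when the predicted click point falls inside the target element's bounding box. The hit criterion, the `(x, y)` point format, the `(left, top, right, bottom)` box format, and the function names are assumptions made for illustration; this summary does not spell out the exact rule used in the paper.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]               # predicted click (x, y), assumed format
Box = Tuple[float, float, float, float]   # (left, top, right, bottom), assumed format


def is_hit(point: Point, box: Box) -> bool:
    """Return True when the predicted click point lies inside the target box."""
    x, y = point
    left, top, right, bottom = box
    return left <= x <= right and top <= y <= bottom


def success_rate(predictions: Sequence[Point], targets: Sequence[Box]) -> float:
    """Fraction of predictions that land inside their target box."""
    if len(predictions) != len(targets):
        raise ValueError("predictions and targets must have the same length")
    if not predictions:
        return 0.0
    hits = sum(is_hit(p, b) for p, b in zip(predictions, targets))
    return hits / len(predictions)
```

Computing this value once on clean screenshots and once on perturbed ones gives the drop in SR that a robustness comparison would report.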

Untargeted Adversarial Attacks

Untargeted attacks generate perturbations that disrupt the image features the model extracts. The adversary maximizes the distance between the image embeddings of the adversarial and original samples, so that the two diverge significantly and the model fails to predict the correct location. The perturbation is constrained by an ℓ∞ norm bound to keep it imperceptible.
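The idea can be illustrated with a toy projected-gradient sketch. Everything here is a simplifying assumption rather than the paper's setup: the encoder is a small linear map instead of a real vision encoder, the distance is squared L2, and the budget `epsilon`, `step_size`, and `steps` values are placeholders. For a linear encoder the gradient has a closed form, which keeps the example free of any deep learning framework.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[Vector]


def encode(weights: Matrix, image: Vector) -> Vector:
    """Toy linear image encoder (a stand-in for a real vision encoder)."""
    return [sum(w * p for w, p in zip(row, image)) for row in weights]


def embedding_distance(weights: Matrix, a: Vector, b: Vector) -> float:
    """Squared L2 distance between the embeddings of two images."""
    ea, eb = encode(weights, a), encode(weights, b)
    return sum((u - v) ** 2 for u, v in zip(ea, eb))


def sign(value: float) -> int:
    return (value > 0) - (value < 0)


def untargeted_pgd(weights: Matrix, image: Vector, epsilon: float = 8 / 255,
                   step_size: float = 2 / 255, steps: int = 10,
                   seed: int = 0) -> Vector:
    """Maximize embedding distance to the clean image within an l-inf ball."""
    rng = random.Random(seed)
    # Random start: the gradient is exactly zero at delta = 0.
    delta = [rng.uniform(-epsilon, epsilon) for _ in image]
    for _ in range(steps):
        # For a linear encoder, d/d(delta) ||W(x+delta) - Wx||^2 = 2 W^T (W delta).
        diff = encode(weights, delta)
        grad = [2.0 * sum(weights[i][j] * diff[i] for i in range(len(weights)))
                for j in range(len(image))]
        # Signed ascent step, then projection back into the l-inf ball.
        delta = [max(-epsilon, min(epsilon, d + step_size * sign(g)))
                 for d, g in zip(delta, grad)]
        # Keep the perturbed pixels inside the valid [0, 1] range.
        delta = [min(1.0, max(0.0, x + d)) - x for x, d in zip(image, delta)]
    return [x + d for x, d in zip(image, delta)]
```

With a real model the closed-form gradient would be replaced by automatic differentiation through the vision encoder, but the loop structure (ascent step, projection, pixel clipping) stays the same.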

Targeted Adversarial Attacks

Targeted attacks aim to make GUI grounding models predict a pre-designated target location. Perturbations are optimized so that the adversarial image leads the model to click a small, designated target region. They are crafted to minimize the model's LLM loss with respect to the target output, steering predictions toward the adversary's objective.
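One way to write this objective down, offered as a sketch rather than the paper's exact formulation, is the constrained problem below. The notation is assumed for illustration: $x$ is the screenshot, $q$ the instruction, $f_\theta$ the grounding model, $y_{\mathrm{tgt}}$ the output encoding the target location, $\mathcal{L}_{\mathrm{LM}}$ the language-modeling loss, $\epsilon$ the perturbation budget, and $\alpha$ the step size. The use of an $\ell_\infty$ bound for the targeted case is also an assumption carried over from the untargeted setting.

$$
\min_{\delta}\; \mathcal{L}_{\mathrm{LM}}\big(f_\theta(x + \delta,\, q),\; y_{\mathrm{tgt}}\big)
\quad \text{subject to} \quad \lVert \delta \rVert_\infty \le \epsilon
$$

A projected-gradient step for this problem descends the loss and then projects back into the budget:

$$
\delta \;\leftarrow\; \Pi_{\lVert \delta \rVert_\infty \le \epsilon}
\Big(\delta - \alpha \,\operatorname{sign}\big(\nabla_{\delta}\, \mathcal{L}_{\mathrm{LM}}(f_\theta(x + \delta,\, q),\, y_{\mathrm{tgt}})\big)\Big)
$$

The only structural difference from the untargeted case is the direction of the step: the loss toward the target output is minimized, whereas the embedding distance is maximized.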

Experiments

Experimental Setups

The study evaluates state-of-the-art GUI grounding models, including SeeClick, OS-Atlas-Base-7B, and UGround-V1-7B, under adversarial conditions across mobile, desktop, and web environments. The ScreenSpot-V2 dataset is used so that the evaluation covers both text and icon elements.

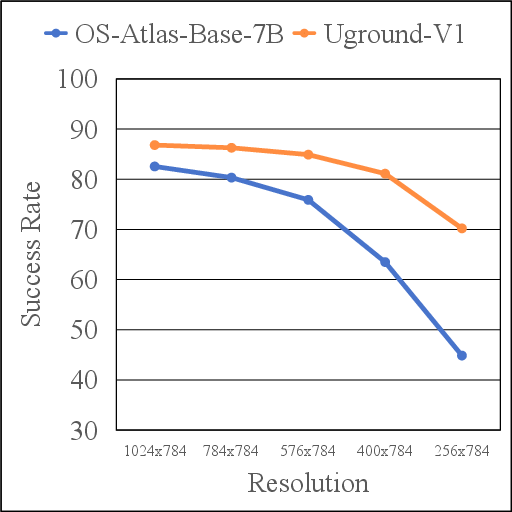

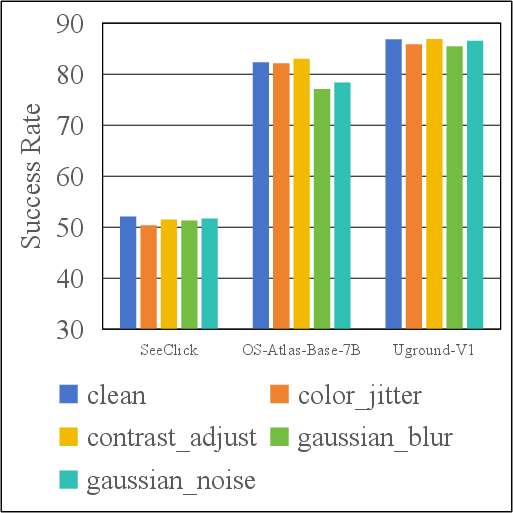

Figure 2: Performance across resolutions.

For natural noise, Gaussian noise, color jitter, and blur are introduced to simulate real-world perturbations. The adversarial attacks leverage the PGD algorithm under controlled perturbation budgets to assess model vulnerabilities.
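To make the natural-noise setting concrete, here is a small sketch of two of these perturbations, Gaussian noise and a brightness-only form of color jitter, applied to RGB pixels in `[0, 1]`. The function names, the pixel representation, and the parameters `sigma` and `max_delta` are hypothetical choices for illustration; the noise strengths used in the paper are not given in this summary, and blur is omitted for brevity.

```python
import random
from typing import List, Tuple

Pixel = Tuple[float, ...]  # RGB channels in [0, 1], assumed representation


def _clip(value: float) -> float:
    """Clamp a channel value to the valid [0, 1] range."""
    return min(1.0, max(0.0, value))


def add_gaussian_noise(pixels: List[Pixel], sigma: float,
                       rng: random.Random) -> List[Pixel]:
    """Add zero-mean Gaussian noise with standard deviation sigma per channel."""
    return [tuple(_clip(c + rng.gauss(0.0, sigma)) for c in px) for px in pixels]


def jitter_brightness(pixels: List[Pixel], max_delta: float,
                      rng: random.Random) -> List[Pixel]:
    """Scale all channels by one random factor in [1 - max_delta, 1 + max_delta]."""
    factor = 1.0 + rng.uniform(-max_delta, max_delta)
    return [tuple(_clip(c * factor) for c in px) for px in pixels]
```

Passing a seeded `random.Random` instance keeps the perturbations reproducible, so the same noisy screenshots can be reused across the models being compared.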

Main Results

The evaluation shows that GUI grounding models are highly sensitive to adversarial attacks, with success rates dropping markedly under both untargeted and targeted attacks. UGround-V1 outperforms OS-Atlas in low-resolution scenarios, which indicates that resilience varies with the model, the complexity of the interface, and the environment.

The attack success rates, particularly in low-resolution scenarios, point to a serious susceptibility to adversarial conditions and underline the need for stronger defense mechanisms against such perturbations.

Conclusion

The research provides critical insights into the robustness of GUI grounding models, emphasizing their vulnerability in real-world settings marked by adversarial perturbations and resolution challenges. The benchmarking facilitated by this study can guide future endeavors to bolster the reliability and stability of GUI grounding models in practical applications. The paper encourages ongoing exploration into defensive strategies and model enhancements to guard against increasingly sophisticated adversarial methodologies.