- The paper introduces OCCAM, a training-free segmentation approach that outperforms slot-based methods in object discovery tasks.

- The paper demonstrates that models like HQES offer superior performance on synthetic and real-world benchmarks, improving OOD robustness.

- The paper advocates shifting evaluation towards broader applications of object-centric learning to better capture perceptual organization.

Object-Centric Learning: Evaluative Insights and Future Directions

Object-centric learning (OCL) aims to learn representations that encode each object in a scene separately, isolated from other objects and from background cues. The underlying goal is to enable out-of-distribution (OOD) generalization, compositional modeling, and structured interpretation of environments. Historically, research has focused on unsupervised mechanisms that partition the representation space into distinct slots, and has evaluated them primarily through unsupervised object discovery. This paper assesses the current state of OCL, reviewing the progress made and considering future directions.

Challenges and Proposed Methodologies

The core challenge of OCL has been to develop scalable mechanisms that separate objects effectively in the representation space. Recent sample-efficient segmentation models separate objects in pixel space instead, offering zero-shot performance on OOD benchmarks and scalability to foundation models. Building on them, the paper presents Object-Centric Classification with Applied Masks (OCCAM), a training-free probe that uses segmentation-based encoding in place of slot-based alternatives.

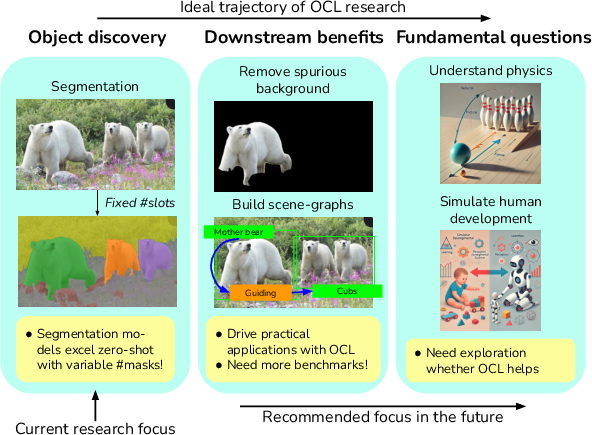

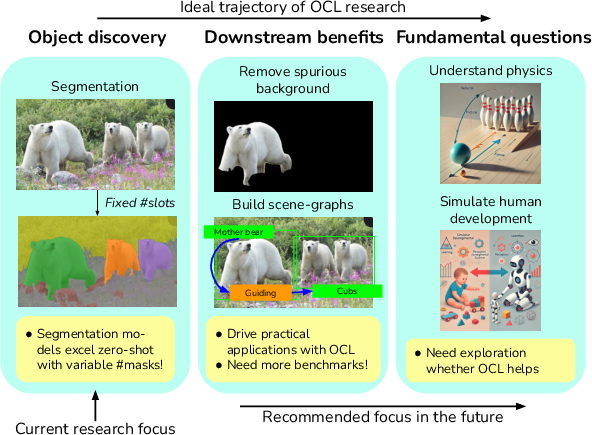

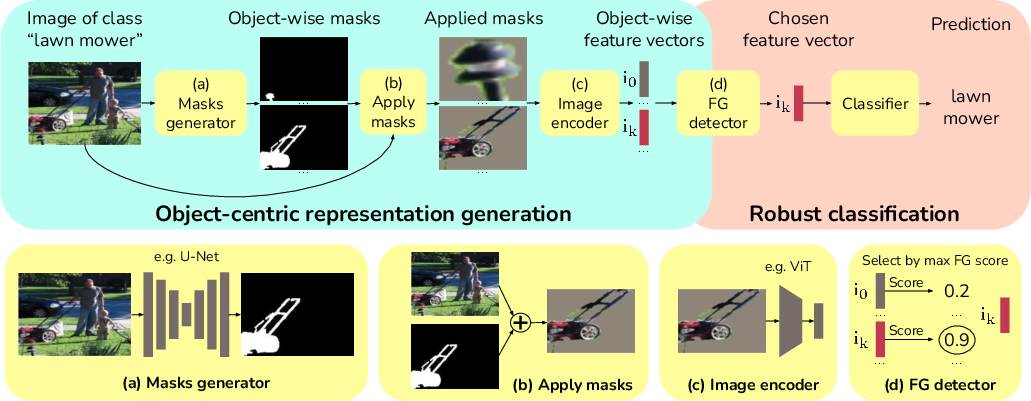

Figure 1: Object-centric learning mechanisms demonstrating downstream benefits in mitigating spurious correlations.

Empirical Evaluations and Results

On the evaluation benchmarks, sample-efficient segmentation models such as HQES surpass slot-centric OCL approaches on synthetic datasets such as MOVi-C and MOVi-E. The paper's object discovery results table (label `tab:quant_object_discovery` in the source) shows HQES ahead on object discovery, with a substantial gain in mean best overlap (mBO).
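To make the mBO metric concrete, here is a minimal per-image sketch under the usual definition: for each ground-truth mask, take the best intersection-over-union (IoU) with any predicted mask, then average over ground-truth masks. The function names and the toy masks are illustrative assumptions, and the paper's exact evaluation protocol (for example instance-level versus class-level variants, or averaging over a dataset) may differ.

```python
import numpy as np

def iou(a: np.ndarray, b: np.ndarray) -> float:
    """Intersection over union of two boolean masks of the same shape."""
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 0.0
    return float(np.logical_and(a, b).sum() / union)

def mean_best_overlap(gt_masks, pred_masks) -> float:
    """Average, over ground-truth masks, of the best IoU with any predicted mask."""
    if len(gt_masks) == 0:
        raise ValueError("need at least one ground-truth mask")
    best = [max((iou(g, p) for p in pred_masks), default=0.0) for g in gt_masks]
    return float(np.mean(best))

# Toy 4x4 scene (illustrative data): two ground-truth objects, left and right half.
gt_left = np.zeros((4, 4), dtype=bool)
gt_left[:, :2] = True
gt_right = ~gt_left

# Predictions: the exact left half, and only the last column of the right half.
pred_a = gt_left.copy()
pred_b = np.zeros((4, 4), dtype=bool)
pred_b[:, 3] = True

score = mean_best_overlap([gt_left, gt_right], [pred_a, pred_b])
print(f"mBO = {score:.2f}")
```

In this toy case the left object is matched perfectly (IoU 1.0) and the right object only half covered (IoU 0.5), so the score lands midway between the two.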

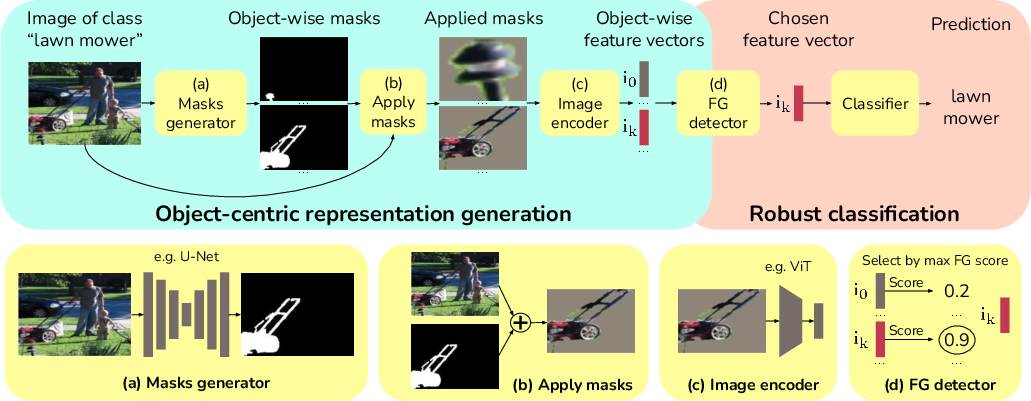

Figure 2: Overview of Object-Centric Classification with Applied Masks showcasing the methodology's two-part structure.

Further experiments on robust classification show that OCCAM reduces the influence of spurious background cues, reaching notable accuracies across several benchmarks. Using HQES masks significantly improved classification robustness, for example on Waterbirds and ImageNet-9.

Methodology and Analytical Insights

OCCAM uses segmentation-based representations: masks are applied to the image to obtain object-wise representations, which lets the classifier separate the object of interest from spuriously correlated context. This separation is a critical factor in classification accuracy under challenging settings. The method involves no additional training, which shows that object-centric representations can deliver strong empirical results without complex procedures.
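The general idea of encoding masked objects and then choosing a foreground candidate can be sketched as follows. This is not the paper's implementation: `toy_encoder` and `toy_fg_score` are hypothetical stand-ins for a frozen image encoder and a foreground detection rule, and the fill value, image, and masks are assumptions chosen only so the example runs on its own.

```python
import numpy as np

def apply_mask(image: np.ndarray, mask: np.ndarray, fill: float = 0.0) -> np.ndarray:
    """Keep pixels inside the mask and replace all others with a constant fill."""
    return np.where(mask[..., None], image, fill)

def toy_encoder(image: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for a frozen encoder: mean value per channel."""
    return image.reshape(-1, image.shape[-1]).mean(axis=0)

def toy_fg_score(embedding: np.ndarray) -> float:
    """Hypothetical foreground score: simply the embedding norm."""
    return float(np.linalg.norm(embedding))

def object_centric_embeddings(image, masks):
    """Part 1: one embedding per mask, computed from the masked image."""
    return [toy_encoder(apply_mask(image, m)) for m in masks]

def select_foreground(embeddings) -> int:
    """Part 2: index of the embedding with the highest foreground score."""
    return int(np.argmax([toy_fg_score(e) for e in embeddings]))

# Toy 4x4 RGB image (illustrative data): bright object top-left, dim background.
image = np.full((4, 4, 3), 0.1)
image[:2, :2] = 1.0
object_mask = np.zeros((4, 4), dtype=bool)
object_mask[:2, :2] = True
background_mask = ~object_mask

embeddings = object_centric_embeddings(image, [object_mask, background_mask])
fg_index = select_foreground(embeddings)
print("selected mask index:", fg_index)
```

No parameters are fitted anywhere in this sketch, which mirrors the training-free character of the approach; in practice the masks would come from a segmentation model and the selected embedding would be passed to a classifier.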

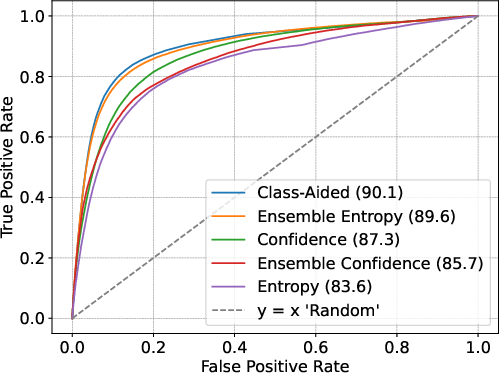

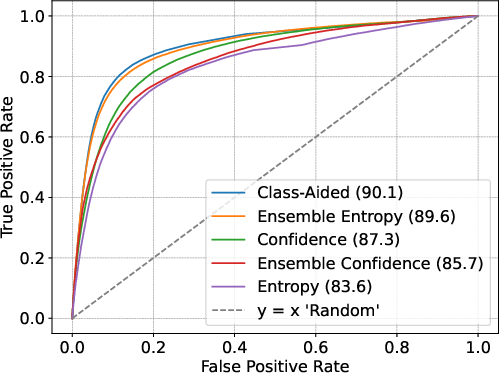

The ablation studies show that both the mask model and the foreground (FG) detection method strongly affect classification performance. HQES consistently provided better masks than FT-Dinosaur, supporting a shift toward foundation segmentation models as the source of object masks.
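Foreground detection methods can be compared with ROC curves, as Figure 3 does. The sketch below computes a ROC curve and its area (AUC) from per-mask foreground scores; the scores and labels are made-up illustrative values, not results from the paper, and the helper names are assumptions.

```python
import numpy as np

def roc_curve(scores, labels):
    """Return (fpr, tpr) from sweeping a threshold over the scores.

    labels: 1 for foreground, 0 for background; both classes must be present.
    Tied scores are grouped into a single threshold step.
    """
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels, dtype=int)
    order = np.argsort(-scores, kind="stable")
    scores, labels = scores[order], labels[order]
    # Last index of each run of equal scores, so ties move the curve together.
    last = np.r_[np.flatnonzero(np.diff(scores)), scores.size - 1]
    tps = np.cumsum(labels)[last]
    fps = np.cumsum(1 - labels)[last]
    tpr = np.r_[0.0, tps / tps[-1]]
    fpr = np.r_[0.0, fps / fps[-1]]
    return fpr, tpr

def auc(fpr, tpr) -> float:
    """Area under the curve by the trapezoidal rule."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2))

# Illustrative foreground scores for six masks (1 = true foreground).
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
labels = [1, 1, 0, 1, 0, 0]
fpr, tpr = roc_curve(scores, labels)
area = auc(fpr, tpr)
print(f"AUC = {area:.3f}")
```

An AUC of 1.0 would mean every foreground mask is scored above every background mask, while 0.5 corresponds to chance-level ranking.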

Future Directions and Conclusions

The paper advocates a shift in OCL evaluation from mere object discovery towards broader applications that exploit object-centric representations effectively across real-world tasks. Developing theoretical frameworks and practical benchmarks aligning with authentic OCL objectives could provide deep insights into visual understanding, drawing from human cognitive processes and real-world causal structures.

Figure 3: ROC curves depicting the effectiveness of foreground detection across different methods.

In conclusion, while OCL's foundational aspirations have been largely realized, the paper points to the remaining potential of OCL methods for real-world challenges, notably disentangling object representations for robust classification. Realizing OCL's broader vision requires refining its objectives and breaking them into actionable components, paving the way for a fuller understanding of perceptual organization. As techniques evolve, integrating richer multimodal data and applying OCL across more diverse applications should clarify how visual understanding relates to human cognition.