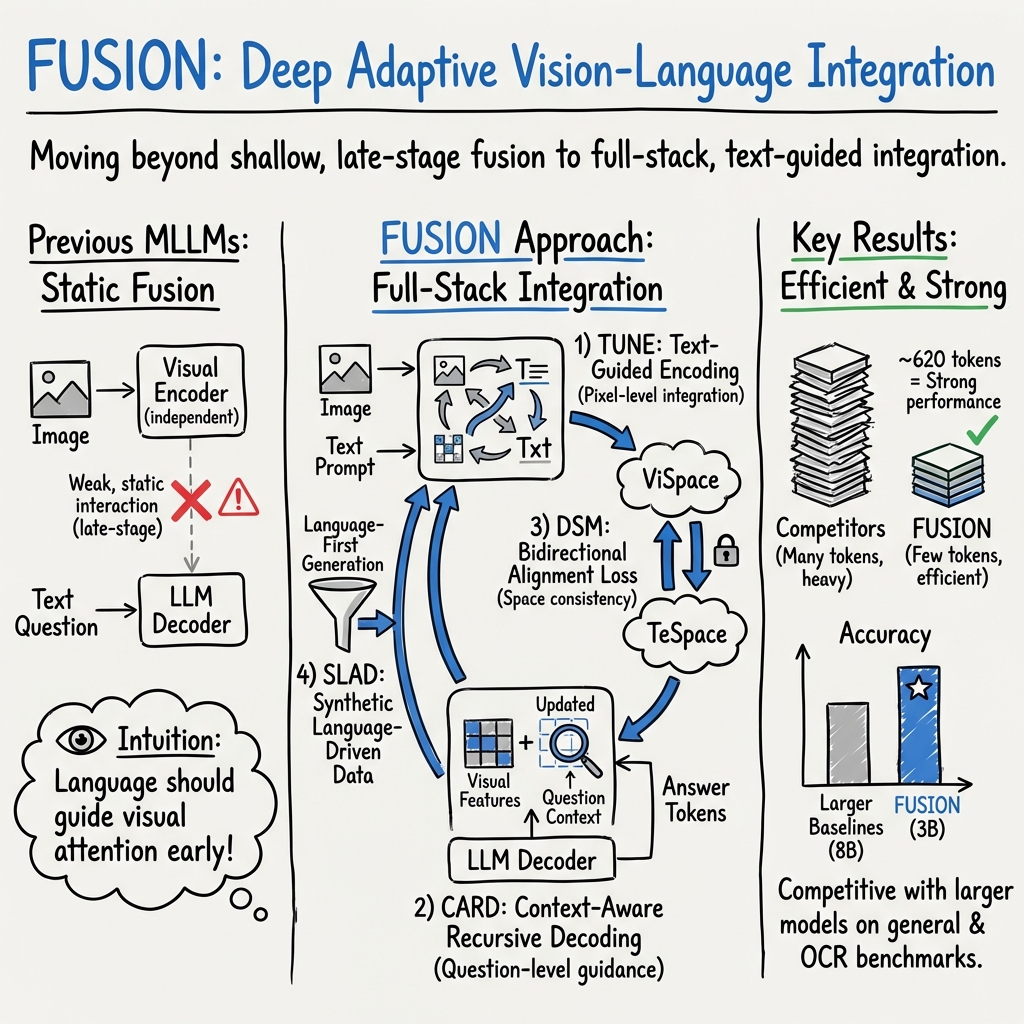

FUSION: Fully Integration of Vision-Language Representations for Deep Cross-Modal Understanding

Abstract: We introduce FUSION, a family of multimodal LLMs (MLLMs) with a fully vision-language alignment and integration paradigm. Unlike existing methods that primarily rely on late-stage modality interaction during LLM decoding, our approach achieves deep, dynamic integration throughout the entire processing pipeline. To this end, we propose Text-Guided Unified Vision Encoding, incorporating textual information in vision encoding to achieve pixel-level integration. We further design Context-Aware Recursive Alignment Decoding that recursively aggregates visual features conditioned on textual context during decoding, enabling fine-grained, question-level semantic integration. To guide feature mapping and mitigate modality discrepancies, we develop Dual-Supervised Semantic Mapping Loss. Additionally, we construct a Synthesized Language-Driven Question-Answer (QA) dataset through a new data synthesis method, prioritizing high-quality QA pairs to optimize text-guided feature integration. Building on these foundations, we train FUSION at two scales (3B and 8B) and demonstrate that our full-modality integration approach significantly outperforms existing methods with only 630 vision tokens. Notably, FUSION 3B surpasses Cambrian-1 8B and Florence-VL 8B on most benchmarks. FUSION 3B continues to outperform Cambrian-1 8B even when limited to 300 vision tokens. Our ablation studies show that FUSION outperforms LLaVA-NeXT on over half of the benchmarks under the same configuration without dynamic resolution, highlighting the effectiveness of our approach. We release our code, model weights, and dataset at https://github.com/starriver030515/FUSION

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that leverage FUSION’s fully integrated vision-language pipeline, its strong performance on OCR/charts/diagrams, and its efficiency with fewer vision tokens (≈630, with robust performance even at ≈300).

- Enterprise document and chart Q&A assistants (finance, healthcare admin, legal, government)

- What: Interactive “ask-your-documents” chat over PDFs, forms, tables, and charts, with better OCR/ChartQA/DocVQA performance and reduced hallucinations.

- Tools/products/workflows: Document copilots in DMS/ECM; auditor/compliance assistants; spreadsheet and slide Q&A; multimodal RAG pipelines that index both text and figures using MLP_{v2t} embeddings.

- Dependencies/assumptions: Access to high-resolution scans; privacy-preserving deployment; domain fine-tuning for specific forms/templates; guardrails for extraction accuracy.

- Accessibility companions for non-visual users (education, consumer tech, public sector)

- What: Screen and image explanation (e.g., charts, UI, infographics) in real time, guided by user queries.

- Tools/products/workflows: Screen-reader plug-ins; mobile camera assistants describing graphs or signage; alt-text and longdesc generation.

- Dependencies/assumptions: On-device or secure inference to handle PII; robust UI/OCR performance under varied layouts; latency targets met via token-efficient inference.

- Visual tutoring and feedback for STEM (education/EdTech)

- What: Step-by-step explanations of diagrams (AI2D), math visuals (MathVista), lab setups and labeled figures; auto-grading of diagram-based questions.

- Tools/products/workflows: Diagram-focused tutors; content authoring tools that generate questions from figures; homework feedback apps.

- Dependencies/assumptions: Curriculum alignment; factuality checks; bias and pedagogy reviews before classroom deployment.

- E-commerce product QA and customer support (retail, marketplaces)

- What: Product image Q&A (e.g., “Is this jacket waterproof?”), return label reading, catalog alignment, and visual content moderation (POPE-related hallucination controls).

- Tools/products/workflows: Chatbots integrated with product images; listing validation; trust and safety filters; product attribute extraction into PIMs.

- Dependencies/assumptions: Domain-specific fine-tuning (categories, attributes); clear disclaimers for uncertain answers; rights-managed image handling.

- Field service and manufacturing quality checks (manufacturing, logistics)

- What: Photo-based troubleshooting (“Which connector is loose?”) and defect localization via question-guided attention and latent tokens.

- Tools/products/workflows: Mobile maintenance assistants; visual SOP checklists; image annotation for defects.

- Dependencies/assumptions: Training on domain imagery (parts, tolerances); controlled lighting/angles; human-in-the-loop sign-off for critical calls.

- Multimodal RAG for knowledge work (software, consulting, research)

- What: Question answering over mixed modalities—reports, slides, whiteboards, and charts—using aligned embeddings and context-aware decoding.

- Tools/products/workflows: Vector DBs storing Image-in-TeSpace features; cross-modal retrieval; integrated document assistants.

- Dependencies/assumptions: RAG infrastructure; careful chunking for charts/figures; evaluation and calibration for retrieval precision.

- UI automation and QA testing (software engineering, RPA)

- What: Visual understanding of UI screens (desktop/mobile) to find elements via natural language and generate test steps or flows.

- Tools/products/workflows: Test generation based on screenshots; RPA agents that identify UI components by query; accessibility audits.

- Dependencies/assumptions: Training on representative UI screenshots; privacy controls; robust handling of dynamic UI states.

- Content moderation and compliance at scale (platforms, media)

- What: Guided queries to verify presence/absence of sensitive items/logos/text in images, with reduced hallucination via deeper alignment.

- Tools/products/workflows: Automated pre-publication checks; brand safety scanning; ad compliance.

- Dependencies/assumptions: Policy-aligned taxonomies; error triage workflows; appeal mechanisms.

- Drone and site-inspection Q&A (agriculture, energy, construction)

- What: Question-driven photo analysis (e.g., “Are any insulators cracked?” “Is irrigation uniform?”) from still images.

- Tools/products/workflows: Drone snapshot analyzers; maintenance portals with photo Q&A and overlays.

- Dependencies/assumptions: Training on domain imagery; reliable capture conditions; human verification for critical infrastructure.

- Synthetic data generation for niche domains (industry/academia)

- What: Use the text-centered dataset pipeline to bootstrap high-quality QA pairs and images for underrepresented domains (e.g., insurance forms, industrial diagrams).

- Tools/products/workflows: Data augmentation services; domain-specific instruction sets; QA generation for small, private datasets.

- Dependencies/assumptions: Diffusion/LLM licenses and costs; bias/coverage audits; fidelity of generated images to real-world patterns.

- Cost- and resource-aware deployment (SMBs, edge inference)

- What: Serve competitive VLMs with fewer tokens (≈630/≈300) to lower latency/cost and enable edge or low-GPU deployments.

- Tools/products/workflows: Quantized inference stacks; autoscaling configurations; mobile/offline assistants.

- Dependencies/assumptions: Acceptable accuracy-speed tradeoffs under token reduction; platform-specific optimization (ONNX/TensorRT, etc.).
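
To make the token-budget argument concrete, the following is a rough sketch (not taken from the paper) of how the vision-token budget changes prefill length and the quadratic self-attention term. It uses the 630- and 300-token budgets quoted in the abstract; the text-prompt length `TEXT_TOKENS` and both function names are assumptions made for illustration. The estimate covers only the attention-score term, which grows with the square of sequence length, and ignores feed-forward cost, KV-cache memory, and decoding.

```python
def prefill_tokens(vision_tokens: int, text_tokens: int) -> int:
    """Total sequence length the LLM sees at prefill time."""
    return vision_tokens + text_tokens


def relative_attention_cost(vision_tokens: int, text_tokens: int,
                            baseline_vision_tokens: int) -> float:
    """Ratio of the quadratic attention term versus a baseline budget.

    Only the attention-score term (proportional to sequence length
    squared) is modeled; this is a back-of-the-envelope estimate.
    """
    n = prefill_tokens(vision_tokens, text_tokens)
    base = prefill_tokens(baseline_vision_tokens, text_tokens)
    return (n / base) ** 2


# Assumed prompt length; real prompts vary widely.
TEXT_TOKENS = 100

for budget in (630, 300):
    seq_len = prefill_tokens(budget, TEXT_TOKENS)
    ratio = relative_attention_cost(budget, TEXT_TOKENS, baseline_vision_tokens=630)
    print(f"vision={budget:4d}  prefill={seq_len:4d}  attention cost vs 630: {ratio:.2f}x")
```

Whether the saving is worth it depends on the accuracy drop measured on the target task at the smaller budget, which this sketch does not model.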

Long-Term Applications

These opportunities leverage the paper’s core innovations—text-guided encoding, recursive question-conditioned decoding, and dual-supervised alignment—but require additional research, domain data, or safety/regulatory milestones.

- Clinical imaging and diagnostic decision support (healthcare)

- What: Question-guided radiology/pathology image triage (“Is there a pneumothorax?”), structured report drafting integrated with EMRs.

- Potential products: PACS-integrated assistants; multimodal chart-note generation.

- Dependencies/assumptions: Extensive medical training/validation; FDA/CE approvals; bias, reliability, and interpretability; integration with DICOM workflows.

- Embodied question-driven perception for robots (robotics, manufacturing, logistics)

- What: Real-time visual querying to guide manipulation and navigation (“Is the wrench behind the valve?”), with recursive alignment improving attention to task-relevant regions.

- Potential products: Warehouse pick/pack assistants; factory cobots with natural language supervision.

- Dependencies/assumptions: Video/temporal extensions; tight latency budgets; robust SLAM/controls integration; safety certification.

- Autonomous driving and ADAS perception checks (mobility)

- What: Safety checks via targeted queries (“Is there a pedestrian in the crosswalk?”) to augment perception stacks.

- Potential products: QA auditors for perception outputs; event explainability tools.

- Dependencies/assumptions: High-reliability temporal reasoning; rigorous safety cases; domain-specific training; regulator acceptance.

- Video- and multi-image reasoning assistants (media analytics, sports, meetings)

- What: Extend text-guided encoding and context-aware decoding to temporal sequences for scene and event understanding.

- Potential products: Meeting minute extraction from slides + whiteboards + video; sports play analysis.

- Dependencies/assumptions: New datasets and training for temporal coherence; compute optimization for streaming.

- Scientific literature and knowledge graph construction (academia, R&D policy)

- What: Parsing diagrams, charts, and figure captions at scale to populate structured knowledge bases.

- Potential products: Research copilots; grant review tools that verify figure claims against text.

- Dependencies/assumptions: Rights and fair use considerations; domain ontologies; stringent accuracy requirements.

- Financial risk and regulatory reporting copilots (finance)

- What: Cross-document analysis of charts, tables, and narratives to flag risk exposures and compliance issues.

- Potential products: Regulatory reporting automation; portfolio visualization explainers.

- Dependencies/assumptions: Auditability and traceability; strict hallucination controls; regulator-reviewed benchmarks.

- Edge IoT vision-language analytics (energy, smart cities, industrial IoT)

- What: On-device QA over camera feeds and instrument panels for privacy-preserving monitoring.

- Potential products: Smart meters/substation inspectors; shop-floor monitors that answer targeted queries.

- Dependencies/assumptions: Hardware acceleration; ruggedization; battery/runtime constraints; on-device privacy.

- Legal e-discovery and defensible AI workflows (legal, compliance)

- What: Multimodal search and Q&A over image-rich evidence (scans, photos, diagrams) with explainable cross-modal attention trails.

- Potential products: E-discovery suites with visual analytics; deposition preparation aids.

- Dependencies/assumptions: Chain-of-custody, provenance tracking; human-in-the-loop validation; explainability standards.

- Cross-modal retrieval and discovery (museums, media archives, retail search)

- What: Unified embedding space enables better text→image and image→text retrieval; discovery by question intent rather than keywords.

- Potential products: Multimodal search APIs; collection browsers; product recommendation engines based on visual semantics.

- Dependencies/assumptions: Calibration of embedding spaces; debiasing; robust indexing and deduplication.

- Public policy for data creation and governance

- What: Adopt text-centered synthetic data pipelines to reduce PII exposure and balance datasets; develop audit standards for multimodal alignment and hallucination rates.

- Potential products: Procurement guidelines; certification checklists for multimodal datasets/models.

- Dependencies/assumptions: Community consensus on metrics; reproducibility and documentation norms.

- Multilingual/low-resource multimodal assistants (global education/public services)

- What: Robust cross-lingual diagram and chart understanding; localized deployments for underserved languages.

- Potential products: Government service portals; educational tools in multiple scripts.

- Dependencies/assumptions: Data and evaluation in target languages/scripts; OCR generalization across scripts; cultural/linguistic bias audits.
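
The retrieval-oriented items above (multimodal RAG, cross-modal search) come down to ranking stored image or figure embeddings against a query embedding in a shared space. Below is a minimal sketch of that ranking step using cosine similarity. The vectors are hand-written toy values, not FUSION outputs, and the item names, `cosine`, and `top_k` are hypothetical; a real system would obtain embeddings from the model and would likely use a vector database rather than a Python dictionary.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat zero vectors as dissimilar to everything
    return dot / (norm_a * norm_b)


def top_k(query_vec: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the ids of the k stored items most similar to the query."""
    scored = [(cosine(query_vec, vec), item_id) for item_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [item_id for _, item_id in scored[:k]]


# Toy index: in practice these would be image/figure embeddings
# projected into the text space (illustrative values only).
index = {
    "chart_q3_revenue": [0.9, 0.1, 0.0],
    "slide_roadmap": [0.1, 0.8, 0.2],
    "whiteboard_photo": [0.0, 0.2, 0.9],
}

# Toy embedding of a text question about revenue.
query = [0.8, 0.2, 0.1]
results = top_k(query, index, k=2)
print(results)
```

Calibration and deduplication, listed as dependencies above, would sit around this step: thresholds on the similarity score and near-duplicate filtering before results reach the decoder.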

Notes on Feasibility and Dependencies

- Domain adaptation: Safety-critical sectors (healthcare, AVs, industrial control) require domain-specific data, rigorous validation, and regulatory approval.

- Data quality: The text-centered synthesis pipeline is powerful but can introduce bias or artifacts; human curation and targeted real-world fine-tuning are advisable.

- Compute and latency: FUSION’s reduced token counts enable lower-cost inference, but real-time applications still need optimization (quantization, compilers, hardware accelerators).

- Privacy and security: Document and screen understanding often involve PII; on-device or confidential computing patterns are recommended alongside robust logging and access controls.

- Reliability and explainability: The dual-supervised alignment and question-conditioned attention can support interpretability, but explicit explanation tooling and uncertainty estimates should be added for high-stakes use.
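
The human-in-the-loop and uncertainty points above can be sketched as a simple gate that sends low-confidence answers to a reviewer. This is an illustrative pattern only, not something the paper describes: the `Answer` type, the `route` function, the 0.8 threshold, and the assumption that a calibrated confidence score in [0, 1] is available are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    confidence: float  # assumed calibrated score in [0, 1] from an upstream step


def route(answer: Answer, threshold: float = 0.8) -> str:
    """Decide whether an answer is released automatically or reviewed by a person."""
    if not 0.0 <= answer.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    return "auto" if answer.confidence >= threshold else "human_review"


# Hypothetical answers from a document Q&A assistant.
print(route(Answer("Invoice total is 1,240.00", confidence=0.95)))
print(route(Answer("Connector J3 appears loose", confidence=0.55)))
```

In high-stakes settings the threshold would be chosen from validation data per task, and some categories of question might always go to review regardless of score.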
