- The paper proposes an iterative framework that incrementally refines implicit neural representations to capture high-frequency details and mitigate noise.

- The method leverages a base INR and an auxiliary network in a stepwise reconstruction process, improving performance on image fitting, super-resolution, and denoising tasks.

- Experiments demonstrate significant PSNR gains and enhanced fidelity, setting a new benchmark for robustness and detail preservation in neural signal reconstruction.

Iterative Implicit Neural Representations

Introduction

Implicit Neural Representations (INRs) have become integral to signal processing and computer vision. They model a signal as a continuous function of its coordinates, realized by a neural network such as a multilayer perceptron (MLP). However, traditional INRs struggle to capture fine details and to handle noise, because they are biased toward low-frequency components. The paper "I-INR: Iterative Implicit Neural Representations" proposes the Iterative Implicit Neural Representations (I-INRs) framework, which addresses these issues by refining the reconstruction iteratively.
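To make the idea of "a signal as a continuous function realized by an MLP" concrete, here is a minimal NumPy sketch of a coordinate network's forward pass. It is illustrative only and not the paper's architecture: the helper names (`make_grid`, `init_mlp`, `inr_forward`), the layer sizes, the sine activation, and the frequency scale `omega0` are all assumptions, and the weights are random rather than trained.

```python
import numpy as np

def make_grid(height, width):
    """Return (height*width, 2) pixel coordinates normalized to [-1, 1]."""
    ys = np.linspace(-1.0, 1.0, height)
    xs = np.linspace(-1.0, 1.0, width)
    yy, xx = np.meshgrid(ys, xs, indexing="ij")
    return np.stack([xx.ravel(), yy.ravel()], axis=-1)

def init_mlp(sizes, rng):
    """Random (weight, bias) pairs for consecutive layer sizes (hypothetical init)."""
    return [
        (rng.uniform(-1.0, 1.0, (m, n)) / np.sqrt(m), np.zeros(n))
        for m, n in zip(sizes[:-1], sizes[1:])
    ]

def inr_forward(params, coords, omega0=30.0):
    """Map coordinates to signal values with a sine-activated MLP."""
    w, b = params[0]
    h = np.sin(omega0 * (coords @ w + b))   # first layer, scaled frequency (assumed)
    for w, b in params[1:-1]:
        h = np.sin(h @ w + b)               # hidden layers
    w, b = params[-1]
    return h @ w + b                        # linear output, e.g. RGB values

rng = np.random.default_rng(0)
params = init_mlp([2, 16, 16, 3], rng)      # 2-D coordinate in, 3 channels out
coords = make_grid(4, 5)
values = inr_forward(params, coords)        # one 3-channel value per coordinate
```

Because the network takes coordinates rather than pixel indices, it can be queried on a denser grid than the one it was fitted on, which is what makes tasks such as super-resolution natural for INRs.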

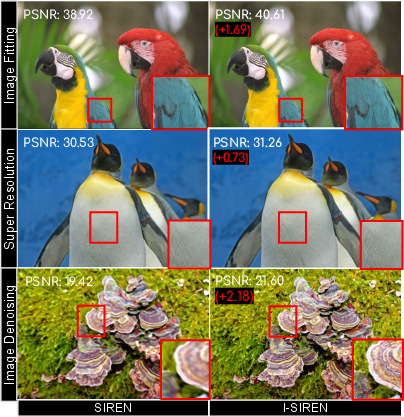

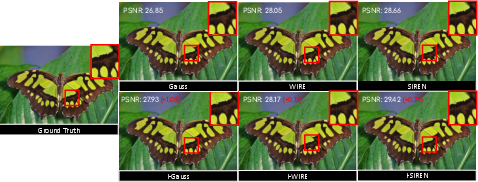

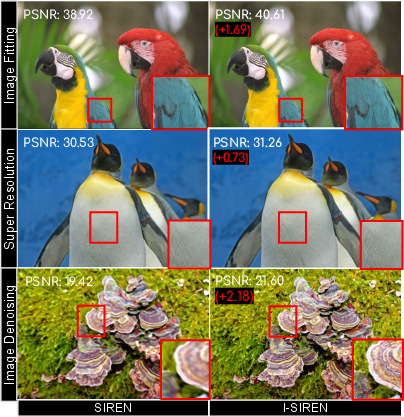

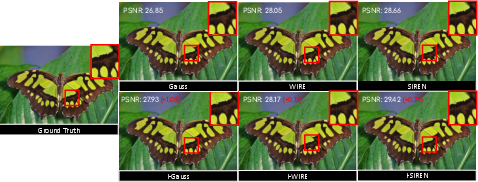

Figure 1: Effectiveness of the proposed methods across multiple tasks, showcasing improvements in detail preservation and high-frequency reconstruction.

Proposed Method

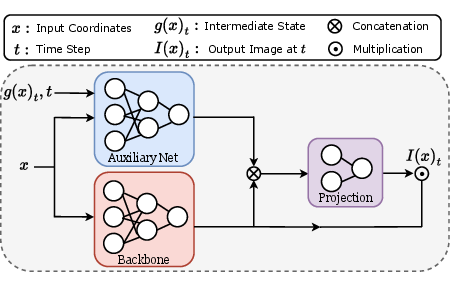

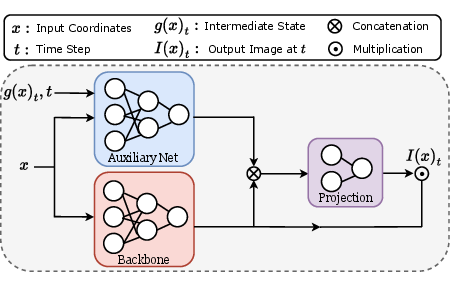

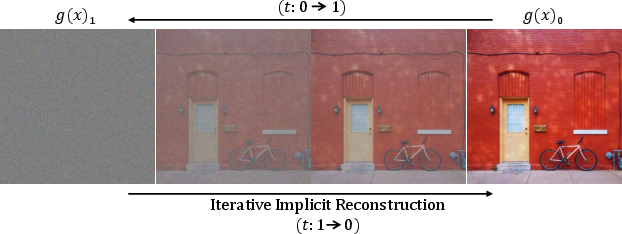

I-INRs are designed as an enhancement that plugs into existing INRs without substantial modifications. The framework reconstructs a signal over multiple steps rather than in a single pass, which helps it capture high-frequency details and improves robustness to noise. The formulation starts from an initial state Z and progressively moves the reconstruction toward the target signal.

Figure 2: (a) Proposed architecture of the I-INR model featuring a Base INR and an Auxiliary Network. (b) Iterative reconstruction process.

This iterative approach can be mathematically represented using a linear interpolation between the target signal and a known initial state, evolving as follows:

$$g(x)_t = I(x)\,(1 - t) + Z\,t,$$

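where I(x) is the target signal at coordinate x, t is the interpolation parameter, and Z is the initial state, which can be sampled from a distribution such as Gaussian noise. At t = 0 the expression equals the target I(x), and at t = 1 it equals Z.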
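The interpolation above can be sketched in a few lines of NumPy. The `interpolate` function follows the formula directly. The `iterative_reconstruct` loop is an assumption rather than the paper's confirmed procedure: it walks t from 1 down to 0 and, at each step, re-interpolates between the current estimate of the signal and the implied initial state. The callable `predict_signal` is a hypothetical stand-in for the trained Base INR and Auxiliary Network.

```python
import numpy as np

def interpolate(signal, z, t):
    """g_t = signal * (1 - t) + z * t, as in the formula above."""
    return (1.0 - t) * signal + t * z

def iterative_reconstruct(predict_signal, z, num_steps):
    """Move from the initial state z (t = 1) toward the signal (t = 0).

    predict_signal(g, t) is a hypothetical model call that returns an
    estimate of the target signal from the current state g at step t.
    The update rule here is an illustrative assumption.
    """
    g = z.copy()
    ts = np.linspace(1.0, 0.0, num_steps + 1)
    for t_cur, t_next in zip(ts[:-1], ts[1:]):
        est = predict_signal(g, t_cur)                # current signal estimate
        z_est = (g - (1.0 - t_cur) * est) / t_cur     # initial state implied by g and est
        g = interpolate(est, z_est, t_next)           # re-interpolate at the next step
    return g

# Usage with an oracle predictor that always returns the true signal.
rng = np.random.default_rng(0)
target = rng.random((8, 8))
z0 = rng.standard_normal((8, 8))
recon = iterative_reconstruct(lambda g, t: target, z0, num_steps=10)
```

With a perfect predictor the loop lands exactly on the target at t = 0; with a learned predictor, each step only has to correct the remaining error, which is the intuition behind refining the reconstruction over several steps.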

Experiments and Results

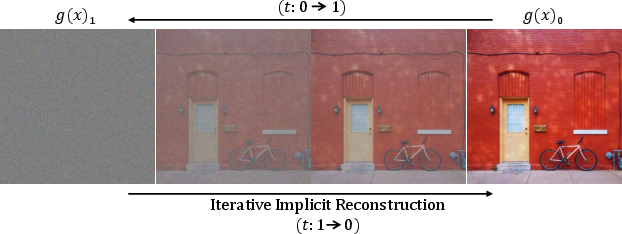

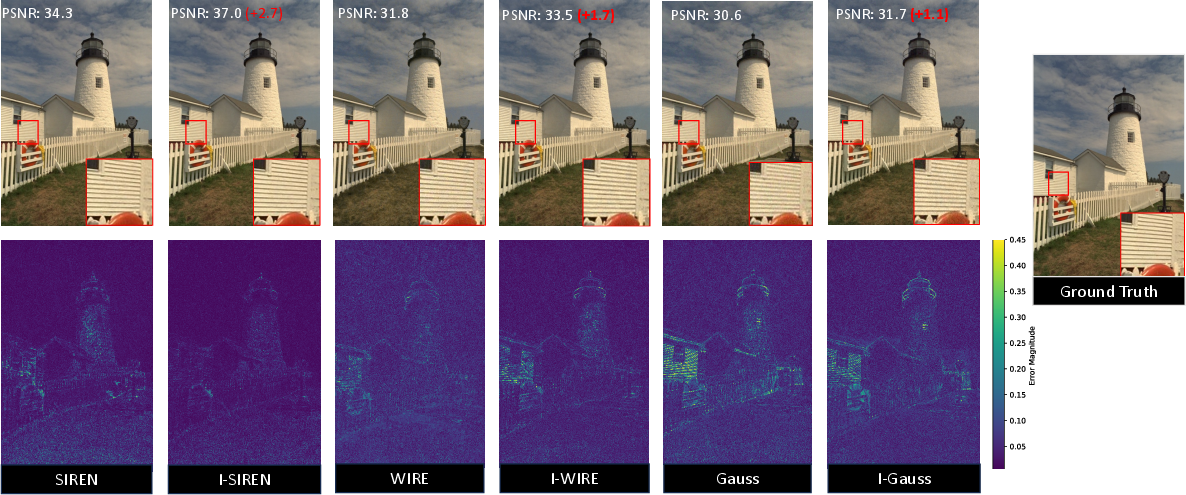

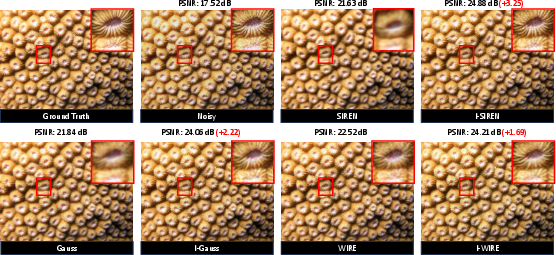

Extensive experimentation demonstrates that I-INRs significantly outperform baseline models like SIREN, WIRE, and Gauss across tasks such as image fitting, super-resolution, and denoising. For image fitting, the iterative models enhanced fidelity and reduced reconstruction errors across test datasets.

Figure 3: Improved image fitting results using iterative models with reported PSNR gains.
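For readers unfamiliar with the metric, PSNR (peak signal-to-noise ratio) is computed from the mean squared error between a reference and a reconstruction. The sketch below uses the standard definition; the function name `psnr` and the default peak value of 1.0 (signals scaled to [0, 1]) are assumptions, and the paper's exact evaluation protocol is not specified here.

```python
import numpy as np

def psnr(reference, estimate, max_val=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_val^2 / MSE)."""
    reference = np.asarray(reference, dtype=np.float64)
    estimate = np.asarray(estimate, dtype=np.float64)
    mse = np.mean((reference - estimate) ** 2)
    if mse == 0.0:
        return float("inf")   # identical signals
    return float(10.0 * np.log10(max_val ** 2 / mse))

# Usage: compare a noisy copy against a clean reference image.
rng = np.random.default_rng(0)
clean = rng.random((16, 16))
noisy = np.clip(clean + 0.05 * rng.standard_normal((16, 16)), 0.0, 1.0)
score = psnr(clean, noisy)
```

Higher values mean a closer match. Because the scale is logarithmic, a gain of about 3 dB corresponds to roughly halving the mean squared error.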

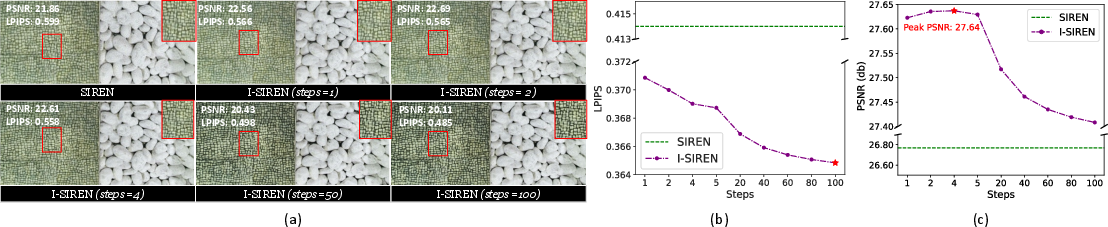

The super-resolution tasks show consistent improvements in perceptual quality metrics alongside fidelity, illustrating the capability of I-INRs to generalize beyond trained scales.

Figure 4: Visual comparison of super-resolution results, highlighting fewer artifacts and better detail preservation with iterative methods.

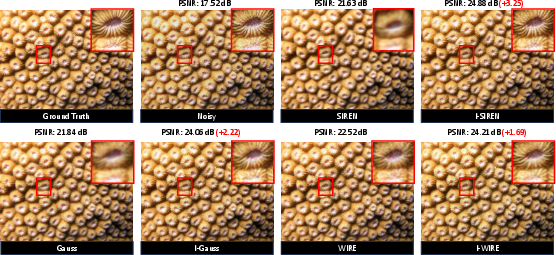

Similarly, in denoising, I-INRs exhibit a marked reduction in artifacts compared to traditional methods.

Figure 5: Denoising results where iterative approaches achieve superior detail preservation and artifact reduction.

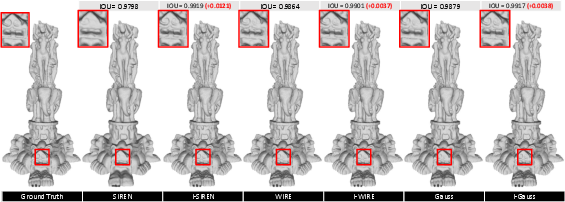

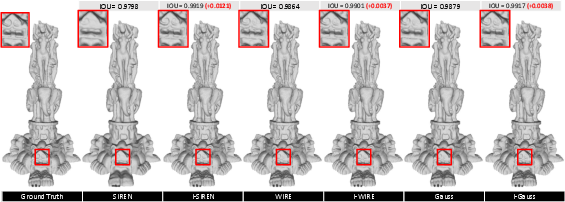

The reconstruction quality for 3D occupancy further supports I-INR's superiority in capturing intricate spatial details.

Figure 6: Iterative methods offer enhanced geometric detail in 3D occupancy reconstruction.

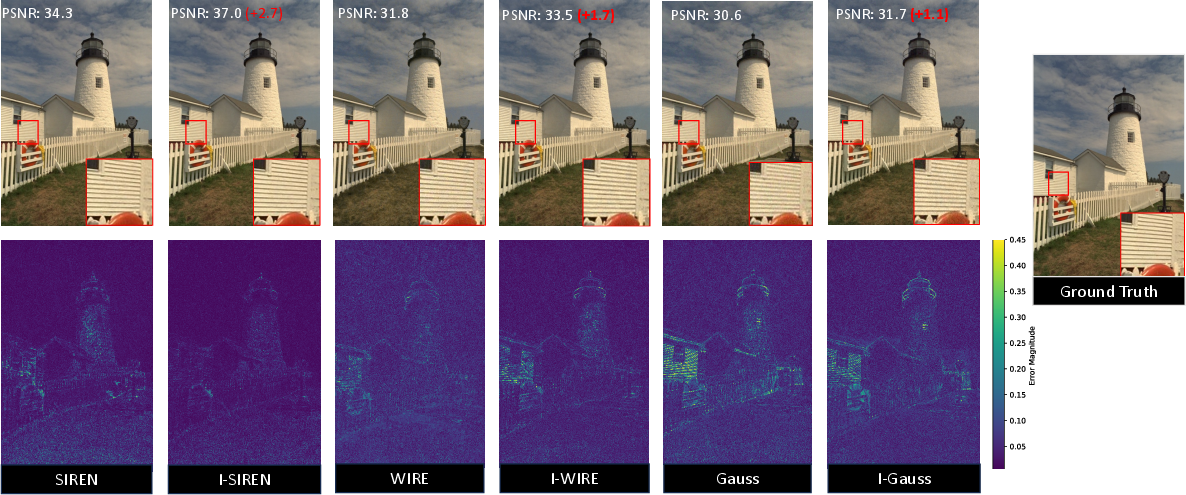

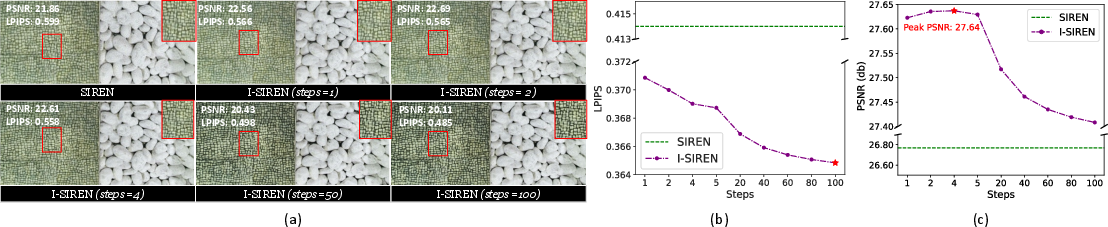

Ablation Studies

The ablation studies examine how I-INRs behave across different settings, including the choice of initial state and its effect on reconstruction quality. They also show that the number of iterations matters: more reconstruction steps improve PSNR and perceptual quality, at the cost of additional computation.

Figure 7: Impact of reconstruction steps on super-resolution performance, showing improved perceptual quality with increased iteration steps.

Conclusion

In conclusion, I-INRs advance implicit neural signal representation by refining the reconstruction process iteratively. The framework addresses two long-standing limitations of traditional INRs: difficulty capturing high-frequency details and sensitivity to noise. Its iterative design is promising for tasks that demand precise reconstruction from noisy data.