- The paper presents FedE4RAG, a framework that uses federated, privacy-preserving embedding learning to train retrievers for private, localized RAG systems.

- It shows that federated embedding pre-training, with model parameters protected by homomorphic encryption, improves retrieval accuracy to a level approaching that of centralized training.

- The framework offers a scalable approach to collaborative retriever training that supports compliance with privacy regulations, with potential use in sensitive applications.

Privacy-Preserving Federated Embedding Learning for Localized Retrieval-Augmented Generation

Introduction

The study "Privacy-Preserving Federated Embedding Learning for Localized Retrieval-Augmented Generation" (2504.19101) introduces FedE4RAG, a framework for training the retrievers of Retrieval-Augmented Generation (RAG) systems in a privacy-preserving manner. It targets three challenges in deploying private RAG systems: scarce training data on any single client, the need for strong data privacy, and the limited generalization of localized retrievers. Federated learning is the mechanism it uses to address all three.

Methodology and Framework Overview

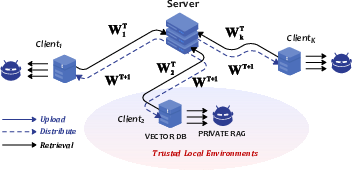

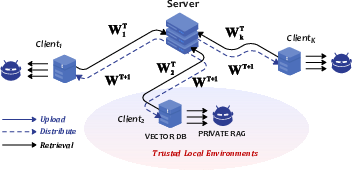

The FedE4RAG framework enables distributed training across client environments while keeping sensitive data on each client. It uses federated learning (FL), in which each client trains on its own data and shares only model parameters, never raw data. Homomorphic encryption is layered on top so that the shared model parameters stay confidential during training, including while they are being aggregated (Figure 1).

Figure 1: Schematic overview of our general Federated Embedding Learning for the private localized RAG systems. Different clients provide complementary data, while each client need not expose their data through federated learning.
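To make the combination of federated aggregation and homomorphic encryption concrete, here is a minimal Python sketch of one aggregation round. It is not FedE4RAG's implementation: the summary does not state which encryption scheme, key arrangement, or aggregation rule the paper uses. The sketch assumes textbook Paillier encryption (which is additively homomorphic) with tiny, insecure demo primes, sample-weighted federated averaging (FedAvg), and a key holder other than the server. All function names are hypothetical.

```python
import math
import random

SCALE = 10_000  # fixed-point precision used to encode floats as integers


def keygen(p: int = 7919, q: int = 104729):
    """Textbook Paillier keys from tiny primes (demo only, NOT secure)."""
    n = p * q
    lam = math.lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # valid shortcut because we fix g = n + 1
    return n, (lam, mu)


def encrypt(n: int, m: int) -> int:
    n2 = n * n
    r = random.randrange(1, n)
    while math.gcd(r, n) != 1:  # r must be invertible mod n
        r = random.randrange(1, n)
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2  # c = g^m * r^n mod n^2


def decrypt(n: int, priv, c: int) -> int:
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n  # m = L(c^lam mod n^2) * mu mod n


def encode(x: float, n: int) -> int:
    return round(x * SCALE) % n  # negative values wrap around mod n


def decode(m: int, n: int) -> float:
    if m > n // 2:  # the upper half of Z_n represents negative values
        m -= n
    return m / SCALE


def encrypted_fedavg(client_params, client_sizes, n, priv):
    """Sample-weighted FedAvg in which the server handles only ciphertexts."""
    n2 = n * n
    # Client side: scale parameters by the local sample count, then encrypt.
    uploads = [
        [encrypt(n, encode(size * w, n)) for w in params]
        for params, size in zip(client_params, client_sizes)
    ]
    # Server side: multiplying ciphertexts adds the underlying plaintexts.
    aggregate = [1] * len(client_params[0])  # 1 is a valid encryption of 0
    for upload in uploads:
        aggregate = [(a * c) % n2 for a, c in zip(aggregate, upload)]
    # Key holder (assumed to be a client, not the server): decrypt, normalize.
    total = sum(client_sizes)
    return [decode(decrypt(n, priv, c), n) / total for c in aggregate]


if __name__ == "__main__":
    n, priv = keygen()
    params = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.0]]  # one vector per client
    sizes = [100, 300, 600]  # local training-set sizes
    print(encrypted_fedavg(params, sizes, n, priv))  # approximately [-0.1, 0.1]
```

Because Paillier is only additively homomorphic, each client multiplies its parameters by its own sample count before encrypting, so the server can form the weighted sum without seeing any individual update. A real deployment would use a vetted library and keys of at least 2048 bits.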

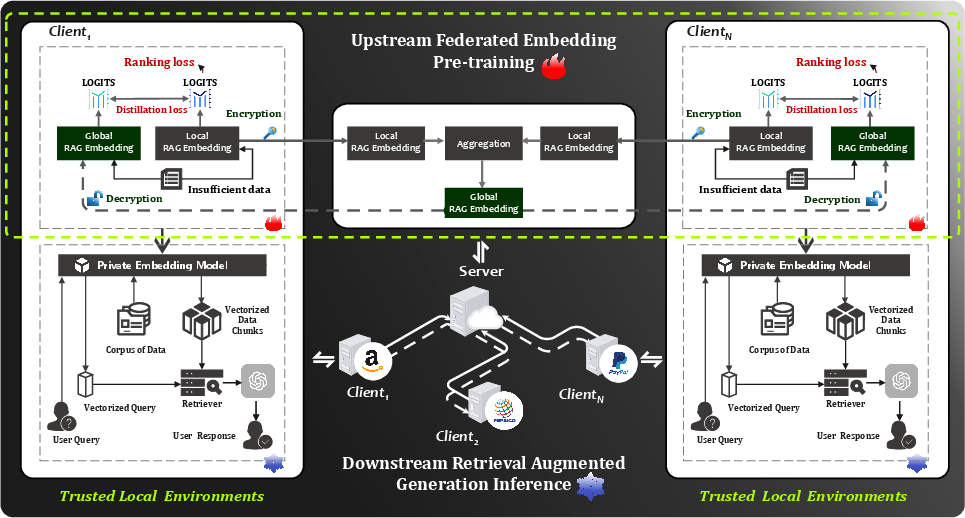

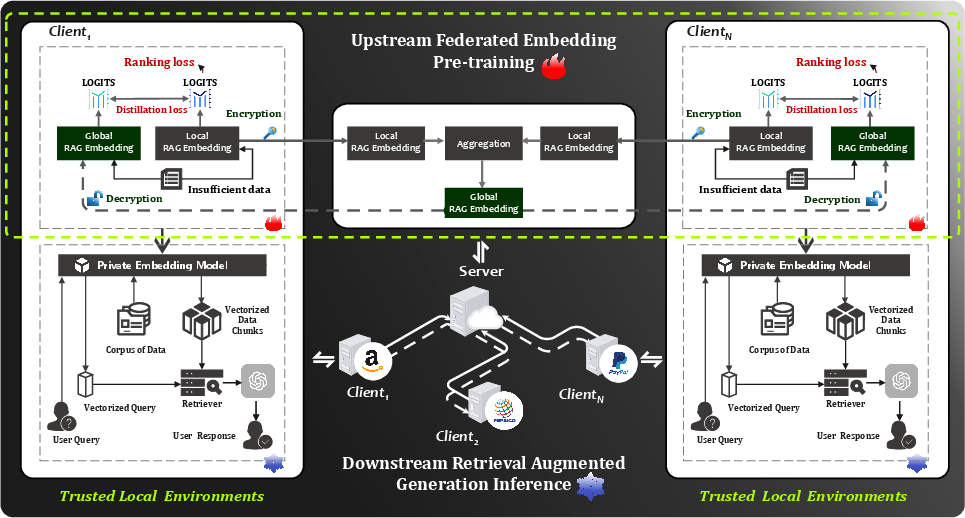

The framework consists of two primary phases (Figure 2):

- Upstream Federated Embedding Pre-Training: Client-side RAG retrievers are trained collaboratively using federated, privacy-preserving knowledge distillation. A central server aggregates the clients' model parameters and returns the result, improving each local model without any raw data leaving its client.

- Downstream Retrieval-Augmented Generation (RAG) Inference: Each client performs retrieval and answer generation in its own secure, local environment, using the pre-trained embedding model as a private retriever over its documents and generating responses from the retrieved content.

Figure 2: Schematic representation of the Federated Embedding Learning (FedE4RAG) framework, illustrating the two-phase process: upstream federated embedding pre-training and downstream retrieval-augmented generation inference.
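The two phases can be illustrated with a small Python sketch. The summary does not specify FedE4RAG's distillation objective or retrieval scoring, so this assumes one common choice for each: the upstream distillation loss is the KL divergence between a teacher's and a student's softmax distributions over query-to-chunk cosine similarities, and downstream retrieval ranks a client's local chunks by cosine similarity. The embeddings are hand-written vectors standing in for model outputs, and all function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def softmax(scores, temperature=1.0):
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp((s - top) / temperature) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_q, teacher_chunks, student_q, student_chunks, temperature=1.0):
    """Upstream (assumed form): KL(teacher || student) over chunk-similarity distributions.

    teacher_* and student_* are the same query and chunks embedded by each model.
    """
    p = softmax([cosine(teacher_q, c) for c in teacher_chunks], temperature)
    q = softmax([cosine(student_q, c) for c in student_chunks], temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def retrieve_top_k(query_emb, chunk_embs, chunks, k=2):
    """Downstream: rank a client's local chunks by cosine similarity to the query."""
    order = sorted(range(len(chunks)), key=lambda i: cosine(query_emb, chunk_embs[i]), reverse=True)
    return [chunks[i] for i in order[:k]]


if __name__ == "__main__":
    chunks = ["refund policy", "office hours", "warranty terms"]
    chunk_embs = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.0], [0.7, 0.0, 0.7]]
    query = [1.0, 0.0, 0.1]
    print(retrieve_top_k(query, chunk_embs, chunks, k=2))  # ['refund policy', 'warranty terms']
    print(distillation_loss(query, chunk_embs, query, chunk_embs))  # 0.0 when student matches teacher
```

In a deployment, the top-k chunks would be inserted into the prompt of a locally hosted LLM; that generation step is omitted here.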

Experimental Results

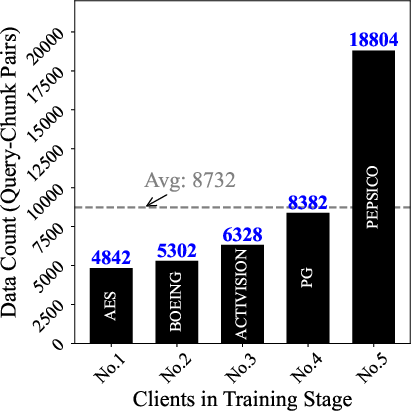

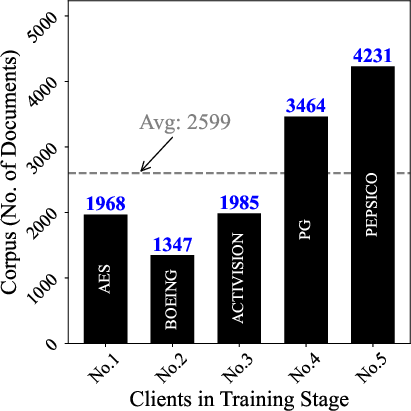

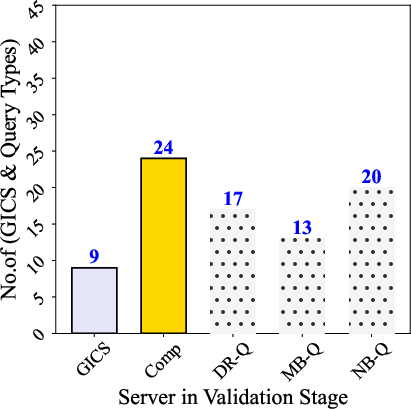

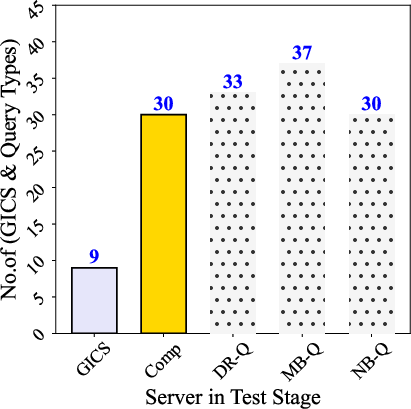

The empirical evaluation reports improved retrieval accuracy for FedE4RAG across the evaluated datasets while keeping client data private. The experiments draw on query-chunk pairs for upstream embedding pre-training and on documents contributed by several client companies (Figure 3). A key finding is that federated training can reach retrieval performance approaching, and in some cases surpassing, that of centralized training, without pooling raw data.

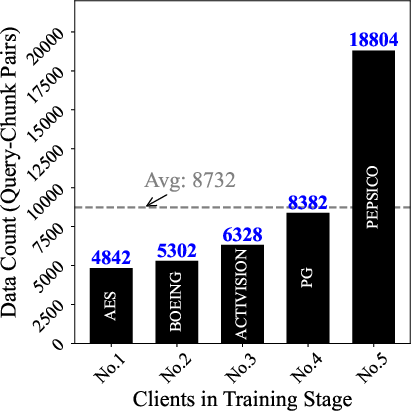

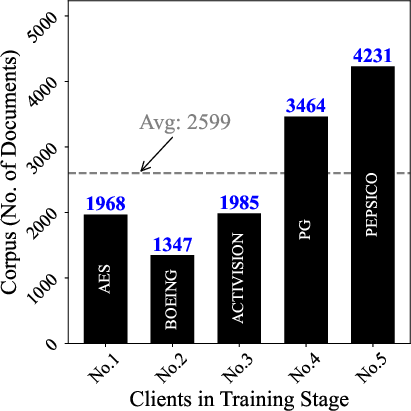

Figure 3: The number of query-chunk pairs in upstream embedding pretraining, and the number of documents provided by each client company.

Implications and Future Directions

This study has both practical and theoretical implications for RAG systems. Practically, it lets enterprises train retrievers collaboratively while keeping raw data on their own infrastructure, which reduces the risk of data exposure and supports compliance with privacy regulations such as GDPR. Theoretically, it presents a scalable, secure federated learning approach that could inform further work on privacy-preserving AI.

The paper points to future work on improving the communication efficiency of federated learning and on extending the approach to other domains, such as healthcare, where data is especially sensitive.

Conclusion

FedE4RAG is a notable contribution to privacy-preserving RAG, showing a practical way to deploy RAG systems without exposing client data. By combining federated learning with homomorphic encryption, the work addresses immediate privacy concerns and lays groundwork for secure AI deployment in sensitive environments.