- The paper introduces two novel 1D CNN architectures, ArrhythmiNet V1 and V2, achieving 99% and 98% accuracy respectively on the MIT-BIH dataset.

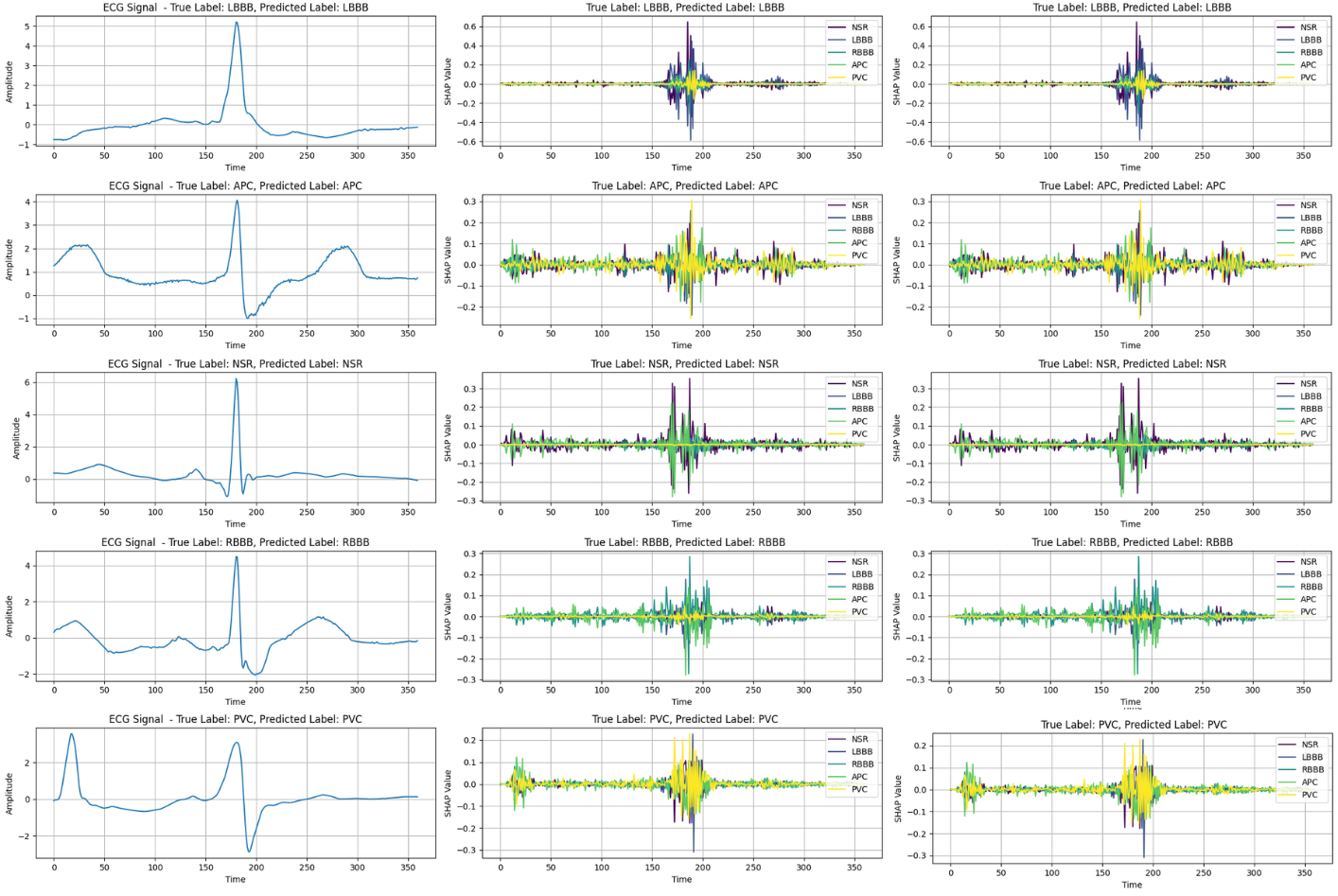

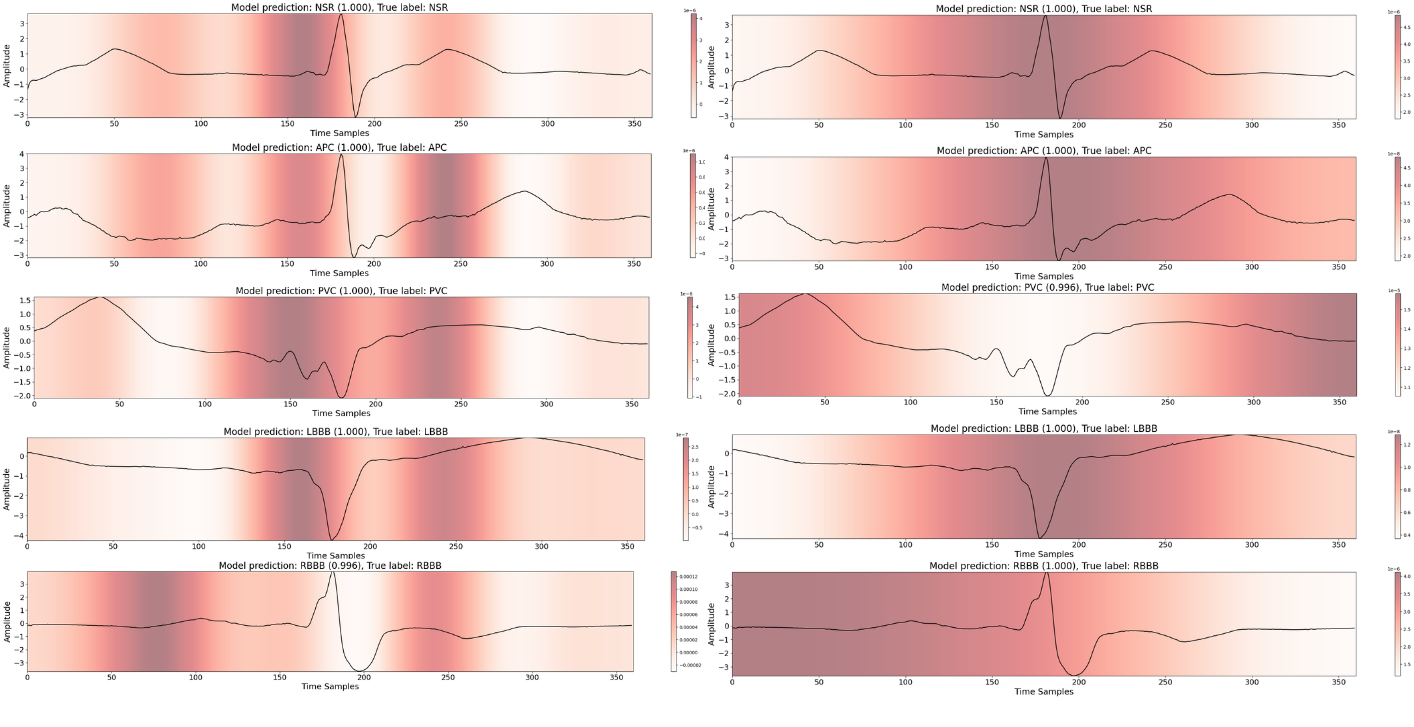

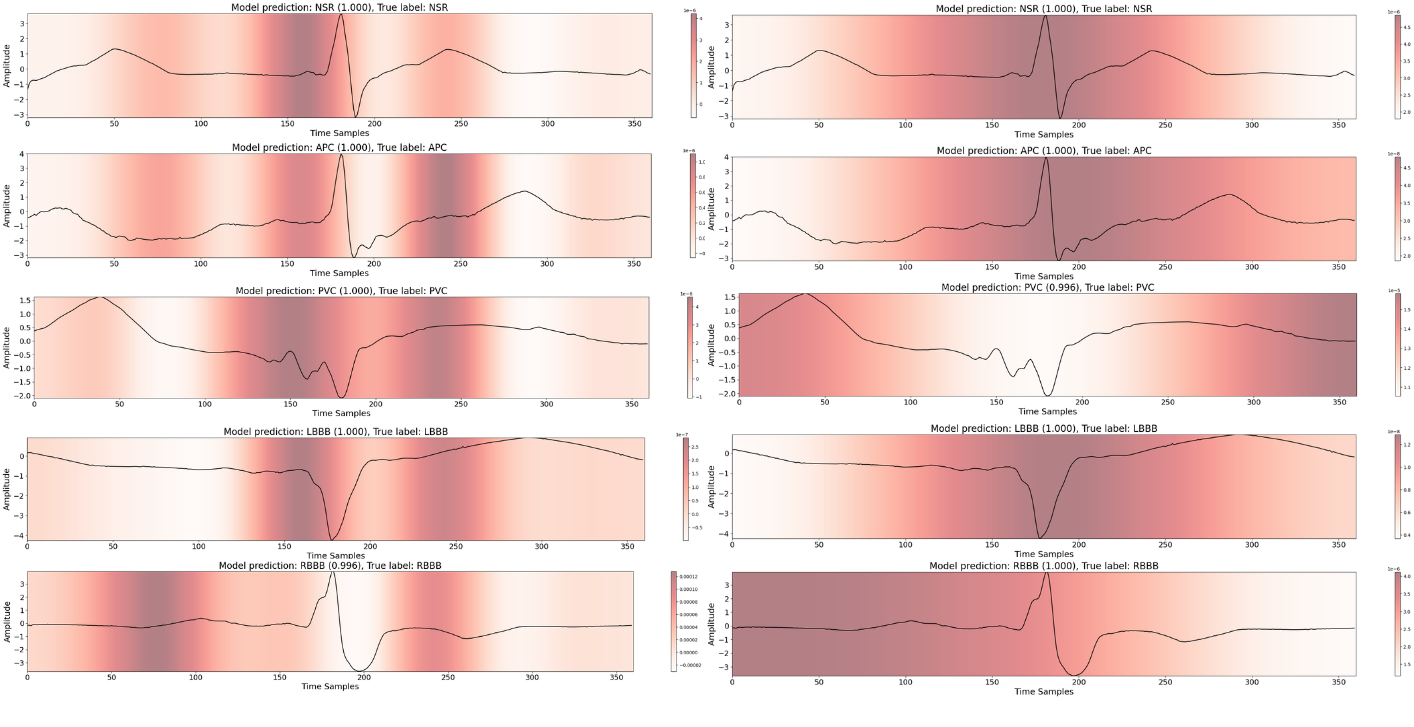

- It integrates explainable AI techniques like SHAP and Grad-CAM to visually highlight key ECG features such as the QRS complex and T-wave.

- The lightweight design enables real-time, edge-device deployment, facilitating timely diagnosis in remote healthcare monitoring systems.

ArrhythmiaVision: Resource-Conscious Deep Learning Models with Visual Explanations for ECG Arrhythmia Classification

Introduction

The paper "ArrhythmiaVision: Resource-Conscious Deep Learning Models with Visual Explanations for ECG Arrhythmia Classification" (2505.03787) addresses the challenge of accurately classifying cardiac arrhythmias from electrocardiogram (ECG) signals with resource-efficient deep learning models. Early diagnosis of arrhythmias is critical for preventing life-threatening cardiac events, but manual ECG interpretation requires considerable time and expertise. The study introduces two 1D convolutional neural network (CNN) architectures, ArrhythmiNet V1 and V2, designed for deployment on edge devices with limited computational resources. The models achieve high classification accuracy (99% and 98%) with small memory footprints (303.18 KB and 157.76 KB), making them suitable for real-time monitoring in resource-constrained environments.

Methodology

Dataset and Preprocessing

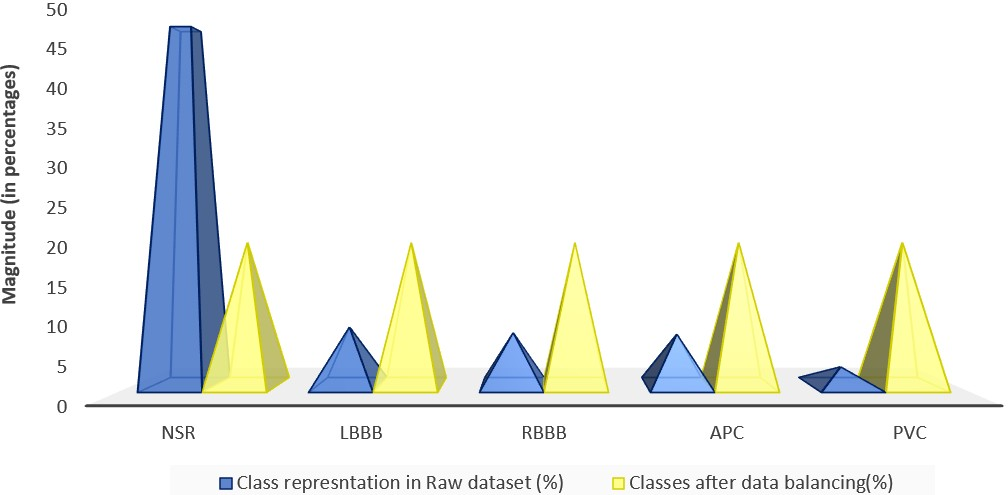

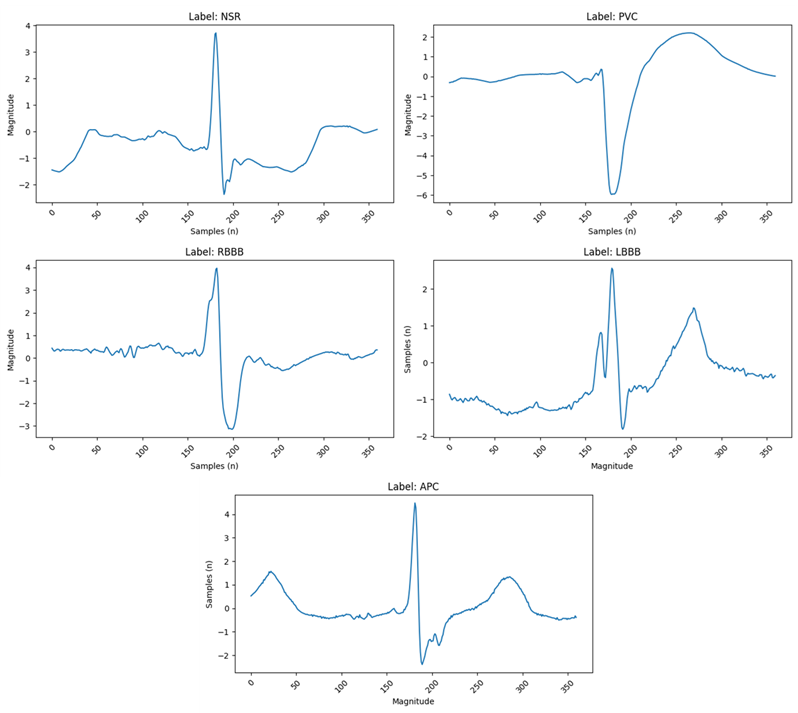

The research uses the MIT-BIH Arrhythmia Database, a standard benchmark for arrhythmia classification. The database contains approximately 110,000 annotated ECG beats sampled at 360 Hz, and the study considers five beat classes: Normal Sinus Rhythm, Left Bundle Branch Block, Right Bundle Branch Block, Atrial Premature Contraction, and Premature Ventricular Contraction.

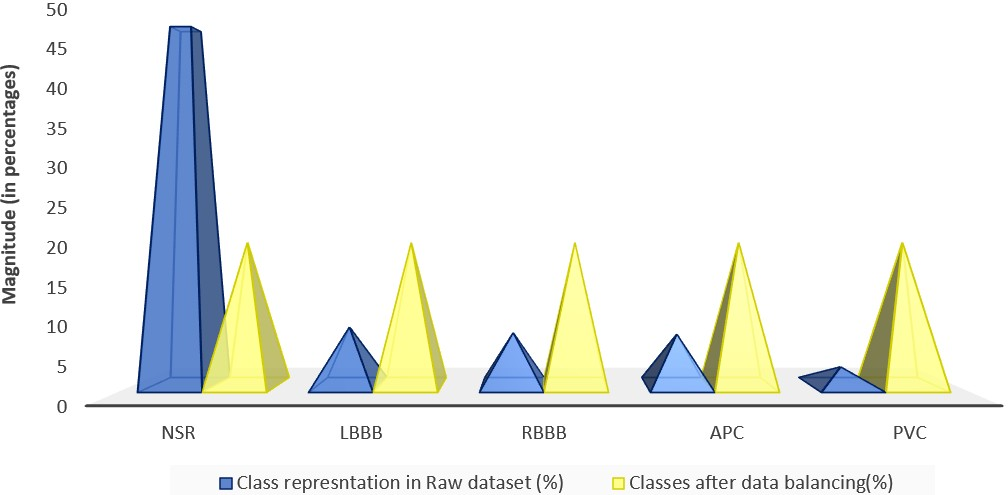

Figure 1: (Left) Distribution of the unbalanced raw MIT-BIH dataset. (Right) Distribution after applying balancing techniques.

The dataset's inherent class imbalance was addressed through oversampling minority classes and undersampling majority classes, ensuring that every class was well-represented during training. The ECG signals were denoised using a wavelet transform and subsequently normalized before model training.
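The balancing and normalization steps described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the function names `balance_classes` and `zscore_per_beat`, the fixed per-class target, the per-beat z-score normalization, and the toy data are all assumptions. Wavelet denoising is omitted here; it would typically be done with a wavelet library before normalization.

```python
import numpy as np

def balance_classes(X, y, target_per_class, seed=0):
    """Resample so that every class ends up with `target_per_class` beats.

    Hypothetical helper: large classes are undersampled without replacement,
    small classes are oversampled with replacement.
    """
    rng = np.random.default_rng(seed)
    picked = []
    for label in np.unique(y):
        idx = np.flatnonzero(y == label)
        replace = len(idx) < target_per_class  # oversample only when needed
        picked.append(rng.choice(idx, size=target_per_class, replace=replace))
    order = rng.permutation(np.concatenate(picked))  # shuffle classes together
    return X[order], y[order]

def zscore_per_beat(X, eps=1e-8):
    """Normalize each beat (row) to zero mean and unit variance."""
    mean = X.mean(axis=1, keepdims=True)
    std = X.std(axis=1, keepdims=True)
    return (X - mean) / (std + eps)

# Toy data standing in for segmented beats (values are made up).
rng = np.random.default_rng(1)
X = rng.normal(size=(1100, 180))
y = np.array([0] * 1000 + [1] * 80 + [2] * 20)

X_bal, y_bal = balance_classes(X, y, target_per_class=200)
X_norm = zscore_per_beat(X_bal)
print(np.bincount(y_bal))  # [200 200 200]
```

Oversampling with replacement duplicates minority beats, so in practice the resampling would be applied to the training split only to avoid leaking duplicates into the test set.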

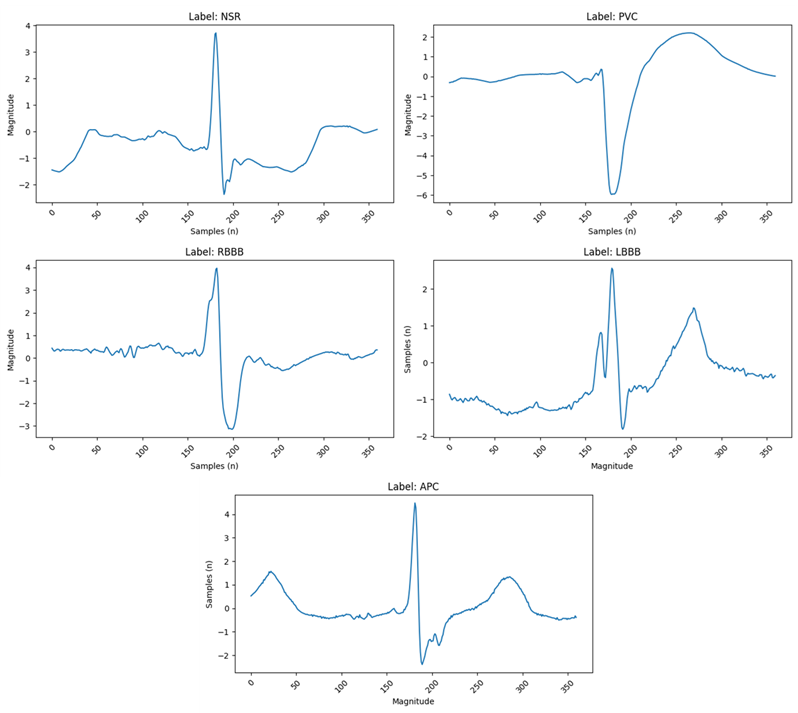

Figure 2: (Top Left) Normal Sinus Rhythm, the standard ECG signal exhibiting all regular morphological features. (Top Right) Premature Ventricular Contraction, characterized by an early contraction of the ventricles. (Middle Left) Right Bundle Branch Block. (Middle Right) Left Bundle Branch Block. (Bottom) Atrial Premature Contraction.

Architectural Design

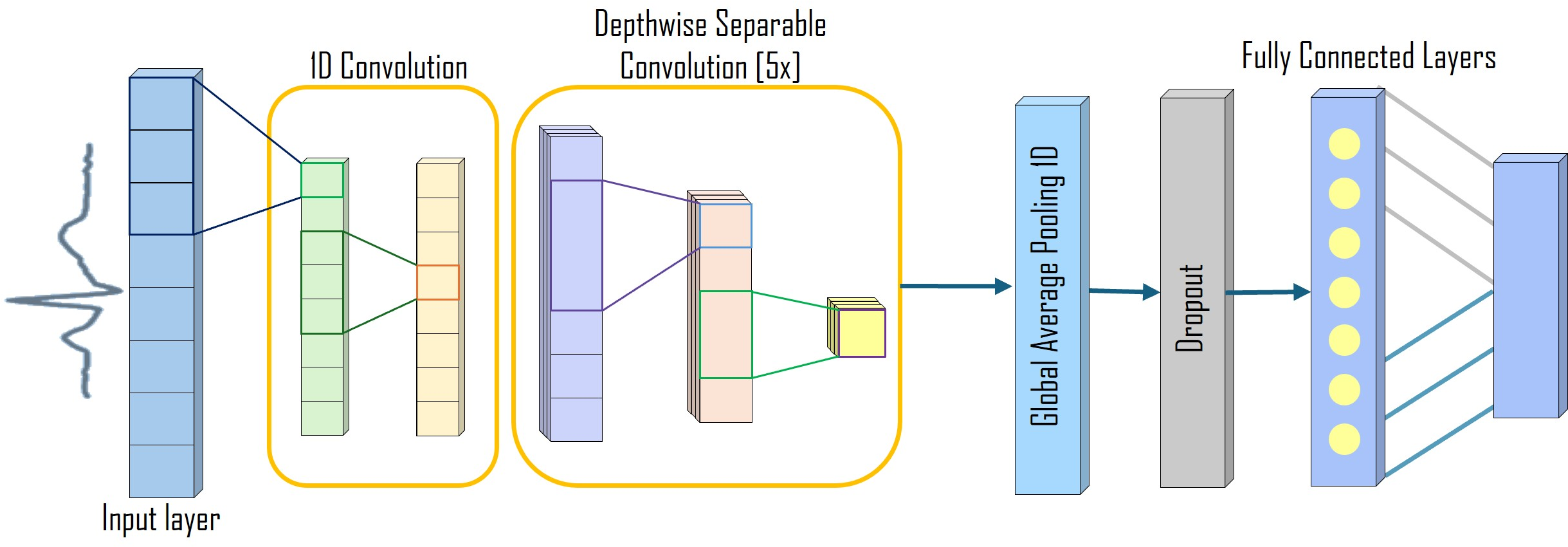

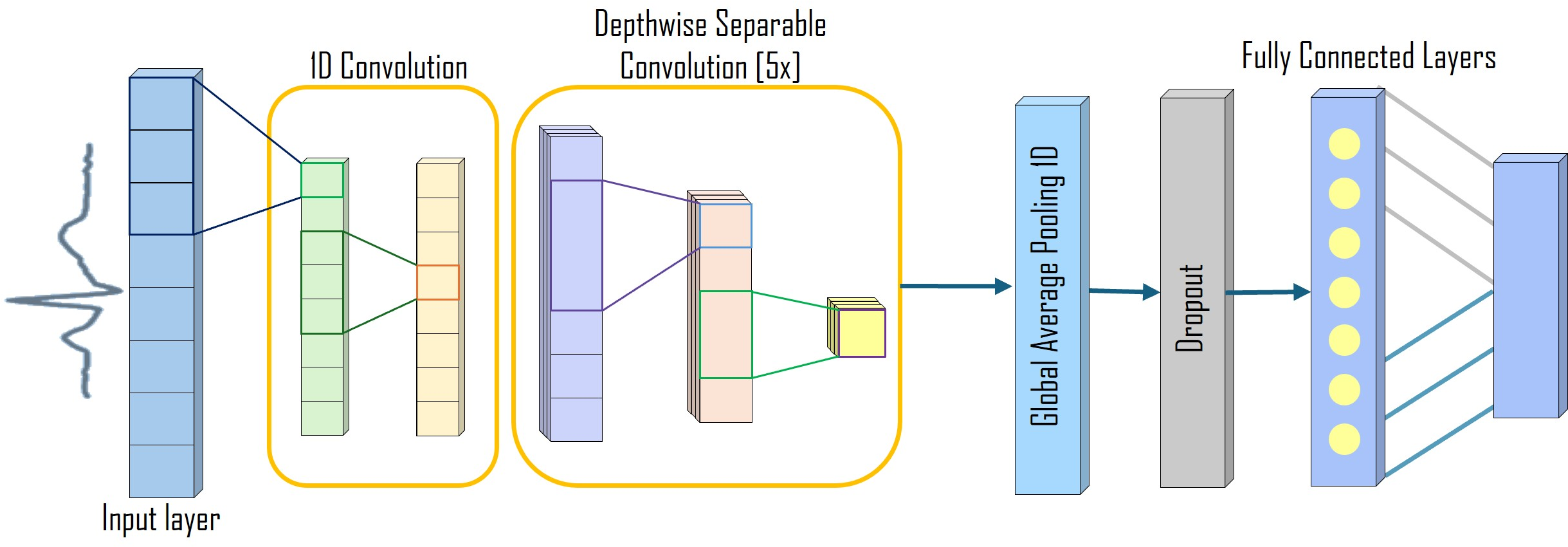

ArrhythmiNet V1 and V2 leverage depthwise separable convolutions, inspired by MobileNet structures, to minimize computational load while preserving spatial and temporal ECG features crucial for arrhythmia detection. ArrhythmiNet V1 features five depthwise separable convolution blocks, achieving high classification accuracy with a modest model size of 303.18 KB.

Figure 3: ArrhythmiNet V1 architecture featuring five depthwise separable convolution blocks.
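To see why depthwise separable convolutions reduce model size, the parameter counts of a standard and a separable 1D convolution can be compared directly. The channel widths and kernel size below are illustrative assumptions, not values from the paper, and biases are ignored.

```python
def standard_conv1d_params(c_in, c_out, k):
    """Weights in a standard 1D convolution: one k-tap filter per (in, out) pair."""
    return k * c_in * c_out

def separable_conv1d_params(c_in, c_out, k):
    """Weights in a depthwise separable 1D convolution."""
    depthwise = k * c_in        # one k-tap filter per input channel
    pointwise = c_in * c_out    # 1x1 convolution that mixes channels
    return depthwise + pointwise

# Hypothetical layer shape chosen only for illustration.
c_in, c_out, k = 64, 128, 5
std = standard_conv1d_params(c_in, c_out, k)
sep = separable_conv1d_params(c_in, c_out, k)
print(std, sep, round(std / sep, 2))  # 40960 8512 4.81
```

For this example layer the separable form needs roughly one fifth of the weights; the saving grows with kernel size and output width.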

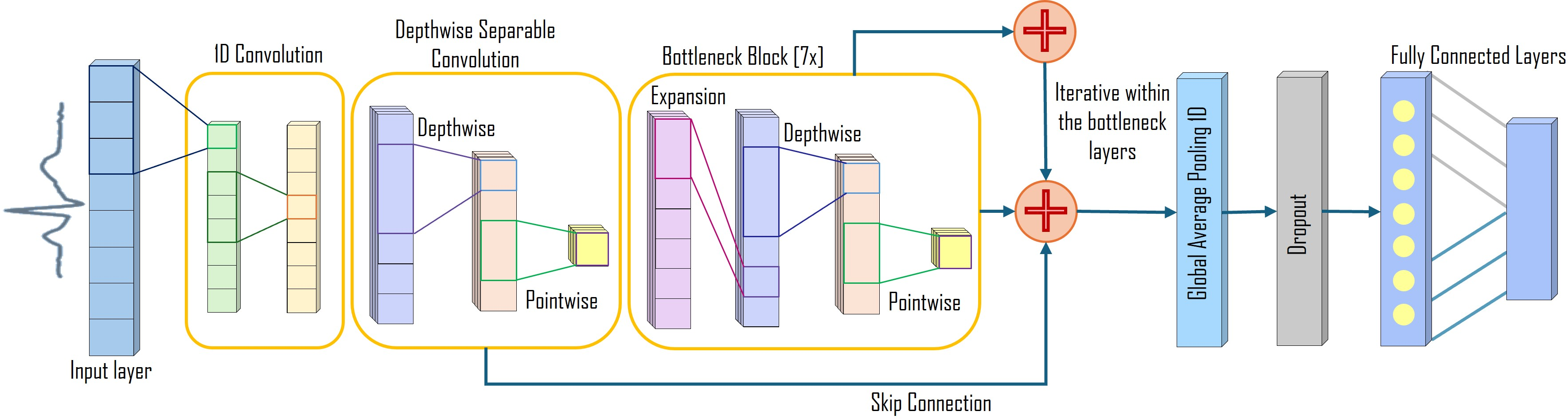

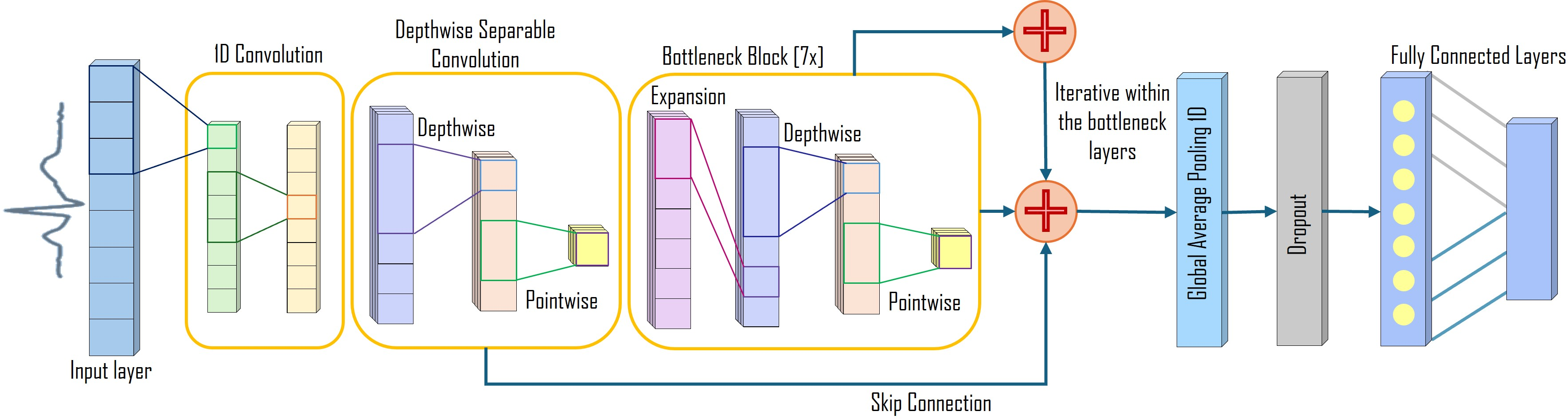

ArrhythmiNet V2 incorporates residual connections and bottleneck blocks, further enhancing feature extraction and convergence speed, while reducing the model size to 157.76 KB.

Figure 4: ArrhythmiNet V2 architecture showing residual connections and seven bottleneck blocks.
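A residual bottleneck block of the general kind described above can be sketched in NumPy as a forward pass. The layer ordering (pointwise reduce, depthwise filter, pointwise expand, skip connection), the ReLU activations, and all shapes are assumptions for illustration; the exact V2 block layout is not specified in this summary.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def pointwise(x, w):
    """1x1 convolution: x is (c_in, T), w is (c_out, c_in)."""
    return w @ x

def depthwise(x, w):
    """Per-channel filtering with 'same' padding: x is (c, T), w is (c, k), k odd."""
    # np.convolve flips the kernel, so reverse it to get cross-correlation.
    return np.stack([np.convolve(x[c], w[c][::-1], mode="same")
                     for c in range(x.shape[0])])

def residual_bottleneck(x, w_reduce, w_dw, w_expand):
    """Hypothetical block: narrow the channels, filter in time, widen, add the input."""
    h = relu(pointwise(x, w_reduce))   # (c_mid, T)
    h = relu(depthwise(h, w_dw))       # (c_mid, T)
    h = pointwise(h, w_expand)         # (c, T), same shape as x
    return relu(x + h)                 # skip connection

rng = np.random.default_rng(0)
c, c_mid, k, T = 16, 4, 3, 180        # illustrative sizes only
x = rng.normal(size=(c, T))
out = residual_bottleneck(
    x,
    w_reduce=rng.normal(size=(c_mid, c)) * 0.1,
    w_dw=rng.normal(size=(c_mid, k)) * 0.1,
    w_expand=rng.normal(size=(c, c_mid)) * 0.1,
)
print(out.shape)  # (16, 180)
```

Because the block's output is the input plus a learned correction, the identity path gives gradients a direct route through the network, which is the usual explanation for the faster convergence attributed to residual connections.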

Explainable AI Techniques

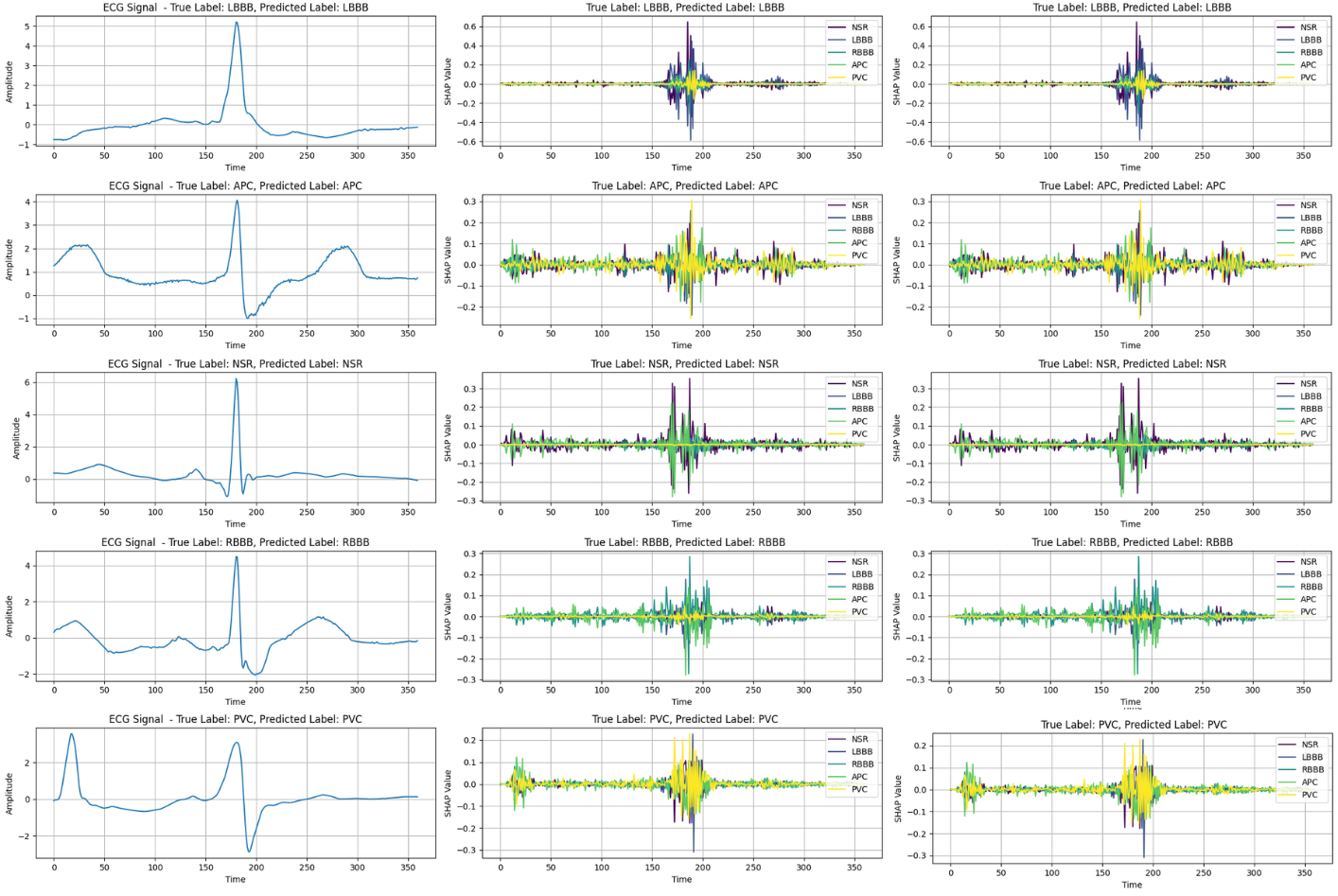

The models are paired with explainable AI (XAI) methods, SHAP (SHapley Additive exPlanations) and Grad-CAM (Gradient-weighted Class Activation Mapping), which provide both local and global interpretability of the predictions. These techniques show which parts of the ECG signal, such as the QRS complex and the T-wave, contribute most to the model's decision.

Figure 5: (Left) Randomly sampled original ECG signal. (Middle) SHAP output for ArrhythmiNet V1. (Right) SHAP output for ArrhythmiNet V2.

Figure 6: (Left) Grad-CAM output for ArrhythmiNet V1. (Right) Grad-CAM results for ArrhythmiNet V2.
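The core Grad-CAM computation for a 1D signal can be sketched as below, assuming the feature maps of the last convolutional layer and the gradients of the target class score with respect to them have already been extracted from the model. The function name `grad_cam_1d` and the toy arrays are hypothetical.

```python
import numpy as np

def grad_cam_1d(activations, gradients):
    """Grad-CAM for a 1D CNN.

    activations: (channels, T) feature maps of the last conv layer.
    gradients:   (channels, T) gradient of the class score w.r.t. those maps.
    Returns a length-T importance curve scaled to [0, 1].
    """
    weights = gradients.mean(axis=1)               # average gradient per channel
    cam = np.maximum(weights @ activations, 0.0)   # weighted sum, then ReLU
    peak = cam.max()
    return cam / peak if peak > 0 else cam

# Toy inputs (made up): channel 0 peaks mid-signal and supports the class,
# channel 1 is flat and counts against it.
activations = np.array([[0.0, 1.0, 2.0, 1.0, 0.0],
                        [1.0, 1.0, 1.0, 1.0, 1.0]])
gradients = np.array([[1.0, 1.0, 1.0, 1.0, 1.0],
                      [-0.5, -0.5, -0.5, -0.5, -0.5]])
cam = grad_cam_1d(activations, gradients)
print(cam)  # peaks at the middle time step
```

The resulting curve has the temporal resolution of the feature maps, so it is usually interpolated back to the input length before being overlaid on the ECG trace.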

Results

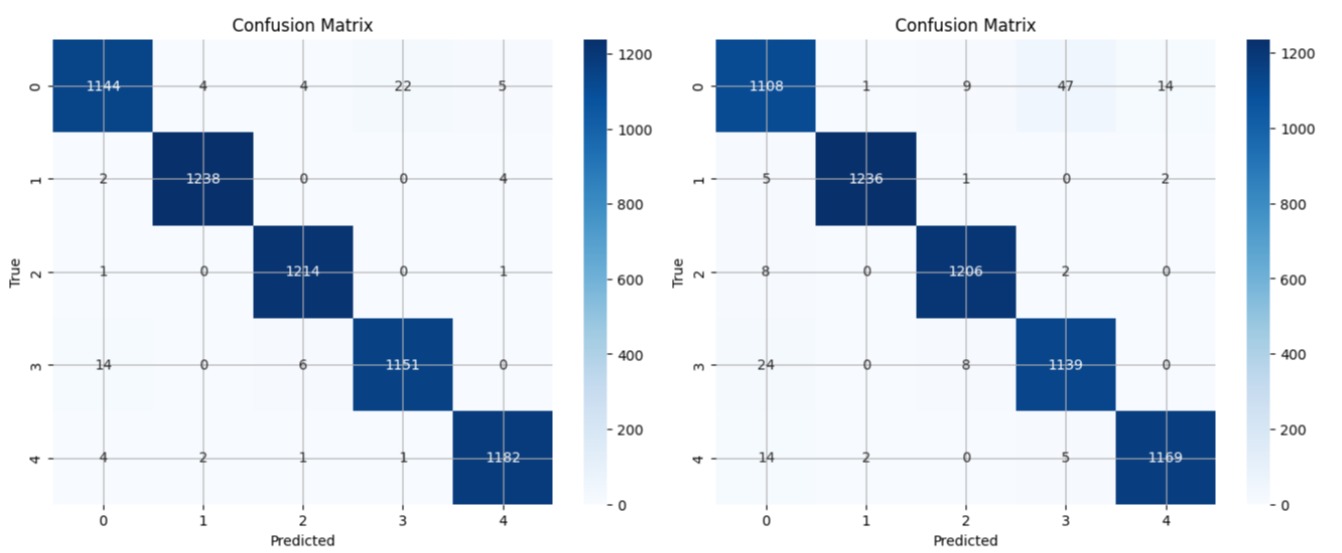

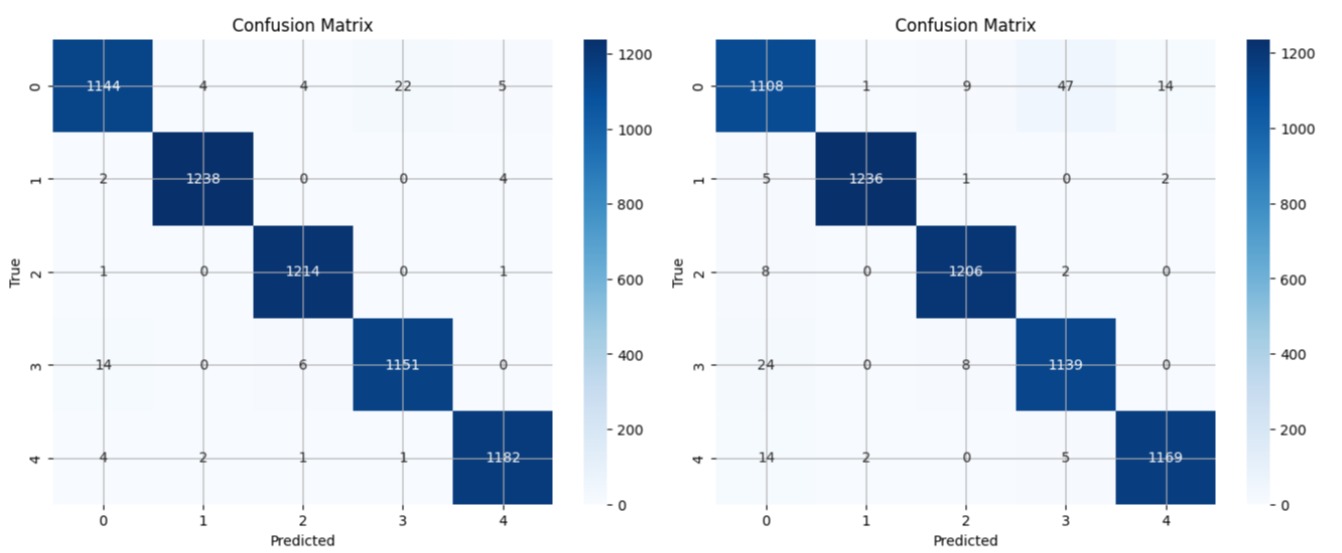

On the MIT-BIH dataset, ArrhythmiNet V1 achieved a classification accuracy of 99% and ArrhythmiNet V2 reached 98%. Both models showed high precision across all five classes. V1 slightly outperformed V2, possibly because of better feature localization.

Figure 7: (Left) Confusion matrix for ArrhythmiNet V1, showing minimal misclassifications across all classes. (Right) Confusion matrix for ArrhythmiNet V2, exhibiting slightly lower accuracy than the former but still yielding promising results.
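Accuracy and per-class precision of the kind reported above can be derived from a confusion matrix as in the following sketch. The matrix values are invented for illustration and are not the paper's results; the rows-are-true, columns-are-predicted layout is also an assumption.

```python
import numpy as np

def metrics_from_confusion(cm):
    """Overall accuracy plus per-class precision and recall.

    Assumes cm[i, j] counts beats of true class i predicted as class j,
    and that every class is predicted at least once (no zero columns).
    """
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)                    # correctly classified beats per class
    accuracy = tp.sum() / cm.sum()
    precision = tp / cm.sum(axis=0)     # column sums = predicted counts
    recall = tp / cm.sum(axis=1)        # row sums = true counts
    return accuracy, precision, recall

# Made-up 3-class matrix, not taken from the paper.
cm = [[48, 1, 1],
      [2, 47, 1],
      [0, 2, 48]]
accuracy, precision, recall = metrics_from_confusion(cm)
print(round(accuracy, 4), precision, recall)
```

Reporting per-class precision and recall alongside accuracy matters for arrhythmia data, since a model can score high overall accuracy while still missing a rare class.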

Conclusion

The study develops ECG arrhythmia classifiers that are accurate, resource-efficient, and interpretable. These lightweight CNN architectures are suited to integration into wearable and real-time monitoring systems, offering a feasible solution for remote healthcare monitoring and timely diagnosis of cardiac events. Future research may extend the models to multi-lead ECG configurations and validate them on additional real-world datasets.