- The paper introduces KG-HTC, a novel method that integrates knowledge graphs with LLMs to perform effective zero-shot hierarchical text classification without relying on annotated data.

- It employs subgraph retrieval, cosine similarity, and prompt engineering to enhance semantic context and accurately navigate complex label hierarchies.

- Experimental results demonstrate state-of-the-art performance, especially at deeper taxonomy levels, highlighting its robustness in handling large label spaces and imbalanced data.

Summary of "KG-HTC: Integrating Knowledge Graphs into LLMs for Effective Zero-shot Hierarchical Text Classification"

Hierarchical Text Classification (HTC) involves categorizing documents into labels organized in a hierarchy. Traditional methods often rely on supervised learning, which is hampered by data scarcity, large label spaces, and imbalanced class distributions. This paper introduces KG-HTC, a method that integrates knowledge graphs (KGs) with large language models (LLMs) to address these challenges in a zero-shot setting.

Methodology

The proposed KG-HTC method combines Retrieval-Augmented Generation (RAG) with KGs, enhancing LLMs by providing structured semantic context. This integration is designed to improve the LLM's understanding of hierarchical label semantics without relying on annotated data, thereby addressing the zero-shot classification challenge.

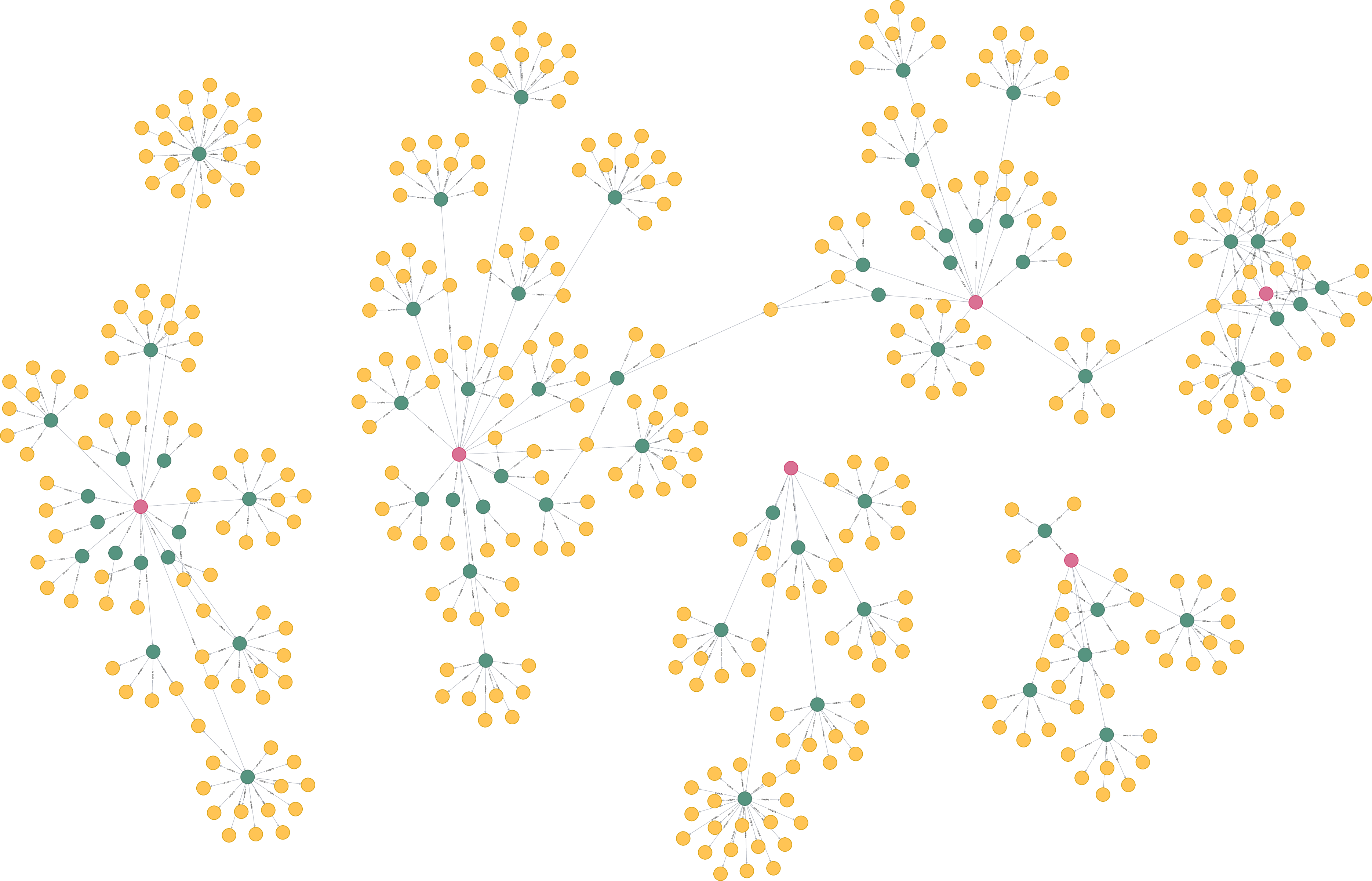

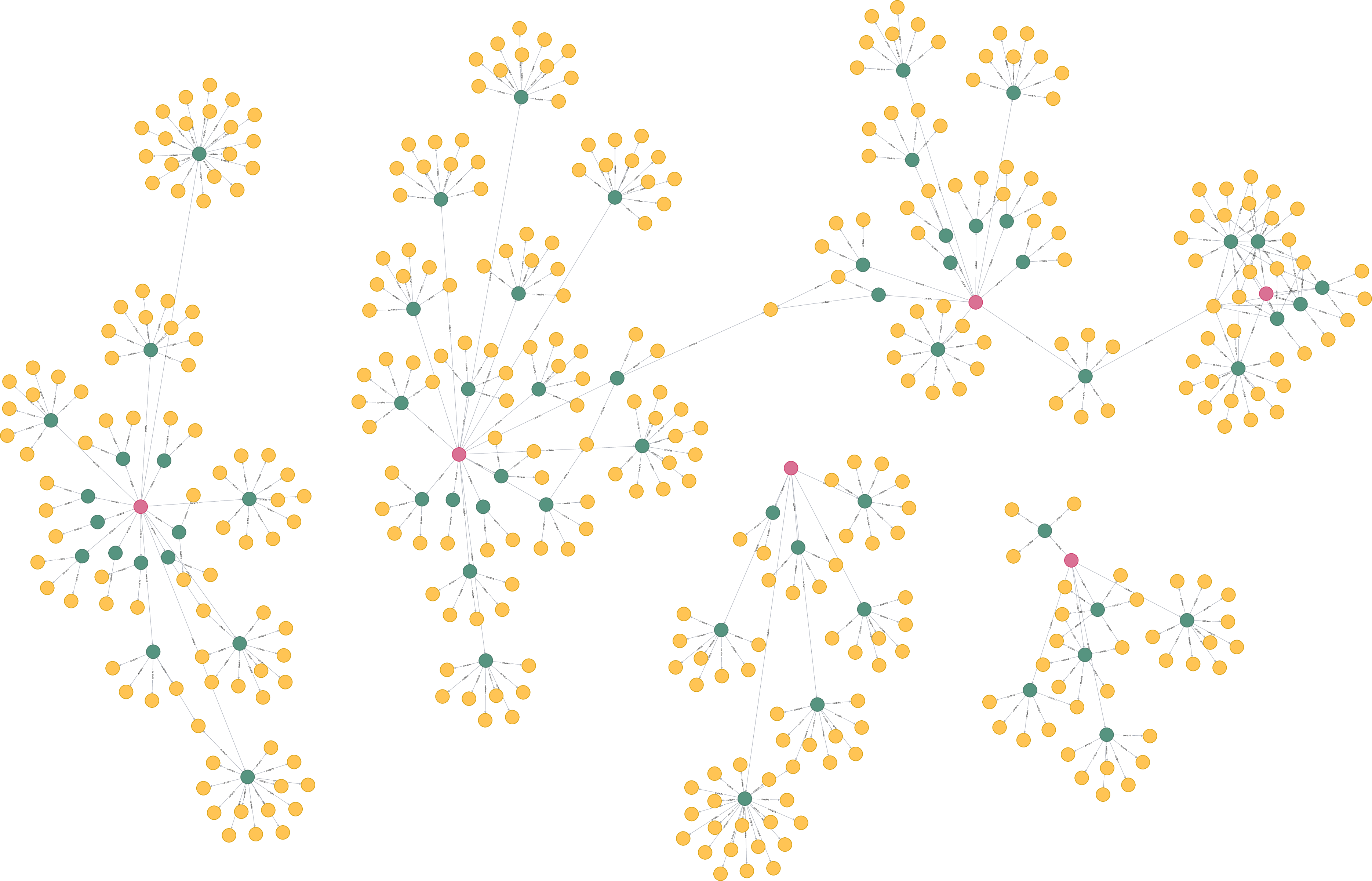

Figure 1: The overview pipeline of KG-HTC.

- Subgraph Retrieval and Similarity Calculation: The system retrieves relevant subgraphs from a KG related to the input text. This involves calculating cosine similarity between the input text's embedding and potential labels at each hierarchical level to ensure relevant retrievals.

- Pipeline Architecture: Labels are stored in both a graph database and a vector database. Semantic similarity search over the vector database selects candidate labels, the corresponding subgraph is retrieved from the graph, and an upward propagation algorithm traces each candidate back toward the root to enumerate the valid root-to-leaf label paths for the classification task.

- Prompt Engineering: The subgraph is transformed into a structured prompt. This prompt includes the input text and label paths represented in a serialized format, facilitating effective in-context learning within the LLM for hierarchical zero-shot text classification.
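The three steps above can be illustrated with a minimal sketch. It is not the authors' implementation: the toy taxonomy, the three-dimensional "embeddings", the `top_k` cutoff, and the helper names (`retrieve`, `path_to_root`, `build_prompt`) are all hypothetical, and plain dictionaries stand in for the graph and vector databases. The sketch ranks leaf-level labels by cosine similarity to the text embedding, walks each candidate upward to the root to obtain its label path, and serializes the paths into a prompt.

```python
import math

# Hypothetical toy taxonomy: child label -> parent label (None marks a top-level label).
# In a real system this would live in a graph database.
PARENT = {
    "Electronics": None,
    "Toys": None,
    "Headphones": "Electronics",
    "Cameras": "Electronics",
    "Board Games": "Toys",
}

# Hypothetical stand-in embeddings; a real system would query a vector database
# populated by an embedding model.
LABEL_VECS = {
    "Electronics": [0.9, 0.1, 0.0],
    "Toys": [0.0, 0.2, 0.9],
    "Headphones": [0.8, 0.6, 0.0],
    "Cameras": [0.9, -0.2, 0.1],
    "Board Games": [0.1, 0.1, 0.9],
}


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def path_to_root(label):
    """Upward propagation: follow parent links, then return the path root-first."""
    path = [label]
    while PARENT[path[-1]] is not None:
        path.append(PARENT[path[-1]])
    return path[::-1]


def retrieve(text_vec, level, top_k):
    """Return the top_k labels at the given depth (0 = top level), most similar first."""
    candidates = [l for l in PARENT if len(path_to_root(l)) - 1 == level]
    ranked = sorted(
        candidates, key=lambda l: cosine(text_vec, LABEL_VECS[l]), reverse=True
    )
    return ranked[:top_k]


def build_prompt(text, paths):
    """Serialize candidate label paths and the input text into one prompt string."""
    serialized = "\n".join(" -> ".join(p) for p in paths)
    return (
        "Classify the text into exactly one of the label paths below.\n"
        f"Label paths:\n{serialized}\n"
        f"Text: {text}\n"
        "Answer:"
    )


if __name__ == "__main__":
    text = "Great noise cancellation and comfortable ear cups."
    text_vec = [0.7, 0.7, 0.0]  # hypothetical embedding of the text
    leaves = retrieve(text_vec, level=1, top_k=2)
    paths = [path_to_root(leaf) for leaf in leaves]
    print(build_prompt(text, paths))
```

Restricting the prompt to a handful of retrieved paths, rather than listing the full taxonomy, is what keeps the prompt short when the label space is large; the resulting string would then be sent to an LLM of choice.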

Figure 2: Visualization of the knowledge graph constructed from the taxonomy in the Amazon Product Review dataset.

Experimental Results

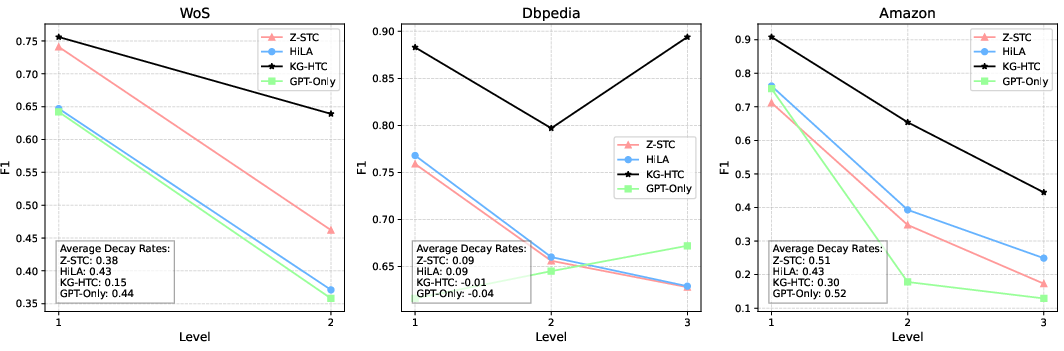

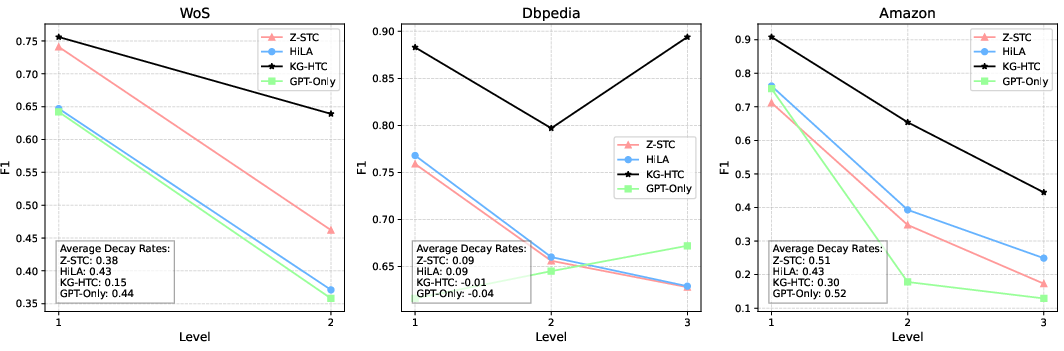

KG-HTC was evaluated on three public datasets (WoS, DBpedia, and Amazon Product Reviews) and achieved state-of-the-art performance in zero-shot scenarios. The approach significantly outperformed the baselines and remained robust at deeper levels of the hierarchy, which are harder to classify because of large label spaces and long-tailed distributions.

Implications and Future Directions

The integration of KGs with LLMs demonstrated by KG-HTC is a significant advance in addressing the limitations of HTC in zero-shot settings. Subgraph retrieval and structured prompting let the model cope with very large label spaces while mitigating the performance degradation commonly seen at deeper hierarchical levels.

Figure 3: As the taxonomy deepens, KG-HTC exhibits a slower performance degradation on the WoS and Amazon datasets.

The study highlights the promise of KG-enhanced LLMs to improve generalization in taxonomies without additional labeled data, providing a foundation for further exploration in zero-shot and few-shot learning contexts. Future research may focus on dynamically extending and refining knowledge graph representations and exploring broader applications across different domains where hierarchical classification is crucial.