- The paper introduces a neural network that predicts IMU biases, allowing visual-inertial odometry to retain the symmetry properties of invariant filtering.

- The method combines Lie group theory with an invariant Kalman filter formulation and keeps the biases out of the filter state, decoupling bias effects from state estimation.

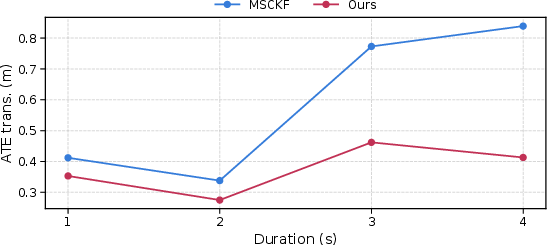

- Evaluation against established methods such as MSCKF on standard datasets shows improved robustness when visual features are lost.

Learned IMU Bias Prediction for Invariant Visual Inertial Odometry

Introduction

The rise of autonomous mobile robots has made accurate state estimation a fundamental requirement for reliable operation, particularly in unfamiliar environments. These systems commonly rely on visual-inertial odometry (VIO), which estimates position, orientation, and velocity from camera and inertial measurement unit (IMU) data. A critical challenge is compensating for the biases inherent in IMU readings: left uncorrected, they are integrated along with the measurements and make the state estimate drift over time. A common remedy is to add the biases to the filter state and estimate them jointly; however, this breaks the symmetry that invariant filtering relies on and weakens its robustness. This paper designs a neural network that predicts IMU biases from historical measurements, enabling an invariant VIO system that does not include the biases in the filter state.
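To make the drift concrete, here is a small worked example that is not taken from the paper: double-integrating a constant, uncorrected accelerometer bias b yields a position error of ½·b·t², so an illustrative bias of 0.05 m/s² already produces 2.5 m of error after 10 s. The function name and the step size `dt` are hypothetical choices for illustration.

```python
def position_drift_from_bias(bias, duration, dt=0.005):
    """Position error (m) after `duration` seconds caused by a constant,
    uncorrected accelerometer bias `bias` (m/s^2), integrated step by step.
    Illustrative only; `dt` is an assumed IMU sampling period (s)."""
    steps = int(round(duration / dt))
    vel_err, pos_err = 0.0, 0.0
    for _ in range(steps):
        # Exact update for a constant acceleration held over one step.
        pos_err += vel_err * dt + 0.5 * bias * dt**2
        vel_err += bias * dt
    return pos_err


print(round(position_drift_from_bias(0.05, 10.0), 6))  # 2.5
```

Because the error grows quadratically with time, even a small bias dominates the position estimate during long stretches without visual corrections.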

IMU Bias Prediction

Visual and inertial sensors play complementary roles in VIO. Cameras provide estimates of pose displacement but are sensitive to environmental factors such as lighting changes and motion blur. IMUs, conversely, provide high-frequency data unaffected by these issues, yet their estimates drift because of measurement biases. This work uses a neural network to predict IMU biases from historical IMU data instead of estimating them inside the filter. This avoids the limitations of modeling the bias as a stochastic process (typically a random walk) and preserves filter consistency through an invariant Kalman filter formulation.
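The idea of predicting the bias outside the filter can be sketched as follows. This is a minimal sketch under stated assumptions, not the paper's implementation: `predict_bias` is a hypothetical stand-in for the learned network (any callable mapping a window of past gyroscope/accelerometer samples to gyroscope and accelerometer bias vectors), the 200-sample window is arbitrary, and propagation uses standard discrete IMU kinematics with gravity along −z. The propagated state holds only orientation, velocity, and position; the predicted biases are subtracted from the raw measurements before propagation.

```python
from collections import deque

import numpy as np

GRAVITY = np.array([0.0, 0.0, -9.81])  # assumed world-frame gravity (m/s^2)


def skew(w):
    """3-vector -> skew-symmetric matrix, so skew(a) @ b == np.cross(a, b)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])


def so3_exp(phi):
    """Rodrigues' formula: rotation vector -> rotation matrix."""
    theta = np.linalg.norm(phi)
    K = skew(phi)
    if theta < 1e-8:
        return np.eye(3) + K
    return (np.eye(3) + np.sin(theta) / theta * K
            + (1.0 - np.cos(theta)) / theta**2 * K @ K)


class BiasCorrectedPropagator:
    """Propagates (R, v, p) using externally predicted IMU biases.

    `predict_bias` (hypothetical) receives an (n, 6) array of past
    [gyro, accel] samples and returns (gyro_bias, accel_bias).
    The biases never enter the propagated state.
    """

    def __init__(self, predict_bias, window=200):
        self.predict_bias = predict_bias
        self.history = deque(maxlen=window)  # sliding window of raw samples

    def step(self, R, v, p, gyro, accel, dt):
        self.history.append(np.concatenate([gyro, accel]))
        b_g, b_a = self.predict_bias(np.array(self.history))
        omega = gyro - b_g                     # bias-corrected angular rate
        a_world = R @ (accel - b_a) + GRAVITY  # bias-corrected acceleration
        p_new = p + v * dt + 0.5 * a_world * dt**2
        v_new = v + a_world * dt
        R_new = R @ so3_exp(omega * dt)
        return R_new, v_new, p_new


# Usage: a stationary, level IMU whose readings carry constant biases.
true_bg = np.array([0.01, 0.0, 0.0])
true_ba = np.array([0.05, 0.0, 0.0])
accel = np.array([0.0, 0.0, 9.81]) + true_ba  # specific force at rest + bias
prop = BiasCorrectedPropagator(lambda hist: (true_bg, true_ba))
R, v, p = np.eye(3), np.zeros(3), np.zeros(3)
for _ in range(100):
    R, v, p = prop.step(R, v, p, true_bg, accel, dt=0.01)
print(np.round(p, 6))  # [0. 0. 0.]: the predicted biases cancel the true ones
```

With a perfect bias prediction the stationary IMU stays at rest; replacing the predictor with one that returns zeros makes the same input drift.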

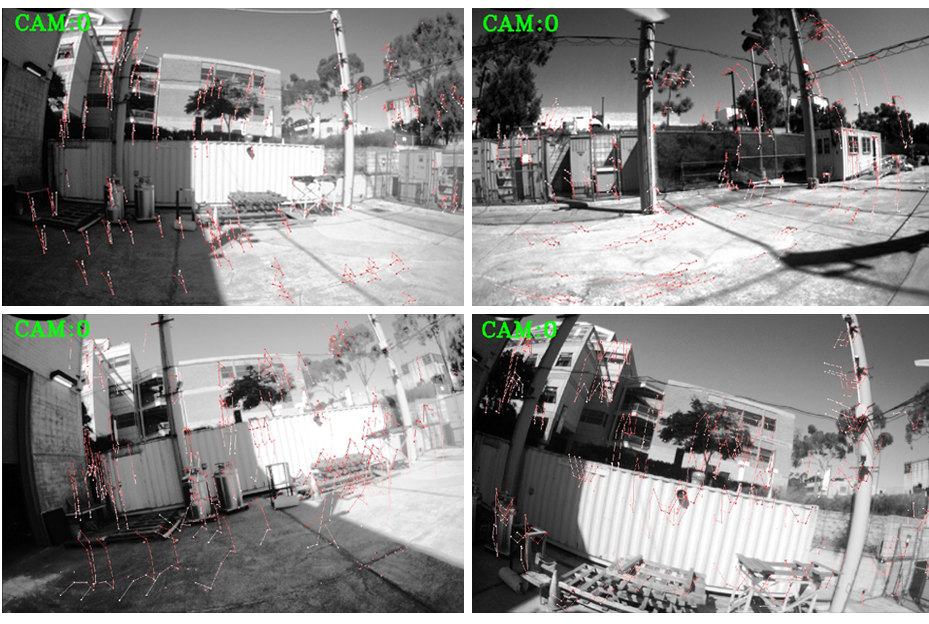

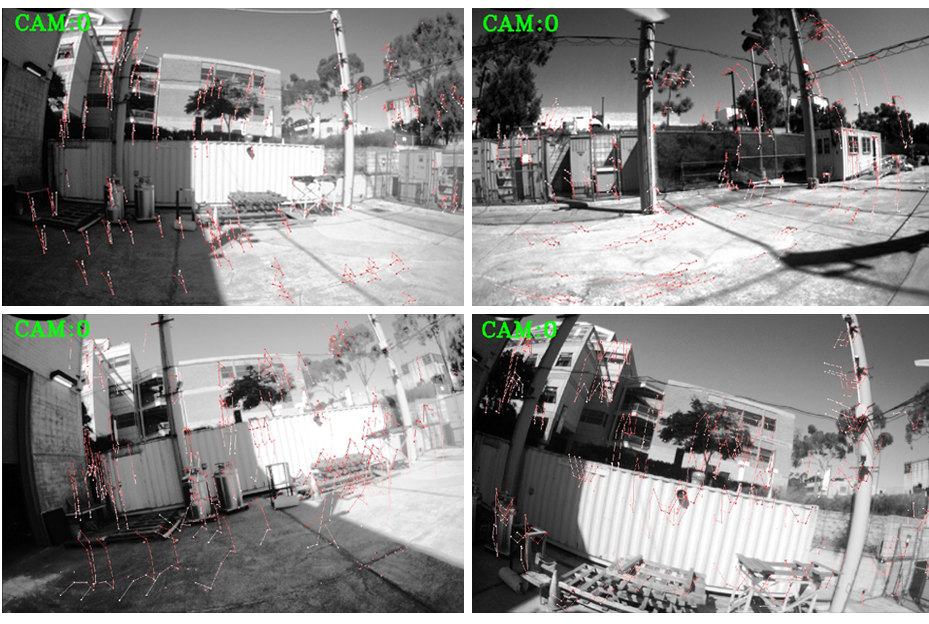

Figure 1: Keypoints and trajectory error during visual information loss of varying duration.

Preliminaries

A working knowledge of Lie group theory underpins the methods in this paper. The robot's state, comprising orientation, velocity, and position, is an element of the matrix Lie group SE_2(3). Operators such as the exponential map and the adjoint map relate the group to its Lie algebra and describe how errors transform under group operations. These operators are central to invariant error dynamics: for group-affine systems such as bias-free IMU kinematics on SE_2(3), the invariant error evolves independently of the state estimate, so the linearized error propagation does not depend on a possibly inaccurate estimate, an advantage over conventional EKFs.
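The SE_2(3) operators mentioned above can be written out concretely. The sketch below assumes the common 5×5 matrix embedding X = [[R, v, p], [0, 1, 0], [0, 0, 1]] and orders Lie algebra vectors as ξ = (φ, ν, ρ) for rotation, velocity, and position; these conventions are assumptions and the paper may define them differently. It implements the hat map, the closed-form exponential (via the SO(3) left Jacobian), and the 9×9 adjoint, which satisfies X·Exp(ξ)·X⁻¹ = Exp(Ad_X ξ).

```python
import numpy as np


def skew(w):
    """3-vector -> skew-symmetric matrix, so skew(a) @ b == np.cross(a, b)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])


def so3_exp(phi):
    """Rodrigues' formula: rotation vector -> rotation matrix."""
    theta = np.linalg.norm(phi)
    K = skew(phi)
    if theta < 1e-8:
        return np.eye(3) + K
    return (np.eye(3) + np.sin(theta) / theta * K
            + (1.0 - np.cos(theta)) / theta**2 * K @ K)


def so3_left_jacobian(phi):
    """Left Jacobian of SO(3); maps nu, rho into the group's v, p columns."""
    theta = np.linalg.norm(phi)
    K = skew(phi)
    if theta < 1e-8:
        return np.eye(3) + 0.5 * K
    return (np.eye(3) + (1.0 - np.cos(theta)) / theta**2 * K
            + (theta - np.sin(theta)) / theta**3 * K @ K)


def se23_hat(xi):
    """xi = (phi, nu, rho) in R^9 -> 5x5 element of the Lie algebra se_2(3)."""
    A = np.zeros((5, 5))
    A[:3, :3] = skew(xi[0:3])
    A[:3, 3] = xi[3:6]
    A[:3, 4] = xi[6:9]
    return A


def se23_exp(xi):
    """Closed-form exponential map se_2(3) -> SE_2(3)."""
    J = so3_left_jacobian(xi[0:3])
    X = np.eye(5)
    X[:3, :3] = so3_exp(xi[0:3])
    X[:3, 3] = J @ xi[3:6]
    X[:3, 4] = J @ xi[6:9]
    return X


def se23_adjoint(X):
    """9x9 adjoint matrix of X for the (phi, nu, rho) ordering."""
    R, v, p = X[:3, :3], X[:3, 3], X[:3, 4]
    Ad = np.zeros((9, 9))
    Ad[0:3, 0:3] = R
    Ad[3:6, 0:3] = skew(v) @ R
    Ad[3:6, 3:6] = R
    Ad[6:9, 0:3] = skew(p) @ R
    Ad[6:9, 6:9] = R
    return Ad
```

The adjoint identity is what allows a perturbation applied on one side of X (a left-invariant error) to be rewritten as a perturbation on the other side (a right-invariant error).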

Learning-Based Methods

Recent learning-based odometry methods such as IONet, RoNIN, and TLIO specialize primarily in pedestrian motion estimation. These techniques often rely on exact pose information to transform IMU measurements, which limits their applicability to real-time robotic systems, where the pose must itself be estimated online. This paper instead proposes a network that predicts IMU biases directly and uses an error measured in the Lie algebra as its training loss, enabling trajectory prediction on unseen robot paths.
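One way to realize a Lie algebra error as a training loss is to map the discrepancy between a predicted and a ground-truth SE_2(3) state back to the Lie algebra with the logarithm and penalize its squared norm. The sketch below assumes that form, with the error defined as X_gt⁻¹·X_pred (the opposite ordering is equally possible) and an optional per-component weight vector. The paper's exact loss, error convention, and weighting are not given here, so `lie_algebra_loss` and its arguments are hypothetical. The SO(3) logarithm used is accurate away from rotations of π.

```python
import numpy as np


def skew(w):
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])


def so3_log(R):
    """Rotation matrix -> rotation vector (accurate away from theta = pi)."""
    cos_theta = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    theta = np.arccos(cos_theta)
    w = np.array([R[2, 1] - R[1, 2], R[0, 2] - R[2, 0], R[1, 0] - R[0, 1]])
    if theta < 1e-6:
        return 0.5 * w  # first-order approximation near the identity
    return theta / (2.0 * np.sin(theta)) * w


def so3_left_jacobian_inv(phi):
    """Inverse left Jacobian of SO(3)."""
    theta = np.linalg.norm(phi)
    K = skew(phi)
    if theta < 1e-4:
        return np.eye(3) - 0.5 * K + K @ K / 12.0  # series expansion
    coeff = 1.0 / theta**2 - (1.0 + np.cos(theta)) / (2.0 * theta * np.sin(theta))
    return np.eye(3) - 0.5 * K + coeff * K @ K


def se23_inv(X):
    """Inverse of a 5x5 SE_2(3) element [[R, v, p], [0, 1, 0], [0, 0, 1]]."""
    R, v, p = X[:3, :3], X[:3, 3], X[:3, 4]
    Xi = np.eye(5)
    Xi[:3, :3] = R.T
    Xi[:3, 3] = -R.T @ v
    Xi[:3, 4] = -R.T @ p
    return Xi


def se23_log(X):
    """SE_2(3) element -> xi = (phi, nu, rho) in R^9."""
    phi = so3_log(X[:3, :3])
    J_inv = so3_left_jacobian_inv(phi)
    return np.concatenate([phi, J_inv @ X[:3, 3], J_inv @ X[:3, 4]])


def lie_algebra_loss(X_pred, X_gt, weights=None):
    """Hypothetical loss: weighted squared norm of Log(X_gt^-1 X_pred)."""
    xi = se23_log(se23_inv(X_gt) @ X_pred)
    if weights is None:
        weights = np.ones(9)
    return float(np.sum(np.asarray(weights) * xi**2))
```

In one plausible setup, X_pred is obtained by integrating an IMU window with the predicted biases, so the loss trains the bias predictor end to end; that integration step and the autodiff framework it would need are omitted here.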

Evaluation

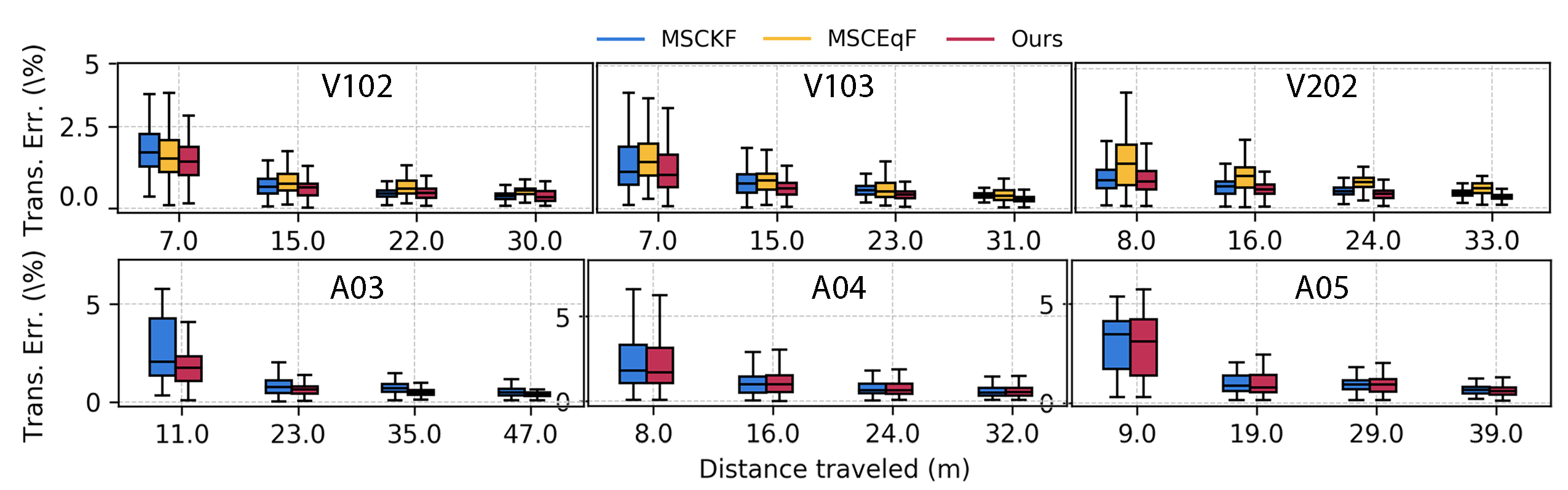

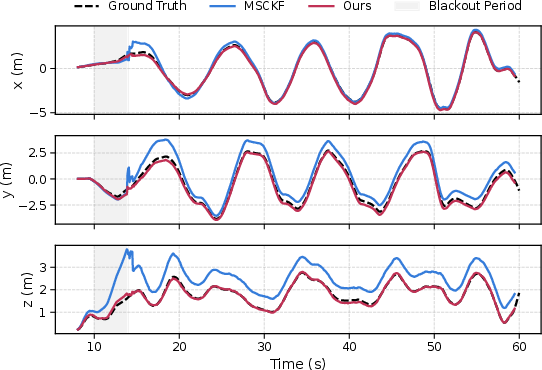

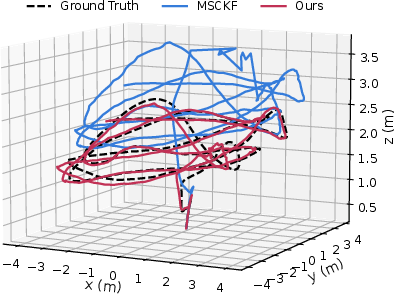

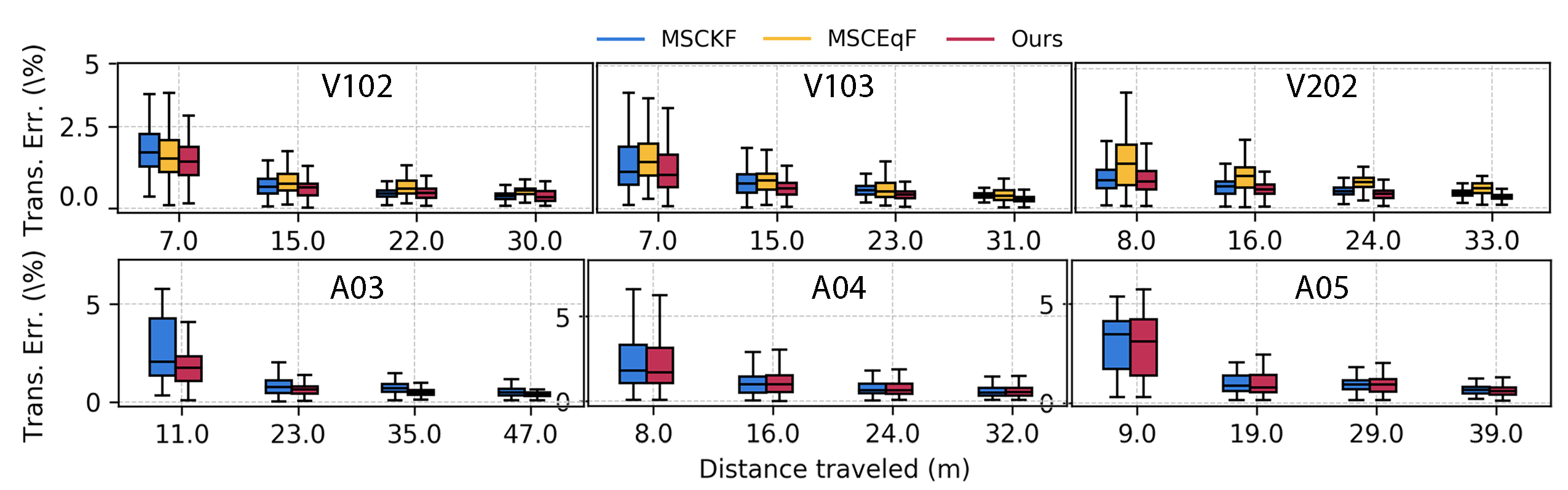

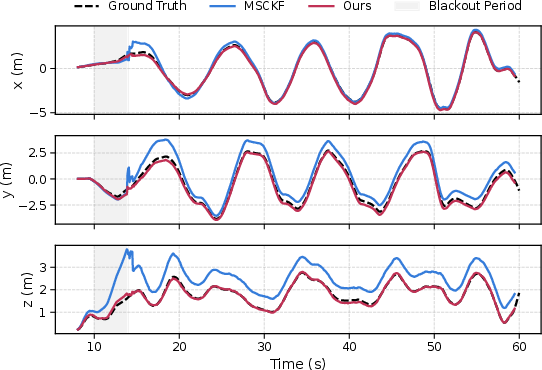

The paper evaluates the method against established VIO methods such as MSCKF and MSCEqF on publicly available datasets and the authors' own datasets. It emphasizes the advantage of the approach both under normal conditions and in challenging scenarios with visual feature blackouts. To further assess its utility, the network is adapted to predict position increments directly and compared with established inertial odometry systems (e.g., IMO) under inertial-only conditions. The results indicate that IMU bias prediction improves robustness and generalization.
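Translation error statistics such as those reported in the evaluation are commonly computed as an absolute trajectory error (ATE) RMSE after aligning the estimated trajectory to ground truth. The sketch below is an assumption about the protocol, not a description of the paper's: it performs a rigid (rotation plus translation, no scale) Umeyama/Kabsch alignment and then reports the RMSE. Many VIO benchmarks instead use a 4-DoF (yaw plus translation) alignment, since roll and pitch are observable from gravity.

```python
import numpy as np


def translation_rmse(p_est, p_gt):
    """RMSE (m) of per-sample position error for (N, 3) arrays that are
    already aligned in time and reference frame."""
    err = np.linalg.norm(np.asarray(p_est, float) - np.asarray(p_gt, float), axis=1)
    return float(np.sqrt(np.mean(err**2)))


def align_rigid(p_est, p_gt):
    """R, t minimizing sum ||p_gt_i - (R p_est_i + t)||^2
    (Umeyama's method without scale)."""
    p_est = np.asarray(p_est, float)
    p_gt = np.asarray(p_gt, float)
    mu_e, mu_g = p_est.mean(axis=0), p_gt.mean(axis=0)
    H = (p_gt - mu_g).T @ (p_est - mu_e) / len(p_est)  # cross-covariance
    U, _, Vt = np.linalg.svd(H)
    D = np.eye(3)
    D[2, 2] = 1.0 if np.linalg.det(U @ Vt) >= 0.0 else -1.0  # avoid reflections
    R = U @ D @ Vt
    t = mu_g - R @ mu_e
    return R, t


def ate_rmse(p_est, p_gt):
    """Align the estimate to ground truth, then report the translation RMSE."""
    R, t = align_rigid(p_est, p_gt)
    aligned = np.asarray(p_est, float) @ R.T + t
    return translation_rmse(aligned, p_gt)
```

Drift during a visual blackout could be measured the same way by restricting the arrays to the samples inside the blackout interval.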

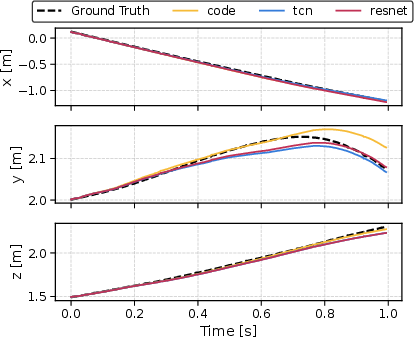

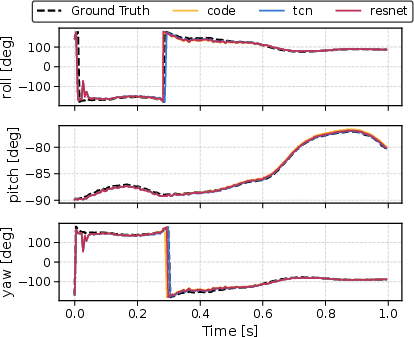

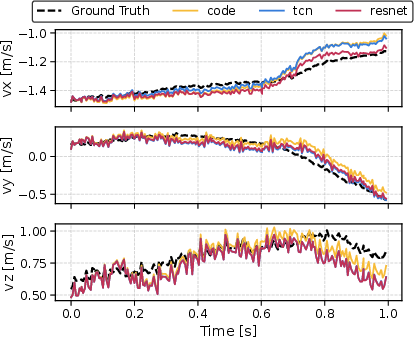

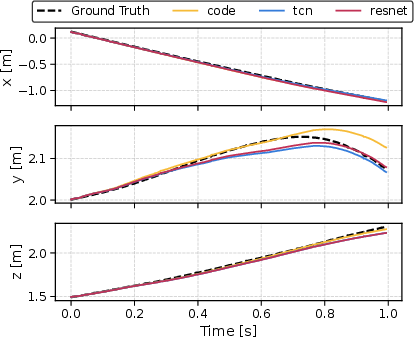

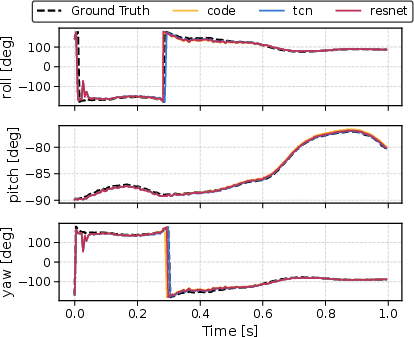

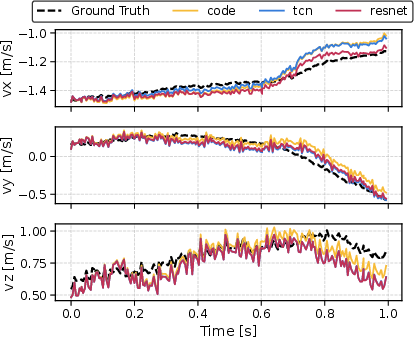

Figure 2: Evaluation of bias prediction, compared against TCN and ResNet models.

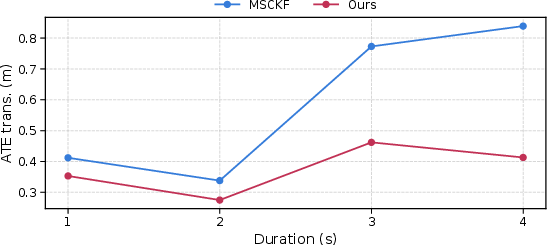

Figure 3: Translation error statistics on the EuRoC and Aerodrome datasets.

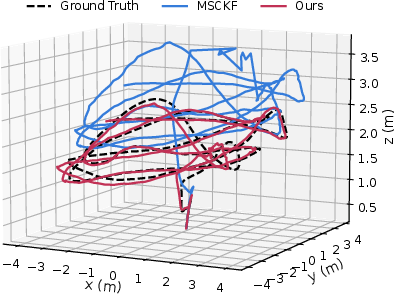

Figure 4: Estimated position trajectories after the onset of visual failure.

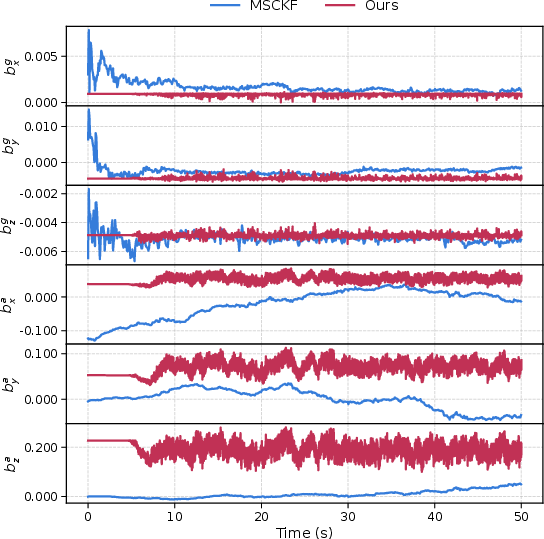

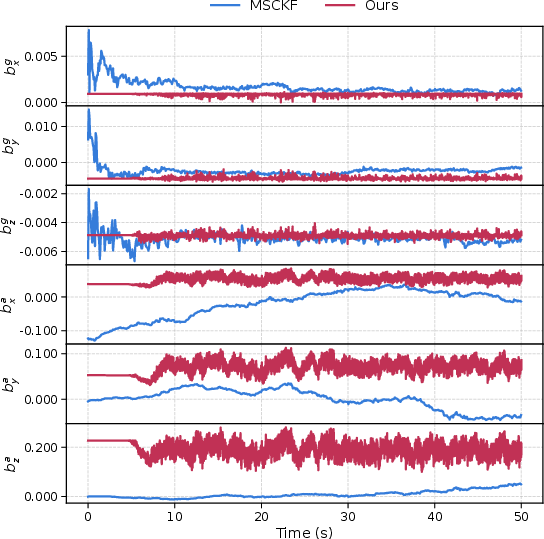

Figure 5: Comparison of IMU bias estimates over time between MSCKF and the proposed network-based inference.

Conclusion

This work demonstrates the viability of learned IMU bias prediction for improving VIO through invariant filtering, with particular gains in robustness when visual input is scarce. Future work includes improving measurement uncertainty estimation, extending the network inputs to include image data, and applying these methods in diverse real-world settings.