- The paper reveals a transparency deficit in TikTok's search recommendations, showing how algorithmic opacity obscures comment-driven query generation.

- It outlines computational challenges such as detecting coordinated manipulation and identifying context-specific harms in large-scale data.

- The analysis underscores regulatory gaps under the Digital Services Act and calls for interdisciplinary research to enhance platform accountability.

TikTok Search Recommendations: Governance and Computational Challenges

Introduction

TikTok's emergence as a search engine changes how content is discovered on social media: the platform now suggests search queries to viewers, creating a new vector for user engagement. The paper "TikTok Search Recommendations: Governance and Research Challenges" (2505.08385) examines the governance implications, computational challenges, and empirical knowledge gaps raised by TikTok's search recommendation feature. The authors foreground the lack of transparency about how recommendations are generated and moderated, situating the topic at the intersection of platform accountability, recommender system opacity, and the sociotechnical mediation of online content.

Search Recommendation Functionality and Social Dynamics

TikTok's search recommendations are preformulated search terms displayed below videos and in comment sections, prompting viewers to run a follow-up query after watching. The platform asserts that these recommendations are derived solely from comments and from subsequent user search activity; its documentation thus positions TikTok as an aggregator rather than a generator of the terms. This stance, combined with limited agency for users and creators, produces a power asymmetry: TikTok exerts substantial control over which queries appear while its mechanisms remain opaque.
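TikTok does not document the aggregation it describes, so the following is only a toy illustration, not the platform's method. Assuming a recommendation were nothing more than a word pair that recurs across a video's comments, a minimal Python sketch could look like this; the function `candidate_queries`, the stopword list, and the `min_count` threshold are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical stopword list; a real system would need a curated, language-specific one.
STOPWORDS = {"a", "an", "the", "is", "are", "was", "this", "that", "to", "of", "and", "i", "so"}


def candidate_queries(comments, n=2, top_k=3, min_count=2):
    """Toy aggregator (not TikTok's pipeline): return frequent word n-grams.

    Each n-gram is counted at most once per comment, and only n-grams that
    appear in at least `min_count` comments are returned.
    """
    counts = Counter()
    for text in comments:
        tokens = [t for t in re.findall(r"[a-z']+", text.lower()) if t not in STOPWORDS]
        # Use a set so one comment repeating a phrase still counts once.
        grams = {" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}
        counts.update(grams)
    return [gram for gram, count in counts.most_common(top_k) if count >= min_count]


comments = [
    "Who is her boyfriend now?",
    "her boyfriend looks familiar",
    "love this makeup look",
    "is that her boyfriend?",
]
print(candidate_queries(comments))  # ['her boyfriend']
```

Even this naive aggregator is not neutral: the n-gram length, stopword list, and threshold decide which phrases surface, and speculative comments that repeat a phrase (here, about a creator's partner) become a suggested query without any editorial judgment.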

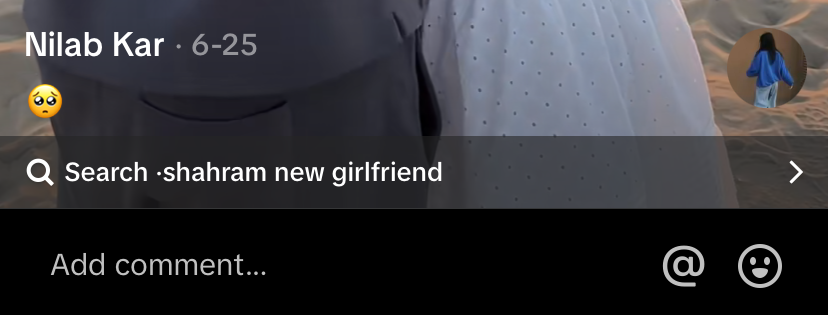

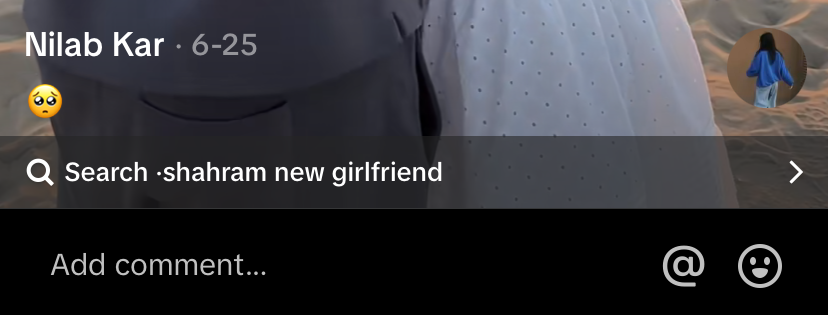

Figure 1: Search recommendation appearing on 18 July 2024 on Nilab Kar’s TikTok video originally shared 25 June 2024.

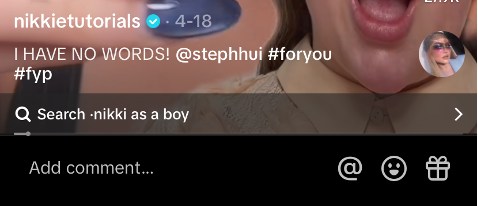

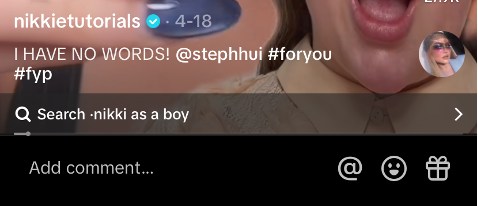

Qualitative observations of Dutch influencer content reveal dynamic, context-sensitive recommendation behaviors. Recommendations regularly amplify personal speculation—queries about relationships or identity frequently surface regardless of explicit content, including instances of transphobic or stereotyping queries (e.g., "Nikkie as a boy").

Figure 2: Search recommendation appearing on 17 July 2024 on NikkieTutorials's TikTok video originally shared 18 April 2024.

Recommendations thereby invert the conventional affordances of search engines: instead of users formulating open-ended queries, the platform proposes the queries itself and so sets their boundaries. Because the suggestions are drawn from comment activity, this indexing is susceptible both to social manipulation and to the perpetuation of harmful narratives through escalating speculation and commercial amplification.

Governance and Regulatory Problematics

The governance challenges are multifaceted:

- Transparency Deficit: TikTok does not comprehensively document how recommendations are generated, offering only a vague statement that AI aggregates user comments and searches.

- Moderation Ambiguity: It is unclear how search recommendations are moderated and reported, particularly when the terms are contextually sensitive or harmful. Gaps in moderation enforcement have been empirically documented, especially for identity-centric recommendations.

- Limited User/Creator Recourse: Reporting avenues are inconsistent. Recommendations in comment sections can be reported, but those below videos cannot; creators are neither notified of nor able to influence the recommendations attached to their videos. Comment filters, the existing mitigation tool, are poorly documented.

- Potential Regulatory Non-Compliance: The Digital Services Act (DSA) requires transparency about recommender systems, prohibits manipulative interface design (dark patterns), and mandates notice mechanisms for illegal content. TikTok’s current design is in tension with Article 27(1) (recommender system transparency), Article 25(1) (dark pattern prohibition), and Article 16(1) (notice and action mechanisms), potentially exposing the platform to compliance risk.

The platform's selective opacity and procedural ambiguity exacerbate governance tensions, especially as search recommendations increasingly enable speculation, misinformation, and covert commercial practices.

Computational Research Agenda

The paper outlines a computational research agenda with four strands:

- Recommendation Generation Attribution: Determining which comment features (topic, sentiment, engagement, recency, user identity) most influence whether a recommendation emerges is difficult because the system is opaque, and limited data access impedes causal inference.

- Detection of Coordinated Manipulation: Coordinated comment campaigns can trigger recommendations, even across videos. Detecting such behavior and assessing how vulnerable the system is to algorithmic weaponization requires robust semantic and temporal analysis of large-scale datasets.

- Contextual Harm Identification: Whether a recommendation is harmful often depends on the context in which it appears, so identifying harm requires advanced computational moderation approaches that integrate external knowledge. Definitions of harm are also fluid, normative, and shaped by the applicable regulation.

- Topic Classification and Transparency: Product-centric and identity-centric queries complicate transparency around both advertising and organic engagement. Topic modeling and classification methods are needed to trace why recommendations appear and to expose mechanisms of commercial manipulation.
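The coordinated-manipulation strand can be made concrete with a simple baseline. The sketch below assumes comment data (account, video, text, timestamp) has already been collected, which the paper notes is hard to obtain, and flags any normalized comment text posted by at least `min_accounts` distinct accounts within `window_seconds`, possibly across several videos. The `Comment` type, the function names, and the thresholds are hypothetical; exact matching after normalization stands in for the semantic similarity (e.g., sentence embeddings) that a robust detector would need.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Comment:
    account: str
    video_id: str
    text: str
    timestamp: float  # seconds since epoch


def normalize(text: str) -> str:
    """Lowercase and drop punctuation so trivial variants ("Who is...??") match."""
    return " ".join(re.findall(r"[a-z0-9']+", text.lower()))


def flag_coordinated(comments, window_seconds=3600, min_accounts=3):
    """Map each suspicious normalized text to the set of videos it appeared on.

    A text is suspicious if at least `min_accounts` distinct accounts posted it
    within some time window of length `window_seconds` (hypothetical thresholds).
    """
    by_text = defaultdict(list)
    for c in comments:
        by_text[normalize(c.text)].append(c)

    flagged = {}
    for text, group in by_text.items():
        group.sort(key=lambda c: c.timestamp)
        start = 0
        for end in range(len(group)):
            # Advance the window's left edge until it spans at most window_seconds.
            while group[end].timestamp - group[start].timestamp > window_seconds:
                start += 1
            window = group[start:end + 1]
            if len({c.account for c in window}) >= min_accounts:
                flagged.setdefault(text, set()).update(c.video_id for c in window)
    return flagged


comments = [
    Comment("a1", "v1", "Who is her boyfriend??", 0),
    Comment("a2", "v2", "who is her boyfriend", 120),
    Comment("a3", "v1", "WHO IS HER BOYFRIEND!", 600),
    Comment("a4", "v3", "who is her boyfriend", 86_400),  # a day later: outside the window
    Comment("a5", "v1", "great tutorial", 30),
    Comment("a6", "v1", "great tutorial", 60),
]
print({t: sorted(v) for t, v in flag_coordinated(comments).items()})
# {'who is her boyfriend': ['v1', 'v2']}
```

A string-matching baseline like this is easy to evade with paraphrases and will also catch organic trends, so flagged clusters are best treated as leads for manual review rather than as evidence of coordination.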

API restrictions, frequently changing interfaces, and the platform's tightly controlled data ecosystem pose substantial obstacles to empirical work, requiring methodological innovation and multidisciplinary problem framing.

Implications and Future Directions

The integration of search recommendations signifies a convergence of search engine and social media logics, with commercial, reputational, and societal ramifications. TikTok’s monetization strategies incentivize search-oriented content production, while recommendation opacity intensifies governance complexity. The theoretical implications touch on recommendation system studies, platform liability for algorithmic mediation, and the reconfiguration of user agency.

Practically, the spread of search recommendations to other platforms (e.g., YouTube Shorts and a planned Instagram rollout) points to wider adoption and industry-wide standardization, which makes critical research more urgent. Enhanced transparency frameworks, regulatory harmonization, and computational social science methods are essential for governing the feature as it develops.

Conclusion

TikTok’s search recommendations introduce an opaque, contextually powerful layer of algorithmic mediation with substantial governance and computational challenges. Addressing issues of transparency, moderation, and creator/user agency requires cross-disciplinary research collaboration and regulatory oversight. The outlined computational research agenda—investigating recommendation generation, manipulation detection, contextual harm, and topic classification—represents foundational tasks for advancing understanding and policy intervention in platform search recommendation systems. As platforms increasingly integrate search-driven features into their discovery architecture, researchers must foreground the social, ethical, and regulatory implications of algorithmically curated query suggestions.