HiddenBench: Assessing Collective Reasoning in Multi-Agent LLMs via Hidden Profile Tasks

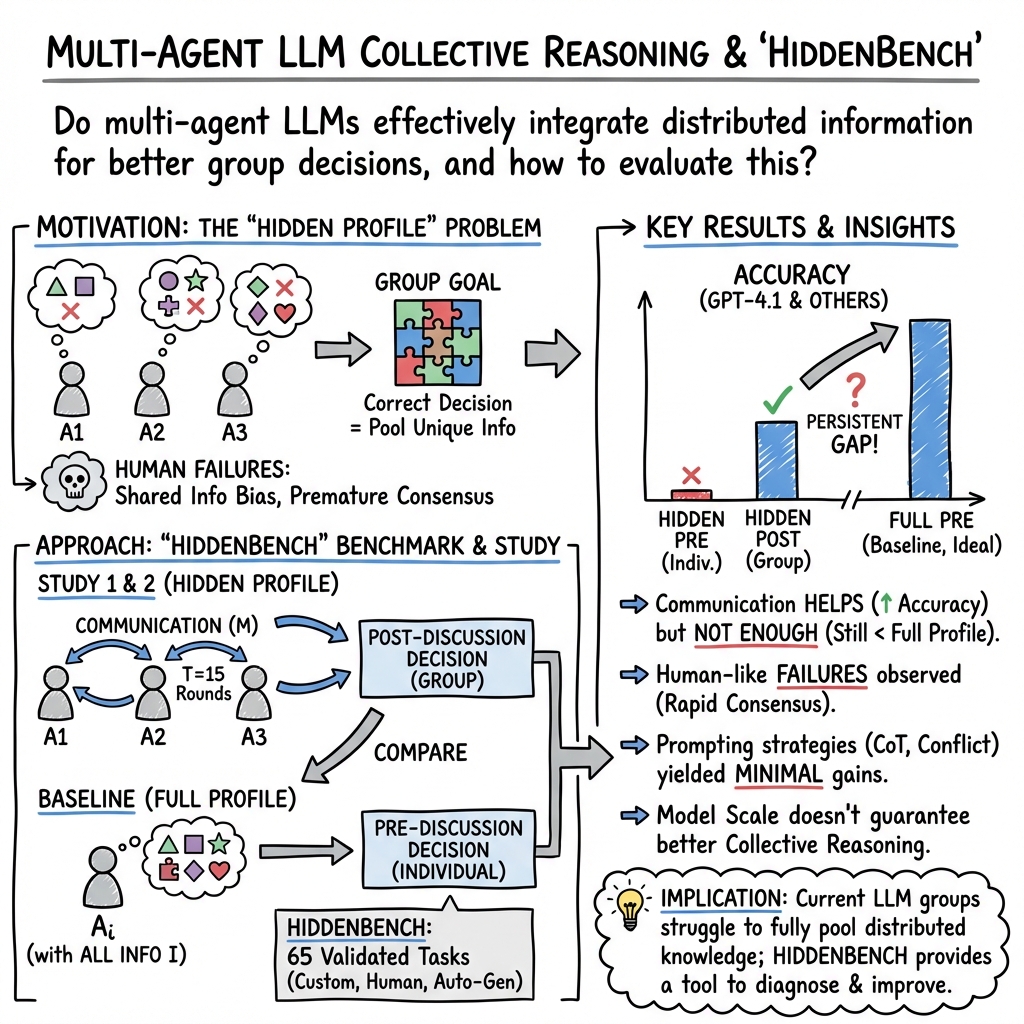

Abstract: Multi-agent systems built on LLMs promise enhanced problem-solving through distributed information integration, but may also replicate collective reasoning failures observed in human groups. Yet the absence of a theory-grounded benchmark makes it difficult to systematically evaluate and improve such reasoning. We introduce HiddenBench, the first benchmark for evaluating collective reasoning in multi-agent LLMs. It builds on the Hidden Profile paradigm from social psychology, where individuals each hold asymmetric pieces of information and must communicate to reach the correct decision. To ground the benchmark, we formalize the paradigm with custom tasks and show that GPT-4.1 groups fail to integrate distributed knowledge, exhibiting human-like collective reasoning failures that persist even with varied prompting strategies. We then construct the full benchmark, spanning 65 tasks drawn from custom designs, prior human studies, and automatic generation. Evaluating 15 LLMs across four model families, HiddenBench exposes persistent limitations while also providing comparative insights: some models (e.g., Gemini-2.5-Flash/Pro) achieve higher performance, yet scale and reasoning are not reliable indicators of stronger collective reasoning. Our work delivers the first reproducible benchmark for collective reasoning in multi-agent LLMs, offering diagnostic insight and a foundation for future research on artificial collective intelligence.


Explain it Like I'm 14

What is this paper about?

This paper introduces HiddenBench, a new way to test how groups of AI agents (powered by LLMs) think together and make decisions. The key idea is to see whether multiple AI agents can share and combine different pieces of information to reach the best answer—or whether they make the same mistakes that human groups often do.

What questions were the researchers asking?

They focused on simple, clear questions:

- Can groups of AI agents combine their unique pieces of information to choose the correct option?

- Do AI groups make human-like mistakes, such as paying too much attention to facts everyone already knows and ignoring unique facts held by individuals?

- Does changing the way the agents talk (for example, making them more cooperative or more argumentative) fix these problems?

- Which AI models are better at “collective reasoning,” and does being bigger or “better at reasoning” actually help in group settings?

How did they study it?

They used a classic idea from social psychology called a “Hidden Profile” task. Here’s an everyday analogy:

Imagine four friends are trying to decide the safest place to evacuate during a flood. Everyone sees some shared facts, like “the bridge looks clear,” but each friend also has one unique fact that the others don’t know, like “the dam is about to release water.” The shared facts push the group toward a wrong choice (the bridge), but if they share and listen to each other’s unique facts, they should realize another option is safer (like the hill).
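To make the analogy concrete, here is a minimal Python sketch of a toy Hidden Profile. The options, facts, and weights are invented for illustration (this is not one of the paper's tasks), and each agent is assumed to pick the option with the highest summed evidence over the facts it can see. Every agent alone lands on the bridge, but pooling all unique facts points to the hill.

```python
# Toy Hidden Profile, invented for illustration: each fact adds a weight to one option.
OPTIONS = ["bridge", "hill", "school"]
SHARED = [("bridge", 2), ("bridge", 1)]  # e.g. "the bridge looks clear", seen by everyone
UNIQUE = {                               # one private fact per agent
    "A": [("bridge", -2)],               # "the dam is about to release water"
    "B": [("hill", 2)],                  # "the hill has a shelter"
    "C": [("hill", 1)],                  # "the road to the hill is open"
    "D": [("bridge", -2)],               # "the river gauge is rising"
}


def choose(facts):
    """Return the option with the highest summed weight (ties go to the earlier option)."""
    score = dict.fromkeys(OPTIONS, 0)
    for option, weight in facts:
        score[option] += weight
    return max(OPTIONS, key=score.get)


# Each agent decides from the shared facts plus its own unique fact.
private = {agent: choose(SHARED + facts) for agent, facts in UNIQUE.items()}
# A group that pools every fact decides from the union of all facts.
pooled = choose(SHARED + [fact for facts in UNIQUE.values() for fact in facts])


def is_hidden_profile(correct):
    """True if no private view yields `correct` but the pooled view does."""
    return all(choice != correct for choice in private.values()) and pooled == correct


print(private)                    # {'A': 'bridge', 'B': 'bridge', 'C': 'bridge', 'D': 'bridge'}
print(pooled)                     # hill
print(is_hidden_profile("hill"))  # True
```

The last function mirrors the Hidden Profile condition: the correct answer is unreachable from any single private view and reachable only once distributed knowledge is pooled.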

The researchers did two main studies:

Study 1: Testing AI groups and human groups

- They created three evacuation scenarios (three possible destinations; only one is truly safe).

- Each group had four AI agents (GPT‑4.1) that chatted for 15 rounds to try to agree on the best destination.

- They also ran the same scenarios with human groups recruited online.

- Every person or agent first made a “pre-discussion” choice (based only on what they knew), then a “post-discussion” choice after the group chat.

- They tried different prompting styles for the AI agents (very cooperative, very conflictual, chain-of-thought, and more) to see if these would help.
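The Study 1 protocol (a private pre-discussion choice, a fixed number of discussion rounds, then a post-discussion choice) can be sketched as a small harness. This is a hypothetical structure, not the authors' code: `ConformingAgent` is a toy stub standing in for an LLM agent, which in practice would see its private facts and the full conversation history. The stub simply adopts whatever preference it hears most often, which is enough to show how a quick, wrong consensus locks in.

```python
from collections import Counter


class ConformingAgent:
    """Toy stub, not an LLM: adopts the most common preference it hears each round."""

    def __init__(self, name, preference):
        self.name = name
        self.preference = preference

    def vote(self):
        return self.preference

    def speak(self):
        # A real agent would generate a free-text message from its facts and the history.
        return self.preference

    def listen(self, messages):
        # Ties resolve to the message heard first (Counter.most_common ordering).
        self.preference = Counter(messages).most_common(1)[0][0]


def run_session(agents, rounds=15):
    """Pre-discussion vote, `rounds` synchronized broadcast rounds, post-discussion vote."""
    pre = [agent.vote() for agent in agents]
    transcript = []
    for _ in range(rounds):
        messages = [agent.speak() for agent in agents]  # everyone speaks, then everyone hears all
        transcript.append(messages)
        for agent in agents:
            agent.listen(messages)
    post = [agent.vote() for agent in agents]
    return pre, post, transcript


agents = [ConformingAgent(n, p) for n, p in zip("ABCD", ["bridge", "bridge", "hill", "bridge"])]
pre, post, transcript = run_session(agents)
print(pre)   # ['bridge', 'bridge', 'hill', 'bridge']
print(post)  # ['bridge', 'bridge', 'bridge', 'bridge']
```

The defaults (four agents, 15 rounds) follow the setup described above; swapping the stub for a model-backed agent with different prompt styles is where the prompting variants would plug in.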

Study 2: Building the HiddenBench benchmark

- They formalized the Hidden Profile idea into a reusable framework (clear rules on options, shared vs. unshared facts, and what counts as success).

- They built a benchmark of 65 tasks:

  - 3 custom-designed tasks (like the evacuation scenarios).

  - 5 tasks adapted from past human studies.

  - 57 tasks automatically generated by an AI pipeline.

- They validated each task to make sure:

  - If a single agent sees all the info, the task is solvable (high accuracy).

  - If agents only see their own piece plus shared info, the task is hard (low accuracy).

- They tested 15 different LLMs (from OpenAI, Google, Alibaba, and Meta) on all tasks and compared performance.
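The validation step can be read as a simple filter over candidate tasks. A minimal sketch follows; the `TaskStats` fields and the cut-offs (0.8 and 0.2) are hypothetical placeholders, since the exact thresholds are not stated here.

```python
from dataclasses import dataclass


@dataclass
class TaskStats:
    """Hypothetical per-task validation results (accuracies in [0, 1])."""
    task_id: str
    full_accuracy: float    # a single agent sees all the information
    hidden_accuracy: float  # an agent sees shared info plus only its own unique facts


def passes_validation(stats: TaskStats, min_full: float = 0.8, max_hidden: float = 0.2) -> bool:
    """Keep tasks that are solvable with full info but hard with partial info."""
    return stats.full_accuracy >= min_full and stats.hidden_accuracy <= max_hidden


candidates = [
    TaskStats("t1", 0.95, 0.05),  # clean Hidden Profile: kept
    TaskStats("t2", 0.60, 0.05),  # not solvable even with full info: dropped
    TaskStats("t3", 0.90, 0.70),  # solvable from partial info: dropped
]
kept = [s.task_id for s in candidates if passes_validation(s)]
print(kept)  # ['t1']
```

Both checks are needed: the first rules out tasks that are simply too hard or ill-posed, and the second rules out tasks where pooling information is unnecessary.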

What did they find?

Here are the main takeaways:

- Communication helped, but not enough. In the “Hidden” condition (where information is split across agents), GPT‑4.1’s accuracy moved from about 1% before discussion to about 23% after discussion. That’s better—but still far below the accuracy when any single agent is given all the information (about 73% before discussion and 89% after).

- AI groups showed human-like mistakes. They often stopped exploring once a quick consensus formed, focused on the facts everyone knew, and didn’t adequately share or weigh the unique facts that mattered. AI agents tended to “settle” much faster than humans—and often settled on the wrong option.

- Changing prompts didn’t fix the issue. Making the agents argue more gave tiny gains in some measures, but then groups couldn’t reach a majority decision. Techniques like chain-of-thought or telling agents their information differed didn’t meaningfully improve results.

- HiddenBench revealed big differences across models. Some models (like Google Gemini 2.5 Pro and Flash) did notably better at collective reasoning. But bigger models or models marketed as “better at reasoning” didn’t consistently outperform smaller ones in group settings. In other words, size and individual reasoning ability weren’t reliable predictors of group reasoning skill.

- The automatic task generator worked well. From 200 candidates, 57 tasks passed strict checks, meaning the benchmark can be expanded and reproduced by other researchers.
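One illustrative way to put numbers on "helped, but not enough" (not a metric defined in the paper) is the fraction of the Hidden-to-Full gap that discussion closes. Using the approximate GPT-4.1 figures quoted above, and taking post-discussion Full Profile accuracy (about 89%) as the reference, discussion closes roughly a quarter of the gap; the generator's yield of 57 tasks from 200 candidates works out to 28.5%.

```python
def gap_closed(pre: float, post: float, reference: float) -> float:
    """Fraction of the distance from `pre` to `reference` covered by `post`."""
    return (post - pre) / (reference - pre)


# Approximate GPT-4.1 figures quoted above (Hidden condition before/after, Full after).
hidden_pre, hidden_post, full_post = 0.01, 0.23, 0.89
closed = gap_closed(hidden_pre, hidden_post, full_post)
print(f"gap closed: {closed:.2f}")             # gap closed: 0.25
print(f"generation yield: {57 / 200:.1%}")     # generation yield: 28.5%
```

Using the pre-discussion Full Profile figure (about 73%) as the reference instead would give a larger fraction, so the choice of reference should be stated alongside any such number.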

Why is this important?

Many real problems require people—or agents—to share different bits of information. If AI teams can’t reliably pool their knowledge, they may make poor decisions, even when the “truth” is available across the group. This matters for:

- Emergency planning (like evacuations),

- Team-based software development,

- Scientific discovery,

- Social simulations and policy discussions.

If we don’t test group reasoning properly, we risk building AI systems that look smart alone but fail when working together.

What’s the impact and what comes next?

HiddenBench gives researchers and developers a fair, repeatable way to test and compare how well AI agents reason together. It highlights where today’s models fall short and points to what needs work:

- Better communication strategies that encourage sharing unique facts,

- Ways to prevent fast but wrong consensus,

- New training or architectures designed specifically for group coordination.

Bottom line: HiddenBench is a starting point for building trustworthy “collective AI”—systems that don’t just think well individually, but also collaborate wisely as a team.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a focused list of what the paper leaves missing, uncertain, or unexplored, phrased to guide concrete follow-up research:

- Scope of group size: Only N=4 agents are studied; systematically vary group size (e.g., N=2–12) to map how information integration scales and where failures emerge.

- Communication topology and protocol: The setup uses fully connected, synchronized rounds; compare alternative protocols (hub-and-spoke moderator, targeted Q&A, asynchronous updates, limited-bandwidth channels, network structures) and their impact on hidden-information elicitation.

- Conversation length and stopping rules: T=15 rounds is fixed; study sensitivity to T, early-consensus penalties, dynamic stopping, and interruptible moderation to reduce premature convergence.

- Aggregation rules: Evaluation focuses on average/majority rules; assess judge-based selection, best-justification voting, argument-quality scoring, Delphi-style iterations, and consensus-with-dissent mechanisms.

- Intervention breadth: Beyond prompt style and zero-shot CoT, test structured protocols (unique-info checklists, “who knows what” registries, turn-taking constraints), role specialization (devil’s advocate, summarizer, skeptic, facilitator), meta-controllers/moderators, and retrieval/memory tools.

- Training-time methods: No fine-tuning or RL interventions are explored; evaluate supervised fine-tuning on Hidden Profile traces, RL from group-level feedback, or curriculum learning on distributed-knowledge tasks.

- Mechanistic diagnosis: Failures (e.g., shared-information bias, early consensus) are observed but not quantified; develop metrics for unique-fact mention frequency, coverage over time, consensus timing, and conformity vs dissent dynamics, and link them causally to outcomes.

- Task feature attribution: It is unclear which task characteristics drive difficulty; annotate and ablate features such as decoy strength, number/overlap/redundancy of hidden facts, option count K, and misleading shared cues, and relate them to model performance.

- Domain generality: Tasks are social/organizational; extend to technical, numerical, logical, causal, spatial, scientific, and code domains, and test whether patterns persist.

- Multimodality: Benchmark is text-only; add multimodal Hidden Profile tasks (maps, tables, diagrams, sensor data) and embodied/interactive settings to test broader collective reasoning.

- Heterogeneous agent populations: All agents are identical within groups; evaluate heterogeneity (different models, sizes, prompts, or personas) and quantify diversity–accuracy trade-offs.

- Robustness to adversarial/noisy agents: No adversarial behavior is tested; introduce misinformed, strategic, or malicious agents and evaluate robustness and defense protocols.

- Incentives and objectives for agents: Agents lack explicit incentives; study effects of agent-level reward shaping (accuracy, coverage of unique facts, disagreement bonuses) and group-level incentives on outcomes.

- Human–LLM mixed groups: Only LLM-only and human-only groups are studied; evaluate hybrid groups to test whether LLMs mitigate or amplify human collective biases.

- Language and culture: Tasks are in English with Western contexts; test cross-lingual and cross-cultural settings to assess generalization and cultural bias.

- Single-agent baselines: Compare multi-agent Hidden discussions to strong single-agent baselines (self-ask, graph-of-thought, iterative scratchpad, retrieval-augmented reasoning) and central-aggregator pipelines that summarize and decide.

- Orchestration frameworks: The paper cites but does not evaluate Mixture-of-Agents, debate-with-judge, or tree-of-thought orchestrators; include these as baselines and quantify gains/costs.

- Memory and context limits: Long discussion histories may exceed context windows or dilute attention; analyze truncation effects, add external memory/scratchpads, and test message summarization and retrieval strategies.

- Sampling sensitivity: Temperature/decoding parameters are not ablated; quantify how stochasticity influences consensus, diversity, and accuracy.

- Cost/latency: Efficiency is not reported; measure token, time, and cost budgets per point of accuracy to guide practical deployments.

- Model-update reproducibility: Closed APIs evolve; provide frozen open-source baselines, seeds, and exact prompts to ensure longitudinal comparability.

- Task generation/validation bias: Automatic validation relies on GPT-4.1; cross-validate tasks with multiple models and human experts to avoid overfitting to one model’s idiosyncrasies.

- Ground-truth soundness: Ensure that “correct” options are uniquely entailed; add formal consistency checks and human expert audits to reduce under/overdetermination.

- Pretraining contamination: Adapted tasks may exist in training data; conduct contamination analysis and release provenance metadata.

- Gemini advantage: Gemini-2.5 models outperform others, but causal factors (instruction tuning, safety policies, long-context handling, search strategies) remain unknown; perform controlled ablations.

- Predictors of collective reasoning: Scale/reasoning scores poorly predict collective performance; develop diagnostic predictors (e.g., info-coverage propensity, dissent tolerance, moderator compliance).

- Metrics beyond accuracy: Add calibration/confidence aggregation, consensus quality, justification consistency, fairness (voice balance), and unique-fact coverage as primary metrics.

- Realistic constraints: Simulate noisy/partial communication, time pressure, missing data, and conflicting goals to mirror real-world collective settings.

- Safety and alignment effects: Social desirability and alignment settings may bias group outcomes; quantify these effects and assess mitigation strategies.

- Public release completeness: Ensure benchmark includes code for generation/validation, conversation scripts, seeds, model/version metadata, and licenses to maximize reproducibility and extension.
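Several of the gaps above (mechanistic diagnosis, unique-fact coverage, consensus timing) call for transcript-level measures. A minimal sketch, assuming each unique fact can be detected by a keyword match in the messages; this is a crude, hypothetical proxy, and real evaluations would need paraphrase-robust matching.

```python
def coverage_by_round(transcript, unique_facts):
    """Cumulative share of unique facts mentioned by the end of each round.

    transcript: list of rounds, each a list of message strings.
    unique_facts: dict mapping fact id -> keyword assumed to signal a mention.
    """
    mentioned, curve = set(), []
    for messages in transcript:
        text = " ".join(messages).lower()
        mentioned |= {fid for fid, kw in unique_facts.items() if kw.lower() in text}
        curve.append(len(mentioned) / len(unique_facts))
    return curve


def consensus_round(preferences_by_round):
    """First round (1-indexed) at which all agents state the same option, else None."""
    for t, prefs in enumerate(preferences_by_round, start=1):
        if len(set(prefs)) == 1:
            return t
    return None


transcript = [
    ["The bridge looks clear.", "Agreed, bridge.", "I heard the dam may release water.", "Bridge."],
    ["Bridge it is.", "Bridge.", "Bridge.", "Bridge."],
]
facts = {"dam": "dam", "shelter": "shelter"}
print(coverage_by_round(transcript, facts))                                  # [0.5, 0.5]
print(consensus_round([["bridge", "bridge", "hill", "bridge"], ["bridge"] * 4]))  # 2
```

Plotting coverage against the consensus round makes premature convergence visible: here the group agrees in round 2 while half of the unique facts have never been mentioned.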

Practical Applications

Practical Applications of HiddenBench and the Hidden Profile Formalism

HiddenBench introduces a theory-grounded, reproducible benchmark for multi-agent LLM collective reasoning, formalizes Hidden Profile tasks, and reveals persistent human-like failures (e.g., shared information bias, premature consensus). Below are concrete applications derived from these findings, methods, and innovations.

Immediate Applications

The following applications can be deployed now with modest adaptation and integration work.

- Model selection and procurement for multi-agent deployments [software, enterprise IT]

- Use HiddenBench scores to choose model families (e.g., Gemini-2.5-Pro/Flash) that empirically exhibit stronger collective reasoning; avoid assuming “bigger” or “reasoning-augmented” models will perform better in group settings.

- Potential product/workflow: “Collective Reasoning Score” reported alongside standard benchmarks in vendor evaluations and RFPs.

- Assumptions/dependencies: Benchmark alignment with the target domain; access to multiple model APIs; version drift monitoring.

- Pre-deployment audits and red-teaming of group AI systems [healthcare, finance, public safety]

- Audit multi-agent assistants (e.g., clinical decision support committees, investment “AI committees,” emergency planning copilots) for groupthink-prone behavior using HiddenBench tasks adapted to domain specifics.

- Potential product/workflow: Internal risk assessment playbooks that include a Hidden Profile test suite before production.

- Assumptions/dependencies: Domain-specific scenario adaptation; data governance and privacy safeguards.

- Orchestration policy updates to reduce premature consensus [software/agent frameworks]

- Modify agent interaction protocols to delay voting and elicit unshared evidence: e.g., “disagree-first” turns, mandatory evidence sharing rounds, time-to-consensus thresholds, rotating “devil’s advocate” role.

- Potential tools: Plugins for AutoGen/LangGraph/AgentVerse that inject anti-conformity turn-taking and logging of “unique evidence surfaced.”

- Assumptions/dependencies: Engineering integration into existing orchestration; evaluation to avoid the no-majority outcomes seen with “extremely conflictual” prompts.

- Continuous evaluation in CI/CD for agent teams [software/MLOps]

- Add HiddenBench to agent CI pipelines to catch regressions in collective reasoning after model or policy updates.

- Potential product/workflow: MLOps dashboards tracking Hidden-condition accuracy before and after discussion (Y_pre, Y_post), Full Profile accuracy (Y_full), and the gaps between them over time.

- Assumptions/dependencies: Compute budget for multi-session evaluation; stable seeds and prompt templates to control variance.

- Meeting facilitation and collaboration tooling with “groupthink guard” [enterprise collaboration, education]

- Embed prompts/checklists that ask participants (human or LLM) to surface unique information before consensus; detect “early convergence” and nudge for additional evidence.

- Potential tools: Slack/Teams add-ins that flag low diversity-of-evidence and suggest follow-up questions.

- Assumptions/dependencies: Mapping “hidden info” cues to real collaboration data; user acceptance; privacy compliance.

- Classroom and professional training on collective decision-making [education, management training, healthcare CME]

- Use HiddenBench-style tasks to teach biases like shared information bias; run live human–LLM group exercises to demonstrate pitfalls and mitigations.

- Potential tools: Course modules and case studies packaged from benchmark tasks.

- Assumptions/dependencies: Age- and context-appropriate content; instructor facilitation.

- Ensemble and MoA (Mixture-of-Agents) evaluation beyond single-model metrics [software]

- Benchmark debate/ensemble strategies (e.g., MoA, multi-agent debate) on collective reasoning rather than only task accuracy; select ensemble policies that actually increase the discussion gain Y_post − Y_pre.

- Potential tools: “Collective Reasoning A/B harness” to compare debate styles and vote aggregation rules.

- Assumptions/dependencies: Access to multiple orchestration strategies; guardrails against mode collapse.

- Policy/Compliance checklists for regulated deployments [public sector, healthcare, finance]

- Add a “collective reasoning evaluation” section to AI assurance frameworks (e.g., procurement questionnaires, internal governance) to verify systems integrate distributed evidence.

- Potential outputs: Minimal pass criteria (e.g., Y_full ≥ 0.8 and Y_post − Y_pre ≥ 0.4 × (Y_full − Y_pre)) as internal standards.

- Assumptions/dependencies: Organizational buy-in; alignment with legal/regulatory guidance.
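The example pass criterion in the compliance item (Full Profile accuracy of at least 0.8, and a discussion gain of at least 40% of the gap between Full Profile and pre-discussion accuracy) can be turned into a CI gate. A minimal sketch: the thresholds are the illustrative values from that item rather than standards from the paper, the regression tolerance is a hypothetical choice, and the scores in the usage lines are made up.

```python
from dataclasses import dataclass


@dataclass
class CollectiveScores:
    y_pre: float   # Hidden condition, pre-discussion accuracy
    y_post: float  # Hidden condition, post-discussion accuracy
    y_full: float  # Full Profile accuracy


def passes_gate(s: CollectiveScores, min_full: float = 0.8, min_gap_share: float = 0.4) -> bool:
    """Illustrative gate: Y_full >= 0.8 and Y_post - Y_pre >= 0.4 * (Y_full - Y_pre)."""
    return s.y_full >= min_full and (s.y_post - s.y_pre) >= min_gap_share * (s.y_full - s.y_pre)


def regressed(old: CollectiveScores, new: CollectiveScores, tolerance: float = 0.05) -> bool:
    """Hypothetical rule: flag a drop in post-discussion accuracy larger than `tolerance`."""
    return new.y_post < old.y_post - tolerance


baseline = CollectiveScores(y_pre=0.05, y_post=0.50, y_full=0.90)   # made-up numbers
candidate = CollectiveScores(y_pre=0.05, y_post=0.30, y_full=0.90)  # made-up numbers
print(passes_gate(baseline), passes_gate(candidate))  # True False
print(regressed(baseline, candidate))                 # True
```

Normalizing the gain by the Full Profile gap keeps the gate meaningful across tasks of different difficulty, while the absolute Y_full floor guards against tasks the model cannot solve even with complete information.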

Long-Term Applications

These require further research, scaling, or development before broad deployment.

- Fine-tuning and RL for collective reasoning [AI model development]

- Train agents with objectives that reward surfacing unshared evidence and penalize premature convergence (group RL, self-play using Hidden Profile tasks).

- Potential products: “Collective Reasoning–tuned” model variants for team-based applications.

- Assumptions/dependencies: High-quality, domain-diverse Hidden Profile datasets; stable multi-agent RL pipelines; compute cost.

- New multi-agent architectures for information pooling [software/AI tooling]

- Design blackboard/whiteboard memories, belief-tracking, and Bayesian pooling mechanisms that preserve unique evidence and structure deliberation.

- Potential products: Agent frameworks with built-in evidence registries, provenance tracking, and justification graphs.

- Assumptions/dependencies: UX and latency trade-offs; robustness to noisy or adversarial inputs.

- Domain-specific HiddenBench variants for high-stakes decisions [healthcare, legal, cybersecurity, emergency management]

- Build curated Hidden Profile suites (e.g., differential diagnosis with unique labs/images/notes; incident response with partial telemetry) to stress-test domain assistants.

- Potential products: “HiddenBench-Clinical,” “HiddenBench-IR,” “HiddenBench-Legal” evaluation packs.

- Assumptions/dependencies: Expert-labeled scenarios; handling of sensitive data; regulatory review.

- Standards and certification for collective AI [policy, standards bodies]

- Establish NIST/ISO-like benchmarks and minimum thresholds for multi-agent AI used in critical infrastructure (grids, aviation ops centers).

- Potential outputs: Third-party certification programs centered on collective reasoning metrics.

- Assumptions/dependencies: Multistakeholder consensus; reproducibility across languages and cultures.

- Mixed human–LLM team design that elicits unique human knowledge [enterprise, healthcare]

- Build agents that proactively query humans for missing, non-shared facts and keep “open issues” dashboards until evidence thresholds are met.

- Potential products: Meeting copilots that manage “evidence completeness” before decisions.

- Assumptions/dependencies: Change management; avoidance of user fatigue; clear accountability.

- Multi-agent robotics/IoT coordination under partial observability [robotics, manufacturing, logistics]

- Apply the Hidden Profile paradigm to evaluate and improve information-sharing in distributed robot/sensor teams where each unit has a partial view.

- Potential products: “Info-fusion policies” for swarms (belief propagation, consensus protocols) validated on Hidden Profile analogues.

- Assumptions/dependencies: High-fidelity simulators; translation from text benchmarks to embodied control.

- Safety and misinformation resilience at group scale [platforms, trust & safety]

- Use HiddenBench-inspired diagnostics to detect echo chambers and over-coordination in agent collectives (e.g., content curation bots, moderation triage).

- Potential products: “Consensus health” monitors that trigger dissent injection or additional evidence hunts.

- Assumptions/dependencies: Adversarial testing suites; safeguards against manipulation.

- Market infrastructure for “Collective Reasoning QA” [MLOps, SaaS]

- Offer benchmark-as-a-service with dashboards, regression tracking, and domain adapters; integrate into vendor scoring and SLAs.

- Potential products: SaaS platforms that provide longitudinal tracking of Y_pre, Y_post, Y_full and domain-specific pass/fail gates.

- Assumptions/dependencies: Demand and interoperability with existing MLOps stacks; clear ROI for customers.

- Argumentation and evidential reasoning layers [software, research]

- Incorporate structured argumentation (e.g., Dung frameworks), citation enforcement, and conflict resolution protocols to codify how unique evidence changes group beliefs.

- Potential products: Libraries that map dialogue to argument graphs and compute justified conclusions.

- Assumptions/dependencies: Advances in argument-mining, provenance extraction, and evaluation datasets.

- Cross-lingual and cross-cultural benchmark expansions [global deployments]

- Extend HiddenBench to multiple languages and cultural contexts to validate collective reasoning behavior in diverse settings.

- Potential products: Multilingual benchmark packs for multinational organizations.

- Assumptions/dependencies: High-quality translations/adaptations; sensitivity to cultural norms in group decision tasks.
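One way to read the "Bayesian pooling mechanisms" and "evidence registries" items is a shared blackboard that combines likelihoods from every distinct fact. A minimal sketch, under strong assumptions that are labeled hypothetical here: facts are conditionally independent given the true option, each fact's likelihoods are known, and the prior is uniform. Keying the registry by fact ID counts a shared fact once even if all four agents report it; counting it once per report is a numerical analogue of over-weighting shared information.

```python
import math


class EvidenceRegistry:
    """Hypothetical blackboard: stores each distinct fact once, with provenance."""

    def __init__(self, options):
        self.options = list(options)
        self.facts = {}    # fact_id -> {option: P(fact | option)}
        self.sources = {}  # fact_id -> set of agents who reported it

    def post(self, agent, fact_id, likelihoods):
        self.facts.setdefault(fact_id, likelihoods)
        self.sources.setdefault(fact_id, set()).add(agent)

    def posterior(self, count_each_report=False):
        """Normalized posterior over options under a uniform prior.

        With count_each_report=True, a fact's log-likelihood is multiplied by the
        number of agents who reported it (the double counting to avoid).
        """
        log_scores = {}
        for option in self.options:
            log_scores[option] = sum(
                (len(self.sources[fid]) if count_each_report else 1) * math.log(lk[option])
                for fid, lk in self.facts.items()
            )
        top = max(log_scores.values())
        weights = {o: math.exp(s - top) for o, s in log_scores.items()}  # stable normalization
        total = sum(weights.values())
        return {o: w / total for o, w in weights.items()}


reg = EvidenceRegistry(["bridge", "hill"])
for agent in "ABCD":
    reg.post(agent, "bridge_clear", {"bridge": 0.8, "hill": 0.2})  # shared fact
reg.post("A", "dam_release", {"bridge": 0.1, "hill": 0.9})        # unique to A
reg.post("B", "hill_shelter", {"bridge": 0.3, "hill": 0.7})       # unique to B
print(round(reg.posterior()["hill"], 2))  # 0.84
naive = reg.posterior(count_each_report=True)
print(max(naive, key=naive.get))          # bridge
```

The provenance sets (`sources`) are what would let an orchestrator see which facts have been raised by only one agent and prompt the group to weigh them before voting.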

Notes on feasibility and generalization (cross-cutting):

- HiddenBench tasks assume a single correct option, which may not reflect multi-criteria, ambiguous real-world decisions; domain adaptation is essential.

- Current tasks are text-based and predominantly in English; embodied or multimodal settings will need additional instrumentation.

- API availability, cost, and model version drift affect reproducibility; organizations should lock versions or track changes alongside benchmark results.

- Privacy and compliance considerations apply when adapting tasks with real data (e.g., EHR, trading logs).

Glossary

- average rule: A group-level accuracy metric that counts the proportion of agents who select the correct option. "the average rule, which measures the proportion of agents selecting the correct option (our default measure of accuracy),"

- collective intelligence: The emergent capability of a group (including artificial agents) to achieve better outcomes than individuals by integrating knowledge and perspectives. "artificial collective intelligence."

- collective reasoning: Group-level reasoning that integrates distributed information through communication to reach a correct decision. "collective reasoning in multi-agent LLMs"

- conformity bias: The tendency to align with the majority view even when it conflicts with evidence. "conform to majorities (conformity bias)"

- Fisher's exact test: An exact statistical significance test for contingency tables, often used with small sample sizes. "Fisher's exact test Fisher (1922)."

- Full Profile condition: An evaluation setting where each agent has access to the complete set of task-relevant information. "In the Full Profile condition, each agent instead receives the full set I."

- groupthink: A group decision-making pathology where desire for harmony or consensus suppresses critical evaluation, leading to poor choices. "over-coordination, entrenched beliefs, or groupthink"

- Hidden Profile (paradigm): A social-psychology framework where critical information is unevenly distributed across members, making the correct choice identifiable only by pooling unique facts. "the Hidden Profile paradigm from social psychology"

- Hidden Profile condition: A task condition where no single private information set suffices to find the correct answer, but the answer emerges when distributed knowledge is pooled. "The Hidden Profile condition holds when the correct decision cannot be derived from any private information set alone, but becomes attainable once distributed knowledge is pooled through communication:"

- information asymmetry: A situation where different agents hold different pieces of information, often without being aware of the mismatch. "explicitly informing agents of information asymmetry"

- Institutional Review Board: An ethics committee that reviews and approves research involving human participants. "The study was approved by an Institutional Review Board."

- majority rule: A group decision rule marking success when more than half of the agents select the correct option. "the majority rule, which records whether more than half of the agents select the correct option."

- meta-analysis: A systematic, statistical synthesis of results from multiple studies on a topic. "a major Hidden Profile meta-analysis"

- normalcy bias: The tendency to prefer the status quo and underestimate the likelihood or impact of disruptive events. "favor the status quo (normalcy bias)"

- order effects: Performance or response differences caused by the sequence in which information is presented. "To mitigate order effects Pezeshkpour & Hruschka (2023), information order is shuffled within prompts."

- over-coordination: Excessive convergence or alignment in group behavior that hinders exploration of unique information and degrades performance. "These dynamics can culminate in over-coordination, entrenched beliefs, or groupthink"

- s.e.m. (standard error of the mean): A measure of the precision of the sample mean estimate of a population mean. "Error bars indicate mean ± s.e.m."

- shared information bias: The group tendency to discuss and emphasize information known by all members over unique, unshared facts. "a pattern known as shared information bias"

- social desirability bias: The inclination to provide responses viewed favorably by others rather than candid or accurate ones. "social desirability bias"

- stochasticity: Random variability in outcomes across runs due to inherent randomness or sampling in the process. "We run 30 sessions per condition to account for stochasticity"

- zero-shot chain of thought: A prompting technique that elicits step-by-step reasoning without providing worked examples. "zero-shot chain of thought Wei et al. (2022)"
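The average rule, the majority rule, and the mean ± s.e.m. error bars defined above can all be computed from per-session agent choices. A minimal standard-library sketch; the session data are invented, and s.e.m. is taken as the sample standard deviation across sessions divided by the square root of the number of sessions.

```python
import statistics
from math import sqrt


def average_rule(choices, correct):
    """Proportion of agents in one session selecting the correct option."""
    return sum(c == correct for c in choices) / len(choices)


def majority_rule(choices, correct):
    """1.0 if more than half of the agents select the correct option, else 0.0."""
    return 1.0 if sum(c == correct for c in choices) > len(choices) / 2 else 0.0


def mean_sem(values):
    """Mean and standard error of the mean (sample std / sqrt(n))."""
    return statistics.mean(values), statistics.stdev(values) / sqrt(len(values))


sessions = [  # invented post-discussion choices of four agents per session
    ["hill", "hill", "hill", "bridge"],
    ["bridge", "bridge", "hill", "bridge"],
    ["hill", "hill", "bridge", "bridge"],
]
avg = [average_rule(s, "hill") for s in sessions]   # [0.75, 0.25, 0.5]
maj = [majority_rule(s, "hill") for s in sessions]  # [1.0, 0.0, 0.0]
mean, sem = mean_sem(avg)
```

The third session shows why the two rules differ: a 2–2 split earns 0.5 under the average rule but counts as a failure under the majority rule, since exactly half is not more than half.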
