- The paper demonstrates that simple kNN methods outperform complex learned routers for LLM routing.

- It introduces standardized benchmarks across tasks such as instruction-following, QA, and vision-language to evaluate cost-quality tradeoffs.

- The study shows that kNN routers are more sample-efficient than learned alternatives, and it offers practical guidance for deploying multi-model AI systems.

Rethinking Predictive Modeling for LLM Routing: When Simple kNN Beats Complex Learned Routers

Introduction

The paper "Rethinking Predictive Modeling for LLM Routing: When Simple kNN Beats Complex Learned Routers" studies LLM routing, the task of selecting the best LLM for a given input. Because LLMs now vary widely in capability and cost, efficient routing has become necessary both to improve user experience and to reduce computational expense. The paper challenges prevailing assumptions by showing that simple k-Nearest Neighbors (kNN) methods can outperform state-of-the-art learned routing strategies. The authors tie this to the locality of model performance in embedding spaces: queries that lie close together tend to be handled similarly well by a given model, a property that favors non-parametric methods over complex architectures.

Benchmark Development

To enable objective comparisons, the paper introduces a suite of standardized benchmarks covering instruction-following, question-answering, and reasoning tasks, complemented by a new multi-modal routing benchmark for vision-language models (VLMs).

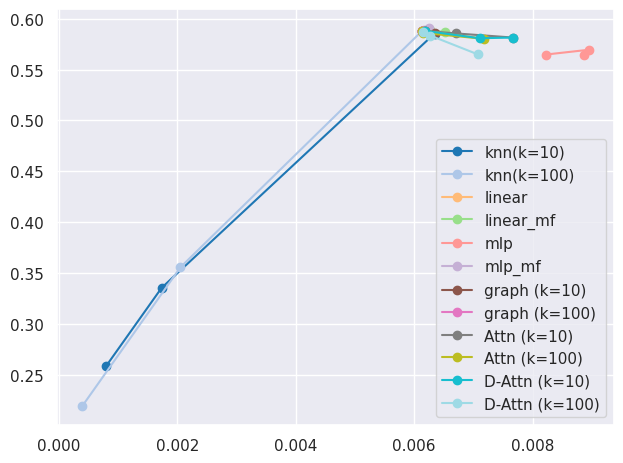

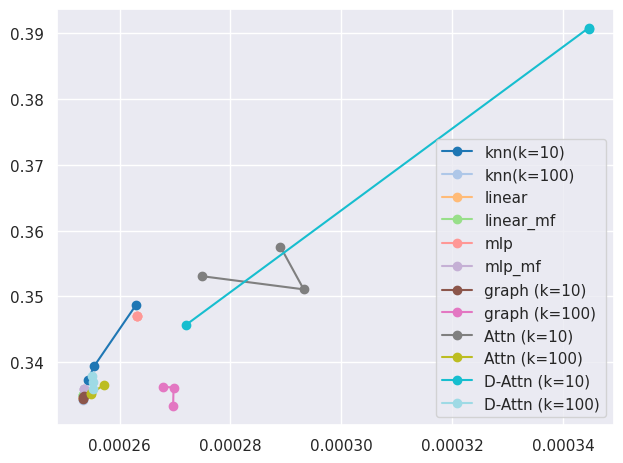

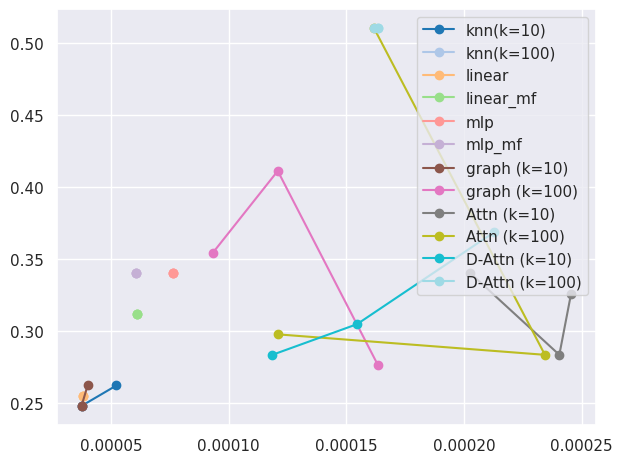

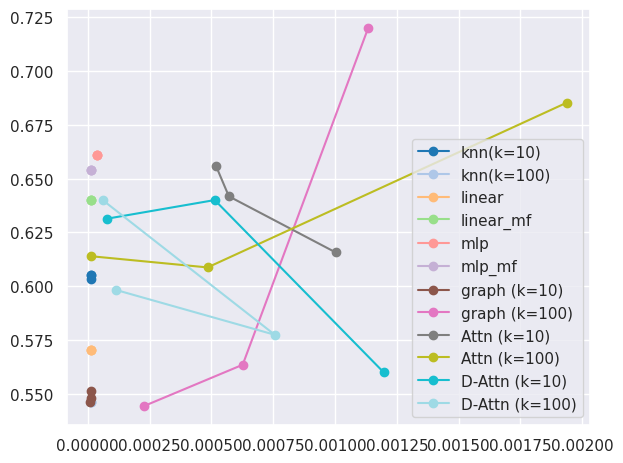

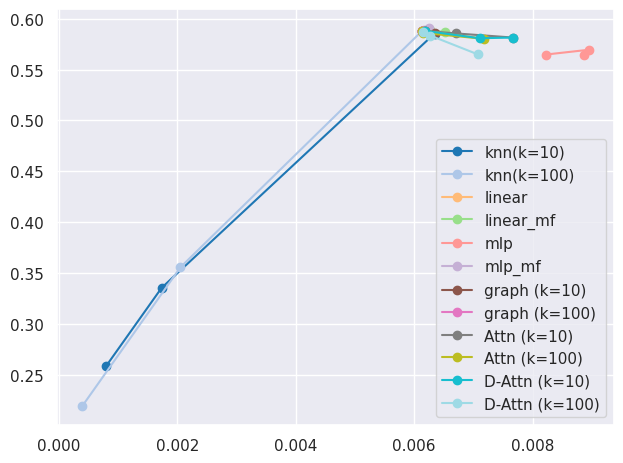

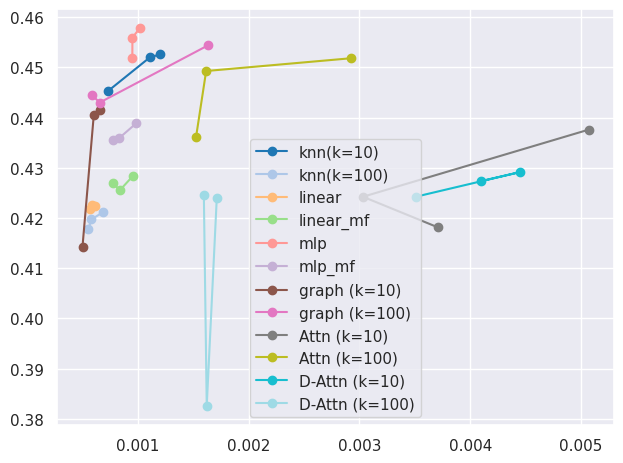

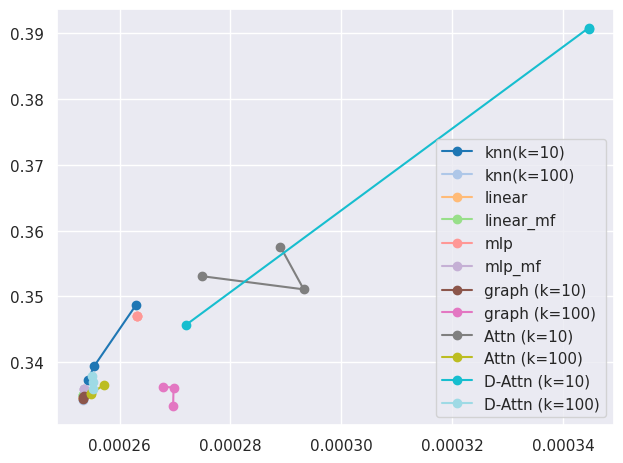

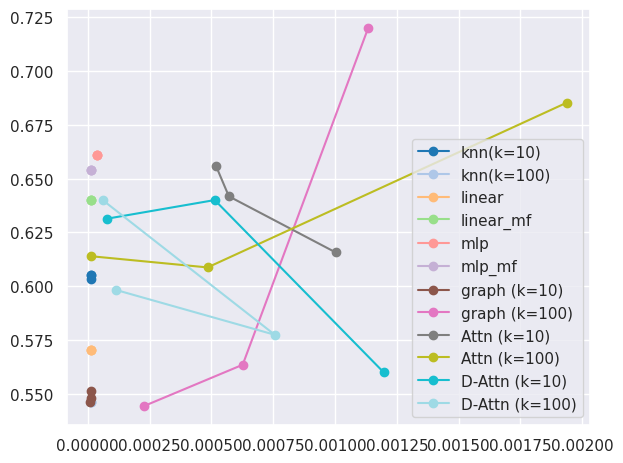

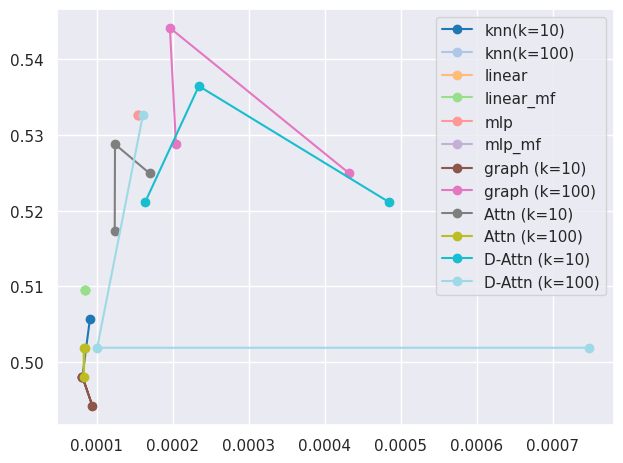

Figure 1: Cost-Quality tradeoff for text-based routing benchmarks using model selection approaches.

The kNN Approach

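The kNN method is simple because it relies only on local information in the embedding space. To route a new query, it finds the most similar queries in a labeled training set and uses how each candidate model performed on those nearest neighbors to predict how it will perform on the new query. Because these predictions come directly from nearby data rather than from a fitted parametric model, kNN can make strong routing decisions with lower sample complexity than parametric approaches. This challenges the notion that sophisticated, learning-based routers are needed for effective LLM routing.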
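A minimal sketch of such a router follows, assuming precomputed query embeddings, cosine similarity, and an unweighted average of neighbor scores. The toy 2-D embeddings, the model names `model_a` and `model_b`, and the functions `knn_predict_scores` and `route` are hypothetical illustrations, not the paper's implementation.

```python
import math
from typing import Dict, List, Sequence, Tuple

# One labeled training example: a query embedding plus the observed score of
# each candidate model on that query (e.g. 1.0 = correct answer, 0.0 = wrong).
Example = Tuple[Sequence[float], Dict[str, float]]


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn_predict_scores(query: Sequence[float], train: List[Example], k: int) -> Dict[str, float]:
    """Predict each model's score as its mean score on the k most similar training queries."""
    if not train or k < 1:
        raise ValueError("need a non-empty training set and k >= 1")
    neighbors = sorted(train, key=lambda ex: cosine_similarity(query, ex[0]), reverse=True)[:k]
    models = neighbors[0][1].keys()
    return {m: sum(ex[1][m] for ex in neighbors) / len(neighbors) for m in models}


def route(query: Sequence[float], train: List[Example], k: int = 3) -> str:
    """Send the query to the model with the highest predicted score."""
    scores = knn_predict_scores(query, train, k)
    return max(scores, key=scores.get)


# Toy data (hypothetical): a math-like cluster near [1, 0] and a chat-like
# cluster near [0, 1]; model_a is good at math, model_b at chat.
train: List[Example] = [
    ([1.0, 0.1], {"model_a": 1.0, "model_b": 0.0}),
    ([0.9, 0.2], {"model_a": 1.0, "model_b": 0.0}),
    ([1.0, 0.0], {"model_a": 0.0, "model_b": 0.0}),
    ([0.1, 1.0], {"model_a": 0.0, "model_b": 1.0}),
    ([0.2, 0.9], {"model_a": 0.0, "model_b": 1.0}),
    ([0.0, 1.0], {"model_a": 1.0, "model_b": 1.0}),
]

print(route([0.95, 0.15], train))  # model_a
print(route([0.1, 0.95], train))   # model_b
```

A practical router would use a real sentence-embedding model and many more labeled queries, and would usually factor cost into the decision; weighting neighbors by similarity is a common variant of the plain average used here.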

Evaluation Framework

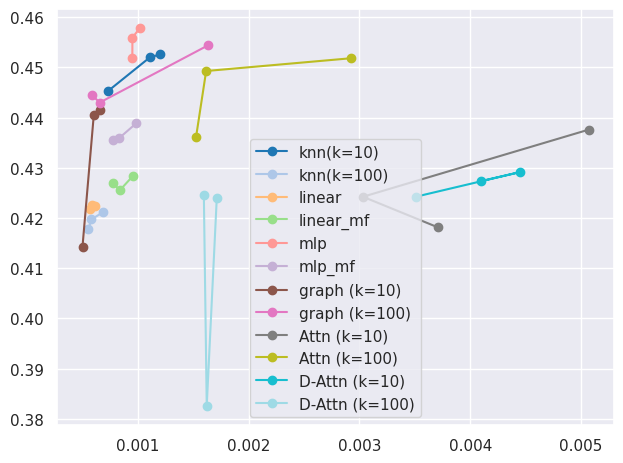

The evaluation framework covers two kinds of routers:

- Utility Prediction Evaluation: the router predicts each model's performance score and cost for a query. Varying the preferred balance between the two traces a cost-quality Pareto front, and the area under the resulting curve (AUC) summarizes how well the router balances performance against cost.

- Selection-Based Evaluation: the router maps each query directly to a model and is evaluated at several distinct cost-performance preferences.
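The utility-prediction evaluation can be sketched as follows, assuming a linear utility (predicted score minus λ times predicted cost) swept over a grid of λ values. The utility form, the λ grid, the toy `small`/`large` models, and the normalization of the area by the front's own cost span are illustrative assumptions, not definitions taken from the paper.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (mean cost, mean quality)
# Per query: {model name: (score, cost)}.
Table = List[Dict[str, Tuple[float, float]]]


def select(pred: Dict[str, Tuple[float, float]], lam: float) -> str:
    """Pick the model maximizing the assumed utility: predicted score - lam * predicted cost."""
    return max(pred, key=lambda m: pred[m][0] - lam * pred[m][1])


def operating_point(preds: Table, truth: Table, lam: float) -> Point:
    """Route every query with one lam; return the realized (mean cost, mean quality)."""
    chosen = [select(p, lam) for p in preds]
    n = len(chosen)
    cost = sum(t[m][1] for t, m in zip(truth, chosen)) / n
    quality = sum(t[m][0] for t, m in zip(truth, chosen)) / n
    return cost, quality


def pareto_front(points: List[Point]) -> List[Point]:
    """Keep the non-dominated points, scanning in order of increasing cost."""
    front: List[Point] = []
    for c, q in sorted(points, key=lambda p: (p[0], -p[1])):
        if not front or q > front[-1][1]:
            front.append((c, q))
    return front


def normalized_auc(front: List[Point]) -> float:
    """Trapezoidal area under the front, divided by the front's own cost span."""
    if len(front) < 2:
        return front[0][1] if front else 0.0
    area = sum((c2 - c1) * (q1 + q2) / 2 for (c1, q1), (c2, q2) in zip(front, front[1:]))
    return area / (front[-1][0] - front[0][0])


# Toy benchmark: an easy query both models solve and a hard one only "large" solves.
truth: Table = [
    {"small": (1.0, 1.0), "large": (1.0, 10.0)},
    {"small": (0.0, 1.0), "large": (1.0, 10.0)},
]
preds = truth  # perfect predictions, for simplicity

lambdas = [0.01, 0.1, 0.2, 1.0]
points = [operating_point(preds, truth, lam) for lam in lambdas]
front = pareto_front(points)
print(front)                  # [(1.0, 0.5), (5.5, 1.0)]
print(normalized_auc(front))  # 0.75
```

Because this sketch normalizes by each router's own cost span, AUC values are only comparable across routers when the span is fixed in advance (for example, between the cheapest and the most expensive single model); the paper's exact AUC definition may differ. A selection-based router would instead be scored at a few fixed preferences, that is, at individual operating points rather than over the whole sweep.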

The results show that kNN-based routers perform competitively, often surpassing more complex methods.

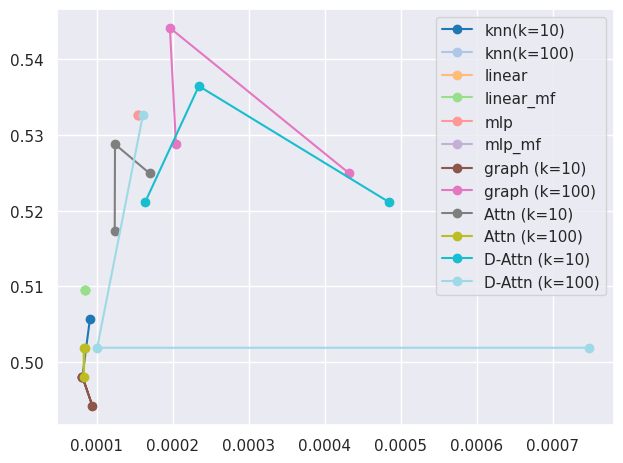

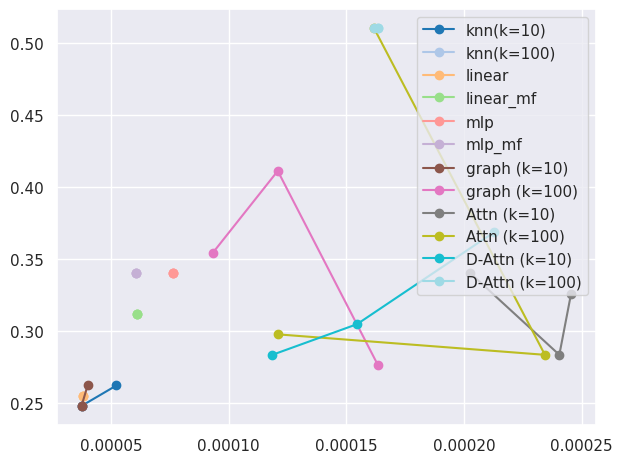

Figure 2: Cost-Quality tradeoff for VLM routing benchmarks using model selection approaches.

Theoretical Insights

The paper also provides a theoretical explanation for the observed effectiveness of kNN routers. It highlights the locality of model performance in embedding spaces and establishes that kNN has a sample-complexity advantage over parametric models. This advantage is most pronounced in low-dimensional embedding spaces, which underscores the importance of embedding quality.
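To make the locality argument concrete, here is a standard textbook sketch (an illustration under assumed conditions, not the paper's own theorem). Assume each model $m$'s expected score $s_m(x)$ is $L$-Lipschitz in the query embedding $x \in \mathbb{R}^d$, observed scores carry noise of variance $\sigma^2$, and embeddings have a density bounded away from zero on a bounded region. Averaging the scores of the $k$ nearest of $n$ training queries then gives the familiar bias-variance tradeoff, since the typical distance to the $k$-th neighbor scales as $(k/n)^{1/d}$:

$$
|s_m(x) - s_m(x')| \le L\,\lVert x - x' \rVert
\quad\Longrightarrow\quad
\mathbb{E}\big[(\hat{s}_m(x) - s_m(x))^2\big] \lesssim \frac{\sigma^2}{k} + L^2 \left(\frac{k}{n}\right)^{2/d}
$$

Choosing $k \propto n^{2/(d+2)}$ balances the two terms and yields an error of order $n^{-2/(d+2)}$: the exponent is $1/2$ when $d = 2$ but only $1/6$ when $d = 10$. In this sketch, dimension enters only through the neighbor distance, which is one way to see why an embedding that places similar queries close together in a low-dimensional space makes kNN routing more sample-efficient.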

Practical Implications and Future Work

The findings argue for simplicity in routing strategies and offer practical guidance for deploying multi-model systems. Future work may explore dynamic adaptation, alternative training signals, improved embedding learning, and batch routing, all of which bear on the broader question of how best to manage a diverse pool of AI models.

Conclusion

By revisiting the fundamentals of LLM routing, the paper shows that simple methods like kNN can deliver strong model-selection performance. For practitioners and researchers, this calls for re-evaluating when added complexity is actually warranted, and it could make sophisticated LLM-powered systems more accessible without sacrificing efficiency.

More broadly, the work challenges prevailing assumptions by showing that thorough evaluation of simple baselines can yield insights that guide the practical, cost-effective development and deployment of AI systems.