Local Mixtures of Experts: Essentially Free Test-Time Training via Model Merging

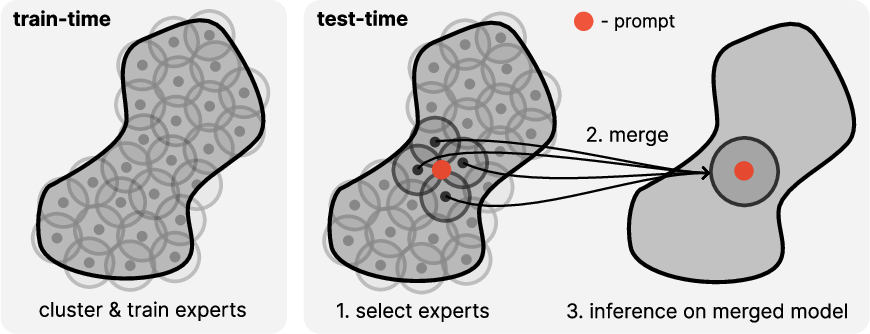

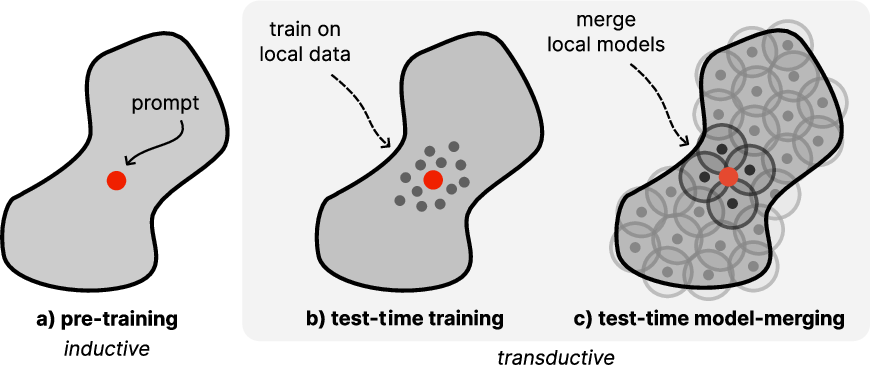

Abstract: Mixture of expert (MoE) models are a promising approach to increasing model capacity without increasing inference cost, and are core components of many state-of-the-art LLMs. However, current MoE models typically use only few experts due to prohibitive training and inference cost. We propose Test-Time Model Merging (TTMM) which scales the MoE paradigm to an order of magnitude more experts and uses model merging to avoid almost any test-time overhead. We show that TTMM is an approximation of test-time training (TTT), which fine-tunes an expert model for each prediction task, i.e., prompt. TTT has recently been shown to significantly improve LLMs, but is computationally expensive. We find that performance of TTMM improves with more experts and approaches the performance of TTT. Moreover, we find that with a 1B parameter base model, TTMM is more than 100x faster than TTT at test-time by amortizing the cost of TTT at train-time. Thus, TTMM offers a promising cost-effective approach to scale test-time training.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a consolidated list of concrete gaps that remain unresolved and could guide future research.

- Formal approximation guarantees: Precisely bound TTMM’s error relative to TTT as a function of number of experts, cluster diameters, merging weights, and LoRA properties; extend beyond single-step GD and Lipschitz assumptions to realistic training regimes and nonconvex losses.

- Equivalence to gradient-based adaptation: Establish when weighted merging of LoRA updates approximates performing gradient steps on the union of local datasets, and quantify the bias introduced by sparse weighting and centroid-based selection.

- Clustering choices and K selection: Systematically compare bisecting k-means to alternatives (e.g., spherical k-means, HDBSCAN, spectral, balanced hierarchical clustering) and develop principled criteria to choose K adaptively per dataset/task to avoid knowledge fragmentation.

- Centroid summarization fidelity: Analyze the error caused by representing clusters via a single normalized centroid, especially for non-spherical or multi-modal clusters; evaluate richer prototypes (multiple centroids per expert, covariance-aware routing, learned routers).

- Embedding model dependence: Benchmark diverse embedding sources (external encoders vs base LM internal embeddings vs task-tuned encoders), normalization schemes, and pooling strategies; study joint router/embedding fine-tuning and its impact on selection accuracy and latency.

- Hyperparameter sensitivity: Provide comprehensive sweeps over temperature β, sparsity τ, LoRA rank, target modules, per-expert training epochs, and learning rates; derive robust default settings and dataset-agnostic tuning procedures to avoid overfitting to holdout sets.

- Merging interference characterization: Quantify parameter interference as the number of active experts grows; compare layer-wise or parameter-wise coefficients, Fisher/TIES-weighted merges, subspace alignment, and orthogonality-promoting training to mitigate interference.

- Adaptive number of active experts: Develop principled strategies to choose N per prompt (e.g., based on similarity gaps, uncertainty estimates, local curvature, or router entropy) rather than fixed N or τ; analyze latency–accuracy trade-offs under these policies.

- Latency across systems: Evaluate TTMM’s overhead under varied hardware (multi-GPU, different interconnects, CPU–GPU bandwidths), batch sizes, and concurrent requests; study caching of merged adapters, prefetching, and overlapping I/O and compute at scale.

- Storage footprint and compression: Quantify CPU memory requirements as K scales; investigate adapter compression (quantization, sparsification, low-precision storage), deduplication, on-disk streaming, and their accuracy/latency trade-offs.

- Training cost accounting: Provide end-to-end compute, wall-clock, and I/O cost to train K experts versus a single fine-tune, including parallelization overhead and optimizer state; assess practicality at K=1k–10k and beyond.

- Generality across models and domains: Test TTMM with larger/instruction-tuned LLMs (e.g., 7B–70B), multilingual corpora, diverse code languages, and non-language modalities; expand to downstream tasks (QA, summarization, reasoning, safety) beyond perplexity.

- Stronger baselines and fair comparisons: Compare against optimized RAG, modern MoE with top‑k routing, dynamic ensembling with shared caches, and richer TTT variants (vary steps and neighbor counts) under matched compute budgets.

- Online reselection during generation: Investigate per-token or per-chunk re-merging policies, stability (avoid thrashing), hysteresis, and their effects on coherence and latency for long generations.

- Robustness to domain shift and OOD prompts: Measure failure modes when the selected experts poorly match the prompt; design uncertainty-aware selection, abstention/fallback to the base model, and mechanisms to detect “no suitable expert.”

- Privacy, data governance, and revocation: Assess risks of memorization/leakage within experts; develop methods to revoke or update experts when data must be removed, enforce per-user access controls, and track provenance/compliance during merging.

- Evaluation breadth and quality metrics: Go beyond perplexity to human preference, factuality, hallucination rates, code correctness (pass@k), calibration, and uncertainty; incorporate merged-parameter variance into confidence estimates.

- Failure analysis and diagnostics: Catalog cases where TTMM underperforms TTT or the base model; build tools to visualize expert coverage, overlap, and selection errors; relate failures to cluster shape, router confidence, and interference.

- Continual learning and maintenance: Define procedures to add/retire/refresh experts as data drifts, support incremental reclustering, and resolve conflicts between overlapping experts without degrading performance.

- Layer- and module-wise merging: Explore per-layer merging coefficients, treatment of biases and layer norms, and differential benefits across attention vs MLP blocks; identify which modules most benefit from TTMM.

- Parameter selection vs ensembling trade-offs: Study whether lightweight logit-level fusion (e.g., shared KV caches, partial ensembling) can close the small accuracy gap to ensembling without multiplicative runtime cost.

- Integration with RAG and MoE: Examine hybrid approaches that combine TTMM with retrieval conditioning or MoE gating; determine when parameter specialization (TTMM) outperforms context specialization (RAG), and how to best combine them.

Collections

Sign up for free to add this paper to one or more collections.