Reasoning Beyond Language: A Comprehensive Survey on Latent Chain-of-Thought Reasoning

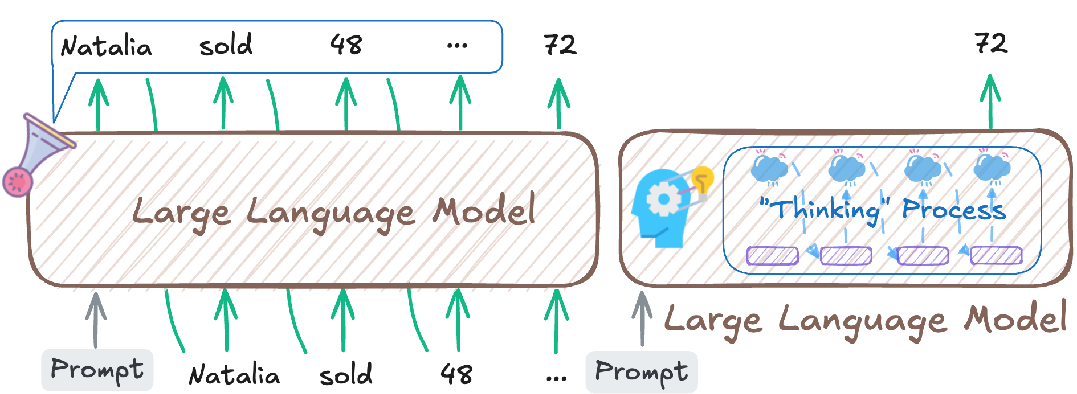

Abstract: LLMs have achieved impressive performance on complex reasoning tasks with Chain-of-Thought (CoT) prompting. However, conventional CoT relies on reasoning steps explicitly verbalized in natural language, introducing inefficiencies and limiting its applicability to abstract reasoning. To address this, there has been growing research interest in latent CoT reasoning, where inference occurs within latent spaces. By decoupling reasoning from language, latent reasoning promises richer cognitive representations and more flexible, faster inference. Researchers have explored various directions in this promising field, including training methodologies, structural innovations, and internal reasoning mechanisms. This paper presents a comprehensive overview and analysis of this reasoning paradigm. We begin by proposing a unified taxonomy from four perspectives: token-wise strategies, internal mechanisms, analysis, and applications. We then provide in-depth discussions and comparative analyses of representative methods, highlighting their design patterns, strengths, and open challenges. We aim to provide a structured foundation for advancing this emerging direction in LLM reasoning. The relevant papers will be regularly updated at https://github.com/EIT-NLP/Awesome-Latent-CoT.

Explain it Like I'm 14

Overview: What is this paper about?

This paper is a guided tour of a new way LLMs can “think.” Instead of making the model write out every step of its reasoning in sentences (like showing your work in math), it explores “latent chain-of-thought” reasoning—where the model does most of its thinking silently inside its hidden representations and only says the final answer. The authors explain why this could be faster, more flexible, and sometimes closer to how people think, then organize and compare all the main ideas, methods, and challenges in this growing research area.

Key questions the paper asks

- Can LLMs reason well without writing long explanations?

- How can we help models think “in their heads” efficiently and accurately?

- What kinds of tools and training strategies make silent reasoning work?

- How do we tell real reasoning apart from shortcuts or guesswork?

- Where could this silent reasoning be useful, and what are the risks?

How the authors organize and study the field

Think of the paper as a map of approaches to silent reasoning. It groups methods into a few big buckets and explains them with clear patterns and trade-offs.

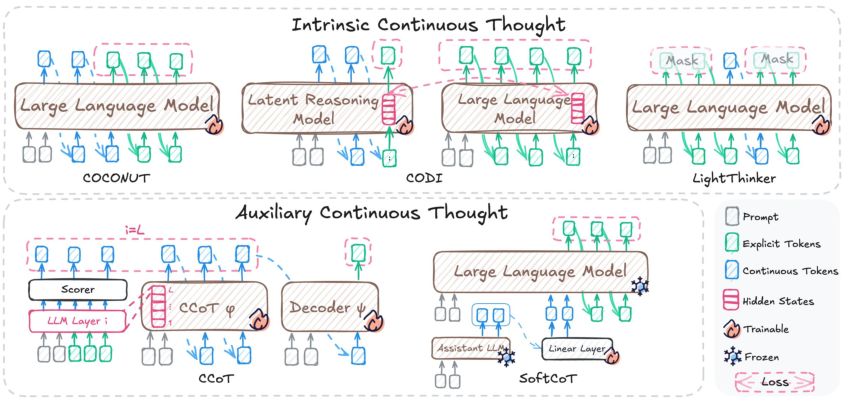

1) Token-wise strategies: adding special “thinking hints”

- Discrete tokens: These are special symbols (like [pause] or learned “thinking” markers) that tell the model to slow down, plan, or separate steps—like hand signals in a team sport. They can guide the model to use more internal computation before answering.

- Continuous tokens: Instead of visible symbols, these are smooth, learned “mini-thoughts” (vectors) the model passes to itself. You can think of them as short, compressed notes or mental sketches the model understands but doesn’t say out loud. Some methods create these notes inside the model; others use a helper module to produce them.
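
To make the two token-wise ideas concrete, here is a minimal toy sketch in plain Python. It is not any specific method from the survey: the token name `[pause]`, the function names, and the tiny `tanh` update that stands in for a real model's forward pass are all assumptions made for illustration. The first function shows the discrete idea (extra filler tokens buy extra computation), and the second shows the continuous idea (the hidden vector is fed straight back in as the next input instead of being turned into a word).

```python
import math

PAUSE = "[pause]"  # hypothetical special token; real methods choose their own markers


def add_pause_tokens(prompt_tokens, n_pauses):
    """Discrete strategy: append filler tokens so the model gets
    extra forward passes before it has to produce an answer."""
    return list(prompt_tokens) + [PAUSE] * n_pauses


def toy_step(state, x, w_state, w_in):
    """Toy stand-in for one forward pass of a model (assumption:
    a real system would use a transformer here, not a tanh update)."""
    return [math.tanh(w_state * s + w_in * xi) for s, xi in zip(state, x)]


def continuous_thoughts(input_embedding, n_thoughts, w_state=0.5, w_in=0.8):
    """Continuous strategy: feed the hidden state back in as the next
    input vector, so no intermediate step is ever decoded into text."""
    state = [0.0] * len(input_embedding)
    x = list(input_embedding)
    trace = []
    for _ in range(n_thoughts):
        state = toy_step(state, x, w_state, w_in)
        x = state  # the "thought" is the hidden vector itself
        trace.append(list(state))
    return trace
```

Calling `add_pause_tokens(["2", "+", "2", "="], 3)` returns the prompt followed by three pause markers, and `continuous_thoughts([0.1, -0.2], 4)` returns four hidden vectors, one per silent step. Only the last vector would be used to produce the visible answer.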

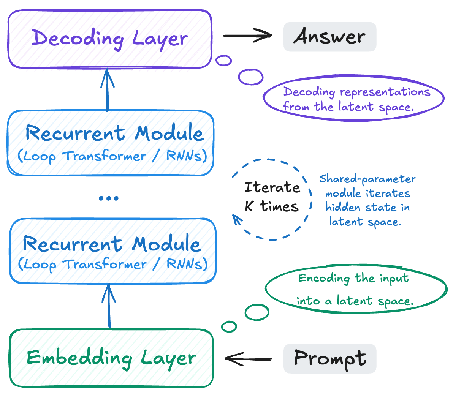

2) Internal mechanisms: changing how the model thinks inside

- Structural CoT: Adjust the model’s compute structure so it can loop or go “deeper” on the same input—like taking extra passes to refine an idea. This is a bit like re-reading a puzzle and improving your draft answer each time.

- Representational CoT: Train the model so that the hidden layers themselves store the “steps” of reasoning. No extra words—just packing the logic directly into its internal memory.
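
The "extra passes" idea behind structural CoT can be sketched with a toy loop. This is an illustration under stated assumptions, not an architecture taken from the survey: `block` is a hypothetical shared layer that nudges the hidden state halfway toward a target on each pass, and `looped_forward` reuses that same block several times instead of stacking new layers.

```python
import math


def block(h, x):
    """Hypothetical shared block: a residual update that moves the
    hidden state halfway toward tanh(x). The same parameters are
    reused on every pass (weight sharing)."""
    return [hi + 0.5 * (math.tanh(xi) - hi) for hi, xi in zip(h, x)]


def looped_forward(x, n_loops):
    """Run the same block n_loops times on the same input, so more
    loops mean more internal computation without more parameters."""
    h = [0.0] * len(x)
    for _ in range(n_loops):
        h = block(h, x)
    return h
```

In this toy, each pass halves the remaining gap to `tanh(x)`, so `looped_forward([1.0], 8)` lands much closer to the target than `looped_forward([1.0], 2)`. That mirrors the re-reading analogy: the input stays the same, and the draft answer improves with each pass.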

3) Analysis and interpretability: checking what’s really going on

Researchers probe models to see if they truly reason internally or if they jump to answers using shortcuts. They look for signals in hidden states, test for “shortcut” patterns, and even find “directions” in activation space that can switch on more step-by-step thinking.
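
One common way to find such a "direction" is a difference of means between two sets of hidden activations. The sketch below assumes that approach. It is not confirmed to be the exact technique used in the surveyed papers, and the function names and the tiny two-dimensional activations in the usage note are made up for illustration.

```python
import math


def mean_vector(vectors):
    """Average a list of equal-length activation vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def steering_direction(reasoning_acts, direct_acts):
    """Difference of means: activations recorded during step-by-step
    answers minus activations recorded during direct answers."""
    mu_r = mean_vector(reasoning_acts)
    mu_d = mean_vector(direct_acts)
    return [r - d for r, d in zip(mu_r, mu_d)]


def probe_score(hidden, direction):
    """Project a hidden state onto the (normalized) direction.
    A larger score means the state looks more like 'step-by-step' mode."""
    norm = math.sqrt(sum(d * d for d in direction))
    return sum(h * d for h, d in zip(hidden, direction)) / norm


def steer(hidden, direction, alpha):
    """Push a hidden state along the direction by strength alpha."""
    return [h + alpha * d for h, d in zip(hidden, direction)]
```

With made-up activations `[[1.0, 0.0], [3.0, 0.0]]` for step-by-step answers and `[[0.0, 0.0], [0.0, 2.0]]` for direct answers, the direction is `[2.0, -1.0]`. Steering a hidden state along it raises its `probe_score`, which is the toy version of "switching on" more step-by-step thinking.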

4) Applications

These ideas are being tried in:

- Text reasoning (math, logic, commonsense)

- Multimodal tasks (images + text, speech + text), where describing every step in words is clunky

- Search and recommendation, where compact internal “thoughts” can speed up retrieval and ranking

Main findings and why they matter

- Silent reasoning is real and useful: Many methods cut token usage and latency by reasoning in hidden space instead of writing long explanations, and some also improve accuracy.

- Two big toolkits help:

  - Special tokens (discrete or continuous) that act like compact “thought handles”

  - Architectural changes (more depth or loops) that let the model refine its answer internally

- But there are caveats:

  - Models sometimes use shortcuts: they may “guess” from patterns instead of truly reasoning. That means a correct answer isn’t always proof of real thinking.

  - Training is hard: It’s easier to supervise written steps than invisible ones. Getting models to learn robust internal reasoning that generalizes is tricky.

  - Interpretability and safety: If the thinking is hidden, it’s harder to inspect, debug, or ensure it’s aligned with human values.

Why this matters: If done well, latent chain-of-thought can keep or even improve quality while making models faster and cheaper to use. It can also capture non-verbal, abstract thinking that plain language can’t always express.

What this could change going forward

- Faster, more capable assistants: Shorter outputs with the same or better accuracy—useful for apps that need quick, low-cost answers.

- Better architectures: Looping or recurrent styles may become standard for reasoning, giving models built-in “time to think.”

- New tools for trust: Better ways to detect shortcuts, verify internal steps, and steer hidden reasoning will be key for safety and reliability.

- Stronger training strategies: Reinforcement learning and curriculum learning could shape internal reasoning without relying on long written chains.

- Broader impact: Multimodal systems (like vision+language) and AI agents (planners, debaters, recommenders) can benefit from compact, internal thought, making them more efficient and scalable.

In short, this survey shows how moving “beyond language” to hidden, compact thoughts can make AI both smarter and more practical—while also highlighting the need for better training and clearer ways to check what the model is really thinking.
