- The paper introduces TRACE, a framework that detects the phase transitions at which transformers shift from memorization to abstract semantic representations.

- It employs geometric measures, like intrinsic dimensionality and curvature, alongside linguistic probes to analyze model training dynamics.

- Findings reveal that transformer components, such as feed-forward networks and attention heads, play critical roles in stabilizing abstraction and syntactic alignment.

Understanding TRACE: A Framework for Semantic Representation Tracking

Introduction to TRACE

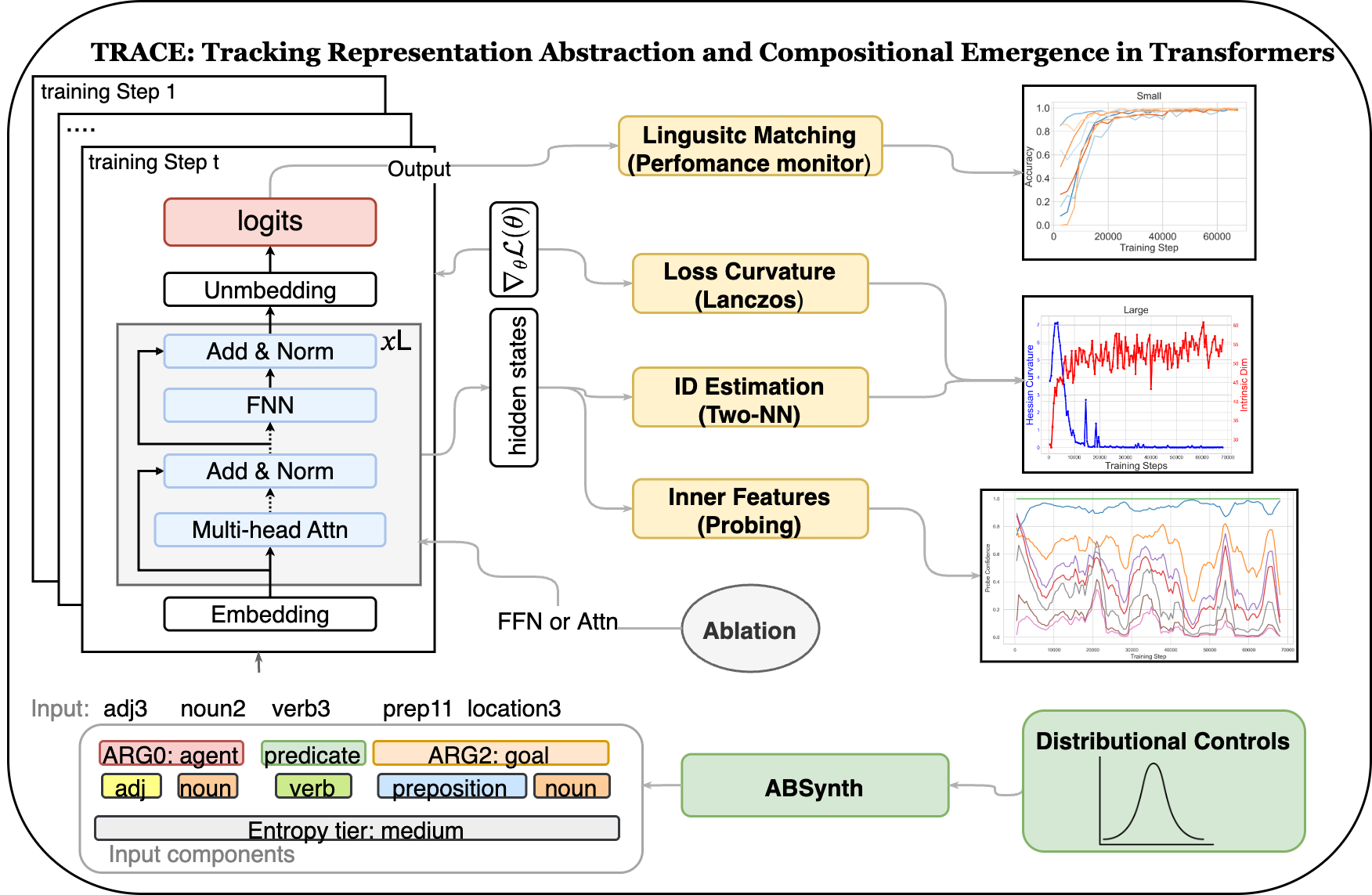

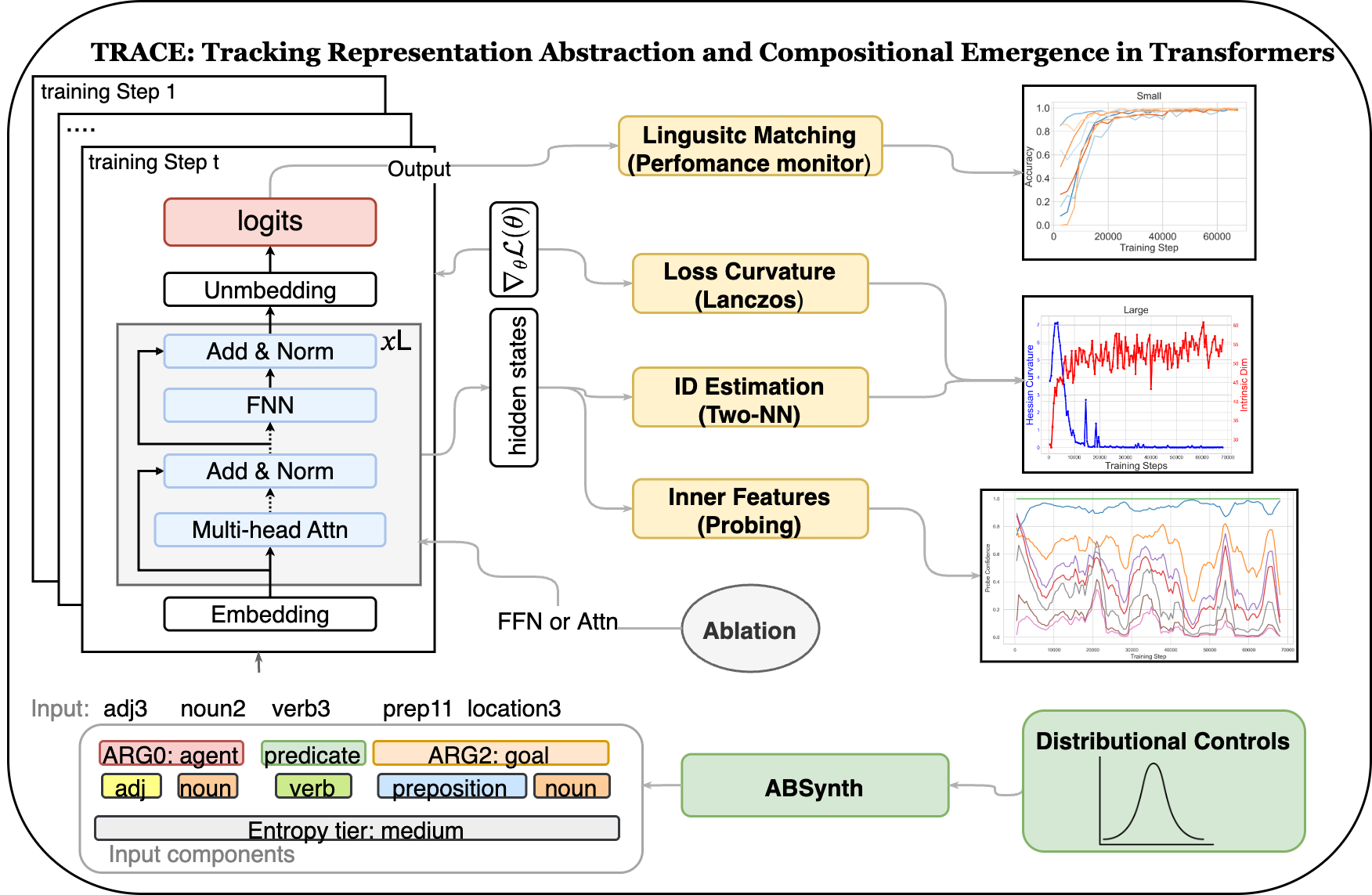

The paper "TRACE for Tracking the Emergence of Semantic Representations in Transformers" introduces TRACE, a diagnostic framework for analyzing how abstraction emerges in transformer models. It combines geometric, informational, and linguistic signals to detect phase transitions: reorganization points during training at which a transformer shifts from memorizing its input data to forming abstract representations. The study also presents ABSynth, a synthetic corpus generation framework grounded in frame semantics, which enables precise examination of how linguistic abstractions arise within transformer models.
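To make "frame semantics" concrete, here is a minimal sketch of a frame-based sentence generator that emits a semantic-role label for every token. The frame inventory (`FRAMES`), the toy lexicon, and the `generate_corpus` helper are hypothetical illustrations; ABSynth's actual frames, vocabulary, and complexity controls are not described in this summary.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class Frame:
    """Hypothetical frame: a verb set plus the semantic roles it realizes, in surface order."""
    name: str
    verbs: tuple
    roles: tuple

FRAMES = (
    Frame("Transfer", ("gives", "sends"), ("Agent", "Predicate", "Recipient", "Theme")),
    Frame("Motion", ("walks", "runs"), ("Agent", "Predicate", "Goal")),
)

# Hypothetical role fillers; a real generator would also control vocabulary size and frequency.
LEXICON = {
    "Agent": ("alice", "bob"),
    "Recipient": ("carol", "dave"),
    "Theme": ("book", "letter"),
    "Goal": ("home", "school"),
}

def generate_corpus(n, seed=0):
    """Return n (tokens, role_labels) pairs with exactly one role label per token."""
    rng = random.Random(seed)
    corpus = []
    for _ in range(n):
        frame = rng.choice(FRAMES)
        tokens, labels = [], []
        for role in frame.roles:
            word = rng.choice(frame.verbs) if role == "Predicate" else rng.choice(LEXICON[role])
            tokens.append(word)
            labels.append(role)
        corpus.append((tokens, labels))
    return corpus

for tokens, labels in generate_corpus(3, seed=42):
    print(" ".join(tokens), "|", " ".join(labels))
```

Because every token carries a gold role label by construction, probes trained on such data have no annotation noise, which is the kind of transparency the summary attributes to ABSynth.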

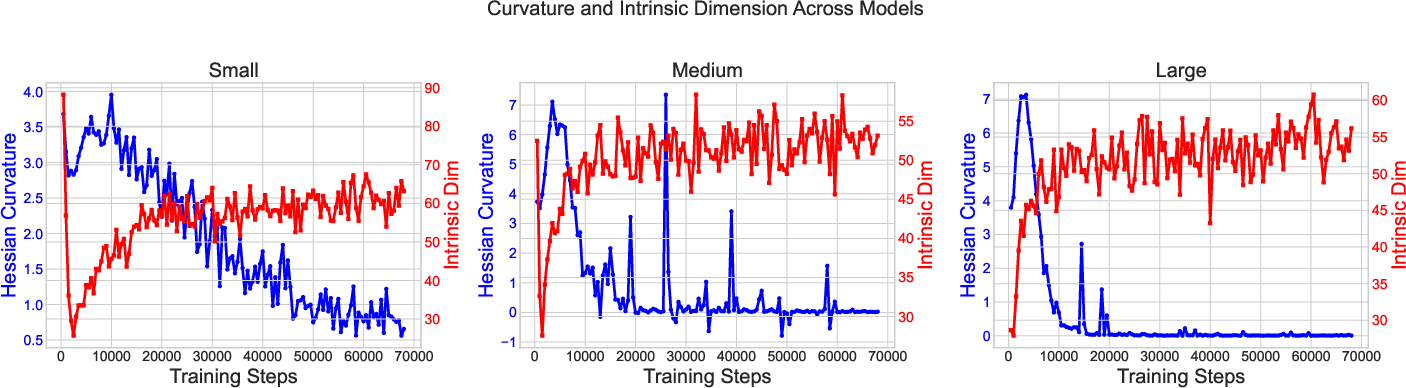

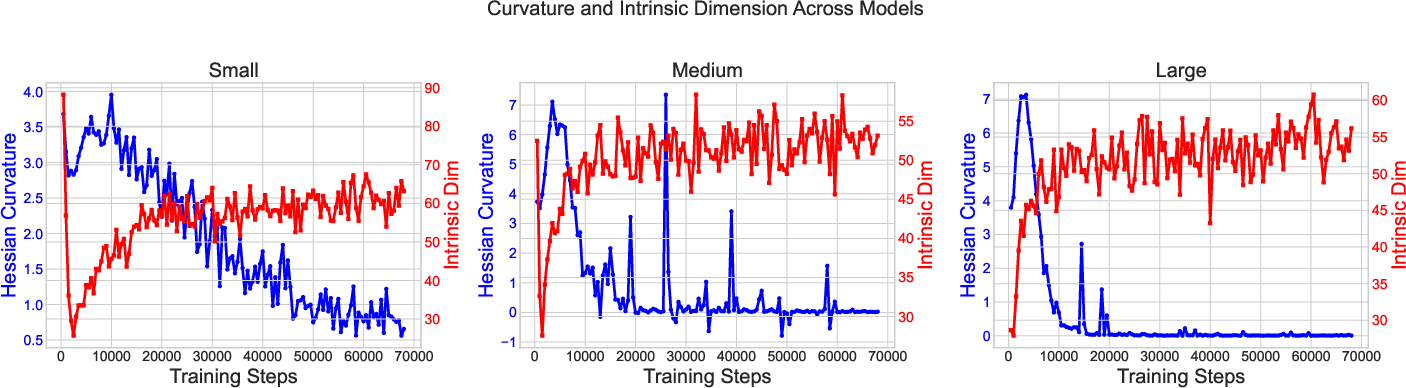

TRACE identifies a characteristic pattern of phase transitions during training. These transitions are marked by clear geometric changes, including a rise followed by stabilization in intrinsic dimensionality and spikes in loss curvature. Such shifts are synchronized with improvements in syntactic and semantic accuracy. Key observations include:

- Dimensionality and Curvature Dynamics: As models train, intrinsic dimensionality initially increases as they accommodate entangled features, then stabilizes or decreases as abstraction phases emerge. Curvature dynamics show transient spikes, indicating phases of structural reorganization before the model settles into efficient generalization patterns.
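Intrinsic dimensionality can be estimated from a layer's hidden states at each checkpoint. The sketch below uses the TwoNN estimator (Facco et al., 2017), which needs only each point's first and second nearest-neighbor distances. This is a common choice swapped in for illustration; the summary does not say which estimator TRACE uses, and the toy points on a 2-D plane embedded in 6-D merely stand in for hidden states.

```python
import math
import random

def twonn_intrinsic_dimension(points):
    """TwoNN maximum-likelihood estimate: d = N / sum_i log(r2_i / r1_i)."""
    log_ratios = []
    for i, p in enumerate(points):
        # Distances from p to every other point, nearest first (O(N^2) overall).
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        r1, r2 = dists[0], dists[1]
        if r1 > 0:  # skip exact duplicates, where the ratio is undefined
            log_ratios.append(math.log(r2 / r1))
    return len(log_ratios) / sum(log_ratios)

# Toy "hidden states": 400 points on a 2-D plane linearly embedded in 6-D.
rng = random.Random(0)
points = []
for _ in range(400):
    u, v = rng.random(), rng.random()
    points.append((u, v, u + v, u - v, 2 * u, 0.0))

print(f"estimated intrinsic dimension: {twonn_intrinsic_dimension(points):.2f}")
```

In a TRACE-style analysis one would presumably compute this per layer at each saved checkpoint and look for the rise-then-stabilize pattern described above.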

Figure 1: Coordinated dynamics of Hessian Curvature Score (blue) and Average Intrinsic Dimension (red) across training steps for different model architectures.
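The rise-then-stabilize pattern in intrinsic dimension and the transient curvature spikes suggest a simple way to flag candidate transitions in logged metric series. The sketch below is a hypothetical heuristic, not TRACE's detection rule: `curvature_spikes` flags steps whose curvature exceeds the trailing-window mean by `z_thresh` standard deviations, and `stabilization_step` returns the first step from which intrinsic dimension varies by less than `rel_tol` of its mean within a window. Window sizes, thresholds, and the toy series are assumptions.

```python
import statistics

def curvature_spikes(series, window=5, z_thresh=3.0):
    """Indices whose value exceeds the trailing-window mean by z_thresh standard deviations."""
    spikes = []
    for t in range(window, len(series)):
        past = series[t - window:t]
        mu, sd = statistics.fmean(past), statistics.pstdev(past)
        if sd > 0 and (series[t] - mu) / sd > z_thresh:
            spikes.append(t)
    return spikes

def stabilization_step(series, window=5, rel_tol=0.02):
    """First index t such that series[t:t+window] has range below rel_tol of its mean."""
    for t in range(len(series) - window + 1):
        chunk = series[t:t + window]
        mu = statistics.fmean(chunk)
        if mu != 0 and (max(chunk) - min(chunk)) / abs(mu) < rel_tol:
            return t
    return None  # never stabilized within the logged steps

# Toy logged metrics, one value per checkpoint.
curvature = [1.0, 1.1, 0.9, 1.0, 1.1, 0.9, 5.0, 1.0, 1.1, 0.9, 1.0, 1.05]
intrinsic_dim = [2.0, 3.0, 4.5, 6.0, 7.0, 7.4, 7.5, 7.45, 7.5, 7.48, 7.5, 7.49]

print("curvature spikes at:", curvature_spikes(curvature))
print("intrinsic dimension stabilizes from:", stabilization_step(intrinsic_dim))
```

A spike that occurs close to the stabilization step would be a candidate phase transition; checking whether probe accuracy also jumps there is the kind of cross-signal alignment the summary describes.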

Architectural Influence on Abstraction

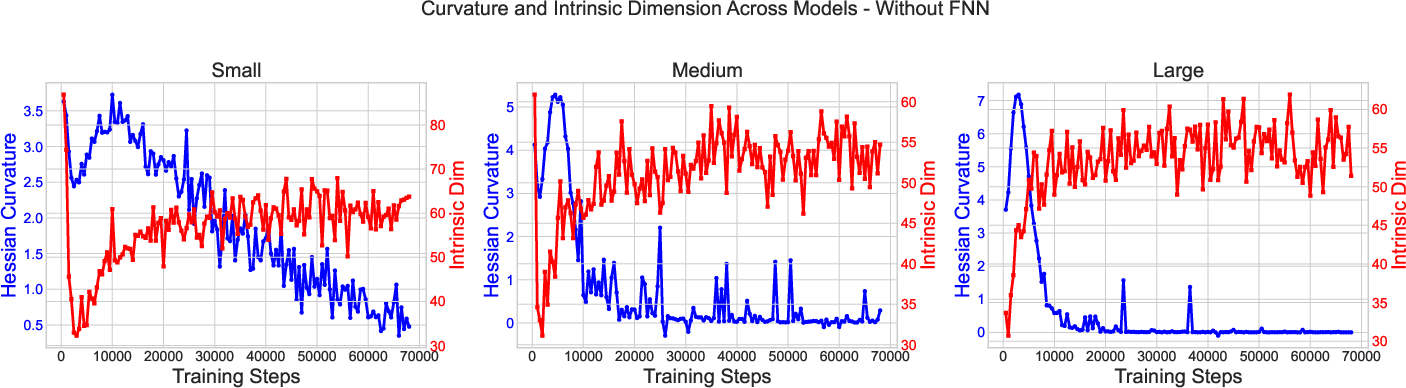

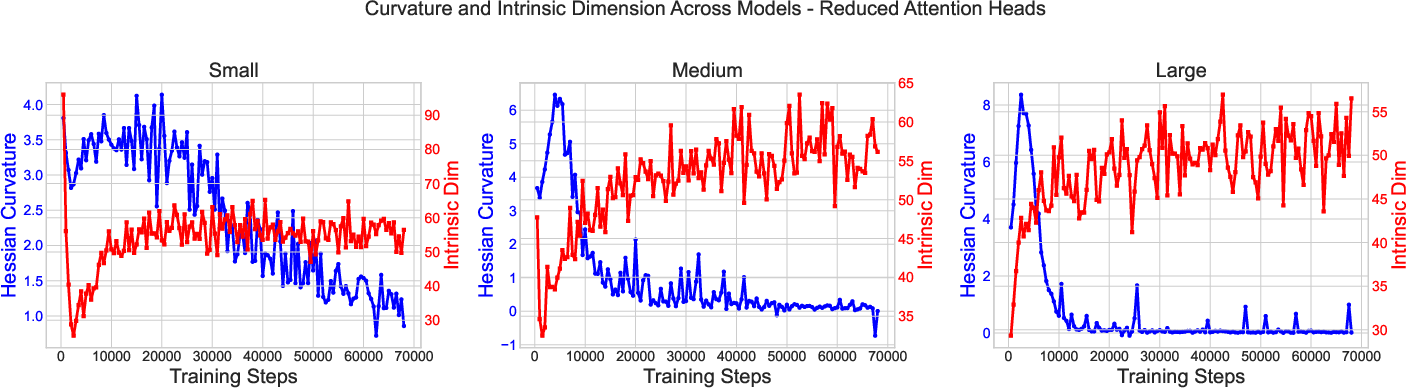

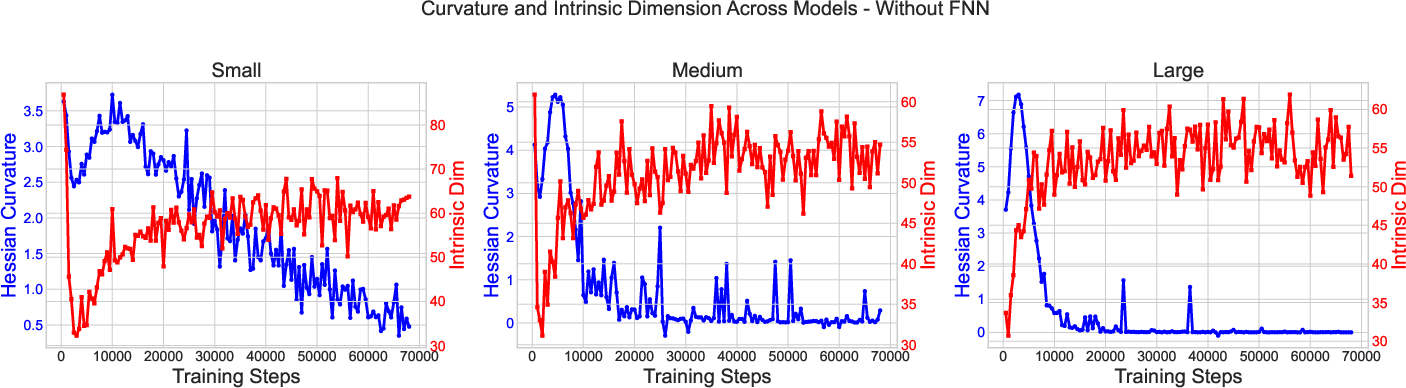

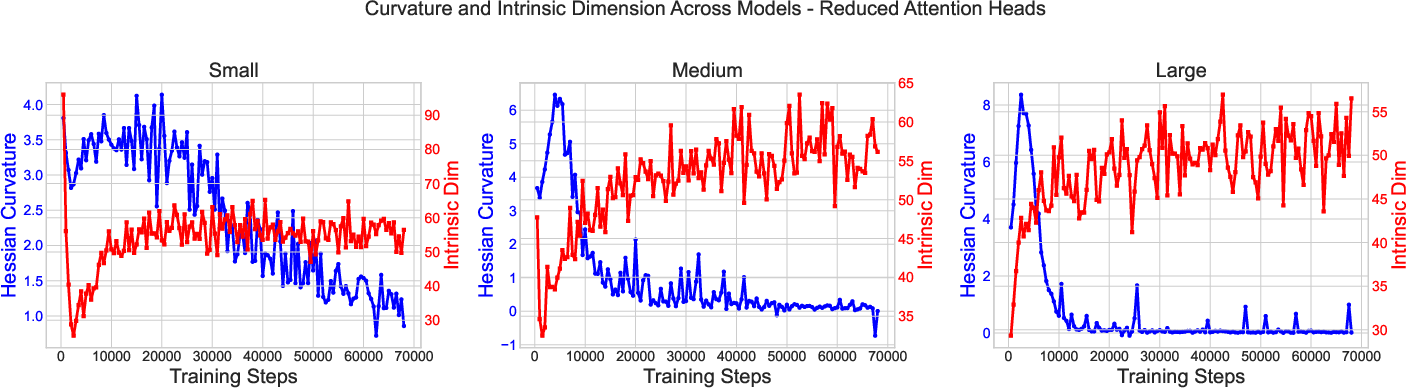

The paper examines how architectural modifications affect abstraction dynamics. Ablations of key transformer components such as feed-forward networks and attention heads provide insights into their roles:

- Feed-Forward Networks: Their removal increases curvature volatility and causes persistent oscillations in medium and small models, underscoring their role in smoothing optimization and supporting the stable development of abstract representations.

- Attention Heads: Reducing the number of attention heads affects models differently depending on their size: smaller models experience delayed phase transitions and lower representational complexity, whereas larger models retain their abstraction capacity at the cost of minor stability trade-offs.
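The two ablations can be pictured as switches on a single transformer block. Below is a minimal, dependency-free sketch in which `use_ffn=False` drops the feed-forward sublayer and `n_heads` controls how the model dimension is split across attention heads. The `Block` class, its random initialization, and the omission of layer normalization and causal masking are simplifications for illustration; the paper's actual architectures and ablation protocol may differ.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def softmax(row):
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def rand_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0 / math.sqrt(rows)) for _ in range(cols)] for _ in range(rows)]

class Block:
    """Residual multi-head attention plus optional FFN; LayerNorm and masking omitted."""

    def __init__(self, d_model, n_heads, use_ffn=True, d_ff=None, seed=0):
        assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
        rng = random.Random(seed)
        self.n_heads, self.d_head, self.use_ffn = n_heads, d_model // n_heads, use_ffn
        self.wq, self.wk, self.wv, self.wo = (rand_matrix(d_model, d_model, rng) for _ in range(4))
        if use_ffn:
            d_ff = d_ff or 4 * d_model
            self.w1 = rand_matrix(d_model, d_ff, rng)
            self.w2 = rand_matrix(d_ff, d_model, rng)

    def attention(self, x):
        q, k, v = matmul(x, self.wq), matmul(x, self.wk), matmul(x, self.wv)
        concat = [[] for _ in x]
        for h in range(self.n_heads):
            cols = slice(h * self.d_head, (h + 1) * self.d_head)  # this head's subspace
            qh, kh, vh = ([row[cols] for row in m] for m in (q, k, v))
            scores = [[sum(a * b for a, b in zip(qi, kj)) / math.sqrt(self.d_head) for kj in kh]
                      for qi in qh]
            out = matmul([softmax(r) for r in scores], vh)
            for i, row in enumerate(out):
                concat[i].extend(row)
        return matmul(concat, self.wo)

    def __call__(self, x):
        x = add(x, self.attention(x))  # attention sublayer with residual connection
        if self.use_ffn:
            hidden = [[max(0.0, val) for val in row] for row in matmul(x, self.w1)]  # ReLU
            x = add(x, matmul(hidden, self.w2))  # FFN sublayer with residual connection
        return x

    def n_params(self):
        """Weight count, ignoring biases."""
        total = 4 * len(self.wq) ** 2
        if self.use_ffn:
            total += 2 * len(self.w1) * len(self.w1[0])
        return total

rng = random.Random(1)
x = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(5)]  # 5 tokens, d_model = 8
for cfg in (dict(n_heads=4), dict(n_heads=4, use_ffn=False), dict(n_heads=1)):
    block = Block(d_model=8, **cfg)
    y = block(x)
    print(cfg, "params:", block.n_params(), "output shape:", (len(y), len(y[0])))
```

Because `d_head = d_model / n_heads` here, changing the head count leaves the parameter count unchanged and only re-partitions attention; an ablation that removes heads outright would instead shrink the projections.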

Emerging Linguistic Patterns

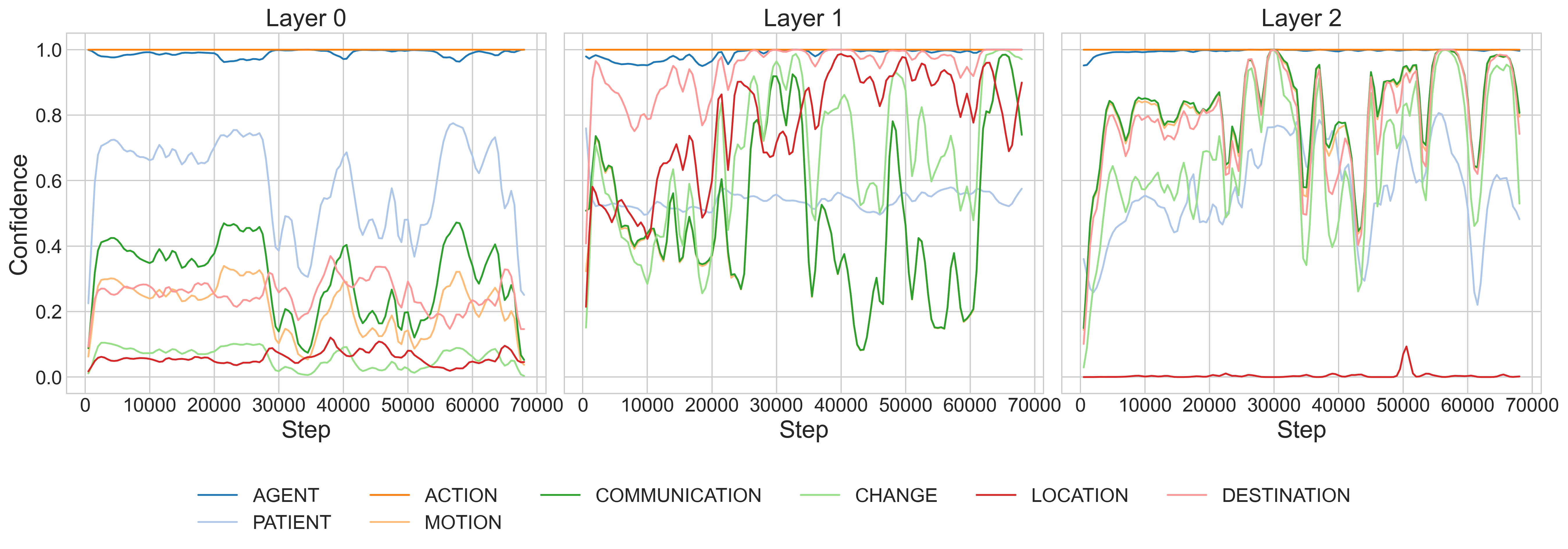

Aligning linguistic signals with the geometric shifts reveals how semantic and syntactic categories emerge.

ABSynth supplies the synthetic corpus for this analysis. Because it avoids the confounds of natural data and offers controlled complexity with transparent annotation, it enables precise tracking of linguistic abstractions.
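Per-token annotations like ABSynth's make it possible to train linguistic probes on hidden states. The sketch below trains a multinomial logistic-regression (linear) probe with full-batch gradient descent and reports held-out accuracy; tracking that accuracy across checkpoints is one way to line linguistic signals up with geometric ones. The probe type, hyperparameters, and the Gaussian toy features standing in for hidden states are assumptions, not details from the paper.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_linear_probe(feats, labels, epochs=200, lr=0.5):
    """Fit softmax regression (W x + b) by full-batch gradient descent on cross-entropy."""
    classes = sorted(set(labels))
    index = {c: i for i, c in enumerate(classes)}
    n, d, k = len(feats), len(feats[0]), len(classes)
    W = [[0.0] * d for _ in range(k)]
    b = [0.0] * k
    for _ in range(epochs):
        gW = [[0.0] * d for _ in range(k)]
        gb = [0.0] * k
        for x, y in zip(feats, labels):
            p = softmax([sum(w * xi for w, xi in zip(W[c], x)) + b[c] for c in range(k)])
            for c in range(k):
                err = p[c] - (1.0 if c == index[y] else 0.0)  # d(loss)/d(logit_c)
                gb[c] += err / n
                for j in range(d):
                    gW[c][j] += err * x[j] / n
        for c in range(k):
            b[c] -= lr * gb[c]
            for j in range(d):
                W[c][j] -= lr * gW[c][j]
    return classes, W, b

def probe_accuracy(probe, feats, labels):
    classes, W, b = probe
    correct = 0
    for x, y in zip(feats, labels):
        scores = [sum(w * xi for w, xi in zip(W[c], x)) + b[c] for c in range(len(classes))]
        correct += classes[scores.index(max(scores))] == y
    return correct / len(labels)

# Toy "hidden states": one Gaussian cluster per semantic role.
rng = random.Random(0)
centers = {"Agent": (2, 0, 0, 0), "Predicate": (0, 2, 0, 0), "Theme": (0, 0, 2, 0)}

def sample(n_per_role):
    data = [(tuple(c + rng.gauss(0.0, 0.5) for c in centers[role]), role)
            for role in centers for _ in range(n_per_role)]
    rng.shuffle(data)
    return [f for f, _ in data], [r for _, r in data]

train_x, train_y = sample(50)
test_x, test_y = sample(20)
probe = train_linear_probe(train_x, train_y)
print(f"held-out probe accuracy: {probe_accuracy(probe, test_x, test_y):.2f}")
```

A linear probe is deliberately weak: high accuracy suggests the category is linearly decodable from the representation rather than computed by the probe itself.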

- Mutual Information Instability: Although theoretically insightful, mutual information failed to consistently mark phase transitions due to its volatility and lack of alignment with other measurable signals.
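For reference, a plug-in mutual information estimate between discretized activations and linguistic labels takes only a few lines. The sketch also exposes a known weakness of the plug-in estimator: with few samples per bin it is biased upward, so the estimate depends on the bin count even when activations and labels are independent. This illustrates one plausible source of instability only; the summary does not state which MI estimator TRACE used or why it was volatile, and `bin_values` and the random toy data are hypothetical.

```python
import math
import random
from collections import Counter

def plugin_mutual_information(xs, ys):
    """Plug-in estimate of I(X; Y) in nats from paired discrete samples."""
    n = len(xs)
    joint, px, py = Counter(zip(xs, ys)), Counter(xs), Counter(ys)
    # p(x,y) / (p(x) p(y)) simplifies to c * n / (count(x) * count(y)).
    return sum((c / n) * math.log(c * n / (px[x] * py[y])) for (x, y), c in joint.items())

def bin_values(values, n_bins):
    """Equal-width binning of a continuous activation into integer codes 0..n_bins-1."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins or 1.0  # guard against constant input
    return [min(int((v - lo) / width), n_bins - 1) for v in values]

# Independent toy data: the true MI is 0, yet finer bins inflate the estimate.
rng = random.Random(0)
activations = [rng.random() for _ in range(200)]
roles = [rng.choice(["Agent", "Theme", "Goal"]) for _ in range(200)]
for n_bins in (2, 10, 50):
    mi = plugin_mutual_information(bin_values(activations, n_bins), roles)
    print(f"{n_bins:>2} bins: MI = {mi:.3f} nats")
```

Since these equal-width partitions are nested, refining the bins can only raise the plug-in estimate, which is why it drifts upward with bin count on independent data.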

Figure 3: Overview of the TRACE framework integrating various monitoring aspects, including intrinsic dimensionality, spectral curvature, and linguistic alignment.
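Spectral curvature is commonly summarized by the largest Hessian eigenvalue of the loss, which can be estimated without forming the Hessian by running power iteration on Hessian-vector products. The sketch below approximates each product with a central finite difference of gradients and checks the result on a toy quadratic loss whose Hessian is known. Treating TRACE's Hessian Curvature Score as this top eigenvalue is an assumption; in practice the gradients would come from autodiff on a minibatch, and `grad_fn` here is a hypothetical stand-in.

```python
import math
import random

def hvp_fd(grad_fn, w, v, eps=1e-4):
    """Approximate H(w) @ v as (g(w + eps v) - g(w - eps v)) / (2 eps)."""
    g_plus = grad_fn([wi + eps * vi for wi, vi in zip(w, v)])
    g_minus = grad_fn([wi - eps * vi for wi, vi in zip(w, v)])
    return [(a - b) / (2 * eps) for a, b in zip(g_plus, g_minus)]

def top_hessian_eigenvalue(grad_fn, w, iters=100, seed=0):
    """Power iteration; converges to the eigenvalue of largest magnitude (may be negative)."""
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in w]
    norm = math.sqrt(sum(x * x for x in v))
    v = [x / norm for x in v]
    eigenvalue = 0.0
    for _ in range(iters):
        hv = hvp_fd(grad_fn, w, v)
        eigenvalue = sum(a * b for a, b in zip(v, hv))  # v is unit-norm, so this is v^T H v
        norm = math.sqrt(sum(x * x for x in hv))
        if norm == 0.0:
            break
        v = [x / norm for x in hv]
    return eigenvalue

# Toy loss L(w) = 0.5 * w^T A w, so grad = A w and the Hessian is A.
A = [[4.0, 1.0, 0.0],
     [1.0, 3.0, 0.0],
     [0.0, 0.0, 1.0]]

def grad_fn(w):
    return [sum(a * x for a, x in zip(row, w)) for row in A]

estimate = top_hessian_eigenvalue(grad_fn, [0.3, -0.2, 0.5])
print(f"power iteration: {estimate:.4f}, exact: {(7 + math.sqrt(5)) / 2:.4f}")
```

The exact value follows from the characteristic polynomial of the upper 2x2 block, $\lambda^2 - 7\lambda + 11 = 0$, whose larger root is $(7 + \sqrt{5})/2 \approx 4.618$.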

Conclusion and Implications

TRACE advances the understanding of how abstraction emerges in LLMs, offering a principled approach to diagnosing phase transitions. These insights can inform the design of more interpretable, efficient, and generalizable LLMs and could reshape how LLMs are developed. Future work may extend TRACE to real-world data and integrate it with mechanistic interpretability tools.

The presented framework provides a robust basis for future analysis of LLMs, particularly in contexts requiring detailed interpretability and phase transition detection for enhanced training efficiency.