- The paper presents a systematic survey categorizing LLM planning methods into external module augmented, finetuning-based, and searching-based strategies.

- It details evaluation approaches using diverse datasets and metrics, underscoring the influence of CoT reasoning and feedback mechanisms on planning performance.

- The survey highlights future research directions with a focus on reinforcement learning integration and developing lightweight models for edge deployment.

LLMs for Planning: A Comprehensive and Systematic Survey

Introduction

Large language models (LLMs) have demonstrated significant capabilities in natural language processing and have been applied to a range of complex tasks, including planning. Planning is a form of sequential decision-making: an agent must analyze its environment, reason logically, and devise a sequence of actions that achieves a desired objective. This paper provides a systematic survey of LLM-based planning. It aims to bridge the gap between the potential of LLMs and the complexity of real-world planning tasks by categorizing methodologies, analyzing evaluation techniques, and discussing future directions.

LLM-based Planning Methodologies

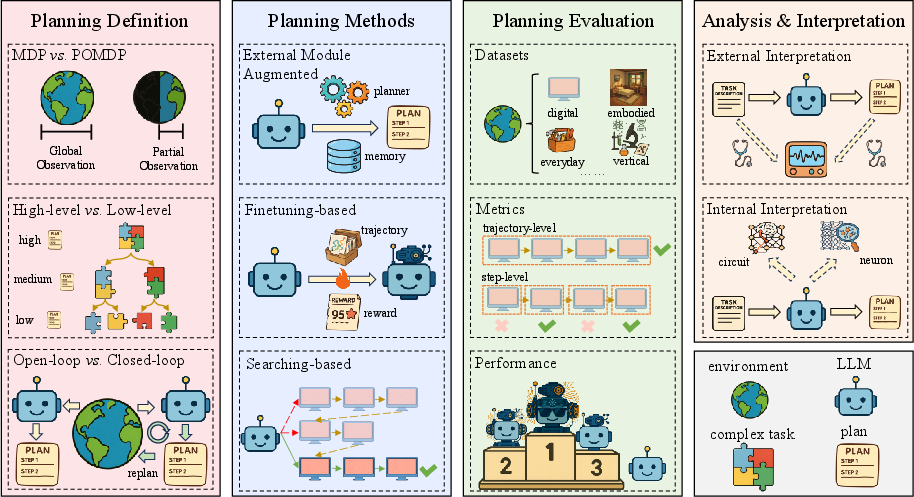

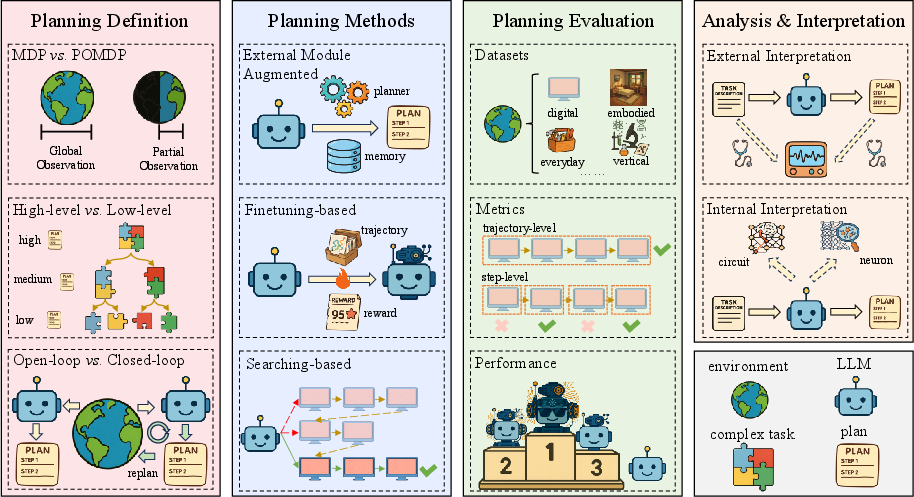

LLM-based planning methods fall into three main categories, as depicted in Figure 1: External Module Augmented Methods, Finetuning-based Methods, and Searching-based Methods. Each offers a distinct strategy for enhancing the planning capabilities of LLMs.

Figure 1: An overview of LLM-based agent planning, covering its definition, methods, evaluation approaches, and analysis and interpretation.

External Module Augmented Methods

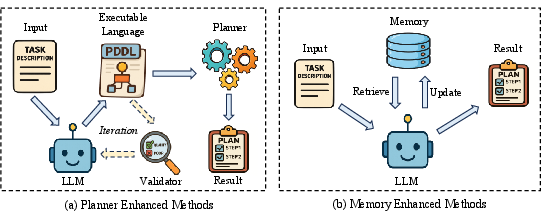

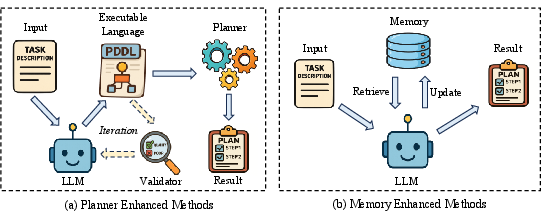

This approach integrates LLMs with external components that supply structured reasoning and long-term memory. Planner Enhanced Methods translate natural language descriptions into executable planning languages such as PDDL (Planning Domain Definition Language) and pass the result to a symbolic planner. In contrast, Memory Enhanced Methods equip LLMs with memory mechanisms that store and recall previous interactions, which is pivotal for making informed decisions (Figure 2).

Figure 2: The illustration of external module augmented methods, which includes planner enhanced methods and memory enhanced methods.
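The two ideas above can be illustrated with a minimal sketch. It is not taken from the survey: the function `to_pddl_problem`, the class `EpisodicMemory`, and the word-overlap retrieval rule are all hypothetical names and choices made for illustration. In a real planner enhanced pipeline an LLM would extract the facts from natural language and a symbolic planner would consume the resulting PDDL; here the facts are passed in directly and no planner is called.

```python
from dataclasses import dataclass, field


def to_pddl_problem(name, domain, objects, init, goal):
    """Render a PDDL problem string from already-extracted facts.

    Assumption: an LLM has already turned the natural language task into
    these lists; this function only handles the formatting step.
    """
    init_str = " ".join(f"({fact})" for fact in init)
    goal_str = " ".join(f"({fact})" for fact in goal)
    return (
        f"(define (problem {name})\n"
        f"  (:domain {domain})\n"
        f"  (:objects {' '.join(objects)})\n"
        f"  (:init {init_str})\n"
        f"  (:goal (and {goal_str})))"
    )


@dataclass
class EpisodicMemory:
    """Toy memory module: stores past interactions, recalls by word overlap."""

    entries: list = field(default_factory=list)

    def store(self, text):
        self.entries.append(text)

    def recall(self, query, k=1):
        # Rank stored entries by how many words they share with the query.
        query_words = set(query.lower().split())
        ranked = sorted(
            self.entries,
            key=lambda e: len(query_words & set(e.lower().split())),
            reverse=True,
        )
        return ranked[:k]


problem = to_pddl_problem(
    name="stack-two",
    domain="blocksworld",
    objects=["a", "b"],
    init=["ontable a", "ontable b", "clear a", "clear b", "handempty"],
    goal=["on a b"],
)

memory = EpisodicMemory()
memory.store("the red key opens the kitchen door")
memory.store("the garden gate is locked at night")
recalled = memory.recall("which key opens the door")
```

Practical memory modules typically retrieve by embedding similarity rather than word overlap; the overlap rule is used here only to keep the sketch dependency-free.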

Finetuning-based Methods

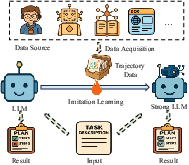

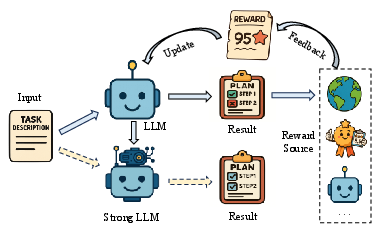

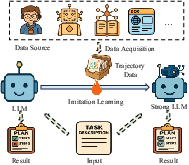

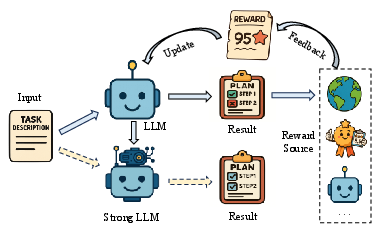

Finetuning-based methods adapt LLMs to specific planning tasks by updating their parameters through imitation learning or feedback signals. Imitation Learning-based Methods train models on expert trajectory data or self-generated data to mimic desirable behaviors (Figure 3). Feedback-based Methods use environmental or model-based feedback to guide parameter updates incrementally, improving decision-making precision (Figure 4).

Figure 3: The illustration of imitation learning-based methods that use trajectory data to update the parameters of LLMs for enhancing their planning capabilities.

Figure 4: The illustration of feedback-based methods that employ the feedback as the signal to guide the update of LLMs' parameters.
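As a rough illustration of the data side of these methods, the sketch below turns a trajectory into supervised (prompt, target) pairs and filters trajectories by a scalar feedback score. The prompt template, the `reward` field, and both function names are assumptions made for this example. The filtering step is a simple stand-in for feedback-based training, not the reinforcement learning style updates that such methods may use, and no model is actually finetuned here.

```python
def trajectory_to_examples(task, steps):
    """Turn one trajectory into (prompt, target) pairs, one per step.

    `steps` is a list of (observation, action) tuples. Each prompt holds
    the task plus the full history so far; the target is the next action.
    """
    examples = []
    history = []
    for observation, action in steps:
        history.append(f"Observation: {observation}")
        prompt = f"Task: {task}\n" + "\n".join(history) + "\nAction:"
        examples.append((prompt, " " + action))
        history.append(f"Action: {action}")
    return examples


def filter_by_feedback(trajectories, threshold):
    """Keep only trajectories whose feedback score meets the threshold.

    Assumption: each trajectory is a dict with a numeric "reward" key
    supplied by the environment or by a scoring model.
    """
    return [t for t in trajectories if t["reward"] >= threshold]


expert_steps = [
    ("kettle is empty", "fill kettle"),
    ("kettle is full", "boil water"),
]
examples = trajectory_to_examples("make tea", expert_steps)

sampled = [
    {"task": "make tea", "steps": expert_steps, "reward": 1.0},
    {"task": "make tea", "steps": [("kettle is empty", "boil water")], "reward": 0.0},
]
kept = filter_by_feedback(sampled, threshold=0.5)
```

The resulting pairs could be passed to any supervised finetuning routine; which trainer and loss to use is left open.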

Searching-based Methods

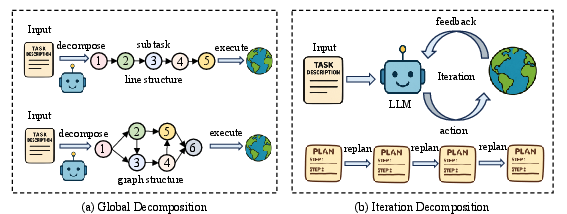

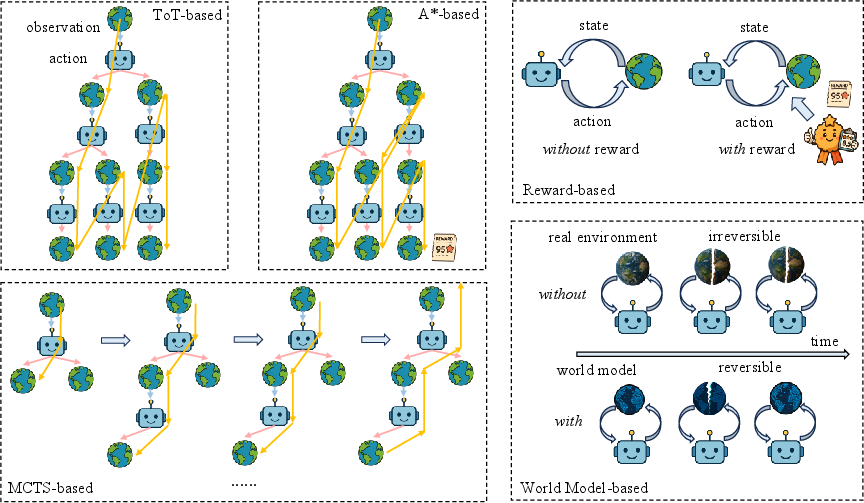

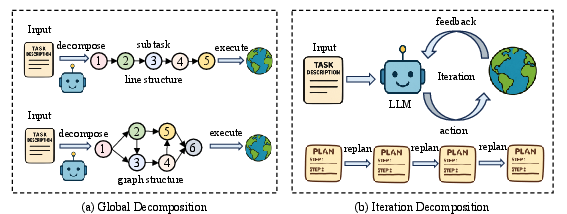

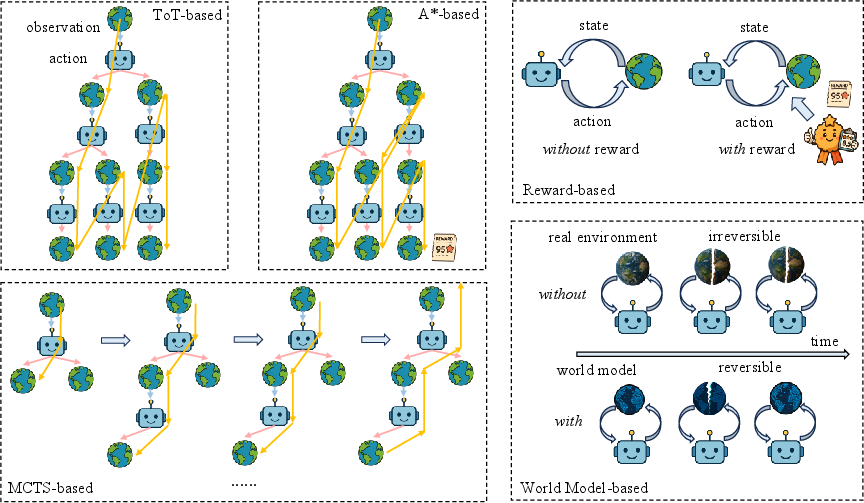

Searching-based strategies break down tasks into smaller components, allowing LLMs to navigate complex solution spaces efficiently. Decomposition-based Methods divide tasks either globally or iteratively for detailed strategic planning (Figure 5). Exploration Methods use search strategies such as Tree of Thoughts (ToT), Monte Carlo Tree Search (MCTS), and A*-based algorithms to explore multiple candidate paths toward a good solution with minimal trial and error (Figure 6).

Figure 5: An overview of decomposition-based methods that can be divided into two decomposition modes, including global decomposition and iteration decomposition.

Figure 6: The illustration of exploration methods, including ToT-based, MCTS-based, A*-based, world model-based, and reward model-based exploration.
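To make the A*-based variant concrete, here is a minimal sketch of A* search over a toy state space. It is a generic textbook A*, not the algorithm of any specific method in the survey. The toy problem (reach 10 from 2 by adding one or doubling), the names `toy_successors` and `toy_heuristic`, and the unit step costs are all assumptions. In an LLM-based planner the heuristic and the successor proposals would typically come from the model; here they are hand-written stand-ins.

```python
import heapq
import itertools


def a_star_plan(start, goal, successors, heuristic):
    """Return the cheapest list of actions from start to goal, or None."""
    tie = itertools.count()  # tie-breaker so the heap never compares states
    frontier = [(heuristic(start), next(tie), 0, start, [])]
    best_cost = {start: 0}
    while frontier:
        _, _, cost, state, plan = heapq.heappop(frontier)
        if state == goal:
            return plan
        if cost > best_cost[state]:
            continue  # stale entry: a cheaper route to this state was found
        for action, next_state, step_cost in successors(state):
            new_cost = cost + step_cost
            if new_cost < best_cost.get(next_state, float("inf")):
                best_cost[next_state] = new_cost
                priority = new_cost + heuristic(next_state)
                heapq.heappush(
                    frontier,
                    (priority, next(tie), new_cost, next_state, plan + [action]),
                )
    return None


GOAL = 10


def toy_successors(n):
    """Hypothetical action space: add one or double, never overshooting."""
    moves = [("add1", n + 1, 1), ("double", n * 2, 1)]
    return [m for m in moves if m[1] <= GOAL]


def toy_heuristic(n):
    """Stand-in for a model's value estimate; admissible for unit costs."""
    return 0 if n == GOAL else 1


plan = a_star_plan(2, GOAL, toy_successors, toy_heuristic)
print(plan)  # ['double', 'add1', 'double']
```

The optimality of the returned plan depends on the heuristic never overestimating the remaining cost. A heuristic produced by an LLM gives no such guarantee, so in that setting the search should be read as guided exploration rather than exact planning.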

Evaluation Approaches

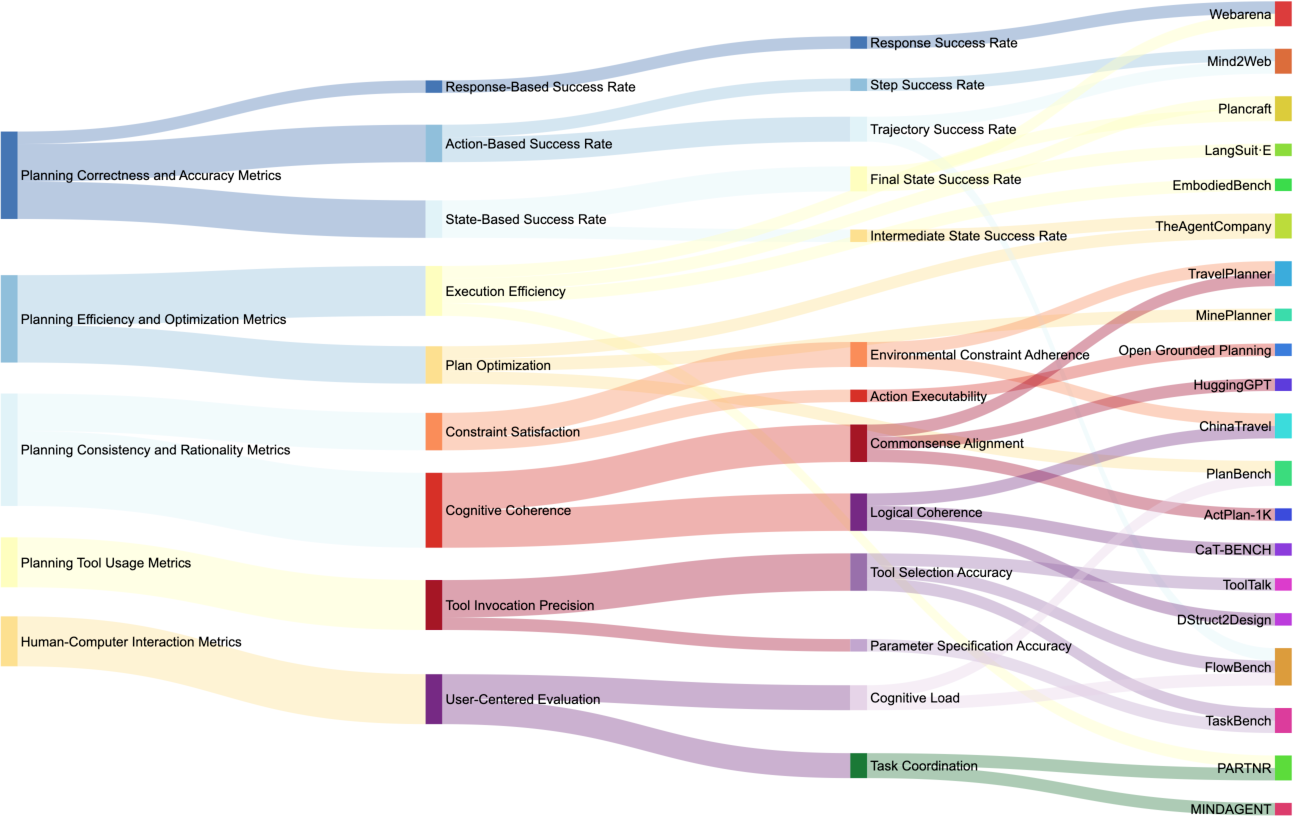

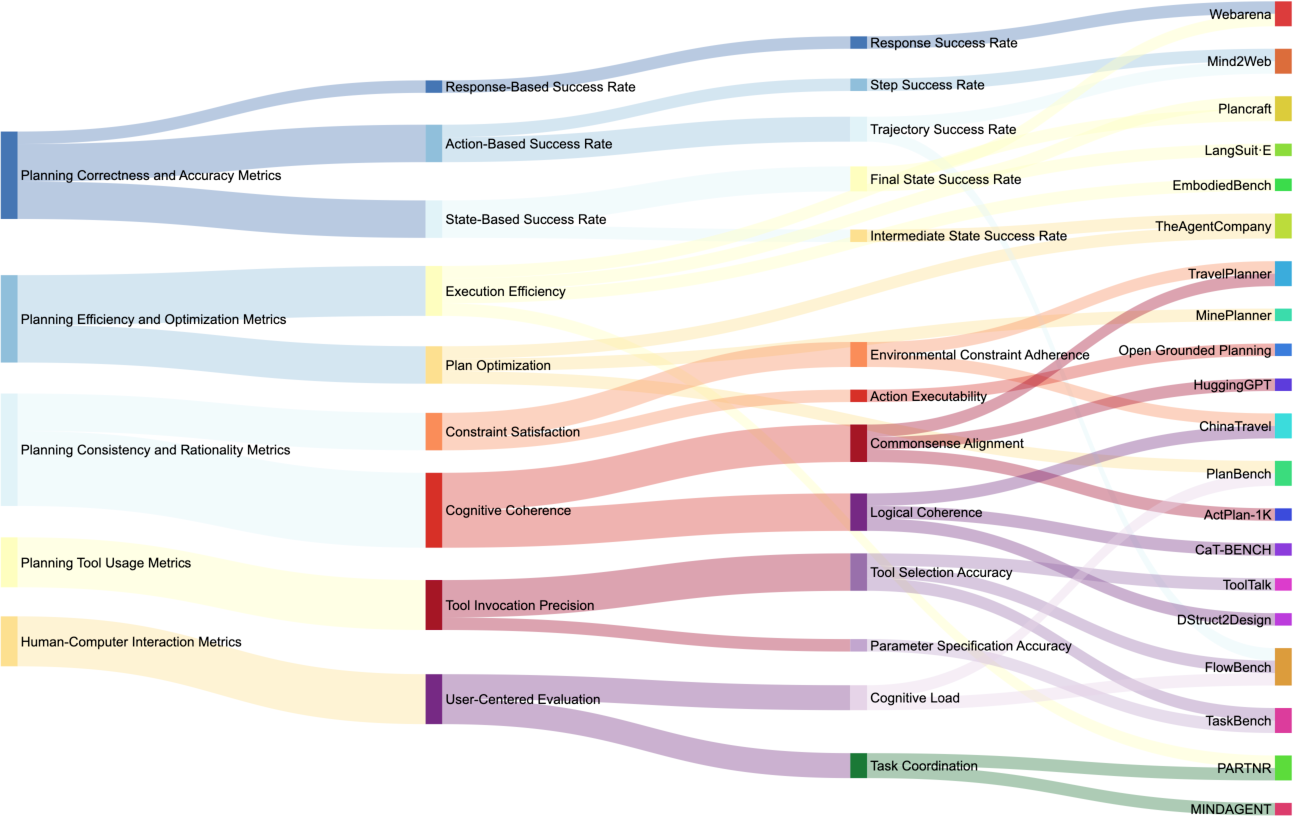

The evaluation of LLM-based planning methods draws on diverse datasets and metrics to measure success in digital, embodied, everyday, and vertical scenarios (Figure 7). Performance is gauged with metrics such as correctness, efficiency, consistency, and contextual interaction ability, enabling a comprehensive analysis of how well a model handles sophisticated tasks.

Figure 7: The corresponding relationship between planning evaluation metrics and some typical planning datasets. The left three columns represent evaluation metrics of different granularities, while the rightmost column denotes the dataset.
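As a rough illustration of how metrics of this kind can be computed, the sketch below defines one possible version each of correctness, efficiency, and consistency. The exact definitions, the function names, and the episode fields (`success`, `steps`, `optimal_steps`) are assumptions made for this example; individual benchmarks define their own variants.

```python
from collections import Counter


def success_rate(episodes):
    """Correctness: fraction of episodes that reached the goal."""
    if not episodes:
        return 0.0
    return sum(1 for e in episodes if e["success"]) / len(episodes)


def step_efficiency(episodes):
    """Efficiency: mean of optimal_steps / steps over successful episodes."""
    ratios = [
        e["optimal_steps"] / e["steps"]
        for e in episodes
        if e["success"] and e["steps"] > 0
    ]
    return sum(ratios) / len(ratios) if ratios else 0.0


def plan_consistency(plans):
    """Consistency: share of repeated runs that produced the most common plan."""
    if not plans:
        return 0.0
    most_common_count = Counter(tuple(p) for p in plans).most_common(1)[0][1]
    return most_common_count / len(plans)


episodes = [
    {"success": True, "steps": 4, "optimal_steps": 4},
    {"success": True, "steps": 8, "optimal_steps": 4},
    {"success": False, "steps": 10, "optimal_steps": 5},
]
repeated_plans = [["a", "b"], ["a", "b"], ["b", "a"]]

print(success_rate(episodes))
print(step_efficiency(episodes))
print(plan_consistency(repeated_plans))
```

Efficiency is averaged over successful episodes only, so a failed run lowers the success rate without distorting the step ratio; other aggregation choices are equally defensible.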

Analysis and Interpretation

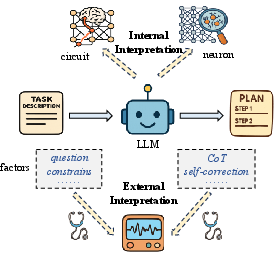

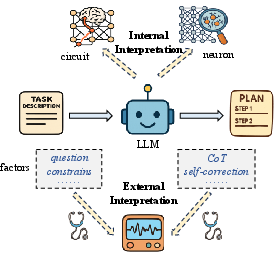

External and internal interpretations of LLM planning abilities offer insight into task-specific strengths and limitations. External interpretation examines factors such as the influence of chain-of-thought (CoT) reasoning and self-correction on planning performance. Internal interpretation focuses on mechanisms within LLMs, such as the emergence and integration of planning features, to elucidate the model's decision-making processes (Figure 8).

Figure 8: The illustration of external interpretation and internal interpretation, respectively.

Future Directions

Future research should focus on strengthening reinforcement learning integration in LLMs, improving personalized planning systems, and developing lightweight models for edge deployment. Further, advances in constructing training environments for pretraining and in improving generalization will be crucial for the robust, wide-ranging applicability of LLM-based planning systems.

Conclusion

This survey presents an in-depth examination of the landscape of LLM-based planning, providing researchers with a broad understanding of the methodologies, evaluations, and emerging insights into these systems. As LLMs continue to evolve, they promise significant advancements in autonomous decision-making and strategic planning across multifaceted domains.