Kernel Quantile Embeddings and Associated Probability Metrics

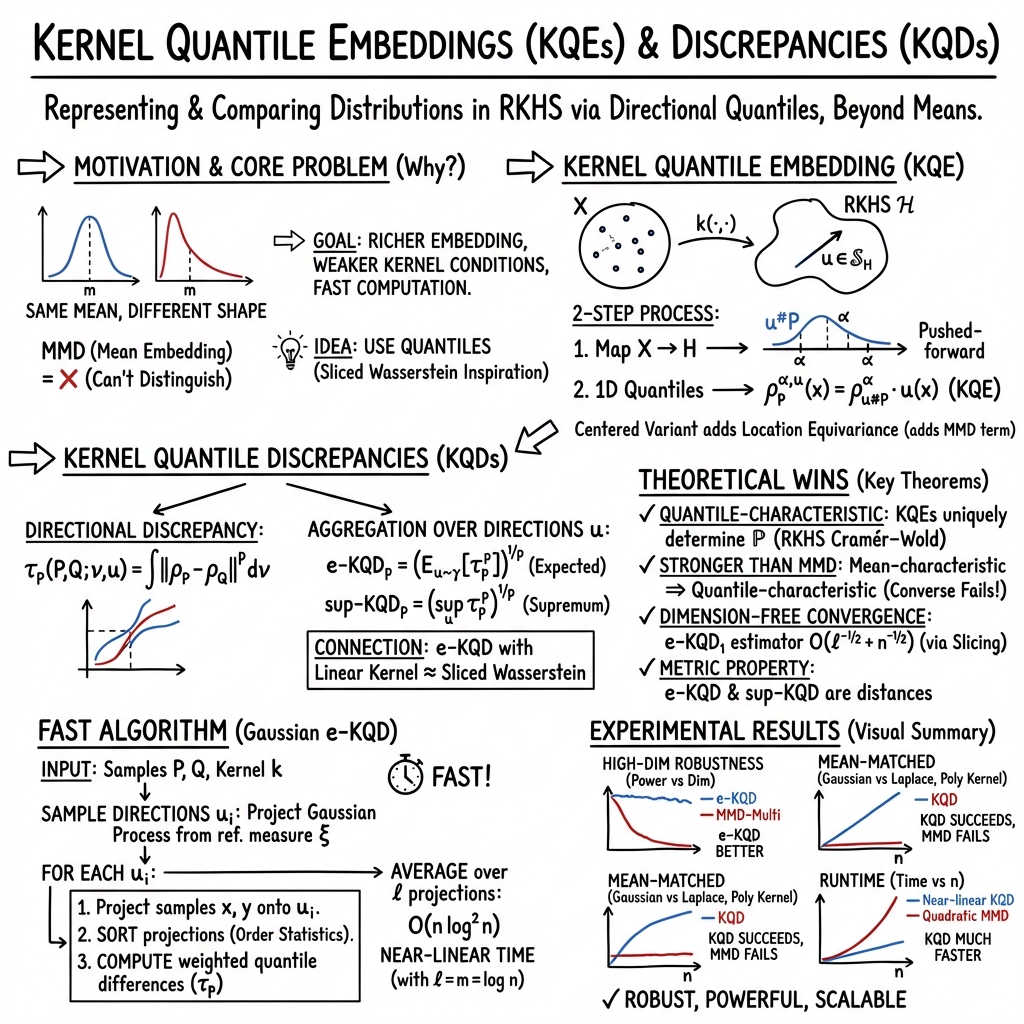

Abstract: Embedding probability distributions into reproducing kernel Hilbert spaces (RKHS) has enabled powerful nonparametric methods such as the maximum mean discrepancy (MMD), a statistical distance with strong theoretical and computational properties. At its core, the MMD relies on kernel mean embeddings to represent distributions as mean functions in RKHS. However, it remains unclear if the mean function is the only meaningful RKHS representation. Inspired by generalised quantiles, we introduce the notion of kernel quantile embeddings (KQEs). We then use KQEs to construct a family of distances that: (i) are probability metrics under weaker kernel conditions than MMD; (ii) recover a kernelised form of the sliced Wasserstein distance; and (iii) can be efficiently estimated with near-linear cost. Through hypothesis testing, we show that these distances offer a competitive alternative to MMD and its fast approximations.
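To make the abstract's construction concrete, here is a minimal NumPy sketch of empirical e-KQD and sup-KQD. It is an illustration under stated assumptions, not the authors' reference implementation. It assumes:

- a Gaussian kernel with a fixed bandwidth;
- equal sample sizes n;
- ν uniform on [0, 1], so the quantile term for a direction u reduces to the mean of |u(x_(i)) - u(y_(i))|^p over sorted projections;
- directions u = f/∥f∥_H, where f = m^{-1/2} Σ_j ε_j k(z_j, ·) with ε_j ~ N(0, 1). This is a draw from the Gaussian measure whose covariance is the integral operator of an empirical ξ over m reference points z_j, subsampled here from the pooled data.

The helper names (`gaussian_kernel`, `sample_directions`, `kqd`) and the defaults l = m = ⌈log n⌉ are hypothetical choices made for illustration.

```python
import numpy as np


def gaussian_kernel(a, b, bandwidth):
    """Gram matrix with entries exp(-||a_i - b_j||^2 / (2 * bandwidth^2))."""
    sq_dists = (
        np.sum(a**2, axis=1)[:, None]
        + np.sum(b**2, axis=1)[None, :]
        - 2.0 * a @ b.T
    )
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * bandwidth**2))


def sample_directions(z, n_dirs, bandwidth, rng):
    """Return coefficients c (n_dirs x m) of unit-norm directions u = sum_j c_j k(z_j, .).

    Each direction is f / ||f||_H with f = m^{-1/2} sum_j eps_j k(z_j, .),
    eps_j ~ N(0, 1); the m^{-1/2} factor matches the empirical covariance
    operator and cancels after normalisation.
    """
    m = z.shape[0]
    k_zz = gaussian_kernel(z, z, bandwidth)  # O(m^2) Gram matrix
    eps = rng.standard_normal((n_dirs, m)) / np.sqrt(m)
    # ||f||_H^2 = eps^T K_zz eps, one quadratic form per direction
    norms = np.sqrt(np.einsum("lj,jk,lk->l", eps, k_zz, eps))
    return eps / norms[:, None]


def kqd(x, y, n_dirs=None, m=None, p=2, bandwidth=1.0, seed=0):
    """Monte Carlo estimates of (e-KQD_p, sup-KQD_p) for equal-size samples x, y."""
    n = x.shape[0]
    if y.shape[0] != n:
        raise ValueError("this sketch assumes equal sample sizes")
    rng = np.random.default_rng(seed)
    log_n = max(1, int(np.ceil(np.log(n))))
    n_dirs = n_dirs or log_n
    m = m or log_n

    # Reference points z_j drawn from the pooled sample (an empirical xi).
    pooled = np.vstack([x, y])
    z = pooled[rng.choice(pooled.shape[0], size=m, replace=False)]
    coef = sample_directions(z, n_dirs, bandwidth, rng)

    # Projections u(x_i) = <u, k(x_i, .)>_H, shape (n_dirs, n).
    proj_x = coef @ gaussian_kernel(z, x, bandwidth)
    proj_y = coef @ gaussian_kernel(z, y, bandwidth)

    # Sorting yields the empirical quantile functions (step functions on [0, 1]);
    # with uniform nu and equal n, integrating over levels is a mean over positions.
    diffs = np.sort(proj_x, axis=1) - np.sort(proj_y, axis=1)
    per_dir = np.mean(np.abs(diffs) ** p, axis=1)

    e_kqd = np.mean(per_dir) ** (1.0 / p)   # average over sampled directions
    sup_kqd = np.max(per_dir) ** (1.0 / p)  # maximum over sampled directions
    return e_kqd, sup_kqd
```

With l and m of order log n, the cost is dominated by the O(nm) kernel evaluations and the O(n log n) sort per direction. This is the near-linear regime the abstract refers to. Fixing `seed` makes the sampled directions reproducible across calls.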

Knowledge gaps, limitations, and open questions

Below is a single, consolidated list of concrete gaps, limitations, and open questions that remain unresolved and could guide future research.

- Necessity vs. sufficiency of assumptions: Theoretical results rely on Hausdorff, separable, σ-compact input spaces and continuous, separating kernels. It is unclear which conditions are truly necessary and which can be relaxed (e.g., non-separable or non-σ-compact spaces, discontinuous kernels, non-separating but structured kernels), and how the results extend to Radon measures in more general topological settings.

- Characterizing quantile-characteristic kernels: While every mean-characteristic kernel is quantile-characteristic, a full characterisation of kernels that are quantile-characteristic (but not mean-characteristic) is missing. Practical, verifiable criteria for common non-Euclidean kernels (e.g., graph, string, manifold kernels) are not provided.

- Metric topology and convergence properties: Whether e-KQD and sup-KQD metrize weak convergence (as MMD does for suitably regular characteristic kernels) is not established. The induced topologies, continuity under perturbations of the input distributions, and the relationship to standard modes of convergence remain open.

- Conditions for consistency beyond densities: Consistency of empirical KQEs (Theorem 3) assumes that each projected measure u#P has a strictly positive density. Extending rates and guarantees to discrete, mixed, heavy-tailed, or singular distributions, where a density may fail to exist or to be strictly positive, is unresolved.

- p > 1 rate conditions and verification: Extending the finite-sample rates for KQDs to p > 1 requires integrability of a nontrivial functional J_p involving level-set volumes. Practical criteria for verifying these conditions for common kernels and data distributions are lacking.

- Centering and equivariance: The use of uncentered KQEs (which are not location-equivariant) is justified only informally, by the argument that the effect cancels “when comparing two distributions”. Formal results clarifying invariances, failure modes, and when centering is necessary for specific tasks are needed.

- Supremum approximation quality: sup-KQD is computed as a maximum over finitely many sampled directions rather than as the true supremum over S_H. There are no approximation guarantees (e.g., uniform convergence rates, covering-number bounds on S_H, error bounds relative to the true supremum), nor a convergence analysis as l → ∞.

- Choice and effect of the direction measure γ: In infinite-dimensional RKHSs, uniform measures on S_H do not exist, so Gaussian measures projected onto S_H are used instead. The statistical and geometric implications of this choice (bias in direction sampling, sensitivity to the covariance operator C, and whether γ has full support in practice) are not analysed. With an empirical ξ and finite m, γ may not have full support, in which case e-KQD may cease to be a true metric; quantifying this degradation and its impact on testing remains open.

- Role and selection of the weighting measure ν: The measure ν on quantile levels controls what parts of the distribution are emphasised, but there is no guidance on how to choose ν (uniform vs. targeted intervals, tails, robustness) or how ν affects metric properties, power, and consistency.

- Reference measure ξ selection: The Gaussian sampling scheme uses an integral-operator covariance defined by ξ; in experiments ξ is the empirical mixture of P_n and Q_n. Theoretical and empirical effects of different choices of ξ (data-dependent vs. prior, continuous vs. empirical, support coverage issues) on bias, variance, metric validity, and test power are unstudied.

- Computational trade-offs and parameter tuning: The near-linear estimator depends on l (number of directions) and m (reference samples) chosen as log n. Optimal l and m schedules (minimax or oracle rates), error–cost trade-offs, and principled adaptive selection strategies are not developed.

- Norm computation bottleneck: Computing ∥f∥_H incurs O(m²) cost; scalable alternatives (e.g., fast low-rank updates, random feature norms, sketching) and their effect on estimator bias/variance are not explored.

- Quantile computation scalability: The estimator sums over all order statistics, which requires an O(n log n) sort per direction. Alternatives (e.g., multi-quantile sketches, selection-based partial quantiles) that reduce sorting cost while preserving accuracy are not investigated.

- Analytical null distributions: Tests rely on permutation thresholds. Neither asymptotic distributions (CLTs) of e-KQD/sup-KQD under H_0 and H_1 nor bootstrap procedures, which would enable analytic or approximate p-values and confidence intervals, are provided.

- Sensitivity to kernel hyperparameters: As with MMD, KQD performance depends on kernel choice and bandwidth (median heuristic used). Systematic kernel selection/tuning (e.g., power maximization, cross-validation, data-driven bandwidths) for KQDs is not developed.

- Extensions to conditional and structured settings: The paper focuses on marginal distributions. Open questions include conditional KQEs/KQDs (for conditional independence testing, causal inference), operator-valued kernels, and embeddings for distributions on complex structured spaces (graphs, sequences, manifolds) with established computational and statistical guarantees.

- Robustness properties: Quantiles are robust to outliers, but the robustness of KQDs (choice of ν, trimming, influence functions, breakdown points) is not theoretically or empirically analysed.

- Connections to Wasserstein/GSW: While KQDs recover sliced and max-sliced Wasserstein in special cases, quantitative equivalence bounds, conditions for exact recovery with non-linear kernels, and error analysis versus SW/GSW as d or RKHS dimension grows remain to be derived.

- Interpolation with Sinkhorn: The stated “mid-point interpolant” interpretation for centered KQDs (MMD + kernel-sliced Wasserstein) lacks a formal interpolation parameter and comparative theory versus entropic regularization (e.g., bias, convergence, sample complexity, and limiting regimes).

- Graph and non-characteristic kernels: Although many graph kernels are not mean-characteristic, it remains untested whether they are quantile-characteristic in practice. Empirical and theoretical investigations on real graph/structured datasets are needed.

- Practical guidance for ν, γ, and ξ: The method introduces three measures (quantile weights ν, direction measure γ, and reference ξ), but provides no principled recipes for their joint selection tailored to task/data, nor sensitivity analyses identifying their relative impact.

- Finite-sample metric validity: e-KQD is a distance when ν, γ have full support; the finite-sample Monte Carlo estimator uses finite l and finite-rank γ_m. Formal results establishing when the estimator defines a pseudo-metric vs. a true metric, and how quickly metric validity is recovered as l, m increase, are missing.

- Application breadth and benchmarks: Experiments mostly use Euclidean data and a small set of tasks; broader benchmarks (structured/non-Euclidean domains, generative modeling evaluation, domain adaptation, distribution regression) and comparisons against state-of-the-art SW/GSW/Sinkhorn/MMD variants under matched complexity remain to be performed.
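Two of the gaps above concern hypothesis testing with permutation thresholds and the connection to sliced Wasserstein. The sketch below illustrates both under explicit assumptions:

- a linear kernel k(x, y) = xᵀy on R^d, for which unit-norm RKHS directions correspond to unit vectors θ and u(x) = θᵀx;
- ν uniform on [0, 1];
- directions drawn uniformly on the unit sphere, which is possible here because this RKHS is finite-dimensional.

Under these assumptions the Monte Carlo e-KQD statistic coincides with a Monte Carlo sliced Wasserstein-p estimate. The test is calibrated by permuting the pooled sample, with the directions held fixed across permutations. The function names and defaults (`n_dirs=50`, `n_perms=200`) are hypothetical and not taken from the paper.

```python
import numpy as np


def sliced_wasserstein(x, y, thetas, p=2):
    """Monte Carlo sliced Wasserstein-p between equal-size samples.

    With a linear kernel, u(x) = theta^T x for a unit vector theta, so this is
    the e-KQD estimate with uniform nu and uniformly sampled directions.
    """
    proj_x = np.sort(x @ thetas.T, axis=0)  # (n, n_dirs) sorted projections
    proj_y = np.sort(y @ thetas.T, axis=0)
    return np.mean(np.abs(proj_x - proj_y) ** p) ** (1.0 / p)


def permutation_test(x, y, n_dirs=50, n_perms=200, p=2, seed=0):
    """Two-sample permutation test using the sliced Wasserstein statistic."""
    n, d = x.shape
    if y.shape[0] != n:
        raise ValueError("this sketch assumes equal sample sizes")
    rng = np.random.default_rng(seed)

    # Uniform directions on the unit sphere in R^d (normalised Gaussian vectors).
    thetas = rng.standard_normal((n_dirs, d))
    thetas /= np.linalg.norm(thetas, axis=1, keepdims=True)

    observed = sliced_wasserstein(x, y, thetas, p)
    pooled = np.vstack([x, y])
    null_stats = np.empty(n_perms)
    for b in range(n_perms):
        idx = rng.permutation(2 * n)  # random relabelling of the pooled sample
        null_stats[b] = sliced_wasserstein(pooled[idx[:n]], pooled[idx[n:]], thetas, p)

    # Count the observed split as one permutation so the p-value is never 0.
    p_value = (1 + np.sum(null_stats >= observed)) / (1 + n_perms)
    return observed, p_value
```

The directions are drawn independently of the data, so reusing them for the observed statistic and for every permuted statistic keeps the permutation p-value valid under H_0. An analytic null distribution, as noted above, would remove the O(n_perms) recomputation.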