- The paper demonstrates that LLaMEA-BO leverages LLMs and evolutionary strategies to automatically generate effective Bayesian Optimization algorithms.

- It employs a multi-parent evolutionary approach with BO-specific prompts, enabling rapid convergence in high-dimensional optimization tasks.

- The evaluation shows that LLaMEA-BO-generated algorithms outperform traditional baselines on up to 19 of 24 BBOB functions under tight evaluation budgets.

LLaMEA-BO: A LLM Evolutionary Algorithm for Automatically Generating Bayesian Optimization Algorithms

This paper presents LLaMEA-BO, a framework that uses LLMs to automate the generation of complete Bayesian Optimization (BO) algorithms. The generated algorithms outperform state-of-the-art BO algorithms on the BBOB test suite, showing the potential of LLMs as algorithmic co-designers that produce novel and effective optimization algorithms.

Introduction to LLaMEA-BO

The LLaMEA-BO framework applies LLMs to the automated design of Bayesian Optimization (BO) algorithms, a complex and labor-intensive process that traditionally relies on expert knowledge. It adapts the Large-Language-Model Evolutionary Algorithm (LLaMEA) framework with a multi-parent evolutionary strategy tailored to BO: a population of candidate algorithms is maintained, and crossover operations exploit the modular nature of BO to discover novel, high-performing combinations of components. The methodology, outlined in Algorithm 1, extends the (μ+λ) evolution strategy with a BO-specific prompt structure that includes templates and enriched mutation operators, so that algorithm quality improves iteratively.
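As a rough illustration of the loop described above, the following Python sketch implements a multi-parent (μ+λ) evolution strategy over candidate programs. It is a minimal sketch under stated assumptions, not the paper's Algorithm 1: the callables `init`, `crossover`, `mutate`, and `evaluate` are hypothetical stand-ins for the LLM prompts and the benchmark evaluation, and the default values of `mu`, `lam`, and `n_parents` are arbitrary.

```python
import random
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    code: str       # source code of a candidate BO algorithm (a plain string here)
    fitness: float  # quality score, e.g. mean AOCC; higher is better


def evolve(
    init: Callable[[], str],                # hypothetical: asks the LLM for a fresh algorithm
    crossover: Callable[[List[str]], str],  # hypothetical: asks the LLM to combine parents
    mutate: Callable[[str], str],           # hypothetical: asks the LLM to refine one algorithm
    evaluate: Callable[[str], float],       # hypothetical: runs the code on a benchmark
    mu: int = 4,
    lam: int = 8,
    n_parents: int = 2,
    generations: int = 5,
    seed: int = 0,
) -> Candidate:
    """Return the best candidate found by a multi-parent (mu + lambda) loop."""
    rng = random.Random(seed)
    population = [Candidate(code, evaluate(code)) for code in (init() for _ in range(mu))]
    for _ in range(generations):
        offspring = []
        for _ in range(lam):
            # Draw several distinct parents so crossover can mix their components.
            parents = rng.sample(population, k=min(n_parents, len(population)))
            child = mutate(crossover([p.code for p in parents]))
            offspring.append(Candidate(child, evaluate(child)))
        # Plus selection: parents and offspring compete for the mu survivor slots.
        merged = sorted(population + offspring, key=lambda c: c.fitness, reverse=True)
        population = merged[:mu]
    return population[0]
```

In a real setting, `evaluate` would execute the generated code in a sandbox and return a benchmark score, and a candidate that fails to run would receive a very low fitness.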

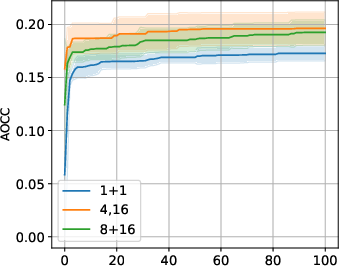

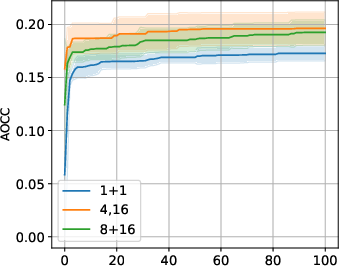

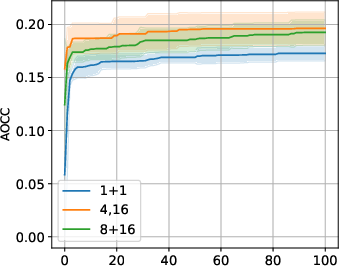

Figure 1: AOCC for different ES configurations.

Evaluation

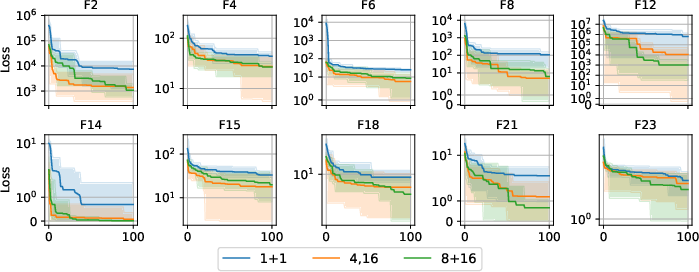

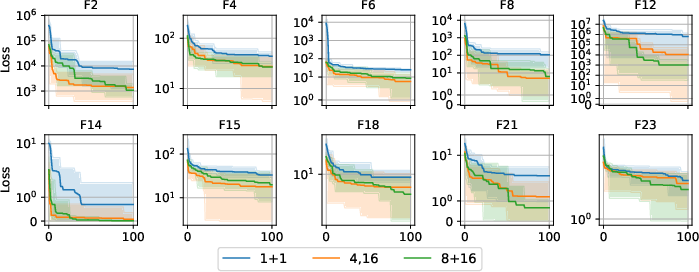

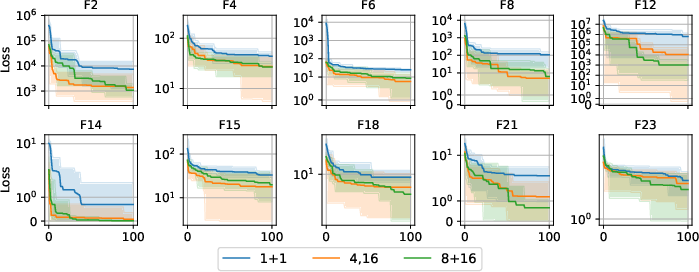

The evaluation of LLaMEA-BO-generated optimization algorithms uses the anytime area over the convergence curve (AOCC) on a pragmatic subset of ten functions from the Black-Box Optimization Benchmarking (BBOB) suite. It imposes a tight budget of $20 \times d$ function evaluations at dimension $d = 5$ (100 evaluations per run), a scenario typical of BO benchmarks that emphasizes rapid convergence and exploitation of the surrogate model. The setup is designed to test whether the generated algorithms generalize to unseen tasks, to harder problems in dimensions $d \in \{10, 20, 40\}$, and to the Bayesmark framework.
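To make the metric concrete, here is a minimal Python sketch of an AOCC computation. It assumes a common formulation that this summary does not spell out: the best-so-far precision $f(x) - f^{*}$ is clipped to $[10^{-8}, 10^{2}]$, log-scaled, normalized to $[0, 1]$, and averaged over the budget. The function name `aocc` and the bounds `lower` and `upper` are assumptions made for illustration.

```python
import math
from typing import Sequence


def aocc(
    precisions: Sequence[float],
    budget: int,
    lower: float = 1e-8,  # assumed lower clipping bound on the precision
    upper: float = 1e2,   # assumed upper clipping bound on the precision
) -> float:
    """Anytime area over the convergence curve, in [0, 1]; higher is better.

    precisions[i] is f(x_i) - f_opt for the i-th function evaluation.
    """
    if budget <= 0:
        raise ValueError("budget must be positive")
    log_lo, log_hi = math.log10(lower), math.log10(upper)
    best = float("inf")
    total = 0.0
    for i in range(budget):
        if i < len(precisions):
            best = min(best, precisions[i])  # best-so-far precision
        clipped = min(max(best, lower), upper)
        # 0 when stuck at the upper bound, 1 once the lower bound is reached.
        total += 1.0 - (math.log10(clipped) - log_lo) / (log_hi - log_lo)
    return total / budget


# Budget used in the evaluation described above: 20 * d with d = 5.
budget = 20 * 5
```

Because every evaluation contributes to the average, an algorithm that reaches a good value early scores higher than one that reaches the same value only at the end of the budget, which is why the metric rewards rapid convergence.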

The top-performing algorithms are benchmarked against state-of-the-art baselines: the BO methods HEBO, TuRBO, and Vanilla BO, and the evolution strategy CMA-ES.

Figure 2: Best algorithm evaluation based on AOCC: Violin plots aggregating over 24 functions, 3 instances, 5 runs.

Aggregated over the BBOB functions, the AOCC results show LLaMEA-BO-generated algorithms outperforming the baselines on up to 19 of the 24 test functions in dimension $5$, a strong result given the tight evaluation budget. The ATRBO variant achieved the highest average AOCC in the comparison. These results indicate that the generated algorithms generalize well, closing the performance gap with established baselines such as CMA-ES and outperforming them in some instances.

A key observation is the emergence of distinct algorithms that combine techniques such as trust regions, Bayesian parameter schedules, and crossover strategies, during both the code production and testing phases. These techniques appear to introduce effective exploratory dynamics. The ablation studies showed the importance of population-based strategies in the evolutionary process: the results favored a larger population with a comma strategy, in which survivors are chosen from the offspring only, as an enabler of broader code exploration.
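The difference between plus and comma selection can be sketched in a few lines of Python. This is a generic illustration of the two standard evolution-strategy selection schemes, not code from the paper; the `select` helper and the `(name, fitness)` tuple layout are assumptions.

```python
from typing import List, Tuple

Scored = Tuple[str, float]  # (candidate name, fitness); higher fitness is better


def select(
    parents: List[Scored],
    offspring: List[Scored],
    mu: int,
    elitist: bool,
) -> List[Scored]:
    """Pick mu survivors with plus (elitist) or comma (non-elitist) selection."""
    # Plus: parents compete with offspring. Comma: parents are always discarded.
    pool = parents + offspring if elitist else offspring
    if len(pool) < mu:
        raise ValueError("not enough candidates to select mu survivors")
    return sorted(pool, key=lambda s: s[1], reverse=True)[:mu]


parents = [("parent", 0.9)]
offspring = [("child_a", 0.5), ("child_b", 0.7)]
plus_survivors = select(parents, offspring, mu=1, elitist=True)
comma_survivors = select(parents, offspring, mu=1, elitist=False)
```

Under comma selection a strong parent cannot dominate the population indefinitely, which trades short-term fitness for more exploration of new candidates.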

Discussion

The research shows that LLMs can generate Bayesian Optimization algorithms that are competitive with, and often superior to, existing state-of-the-art approaches. It represents a significant step in rethinking the role of automated tools in the synthesis and advancement of complex algorithms. The results highlight the robustness of LLaMEA-BO in producing strong BO variants without additional fine-tuning, pointing to a setting in which novel optimization procedures can be discovered with minimal human intervention.

More broadly, this work points to the potential of LLMs as co-designers in computational tasks, allowing rapid prototyping and development, particularly in fields that demand specialized expertise and resist generic solutions. The ethical considerations of using LLMs must not be overlooked, however, particularly the risk of misuse, for example in adversarial attacks. It is therefore crucial to adopt stringent safety and auditing protocols when deploying LLaMEA-BO-generated solutions in real-world applications.

Future research could extend the LLaMEA-BO framework to other optimization contexts, including discrete, multi-objective, or noisy problems. Exploring multi-modal prompting and diverse LLMs may further improve the methodological diversity and robustness of the generated algorithms. The study sets a precedent for future work in autonomous algorithm discovery.

In conclusion, LLaMEA-BO represents a significant advance in applying LLMs to optimization algorithm design, with promise for both academia and industry. It demonstrates that LLMs can generate powerful Bayesian optimization pipelines that rival, and in some instances surpass, traditional state-of-the-art algorithms.