Beyond the Black Box: Interpretability of LLMs in Finance

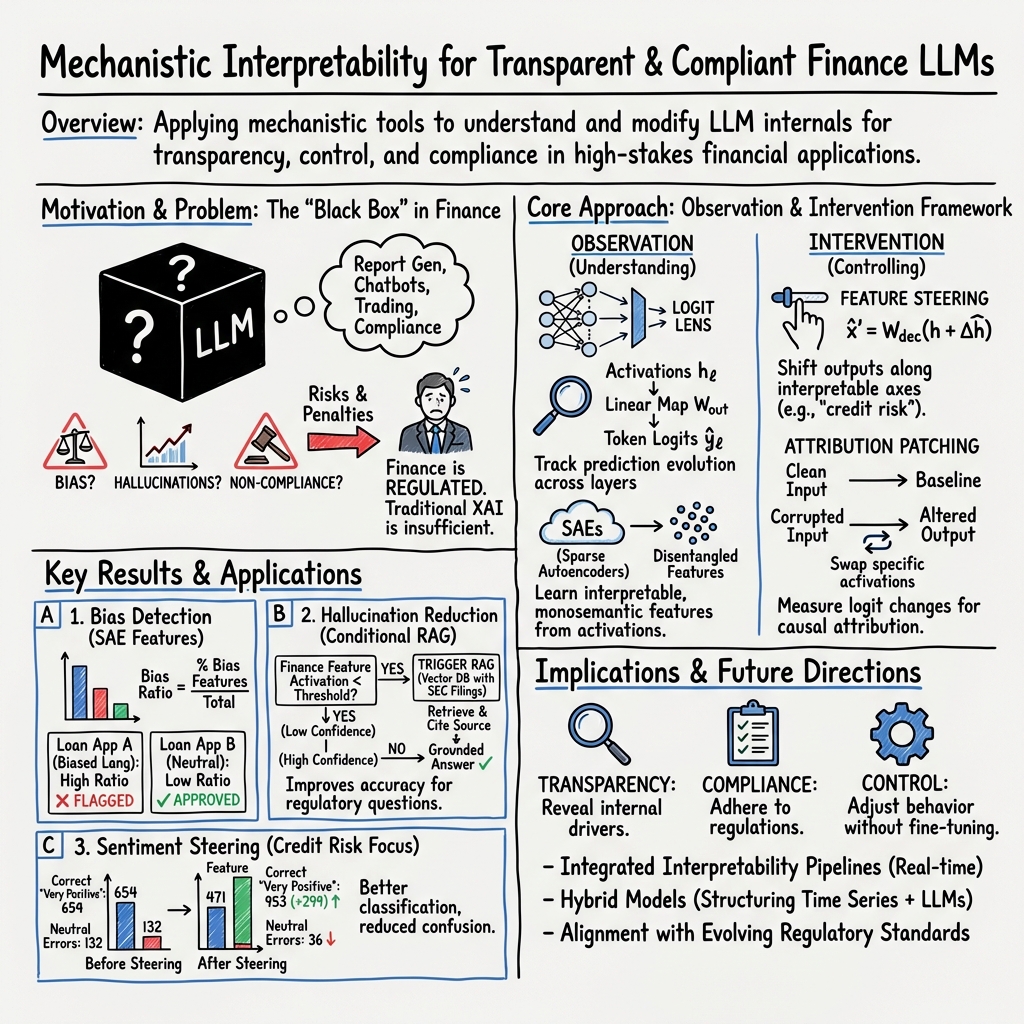

Abstract: LLMs exhibit remarkable capabilities across a spectrum of tasks in financial services, including report generation, chatbots, sentiment analysis, regulatory compliance, investment advisory, financial knowledge retrieval, and summarization. However, their intrinsic complexity and lack of transparency pose significant challenges, especially in the highly regulated financial sector, where interpretability, fairness, and accountability are critical. As far as we are aware, this paper presents the first application in the finance domain of understanding and utilizing the inner workings of LLMs through mechanistic interpretability, addressing the pressing need for transparency and control in AI systems. Mechanistic interpretability is the most intuitive and transparent way to understand LLM behavior by reverse-engineering their internal workings. By dissecting the activations and circuits within these models, it provides insights into how specific features or components influence predictions - making it possible not only to observe but also to modify model behavior. In this paper, we explore the theoretical aspects of mechanistic interpretability and demonstrate its practical relevance through a range of financial use cases and experiments, including applications in trading strategies, sentiment analysis, bias, and hallucination detection. While not yet widely adopted, mechanistic interpretability is expected to become increasingly vital as adoption of LLMs increases. Advanced interpretability tools can ensure AI systems remain ethical, transparent, and aligned with evolving financial regulations. In this paper, we have put special emphasis on how these techniques can help unlock interpretability requirements for regulatory and compliance purposes - addressing both current needs and anticipating future expectations from financial regulators globally.

Explain it Like I'm 14

Overview

This paper looks at how to “open the black box” of the large language models (LLMs) used in finance. Instead of just seeing what the model outputs, the authors try to understand, step by step, how the model works inside, so that banks and financial companies can trust it, check it for fairness, and make it follow rules. They focus on a research area called mechanistic interpretability, which is like taking apart an engine to see how the parts work and how to control them.

Key Questions

The paper explores a few simple, big questions:

- How do LLMs make decisions about financial topics (like whether a stock might rise or fall)?

- Can we find and name the “features” inside the model that represent ideas (like positive sentiment or risk)?

- Can we change those features to reduce bias, stop hallucinations (wrong facts), and follow regulations?

- How can these tools help explain AI decisions to regulators and make models more trustworthy?

How Did They Study It?

Think of an LLM like a huge, complicated machine that reads text and predicts the next word. The authors use tools to watch and change what happens inside this machine.

Key ideas explained in everyday language

- Mechanistic interpretability: Like opening the hood of a car to see which gears and wires make it move, and then testing or adjusting those parts.

- Features: Small pieces of knowledge inside the model (for example, “positive sentiment” or “mentions of London”).

- Superposition: The model packs many ideas into the same space, like several songs overlapping on one audio channel. This makes single “neurons” react to more than one idea.

- Monosemantic features: Clear, single-meaning features (each one represents just one idea), which are easier to understand.
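
To make superposition concrete, here is a minimal toy sketch (not from the paper). It assumes three made-up features squeezed into a two-dimensional activation space, each with its own direction. The feature names, the 120-degree layout, and the helper names `embed` and `read_out` are all illustrative assumptions.

```python
import math

# Three hypothetical features packed into a 2-dimensional activation space.
# Each feature gets a unit direction, spaced 120 degrees apart (an assumption
# chosen only to keep the arithmetic easy to follow).
FEATURES = ["positive sentiment", "risk", "mentions of London"]
DIRECTIONS = [
    (math.cos(2 * math.pi * k / 3), math.sin(2 * math.pi * k / 3))
    for k in range(3)
]

def embed(strengths):
    """Sum each feature's direction scaled by how strongly it is active."""
    x = sum(s * d[0] for s, d in zip(strengths, DIRECTIONS))
    y = sum(s * d[1] for s, d in zip(strengths, DIRECTIONS))
    return (x, y)

def read_out(activation):
    """Project the activation back onto each feature direction."""
    return [activation[0] * d[0] + activation[1] * d[1] for d in DIRECTIONS]

# "Neuron 0" is just the first coordinate. Activate each feature on its own
# and record how that single neuron responds.
neuron0_response = [
    embed([1.0 if i == k else 0.0 for i in range(3)])[0] for k in range(3)
]

# With only one feature active, projecting onto the feature directions
# still picks it out, although the other two show some interference.
scores = read_out(embed([0.0, 1.0, 0.0]))
best = FEATURES[scores.index(max(scores))]

print("neuron 0 response per feature:", neuron0_response)
print("readout scores:", scores)
print("strongest feature:", best)
```

In this toy setup the single neuron responds to all three features, which is the "several songs on one channel" effect, while looking along the feature directions instead of at single neurons recovers the one idea that is actually present.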

Main tools and how they work

- Logit Lens: Imagine checking the model’s “best guess” at every step, not just at the end. This shows how its predictions change layer by layer, like watching a student’s thinking improve through steps of a math problem.

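- Attribution Patching: Take a normal input (clean) and a trickier or wrong input (corrupted). Swap parts of the model’s inner activity between the two runs and see how the output changes. It’s like replacing a part in a circuit to see which component actually causes the light to turn on. Attribution patching uses gradients to estimate the effect of many such swaps at once instead of trying them one by one.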

- Sparse Autoencoders (SAEs): The model’s thoughts can be messy, with many ideas mixed. SAEs rearrange them into neat, labeled bins. This helps pull out clear, separate features and even lets you “steer” them up or down to change the model’s behavior.

- Auto-interpretation: Use another AI to read where a feature strongly activates and give it a human-readable label (like “references to London”).

- Self-interpretation: Ask the model itself to describe a feature you’re steering.

- Steering: Turn a specific feature up or down during the model’s processing (like moving a slider). This can gently push the model to be more cautious, reduce bias, or avoid hallucinations.
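
The Logit Lens idea from the list above can be sketched in a few lines. This is a toy illustration, not the paper's setup: the three-word vocabulary, the `UNEMBED` matrix, and the per-layer states in `RESIDUAL_BY_LAYER` are invented numbers, and the final normalization step that real models apply before decoding is left out for simplicity.

```python
import math

VOCAB = ["rise", "fall", "hold"]

# Hypothetical unembedding matrix: one row of weights per vocabulary token.
UNEMBED = [
    [1.0, 0.0],  # "rise"
    [0.0, 1.0],  # "fall"
    [0.5, 0.5],  # "hold"
]

# Made-up internal states after each layer for a single prompt.
RESIDUAL_BY_LAYER = [
    [0.2, 0.9],  # layer 0
    [0.6, 0.7],  # layer 1
    [1.4, 0.3],  # layer 2 (final)
]

def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def logit_lens(residual):
    """Decode an intermediate state as if it were the final one."""
    logits = [sum(w * h for w, h in zip(row, residual)) for row in UNEMBED]
    probs = softmax(logits)
    top = max(range(len(VOCAB)), key=lambda i: probs[i])
    return VOCAB[top], probs[top]

top_tokens = [logit_lens(h)[0] for h in RESIDUAL_BY_LAYER]

for layer, h in enumerate(RESIDUAL_BY_LAYER):
    token, prob = logit_lens(h)
    print(f"layer {layer}: best guess = {token!r} (p = {prob:.2f})")
```

With these invented numbers the early layers lean toward "fall" and the last layer settles on "rise", which is the kind of layer-by-layer shift the Logit Lens is meant to expose.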

Observation vs. Intervention

- Observation methods: Just watch how the model works inside without changing it (Logit Lens, feature probing, attention analysis).

- Intervention methods: Actively change inner signals and measure the effect (Attribution Patching, SAE steering).
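
As a rough sketch of what an intervention looks like, the toy example below patches cached "clean" activations into a "corrupted" run, one component at a time, and measures how much of the clean output comes back. Everything here is an assumption for illustration: the two-component `run_model`, the names `head_a` and `head_b`, and the inputs. It performs exact patching, one swap per run, which is the procedure that attribution patching approximates with gradients.

```python
def run_model(x, patch=None):
    """Toy two-component model.

    `patch` optionally overrides a component's activation with a cached
    value, for example {"head_a": 2.0}.
    """
    acts = {
        "head_a": 2.0 * x[0],  # strongly drives the output
        "head_b": 0.1 * x[1],  # barely matters
    }
    acts.update(patch or {})
    output = acts["head_a"] + acts["head_b"]
    return output, acts

clean_input = (1.0, 1.0)
corrupted_input = (0.0, 0.0)

# Run both inputs once and cache the clean activations.
clean_out, clean_acts = run_model(clean_input)
corrupted_out, _ = run_model(corrupted_input)

# Patch one clean activation at a time into the corrupted run and record
# the fraction of the clean-vs-corrupted gap that the patch recovers.
recovery = {}
for name, cached in clean_acts.items():
    patched_out, _ = run_model(corrupted_input, patch={name: cached})
    recovery[name] = (patched_out - corrupted_out) / (clean_out - corrupted_out)

most_important = max(recovery, key=recovery.get)
print(recovery)
print("component that matters most:", most_important)
```

A component whose patch recovers most of the gap is a good candidate for closer auditing; one that recovers almost nothing is probably not driving the decision.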

What Did They Find and Why Is It Important?

Here are the main takeaways from the experiments and examples:

- Predictions develop across layers: Using Logit Lens on financial sentences, the model’s early layers might lean toward one answer (for example, “fall”), but deeper layers refine the thinking and often flip to the more sensible answer (“rise”) when the context is positive. In negative contexts (like “recession risk”), deeper layers correctly favor words like “fall” or “decline.” This shows the model builds up its final decision in stages.

- Specific “heads” and layers matter: Attribution patching found that certain layers (like layers 8 and 10 in GPT-2 Small) and specific attention heads strongly affect the final decision. Others can introduce noise. This pinpoints where to audit or improve the model for financial tasks.

- Clearer features with SAEs: Sparse autoencoders can pull apart mixed signals and reveal interpretable features (like sentiment, risk cues, or location references). Labeling and steering these features makes the model more controllable.

- Steering reduces bias and hallucinations: By turning certain features up or down, you can guide outputs toward fairer, safer, and more accurate responses without retraining the entire model. That’s faster and can be more transparent than heavy fine-tuning.

- Better fit for regulations: Because you can show which parts of the model caused a decision, and even adjust them, it’s easier to explain decisions, check for fairness, and meet rules like the EU’s AI requirements.
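
The SAE and steering findings above can be illustrated with a hand-built toy, shown below. It is a sketch under stated assumptions, not the paper's method: the weights `W_ENC` and `W_DEC` are hand-picked rather than trained, the labels are invented, and a real sparse autoencoder has thousands of features learned from model activations.

```python
def relu(values):
    return [max(0.0, v) for v in values]

# Hypothetical, hand-picked weights: a 2-d activation and 3 SAE features.
W_ENC = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]  # one row per feature
B_ENC = [0.0, 0.0, 0.0]
W_DEC = [[1.0, 0.0], [0.0, 1.0], [-0.5, -0.5]]  # one direction per feature
LABELS = ["positive sentiment", "risk cue", "location reference"]

def encode(x):
    """Map an activation to non-negative feature strengths."""
    pre = [
        sum(w * xi for w, xi in zip(row, x)) + b
        for row, b in zip(W_ENC, B_ENC)
    ]
    return relu(pre)

def decode(f):
    """Rebuild the activation as a weighted sum of feature directions."""
    return [sum(fi * row[j] for fi, row in zip(f, W_DEC)) for j in range(2)]

def steer(x, feature, strength):
    """Nudge the activation along one feature's decoder direction."""
    direction = W_DEC[feature]
    return [xi + strength * di for xi, di in zip(x, direction)]

activation = [0.5, 2.0]
features = encode(activation)
reconstruction = decode(features)

# Turn the "risk cue" feature down, like moving a slider.
steered = steer(activation, feature=1, strength=-1.5)
features_after = encode(steered)

for label, before, after in zip(LABELS, features, features_after):
    print(f"{label}: {before} -> {after}")
```

In this toy case only the steered feature changes while the others stay put, which is the appeal of steering: a targeted adjustment at inference time, with no retraining.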

Why This Could Matter in the Real World

Finance is high-stakes: poor decisions can cost a lot of money and break rules. This paper shows practical ways to:

- Audit and explain AI decisions in credit scoring, fraud detection, risk assessment, and trading.

- Detect and reduce bias and hallucinations, improving fairness and trust.

- Adjust model behavior quickly (by steering features) without retraining, which saves time and resources.

- Build stronger compliance and accountability, making it easier to answer regulators’ questions.

In short, opening the black box helps financial institutions use powerful AI safely and responsibly. With tools like SAEs, Logit Lens, and attribution patching, we get both transparency and control, two things that matter a lot when real money and real people are involved.
