- The paper introduces a multi-agent strategy with planning, thought, and execution agents to enable adaptive graph exploration for enhanced LLM reasoning.

- The methodology incorporates a self-reflection module that identifies and corrects semantic errors through reverse reasoning, ensuring improved logical consistency.

- Empirical results on GRBENCH and WebQSP benchmarks demonstrate significant gains in multi-hop reasoning accuracy compared to prior GraphRAG approaches.

Graph Counselor: Enhancing LLM Reasoning through Adaptive Graph Exploration

Introduction to Graph Counselor

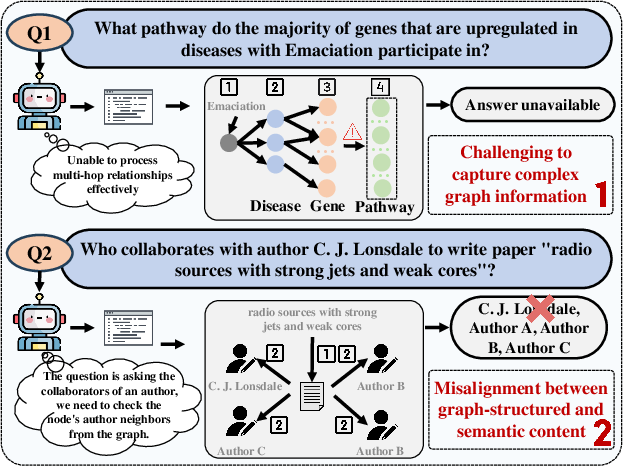

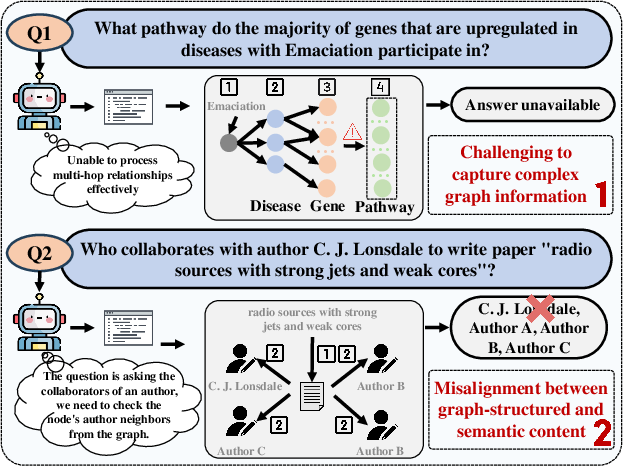

The paper "Graph Counselor: Adaptive Graph Exploration via Multi-Agent Synergy to Enhance LLM Reasoning" presents a method that targets two weaknesses of Graph Retrieval Augmented Generation (GraphRAG). GraphRAG integrates external knowledge by modeling relationships within graph data, but previous methods aggregate information inefficiently and rely on rigid reasoning mechanisms. Graph Counselor addresses both through multi-agent collaboration, which enables adaptive information extraction and dynamic reasoning depth.

Figure 1: Two examples of LLM reasoning that highlight the two challenges.

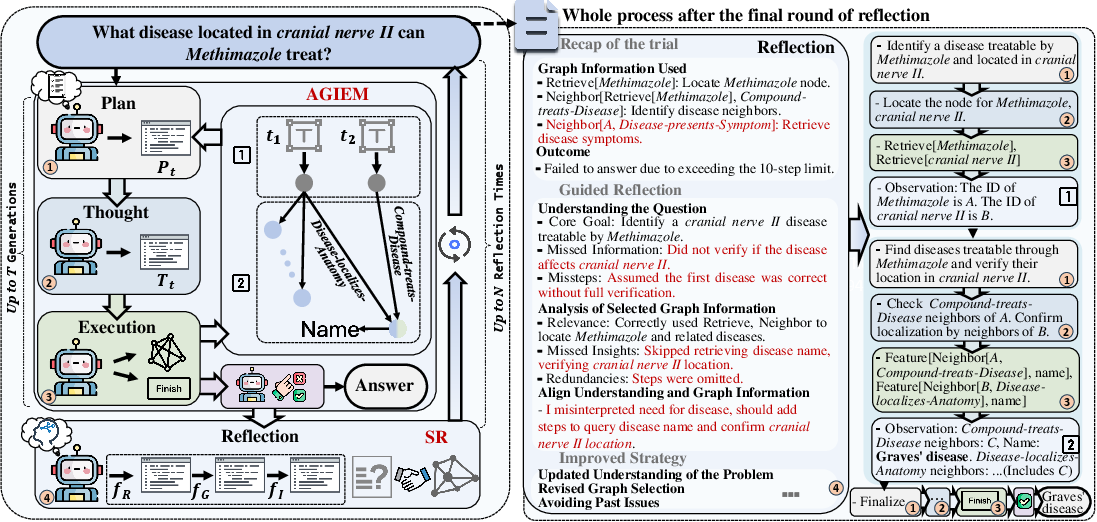

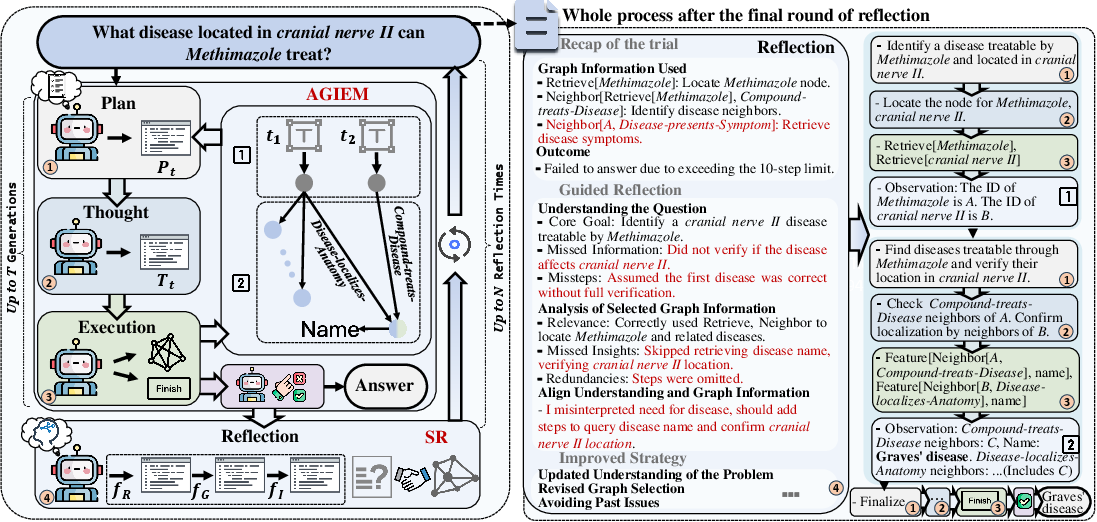

Multi-Agent Synergy in Graph Counselor

The key innovation in Graph Counselor is the Adaptive Graph Information Extraction Module (AGIEM), which uses three collaborative agents (Planning, Thought, and Execution) to enhance reasoning capabilities:

- Planning Agent: It constructs a coherent reasoning pathway by analyzing query requirements and contextual information to structure the reasoning process.

- Thought Agent: It refines information extraction by pinpointing the graph-related knowledge necessary at each reasoning step.

- Execution Agent: It dynamically modifies retrieval strategies based on previous steps, ensuring the extracted knowledge maintains structural integrity and relevance.

This tri-agent approach models complex graph structures more effectively, facilitating precise semantic correction and multi-level dependency modeling.
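The division of labor among the three agents can be illustrated with a minimal sketch. It is not the paper's implementation: the toy graph, the `State` class, and the functions `planning_agent`, `thought_agent`, `execution_agent`, and `answer` are all hypothetical names, and each agent is a plain function standing in for what would be an LLM call.

```python
from dataclasses import dataclass, field

# Toy graph: node -> {relation: [neighbours]}. Illustrative data only.
GRAPH = {
    "paper_A": {"written_by": ["author_X"], "cites": ["paper_B"]},
    "paper_B": {"written_by": ["author_Y"]},
    "author_X": {},
    "author_Y": {},
}

@dataclass
class State:
    question: str
    plan: list       # relations still to follow, in order
    frontier: list   # nodes currently in focus
    trace: list = field(default_factory=list)

def planning_agent(question, start_node, relations):
    """Stand-in for an LLM planner: lay out the reasoning path for the query."""
    return State(question=question, plan=list(relations), frontier=[start_node])

def thought_agent(state):
    """Stand-in for an LLM: name the graph knowledge the next step needs."""
    if not state.plan:
        return None  # nothing left to look up, so reasoning can stop
    return {"relation": state.plan[0], "nodes": list(state.frontier)}

def execution_agent(state, need):
    """Retrieve neighbours for the requested relation, building on the previous step."""
    found = []
    for node in need["nodes"]:
        found.extend(GRAPH.get(node, {}).get(need["relation"], []))
    state.trace.append((need["relation"], found))
    state.plan = state.plan[1:]
    state.frontier = found
    return found

def answer(question, start_node, relations, max_steps=5):
    """Run the plan / think / execute loop until the plan is exhausted."""
    state = planning_agent(question, start_node, relations)
    for _ in range(max_steps):
        need = thought_agent(state)
        if need is None or not state.frontier:
            break
        execution_agent(state, need)
    return state.frontier, state.trace

result, trace = answer(
    "Who wrote the paper cited by paper_A?", "paper_A", ["cites", "written_by"]
)
print(result)  # ['author_Y']
```

The loop ends as soon as the Thought stand-in has nothing more to request, so the number of retrieval steps depends on the question and is not fixed in advance.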

Figure 2: The workflow of Graph Counselor (left), with an example of reasoning process after reflection (right), where red highlights indicate errors and key reflections. The numbers in the boxes (left) correspond to the numbered reasoning steps shown in the process (right).

Self-Reflection and Error Correction

A crucial contribution of Graph Counselor is the Self-Reflection with Multiple Perspectives (SR) module. It mitigates semantic misalignments by evaluating reasoning paths and responses for logical consistency. Through self-reflection and reverse reasoning, SR identifies discrepancies and adjusts input contexts, thereby enhancing semantic coherence and reasoning reliability.
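The idea of checking an answer by reverse reasoning and then adjusting the input context can be sketched as follows. This is a toy under stated assumptions, not the SR module itself: the edge list and the functions `forward`, `reverse_check`, `draft_answer`, and `answer_with_reflection` are hypothetical, and `draft_answer` deliberately simulates a semantic slip (skipping the first hop) that a real LLM reasoner might make.

```python
# Toy edge list (head, relation, tail). Illustrative data only.
EDGES = [
    ("paper_A", "cites", "paper_B"),
    ("paper_B", "written_by", "author_Y"),
    ("paper_A", "written_by", "author_X"),
]

def forward(start, relations):
    """Follow the relations head -> tail from the start node."""
    frontier = {start}
    for rel in relations:
        frontier = {t for h, r, t in EDGES if r == rel and h in frontier}
    return frontier

def reverse_check(candidate, start, relations):
    """Reverse reasoning: walk the path backwards from the answer to the start."""
    frontier = {candidate}
    for rel in reversed(relations):
        frontier = {h for h, r, t in EDGES if r == rel and t in frontier}
    return start in frontier

def draft_answer(start, relations, notes):
    """Stand-in for the LLM reasoner (assumption): with no reflection notes it
    skips the first hop; once notes are present it follows the full path."""
    path = relations if notes else relations[1:]
    return sorted(forward(start, path))

def answer_with_reflection(start, relations, max_reflections=2):
    """Accept an answer only if every candidate survives the reverse check."""
    notes = []
    for attempt in range(max_reflections + 1):
        candidates = draft_answer(start, relations, notes)
        failed = [c for c in candidates if not reverse_check(c, start, relations)]
        if candidates and not failed:
            return candidates, attempt
        # Adjust the input context with what went wrong, then retry.
        notes.append(f"answers {failed} do not lead back to {start}")
    return [], max_reflections

print(answer_with_reflection("paper_A", ["cites", "written_by"]))
# (['author_Y'], 1)
```

The first draft returns `author_X`, which fails the backward walk because no paper citing `paper_A` exists in the toy graph; the note added to the context triggers a corrected second draft.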

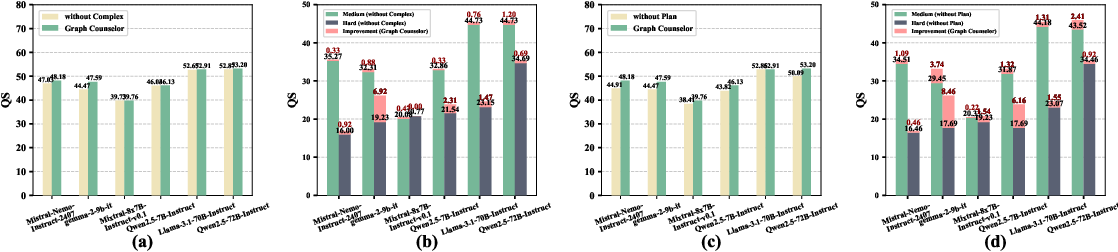

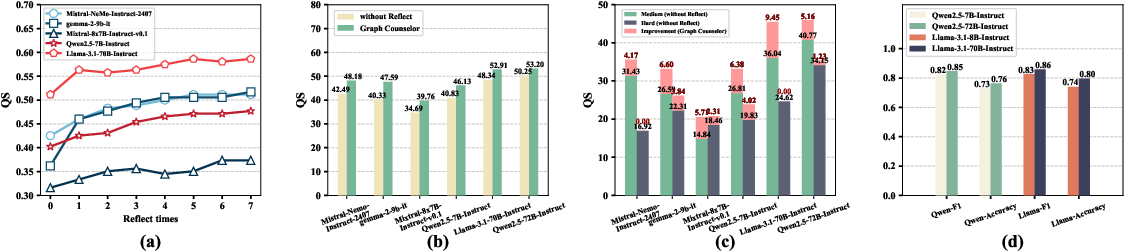

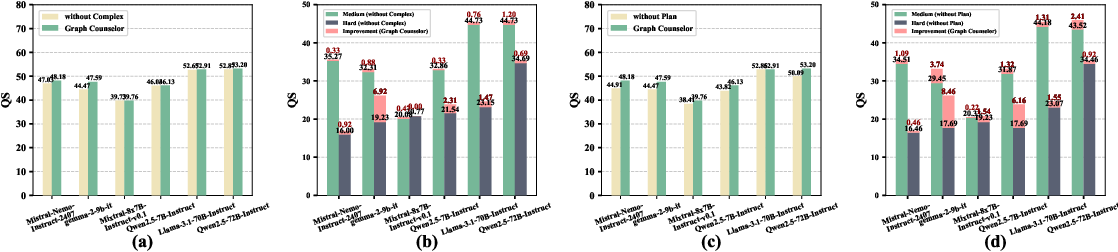

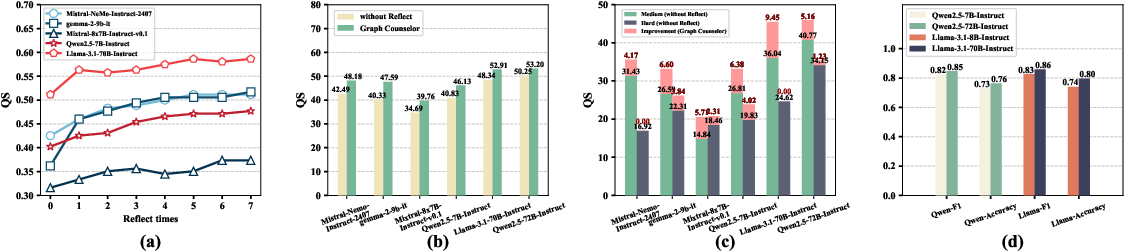

Results and Evaluation

Graph Counselor has been empirically validated on various benchmarks, including the GRBENCH and WebQSP datasets. It consistently surpasses existing methods in reasoning accuracy and generalization ability. Notably, Graph Counselor outperforms GraphRAG and Graph-CoT in multi-hop reasoning tasks, addressing challenges such as information aggregation inefficiency and rigid reasoning depth adjustment.

Figure 3: (a) Graph Counselor compared with the variant without Complex on GRBENCH; (b) the same comparison on GRBENCH across different levels; (c) Graph Counselor compared with the variant without Plan on GRBENCH; (d) the same comparison on GRBENCH across different levels.

Practical Implications

Graph Counselor demonstrates significant improvements in graph-based reasoning tasks, offering a robust framework that effectively combines retrieval augmentation with adaptive graph exploration. Its applications extend to domains requiring complex knowledge integration, such as healthcare, legal analysis, and scientific research, where structured knowledge retrieval and dynamic reasoning are pivotal.

Computational and Resource Considerations

Graph Counselor improves reasoning at the price of higher computational demand, a consequence of its iterative, multi-agent processing. The substantial improvement in reasoning outcomes is presented as justifying this trade-off between accuracy and compute cost.
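A back-of-the-envelope count shows how iteration and multiple agents multiply LLM calls. The cost model below is an assumption made for illustration, not a figure from the paper: one planning call per pass, a fixed number of agent calls per reasoning step, and one reflection call per extra pass. The function name `estimated_llm_calls` and its parameters are hypothetical.

```python
def estimated_llm_calls(steps_per_pass, reflections,
                        agent_calls_per_step=2, planning_calls=1,
                        reflection_calls=1):
    """Rough call count under an assumed cost model (not measured data)."""
    passes = reflections + 1                      # initial pass plus one per reflection
    per_pass = planning_calls + steps_per_pass * agent_calls_per_step
    return passes * per_pass + reflections * reflection_calls

single_pass = estimated_llm_calls(steps_per_pass=3, reflections=0)
with_reflection = estimated_llm_calls(steps_per_pass=3, reflections=2)
print(single_pass, with_reflection)  # 7 23
```

Under these assumptions, two reflection rounds on a three-step question roughly triple the number of calls, which is the kind of overhead the accuracy gains have to offset.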

Figure 4: (a) Performance of Graph Counselor with different numbers of reflection rounds; (b) Graph Counselor compared with the variant without Reflect on GRBENCH; (c) the same comparison on GRBENCH across different levels; (d) Reflect performance for models of different sizes.

Conclusion

Graph Counselor emerges as a promising approach for overcoming existing limitations in GraphRAG methodologies. By leveraging multi-agent synergy and self-reflection mechanisms, it presents a comprehensive solution for enhancing LLM reasoning with graph-based information. Future research could focus on optimizing the interactive iteration efficiency and exploring multimodal knowledge representations. The findings of this paper represent a step toward more robust and contextually aware systems that can be applied across various specialized domains.