- The paper introduces FA-INR, which leverages adaptive encoding via cross-attention and a Mixture of Experts to significantly enhance surrogate model fidelity.

- It employs a learnable memory bank for dynamic spatial feature encoding, addressing the limitations of rigid INR structures while reducing computational load.

- Experimental results across oceanography, cosmology, and fluid dynamics demonstrate superior accuracy, model compactness, and reliable predictions.

High-Fidelity Scientific Simulation Surrogates via Adaptive Implicit Neural Representations

Scientific simulations are a fundamental tool for exploring complex physical systems, but they often require significant computational power and time. This paper introduces Feature-Adaptive Implicit Neural Representation (FA-INR), a method designed to provide high-fidelity surrogates for scientific simulations. FA-INR addresses the limitations of the rigid geometric structures used in traditional implicit neural representations (INRs) by replacing them with adaptive, flexible feature representations built on cross-attention and a Mixture of Experts (MoE) framework. The method improves model fidelity and compactness while capturing localized, high-frequency variations.

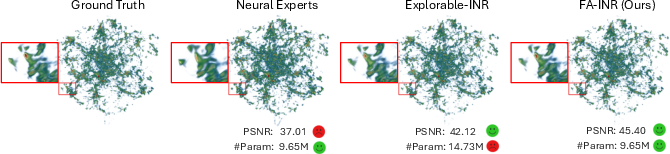

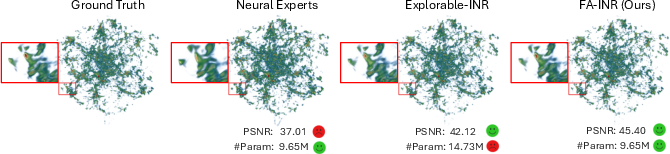

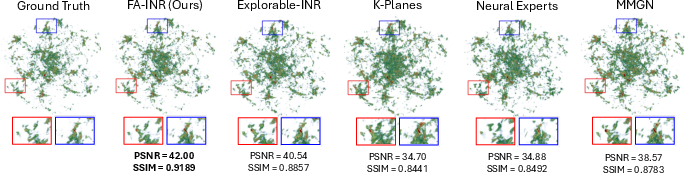

Figure 1: FA-INR outperforms alternative INR methods, including Neural Experts.

Implicit Neural Representations in Surrogate Modeling

Problem Definition and Application

INRs offer a continuous and compact framework for surrogate modeling: a network maps input coordinates and simulation conditions to target variables. Their predictions are resolution-independent, but they struggle with localized complexity. FA-INR proposes cross-attention over an augmented memory bank to address this fidelity gap. The approach allocates model capacity according to data characteristics rather than predefined structures, preserving compactness while enhancing fidelity.
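To make the mapping concrete, the following is a minimal sketch of a coordinate-based surrogate, not the architecture from the paper. The names `make_mlp` and `inr_forward`, the layer sizes, the sine activation, and the random untrained weights are all illustrative assumptions. It only shows that an INR can be queried at any continuous coordinate together with a vector of simulation parameters.

```python
import math
import random

def make_mlp(sizes, seed=0):
    """Create random (untrained) weights for a small fully connected network."""
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
                   for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers

def inr_forward(layers, coords, sim_params):
    """Map one (coordinate, simulation parameters) pair to a scalar prediction."""
    h = list(coords) + list(sim_params)  # concatenate the two inputs
    for i, (weights, biases) in enumerate(layers):
        h = [sum(w * x for w, x in zip(row, h)) + b
             for row, b in zip(weights, biases)]
        if i < len(layers) - 1:
            h = [math.sin(v) for v in h]  # sine activation: an illustrative choice
    return h[0]

# 3 spatial coordinates + 2 simulation parameters -> 1 scalar field value
layers = make_mlp([3 + 2, 16, 16, 1])
value = inr_forward(layers, (0.25, 0.5, 0.75), (0.1, 0.9))
```

Because the input is a continuous coordinate rather than a grid index, the same function can be evaluated at any resolution after training.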

Challenges in INRs

Traditional INR models often rely on explicit grid-based feature encodings to capture high-frequency details, which increases memory usage and limits scalability. These encodings can be inefficient, and their fixed layout limits how well the model adapts to the data's inherent characteristics.
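The scaling concern can be illustrated with simple parameter counting. The sketch below is a back-of-the-envelope comparison under assumed sizes: the resolutions, feature widths, and slot count are hypothetical and are not taken from the paper, and the helper names are made up for this example.

```python
def dense_grid_params(resolution, dims, features):
    """Parameters in a dense feature grid: one feature vector per grid vertex."""
    return resolution ** dims * features

def memory_bank_params(num_slots, key_dim, value_dim):
    """Parameters in a key-value memory bank: one key and one value per slot."""
    return num_slots * (key_dim + value_dim)

# Doubling the resolution of a 3D grid multiplies its size by 2**3 = 8.
grid_64 = dense_grid_params(64, 3, 16)
grid_128 = dense_grid_params(128, 3, 16)

# A memory bank's size depends on the slot count, not on spatial resolution.
bank = memory_bank_params(1024, 16, 16)
```

With these assumed sizes, the grid grows from roughly 4.2 million to 33.6 million parameters when the resolution doubles, while the memory bank stays at 32,768 regardless of the resolution at which the field is queried.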

Feature-Adaptive Implicit Neural Representation

Adaptive Encoding

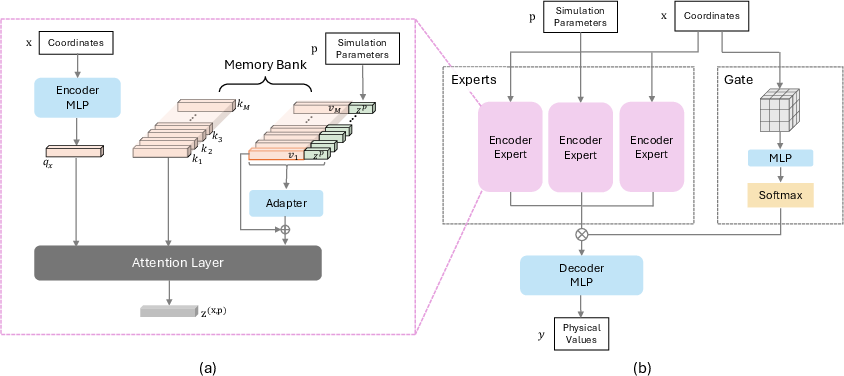

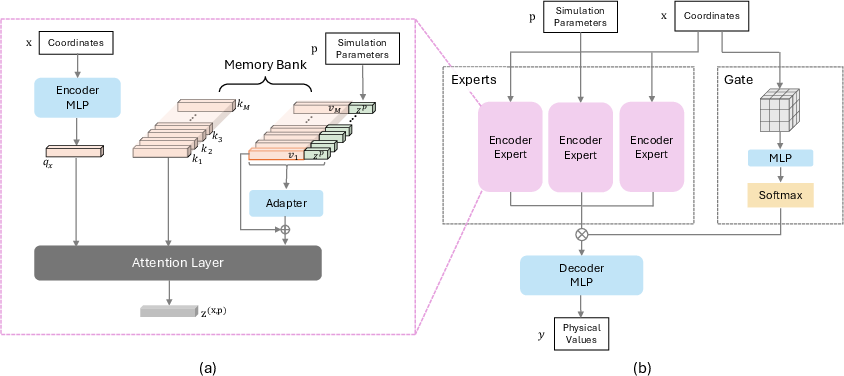

FA-INR employs a learnable memory bank for spatial encoding and integrates simulation parameters through a value adapter mechanism. At each query location, cross-attention retrieves the memory entries most relevant to that location, so the encoding follows the data rather than a fixed layout, improving model flexibility and fidelity.

Figure 2: Architecture of the proposed FA-INR, showcasing the adaptive feature routing mechanism.
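The retrieval step described above can be sketched as scaled dot-product cross-attention over a small key-value memory. This is a minimal illustration under stated assumptions, not the paper's implementation. In particular, the source does not specify the value adapter here, so `adapt_values` models it as a per-channel scale and shift, which is a hypothetical stand-in. In a trained model the keys, values, and adapter outputs would be learned.

```python
import math
import random

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(query, keys, values):
    """Attend from one query vector to a memory bank of key-value pairs."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    weights = softmax(scores)
    feature = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
    return feature, weights

def adapt_values(values, scale, shift):
    """Hypothetical value adapter: per-channel scale and shift that would be
    derived from the simulation parameters (assumed form, not from the paper)."""
    return [[v * s + t for v, s, t in zip(row, scale, shift)] for row in values]

rng = random.Random(0)
num_slots, dim = 8, 4
keys = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_slots)]
values = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_slots)]

query = [0.3, -0.1, 0.7, 0.2]  # stands in for an encoded spatial coordinate
scale = [1.0, 0.5, 2.0, 1.0]   # stand-ins for adapter outputs
shift = [0.0, 0.1, 0.0, -0.1]

feature, weights = cross_attention(query, keys, adapt_values(values, scale, shift))
```

The attention weights form a probability distribution over memory slots, so each location draws its feature from whichever entries match it best instead of from fixed grid neighbors.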

Mixture of Experts Framework

The MoE framework lets individual experts specialize in different parts of the spatial encoding. A gating mechanism routes each query to experts based on its spatial features, so only the relevant experts contribute to a given prediction. This improves representation efficiency and prediction quality on high-dimensional scientific data.
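A gating mechanism of this kind can be sketched as follows. This is a generic top-k Mixture of Experts router, offered as an illustration only. The linear gate, the value of `k`, and the constant-valued toy experts are assumptions, and the paper's actual routing may differ.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_gate(logits, k):
    """Keep the k largest gate logits and renormalize them with a softmax."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top])
    return dict(zip(top, probs))

def moe_forward(x, gate_weights, experts, k=1):
    """Route input x to its top-k experts and mix their outputs."""
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routing = top_k_gate(logits, k)
    output = None
    for index, prob in routing.items():
        expert_out = experts[index](x)  # only the selected experts are evaluated
        if output is None:
            output = [0.0] * len(expert_out)
        output = [o + prob * e for o, e in zip(output, expert_out)]
    return output

# Toy setup: three experts that each return a constant, and a linear gate.
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]
experts = [lambda x: [10.0], lambda x: [20.0], lambda x: [30.0]]

x = [0.9, 0.1]                                       # gate logits: 0.9, 0.1, -1.0
top1 = moe_forward(x, gate_weights, experts, k=1)    # uses expert 0 only
top2 = moe_forward(x, gate_weights, experts, k=2)    # mixes experts 0 and 1
```

With `k=1` the output is exactly expert 0's value, and with `k=2` it lies between the outputs of experts 0 and 1. Evaluating only the selected experts is what keeps the cost per query low as the number of experts grows.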

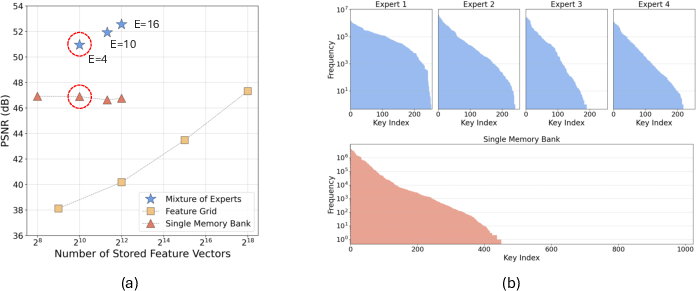

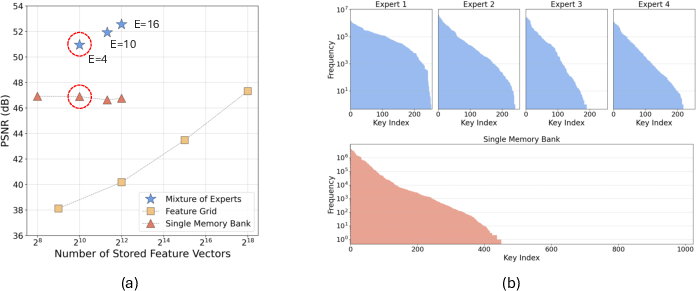

Figure 3: Comparison of scalability among strategies on the MPAS-Ocean dataset, illustrating effective scaling using MoE.

Experimental Evaluation

Setup and Metrics

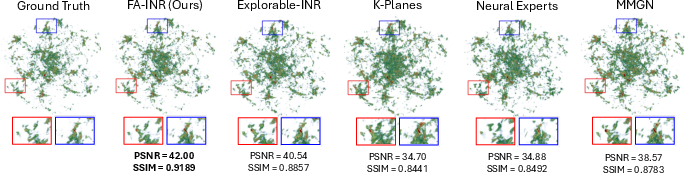

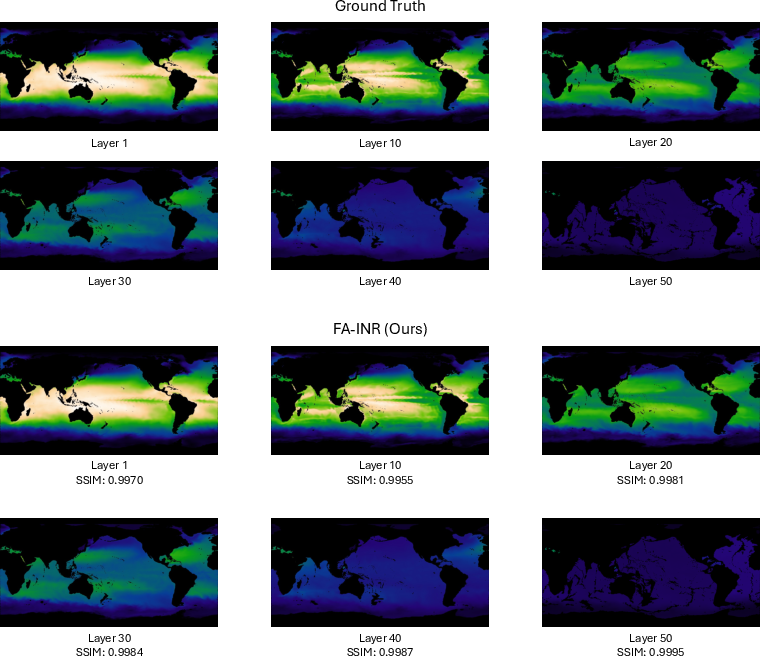

FA-INR is evaluated on datasets from oceanography, cosmology, and fluid dynamics. The metrics are PSNR, SSIM, and MD, which together measure numerical accuracy and visual fidelity. In the reported results, FA-INR consistently outperforms the baseline models, achieving higher PSNR with smaller model sizes and more reliable predictions.

Figure 4: Rendering results comparing FA-INR with baseline models on the Nyx dataset.
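For reference, two of the metrics can be computed as in the sketch below. Two points are assumptions: PSNR is computed here with the reference data range as the peak value, which is a common convention for scientific fields but may not match the paper's exact definition, and MD is read as the maximum absolute difference. SSIM is omitted because it needs a windowed implementation that is beyond a short example.

```python
import math

def psnr(reference, prediction):
    """Peak signal-to-noise ratio in dB, using the reference data range as peak
    (an assumed convention)."""
    mse = sum((r - p) ** 2 for r, p in zip(reference, prediction)) / len(reference)
    if mse == 0:
        return math.inf  # identical fields
    data_range = max(reference) - min(reference)
    return 10.0 * math.log10(data_range ** 2 / mse)

def max_difference(reference, prediction):
    """Largest absolute pointwise error (assumed meaning of MD)."""
    return max(abs(r - p) for r, p in zip(reference, prediction))

# Toy flattened fields: a single value is off by 1.0.
reference = [0.0, 1.0, 2.0, 4.0]
prediction = [0.0, 1.0, 2.0, 3.0]

score = psnr(reference, prediction)           # 10 * log10(16 / 0.25)
worst = max_difference(reference, prediction)
```

PSNR summarizes the average error over the whole field, while the maximum difference exposes the single worst point, which is why the two are usually reported together.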

Ablation Studies

The ablation studies compare different spatial encoding strategies and confirm that adaptive key-value memory banks are more effective than rigid grids or planes. Combining MoE with specialized encoder experts yields higher fidelity while using computational resources efficiently.

Implications and Future Work

FA-INR advances surrogate modeling for scientific simulations by balancing compactness and fidelity. Future work could explore applications in other scientific domains and integration with real-time simulation systems. The approach also opens pathways toward more transparent and interpretable model predictions, which are crucial for scientific exploration and decision-making.

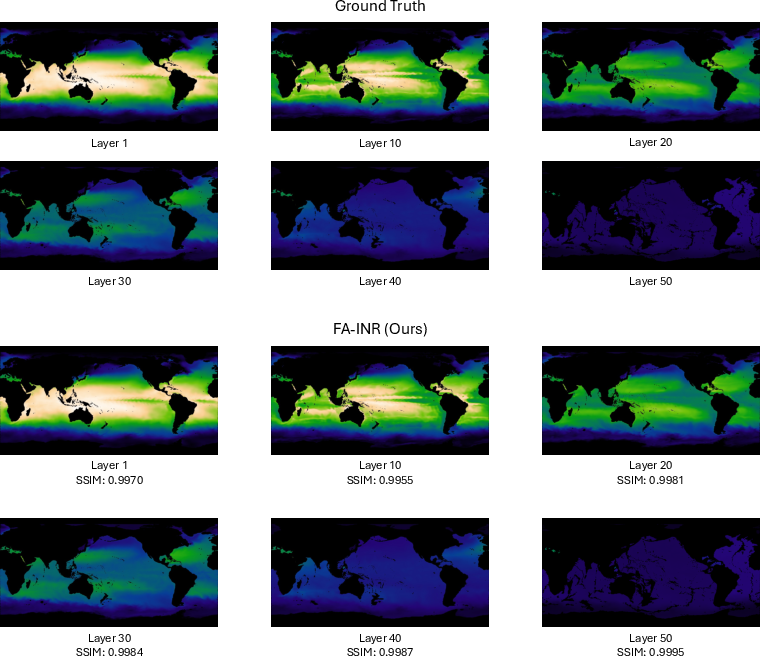

Figure 5: Visualization of high-variation features in MPAS-Ocean, with prediction errors highlighted.

Conclusion

Feature-Adaptive Implicit Neural Representation (FA-INR) offers a flexible framework for surrogate modeling in scientific simulations. It addresses the limitations of traditional INRs through adaptive feature encoding and expert-driven predictions. Together, these enable high-fidelity modeling at low computational cost and support real-time scientific analysis and exploration.