Too Big to Think: Capacity, Memorization, and Generalization in Pre-Trained Transformers

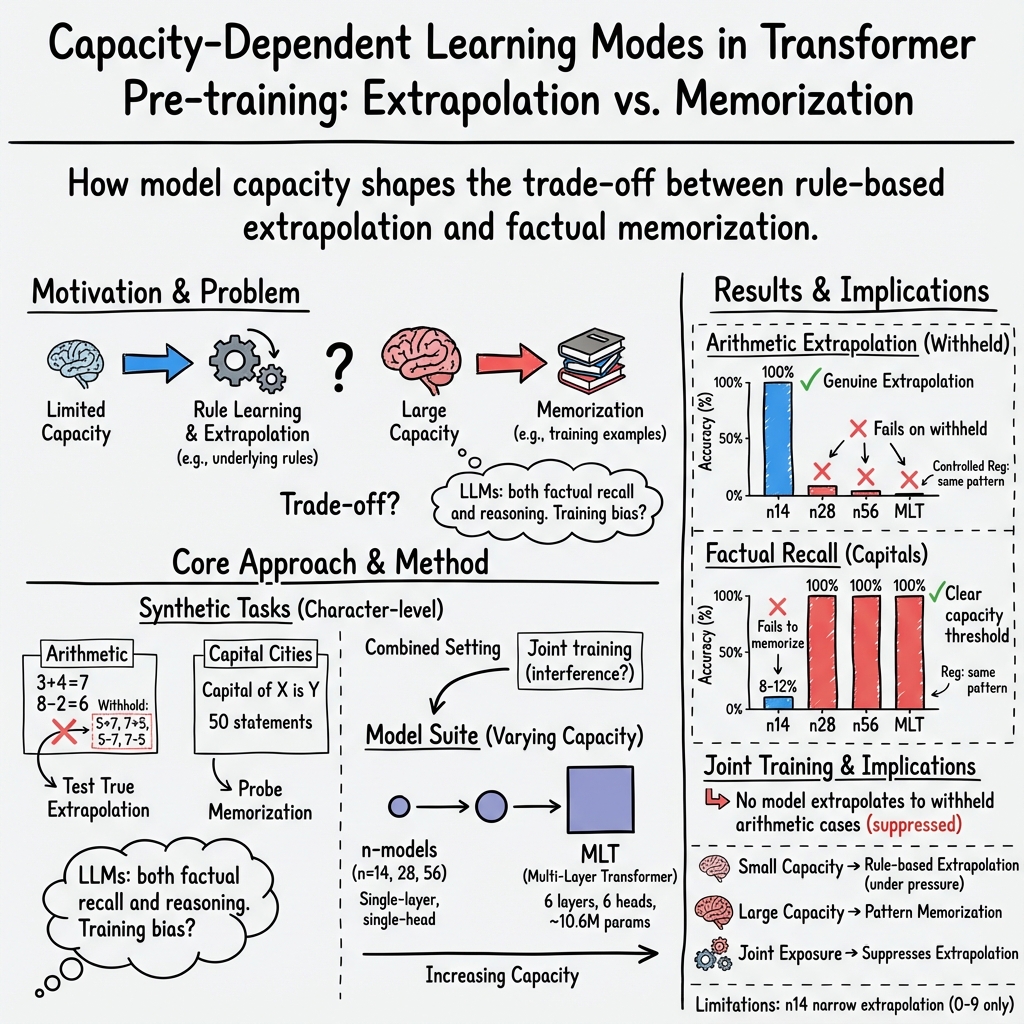

Abstract: The relationship between memorization and generalization in LLMs remains an open area of research, with growing evidence that the two are deeply intertwined. In this work, we investigate this relationship by pre-training a series of capacity-limited Transformer models from scratch on two synthetic character-level tasks designed to separately probe generalization (via arithmetic extrapolation) and memorization (via factual recall). We observe a consistent trade-off: small models extrapolate to unseen arithmetic cases but fail to memorize facts, while larger models memorize but fail to extrapolate. An intermediate-capacity model exhibits a similar shift toward memorization. When trained on both tasks jointly, no model (regardless of size) succeeds at extrapolation. These findings suggest that pre-training may intrinsically favor one learning mode over the other. By isolating these dynamics in a controlled setting, our study offers insight into how model capacity shapes learning behavior and offers broader implications for the design and deployment of small LLMs.

Explain it Like I'm 14

What is this paper about?

This paper asks a simple but important question: when we train AI language models, do they learn real rules (like how to do math), or do they mostly memorize facts (like “the capital of France is Paris”)? The authors show that the size of the model strongly affects which of these two styles it learns.

What questions were they asking?

The researchers focused on three easy-to-understand questions:

- If you make a model very small, does it learn the “rules” behind patterns (generalization), like doing math it hasn’t seen before?

- If you make a model bigger, does it get better at memorizing facts (like capital cities), even if it stops learning rules?

- If you train a model on both math and facts at the same time, can any model learn both rule-like generalization and memorized facts together?

How did they study it?

They built and trained several “Transformer” models. You can think of a Transformer as a smart text predictor—like a phone keyboard that guesses the next character or word—but much more powerful.

To keep things simple and controlled, they created two tiny, synthetic (made-up) datasets at the character level (letter-by-letter), so nothing fancy about words gets in the way:

- A math set: short addition and subtraction problems with single digits (like “<3+4=7>”). They deliberately left out all problems that pair 5 with 7 (like 5+7, 7+5, 5−7, 7−5). If a model solves those anyway, it’s a sign it learned the rule and can “extrapolate” to unseen cases.

- A facts set: 50 short sentences like “Capital of X is Y.” This is pure memorization—there’s no rule to discover, just remembering.
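The two datasets described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' code. It assumes that operands range over 0 to 9, that the held-out cases are exactly those combining 5 and 7, and that negative results are written with a leading minus sign. The function names are hypothetical.

```python
from itertools import product

# Assumption: a problem is held out when its two operands are exactly 5 and 7.
HELD_OUT_PAIR = {5, 7}


def format_problem(a: int, op: str, b: int) -> str:
    """Render one problem in the '<3+4=7>' style quoted in the summary."""
    result = a + b if op == "+" else a - b
    return f"<{a}{op}{b}={result}>"


def format_fact(country: str, capital: str) -> str:
    """Render one fact using the 'Capital of X is Y.' template."""
    return f"Capital of {country} is {capital}."


def build_arithmetic_split():
    """Split all single-digit +/- problems into training and held-out lists."""
    train, held_out = [], []
    for a, b, op in product(range(10), range(10), "+-"):
        example = format_problem(a, op, b)
        if {a, b} == HELD_OUT_PAIR:
            held_out.append(example)
        else:
            train.append(example)
    return train, held_out


train, held_out = build_arithmetic_split()

# Character-level vocabulary: every distinct character seen in training.
vocab = sorted(set("".join(train)))
```

Under these assumptions, only four problems are held out, and every character they use also appears in the training set. The model has therefore seen all the symbols it needs, just never this particular combination.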

They trained multiple models of different sizes—from very small (“small brain”) to quite large (“big brain”)—and checked:

- Can they do the held‑out math (like 5+7)?

- Can they recite the capital city facts perfectly?

- What happens if you mix both tasks together?
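One way to picture these checks is an exact-match test: give the model the start of a problem and see whether it writes the right ending. The sketch below is hypothetical. The summary does not describe the paper's actual evaluation code, so two toy stand-ins replace trained models: a lookup table that only recalls what it saw, and a function that applies the arithmetic rule.

```python
from typing import Callable


def exact_match_accuracy(complete: Callable[[str], str], examples: list[str]) -> float:
    """Fraction of examples whose answer is reproduced exactly from the prompt."""
    correct = 0
    for example in examples:
        prompt, _, answer = example.partition("=")
        if complete(prompt + "=") == answer:  # e.g. "<5+7=" should yield "12>"
            correct += 1
    return correct / len(examples)


def make_memorizer(seen: list[str]) -> Callable[[str], str]:
    """Toy stand-in for a memorizing model: a lookup table of seen examples."""
    table = {}
    for example in seen:
        prompt, _, answer = example.partition("=")
        table[prompt + "="] = answer
    return lambda prompt: table.get(prompt, "")


def rule_follower(prompt: str) -> str:
    """Toy stand-in for a generalizing model: applies the arithmetic rule."""
    a, op, b = int(prompt[1]), prompt[2], int(prompt[3])
    return f"{a + b if op == '+' else a - b}>"


seen = ["<3+4=7>", "<5+2=7>", "<7-1=6>"]
unseen = ["<5+7=12>", "<7-5=2>"]

memorizer = make_memorizer(seen)
memorizer_seen = exact_match_accuracy(memorizer, seen)
memorizer_unseen = exact_match_accuracy(memorizer, unseen)
rule_unseen = exact_match_accuracy(rule_follower, unseen)
```

The lookup table is perfect on the examples it saw and gets none of the unseen ones, while the rule-based function handles both. That contrast is the pattern the held-out cases are designed to expose.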

They also double-checked that their results weren’t caused by specific training tricks (like dropout or weight decay) and even tried very long training to see if models would eventually “grok” (switch from memorizing to understanding rules). The models never made that switch.

What did they find?

Here’s the big picture:

- Small models learned rules but not facts:

  - The tiniest successful model could do single-digit math it never saw before (like 5+7), which means it learned a simple math rule for that limited range.

  - But it couldn’t memorize the 50 capital city facts well.

- Larger models memorized facts but stopped generalizing:

  - Bigger models perfectly memorized the capital city sentences.

  - But they failed on the held‑out math cases (like 5+7), even though they did great on the math examples they saw during training. In other words, they didn’t learn the rule; they memorized patterns.

- Training both tasks together made generalization worse for everyone:

  - When math and facts were mixed, no model, small or large, could do the held‑out math cases (like 5+7).

  - Larger models still memorized facts well, but even the small model lost its earlier math generalization.

- The “rule learning” was narrow:

  - The small model’s math skill didn’t extend beyond single digits. When tested on bigger numbers (like up to 19), it failed. So its “rule” was shallow and limited to what it had seen.

- Extra checks didn’t change the story:

  - Matching regularization (training settings) across models didn’t fix the trade-off.

  - Extremely long training didn’t make the big model switch from memorizing to understanding the math rule.

Why this matters: It shows a consistent trade-off. Small models tend to discover simple rules when they can’t memorize everything. Bigger models tend to memorize lots of examples and stop learning rules that help on unseen cases.

Why is this important?

- It reveals a built-in trade-off: With limited capacity, models can be pushed toward learning simple, general patterns. With more capacity, they often choose memorization instead. This affects how we design and train models, especially small ones.

- It warns about training on mixed objectives: When you train a model to both reason (math-like rules) and memorize facts at the same time, the reasoning can suffer.

- It connects to “hallucinations”: If we push models to memorize a lot of facts, we may accidentally weaken their ability to generalize, which can hurt reasoning and reliability.

What does this mean for the future?

- One-size-fits-all models may hit limits: If memorization crowds out generalization, then simply making models bigger or feeding them mixed tasks might not produce the best reasoning.

- Consider specialized or modular designs: Separate components for “facts” and “rules” might help—one part memorizes, another part reasons—so they don’t interfere with each other.

- Small models can be useful: In settings where doing more with less is important (like robotics or on-device AI), small models might actually be better at learning simple rules, even if they remember fewer facts.

In short: The paper shows that model size steers how models learn—small models are nudged toward understanding simple patterns, while larger ones tend to memorize. Mixing tasks can make generalization worse. This insight can guide how we design and train future AI systems.
