- The paper introduces TreeLoRA, which uses a hierarchical gradient-similarity tree to guide adaptive, sparse parameter updates for continual learning.

- It employs bandit algorithms with a Lower Confidence Bound approach to efficiently identify task similarities and preserve previously learned knowledge.

- Experimental results demonstrate up to 3.2x speedup for vision transformers and 2.4x for LLMs, highlighting its practical efficiency over state-of-the-art methods.

TreeLoRA: Efficient Continual Learning via Layer-Wise LoRAs Guided by a Hierarchical Gradient-Similarity Tree

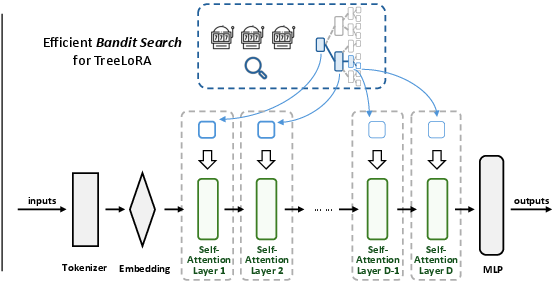

The paper introduces TreeLoRA, an approach designed for efficient continual learning using large pre-trained models (LPMs). TreeLoRA leverages hierarchical gradient similarity to construct layer-wise adapters that facilitate efficient adaptation to new tasks while preserving previous knowledge. The approach employs bandit algorithms for exploring the hierarchical task structure, aiming to mitigate the computational burdens of task similarity estimation and parameter optimization in LPMs.

In continual learning, tasks arrive sequentially with different data distributions, and adapting to each new distribution can cause catastrophic forgetting of earlier tasks. TreeLoRA addresses this by exploiting the hierarchical structure among tasks. The problem setup has two stages: offline initialization, where a pre-trained model is established, and online adaptation, where new tasks are handled sequentially. The goal is to achieve high accuracy on both new and previous tasks while limiting forgetting, which is measured by backward transfer (Bwt).
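To make the two evaluation quantities concrete, here is a minimal sketch that computes final average accuracy and backward transfer from an accuracy matrix. It assumes the commonly used definition of backward transfer (the average change in accuracy on each earlier task between the time it was learned and the end of training); the paper's exact formula may differ, and the function names and the example numbers are illustrative only.

```python
def average_accuracy(acc):
    """Mean accuracy over all tasks after the final task has been learned.

    acc[t][i] is the accuracy on task i measured after training on task t
    (both 0-indexed). Only the last row is used here.
    """
    num_tasks = len(acc)
    return sum(acc[num_tasks - 1][i] for i in range(num_tasks)) / num_tasks


def backward_transfer(acc):
    """Average of (final accuracy on task i) - (accuracy right after learning task i).

    Negative values indicate forgetting. The last task is excluded because
    nothing is learned after it.
    """
    num_tasks = len(acc)
    if num_tasks < 2:
        return 0.0
    diffs = [acc[num_tasks - 1][i] - acc[i][i] for i in range(num_tasks - 1)]
    return sum(diffs) / (num_tasks - 1)


# Made-up accuracy matrix for three tasks (illustrative numbers, not from the paper).
example_acc = [
    [0.90, 0.00, 0.00],
    [0.85, 0.80, 0.00],
    [0.80, 0.75, 0.70],
]
print(round(average_accuracy(example_acc), 3))   # 0.75
print(round(backward_transfer(example_acc), 3))  # -0.075
```

In this toy example, accuracy on the first two tasks drops by 0.10 and 0.05 after later training, so backward transfer is negative.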

Approach Details

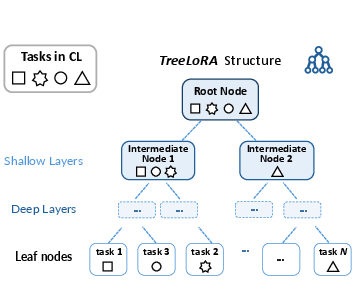

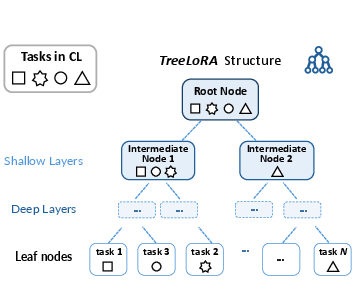

TreeLoRA constructs a hierarchical task-similarity tree structure based on gradient direction similarity, capturing shared knowledge among tasks and handling conflicting tasks separately. Specifically, low-level task patterns are captured by shallow nodes, while high-level task-specific semantics are associated with deeper nodes.
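As a rough illustration of comparing gradient directions layer by layer, the sketch below computes a per-layer cosine similarity between the gradients of two tasks. Cosine similarity is an assumption chosen here because it is a standard measure of direction agreement; the summary does not state which measure the paper uses. The function names and the flat-list gradient representation are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def layerwise_similarity(grads_a, grads_b):
    """Return one similarity score per layer.

    grads_a and grads_b are lists with one flattened gradient vector per layer,
    ordered from the shallowest layer to the deepest.
    """
    return [cosine(ga, gb) for ga, gb in zip(grads_a, grads_b)]


# Two toy tasks that agree on the first layer and conflict on the second.
task_a = [[1.0, 0.0], [0.0, 1.0]]
task_b = [[2.0, 0.0], [0.0, -3.0]]
print(layerwise_similarity(task_a, task_b))
```

Under this assumed measure, tasks whose gradients agree at a given layer would share a branch at that level of the tree, while tasks that disagree would be split into separate branches.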

Adaptive Tree Construction

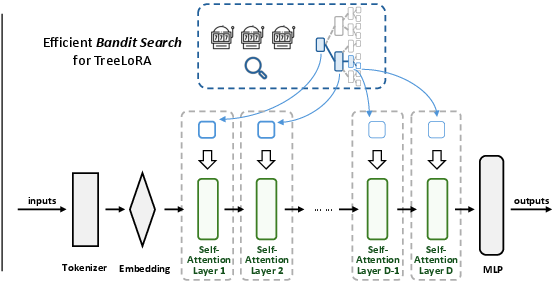

Computing the similarity between a new task and every previous task is expensive. TreeLoRA therefore casts similarity estimation as a multi-armed bandit problem in which gradient-direction similarity is queried sequentially. The algorithm uses a Lower Confidence Bound (LCB) rule to identify promising task branches, and searching over the tree structure is more efficient than running a bandit algorithm that ignores this structure.
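The sketch below shows a generic LCB selection rule over a flat set of candidate branches, where each query returns a noisy distance (lower means more similar). It is not the paper's algorithm: the confidence bonus, the `sample_distance` callback, and all parameter names are assumptions made for illustration. A tree-structured search could apply the same rule among the children of each node while descending.

```python
import math
import random


def lcb_select(sample_distance, n_arms, n_rounds, c=1.0):
    """Pick the arm with the lowest estimated distance using an LCB rule.

    sample_distance(arm) returns one noisy distance observation for that arm.
    Returns (best_arm, pull_counts).
    """
    if n_rounds < n_arms:
        raise ValueError("n_rounds must be at least n_arms so every arm is tried once")
    counts = [0] * n_arms
    means = [0.0] * n_arms
    for t in range(1, n_rounds + 1):
        if t <= n_arms:
            arm = t - 1  # initial pass: query every arm once
        else:
            # Optimistic (lower) estimate of each arm's distance.
            arm = min(
                range(n_arms),
                key=lambda k: means[k] - c * math.sqrt(2.0 * math.log(t) / counts[k]),
            )
        observation = sample_distance(arm)
        counts[arm] += 1
        means[arm] += (observation - means[arm]) / counts[arm]  # running mean
    best_arm = min(range(n_arms), key=lambda k: means[k])
    return best_arm, counts


# Toy setup: three candidate branches with hidden mean distances.
true_distance = [0.9, 0.2, 0.6]
rng = random.Random(0)
best, pulls = lcb_select(lambda k: true_distance[k] + rng.gauss(0.0, 0.05), 3, 200)
print(best, pulls)
```

The rule spends most queries on branches that still look promising, which is what makes sequential querying cheaper than estimating every similarity to full precision.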

Sparse Updates

The method performs sparse updates by modifying only the relevant model parameters based on hierarchical task similarity. A regularization term guides these updates, preserving acquired task-specific knowledge while minimizing storage and computational overhead compared to full model parameter updates.
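The summary does not spell out the form of the regularization term, so the sketch below is only one plausible reading: a gradient step restricted to a chosen subset of layers, with a squared-distance penalty that pulls the updated parameters toward an anchor (for example, the adapter of the most similar earlier task). The function, its parameters, and the penalty form are all assumptions.

```python
def sparse_regularized_step(params, grads, anchor, active_layers, lr=0.1, lam=0.5):
    """One gradient step on loss + (lam / 2) * ||params - anchor||^2.

    Only layers whose index is in active_layers are updated; all other layers
    are copied unchanged. params, grads and anchor are lists with one flat
    list of floats per layer.
    """
    updated = []
    for layer, (p, g, a) in enumerate(zip(params, grads, anchor)):
        if layer not in active_layers:
            updated.append(list(p))  # frozen layer: no update
            continue
        updated.append(
            [p_i - lr * (g_i + lam * (p_i - a_i)) for p_i, g_i, a_i in zip(p, g, a)]
        )
    return updated


# Toy example: update only layer 0 and leave layer 1 untouched.
params = [[1.0, 2.0], [3.0]]
grads = [[0.5, -0.5], [1.0]]
anchor = [[0.0, 2.0], [0.0]]
print(sparse_regularized_step(params, grads, anchor, active_layers={0}))
```

Restricting the step to the active layers is what keeps storage and compute below a full-parameter update, while the penalty discourages drifting away from knowledge shared with similar tasks.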

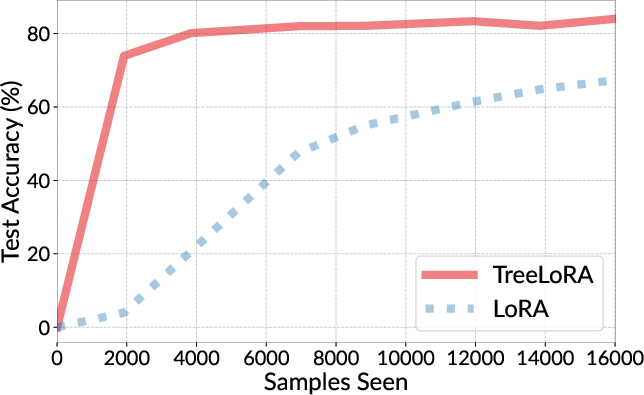

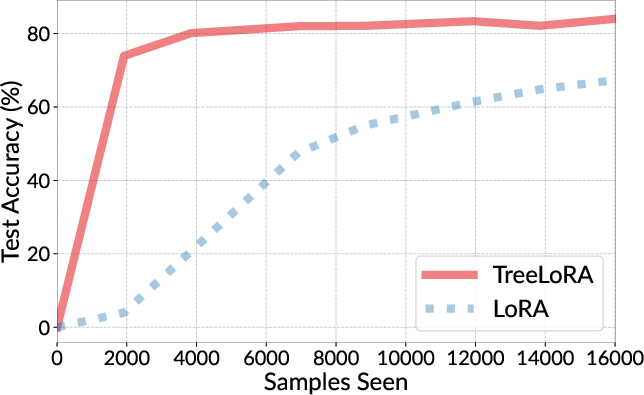

Figure 1: Test accuracy vs. the number of arrived samples for our TreeLoRA and the vanilla LoRA [ICLR'22:LoRA], showcasing efficient task adaptation using hierarchical gradient similarity.

Theoretical Guarantees

The paper provides theoretical insights into TreeLoRA's effectiveness, highlighting improved regret bounds due to the tree structure employed for bandit search. The regret analysis confirms TreeLoRA's higher efficiency in identifying task similarities, backed by stochastic approximation techniques and smoothness assumptions.

Experimental Results

TreeLoRA demonstrates superior performance across multiple benchmarks, validating its effectiveness in reducing training time on both vision transformer models and LLMs. In practice, TreeLoRA offers up to 3.2x speedup for vision transformers and 2.4x speedup for LLMs compared to previous state-of-the-art methods.

Visualization and Impact

The learned task-similarity structure is visually represented, showing how TreeLoRA effectively groups linguistic and reasoning tasks based on shared characteristics. This capability reinforces TreeLoRA's practical applicability in efficiently handling real-time streaming data.

Figure 2: Illustration of our approach: efficient continual learning via TreeLoRA on transformer-based models.

Conclusion

TreeLoRA presents an efficient framework for continual adaptation using large pre-trained models, significantly reducing computational demands while preserving task knowledge. Its hierarchical gradient-similarity tree structure and bandit-based algorithm prove advantageous in real-world applications requiring dynamic task learning. Future directions include exploring evolving task relationships and optimizing for varying task complexities.

These results and theoretical analyses position TreeLoRA as a promising approach for advancing efficient continual learning strategies in AI systems.