- The paper introduces a 2D triangle splatting method that improves mesh training fidelity and efficiency over traditional Gaussian-based approaches.

- It leverages a compactness parameter and spatial opacity to transition from radiance fields to photorealistic mesh representations.

- Experimental results demonstrate the method's superior performance in preserving thin structures and scalability for large-scale 3D reconstructions.

Analysis of "2D Triangle Splatting for Direct Differentiable Mesh Training" (2506.18575)

Introduction and Motivation

The paper introduces 2D Triangle Splatting (2DTS), an approach for direct differentiable mesh training. It targets limitations of traditional multi-view stereo (MVS) pipelines and of differentiable rendering techniques, notably the accuracy and computational challenges of high-fidelity 3D scene reconstruction. Radiance-field methods such as NeRF and 3D Gaussian splatting (3DGS) lack an explicit geometry representation and carry significant computational costs, which motivates blending the robustness of mesh-based models with the adaptability of radiance fields.

Methodology

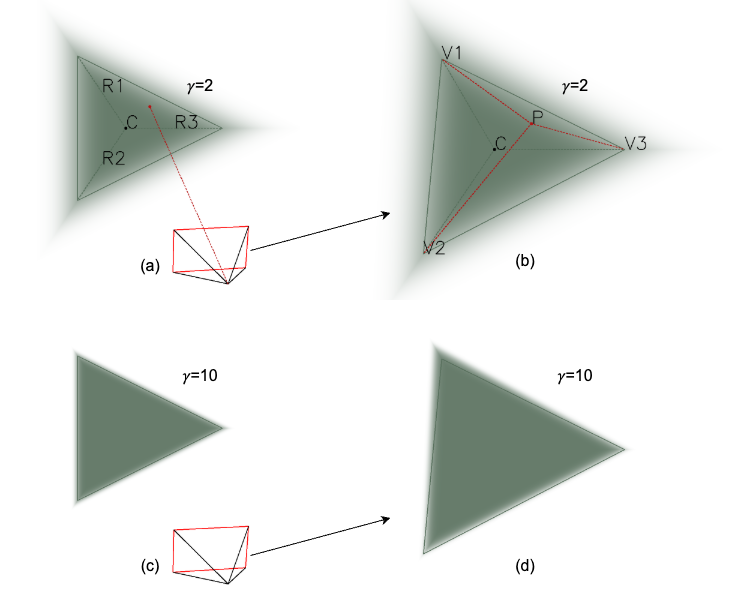

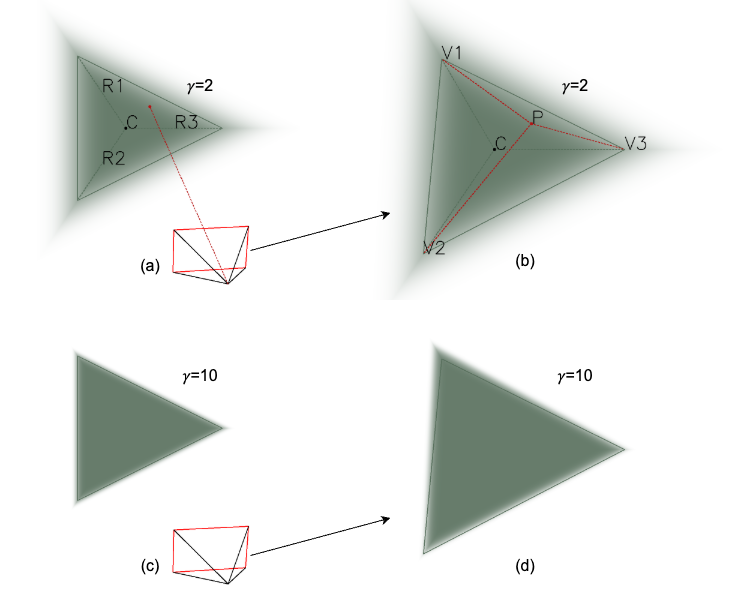

The 2DTS method replaces the 3D Gaussian primitives used in Gaussian splatting with 2D triangle facelets, which naturally form a mesh-like structure amenable to differentiable optimization. Each triangle carries a spatially varying opacity controlled by a compactness parameter, which enables direct training of photorealistic meshes. The opacity mimics the exponential decay used in Gaussian splatting while enhancing geometric fidelity. Introducing the compactness parameter during training is the key idea: it lets the representation transition from a diffuse, radiance-field-like form to a solid mesh.

Figure 1: Overview of the proposed 2D Triangle Splatting (2DTS) method.
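To make the role of the compactness parameter concrete, the following is a minimal sketch of a triangle whose opacity decays exponentially away from its centroid. The specific falloff formula, the function names `barycentric` and `triangle_opacity`, and the `falloff` constant are illustrative assumptions, not the formulation used in the paper; the sketch only reproduces the qualitative behaviour described above, where a larger compactness makes the triangle interior more uniformly opaque.

```python
import math

def barycentric(p, a, b, c):
    """Barycentric coordinates of 2D point p with respect to triangle (a, b, c)."""
    det = (b[1] - c[1]) * (a[0] - c[0]) + (c[0] - b[0]) * (a[1] - c[1])
    if det == 0:
        raise ValueError("degenerate triangle")
    u = ((b[1] - c[1]) * (p[0] - c[0]) + (c[0] - b[0]) * (p[1] - c[1])) / det
    v = ((c[1] - a[1]) * (p[0] - c[0]) + (a[0] - c[0]) * (p[1] - c[1])) / det
    return u, v, 1.0 - u - v

def triangle_opacity(p, tri, peak_opacity, compactness, falloff=4.0):
    """Hypothetical opacity model (an assumption, not the paper's formula).

    d is 0 at the centroid and 1 on the edges. Raising d to the power
    `compactness` flattens the decay as compactness grows, so the interior
    approaches a constant opacity, i.e. a solid triangle.
    """
    u, v, w = barycentric(p, *tri)
    m = min(u, v, w)
    if m < 0.0:
        return 0.0  # the point lies outside the triangle
    # Clamp to [0, 1] to guard against floating-point round-off.
    d = min(1.0, max(0.0, 1.0 - 3.0 * m))
    return peak_opacity * math.exp(-falloff * d ** compactness)

tri = ((0.0, 0.0), (1.0, 0.0), (0.0, 1.0))
point = (0.2, 0.2)
soft = triangle_opacity(point, tri, peak_opacity=1.0, compactness=1.0)
solid = triangle_opacity(point, tri, peak_opacity=1.0, compactness=20.0)
print(f"compactness=1: {soft:.3f}, compactness=20: {solid:.3f}")
```

With a low compactness the opacity fades noticeably between the centroid and the edges, much like a Gaussian splat; with a high compactness the same interior point is almost fully opaque. In this toy model the opacity on the edges themselves does not depend on compactness, which is one of several simplifications.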

Experimental Results

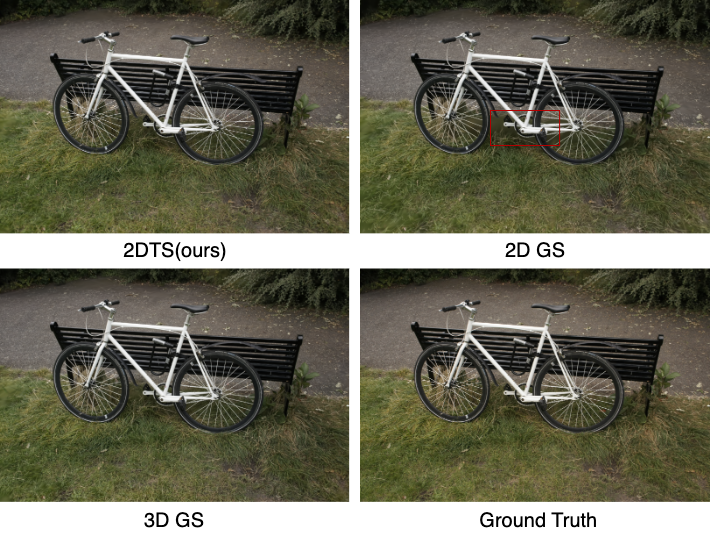

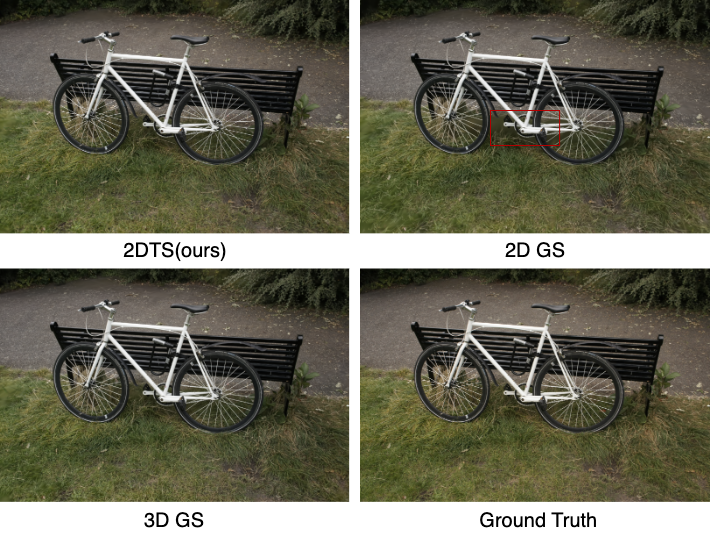

The paper reports that the triangle-based method, even without additional compactness tuning, achieves higher fidelity in the reconstructed meshes than state-of-the-art Gaussian-based methods. Results are detailed across several datasets, including Mip-NeRF360 and NeRF-Synthetic, and show that the method is better at preserving thin structures, a common failure case for existing methods.

Figure 2: Comparison of the radiance field reconstruction between 2DTS, 3DGS and 2DGS.

On both quantitative metrics such as PSNR and visual assessments, 2DTS outperforms the alternatives in most tests. Notably, mesh extraction on the MatrixCity dataset demonstrates that 2DTS scales to large scenes, a critical requirement for real-world applications such as virtual reality and 3D mapping.
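For readers unfamiliar with the metric, PSNR (peak signal-to-noise ratio) is computed from the mean squared error between a rendered image and its ground-truth reference; higher is better. The sketch below uses the standard definition on flat lists of pixel intensities in [0, 1]. The function name `psnr` and the flat-list input are assumptions made for brevity and do not reflect the paper's evaluation code.

```python
import math

def psnr(rendered, reference, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two equally sized pixel lists."""
    if len(rendered) != len(reference) or not rendered:
        raise ValueError("inputs must be non-empty and of equal length")
    # Mean squared error over all pixel values.
    mse = sum((r - g) ** 2 for r, g in zip(rendered, reference)) / len(rendered)
    if mse == 0.0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)

reference = [0.1, 0.5, 0.9, 0.3]
rendered = [0.12, 0.48, 0.91, 0.3]
print(f"PSNR: {psnr(rendered, reference):.2f} dB")
```

Because the scale is logarithmic, a gain of roughly 3 dB corresponds to halving the mean squared error.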

Comparative Analysis

2DTS differentiates itself through its handling of complex scenes and its ability to produce high-quality meshes without the post-processing artifacts typical of Gaussian-based methods. Compared to NeRF and Gaussian splatting variants, 2DTS offers an efficient end-to-end training pipeline that improves final visual quality and reduces processing time.

The study includes a detailed comparison against existing techniques such as 3D Convex Splatting and recently proposed methods that combine signed distance functions (SDFs) with Gaussian primitives. The grid-free mesh construction of 2DTS is particularly advantageous, since it avoids the resolution limits faced by grid-dependent methods.

Figure 3: Comparison of Mesh Extraction.

Implications and Future Work

The ability to directly train photorealistic meshes that maintain geometric accuracy while still supporting advanced rendering effects makes 2DTS a versatile tool. For applications ranging from augmented and virtual reality environments to 3D map reconstruction, the method paves the way for more efficient and scalable 3D visualization.

Future research can explore adaptive point cloud densification and ways to improve the watertightness of meshes for simulation applications. Better tuning of the compactness parameter could also lead to more robust geometry preservation across diverse datasets.
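As an illustration of what watertightness means for a triangle mesh, the sketch below checks a common necessary condition: every edge must be shared by exactly two faces. The function name `is_watertight` and the indexed-face input format are assumptions for this example; the check does not detect self-intersections or inconsistent face orientation, and it is not taken from the paper.

```python
from collections import Counter

def is_watertight(faces):
    """Return True if every undirected edge is shared by exactly two faces.

    `faces` is a list of (i, j, k) vertex-index triples. This is only a
    necessary condition for a closed surface, not a full manifold test.
    """
    edge_counts = Counter()
    for i, j, k in faces:
        for a, b in ((i, j), (j, k), (k, i)):
            # Store each edge with sorted endpoints so direction is ignored.
            edge_counts[(min(a, b), max(a, b))] += 1
    return bool(edge_counts) and all(n == 2 for n in edge_counts.values())

tetrahedron = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
open_patch = [(0, 1, 2), (0, 3, 1)]
print(is_watertight(tetrahedron), is_watertight(open_patch))
```

A set of independently optimized triangles that do not share vertices would fail this test on every edge, which is why watertightness needs dedicated treatment.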

Conclusion

2D Triangle Splatting is a notable step forward in 3D reconstruction, marrying the fidelity of radiance fields with the spatial efficiency of mesh-based representations. By addressing both geometric fidelity and processing efficiency, it is a viable solution for complex and large-scale 3D scene reconstruction in modern computer vision tasks.