- The paper proposes HedgeTune to optimize hyperparameters and determine the hacking threshold for mitigating reward hacking in LLMs.

- It introduces Best-of-Poisson, a Poisson-based sampling method that efficiently balances exploration and exploitation.

- The study highlights practical implications for reliable LLM performance by aligning proxy rewards with true objectives in critical applications.

Inference-Time Reward Hacking in LLMs

Introduction

The paper "Inference-Time Reward Hacking in LLMs" addresses the challenge of reward hacking in alignment methods used for LLMs. Reward hacking occurs when an AI system optimizes proxy reward signals that do not perfectly align with true goals, leading to unexpected or undesired behavior. The study focuses on inference-time methods like Best-of-n (BoN), Soft Best-of-n (SBoN), and the newly proposed Best-of-Poisson (BoP) for aligning LLM outputs with intended objectives while highlighting mitigation strategies.

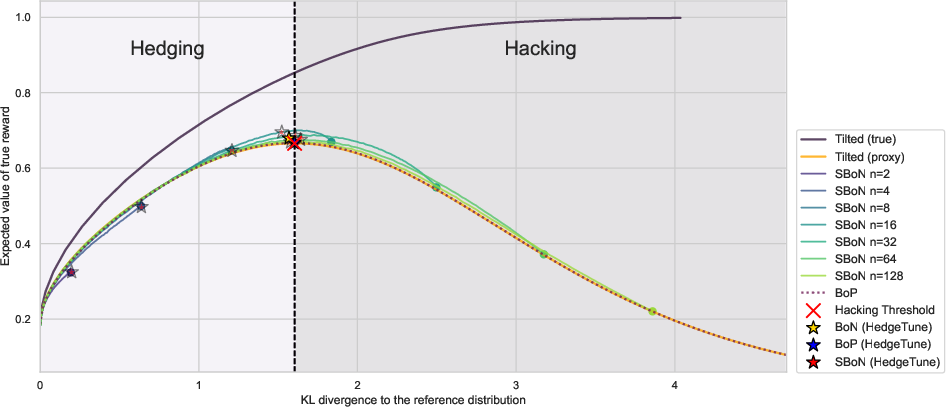

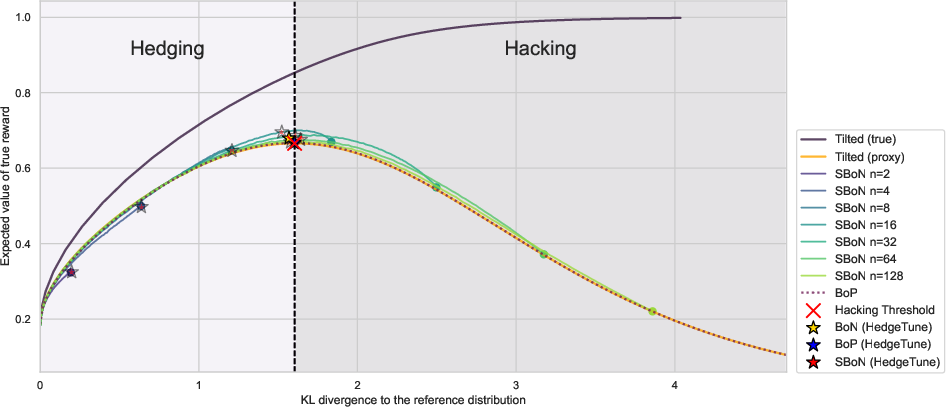

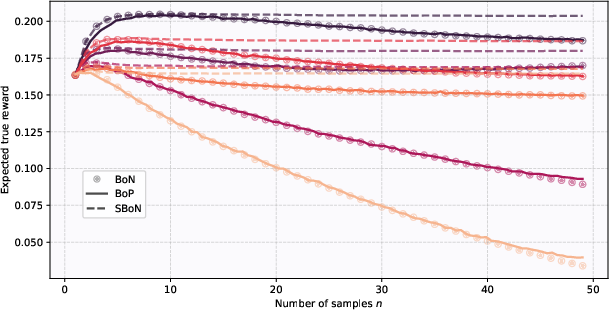

Figure 1: The mismatch between the proxy and gold rewards manifests through the winner's curse. In an ideal world where we could optimize directly on the gold reward, its value would rise monotonically. However, since we are optimizing for a proxy, the gold reward peaks and then collapses.

Key Concepts and Background

The core challenge explored is the disparity between proxy rewards (an approximation used during training) and true or gold rewards (the desired model behavior). The paper introduces the notion of the hacking threshold, which is the point where further optimization against a proxy reward leads to performance degradation. Significant implications of this phenomenon include unreliable AI behavior in critical applications when overly relying on proxy rewards.

Inference-Time Methods and Reward Hacking

Inference-time methods like BoN are commonly employed because of their efficiency and effectiveness in enhancing LLMs by sampling multiple outputs and selecting the best under a proxy reward model. However, such methods are prone to the "winner's curse," where selected outputs score highly on proxy rewards but poorly on true metrics, due to misalignment between proxy and true rewards.

HedgeTune, an algorithm developed in this paper, identifies the optimal parameters for inference-time alignment methods, preventing overoptimization on proxy rewards. The algorithm is applied to align the selected hyperparameters to the hacking threshold.

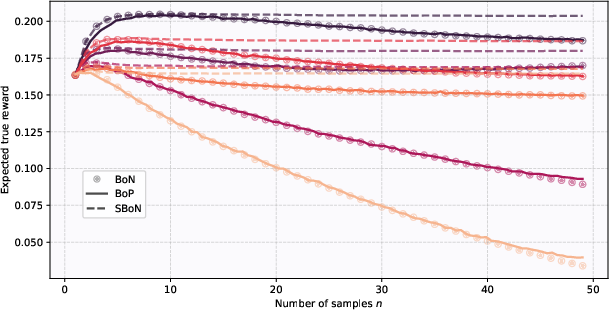

Figure 2: Use of three inference-time methods (BoN, SBoN, and BoP) on trained proxy rewards. Hacking is effectively mitigated by hedging via lambda in SBoN or n in BoN and BoP.

Best-of-Poisson: A New Approach

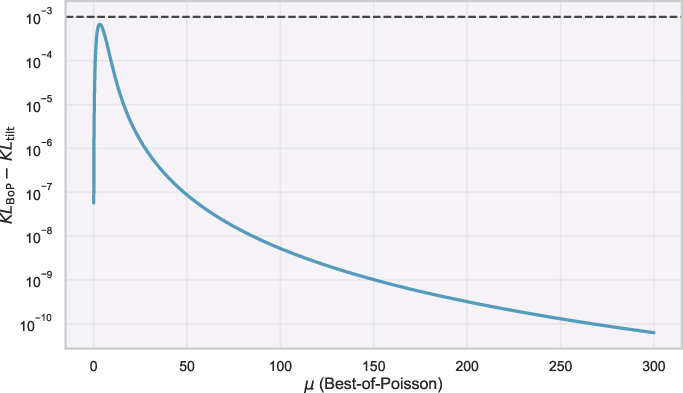

BoP, introduced in this paper, draws a random sample size from a Poisson distribution, offering a balance between excessive exploitation and strict adherence to reference distributions. It approximates the optimal reward-KL distortion tradeoff and serves as a computationally efficient alternative to traditional methods.

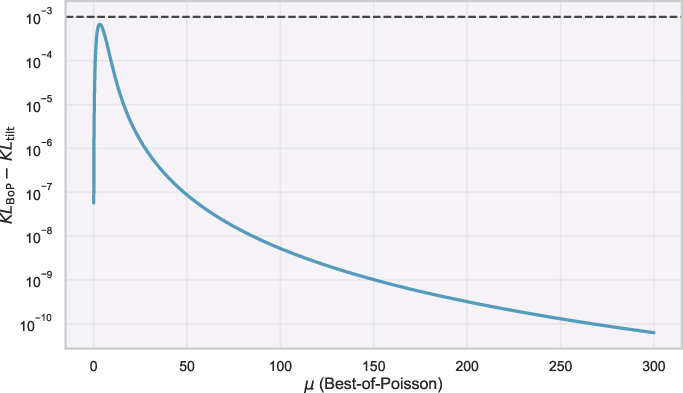

Figure 3: KL divergence gap between BoP and the optimal tilted distribution with respect to the reference distribution.

Practical Implications

The research has significant implications for designing LLMs for real-world applications where reliance solely on proxy rewards could lead to unreliable systems. Methods developed in this study, such as HedgeTune and BoP, offer pathways to ameliorate reward hacking by achieving a balance between achieving high proxy scores and closely aligning with true objectives.

Conclusion

This paper provides a vital contribution to AI alignment research by theoretically characterizing and empirically demonstrating inference-time reward hacking and proposing strategies like HedgeTune and BoP to mitigate its impact. These methods ensure AI systems reliably meet their intended objectives while reducing the risk of unpredictable behavior due to reward hacking.