- The paper introduces a compute governance framework featuring tamper-proof FLOP caps and regulatory controls to restrict AI training.

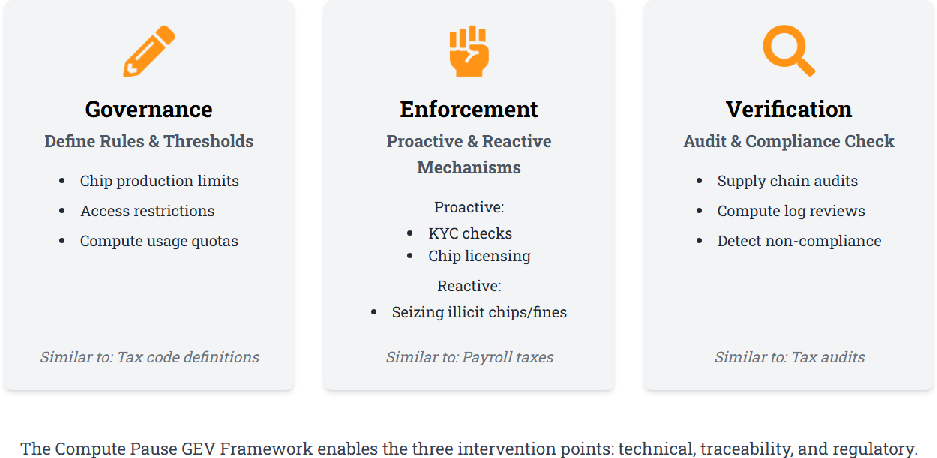

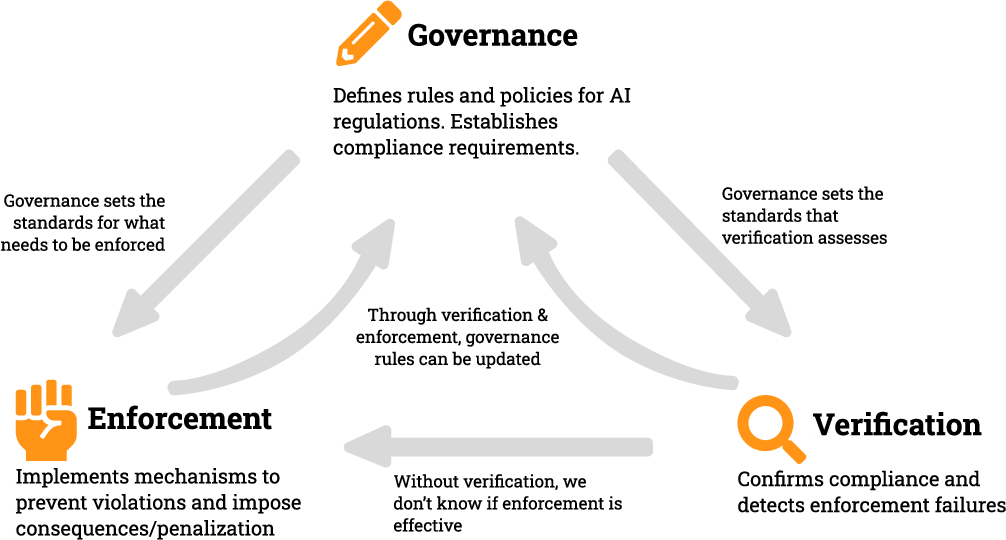

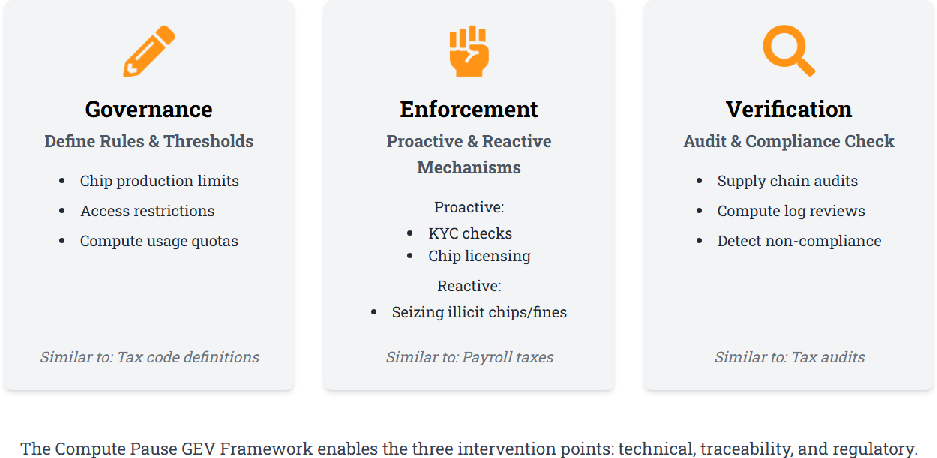

- It details a tripartite Governance, Enforcement, and Verification (GEV) model that integrates technical, traceability, and regulatory mechanisms.

- The study draws parallels with non-proliferation treaties to advocate for international cooperation and preemptive controls over high-risk AI development.

Introduction

The paper "Toward a Global Regime for Compute Governance: Building the Pause Button" explores the urgent need for a global governance system to regulate the compute infrastructure that underpins advanced AI model training. As AI models become increasingly capable, their potential to cause societal disruption and harm grows. The paper proposes a framework that aims to prevent potentially dangerous AI systems from being developed by restricting access to the compute resources they require. It targets three intervention points (technical controls, traceability, and regulatory mechanisms), organized within a Governance, Enforcement, and Verification (GEV) framework.

Proposed Compute Governance Framework

The core proposition is the establishment of a "Compute Pause Button," a governance mechanism designed to regulate the use of computational resources in AI training. The paper identifies three key intervention points:

- Technical Mechanisms: Modify hardware to enforce limits on compute usage, for example through tamper-proof FLOP caps. These could be implemented using secure hardware modules that prevent training runs from exceeding predefined compute thresholds.

- Traceability Mechanisms: Develop comprehensive traceability infrastructure to track chips and computational usage across the entire supply chain. This ensures visibility into who is accessing compute resources, thus preventing unmonitored large-scale training runs.

- Regulatory Mechanisms: Establish export controls, licensing schemes, and production caps to set rules for compute use and ensure compliance. These would align with international legal frameworks and utilize existing structures for implementation and oversight.

Figure 1: Framework and Example Mechanisms for Compute Pause Button.
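To make the idea of a FLOP cap concrete, here is a minimal software sketch of the accounting such a cap implies. It is not the paper's design: the class `FlopMeter`, the exception `ComputeCapExceeded`, and the cap value in the demo are hypothetical, and the estimate of roughly 6 FLOPs per parameter per training token is a common rule of thumb for dense transformer training, assumed here purely for illustration. A real cap would live in tamper-resistant hardware rather than in Python.

```python
from dataclasses import dataclass


class ComputeCapExceeded(RuntimeError):
    """Raised when a charge would push usage past the configured cap."""


@dataclass
class FlopMeter:
    """Hypothetical cumulative FLOP counter with a hard ceiling."""

    cap_flops: float          # illustrative cap, not a value from the paper
    used_flops: float = 0.0   # FLOPs charged so far

    def charge(self, flops: float) -> float:
        """Record `flops` of work and return the remaining budget.

        The charge is refused (and usage left unchanged) if it would
        exceed the cap.
        """
        if flops < 0:
            raise ValueError("flops must be non-negative")
        if self.used_flops + flops > self.cap_flops:
            raise ComputeCapExceeded(
                f"requested {flops:.3e} FLOPs, "
                f"only {self.cap_flops - self.used_flops:.3e} remain"
            )
        self.used_flops += flops
        return self.cap_flops - self.used_flops


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rule-of-thumb estimate (assumption): ~6 FLOPs per parameter per token."""
    return 6.0 * n_params * n_tokens


if __name__ == "__main__":
    meter = FlopMeter(cap_flops=1e24)  # hypothetical threshold
    run = estimate_training_flops(n_params=1e9, n_tokens=1e9)
    print("remaining budget:", meter.charge(run))
```

The meter refuses a charge before recording it, so a rejected request never consumes budget. That mirrors the "prevent, rather than detect" intent of a hardware-level cap.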

Governance, Enforcement, and Verification (GEV) Framework

The GEV framework provides the operational basis for compute governance, distinguishing between setting rules (governance), ensuring compliance (enforcement), and monitoring adherence (verification). The three functions form an integrated system in which each component reinforces the others.

Implementing the Framework

The paper suggests multiple mechanisms to operationalize the framework:

- Tamper-Proof FLOP Caps: Hardware-level FLOP caps act as a direct intervention, providing a failsafe against excessive compute use.

- Model Locking: This mechanism restricts the unauthorized deployment of trained model weights, allowing regulation of how and where models are used post-training.

- Offline Licensing: Licenses that limit compute usage and require periodic renewal keep controls in force even in offline environments.

- Traceability and Chain of Custody: These mechanisms ensure end-to-end visibility of compute resources, from chip production through deployment, strengthening auditability and compliance.
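As an illustration of how offline licensing might work, the sketch below issues a signed license that binds a chip identifier to a FLOP allowance and an expiry time, then verifies it without any network access. Everything here is an assumption made for illustration: the function names `issue_license` and `license_is_valid`, the payload fields, and the use of a shared-secret HMAC. A production scheme would more plausibly use asymmetric signatures checked inside secure hardware.

```python
import hashlib
import hmac
import json


def _sign(key: bytes, payload: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def issue_license(key: bytes, chip_id: str,
                  flop_allowance: float, expires_at: int) -> dict:
    """Create a license (hypothetical format) for one chip.

    `expires_at` is a timestamp in whatever clock the device trusts;
    renewal means issuing a fresh license with a later expiry.
    """
    payload = {
        "chip_id": chip_id,
        "flop_allowance": flop_allowance,
        "expires_at": expires_at,
    }
    return {"payload": payload, "tag": _sign(key, payload)}


def license_is_valid(key: bytes, lic: dict, chip_id: str,
                     now: int, flops_used: float) -> bool:
    """Offline check: authentic, for this chip, unexpired, within allowance."""
    expected = _sign(key, lic["payload"])
    # Constant-time comparison avoids leaking tag bytes through timing.
    if not hmac.compare_digest(expected, lic["tag"]):
        return False
    p = lic["payload"]
    return (
        p["chip_id"] == chip_id
        and now < p["expires_at"]
        and flops_used <= p["flop_allowance"]
    )


if __name__ == "__main__":
    key = b"demo-key"  # placeholder secret for the example only
    lic = issue_license(key, "chip-001", flop_allowance=1e21, expires_at=2_000)
    print(license_is_valid(key, lic, "chip-001", now=1_000, flops_used=5e20))
```

Because the expiry is part of the signed payload, a device that cannot obtain a renewed license stops passing the check once its clock passes `expires_at`. This is the property that lets control persist in offline environments.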

The paper also discusses existing policy analogues like the Nuclear Non-Proliferation Treaty and the Chemical Weapons Convention, drawing parallels with compute governance. These analogues provide insights into effective implementation, such as the use of international cooperation and verification mechanisms.

Conclusion

The proposed architecture aims to shift the focus of AI governance from post-deployment oversight to preemptive controls on computational resources. By doing so, it seeks to prevent the unchecked development of powerful AI systems. The paper concludes that although technical and political challenges remain, credible mechanisms for governing compute already exist. Mobilizing political will and fostering international cooperation will be crucial to implementing this architecture. The framework offers a pragmatic approach to managing the rapid advancements in AI, helping to ensure technologies develop in a manner aligned with global safety and stability objectives.