- The paper introduces an annealing guidance scheduler that dynamically adjusts the guidance scale (w) during denoising to improve image quality and prompt adherence.

- It employs a lightweight MLP that uses timesteps and prediction discrepancies to determine the optimal guidance scale, overcoming the limitations of static CFG.

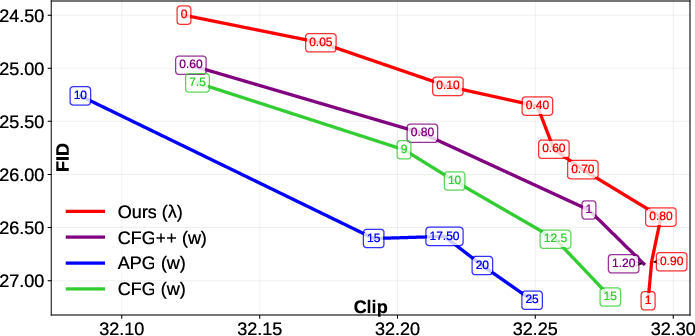

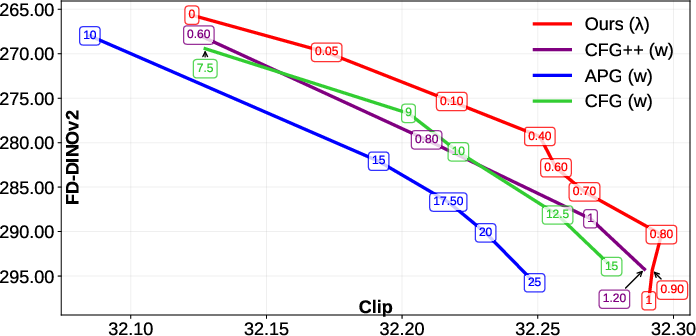

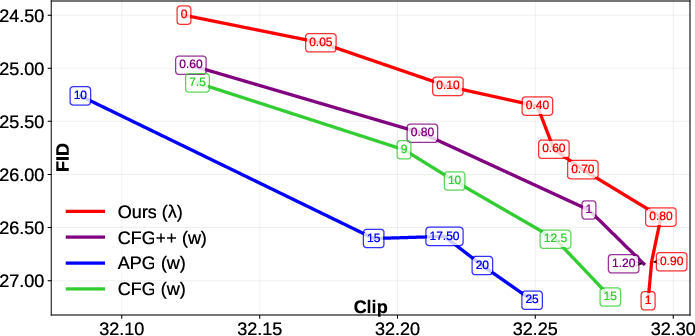

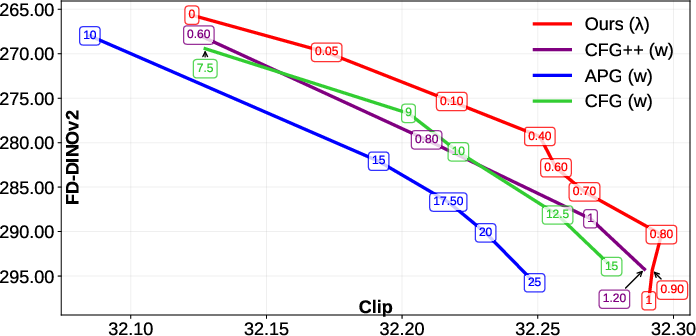

- Empirical results on MSCOCO17 show significant improvements in FID/CLIP and FD-DINOv2/CLIP metrics, indicating the method's effectiveness for complex conditional image generation.

Navigating with Annealing Guidance Scale in Diffusion Space

Introduction

The paper "Navigating with Annealing Guidance Scale in Diffusion Space" proposes a method to improve image generation with denoising diffusion models. These models produce high-quality images from text prompts but rely heavily on guidance during sampling. Classifier-Free Guidance (CFG) is the common technique here: a guidance scale w sets the balance between image quality and adherence to the prompt. The paper introduces an annealing guidance scheduler that adjusts w dynamically during sampling, using the evolving conditional noisy signal to improve generation outcomes.

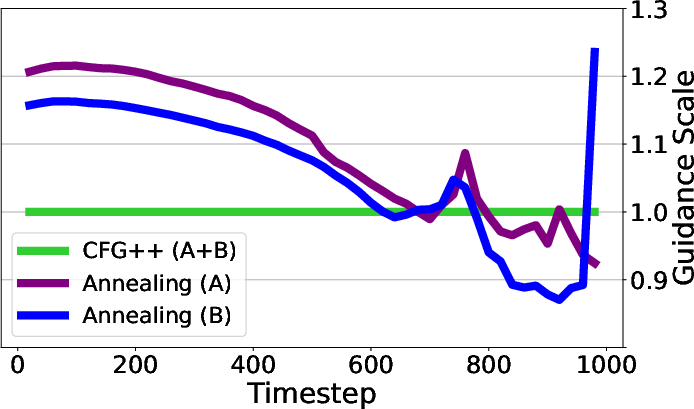

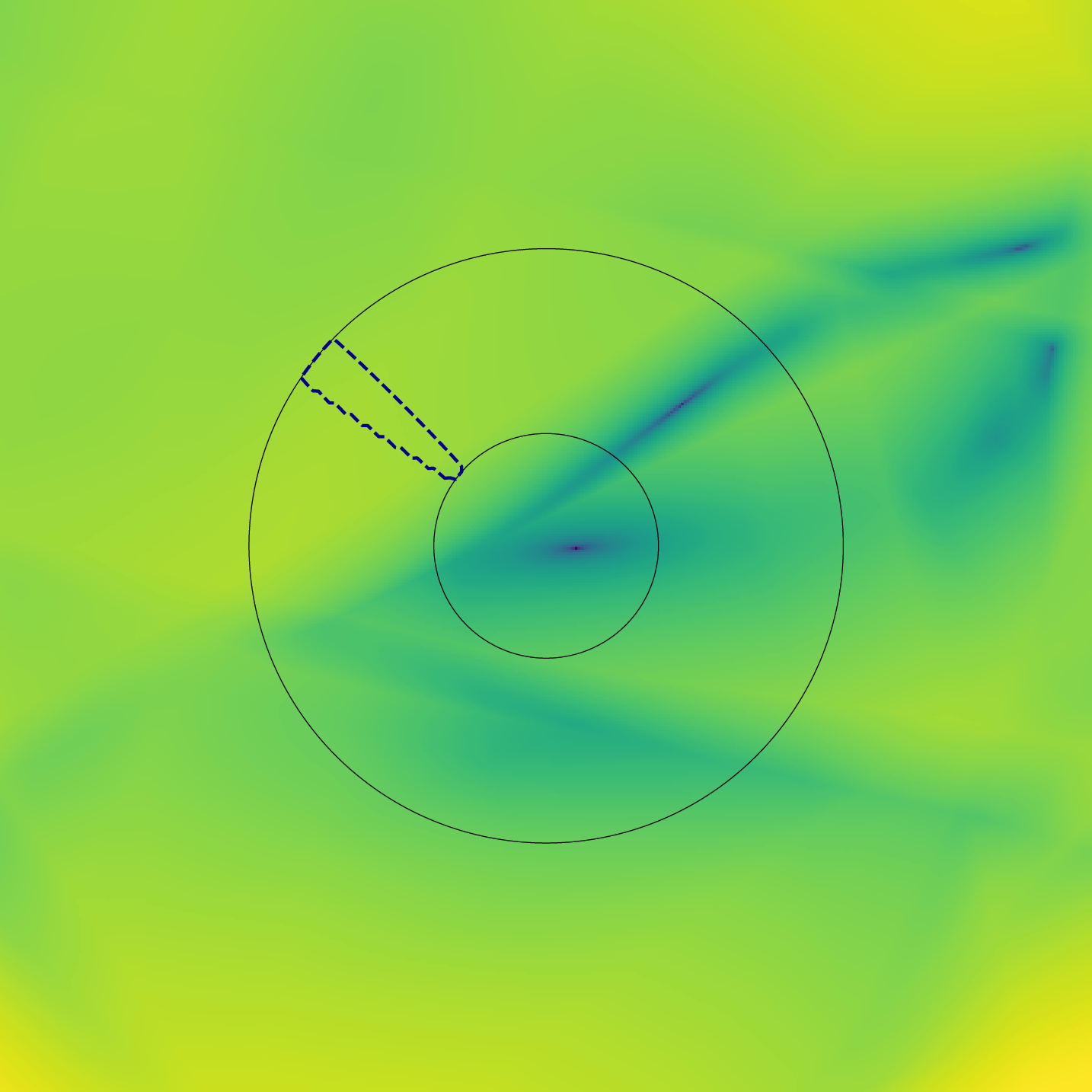

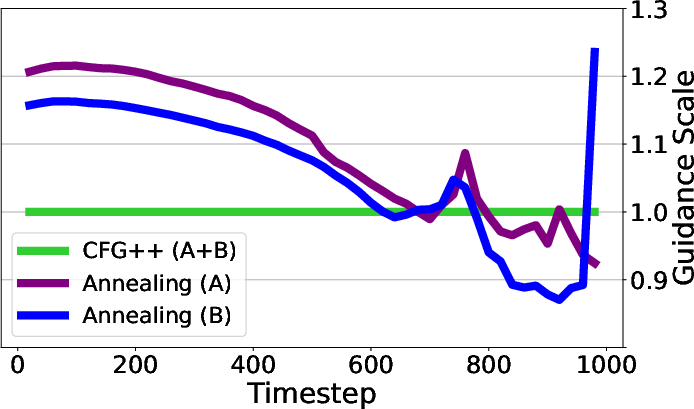

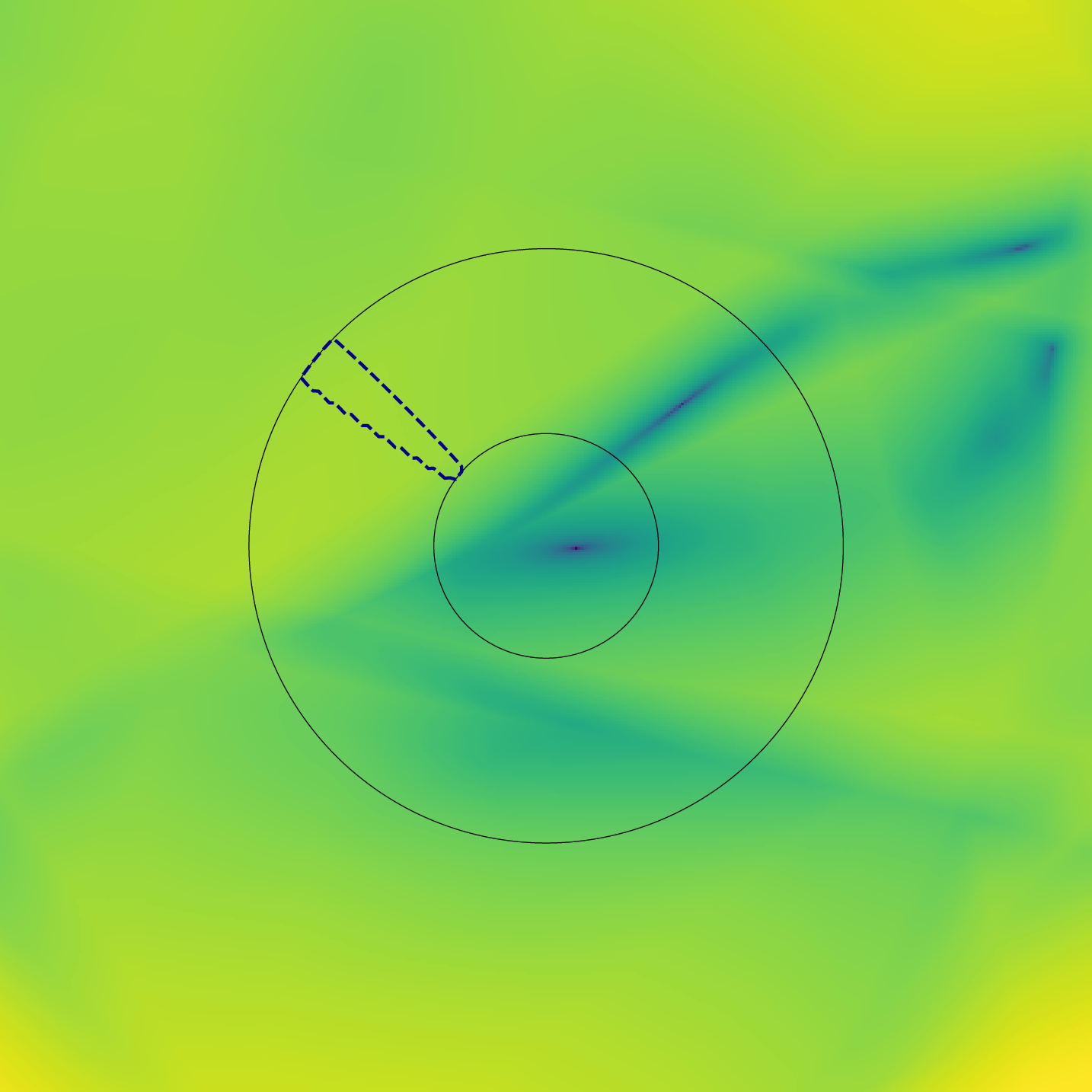

Figure 1: Guidance Scale Over Time. Shows how the annealing scheduler adjusts the guidance scale dynamically, in comparison to static CFG and CFG++.

Methodology

Classifier-Free Guidance and Its Limitations

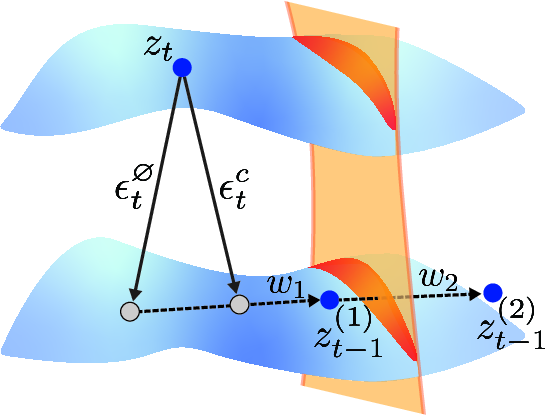

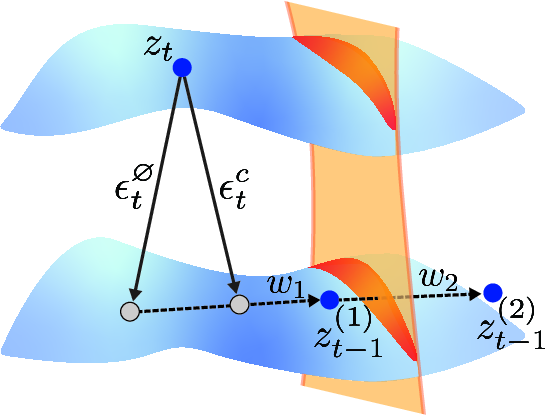

Classifier-Free Guidance (CFG) has been instrumental in guiding the sampling of diffusion models. At each denoising step, CFG combines the model's conditional and unconditional noise predictions, $\epsilon_t^c(z_t)$ and $\epsilon_t^\varnothing(z_t)$, with the guidance scale w controlling how far the result is pushed toward the conditional prediction. However, CFG's effectiveness hinges on selecting an appropriate static value of w, and a fixed value cannot adapt to the trajectory of the denoising process, especially in complex and variable diffusion landscapes.

Figure 2: Classifier-Free Guidance Step. Illustrates the denoising process using CFG, highlighting the role of the guidance scale in adjusting the prediction.
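The CFG step described above can be sketched in a few lines. This is a minimal illustration that assumes the widely used convention in which w = 0 returns the unconditional prediction and w = 1 returns the conditional one; the summary does not state which parameterization the paper adopts, so treat the formula in the comment as an assumption. The function name `cfg_combine` and the use of plain Python lists in place of image-sized tensors are illustrative choices only.

```python
from typing import List


def cfg_combine(eps_cond: List[float], eps_uncond: List[float], w: float) -> List[float]:
    """Blend conditional and unconditional noise predictions with scale w.

    Assumed convention: eps_hat = eps_uncond + w * (eps_cond - eps_uncond).
    """
    if len(eps_cond) != len(eps_uncond):
        raise ValueError("predictions must have the same length")
    return [u + w * (c - u) for c, u in zip(eps_cond, eps_uncond)]


# Toy two-element "predictions" standing in for full noise tensors.
eps_c = [1.0, 2.0]
eps_u = [0.0, 1.0]

print(cfg_combine(eps_c, eps_u, 0.0))  # equals the unconditional prediction
print(cfg_combine(eps_c, eps_u, 1.0))  # equals the conditional prediction
print(cfg_combine(eps_c, eps_u, 2.0))  # extrapolates past the conditional prediction
```

With a static w, the same extrapolation strength is applied at every timestep, which is the limitation the section describes.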

Annealing Guidance Scheduler

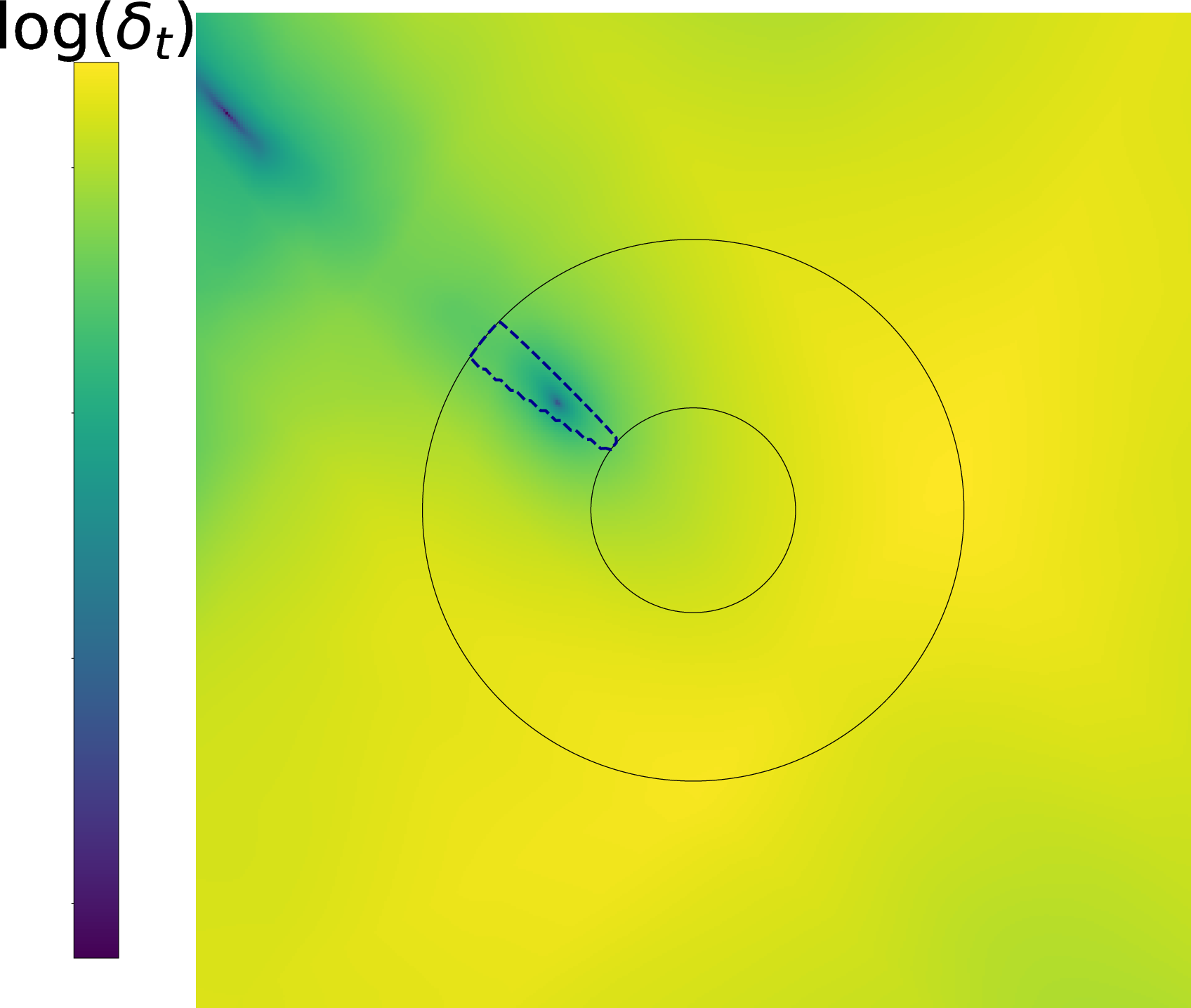

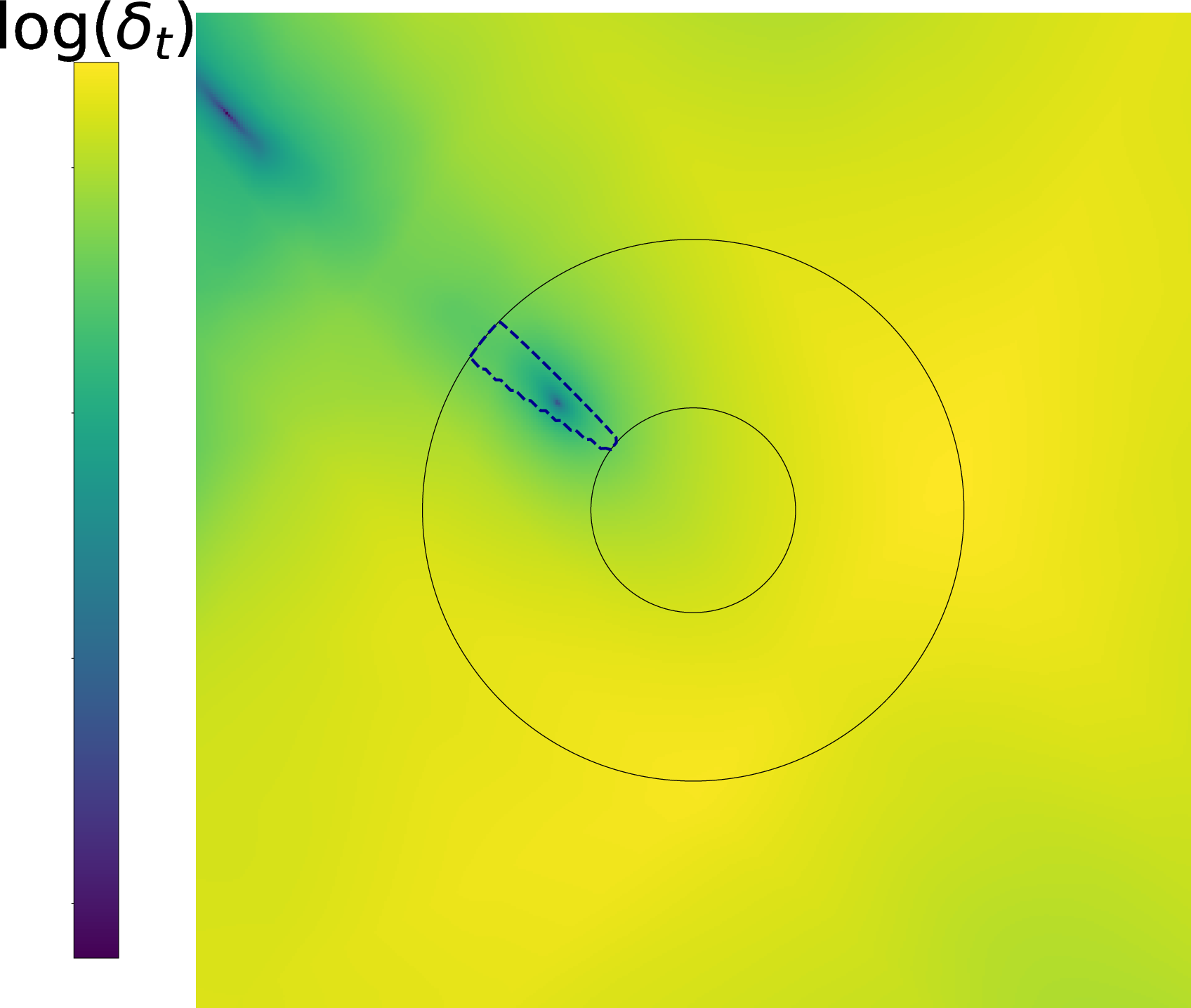

The proposed annealing guidance scheduler learns to adjust w over time, based on the discrepancy $\delta_t = \epsilon_t^c - \epsilon_t^\varnothing$. This learning-based approach employs a lightweight MLP to predict the guidance scale w, considering both the timestep $t$ and $\|\delta_t\|$.
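To make the input and output of such a scheduler concrete, here is a minimal sketch of a two-input MLP that maps the timestep and the discrepancy norm to a scale. Everything about the architecture is an assumption for illustration: the hidden width, the ReLU activation, the softplus output (used here only to keep the scale non-negative), the random untrained weights, and the normalization of t to [0, 1] are not taken from the paper, and the class name `GuidanceScaleMLP` is hypothetical. The paper's trained network and its training objective are not reproduced.

```python
import math
import random
from typing import List


def l2_distance(eps_cond: List[float], eps_uncond: List[float]) -> float:
    """Return ||eps_cond - eps_uncond||, the norm of the discrepancy delta_t."""
    return math.sqrt(sum((c - u) ** 2 for c, u in zip(eps_cond, eps_uncond)))


def softplus(x: float) -> float:
    """Numerically stable softplus; maps any real number to a non-negative one."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


class GuidanceScaleMLP:
    """Hypothetical one-hidden-layer MLP: (t, ||delta_t||) -> guidance scale."""

    def __init__(self, hidden: int = 16, seed: int = 0) -> None:
        rng = random.Random(seed)
        # Untrained random weights; a real scheduler would learn these.
        self.w1 = [[rng.gauss(0.0, 0.5), rng.gauss(0.0, 0.5)] for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.w2 = [rng.gauss(0.0, 0.5) for _ in range(hidden)]
        self.b2 = 0.0

    def __call__(self, t: float, delta_norm: float) -> float:
        # Hidden layer with ReLU activation.
        h = [max(0.0, row[0] * t + row[1] * delta_norm + b)
             for row, b in zip(self.w1, self.b1)]
        raw = sum(w * hi for w, hi in zip(self.w2, h)) + self.b2
        return softplus(raw)


scheduler = GuidanceScaleMLP()
delta_norm = l2_distance([3.0, 0.0], [0.0, 4.0])
scale = scheduler(0.5, delta_norm)  # t assumed normalized to [0, 1]
print(delta_norm, scale)
```

Feeding only the scalar pair (t, ‖δt‖) keeps the network tiny compared with the denoiser itself, which is consistent with the "lightweight" description above.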

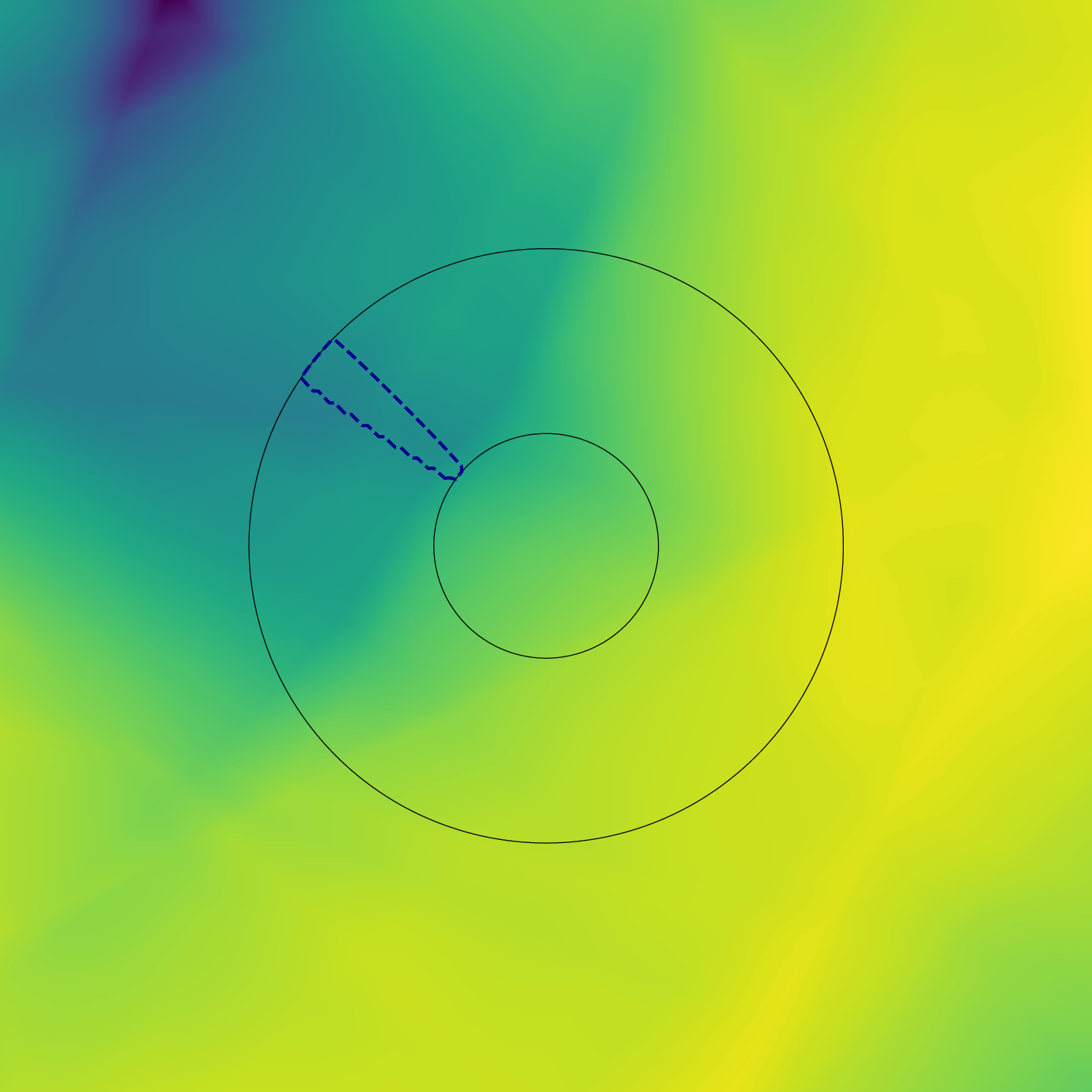

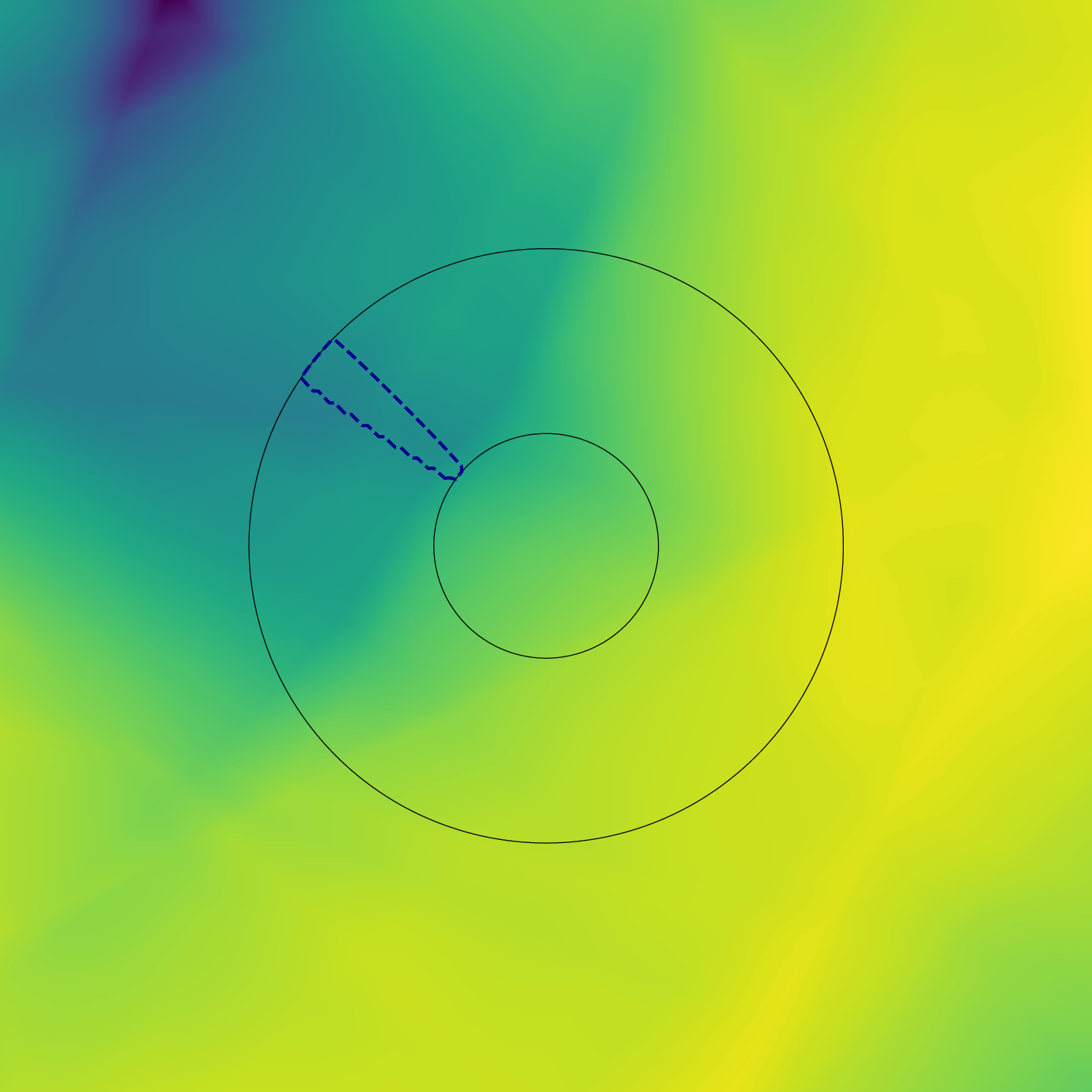

Figure 3: Heatmap illustrating the alignment between conditional and unconditional predictions, demonstrating the effectiveness of dynamic scaling.

The scheduler adapts the guidance trajectory during generation, allowing for sample-specific guidance that better aligns with evolving generation dynamics and noise patterns.
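The per-step, sample-specific behaviour can be illustrated with a toy sampling loop that recomputes the scale at every timestep from the current discrepancy. This is a sketch under heavy assumptions: the noise predictors are toy functions, `toy_scale` is a hand-written stand-in for the trained MLP, the CFG combination uses the common w = 0 unconditional convention, and the latent update is a placeholder rather than a real DDIM or DDPM step. All names are hypothetical.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def guided_sampling_trace(
    z: Vector,
    eps_cond: Callable[[Vector, float], Vector],
    eps_uncond: Callable[[Vector, float], Vector],
    scale_fn: Callable[[float, float], float],
    timesteps: List[float],
    step_size: float = 0.1,
) -> Tuple[Vector, List[float]]:
    """Run a toy guided loop and record the guidance scale used at each step."""
    scales: List[float] = []
    for t in timesteps:
        e_c = eps_cond(z, t)
        e_u = eps_uncond(z, t)
        delta = [c - u for c, u in zip(e_c, e_u)]
        delta_norm = math.sqrt(sum(d * d for d in delta))
        w = scale_fn(t, delta_norm)  # scale depends on t and on this sample
        scales.append(w)
        eps_hat = [u + w * d for u, d in zip(e_u, delta)]
        # Placeholder update, NOT an actual diffusion sampler step.
        z = [zi - step_size * e for zi, e in zip(z, eps_hat)]
    return z, scales


# Toy predictors and a hand-written scale rule standing in for trained models.
def toy_cond(z: Vector, t: float) -> Vector:
    return [zi - 1.0 for zi in z]


def toy_uncond(z: Vector, t: float) -> Vector:
    return list(z)


def toy_scale(t: float, delta_norm: float) -> float:
    return 1.0 + t * delta_norm


final_z, scales = guided_sampling_trace(
    [0.0, 0.0], toy_cond, toy_uncond, toy_scale, timesteps=[1.0, 0.5, 0.0]
)
print(scales)
```

Because the scale is computed inside the loop, two samples with different discrepancies at the same timestep receive different guidance, unlike a single fixed w shared across all steps and samples.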

Experiments and Results

The paper reports empirical results demonstrating significant improvements in both image quality and text-prompt alignment. The scheduler achieves state-of-the-art performance in metrics such as FID/CLIP and FD-DINOv2/CLIP when evaluated on MSCOCO17. Unlike CFG and CFG++, which use fixed guidance scales, the annealing method flexibly adjusts guidance in response to generation conditions.

Figure 4: Quantitative metrics for the annealing guidance scheduler compared with baseline methods on benchmark datasets.

Implications and Future Work

This research introduces a novel approach to dynamic guidance in diffusion models, suggesting potential improvements in generative modeling for complex scenes. The method is particularly impactful for tasks requiring a delicate balance between prompt fidelity and sample diversity. Future work may explore extending this concept to other generative frameworks and condition modalities, including audio or video synthesis.

Conclusion

The Annealing Guidance Scheduler offers a sophisticated mechanism for guiding diffusion models beyond fixed-scale CFG methods. By incorporating adaptive scaling via a trained model, this approach promises enhanced generation performance across diverse scenarios. The combination of theoretical insights and practical evaluations demonstrates the scheduler's capability to navigate diffusion space more effectively, setting a promising direction for future advancements in conditional generative models.

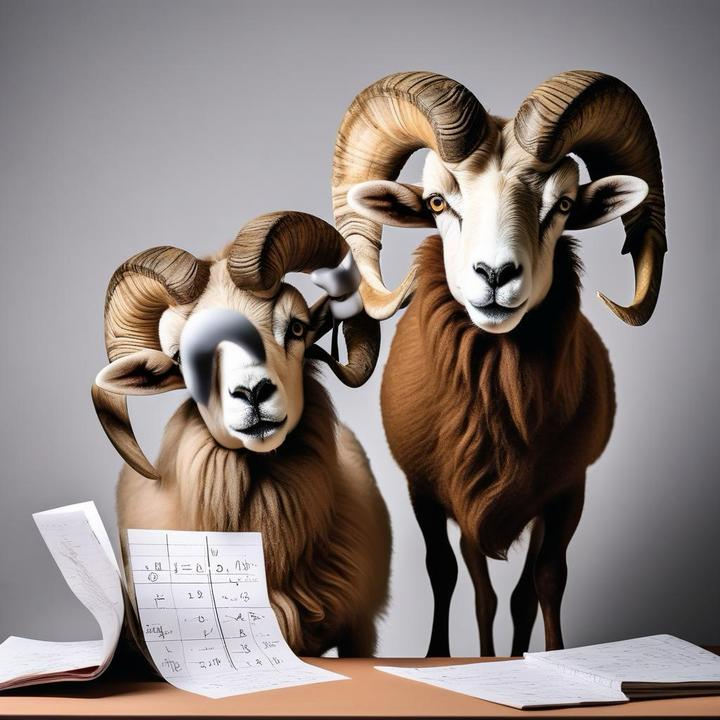

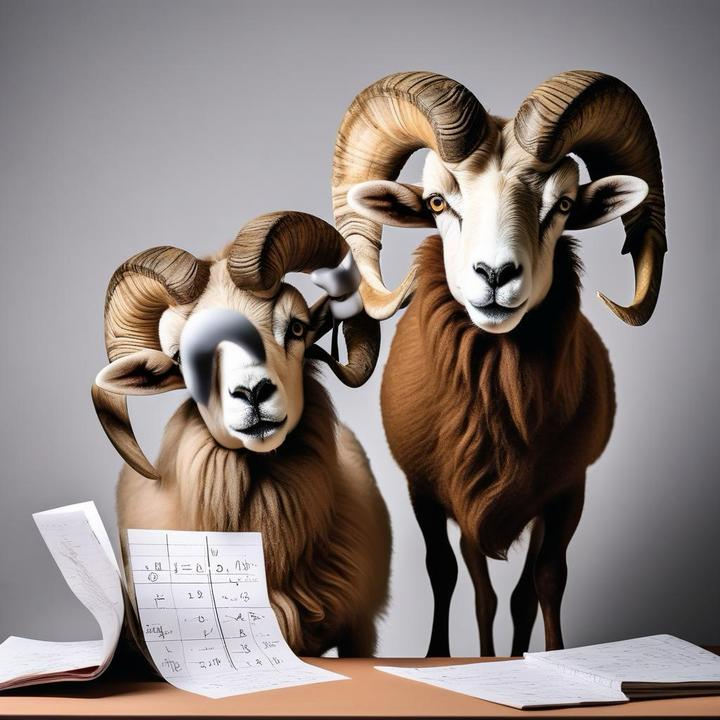

Figure 5: Qualitative comparison of generated images using Annealing guidance (right) vs CFG++ (left), highlighting improved adherence to prompts.