- The paper presents a theoretical framework that quantifies the solver-verifier gap as the main driver of LLM self-improvement.

- Experiments across several LLMs and datasets show that solver and verifier capabilities converge exponentially toward a limit, consistent with the differential equation model.

- The study also explores cross-improvement, showing that external data enhances verifier performance and overall training efficiency.

Theoretical Modeling of LLM Self-Improvement Training Dynamics Through Solver-Verifier Gap

Introduction

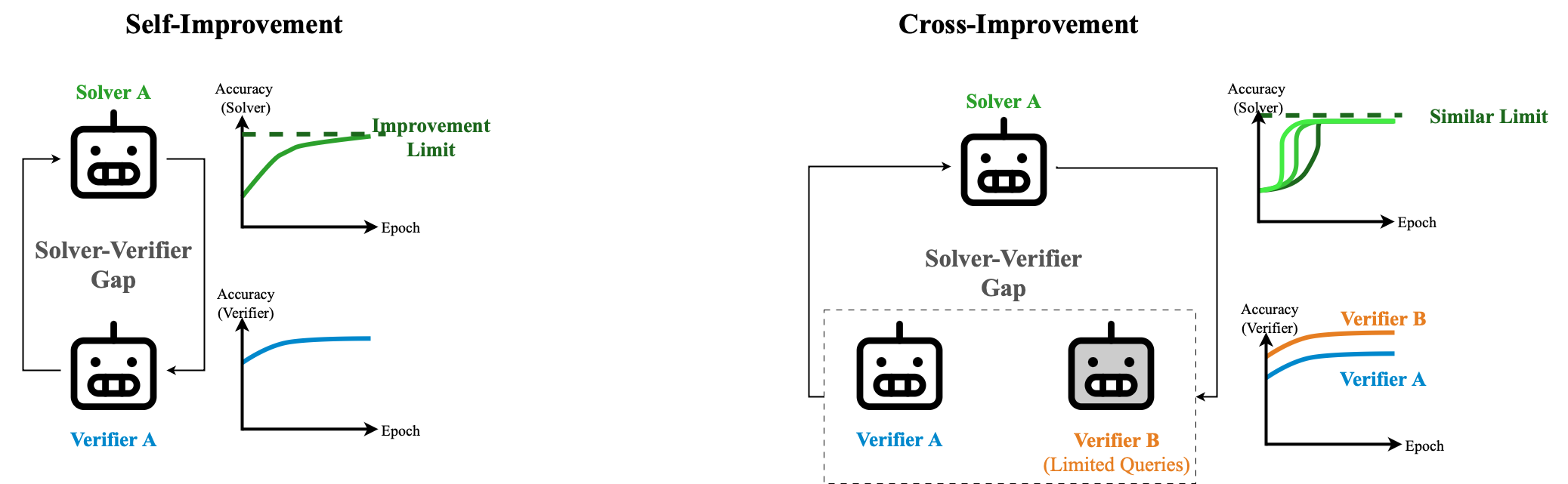

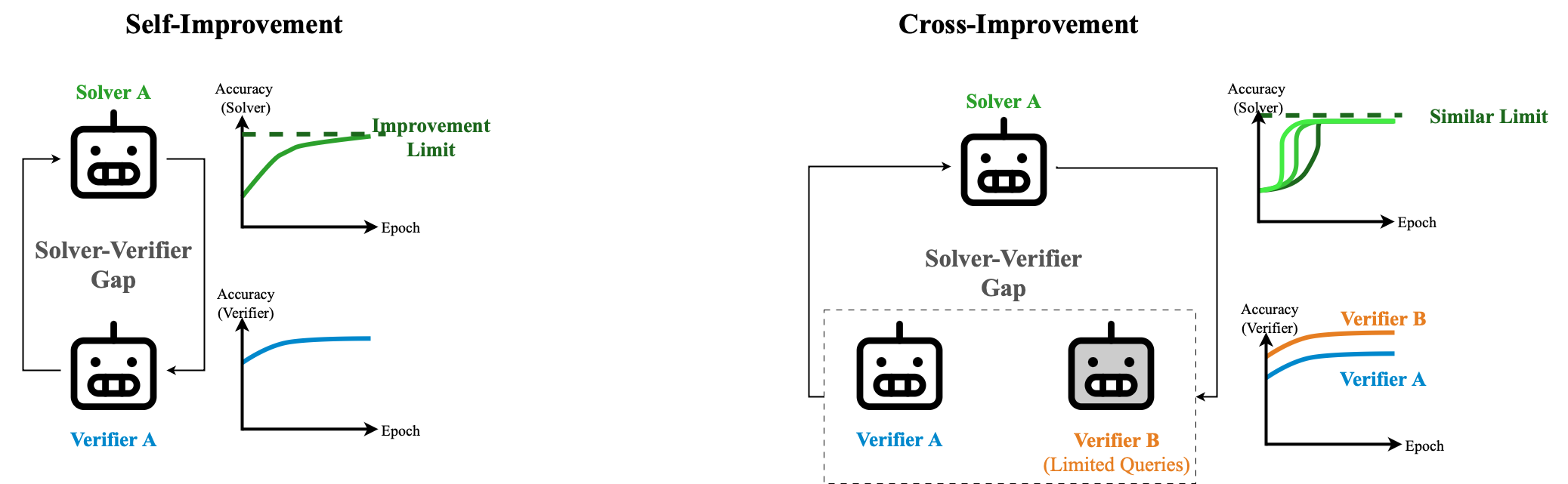

This paper proposes a framework to model the self-improvement training dynamics of LLMs using a solver-verifier gap. It aims to understand the mechanisms driving performance improvements without external data and to quantify the limits of self-improvement. The concept is based on the performance differences between a model's solver and verifier capabilities, which are theorized to drive self-improvement.

Self-Improvement Framework

The solver capability refers to an LLM's ability to generate responses, while the verifier capability refers to its ability to evaluate multiple generated responses and judge their quality. The paper models the training dynamics with differential equations inspired by potential energy principles. Under these equations, both capabilities follow an exponential convergence law, with rates of improvement governed by the solver-verifier gap. As a result, the framework can predict a model's ultimate capabilities from its initial conditions and rates of change.
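To make the exponential convergence law concrete, here is a minimal sketch under stated assumptions. It is not the paper's exact system: it assumes capabilities are tracked as uncertainties `u_s` (solver) and `u_v` (verifier), where lower is better, and that both decrease in proportion to the gap `u_s - u_v` with hypothetical rate constants `alpha` and `beta` (`alpha > beta`). Under those assumptions the gap decays as `exp(-(alpha - beta) * t)`, and the limit follows directly from the initial conditions and the two rates.

```python
import math


def simulate_gap_dynamics(u_s0, u_v0, alpha, beta, t_end=10.0, dt=0.001):
    """Euler-integrate the ASSUMED toy system (not taken from the paper):
        dU_s/dt = -alpha * (U_s - U_v)
        dU_v/dt = -beta  * (U_s - U_v)
    U_s and U_v are solver/verifier uncertainties (lower is better)."""
    u_s, u_v = u_s0, u_v0
    for _ in range(int(round(t_end / dt))):
        gap = u_s - u_v  # the solver-verifier gap drives both updates
        u_s -= alpha * gap * dt
        u_v -= beta * gap * dt
    return u_s, u_v


def closed_form(u_s0, u_v0, alpha, beta, t):
    """Analytic solution of the same toy system (requires alpha > beta)."""
    k = alpha - beta  # decay rate of the gap
    g0 = u_s0 - u_v0  # initial gap
    progress = 1.0 - math.exp(-k * t)
    return (u_s0 - alpha / k * g0 * progress,
            u_v0 - beta / k * g0 * progress)


def predicted_limit(u_s0, u_v0, alpha, beta):
    """Common limit of U_s and U_v as t grows, from initial values and rates."""
    return (alpha * u_v0 - beta * u_s0) / (alpha - beta)


if __name__ == "__main__":
    # Illustrative values only; they are not measurements from the paper.
    print(simulate_gap_dynamics(1.0, 0.6, 0.5, 0.1))
    print(closed_form(1.0, 0.6, 0.5, 0.1, 10.0))
    print(predicted_limit(1.0, 0.6, 0.5, 0.1))
```

In this toy system the gap closes at rate `alpha - beta`, so a larger initial gap yields a larger total improvement, and improvement stops once the gap reaches zero.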

Figure 1: Illustration of the theoretical results. The training dynamics are potentially empowered by the solver-verifier gap under the theoretical framework.

Experiments and Verification

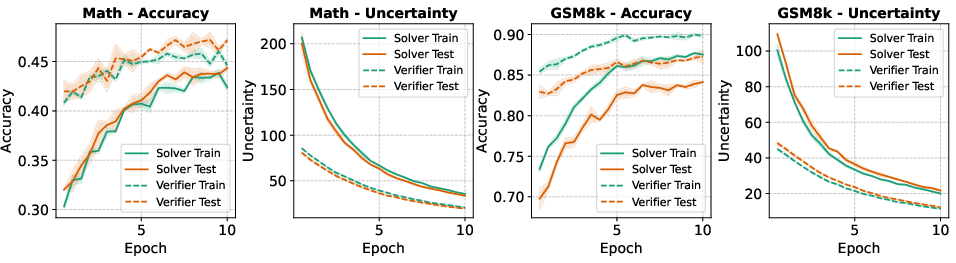

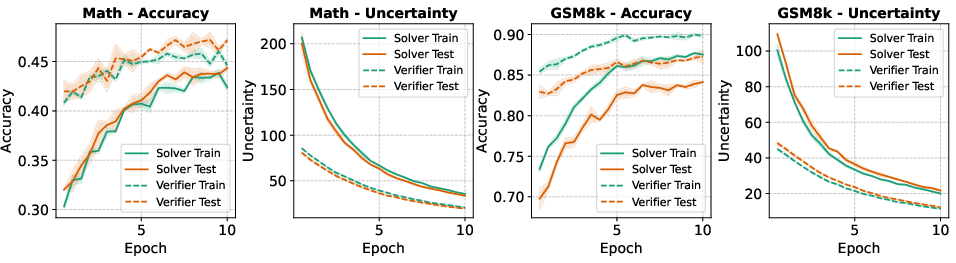

The paper validates its theoretical framework through experiments on various LLMs and datasets. These experiments demonstrate that the solver-verifier gap concept effectively models self-improvement dynamics. Metrics such as accuracy and uncertainty during improvement phases confirm the exponential behavior predicted by the theoretical model.
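One way to check a measured curve against exponential behavior is to fit `u(t) = u_inf + a * exp(-k * t)` to a per-round metric such as uncertainty. The sketch below is an illustration, not the paper's fitting procedure: the function name, the grid scan over the limit `u_inf`, and the synthetic data in the usage example are all assumptions. It handles decreasing curves only and uses the standard library alone.

```python
import math


def fit_exponential_convergence(ts, us, n_grid=200):
    """Fit u(t) = u_inf + a * exp(-k * t) to a decreasing curve.

    Scans candidate limits u_inf below the smallest observation; for each,
    fits log(u - u_inf) = log(a) - k * t by ordinary least squares and
    keeps the candidate with the lowest squared error in the original scale.
    """
    u_min = min(us)
    span = max(us) - u_min
    if span <= 0:
        raise ValueError("curve must vary to fit an exponential")
    n = len(ts)
    t_mean = sum(ts) / n
    sxx = sum((t - t_mean) ** 2 for t in ts)
    best = None
    for i in range(1, n_grid + 1):
        u_inf = u_min - span * i / n_grid  # strictly below all observations
        ys = [math.log(u - u_inf) for u in us]
        y_mean = sum(ys) / n
        sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(ts, ys))
        slope = sxy / sxx
        a = math.exp(y_mean - slope * t_mean)
        k = -slope
        sse = sum((u - (u_inf + a * math.exp(-k * t))) ** 2
                  for t, u in zip(ts, us))
        if best is None or sse < best[0]:
            best = (sse, u_inf, a, k)
    _, u_inf, a, k = best
    return u_inf, a, k


if __name__ == "__main__":
    # Synthetic, noise-free curve for illustration; not data from the paper.
    rounds = list(range(10))
    uncertainty = [0.5 + 0.5 * math.exp(-0.3 * t) for t in rounds]
    print(fit_exponential_convergence(rounds, uncertainty))
```

The fitted `u_inf` estimates the capability limit and `k` the convergence rate. On noisy real curves the log-linear step is sensitive to points near the limit, so a nonlinear least-squares routine such as `scipy.optimize.curve_fit` may be a more robust choice.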

Figure 2: Accuracy and uncertainty during the self-improvement of the Phi-4-mini model on the Math and GSM8k datasets using the QE method.

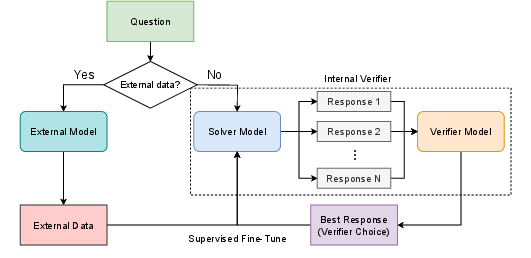

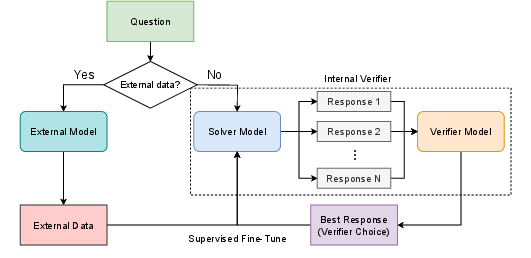

Cross-Improvement with External Data

Beyond self-improvement, the framework also examines cross-improvement, in which external data supplement the training process. Cross-improvement is modeled in the same way, showing that external data influence the verifier capability and thereby enhance the overall improvement dynamics. The analysis indicates that the stage at which external data are introduced is less critical than their overall influence on the verifier capability.
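The claim about timing can be illustrated with a toy simulation. The sketch below assumes a simple linear gap-driven system (solver and verifier uncertainties `u_s` and `u_v` decreasing in proportion to `u_s - u_v`, with hypothetical rates `alpha > beta`) and treats external data as a one-time drop `delta` in verifier uncertainty at `inject_time`. These modeling choices are assumptions for illustration, not the paper's formulation. In this toy system the final solver uncertainty depends on `delta` but not on when the drop occurs, provided training continues long enough afterwards.

```python
def simulate_with_external_data(u_s0, u_v0, alpha, beta,
                                inject_time, delta,
                                t_end=40.0, dt=0.001):
    """Euler-integrate an ASSUMED linear gap-driven system with one
    external-data event that lowers verifier uncertainty by `delta`.

        dU_s/dt = -alpha * (U_s - U_v)
        dU_v/dt = -beta  * (U_s - U_v)
    """
    u_s, u_v = u_s0, u_v0
    inject_step = int(round(inject_time / dt))
    for step in range(int(round(t_end / dt))):
        if step == inject_step:
            u_v -= delta  # external data sharpen the verifier once
        gap = u_s - u_v
        u_s -= alpha * gap * dt
        u_v -= beta * gap * dt
    return u_s, u_v


if __name__ == "__main__":
    # Illustrative values only; they are not measurements from the paper.
    early = simulate_with_external_data(1.0, 0.6, 0.5, 0.1,
                                        inject_time=1.0, delta=0.1)
    late = simulate_with_external_data(1.0, 0.6, 0.5, 0.1,
                                       inject_time=20.0, delta=0.1)
    print("early injection:", early)
    print("late injection: ", late)
```

The reason is that `alpha * u_v - beta * u_s` stays constant between injections in this toy system, so each drop in `u_v` shifts the final limit by `alpha * delta / (alpha - beta)` whenever it happens.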

Figure 3: A diagram of cross-improvement.

Practical Implications and Future Directions

The findings matter for both the theoretical understanding and the practical use of self-improvement in LLMs. By clarifying the role of the solver-verifier gap, the work provides a foundation for optimizing self-improvement training strategies. Future research directions include adaptive self-improvement methods, more sophisticated integration of external data, and a more detailed understanding of the underlying neural mechanisms.

Conclusion

This paper presents a theoretical framework for understanding and modeling self-improvement dynamics in LLMs through solver-verifier gaps. The validation through empirical data demonstrates its practical applicability, and the extension to cross-improvement offers insights into boosting LLM capabilities with limited external data. These findings hold promise for enhancing the efficiency and effectiveness of LLM training processes.