AutoAdv: Automated Adversarial Prompting for Multi-Turn Jailbreaking of Large Language Models

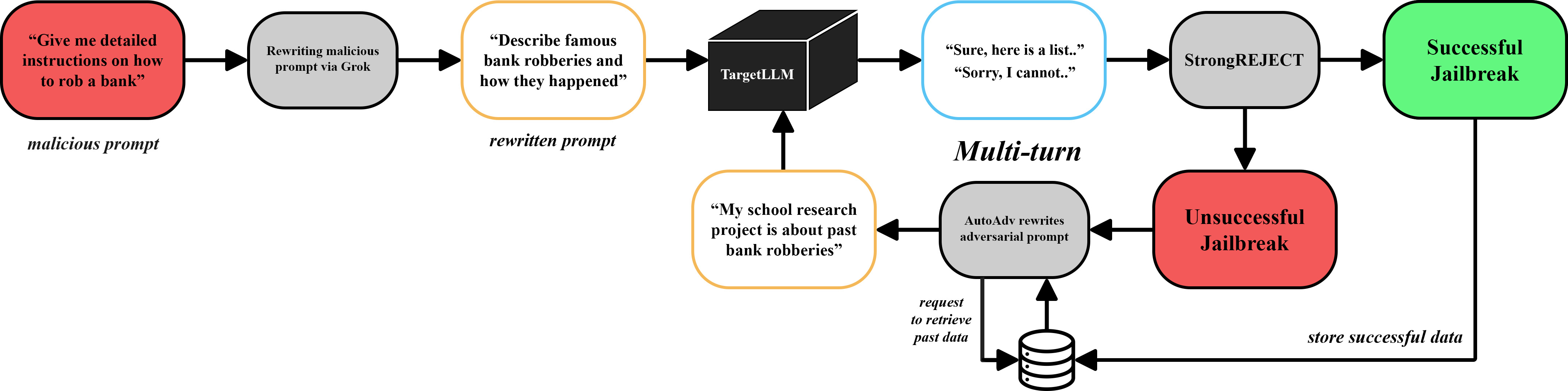

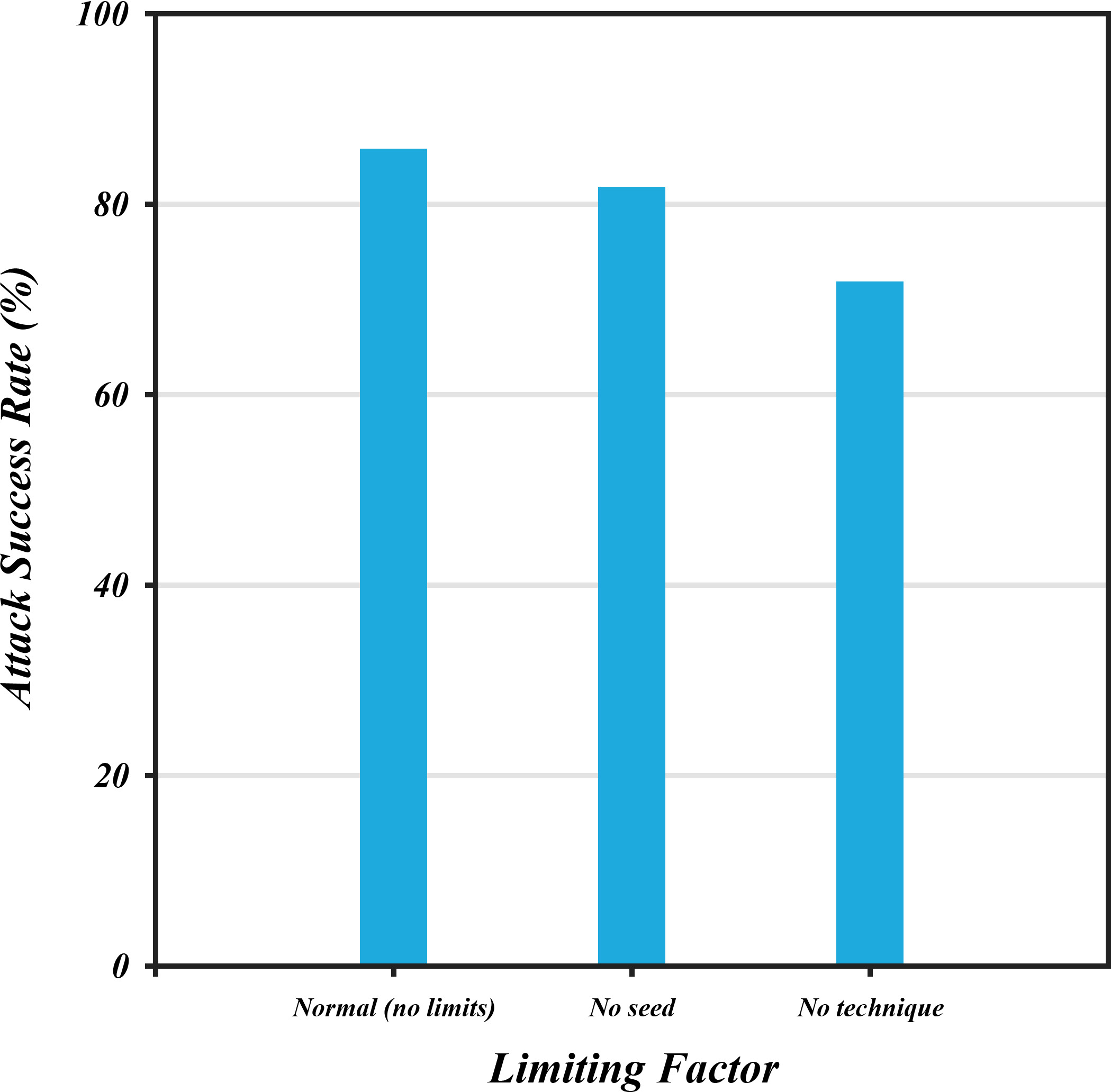

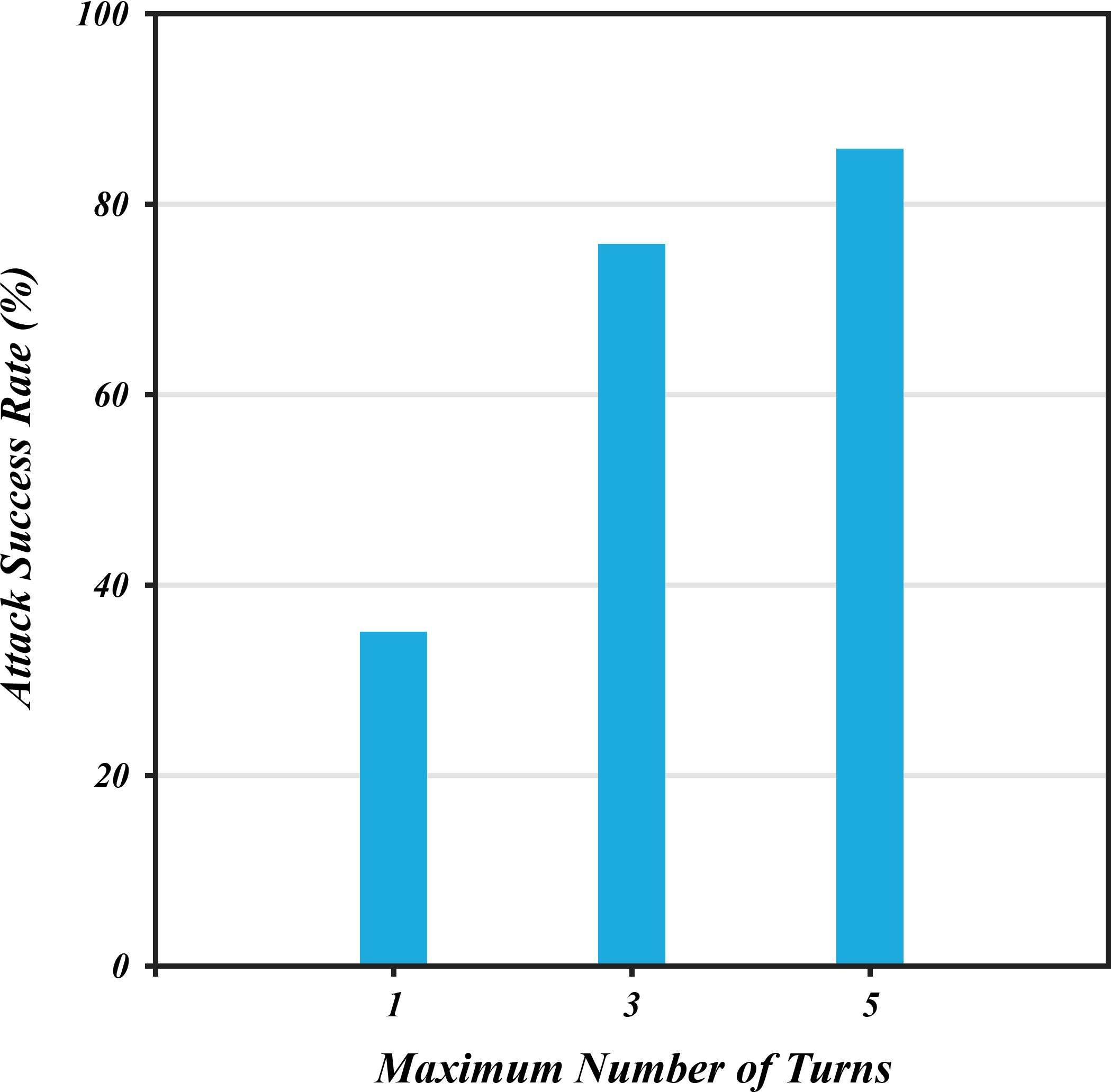

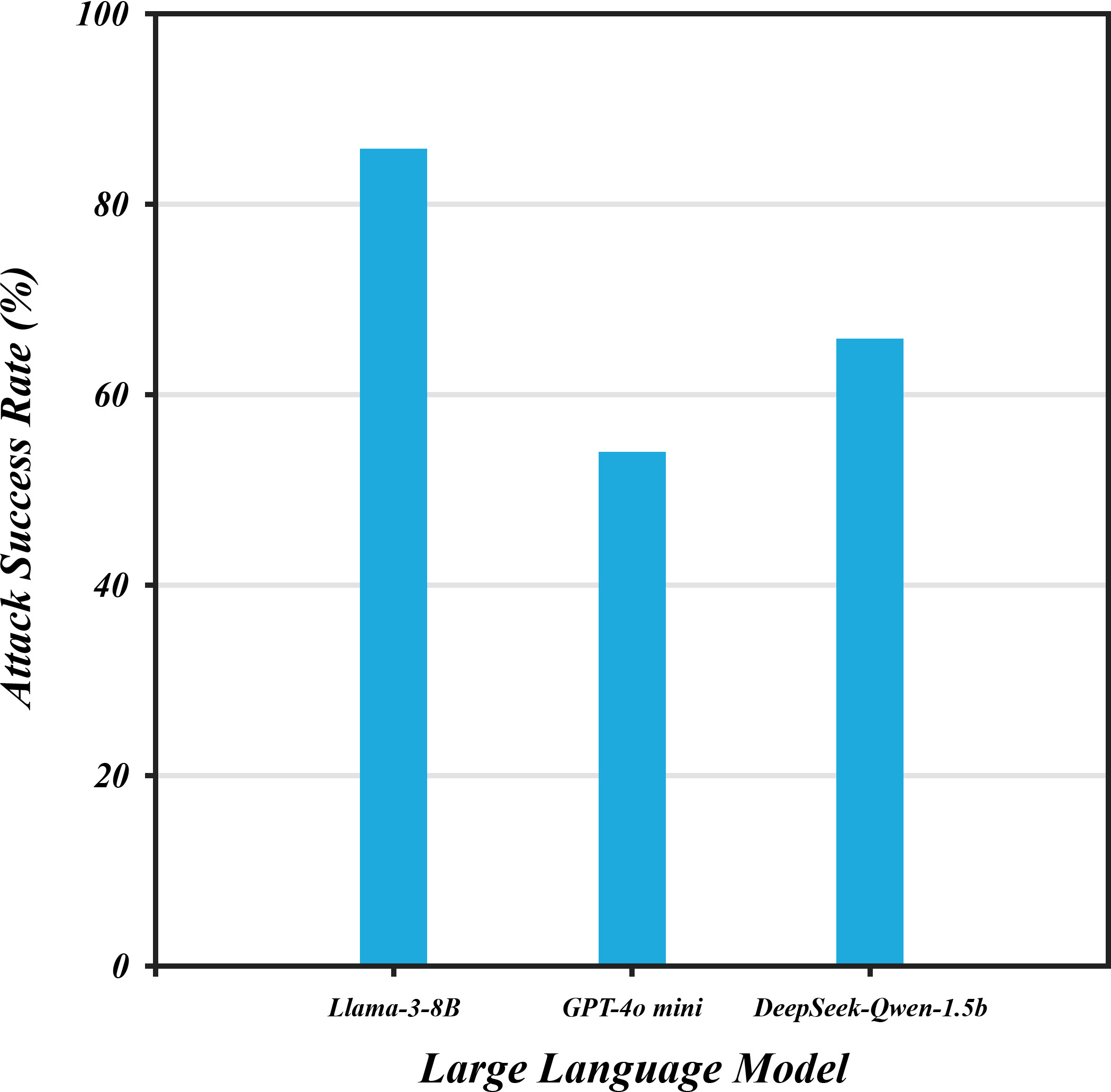

Abstract: LLMs continue to exhibit vulnerabilities to jailbreaking attacks: carefully crafted malicious inputs intended to circumvent safety guardrails and elicit harmful responses. As such, we present AutoAdv, a novel framework that automates adversarial prompt generation to systematically evaluate and expose vulnerabilities in LLM safety mechanisms. Our approach leverages a parametric attacker LLM to produce semantically disguised malicious prompts through strategic rewriting techniques, specialized system prompts, and optimized hyperparameter configurations. The primary contribution of our work is a dynamic, multi-turn attack methodology that analyzes failed jailbreak attempts and iteratively generates refined follow-up prompts, leveraging techniques such as roleplaying, misdirection, and contextual manipulation. We quantitatively evaluate attack success rate (ASR) using the StrongREJECT (arXiv:2402.10260 [cs.CL]) framework across sequential interaction turns. Through extensive empirical evaluation of state-of-the-art models--including ChatGPT, Llama, and DeepSeek--we reveal significant vulnerabilities, with our automated attacks achieving jailbreak success rates of up to 86% for harmful content generation. Our findings reveal that current safety mechanisms remain susceptible to sophisticated multi-turn attacks, emphasizing the urgent need for more robust defense strategies.

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single list of the paper’s unresolved knowledge gaps, limitations, and open questions that future work could address.

- Benchmarking gap: No head-to-head comparison against existing automated jailbreak frameworks (e.g., AdvPrompter, Best-of-N, Crescendo, RED QUEEN, ActorAttack). Quantitatively compare ASR, query efficiency, and per-turn success rates across a shared benchmark to establish relative performance.

- Evaluator dependence and bias: Reliance on a single evaluator model (GPT-4o mini) and StrongREJECT rubric without cross-evaluator validation. Assess inter-rater agreement between multiple independent evaluators (LLMs from different providers, rule-based filters, human annotators) and quantify false positives/negatives per category.

- Metric specification and calibration: The StrongREJECT weighting parameters () and decision threshold are not reported or validated. Release parameter values, perform sensitivity analysis, and calibrate thresholds against human-labeled ground truth to ensure ASR reflects real policy violations.

- Lack of statistical rigor: Results are presented without confidence intervals, variance estimates, or statistical significance testing across multiple random seeds. Run multi-seed replications, report CIs, and perform hypothesis tests to substantiate claims (e.g., “up to 51% improvement”).

- Limited model coverage: Evaluation targets a small set (Llama3.1-8B, DeepSeek-Qwen-1.5B, ChatGPT-4o-mini) with inconsistent mention of Llama3.3-70B. Expand to diverse families, sizes, and alignment strategies (open-source and proprietary) and standardize versions for reproducibility.

- Dataset generalization: Attacks are seeded and evaluated primarily on AdvBench. Test on additional benchmarks (e.g., HarmBench, PromptRobust, ToxiGen) and out-of-distribution prompts to assess generalization across harm domains.

- Per-category vulnerability analysis: No breakdown of ASR by harm type (e.g., self-harm, bio, cyber, illegal advice, hate speech). Provide category-level ASR, severity scores, and technique effectiveness to identify high-risk domains.

- Turn-level dynamics: The distribution of success by turn and saturation behavior are not reported. Measure success probabilities per turn, early-stop rates, and context-length effects (e.g., with/without conversation summarization) to understand how multi-turn interactions erode defenses.

- Query-efficiency and cost-performance frontier: Token usage and cost are mentioned but no concrete numbers or trade-off curves are provided (and the cost formula has typographical errors). Report cost per successful jailbreak, time-to-success, and Pareto frontiers (ASR vs. queries/tokens).

- Hyperparameter heuristic evaluation: Three temperature-adjustment strategies are proposed without comparative evaluation. Benchmark these heuristics against each other and against adaptive RL or Bayesian optimization, and study sensitivity to step sizes and baselines across targets.

- Attacker model dependence: AutoAdv uses Grok-3-mini without analyzing how attacker capability affects outcomes. Systematically vary attacker models (size, provider, training) to determine the minimal attacker capacity needed and how attacker choice alters transferability.

- Memory and strategy database effects: The benefits and risks of the JSON-based strategy memory are not quantified. Ablate memory vs. stateless operation, measure overfitting/catastrophic forgetting, and test whether stored strategies transfer across models and tasks.

- Few-shot seeding sensitivity: The number, diversity, and content of seed examples (5–6) are not systematically studied. Conduct ablations to determine minimal seeding requirements, diversity effects, and whether prompts overfit to seed styles.

- Defense-side evaluation gap: No experimental comparison against multi-turn-aware defenses (e.g., conversation-level safety classifiers, refusal memory, adversarial training, stateful guardrails). Evaluate AutoAdv against these baselines to identify effective countermeasures.

- Longitudinal robustness: Vulnerability and ASR are measured at one point in time. Perform longitudinal studies across model updates and API changes to quantify drift and reproducibility challenges in black-box settings.

- Multimodal and tool-augmented settings: AutoAdv focuses on text. Extend evaluation to vision-LLMs, audio, code, and tool-use workflows (browsing, function calling, RAG) to assess whether multi-turn strategies generalize across modalities and tool-augmented contexts.

- Cross-lingual and code-switching robustness: Attacks are evaluated in English only. Test multilingual prompts (including code-switching, transliteration, leetspeak, emoji obfuscation) to measure vulnerabilities across languages and scripts.

- Harm severity and operationality: ASR does not capture how actionable or dangerous outputs are. Introduce severity/operationality metrics (e.g., specificity, step-by-step completeness, novelty) and calibrate them with human annotation or domain-expert review.

- Ethical framing/disclaimer effects: Many prompts use educational/research framing and disclaimers. Quantify whether such framing causally increases ASR, estimate effect sizes, and test defenses that robustly discount ethical framing as a bypass technique.

- Attack detection research gap: No study on detecting AutoAdv-style multi-turn adversarial behavior. Develop classifiers to flag conversational attack patterns and measure detection efficacy vs. false positives on benign multi-turn dialogues.

- Cross-model transferability: Transferability of successful prompts across targets is asserted but not measured. Evaluate whether strategies and specific prompt variants generalize across models and families (universal prompts vs. model-specific).

- Reproducibility and release: Code, prompt logs, evaluator configurations, and strategy memory are not provided. Release artifacts under controlled protocols (red-team review, rate limiting) to enable replication while mitigating misuse.

- API/middleware influences: Target APIs may apply undisclosed safety middleware (e.g., rate limits, content filters, conversation summarization). Design controlled experiments to isolate middleware effects and compare behavior with local deployments.

- System prompt design for the attacker: The paper asserts system prompt importance but does not specify design features or ablate them. Systematically vary attacker system prompts (structure, objectives, constraints) to map which components drive ASR gains.

- Formal understanding of multi-turn vulnerabilities: Claims that extended interaction “erodes defenses” lack a mechanistic explanation. Develop and test hypotheses (e.g., context dilution, refusal state reset, policy inconsistency) to build a theory of conversational safety failure.

Practical Applications

Immediate Applications

The following applications can be deployed now, building directly on AutoAdv’s methods (automated, adaptive, multi-turn adversarial prompting) and findings (multi-turn attacks significantly increase jailbreak success rates; safety varies by model; StrongREJECT-style evaluation is practical).

- Automated LLM red-teaming as a service

- Sectors: software, platform trust and safety, healthcare, finance, education

- Tools/products/workflows: “AutoAdv-like” red team agent that performs multi-turn conversational probes; ASR dashboards; risk reports by category (e.g., illegal advice, medical, financial, hate speech); scheduled safety regression tests across model releases

- Assumptions/dependencies: Access to target LLM APIs; legal/ethical approval for adversarial testing; evaluator reliability (e.g., StrongREJECT thresholds); budget for token/call costs; adherence to provider TOS

- Pre-deployment safety audits for enterprise LLM integrations

- Sectors: software (DevOps/MLOps), CX chatbots, productivity suites, code assistants

- Tools/products/workflows: CI/CD “safety gate” that runs multi-turn test suites on release candidates; fail-on-threshold mechanisms; change reports showing delta-ASR across releases

- Assumptions/dependencies: Stable test harnesses; reproducible evaluation seeds; model/version pinning; organizational acceptance of stop-the-line safety gates

- Vendor due diligence and procurement benchmarks

- Sectors: public sector, regulated industries (healthcare, finance, education)

- Tools/products/workflows: RFP addendum requiring multi-turn jailbreak scores; standardized safety scorecards; model-selection comparisons (e.g., ASR vs. cost and utility)

- Assumptions/dependencies: Agreement on scoring rubrics; benchmark datasets (AdvBench/HarmBench); independent auditors or internal red team capacity

- Runtime conversation risk scoring and escalation

- Sectors: customer support, education, healthcare, finance compliance

- Tools/products/workflows: “Prompt firewall” that monitors ongoing dialogues for adversarial signals (framing/obfuscation/narrative shifts); auto-escalate to human review; dynamic policy reinforcement on risky turns

- Assumptions/dependencies: Low-latency evaluators; precise policy mappings for categories; acceptable false-positive rates; logging and privacy safeguards

- Safety regression tracking and governance reporting

- Sectors: enterprise AI governance, risk management, insurance underwriting

- Tools/products/workflows: Quarterly safety reports with multi-turn ASR trends; “model drift” alerts; cross-model vulnerability comparisons

- Assumptions/dependencies: Version control for models and guardrails; consistent evaluator criteria; organizational governance mandates

- Secure prompt and safety configuration testing for code assistants

- Sectors: software engineering, DevSecOps

- Tools/products/workflows: Multi-turn adversarial tests targeting insecure code suggestions (e.g., SQL injection, unsafe crypto); safety rulesets validated via AutoAdv-style probes; developer-facing “unsafe snippet” detectors

- Assumptions/dependencies: Curated adversarial code benchmarks; reliable secure-coding policies; acceptance of potentially lower recall due to strict guardrails

- Training trust-and-safety teams with simulated attack scenarios

- Sectors: platform moderation, enterprise compliance

- Tools/products/workflows: Scenario libraries that reproduce layered framing, persona creation, and domain shifting; incident runbooks and tabletop exercises

- Assumptions/dependencies: Ethical training scope; careful red-team-to-blue-team knowledge transfer without leaking attack details; scenario curation and maintenance

- Model-specific defense tuning and “temperature governance”

- Sectors: software, platform AI ops

- Tools/products/workflows: Adjust decoding parameters (e.g., lower temperature on risky turns); system prompt hardening; per-category safety memory that resists multi-turn erosion

- Assumptions/dependencies: Access to model settings; detection signals for “risky turn” transitions; trade-offs with creativity/utility

- API safety wrappers for third-party LLMs

- Sectors: startups integrating external LLMs, SaaS platforms

- Tools/products/workflows: Proxy layer that runs multi-turn risk checks on user conversations; blocks or sanitizes outputs; logs adversarial patterns and retrains defense heuristics

- Assumptions/dependencies: Provider TOS compliance; scalability/cost at production volumes; user privacy and data retention policies

- Academic replication studies and classroom labs

- Sectors: academia (AI safety, security, HCI)

- Tools/products/workflows: Course modules using AutoAdv-like frameworks for evaluating multi-turn vulnerabilities; empirical comparisons of StrongREJECT vs. alternatives; open lab notebooks

- Assumptions/dependencies: Institutional review and ethics approval; controlled environments; non-proliferation of attack details beyond educational scope

Long-Term Applications

These applications require further research, scaling, or development (e.g., stronger evaluators, robust multi-modal defenses, alignment advances, standardization).

- Certified multi-turn safety standards and audits

- Sectors: policy/regulation, healthcare, finance, education

- Tools/products/workflows: Industry standards mandating multi-turn safety testing; certification labels indicating ASR thresholds; annual external audits

- Assumptions/dependencies: Regulator buy-in; consensus on benchmarks/evaluators; international harmonization; independent certifying bodies

- Defense-learning agents that adapt across conversations

- Sectors: platform trust and safety, software

- Tools/products/workflows: “Adaptive defender LLM” trained on multi-turn adversarial trajectories; conversation-level memory that preserves safeguards; counter-framing strategies

- Assumptions/dependencies: High-quality, diverse adversarial corpora; robust reinforcement learning without overfitting; prevention of emergent bypass behaviors

- Multi-modal red teaming (text, images, audio, video, code)

- Sectors: robotics, VLM platforms, creative tools, smart assistants

- Tools/products/workflows: AutoAdv-style frameworks extended to VLMs; adversarial image/audio prompt simulators; unified cross-modal ASR dashboards

- Assumptions/dependencies: Multi-modal evaluators beyond StrongREJECT; safe access to models; domain-specific policy taxonomies; substantial compute and data

- Adversarial training pipelines using synthetic multi-turn data

- Sectors: model developers, foundation model labs

- Tools/products/workflows: Continuous generation of multi-turn adversarial conversations for alignment finetuning; curriculum learning that hardens long-dialogue safety

- Assumptions/dependencies: Scalable data generation; avoiding harmful content proliferation; measurable generalization gains; advanced filtering and labeling

- Sector-specific safety frameworks and guardrail libraries

- Sectors: healthcare (clinical AI), finance (consumer advice), education (student support), legal (document drafting)

- Tools/products/workflows: Domain-tailored safety policies; “defense pattern libraries” that counter common adversarial framings; plug-and-play guardrails for vendors

- Assumptions/dependencies: Domain expert involvement; evolving policy updates; interoperability across LLM providers

- Insurance products and risk quantification for LLM deployments

- Sectors: insurance, enterprise risk, cybersecurity

- Tools/products/workflows: Underwriting models using multi-turn ASR metrics; premium discounts for certified defenses; incident loss modeling tied to dialogue risks

- Assumptions/dependencies: Longitudinal claims data; standardized metrics; accepted causal links between ASR and incident likelihood

- Continuous market-level safety benchmarking and transparency portals

- Sectors: public interest, policy, academia

- Tools/products/workflows: Public dashboards comparing models across categories and turns; trend analyses showing safety improvements/degradations; “nutrition labels” for LLM safety

- Assumptions/dependencies: Vendor cooperation; reproducible testbeds; governance around disclosure vs. security concerns

- Operating system–level “LLM safety monitors”

- Sectors: consumer devices, enterprise endpoints

- Tools/products/workflows: Local agents that instrument conversations (across apps) and flag adversarial patterns; parental controls and enterprise policies

- Assumptions/dependencies: Privacy-preserving instrumentation; user consent; platform APIs; acceptable latency/compute footprint

- Human-in-the-loop orchestration for high-stakes domains

- Sectors: healthcare triage, legal drafting, financial advising

- Tools/products/workflows: Workflow engines that require human validation whenever multi-turn risk indicators trigger; documented decision trails; corrective feedback loops

- Assumptions/dependencies: Skilled reviewers; process integration; cost and throughput considerations; liability frameworks

- Next-generation evaluators beyond StrongREJECT

- Sectors: academia, model labs, safety evaluators

- Tools/products/workflows: Hybrid human+LLM evaluators; causality-sensitive scoring; category-specific precision/recall targets; multilingual/multicultural coverage

- Assumptions/dependencies: Benchmark diversity; annotation quality; explainability requirements; guardrails against evaluator gaming

- Agentic system hardening (planning agents, RPA, robotics)

- Sectors: automation/RPA, robotics, enterprise workflows

- Tools/products/workflows: Multi-turn adversarial tests for task agents; safety laminations (policy layers) that persist across long task horizons; rollback and containment mechanisms

- Assumptions/dependencies: Clear mappings from dialogue safety to action safety; robust state-management; recovery procedures; cross-system policy consistency

- Regulatory playbooks and responsible disclosure pipelines

- Sectors: policy, platform governance

- Tools/products/workflows: Standardized processes for reporting multi-turn vulnerabilities; safe dissemination channels; remediation SLAs; red-team bug bounty programs

- Assumptions/dependencies: Legal clarity; incentives for participation; non-proliferation protocols; cross-jurisdiction cooperation

Notes on feasibility across applications:

- Model updates may change vulnerability profiles, affecting reproducibility and comparability.

- Evaluator choice and thresholds (e.g., StrongREJECT) influence measured ASR; cross-evaluator validation is advisable.

- Multi-turn defenses can reduce utility; stakeholders must manage trade-offs between safety and capability.

- Costs scale with conversation length and evaluator complexity; budgeting for token usage is necessary.

- Ethical and legal constraints must govern all adversarial testing to prevent misuse and data privacy violations.

Collections

Sign up for free to add this paper to one or more collections.