- The paper introduces a dual-training strategy that explicitly anchors the model in the probability flow using a flow matching task.

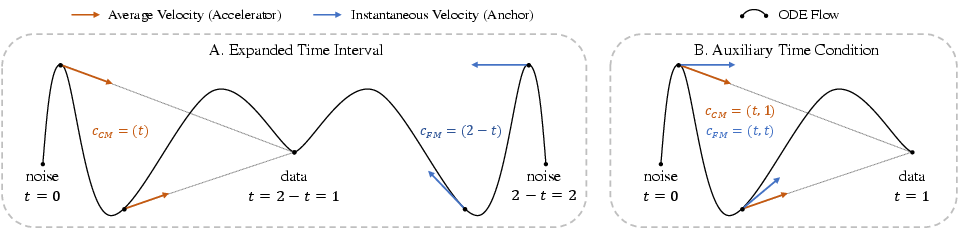

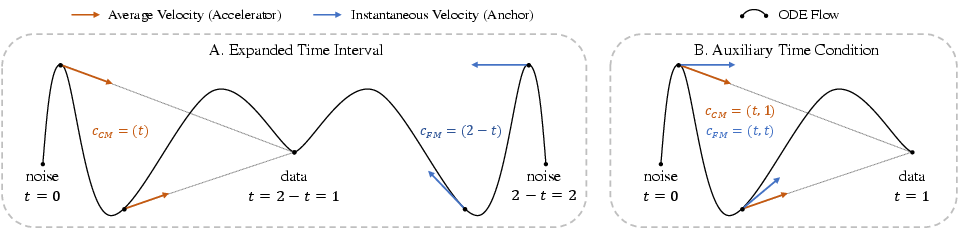

- It proposes two implementations, an expanded time interval and an auxiliary time condition, that stabilize training without altering the model architecture.

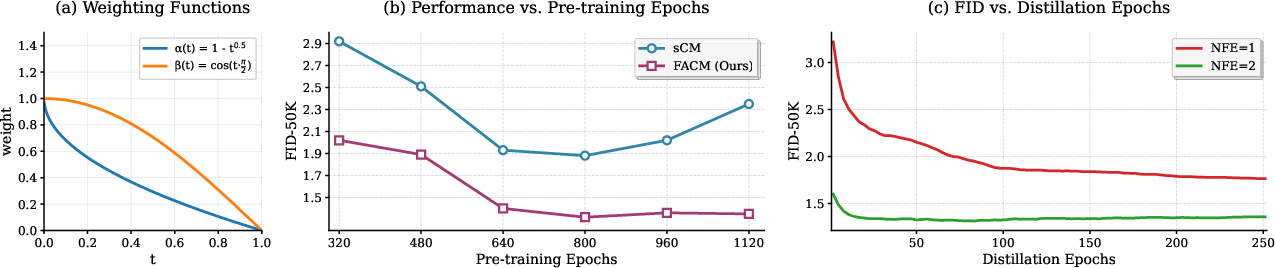

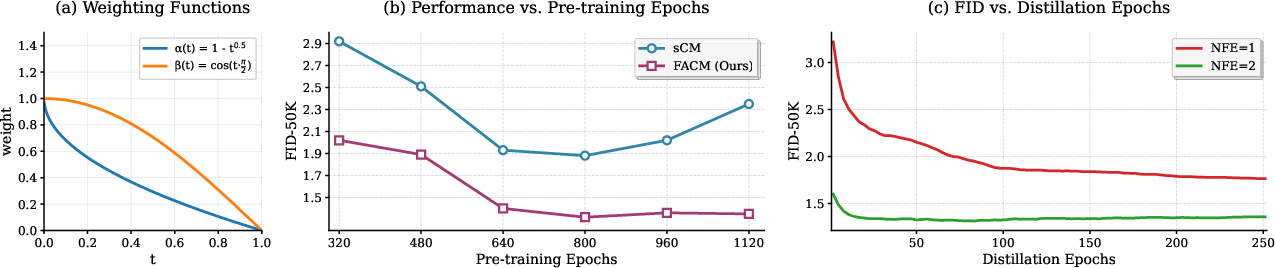

- On ImageNet 256×256, FACM reports FID scores of 1.76 (NFE=1) and 1.32 (NFE=2).

Flow-Anchored Consistency Models

Introduction

Flow-Anchored Consistency Models (FACMs) address training instability in continuous-time Consistency Models (CMs) with a training strategy that anchors the model in the probability flow it learns to shortcut. The paper leaves the model architecture unchanged and adds a Flow Matching (FM) task to the CM framework as a stabilizing objective. The approach is supported by improved FID scores on ImageNet 256×256, which is relevant to real-time generative applications where few-step sampling matters.

Theoretical Foundation and Methodology

The paper attributes the instability of CM training to a lack of explicit anchoring in the velocity field of the probability flow. A CM objective trains the model to shortcut that flow, which implicitly assumes the model already represents the velocity field well; when it does not, performance degrades. FACM adds an explicit FM task that learns the velocity field, keeping the model anchored in the flow while it learns the shortcut.
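One way to see why the velocity field matters is to write out the condition a consistency function must satisfy. The notation below ($f$ for the consistency function, $v$ for the velocity field of the probability flow ODE $\mathrm{d}x_t/\mathrm{d}t = v(x_t, t)$, $F_\theta$ for the network) is assumed for illustration and is not taken from the source. A consistency function is constant along each trajectory of the flow, so its total time derivative vanishes:

$$
\frac{\mathrm{d}}{\mathrm{d}t} f(x_t, t) \;=\; \partial_t f(x_t, t) \;+\; \nabla_x f(x_t, t)\, v(x_t, t) \;=\; 0 .
$$

The velocity $v$ appears directly in this condition, which is consistent with the argument that the shortcut depends on how well the flow itself is represented. Under an assumed linear path $x_t = (1-t)\,x_0 + t\,x_1$ between data $x_0$ and noise $x_1$, a standard FM loss that supervises the velocity explicitly is:

$$
\mathcal{L}_{\mathrm{FM}} \;=\; \mathbb{E}_{x_0, x_1, t}\, \bigl\lVert F_\theta(x_t, t) - (x_1 - x_0) \bigr\rVert^2 .
$$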

A dual-objective approach is implemented:

- Flow-Anchoring Objective: This explicitly trains the model on the instantaneous velocity field for stability.

- Shortcut Objective: This trains the one-step consistency mapping that makes sampling efficient.
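The two objectives can be sketched as a single loss. This is a minimal NumPy illustration, not the paper's implementation: the linear path, the `model(x, t, task)` signature, the `fm_weight` parameter, and all function names are assumptions. The shortcut term also uses a discrete-time consistency surrogate in place of the continuous-time objective, purely to keep the example free of automatic differentiation.

```python
import numpy as np

def interpolate(x0, x1, t):
    """Assumed linear path from data x0 (t=0) to noise x1 (t=1)."""
    return (1.0 - t) * x0 + t * x1

def flow_anchoring_loss(model, x0, x1, t):
    """Flow matching term: regress the model output onto the path velocity x1 - x0."""
    x_t = interpolate(x0, x1, t)
    return float(np.mean((model(x_t, t, "fm") - (x1 - x0)) ** 2))

def shortcut_loss(model, x0, x1, t, dt=1e-2):
    """Discrete-time consistency surrogate (a stand-in for the continuous-time loss):
    one-step data predictions made at t and at t - dt on the same path should agree."""
    def to_data(x, s):
        # Jump from time s straight to t=0 using the model output as a direction.
        return x - s * model(x, s, "cm")

    s = np.maximum(t - dt, 0.0)
    pred = to_data(interpolate(x0, x1, t), t)
    # In real training this target would be held constant (stop-gradient).
    target = to_data(interpolate(x0, x1, s), s)
    return float(np.mean((pred - target) ** 2))

def facm_style_loss(model, x0, x1, t, fm_weight=1.0):
    """Sum of the shortcut term and the (hypothetically weighted) flow-anchoring term."""
    return shortcut_loss(model, x0, x1, t) + fm_weight * flow_anchoring_loss(model, x0, x1, t)
```

A model that outputs the exact path velocity drives both terms to zero, which is a quick sanity check that the two objectives are compatible under these assumptions.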

The FACM strategy includes two primary implementations:

- Expanded Time Interval: Conceptually extends the time domain so that the FM and CM tasks are assigned to distinct ranges of the time condition, without modifying the existing architecture.

- Auxiliary Time Condition: Introduces an additional time condition distinguishing FM and CM tasks via conditioning tuples.

By employing these strategies, FACM achieves high performance without necessitating architectural revisions.
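The two conditioning schemes can be illustrated with small helper functions. The source only states that the tasks receive distinct temporal conditions or conditioning tuples, so the specific choices here are assumptions made for the sketch: mapping the FM task to `2 - t`, using the tuple `(t, t)` for FM and `(t, t_end)` for CM, and the function names themselves.

```python
import numpy as np

def expanded_time_condition(t, task):
    """Expanded time interval (assumed mapping): CM keeps t in [0, 1],
    FM is moved to 2 - t in [1, 2], so one scalar input separates the tasks."""
    t = np.asarray(t, dtype=float)
    if task == "cm":
        return t
    if task == "fm":
        return 2.0 - t
    raise ValueError(f"unknown task: {task!r}")

def auxiliary_time_condition(t, task, t_end=0.0):
    """Auxiliary time condition (assumed tuples): a second time value r marks the task.
    FM uses (t, t), i.e. an instantaneous velocity; CM uses (t, t_end), i.e. a jump to t_end."""
    t = np.asarray(t, dtype=float)
    if task == "fm":
        r = t
    elif task == "cm":
        r = np.full_like(t, t_end)
    else:
        raise ValueError(f"unknown task: {task!r}")
    return np.stack([t, r], axis=-1)
```

In both variants the network itself is untouched: only the values fed to its existing time-conditioning input (or one added conditioning value) change. Under the assumed `2 - t` mapping the two ranges meet only at the boundary value 1.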

Figure 1: Two implementation strategies for the mixed-objective function in FACM.

Experimental Validation

FACM is evaluated on ImageNet 256×256, where it reaches Fréchet Inception Distance (FID) scores of 1.76 with one function evaluation (NFE=1) and 1.32 with two (NFE=2). These results outperform previous methods without compromising computational efficiency.
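To make the NFE counts concrete, here is a sketch of how a consistency function might be sampled with one or two evaluations. The source does not describe the sampler, so this assumes generic multistep consistency sampling on a linear path; the `sample` function, the `consistency_fn(x, t)` signature, and the intermediate time `0.5` are hypothetical placeholders.

```python
import numpy as np

def sample(consistency_fn, noise, times=(1.0,), rng=None):
    """Multistep consistency sampling on the assumed path x_t = (1 - t) * x0 + t * x1.
    The number of function evaluations (NFE) equals len(times)."""
    rng = np.random.default_rng() if rng is None else rng
    # First evaluation: jump from pure noise at times[0] to a data estimate.
    x0_hat = consistency_fn(noise, times[0])
    for t in times[1:]:
        # Re-noise the estimate to an intermediate time, then jump to data again.
        fresh_noise = rng.standard_normal(noise.shape)
        x_t = (1.0 - t) * x0_hat + t * fresh_noise
        x0_hat = consistency_fn(x_t, t)
    return x0_hat
```

With `times=(1.0,)` this uses a single evaluation (NFE=1); with `times=(1.0, 0.5)` it uses two (NFE=2).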

Figure 2: Performance of distilled models vs. FM pre-training epochs.

The gains hold across architectures, which suggests the method is architecture-agnostic. Performance is also consistent regardless of the pre-trained model or stabilization strategy used, a robustness attributed to flow anchoring.

Implications and Future Work

FACM adds flow anchoring as a stabilizing component in the training of generative models. The approach clarifies why CM training becomes unstable and supports practical use in settings that demand efficient generation at minimal computational cost.

Future work could explore sampling strategies that follow the true flow trajectories more closely, which may further improve efficiency. Extending FACM to larger and more complex tasks, such as text-to-image synthesis, is another direction that could broaden its use in content generation.

Conclusion

FACM improves the stability and performance of continuous-time Consistency Models through a simple anchoring strategy. By adding a flow matching task, it makes training more reliable and improves generation quality, with strong results on ImageNet 256×256 and potential applications in other generative modeling settings.