- The paper demonstrates that integrating curiosity (information gain) and competence (empowerment) improves exploration strategies in RL agents.

- It employs both a Tabular agent with fixed state representations and a Dreamer agent that learns latent models from raw pixel inputs.

- Results indicate that hybrid intrinsic motivation strategies yield robust performance in challenging and unpredictable grid environments.

Analysis of "From Curiosity to Competence: How World Models Interact with the Dynamics of Exploration"

Introduction

The paper "From Curiosity to Competence: How World Models Interact with the Dynamics of Exploration" (2507.08210) investigates the balance between curiosity-driven learning and competence-driven control in reinforcement learning (RL) agents. It bridges cognitive theories of intrinsic motivation with RL by formalizing how evolving internal representations mediate the trade-off between curiosity, often associated with novelty or information gain, and competence, typified by empowerment. This study employs both a Tabular agent with handcrafted state abstractions and a Dreamer agent learning an internal world model to explore this balance and its effects on exploration and learning efficacy.

Intrinsic Motivations and Environment Setup

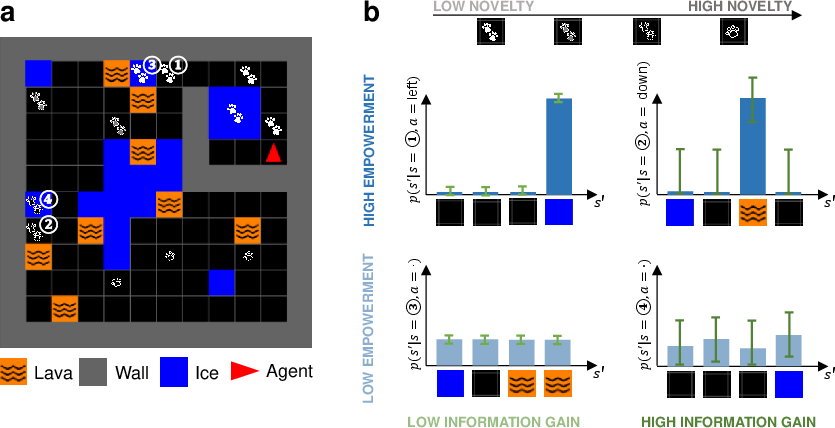

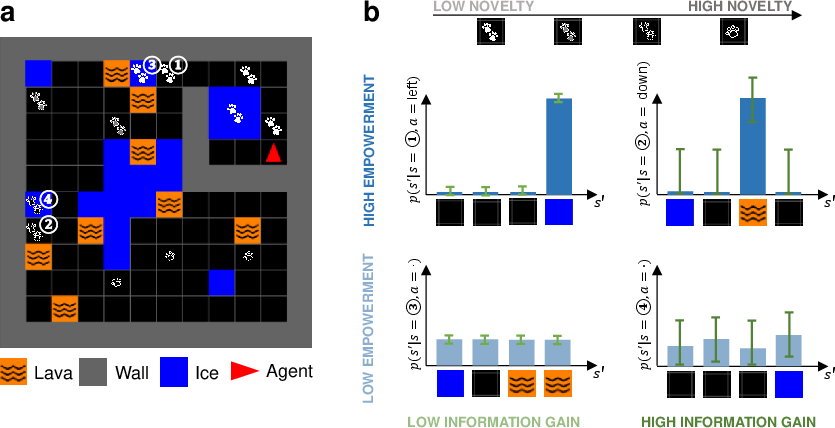

The study uses a grid environment with distinct terrain types, including lava, ice, and walls, to test how different intrinsic motivations shape behavior. Agents must navigate the grid while avoiding irreversible outcomes, exemplified by lava. An intrinsically motivated agent therefore has to explore enough to learn about the environment while retaining control over what happens next.

Figure 1: Task overview. The mixed-state playground entails navigation challenges including dangerous lava and stochastic ice tiles.

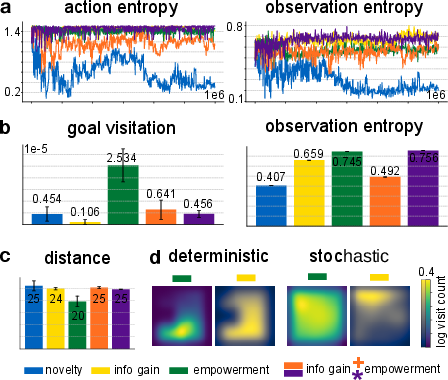

Three intrinsic motivations are examined: novelty (based on state visitation frequency), information gain (reduction in epistemic uncertainty), and empowerment (control over future states). These motivations aim to emulate human cognitive patterns where curiosity and competence co-evolve, facilitating both knowledge acquisition and mastery over environments.
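To make the first two signals concrete, the sketch below computes a count-based novelty bonus and an information-gain surrogate for a tabular transition model. It is an illustration under stated assumptions, not the paper's implementation: the class name `TabularCuriosity`, the `1 / sqrt(N + 1)` bonus, the symmetric Dirichlet pseudo-count, and the use of a KL divergence between predictive distributions as a stand-in for information gain are all choices made here.

```python
import math
from collections import defaultdict


class TabularCuriosity:
    """Count-based novelty plus a predictive information-gain surrogate.

    Hypothetical helper for illustration; not taken from the paper.
    """

    def __init__(self, n_states, prior=1.0):
        self.n_states = n_states
        self.visits = defaultdict(int)  # N(s): visits per state
        # Dirichlet pseudo-counts over next states for each (state, action).
        self.counts = defaultdict(lambda: [prior] * n_states)

    def novelty(self, state):
        # Assumed bonus form: shrinks as the state is visited more often.
        return 1.0 / math.sqrt(self.visits[state] + 1)

    @staticmethod
    def _predictive(counts):
        total = sum(counts)
        return [c / total for c in counts]

    def update(self, state, action, next_state):
        """Record one transition; return (novelty, info gain in nats)."""
        bonus = self.novelty(next_state)
        self.visits[next_state] += 1

        counts = self.counts[(state, action)]
        before = self._predictive(counts)
        counts[next_state] += 1.0
        after = self._predictive(counts)

        # KL(after || before): how far one observation moved the prediction.
        gain = sum(p * math.log(p / q) for p, q in zip(after, before) if p > 0)
        return bonus, gain


model = TabularCuriosity(n_states=3)
first = model.update(state=0, action=0, next_state=1)
second = model.update(state=0, action=0, next_state=1)
```

Repeating the same transition gives a smaller novelty bonus and a smaller information gain the second time, which is how a curious agent loses interest in what it has already learned.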

Agent Design and Simulations

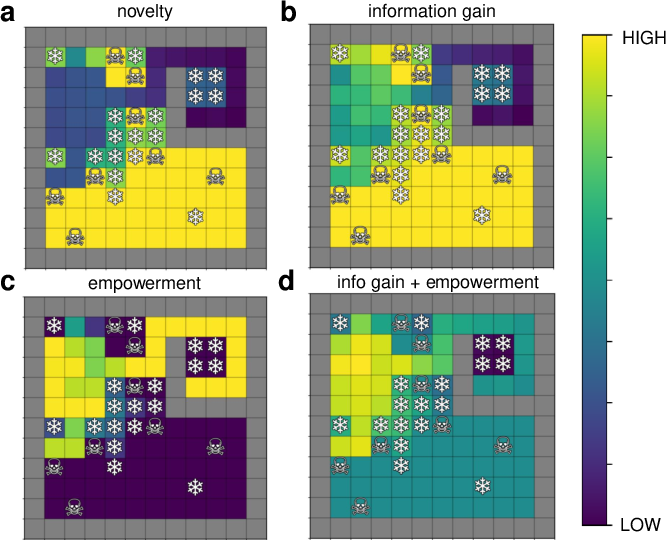

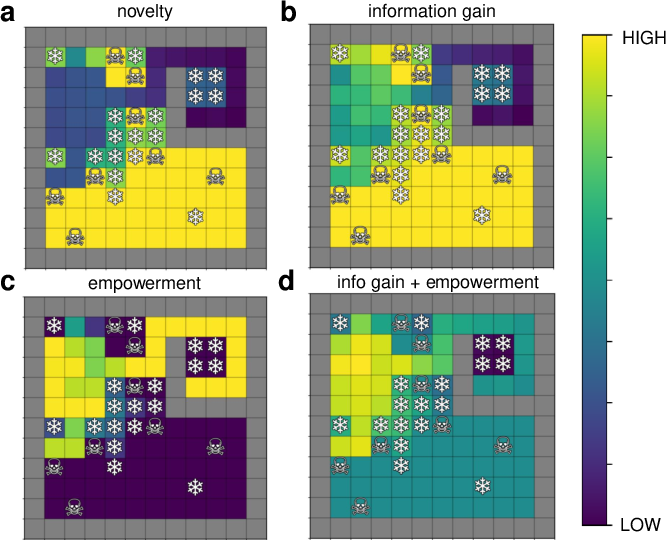

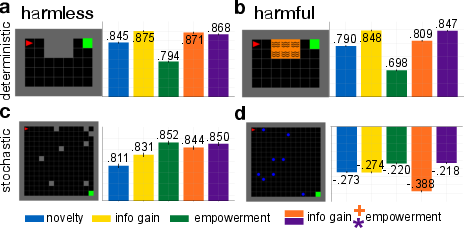

The study first analyzes a Tabular agent. Because its state representations are fixed, this agent isolates the effect of each intrinsic motivation on exploration within the mixed-state grid. The simulations show that combining curiosity-driven and competence-driven strategies improves exploration by balancing information seeking against retaining control. The pairing of information gain with empowerment is reported as especially advantageous in navigation tasks.
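One simple way to picture such a combination is a weighted sum of the two signals. The sketch below assumes a convex mix controlled by a hypothetical weight `beta`; the function names and the candidate values are invented for illustration, and the paper may combine the terms differently. In practice the two signals are on different scales and would need normalizing before mixing.

```python
def hybrid_bonus(info_gain, empowerment, beta=0.5):
    """Convex mix of a curiosity term and a competence term (assumed form)."""
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    return beta * info_gain + (1.0 - beta) * empowerment


def pick_action(candidates, beta=0.5):
    """Greedy choice; candidates maps action -> (info_gain, empowerment)."""
    return max(candidates, key=lambda a: hybrid_bonus(*candidates[a], beta=beta))


# Illustrative, made-up values for three options facing the agent.
candidates = {
    "toward_lava": (0.9, 0.0),  # informative, but control is lost
    "along_wall": (0.4, 1.5),   # moderately informative, control kept
    "known_floor": (0.0, 1.6),  # nothing left to learn, full control
}

curious = pick_action(candidates, beta=1.0)
cautious = pick_action(candidates, beta=0.0)
hybrid = pick_action(candidates, beta=0.5)
```

With these values the purely curious setting heads toward lava, the purely cautious one stays on known floor, and the mixed setting prefers the option that is both informative and safe.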

Figure 2: Intrinsic motivation heatmaps demonstrate how novelty, information gain, and empowerment impact exploration strategies on the grid.

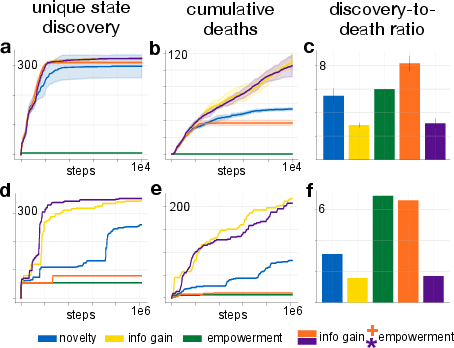

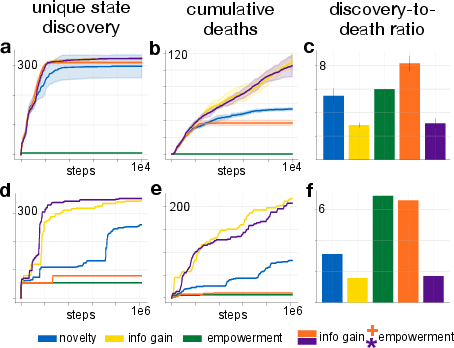

Because the Dreamer agent learns its representations as it goes, it shows how intrinsic motivations interact with evolving state abstractions. Unlike the Tabular agent, the Dreamer agent learns from raw pixel observations and constructs its latent state representations autonomously. As it navigates the task space, it balances its intrinsic motivations against environmental feedback and adapts its strategy in real time.

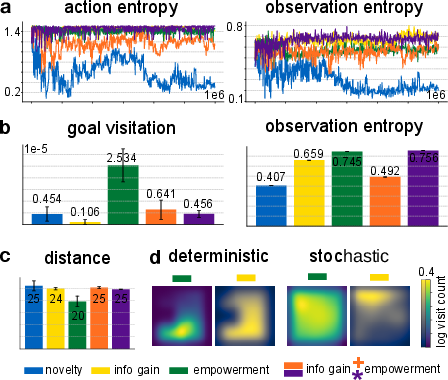

Figure 3: Exploration patterns for the Dreamer agent illustrate adaptive strategies under intrinsic motivation influences.

Results and Findings

The results indicate that agents driven solely by information gain engage thoroughly with their environments but risk harmful exploration paths, such as entering high-risk states like lava. Agents focused on empowerment, by contrast, explore more conservatively, so neither motivation alone avoids every exploration pitfall. Composite strategies that combine information gain and empowerment perform well in environments that require careful navigation.
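The conservative behavior of empowerment-driven agents can be illustrated with one-step empowerment, commonly defined as the channel capacity between the agent's actions and the resulting next states. The sketch below estimates it with Blahut-Arimoto iteration. The function name `empowerment_bits`, the four-action layout, the treatment of lava as an absorbing tile, and the slip probabilities on ice are assumptions for illustration rather than details from the paper.

```python
import math


def empowerment_bits(transitions, iters=100):
    """One-step empowerment in bits: max over p(a) of I(A; S').

    transitions[a][s2] holds p(s2 | s, a) for one fixed current state s.
    """
    n_actions = len(transitions)
    n_next = len(transitions[0])
    p_a = [1.0 / n_actions] * n_actions

    def divergences(p_a):
        # Marginal over next states under the current action distribution.
        marg = [sum(p_a[a] * transitions[a][s2] for a in range(n_actions))
                for s2 in range(n_next)]
        # KL(p(.|a) || marginal) for every action, in nats.
        return [sum(p * math.log(p / marg[s2])
                    for s2, p in enumerate(row) if p > 0)
                for row in transitions]

    for _ in range(iters):
        d = divergences(p_a)
        weights = [p * math.exp(k) for p, k in zip(p_a, d)]
        total = sum(weights)
        p_a = [w / total for w in weights]

    d = divergences(p_a)
    return sum(p * k for p, k in zip(p_a, d)) / math.log(2)


# Ordinary floor: each of four actions reaches its own neighbor for certain.
solid = [[1.0 if s2 == a else 0.0 for s2 in range(4)] for a in range(4)]
# Lava, assumed absorbing: every action leads to the same outcome.
lava = [[1.0, 0.0, 0.0, 0.0] for _ in range(4)]
# Ice, assumed slippery: intended move with 0.4, each other move with 0.2.
ice = [[0.4 if s2 == a else 0.2 for s2 in range(4)] for a in range(4)]

values = {name: empowerment_bits(t)
          for name, t in [("solid", solid), ("lava", lava), ("ice", ice)]}
```

Under these assumptions the ordinary tile offers the most control, ice offers far less, and lava offers none, so an agent that maximizes empowerment is steered away from exactly the states that an information-seeking agent may find attractive.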

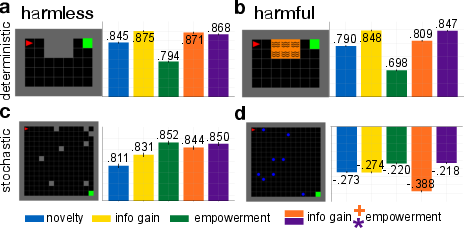

Figure 4: Generalization performance in unary-state grid worlds highlights the differential impacts of intrinsic motivations across varied environments.

The empirical findings indicate that environments with predictable dynamics allow both curiosity and competence to manifest robustly, while stochastic environments challenge purely information-driven agents. Agents with hybrid motivations show improved resilience in tasks that demand a balance between exploration and control.

Figure 5: Examination of the Dreamer agent's exploration patterns during pretraining showcases the intrinsic motivation impacts on discovery and learning.

Conclusion

"From Curiosity to Competence" offers insights into the interplay of intrinsic motivations and state representations within RL frameworks. The research underscores the complementary nature of curiosity and competence and advocates hybrid approaches to agent exploration and learning. Future research may test whether these findings scale to more complex, real-world scenarios and investigate how to tune the weighting of intrinsic motivations adaptively to environmental complexity. Such work could refine RL agent design and support autonomous systems that are better equipped to handle diverse and unpredictable real-world tasks.