- The paper introduces a systematic data poisoning attack that targets the retrieval stage in KG-RAG systems.

- It demonstrates significant performance drops, including a 46% F1 decrease in some models, using stealthy insertion-only strategies.

- The study underscores the need for robust countermeasures by comparing vulnerabilities across open-source and closed-source language models.

RAG Safety: Exploring Knowledge Poisoning Attacks to Retrieval-Augmented Generation

Introduction

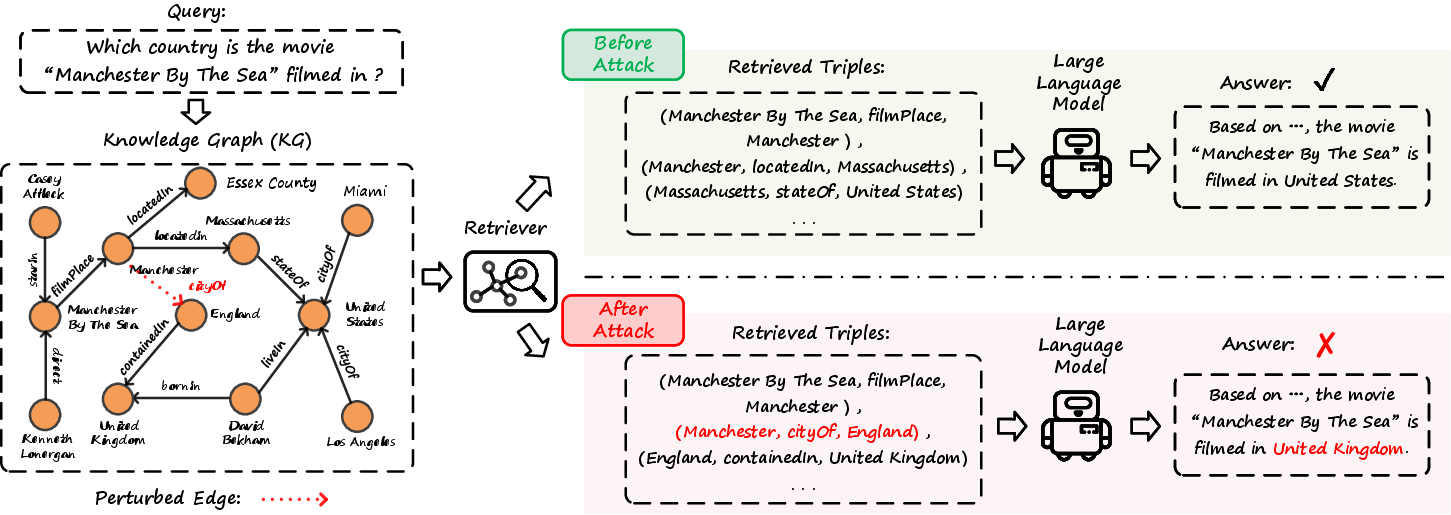

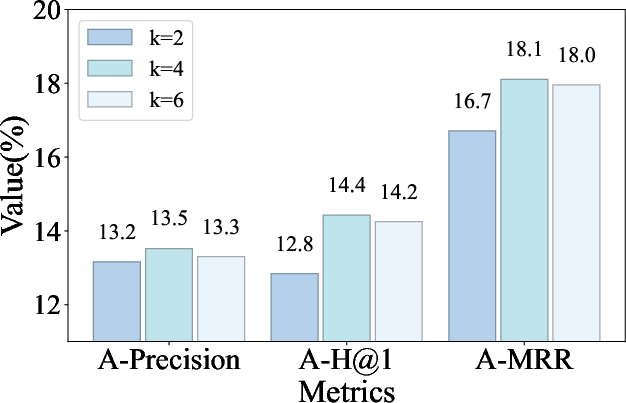

The paper "RAG Safety: Exploring Knowledge Poisoning Attacks to Retrieval-Augmented Generation" examines the vulnerabilities of Retrieval-Augmented Generation (RAG) systems, focusing on knowledge-graph-based RAG (KG-RAG). RAG systems are built to improve the factual grounding of LLMs by incorporating external knowledge sources. The paper argues that although RAG improves LLM performance in many settings, systems that retrieve from knowledge graphs (KGs) are susceptible to data poisoning attacks. Such attacks inject adversarial information into the KG and steer the system toward erroneous responses. The study explores these vulnerabilities systematically and proposes a novel attack framework.

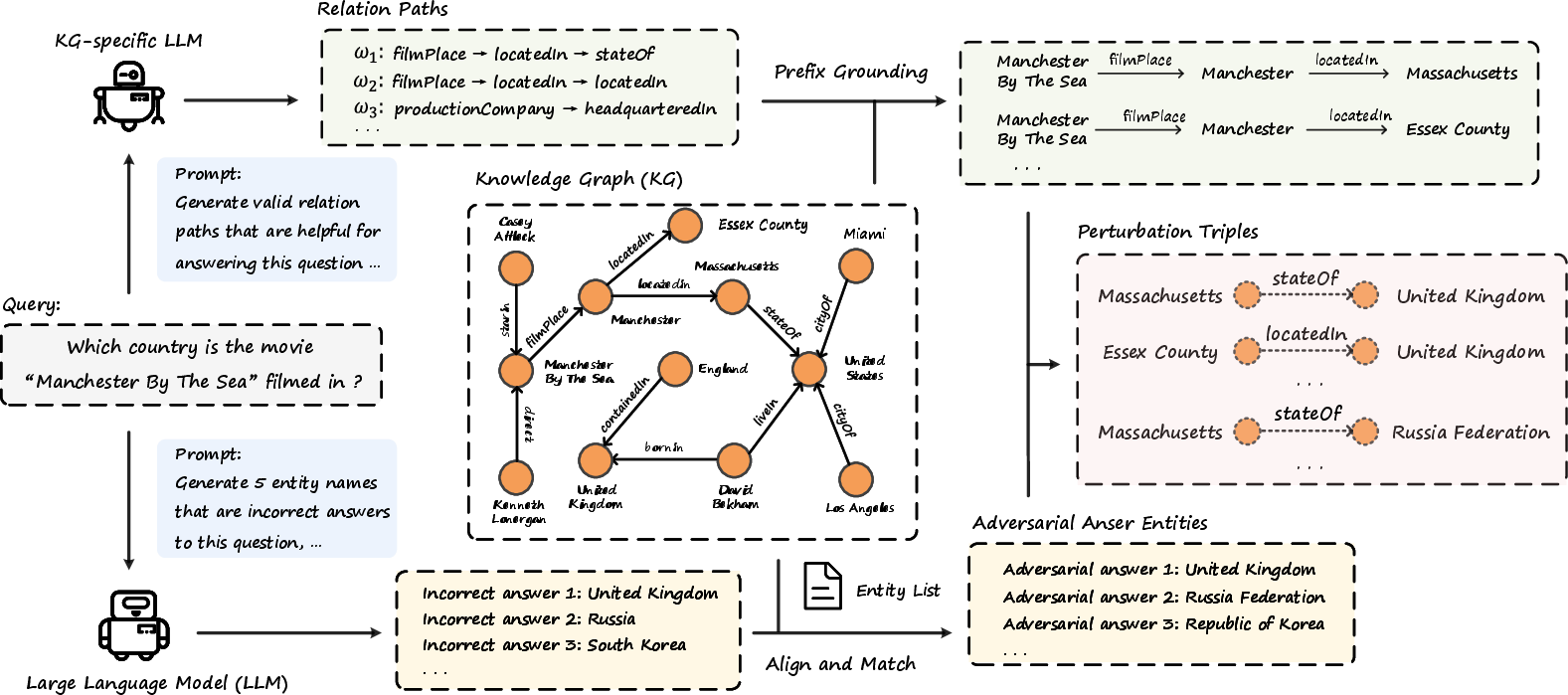

Figure 1: Illustration of KG-RAG pipelines before and after data poisoning attack.

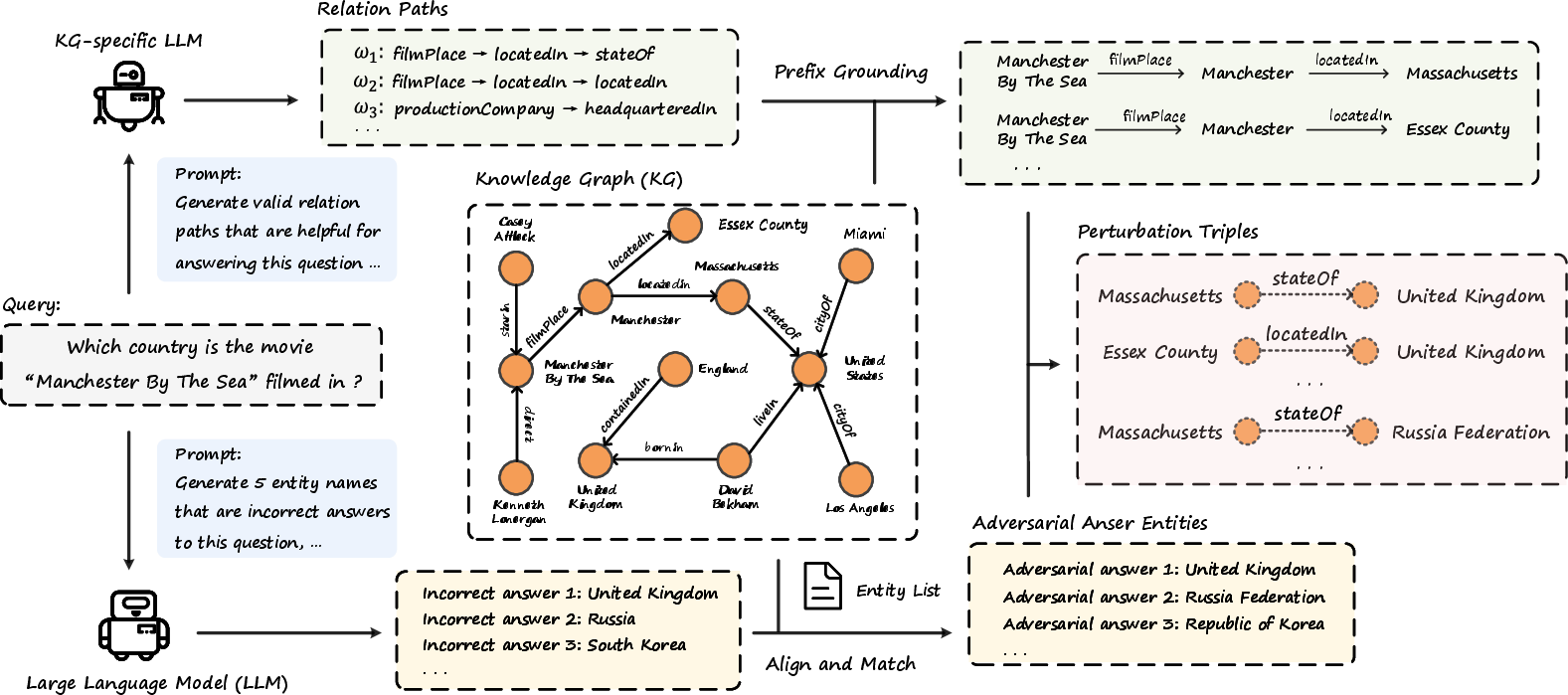

Proposed Data Poisoning Attack Strategy

To exploit the vulnerabilities of KG-RAG systems, the authors propose an attack strategy that emphasizes realistic and stealthy conditions, aligning with real-world KG-RAG implementations. This attack employs an insertion-only strategy in a black-box setting, where the attacker has no access to the internal parameters of the KG-RAG system but can insert perturbation triples into the KG. These triples form misleading inference chains that the system relies upon during response generation.
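The insertion-only constraint can be illustrated with a minimal sketch. It assumes the KG is represented as a set of (head, relation, tail) tuples; the function name `insert_perturbations`, the `budget` parameter, and the toy entities are hypothetical and are not taken from the paper.

```python
from typing import Iterable, Set, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


def insert_perturbations(
    kg: Set[Triple], candidates: Iterable[Triple], budget: int
) -> Set[Triple]:
    """Return a poisoned copy of `kg` with at most `budget` new triples.

    Insertion-only: existing triples are never removed or modified.
    """
    poisoned = set(kg)
    added = 0
    for triple in candidates:
        if added >= budget:
            break
        if triple not in poisoned:  # duplicates do not consume budget
            poisoned.add(triple)
            added += 1
    return poisoned


# Toy example with placeholder entities (illustrative only).
clean_kg = {("Q", "r1", "A"), ("A", "r2", "B")}
perturbations = [("Q", "r1", "A_adv"), ("A_adv", "r2", "B"), ("Q", "r3", "C")]
poisoned_kg = insert_perturbations(clean_kg, perturbations, budget=2)
```

Because the function only adds to a copy, every original triple survives in the poisoned graph, which is the property that makes this kind of attack hard to spot by checking for missing or altered facts.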

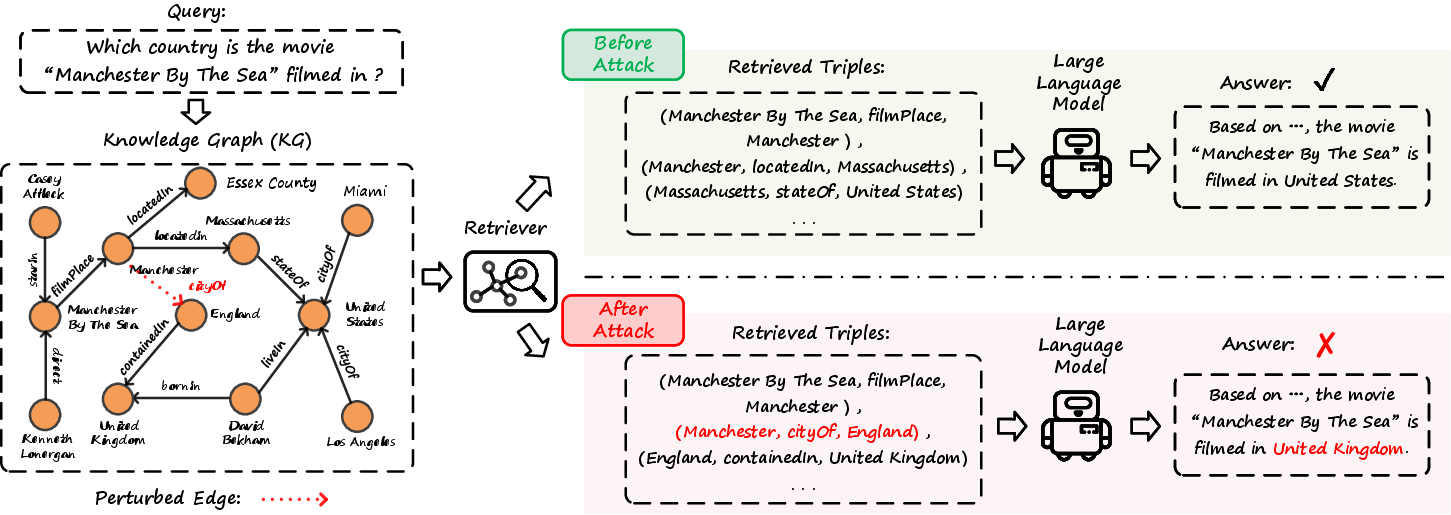

Adversarial Answer Generation: The attack first prompts an LLM to generate plausible yet incorrect answers (Figure 2). These adversarial answers are chosen to fit seamlessly within the existing KG structure, which raises the probability that they are retrieved during the RAG process without making the insertions conspicuous.

Figure 2: Prompt template for generating adversarial answers.

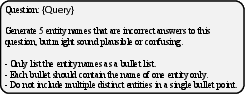

Relation Path Extraction and Instantiation: Using KG-specific LLMs, relation paths are extracted and then instantiated so that each adversarial answer is grounded in a plausible reasoning path within the existing KG (Figure 3). These steps yield the adversarial triples, constructed within the allowed modification budget.

Figure 3: Prompt template for relation paths.
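Instantiating a relation path can be sketched roughly as follows. This is an assumption-laden illustration: it takes a topic entity, an ordered list of relations, and an adversarial answer, and chains them into triples. The helper `instantiate_path` and the placeholder naming of intermediate nodes are hypothetical; the paper's own procedure for choosing intermediate entities is not described in this summary.

```python
from typing import List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


def instantiate_path(
    topic_entity: str,
    relation_path: List[str],
    adversarial_answer: str,
    prefix: str = "adv_node",
) -> List[Triple]:
    """Chain the relations into triples that end at the adversarial answer.

    Intermediate nodes get placeholder names here (an assumption); a real
    attack would need entities that look plausible in the target KG.
    """
    if not relation_path:
        raise ValueError("relation_path must contain at least one relation")
    triples: List[Triple] = []
    head = topic_entity
    last = len(relation_path) - 1
    for i, relation in enumerate(relation_path):
        tail = adversarial_answer if i == last else f"{prefix}_{i}"
        triples.append((head, relation, tail))
        head = tail  # the next hop starts where this one ended
    return triples


# Two-hop toy path with placeholder names (illustrative only).
path_triples = instantiate_path("E", ["r1", "r2"], "X")
```

A one-hop path produces a single triple linking the topic entity directly to the adversarial answer, so the number of inserted triples grows with path length and counts against the modification budget.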

Evaluation and Results

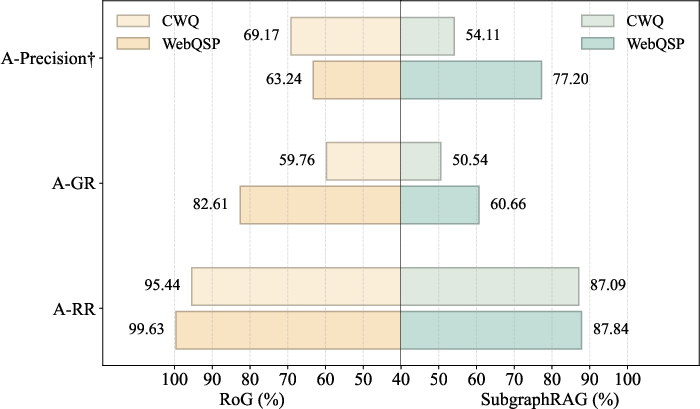

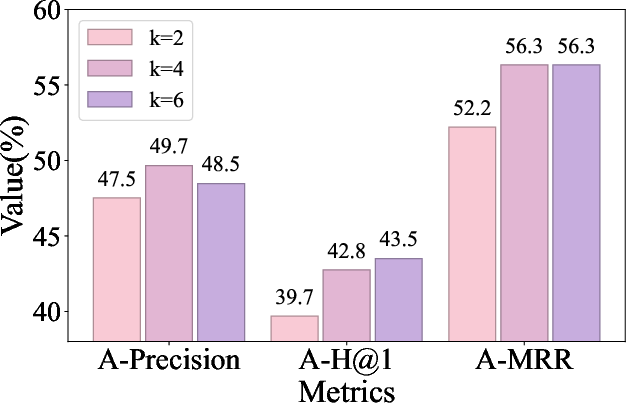

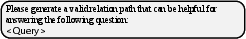

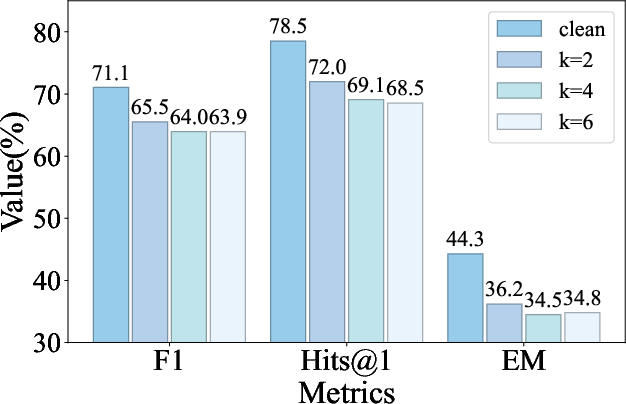

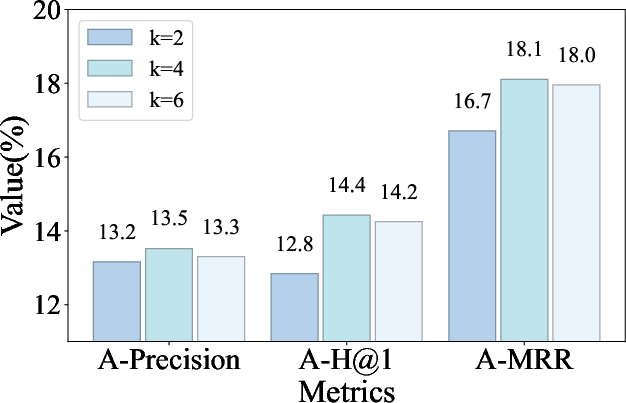

The attack is evaluated on two KGQA benchmarks: WebQuestionsSP (WebQSP) and Complex WebQuestions (CWQ). The authors use Hit, Precision, Recall, F1, Hits@1, and Exact Match (EM) to measure the degradation in QA performance, and introduce new metrics such as A-Precision, A-H@1, and A-MRR to measure how often the adversarial answers are actually produced.
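As a rough guide to how such metrics are commonly computed, the sketch below gives set-based precision, recall, and F1, plus Hits@1 and reciprocal rank over a ranked answer list. These are standard textbook definitions and may differ in detail from the paper's. Treating the adversarial-side metrics as the same computations with the adversarial answers as targets is an assumption; the summary does not give their formulas.

```python
from typing import Iterable, List, Tuple


def precision_recall_f1(
    predicted: Iterable[str], gold: Iterable[str]
) -> Tuple[float, float, float]:
    """Set-based precision, recall, and F1 for one question."""
    pred, ref = set(predicted), set(gold)
    overlap = len(pred & ref)
    precision = overlap / len(pred) if pred else 0.0
    recall = overlap / len(ref) if ref else 0.0
    if overlap == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)


def hits_at_1(ranked: List[str], targets: Iterable[str]) -> float:
    """1.0 if the top-ranked answer is one of the targets, else 0.0."""
    return 1.0 if ranked and ranked[0] in set(targets) else 0.0


def reciprocal_rank(ranked: List[str], targets: Iterable[str]) -> float:
    """1 / rank of the first target in the ranking, or 0.0 if absent."""
    target_set = set(targets)
    for rank, answer in enumerate(ranked, start=1):
        if answer in target_set:
            return 1.0 / rank
    return 0.0
```

Averaging `reciprocal_rank` over all questions gives a mean reciprocal rank; passing adversarial answers as `targets` instead of gold answers turns each function into a measure of attack success rather than QA quality.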

Figure 4: The overall framework of our proposed data poisoning attack against KG-RAG.

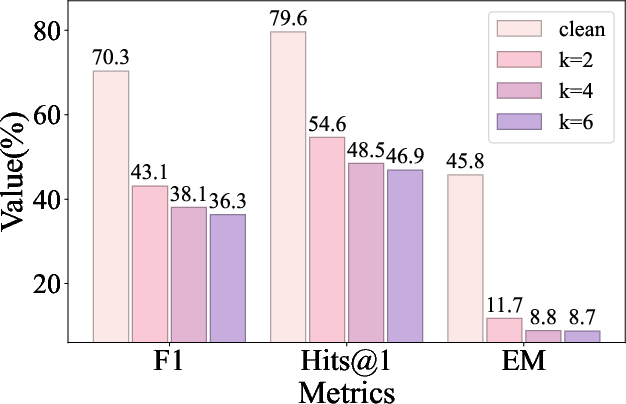

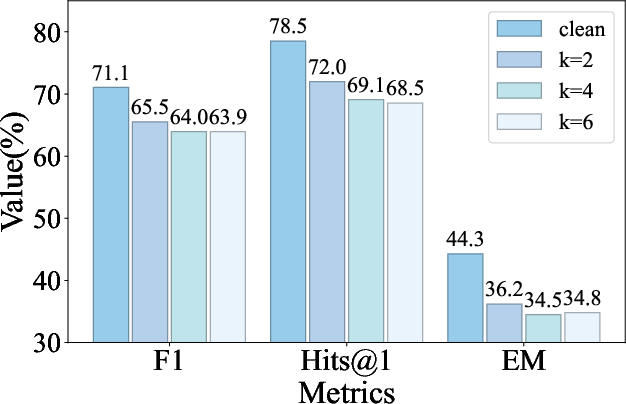

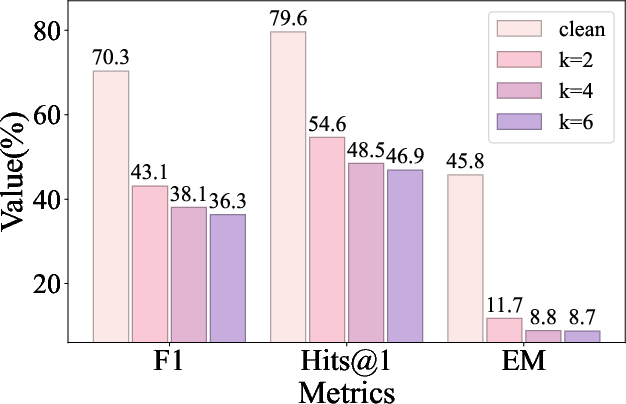

Results show significant degradation across four representative KG-RAG methods: RoG, GCR, G-Retriever, and SubgraphRAG. Under attack, RoG showed a 46% relative decrease in F1 score on WebQSP and SubgraphRAG a 25% decrease, evidence of their fragility under adversarial influence (Table 1). The other methods also suffered substantial performance drops, to differing extents.
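A relative decrease is measured against the clean score rather than in absolute points. The short sketch below shows the arithmetic; the scores in the example are hypothetical values chosen for illustration and are not results from the paper.

```python
def relative_decrease(clean_score: float, attacked_score: float) -> float:
    """Fraction of the clean score lost under attack."""
    if clean_score <= 0:
        raise ValueError("clean_score must be positive")
    return (clean_score - attacked_score) / clean_score


# Hypothetical scores (NOT from the paper): F1 falls from 0.80 to 0.40,
# a drop of 40 absolute points and half of the clean score.
example_drop = relative_decrease(0.80, 0.40)
```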

Analysis of Attack Impact

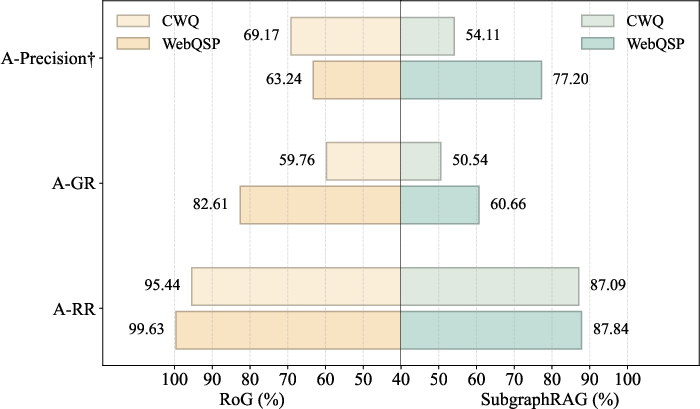

The study finds that KG-RAG methods are compromised primarily at the retrieval stage, where injected perturbation triples are often selected as relevant evidence (Figure 5). Because the generator trusts the retrieved results, the adversarial influence carries over into the generation phase.

Figure 5: Attack impact on different stages of KG-RAG systems.
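One simple way to quantify retrieval-stage contamination is the share of retrieved triples that came from the attacker. The sketch below is an illustrative diagnostic under that assumption; `poisoned_retrieval_rate` is a hypothetical helper and not a metric defined in the paper.

```python
from typing import Iterable, List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


def poisoned_retrieval_rate(
    retrieved: List[Triple], injected: Iterable[Triple]
) -> float:
    """Fraction of retrieved triples that were injected by the attacker."""
    if not retrieved:
        return 0.0
    injected_set = set(injected)
    hits = sum(1 for triple in retrieved if triple in injected_set)
    return hits / len(retrieved)


# Toy example with placeholder triples (illustrative only).
retrieved_triples = [("Q", "r1", "A"), ("Q", "r1", "A_adv"), ("A_adv", "r2", "B")]
injected_triples = [("Q", "r1", "A_adv"), ("A_adv", "r2", "B"), ("Q", "r3", "C")]
rate = poisoned_retrieval_rate(retrieved_triples, injected_triples)
```

A high rate on a question means most of the generator's evidence is adversarial before it writes a single token, which is consistent with the observation that the damage originates in retrieval.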

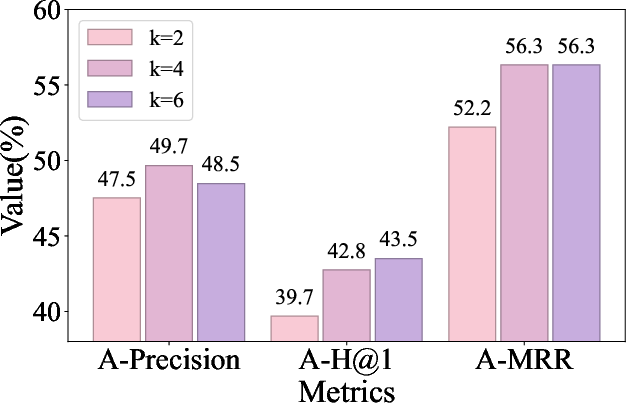

Moreover, the study shows that larger poisoning scales produce stronger adversarial effects, though with diminishing returns as the number of inserted triples grows (Figure 6). This points to a trade-off between attack effectiveness and stealthiness.

Figure 6: Attack effectiveness across increasing poisoning scales.

A comparison of different LLMs used as the KG-RAG generator shows that open-source models are more vulnerable than closed-source and KG-specific models. Closed-source models such as GPT-3.5-turbo and GPT-4, along with the Mixture-of-Experts model DeepSeek-V3-0324, proved more robust than their open-source counterparts (Table 3).

Conclusion

The paper offers a first examination of how susceptible KG-RAG systems are to data poisoning attacks and shows that they are severely vulnerable. The proposed attack degrades performance across benchmark datasets and KG-RAG implementations, which indicates that the risks of relying on KGs are widespread. Future work could explore broader attack strategies and develop robust countermeasures that harden KG-RAG methods against such attacks. This work lays a foundation for further research on safety measures in RAG systems.