- The paper proposes a novel spherical convolution technique that reprojects kernels onto the sphere to eliminate distortions in panoramic images.

- The method integrates spherical sampling with conventional pre-trained models, boosting semantic segmentation accuracy without extra computational cost.

- Experiments on the Stanford2D3D dataset demonstrate improved mIoU and mAcc metrics, highlighting robust performance across different image resolutions.

360-Degree Full-view Image Segmentation Using Spherical Convolution

Introduction

The advent of panoramic imaging technologies poses unique challenges for image processing, owing primarily to the distortion that appears near the edges of such images, especially in the regions corresponding to the poles. Conventional models trained on two-dimensional datasets struggle to handle this distortion without substantial computational overhead. This paper introduces a novel technique that combines spherical convolution with existing planar pre-trained models to address these challenges. Specifically, the method applies spherical sampling to panoramic images so that two-dimensional pre-trained networks can be integrated directly, improving panoramic semantic segmentation without additional computational burden.

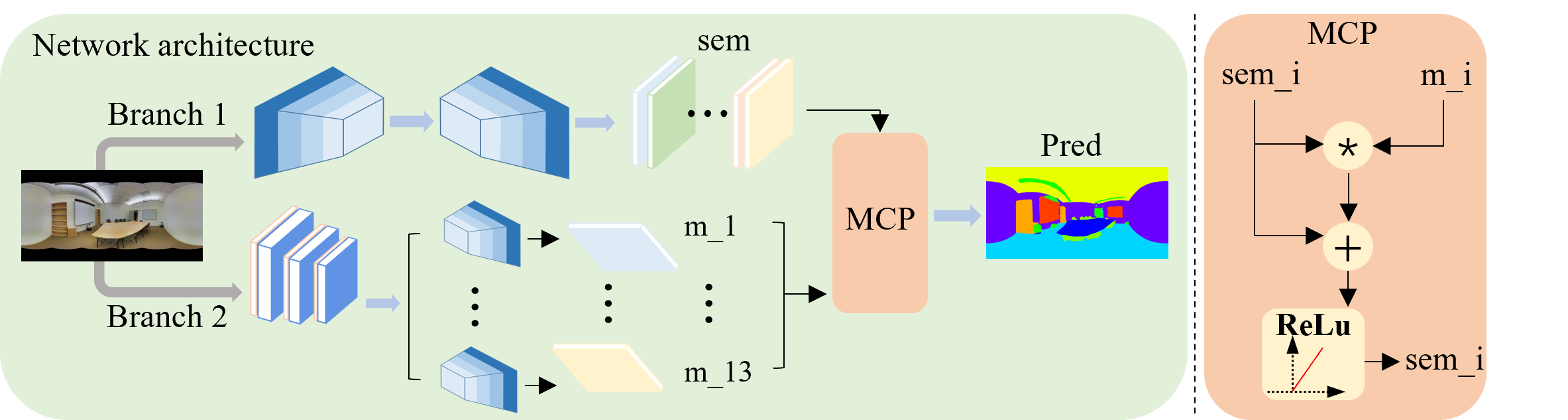

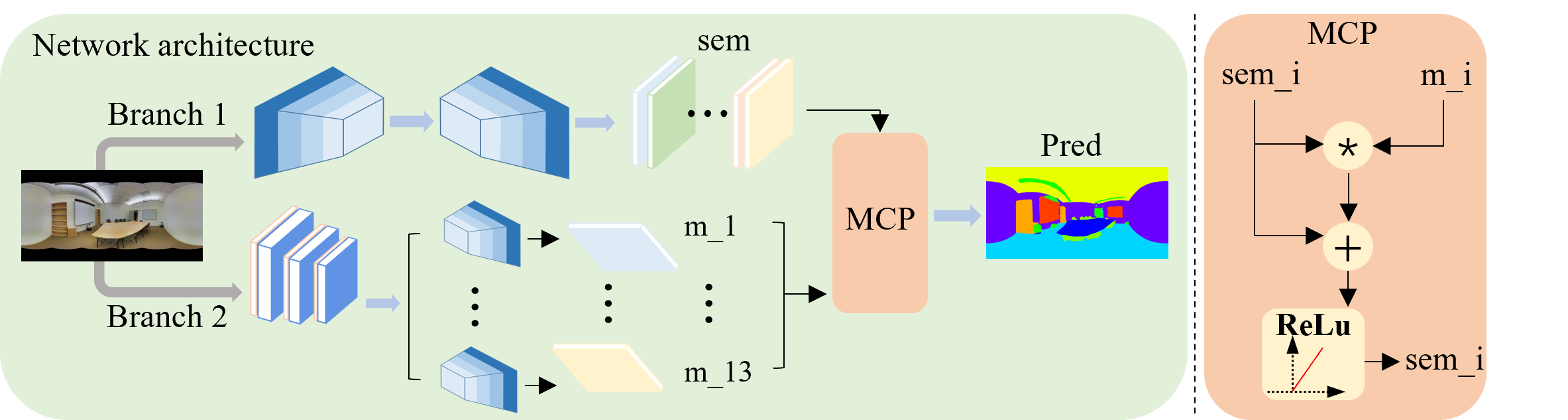

Figure 1: Network architecture.

Methodology

Spherical Convolution Implementation

Conventional approaches to distortion correction in panoramic images typically rely on complex fusion schemes or architectural modifications that add computational overhead. The proposed spherical convolution instead modifies the convolution operation itself by recalculating the kernel's sampling points according to spherical geometry. Whereas planar kernels sample a regular pixel grid, spherical kernels account for the sphere's curvature by aligning their sampling points with spherical coordinates. Because the kernel is adjusted according to spherical rules rather than supplemented with new layers, this approach eliminates distortion while reusing existing pre-trained models, avoiding the overhead of additional distortion-correction modules.
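To make the distortion concrete, the short Python sketch below measures how much a panorama stretches horizontally at each image row. It assumes an equirectangular (latitude-longitude) panorama with row 0 at the northern edge, which the text above does not state explicitly; the helper names `row_latitude`, `horizontal_stretch` and `spherical_kernel_width` are hypothetical and only illustrate why a fixed planar kernel covers very different areas of the scene at different latitudes.

```python
import math


def row_latitude(row: int, height: int) -> float:
    """Latitude in radians at the centre of an image row.

    Assumes an equirectangular panorama: row 0 is the northern edge and
    latitude runs linearly from +pi/2 (top) to -pi/2 (bottom).
    """
    return math.pi / 2 - (row + 0.5) * math.pi / height


def horizontal_stretch(row: int, height: int) -> float:
    """Horizontal stretch of a row relative to the equator.

    One pixel step in longitude spans an arc of cos(lat) * 2*pi / width on
    the unit sphere, so a fixed arc occupies 1 / cos(lat) times as many pixels.
    """
    return 1.0 / math.cos(row_latitude(row, height))


def spherical_kernel_width(row: int, height: int, planar_width: int = 3) -> float:
    """Pixel width a kernel would need in this row to cover the same arc
    that a planar kernel of `planar_width` pixels covers at the equator."""
    return planar_width * horizontal_stretch(row, height)
```

For a 512-row panorama the stretch is about 1 in the rows next to the equator but exceeds 300 in the top and bottom rows, so a planar 3x3 kernel there sees only a sliver of the scene horizontally.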

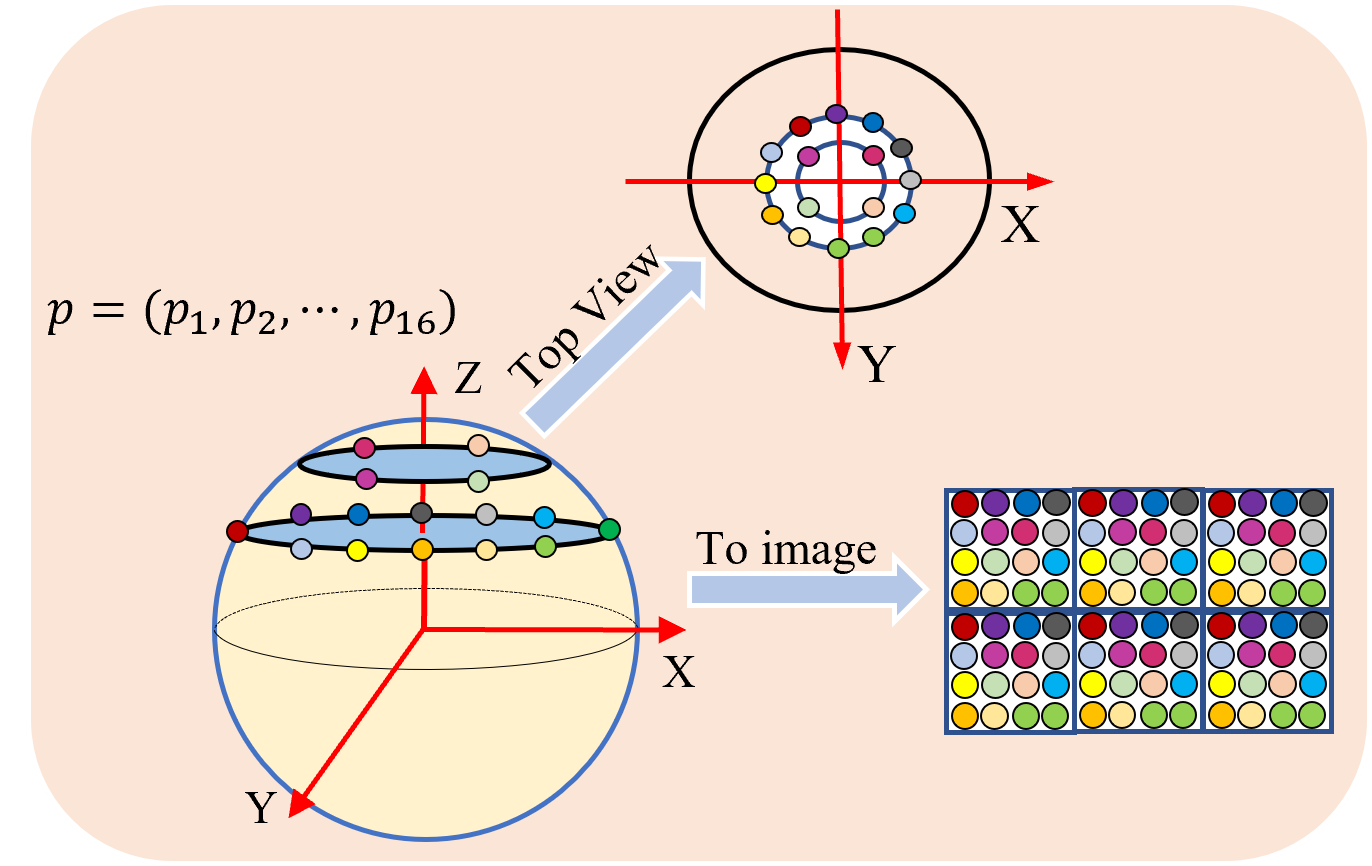

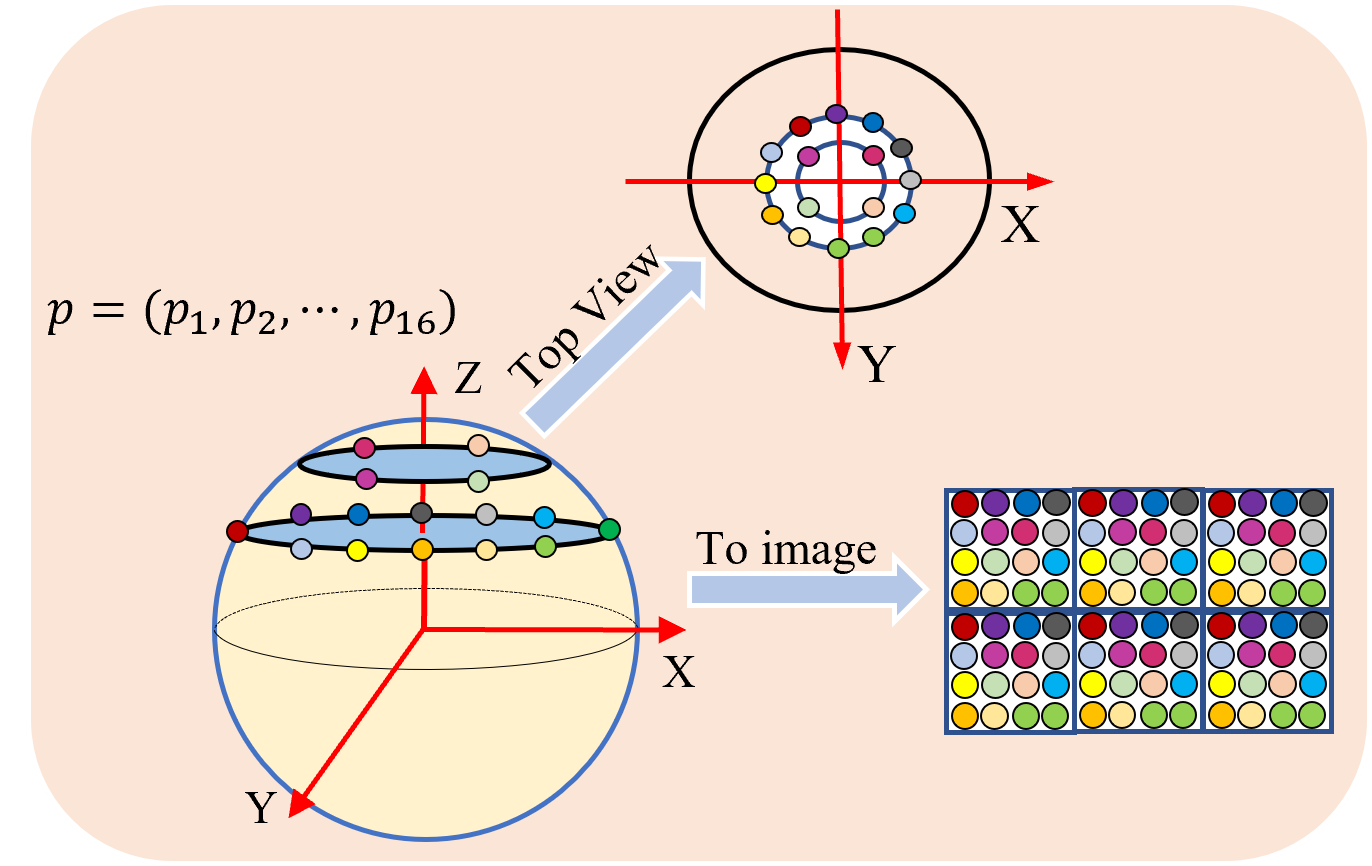

Figure 2: Spherical sampling.

In detail, our approach reprojects convolution kernels onto the sphere, recalculates pixel coordinates to correspond to spherical samples, and thus enables planar pre-trained models to efficiently handle spherical data.
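The reprojection step can be sketched as follows. This is a minimal Python illustration, assuming an equirectangular input and a tangent-plane (inverse gnomonic) kernel in the style of SphereNet; the paper's exact sampling rule is not given here, and `kernel_sample_locations` is a hypothetical name. For a kernel centred on a pixel, it places the k x k sampling points on the plane tangent to the sphere at that pixel, projects them back onto the sphere, and converts them to fractional pixel coordinates.

```python
import math


def kernel_sample_locations(row, col, height, width, k=3):
    """Return a k x k grid of fractional (row, col) pixel coordinates at which
    a spherical kernel centred on pixel (row, col) samples the panorama.

    Sketch assumptions: equirectangular image (row 0 = north edge) and
    tangent-plane offsets of one equatorial pixel step, as in SphereNet-style
    kernels. The paper's actual rule may differ.
    """
    # Angular size of one pixel step in longitude and latitude.
    d_lon = 2 * math.pi / width
    d_lat = math.pi / height
    # Spherical coordinates of the kernel centre.
    lat0 = math.pi / 2 - (row + 0.5) * d_lat
    lon0 = (col + 0.5) * d_lon - math.pi
    # Tangent point and local east/north unit vectors on the unit sphere.
    p0 = (math.cos(lat0) * math.cos(lon0),
          math.cos(lat0) * math.sin(lon0),
          math.sin(lat0))
    east = (-math.sin(lon0), math.cos(lon0), 0.0)
    north = (-math.sin(lat0) * math.cos(lon0),
             -math.sin(lat0) * math.sin(lon0),
             math.cos(lat0))
    half = k // 2
    grid = []
    for i in range(-half, half + 1):          # i > 0 moves down (south)
        out_row = []
        for j in range(-half, half + 1):      # j > 0 moves right (east)
            # Offsets of the sampling point on the tangent plane.
            x = j * math.tan(d_lon)
            y = -i * math.tan(d_lat)
            # Central (gnomonic) projection: the ray through p0 + x*east + y*north.
            px, py, pz = (p0[a] + x * east[a] + y * north[a] for a in range(3))
            lat = math.atan2(pz, math.hypot(px, py))
            lon = math.atan2(py, px)
            # Back to continuous pixel coordinates; longitude wraps around.
            r = (math.pi / 2 - lat) / d_lat - 0.5
            c = ((lon + math.pi) / d_lon - 0.5) % width
            out_row.append((r, c))
        grid.append(out_row)
    return grid
```

Near the equator neighbouring samples land about one pixel apart, while near the poles the same kernel spreads across many columns. One common way to reuse pre-trained planar weights with such locations is to pass their offsets from the regular 3x3 grid to a deformable convolution (for example `torchvision.ops.deform_conv2d`), which samples with bilinear interpolation; whether the authors implement it this way is not stated.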

Semantic Segmentation Enhancements

To improve semantic segmentation, the method adds a spherical branch that generates a heatmap for each semantic channel; these heatmaps serve as attention weights that emphasize the most informative image regions. Because each semantic category receives its own attention map, the network captures panoramic image features more accurately and robustly. The semantic features are split into per-category channels, and each channel is reweighted by attention derived from the spherical convolution features.

The resulting dual-path architecture combines the spherical branch with a conventional semantic segmentation network, which strengthens panoramic perception and improves segmentation accuracy.
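A minimal plain-Python sketch of the per-category attention, assuming that the spherical branch outputs one heatmap per semantic channel and that the heatmap is applied as a sigmoid gate on the conventional path's features. The residual form `feature * (1 + sigmoid(heatmap))` and the function names are illustrative assumptions, not the paper's stated formula.

```python
import math


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def apply_class_attention(semantic, heatmaps):
    """Reweight each semantic channel with its own spherical-branch heatmap.

    semantic, heatmaps: lists of C channels, each an H x W list of floats.
    Hypothetical formulation: attention = sigmoid(heatmap), applied
    residually as feature * (1 + attention) so no channel is zeroed out.
    """
    if len(semantic) != len(heatmaps):
        raise ValueError("need exactly one heatmap per semantic channel")
    out = []
    for feat, heat in zip(semantic, heatmaps):
        # Element-wise gating within one semantic channel.
        out.append([[f * (1.0 + sigmoid(h)) for f, h in zip(frow, hrow)]
                    for frow, hrow in zip(feat, heat)])
    return out
```

The residual gate only amplifies regions the heatmap marks as important, so a poorly trained heatmap cannot erase the conventional path's evidence for a class.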

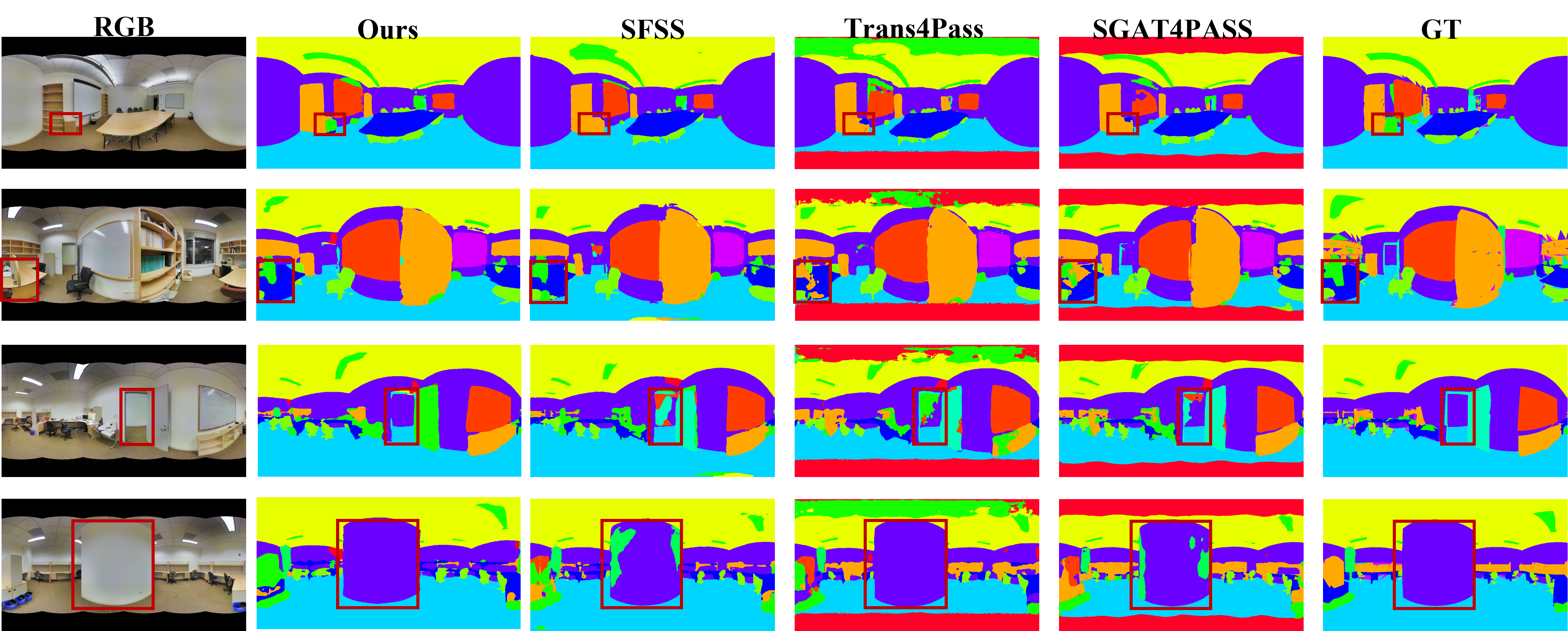

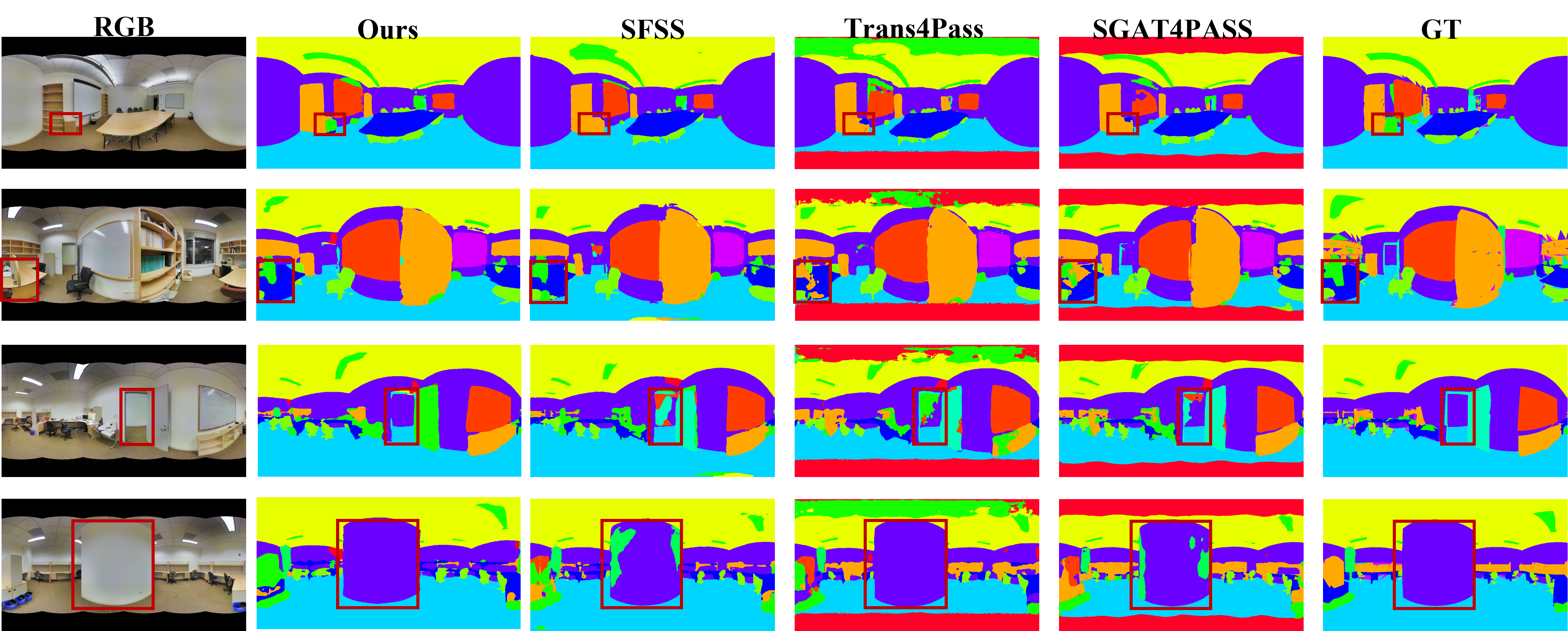

Figure 3: Segmentation results on the Stanford2D3D dataset.

Experimental Results

The method was evaluated on the Stanford2D3D dataset, where it improved segmentation accuracy at several different image resolutions.

Our method outperformed traditional methods on both mean Intersection over Union (mIoU) and mean accuracy (mAcc). In particular, it achieved higher mIoU than several state-of-the-art methods, showing that it handles panoramic distortion effectively without substantial changes to existing network architectures.
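For reference, both metrics can be computed from a pixel-level confusion matrix. The sketch below uses the standard definitions: per-class IoU = TP / (TP + FP + FN) and per-class accuracy = TP / (TP + FN), each averaged over classes. How the paper treats ignored labels or classes absent from a split is not stated, so the `ignore_index` parameter and skipping classes with an empty denominator are assumptions.

```python
def confusion_matrix(gt, pred, num_classes, ignore_index=None):
    """Count pixels: cm[g][p] = number of pixels with label g predicted as p."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for g, p in zip(gt, pred):
        if g == ignore_index:
            continue  # e.g. an "unknown" label excluded from evaluation
        cm[g][p] += 1
    return cm


def miou_macc(cm):
    """Return (mIoU, mAcc) averaged over classes with a non-empty denominator."""
    n = len(cm)
    ious, accs = [], []
    for c in range(n):
        tp = cm[c][c]
        gt_c = sum(cm[c])                          # pixels labelled c (TP + FN)
        pred_c = sum(cm[r][c] for r in range(n))   # pixels predicted c (TP + FP)
        union = gt_c + pred_c - tp                 # TP + FP + FN
        if union > 0:
            ious.append(tp / union)
        if gt_c > 0:
            accs.append(tp / gt_c)
    if not ious or not accs:
        raise ValueError("confusion matrix contains no evaluated pixels")
    return sum(ious) / len(ious), sum(accs) / len(accs)
```

For example, with labels `[0, 0, 1, 1, 2, 2]` and predictions `[0, 1, 1, 1, 2, 0]`, the per-class IoUs are 1/3, 2/3 and 1/2 (mIoU 0.5) and the per-class accuracies are 1/2, 1 and 1/2 (mAcc 2/3).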

Discussion and Ablation Studies

Ablation studies assessed the contribution of spherical convolution and the configuration of the semantic branch. They validated choosing the third layer, which gave the best segmentation results, and showed that attention-enhanced fusion outperforms traditional concatenation in the final segmentation.
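The two fusion options compared in the ablation can be contrasted on a single pixel's feature vectors. In the sketch below, `a` stands for the conventional path and `b` for the spherical path; the gated form, where a per-channel weight w = sigmoid(a + b) blends the two paths, is one hypothetical attention-based fusion and not necessarily the authors' design. Concatenation is shown for contrast.

```python
import math


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def concat_fuse(a, b):
    """Concatenation fusion: stacks two C-channel vectors into 2C channels,
    leaving a later learned layer to map them back to C."""
    return list(a) + list(b)


def attention_fuse(a, b):
    """Gated fusion (hypothetical): per channel, w = sigmoid(a + b) decides
    how much each path contributes; the output keeps C channels."""
    fused = []
    for x, y in zip(a, b):
        w = sigmoid(x + y)
        fused.append(w * x + (1.0 - w) * y)
    return fused
```

Concatenation doubles the channel count and defers all mixing to subsequent layers, whereas the gated fusion keeps C channels and produces, per channel, a convex combination of the two paths.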

Overall, the results support the premise that integrating spherical properties into existing convolutional networks substantially improves panoramic segmentation, across different image scales and despite the constraints of the underlying dataset.

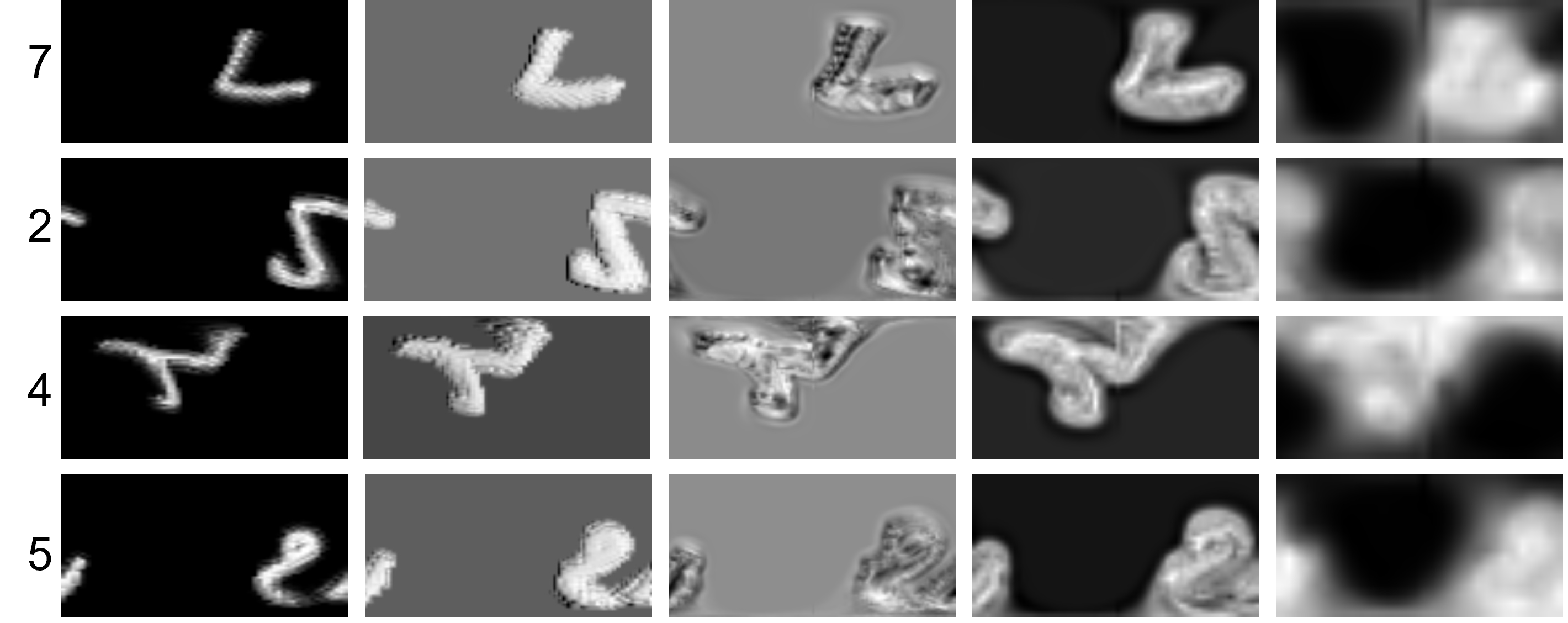

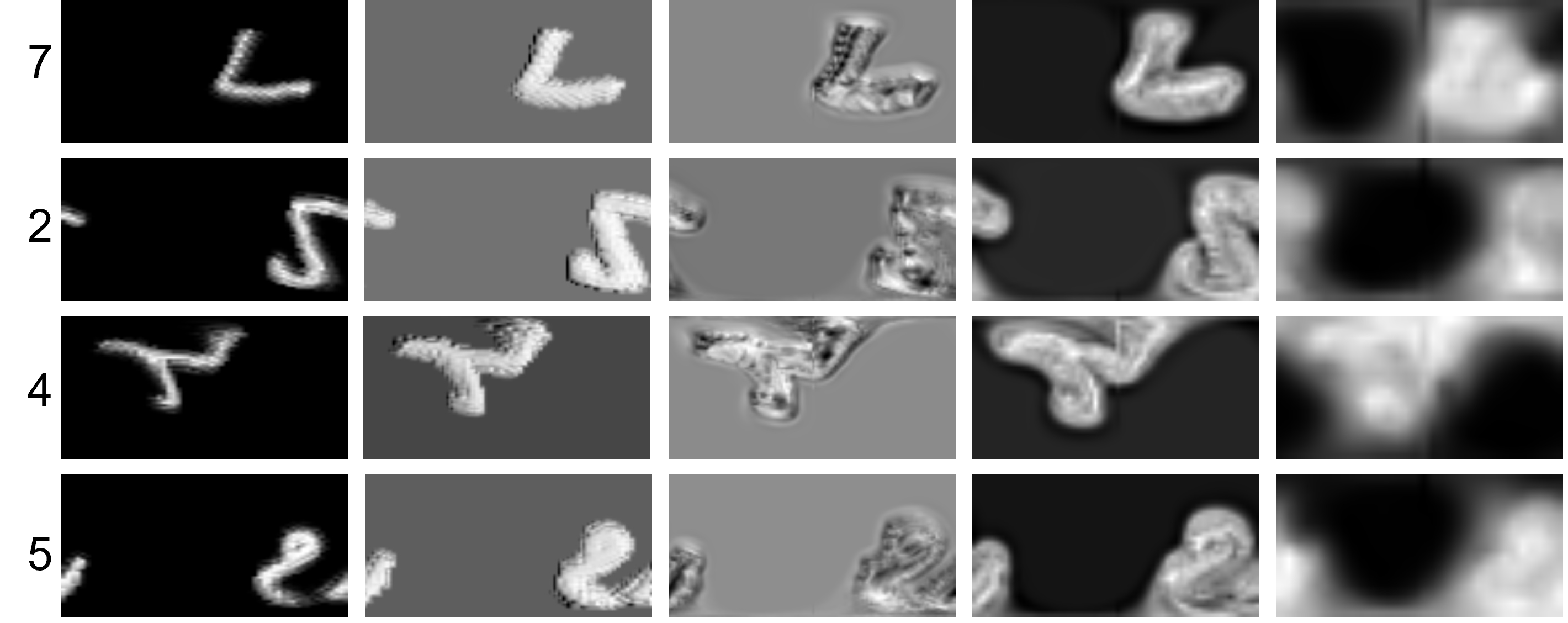

Figure 4: Visualization of pre-trained model convolutions. From left to right, the panels show features after successive downsampling convolutions.

Conclusion

This paper introduced a spherical sampling technique for panoramic image segmentation that is compatible with large-scale pre-trained planar models. The method mitigates distortion by recalibrating convolution kernels according to spherical geometry, improving semantic segmentation on panoramic images. Future work will refine the spherical kernel configuration, explore improved upsampling, and further address computational cost, strengthening the role of spherical convolution in panoramic image processing.