- The paper introduces a machine thinking framework that integrates mental imagery to simulate human cognitive processes for improved autonomous reasoning.

- It employs a modular design with Needs, Input Data, and Mental Imagery Units to transform sensory data into actionable, context-driven scenarios.

- Experimental evaluations, including image captioning and object detection, illustrate how the framework could connect standard AI components with human-like internal simulation.

Can Mental Imagery Improve the Thinking Capabilities of AI Systems?

This paper investigates the integration of mental imagery into AI systems to enhance autonomous reasoning capabilities. It proposes a "machine thinking" framework that mimics human cognitive processes by simulating mental images, thus enabling AI systems to perform tasks based on internal data, planned actions, needs, and reasoning processes.

Framework Overview

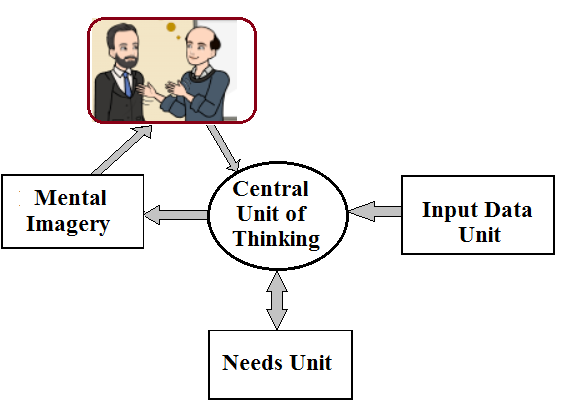

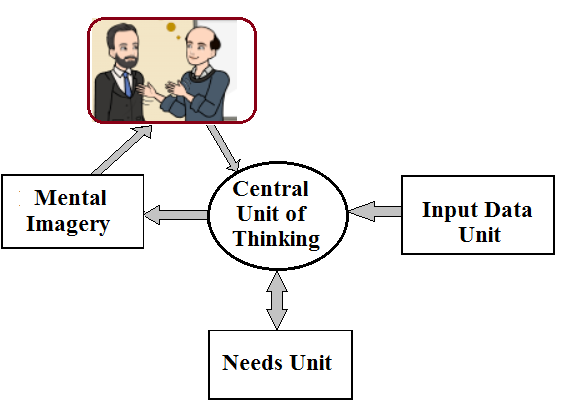

The proposed framework consists of a Cognitive Thinking Unit (CTU) supported by three auxiliary units: the Needs Unit, the Input Data Unit, and the Mental Imagery Unit. Each unit plays a distinct role:

- Needs Unit: Maintains internal motivations and scheduled actions based on reasoning outcomes.

- Input Data Unit: Converts sensory data into natural language descriptions using computer vision (CV) and natural language processing (NLP) models.

- Mental Imagery Unit: Generates and refines imagined images for reasoning simulations.

- Cognitive Thinking Unit (CTU): Synthesizes information from all units to simulate reasoning, plan actions, and generate contextual decisions.

Figure 1: (Left) Diagram of Machine Thinking algorithms. (Right) Output of Human Thinking.
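The division of labor among the four units can be sketched in a few lines of Python. This is only an illustrative skeleton, not the paper's implementation: every class, field, and method name below is hypothetical, the imagery unit stores text placeholders instead of generated images, and the `think` step simply pairs each need with each observation.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class NeedsUnit:
    """Holds internal motivations and actions scheduled by earlier reasoning."""
    needs: List[str] = field(default_factory=list)
    scheduled_actions: List[str] = field(default_factory=list)


@dataclass
class InputDataUnit:
    """Holds natural language descriptions of sensory input."""
    descriptions: List[str] = field(default_factory=list)


@dataclass
class MentalImageryUnit:
    """Holds imagined scenes, represented here as plain text placeholders."""
    imagined_scenes: List[str] = field(default_factory=list)

    def imagine(self, prompt: str) -> str:
        # Placeholder: a real unit would call an image generator here.
        scene = f"imagined scene for: {prompt}"
        self.imagined_scenes.append(scene)
        return scene


@dataclass
class CognitiveThinkingUnit:
    """Combines the three auxiliary units to plan actions (hypothetical logic)."""
    needs_unit: NeedsUnit
    input_unit: InputDataUnit
    imagery_unit: MentalImageryUnit

    def think(self) -> List[str]:
        planned = []
        for need in self.needs_unit.needs:
            for description in self.input_unit.descriptions:
                # Simulate the scenario before committing to an action.
                self.imagery_unit.imagine(f"{need} given {description}")
                planned.append(f"act on '{need}' in context '{description}'")
        # Planned actions flow back into the Needs Unit as scheduled actions.
        self.needs_unit.scheduled_actions.extend(planned)
        return planned


ctu = CognitiveThinkingUnit(
    NeedsUnit(needs=["find water"]),
    InputDataUnit(descriptions=["a glass on a table"]),
    MentalImageryUnit(),
)
actions = ctu.think()
```

The point of the sketch is the data flow: the CTU reads from all three units, uses the imagery unit to simulate a scenario, and writes its planned actions back to the Needs Unit.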

Role of Mental Imagery

Mental imagery, a critical aspect of human cognition, plays a pivotal role in the framework by enabling the simulation and prediction of scenarios within the CTU. Generating mental images lets the AI reason and make decisions proactively, rather than relying on the reactive responses typical of existing AI systems.

Human cognitive studies have shown that imagination and perception share overlapping neural substrates. Inspired by these insights, the framework leverages mental imagery to perform tasks such as generating hypotheses, simulating future events, and updating knowledge representations.

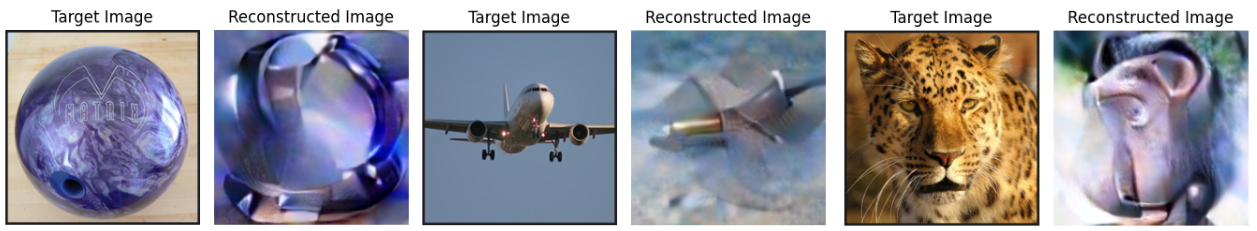

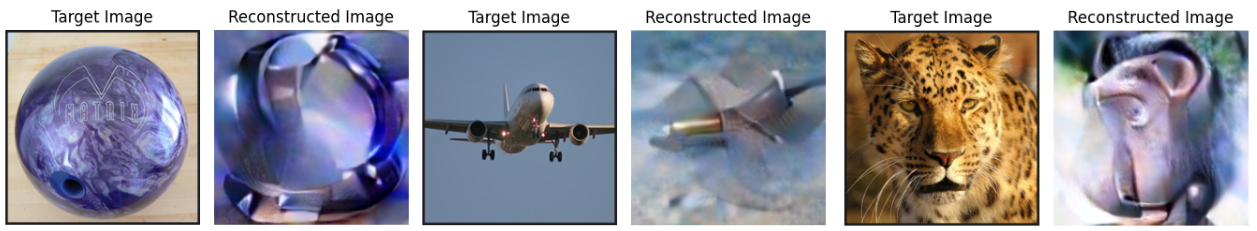

Figure 2: Reconstruction of imagined images from brain activity.

Experimental Evaluation

The paper presents experiments across multiple domains to validate the framework:

Image Captioning and Object Detection

The system combines Faster R-CNN for object detection with BLIP for image captioning to translate visual data into descriptive sentences. These sentences feed the needs-context matching process within the CTU.
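As a rough illustration of this conversion step, the sketch below merges a caption and a list of detections into one descriptive sentence. It does not run Faster R-CNN or BLIP: the caption string and the `Detection` records stand in for their outputs, and the `Detection` class, the `describe_scene` function, the 0.5 score threshold, and the output wording are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    """One detector output: a class label and its confidence score."""
    label: str
    score: float


def describe_scene(caption: str, detections: List[Detection],
                   min_score: float = 0.5) -> str:
    """Combine a caption with confident detections into one description."""
    labels: List[str] = []
    for det in detections:
        # Keep confident labels only, without duplicates, in detection order.
        if det.score >= min_score and det.label not in labels:
            labels.append(det.label)

    sentence = caption.strip().rstrip(".")
    sentence = sentence[:1].upper() + sentence[1:]
    if not labels:
        return sentence + "."
    return f"{sentence}. Detected objects: {', '.join(labels)}."


description = describe_scene(
    "a man riding a bicycle on a street",
    [Detection("person", 0.98), Detection("bicycle", 0.91), Detection("dog", 0.32)],
)
print(description)
# A man riding a bicycle on a street. Detected objects: person, bicycle.
```

The low-confidence "dog" detection is dropped, so only objects the detector is reasonably sure about reach the text handed to the CTU.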

Needs-Context Alignment

The CTU matches internal needs against the contextual sentences using a semantic similarity model such as SentenceTransformer. It then interprets the matched context to generate goal-oriented actions, and it reports its reasoning as natural language summaries.
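The matching step can be pictured as a nearest-neighbor search under cosine similarity. The sketch below is a stand-in, not the paper's code: to stay dependency-free it replaces sentence embeddings with simple word-count vectors, and the function names and the 0.2 threshold are assumptions. With a real sentence-embedding model, only `embed` would change.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts (stand-in for a sentence-embedding model)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def match_needs(needs: List[str], contexts: List[str],
                threshold: float = 0.2) -> Dict[str, Tuple[str, float]]:
    """Map each need to its most similar context, if similar enough."""
    matches: Dict[str, Tuple[str, float]] = {}
    for need in needs:
        need_vec = embed(need)
        best = max(contexts, key=lambda c: cosine(need_vec, embed(c)), default=None)
        if best is None:
            continue
        score = cosine(need_vec, embed(best))
        if score >= threshold:
            matches[need] = (best, score)
    return matches


matches = match_needs(
    ["drink water", "charge battery"],
    ["a glass of water on the table", "a dog sleeping on the sofa"],
)
```

Here "drink water" is matched to the sentence about the glass of water, while "charge battery" shares no words with either context and is left unmatched. Word overlap is a crude proxy; learned sentence embeddings would also capture paraphrases that share no vocabulary.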

Mental Image Generation

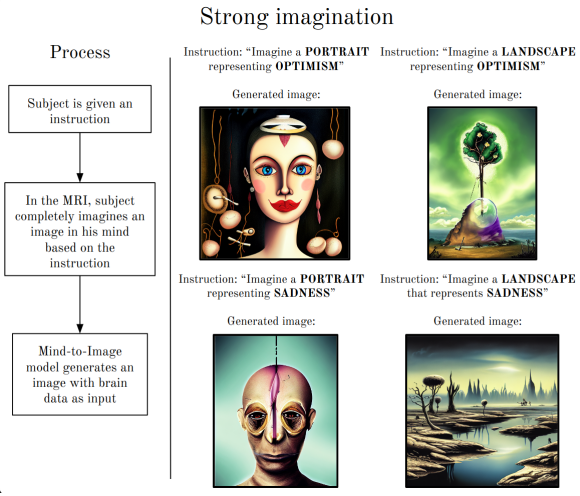

Mental imagery enables dynamic scenario simulations. For example, when presented with an event description, the mental imagery unit generates, visualizes, and examines a sequence of mental images, assisting the CTU in refining conclusions or formulating new actions.

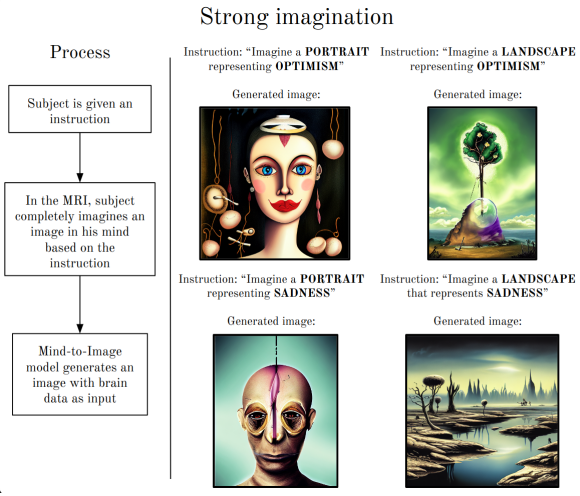

Figure 3: Results from strong imagination. The subject is given an instruction on the fMRI screen, such as "Imagine a portrait representing optimism". Then, the associated brain data is fed to the fMRI-to-Image model to produce a reconstruction.

Conclusion

Integrating mental imagery into a machine thinking framework gives an AI system internal simulations to draw on when making contextual decisions, which the paper presents as a pathway toward emulating human-like cognitive processes. Future directions include optimizing the integration of mental imagery for more nuanced tasks and further narrowing the gap between AI cognition and human intelligence.