- The paper presents the ARES framework, a probabilistic approach that assesses the soundness of each reasoning step by checking its claim against previously validated premises.

- It introduces an autoregressive method that sequentially validates base and derived claims, effectively mitigating error propagation in chain-of-thought processes.

- Empirical results indicate that ARES detects reasoning errors more reliably than traditional methods and, in practice, needs fewer samples than its theoretical bounds require, improving LLM reliability.

Probabilistic Soundness Guarantees in LLM Reasoning Chains

This essay discusses the paper "Probabilistic Soundness Guarantees in LLM Reasoning Chains," which introduces the Autoregressive Reasoning Entailment Stability (ARES) framework. ARES aims to improve the detection of errors that propagate through LLM reasoning chains by assessing each reasoning step probabilistically. The paper establishes a method that provides certified statistical guarantees of soundness for reasoning chains generated by LLMs.

Introduction

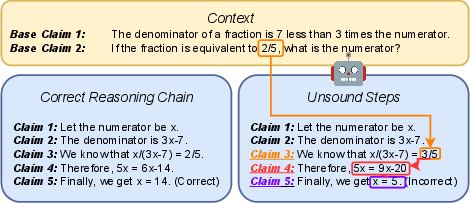

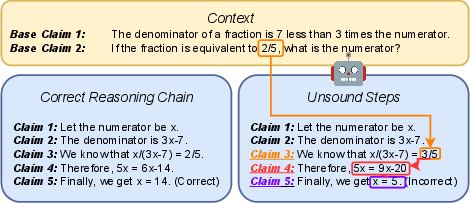

LLMs are increasingly applied in complex reasoning tasks across various domains, including medicine and scientific discovery. A persistent issue is the presence of errors in chain-of-thought processes, which diminishes the reliability of outputs. These errors can be ungrounded or logically invalid steps that compromise the final conclusions (Figure 1).

Figure 1: Faulty LLM reasoning caused by errors propagated from ungrounded and invalid steps. A step is unsound if it is ungrounded, is invalid, or contains propagated errors.

ARES addresses this issue by evaluating each reasoning step using only previously verified premises. Rather than assigning a binary label, it estimates a probability of soundness for each step, which gives a more graded signal for error detection.

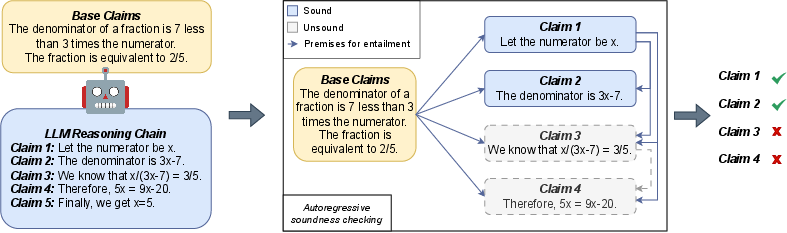

Autoregressive Soundness Checking

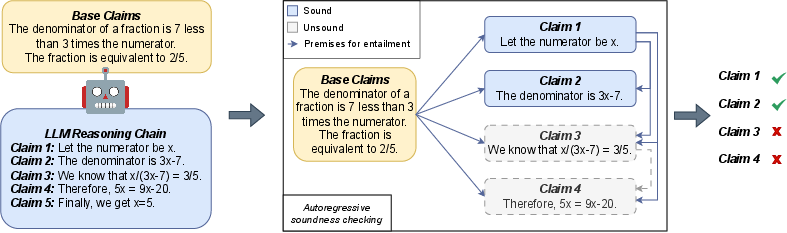

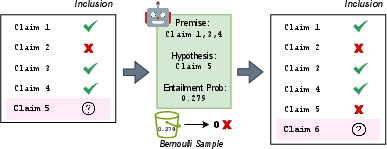

The ARES framework examines the reasoning chain generated by an LLM one claim at a time. It separates the chain into verified base claims and derived claims, then checks the soundness of each derived claim using only premises already judged sound (Figure 2).

Figure 2: Autoregressive soundness checking process, involving base and derived claims.

Traditional methods evaluate all steps simultaneously, so an earlier error can be treated as a valid premise and the steps that depend on it are misjudged. In contrast, ARES follows a human-inspired approach: it reviews claims iteratively and discounts unsound statements in subsequent evaluations.
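The iterative review described above can be illustrated with a minimal sketch. It uses a hard yes/no check rather than the probabilistic scoring ARES applies, and the names `check_chain`, `toy_entails`, and `SUPPORT` are hypothetical helpers introduced only for this example, not part of the paper.

```python
from typing import Callable, Dict, List, Sequence, Set

# Hypothetical checker signature: (premises, claim) -> True if the premises entail the claim.
EntailFn = Callable[[Sequence[str], str], bool]


def check_chain(
    base_claims: List[str],
    derived_claims: List[str],
    entails: EntailFn,
) -> List[bool]:
    """Check derived claims in order, using only claims already judged sound."""
    sound_premises = list(base_claims)  # base claims are assumed to be verified
    flags = []
    for claim in derived_claims:
        ok = entails(sound_premises, claim)
        flags.append(ok)
        if ok:
            # Only sound claims may serve as premises for later steps.
            sound_premises.append(claim)
    return flags


# Toy stand-in for an entailment model: each claim lists the claims it relies on.
SUPPORT: Dict[str, Set[str]] = {
    "b": {"a"},
    "c": {"z"},       # ungrounded: "z" was never established
    "d": {"c"},       # propagated error: relies on the unsound "c"
    "e": {"a", "b"},
}


def toy_entails(premises: Sequence[str], claim: str) -> bool:
    return SUPPORT[claim] <= set(premises)


flags = check_chain(["a"], ["b", "c", "d", "e"], toy_entails)
print(flags)  # [True, False, False, True]
```

Because `c` is rejected, it never enters the premise set, so `d` is rejected as well. A checker that judged `d` against all earlier steps at once would have accepted it.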

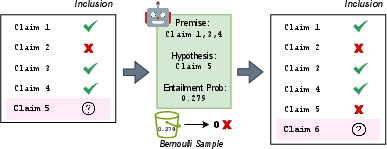

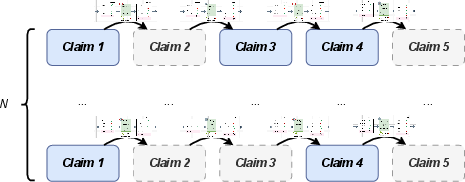

Probabilistic Entailment and Soundness

ARES formalizes the concepts of probabilistic entailment and soundness in reasoning chains. Each claim in the chain is verified step by step against previously sound claims, and a probabilistic entailment model is used to handle the inherent ambiguity of natural language (Figure 3).

Figure 3: Estimating ARES. The entailment rate of each derived claim is computed autoregressively.

The entailment model assesses the probability that a given set of premises entails a derived claim. If a step's entailment probability falls below a chosen threshold, the step is flagged as likely erroneous, which lets ARES identify errors in reasoning chains.
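One way to turn per-step entailment probabilities into soundness scores is to sample, over many draws, which earlier claims count as premises and average the resulting entailment probabilities. The sketch below is a simplified illustration under that assumption, not the paper's exact algorithm. The function `estimate_soundness`, the toy model `toy_entail_prob`, the values 0.9 and 0.1, and the 0.5 threshold are all hypothetical.

```python
import random
from typing import Callable, Dict, List, Sequence, Set

# Hypothetical model signature: (premises, claim) -> entailment probability in [0, 1].
EntailProb = Callable[[Sequence[str], str], float]


def estimate_soundness(
    base_claims: List[str],
    base_probs: List[float],
    derived_claims: List[str],
    entail_prob: EntailProb,
    n_samples: int = 2000,
    seed: int = 0,
) -> List[float]:
    """Monte Carlo estimate of a soundness score for each derived claim."""
    rng = random.Random(seed)
    totals = [0.0] * len(derived_claims)
    for _ in range(n_samples):
        # Draw which base claims count as sound premises in this sample.
        premises = [c for c, p in zip(base_claims, base_probs) if rng.random() < p]
        for i, claim in enumerate(derived_claims):
            p = entail_prob(premises, claim)
            totals[i] += p
            # The claim joins the premise set only with probability p.
            if rng.random() < p:
                premises.append(claim)
    return [t / n_samples for t in totals]


# Toy stand-in for an entailment model: each claim lists the claims it relies on.
SUPPORT: Dict[str, Set[str]] = {
    "b": {"a"},
    "c": {"z"},       # ungrounded: "z" was never established
    "d": {"c"},       # relies on the weakly supported "c"
    "e": {"a", "b"},
}


def toy_entail_prob(premises: Sequence[str], claim: str) -> float:
    # Assumed values: high probability when all support is present, low otherwise.
    return 0.9 if SUPPORT[claim] <= set(premises) else 0.1


derived = ["b", "c", "d", "e"]
scores = estimate_soundness(["a"], [1.0], derived, toy_entail_prob)
THRESHOLD = 0.5  # assumed cutoff, chosen only for illustration
flagged = [c for c, s in zip(derived, scores) if s < THRESHOLD]
print(dict(zip(derived, scores)), flagged)
```

With this toy model, `c` and `d` should fall below the threshold while `b` and `e` stay above it: `d` scores low because its only support, `c`, is rarely sampled into the premise set.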

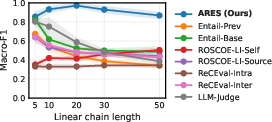

Implementation and Empirical Validation

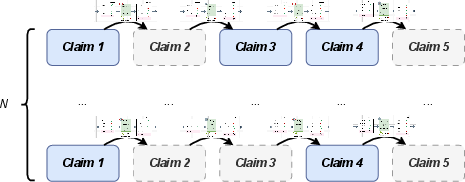

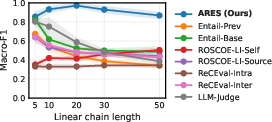

The ARES framework uses a computationally efficient algorithm to estimate entailment stability scores, ensuring practical applicability. The method's robustness and efficacy are validated through extensive experiments, revealing its superior performance in detecting errors across various benchmarks compared to existing methods (Figure 4).

Figure 4: On ClaimTrees, ARES with GPT-4o-mini robustly identifies error propagation in long reasoning chains, whereas other methods do not.

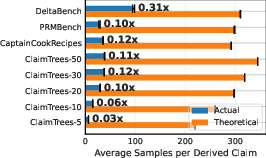

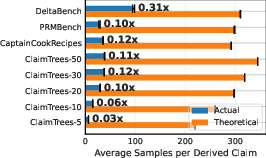

ARES estimates entailment rates by sampling, so its cost scales with the number of samples drawn. In practice, the number of samples needed is significantly lower than the theoretical bounds require (Figure 5).

Figure 5: ARES in practice uses significantly fewer samples than required by theoretical bounds.
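To give a sense of what a theoretical sample requirement can look like, the sketch below computes the sample size from the standard Hoeffding inequality with a union bound over claims. This is an assumption made for illustration: the summary does not state which bound the paper uses, and `hoeffding_samples` is a hypothetical helper.

```python
import math


def hoeffding_samples(epsilon: float, delta: float, n_claims: int = 1) -> int:
    """Samples needed so that every one of n_claims estimates is within
    epsilon of its true value with probability at least 1 - delta.

    Uses N >= ln(2 * n_claims / delta) / (2 * epsilon**2), which is
    Hoeffding's inequality for values in [0, 1] plus a union bound.
    """
    if not (0 < epsilon < 1) or not (0 < delta < 1) or n_claims < 1:
        raise ValueError("need 0 < epsilon < 1, 0 < delta < 1, n_claims >= 1")
    return math.ceil(math.log(2 * n_claims / delta) / (2 * epsilon ** 2))


print(hoeffding_samples(0.1, 0.05))      # 185
print(hoeffding_samples(0.1, 0.05, 10))  # 300
```

Such bounds hold for any distribution of values in [0, 1], so they are conservative, which is consistent with estimates stabilizing after fewer samples in practice.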

Conclusion

ARES advances error detection for LLM-generated reasoning chains. By applying probabilistic soundness checks, it identifies propagated errors that traditional methods fail to detect. The framework shows promising results for improving reliability and robustness in AI applications, and it establishes a systematic way to verify the soundness of reasoning chains with statistical guarantees. Future work can explore adaptations and improvements to the entailment models to extend ARES to broader contexts.