- The paper introduces an automated framework that replaces manual prompt engineering, enabling scalable and accessible LLM deployment.

- It presents a modular architecture integrating configuration, optimization, yield, and feedback components to streamline prompt refinement.

- The framework achieves competitive performance on benchmarks such as GSM8K and SQuAD_2 while reducing computational cost.

Promptomatix: An Automatic Prompt Optimization Framework for LLMs

Introduction

"Promptomatix: An Automatic Prompt Optimization Framework for LLMs" (2507.14241) addresses the significant challenges in manual prompt engineering for LLMs by introducing an automated framework. The paper targets issues such as scalability, accessibility, and unpredictability in prompt optimization. By automating prompt design, the framework democratizes advanced LLM capabilities, enabling efficient deployment across various domains without the need for deep technical expertise.

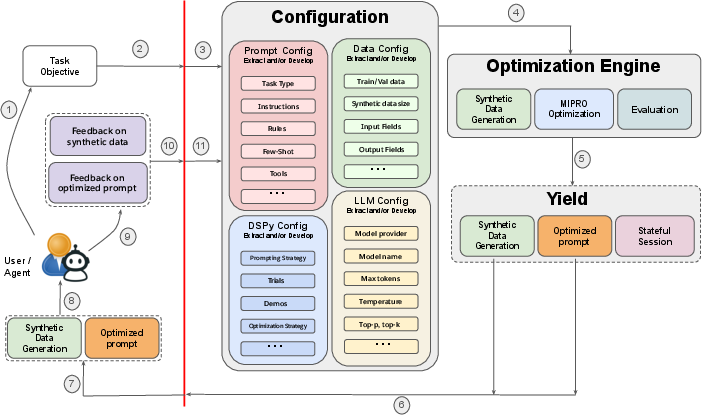

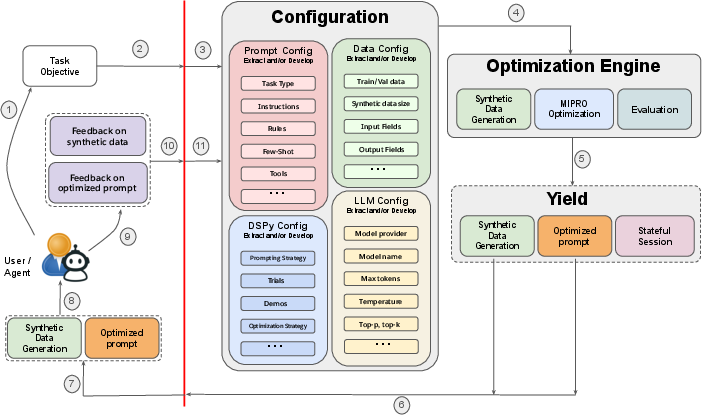

The Promptomatix framework incorporates a modular architecture that supports a range of optimizations, from basic to advanced prompting techniques, thereby making it an inclusive tool for both technical and non-technical users. The system comprises components for Configuration, Optimization, Yield, and Feedback, which collectively streamline prompt creation, optimization, and refinement.

Figure 1: Promptomatix System Architecture: The complete optimization pipeline showing Configuration, Optimization Engine, Yield, and Feedback components.

Framework Components

Configuration

The Configuration component automates initial setup by interpreting the task objective from minimal user input. It combines natural language understanding with model-based inference to establish task parameters such as task type, rules, and few-shot examples. Because these parameters are configured automatically, users do not need expertise in prompting techniques.
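To make the idea of inferring task parameters from a short description concrete, here is a minimal sketch. The `TaskConfig` class, the `infer_config` function, and the keyword table are all hypothetical names introduced for illustration; the keyword matching is only a stand-in for the model-based interpretation described above, not the framework's actual logic.

```python
from dataclasses import dataclass, field


@dataclass
class TaskConfig:
    """Hypothetical container for parameters the Configuration step infers."""
    task_type: str
    rules: list[str] = field(default_factory=list)
    few_shot_examples: list[tuple[str, str]] = field(default_factory=list)


# Assumption: a keyword table stands in for model-based task interpretation.
_KEYWORDS = {
    "summar": "summarization",
    "classif": "classification",
    "translat": "translation",
    "answer": "question_answering",
}


def infer_config(description: str) -> TaskConfig:
    """Derive a task type and explicit rules from a free-text description."""
    text = description.lower()
    # First matching keyword decides the task type; fall back to "generation".
    task_type = next((t for k, t in _KEYWORDS.items() if k in text), "generation")
    # Treat any sentence containing "must" or "should" as a user-stated rule.
    rules = [
        s.strip()
        for s in description.split(".")
        if any(w in s.lower() for w in ("must", "should"))
    ]
    return TaskConfig(task_type=task_type, rules=rules)


cfg = infer_config("Summarize news articles. The summary must be under 50 words.")
print(cfg.task_type, cfg.rules)
```

In a real system the heuristic would be replaced by a call to a language model, but the output shape (task type, rules, examples) is what downstream optimization would consume.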

Optimization Engine

The core of Promptomatix is its Optimization Engine, which handles prompt refinement. The engine generates synthetic data for training and evaluating prompts, so that candidate prompts can be checked for both effectiveness and computational efficiency. It supports two backends: structured optimization via DSPy, and a lightweight meta-prompt-based approach that adapts quickly to user constraints.

The engine’s cost-aware optimization objective balances performance and computational expense, which is crucial for scalable deployment in resource-constrained environments.
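The trade-off between performance and cost can be illustrated with a small scoring function. This is a sketch under stated assumptions: the linear penalty, the `lam` weight, and the token-count normalization are illustrative choices, since the text above does not give the exact form of the objective.

```python
def cost_aware_score(performance: float, prompt_tokens: int,
                     lam: float = 0.1, max_tokens: int = 1000) -> float:
    """Score = task performance minus a weighted, normalized length penalty.

    Assumption: cost is approximated by prompt length in tokens, capped at 1.0.
    """
    cost = min(prompt_tokens / max_tokens, 1.0)
    return performance - lam * cost


def select_prompt(candidates: list[dict], lam: float) -> dict:
    """Pick the candidate with the highest cost-aware score."""
    return max(
        candidates,
        key=lambda c: cost_aware_score(c["performance"], c["tokens"], lam),
    )


# Hypothetical candidates: the numbers are made up for illustration.
candidates = [
    {"name": "long", "performance": 0.82, "tokens": 900},
    {"name": "short", "performance": 0.80, "tokens": 200},
]

print(select_prompt(candidates, lam=0.0)["name"])
print(select_prompt(candidates, lam=0.1)["name"])
```

With `lam=0.0` the selection depends on performance alone, so the longer prompt wins; raising `lam` shifts the choice toward the shorter prompt once its small accuracy deficit is outweighed by the cost saving.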

Feedback and Yield

Promptomatix integrates user and system feedback mechanisms to continuously refine and enhance prompt effectiveness. The Feedback component captures user interactions and system logs, feeding improvements back into the optimization cycle. Meanwhile, the Yield component manages the delivery of optimized prompts and maintains detailed session states, facilitating iterative enhancements and ensuring high usability.
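The interplay of session state and feedback can be sketched as follows. The `Session` class and the `append_feedback_optimizer` function are hypothetical; the optimizer simply appends feedback as constraints, which is a placeholder for whatever refinement the Optimization Engine would actually perform.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Session:
    """Hypothetical session state: current prompt, past versions, pending feedback."""
    prompt: str
    history: list[str] = field(default_factory=list)
    feedback: list[str] = field(default_factory=list)

    def add_feedback(self, note: str) -> None:
        self.feedback.append(note)

    def refine(self, optimizer: Callable[[str, list[str]], str]) -> str:
        """Archive the current prompt, apply the optimizer, clear used feedback."""
        self.history.append(self.prompt)
        self.prompt = optimizer(self.prompt, self.feedback)
        self.feedback = []
        return self.prompt


def append_feedback_optimizer(prompt: str, feedback: list[str]) -> str:
    # Placeholder refinement: fold feedback into the prompt as constraints.
    if not feedback:
        return prompt
    return prompt + "\nConstraints: " + "; ".join(feedback)


session = Session("Summarize the text.")
session.add_feedback("use bullet points")
print(session.refine(append_feedback_optimizer))
```

Keeping earlier prompt versions in `history` is one simple way to support the iterative enhancement described above, since any refinement can be compared against or rolled back to a previous version.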

Experimental Evaluation

Promptomatix demonstrates competitive performance across multiple benchmarks, including question answering, summarization, and text generation tasks. The framework achieves performance on par with or superior to traditional methods while reducing computational overhead through its cost-aware design. For instance, on GSM8K (grade-school math word problems) and SQuAD_2 (reading-comprehension question answering), Promptomatix offers precise control over the trade-off between efficiency and output quality, outperforming manual and semi-automated approaches in both speed and resource usage.

Implications and Future Developments

The introduction of Promptomatix marks a significant advancement in the LLM domain, offering a scalable solution for automatic prompt optimization that is both accessible and efficient. Its modular design allows for future expansions, such as integrating additional backend optimizers or supporting new task types, including multimodal interactions.

Potential avenues for future research include enhancing the framework’s capabilities in handling complex multi-turn dialogues, adaptive learning in real-time environments, and extending support for domain-specific optimizations in areas like medical and legal reasoning. Moreover, improving the synthetic data generation process to further mitigate bias and enhance diversity will be a key focus.

Conclusion

Promptomatix effectively alleviates the existing challenges of manual prompt engineering by providing an automated, accessible solution. With its comprehensive evaluation and modularity, the framework provides a robust platform for deploying LLMs across various real-world applications, marking a significant step towards the widespread adoption of LLM technologies.

By making advanced prompting techniques readily available without the need for domain-specific expertise, Promptomatix broadens access to LLMs and enables wider participation and innovation across diverse fields. The work advances prompt optimization and sets the stage for integrating LLMs more seamlessly into everyday tasks and specialized applications.