- The paper demonstrated that AI Consult reduced diagnostic errors by 16% and treatment errors by 13% using a traffic-light alert system.

- It evaluated 39,849 patient visits across 15 clinics, highlighting the potential of LLM-based CDS in real-world primary care settings.

- Clinician feedback indicated improved workflow and educational benefits, though patient-reported outcomes remained largely unchanged.

AI-based Clinical Decision Support for Primary Care: A Real-World Study

Introduction

The study "AI-based Clinical Decision Support for Primary Care: A Real-World Study" evaluates the implementation of an LLM-based clinical decision support (CDS) system, AI Consult, in primary care clinics operated by Penda Health in Nairobi, Kenya. LLMs have demonstrated potential advantages over physicians on various health-related benchmarks. However, deployment of such models in live healthcare environments lags, primarily because of the model-implementation gap that separates the theoretical capabilities of AI from practical, everyday medical practice.

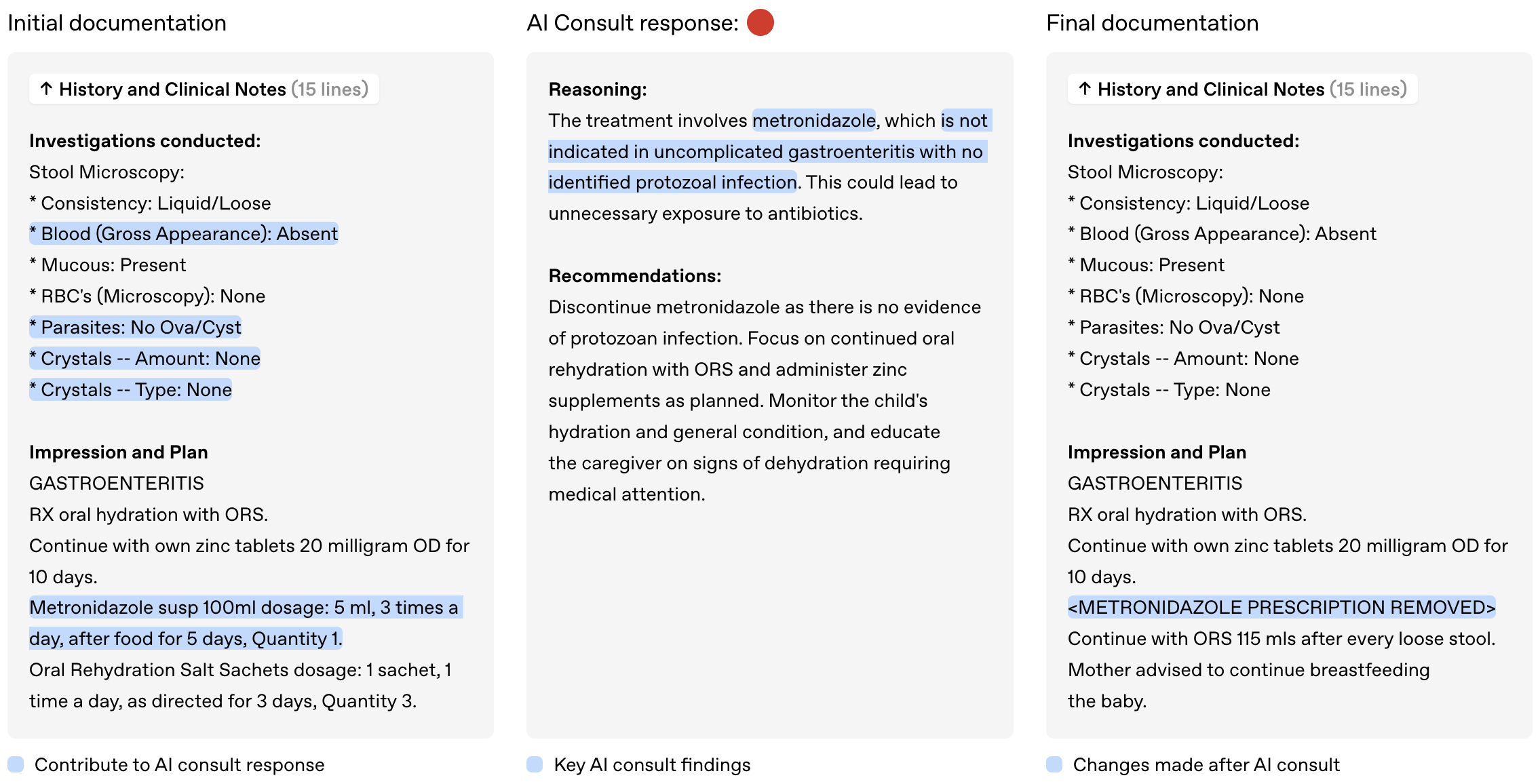

Figure 1: AI Consult is a safety net that runs in the background during a patient visit, flagging potential errors with severity levels to preserve clinician autonomy.

Motivation and Contributions

The wide spectrum of cases encountered in primary care, combined with local infrastructural challenges, offers a compelling context for deploying AI decision support tools. Penda Health, which serves over 1,000 patient visits a day across its clinics, provided the real-world setting in which AI Consult was studied.

Primary motivations for this study include demonstrating the real-world clinical impact of an LLM-based CDS, identifying the potential of such systems in reducing clinical errors, and providing a framework for the timely and thoughtful implementation of novel health AI systems.

Methods

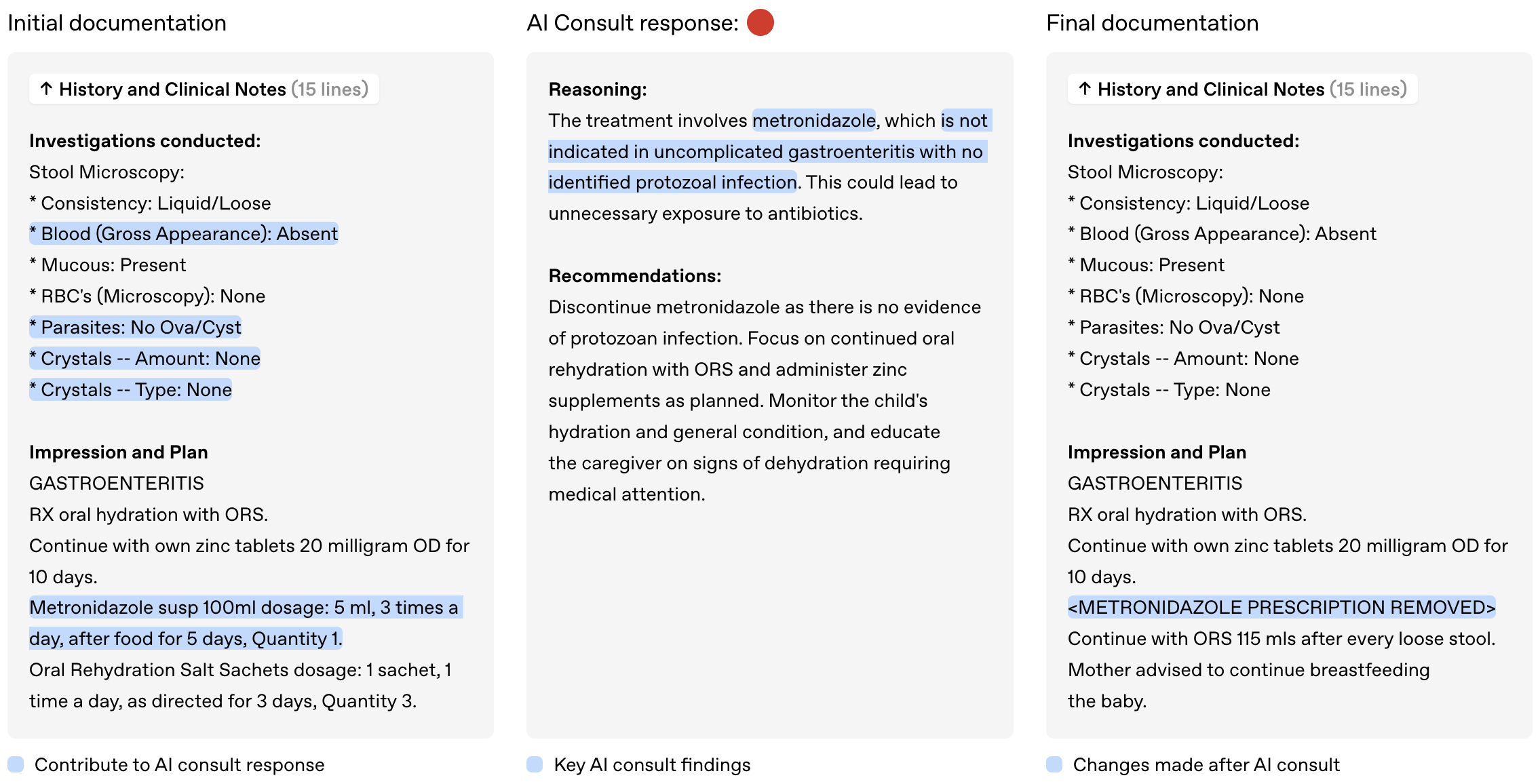

The AI Consult system was designed as a safety net that runs asynchronously in the background and reviews the clinical interaction at key decision points within the EMR system (Easy Clinic). It flags potential errors using a traffic-light system (green, yellow, and red alerts), which aims to preserve clinician autonomy while prioritizing critical risks.
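To make the traffic-light idea concrete, here is a minimal sketch of how severity-ranked alerts could be represented and prioritized. It is not the AI Consult implementation: the `Severity`, `Alert`, `overall_severity`, and `alerts_to_surface` names, the category labels, and the exact meaning given to each color are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Traffic-light levels; a higher value means a more urgent alert."""
    GREEN = 0   # assumed meaning: no concerns found
    YELLOW = 1  # assumed meaning: moderate concern, review is optional
    RED = 2     # assumed meaning: critical concern, review is expected


@dataclass
class Alert:
    category: str       # hypothetical label, e.g. "diagnosis" or "treatment"
    severity: Severity
    message: str


def overall_severity(alerts):
    """Return the most severe level across all alerts (GREEN if none)."""
    return max((a.severity for a in alerts), default=Severity.GREEN)


def alerts_to_surface(alerts):
    """Keep only non-green alerts, most severe first."""
    flagged = [a for a in alerts if a.severity > Severity.GREEN]
    return sorted(flagged, key=lambda a: a.severity, reverse=True)
```

Ordering the levels as integers means the most urgent alert can be found with a plain `max`, and green results can be filtered out so that they never interrupt the clinician.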

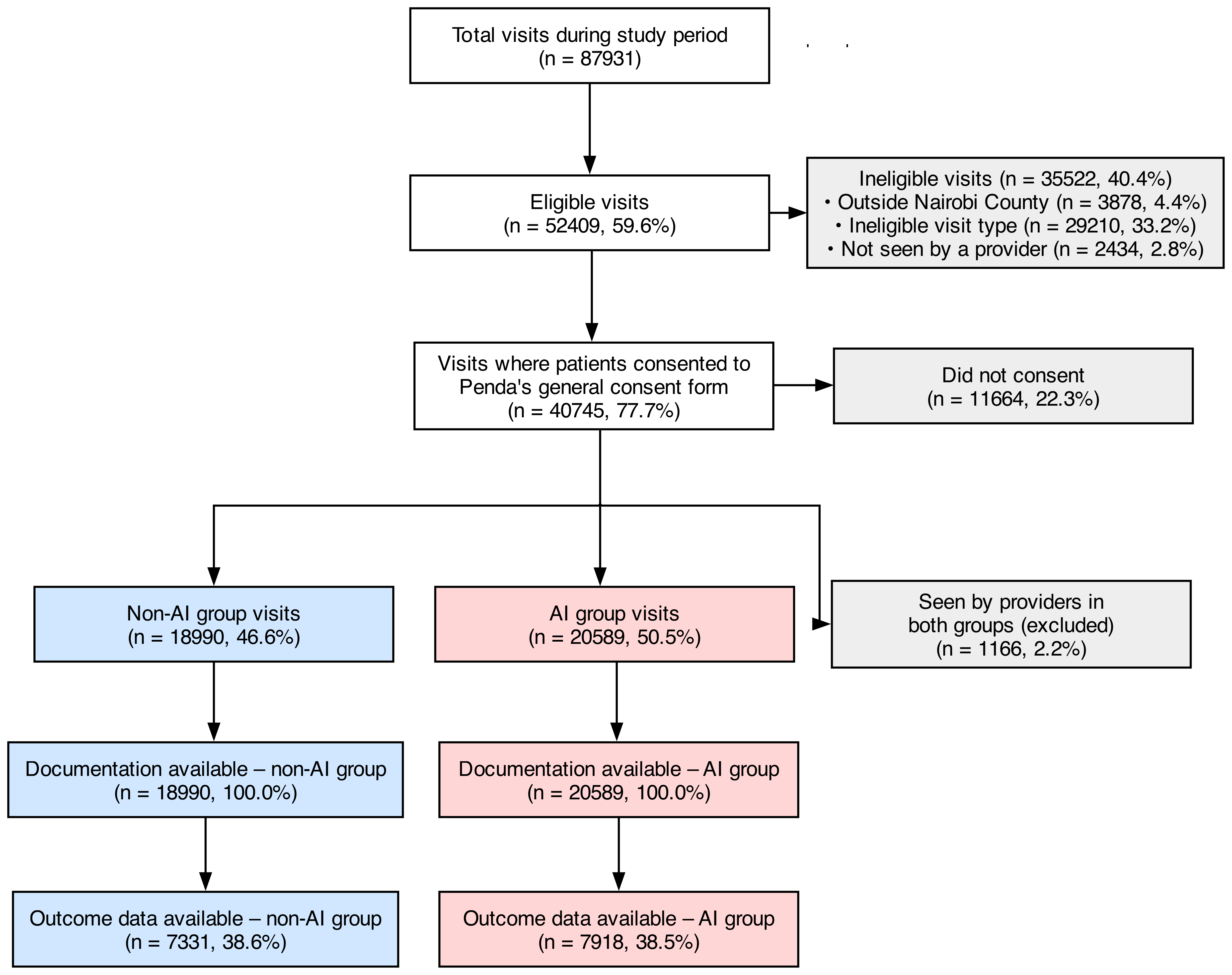

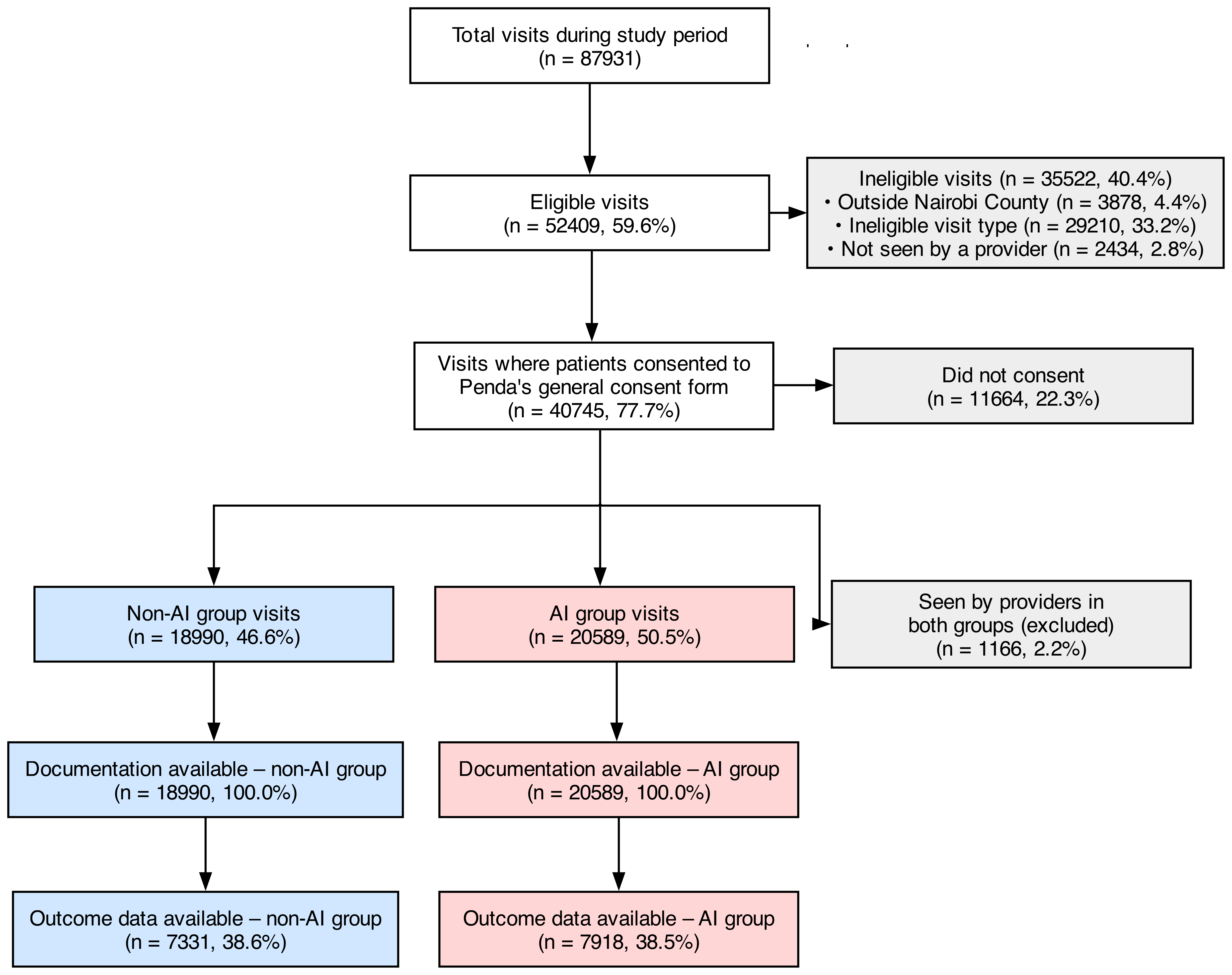

Figure 2: Flow diagram showing visit eligibility, consent, group assignment, and data availability.

A pragmatic cluster-assigned study across 15 clinics assessed the impact of AI Consult on clinical quality, use and usability, and patient-reported outcomes. The analysis covered 39,849 patient visits, comparing visits conducted with and without access to AI Consult to determine whether clinical error rates differed significantly.

Discussion

Impact on Clinical Quality

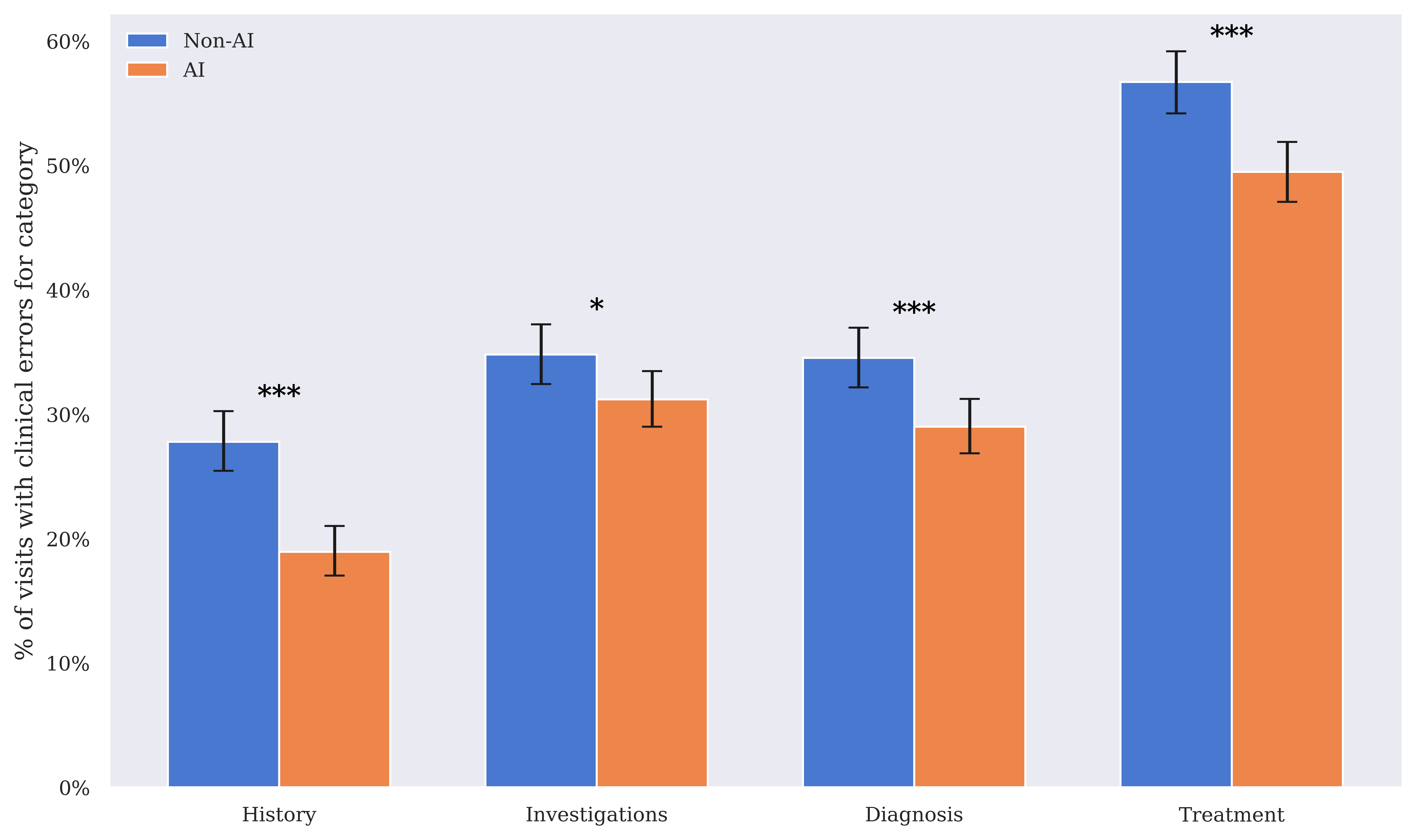

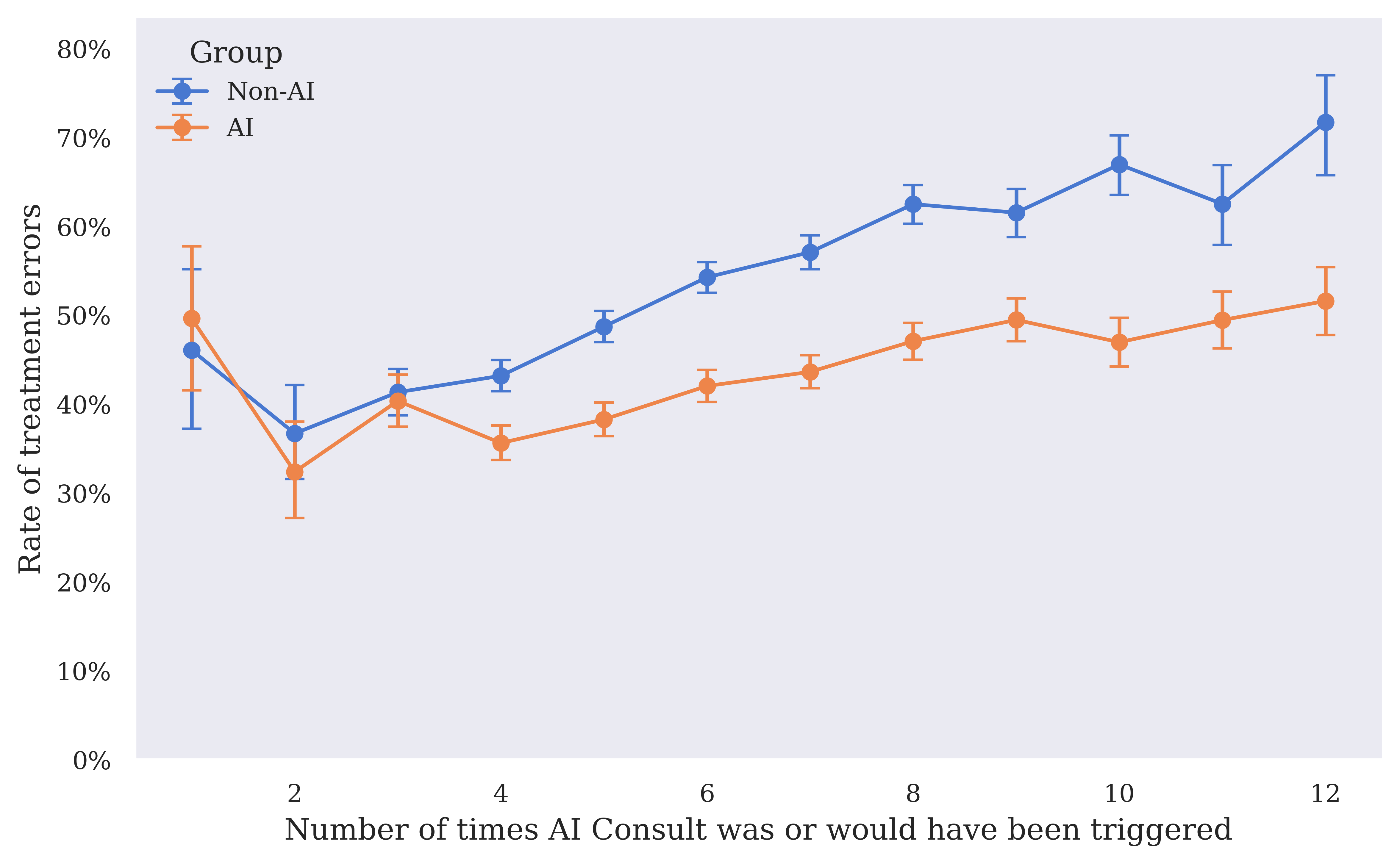

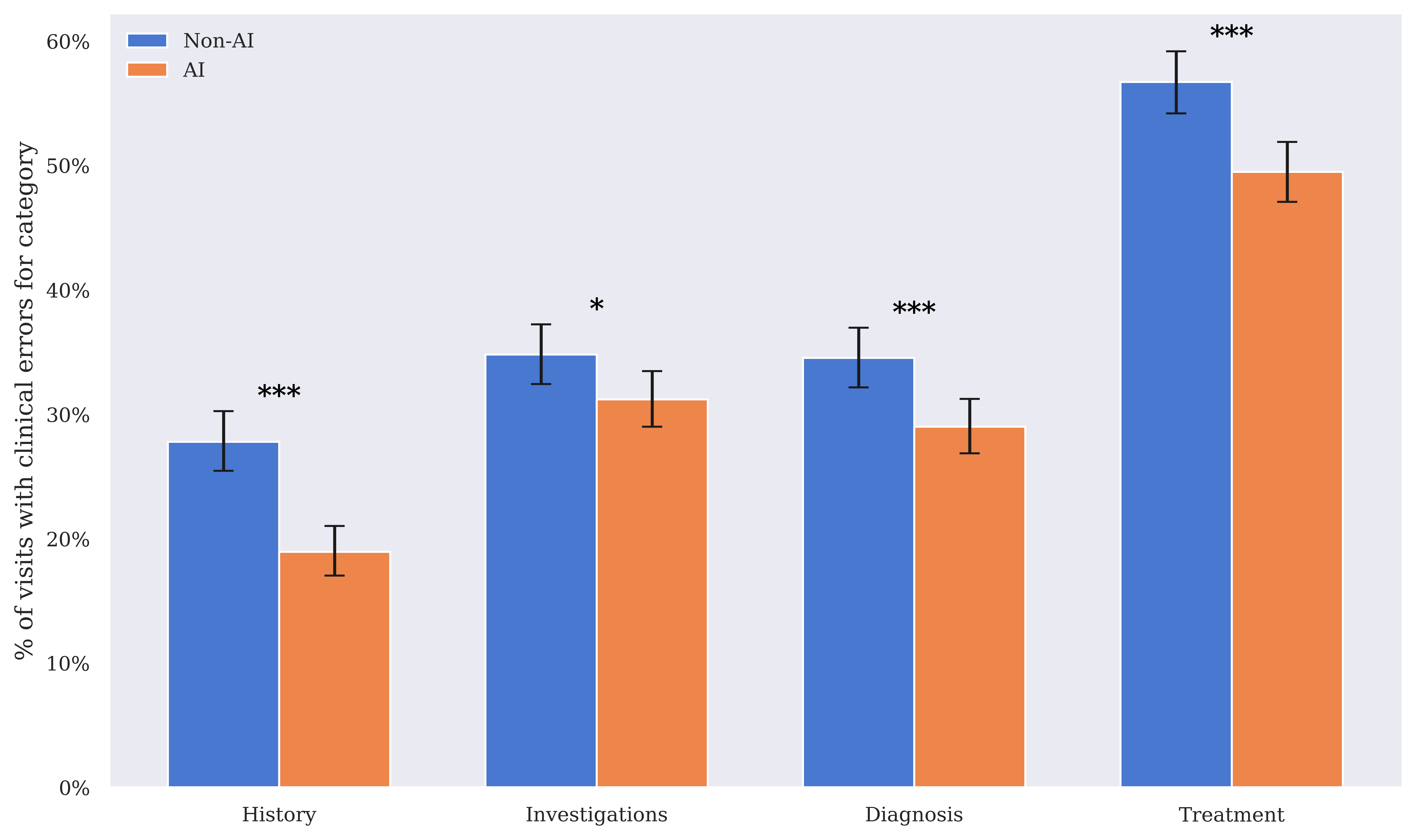

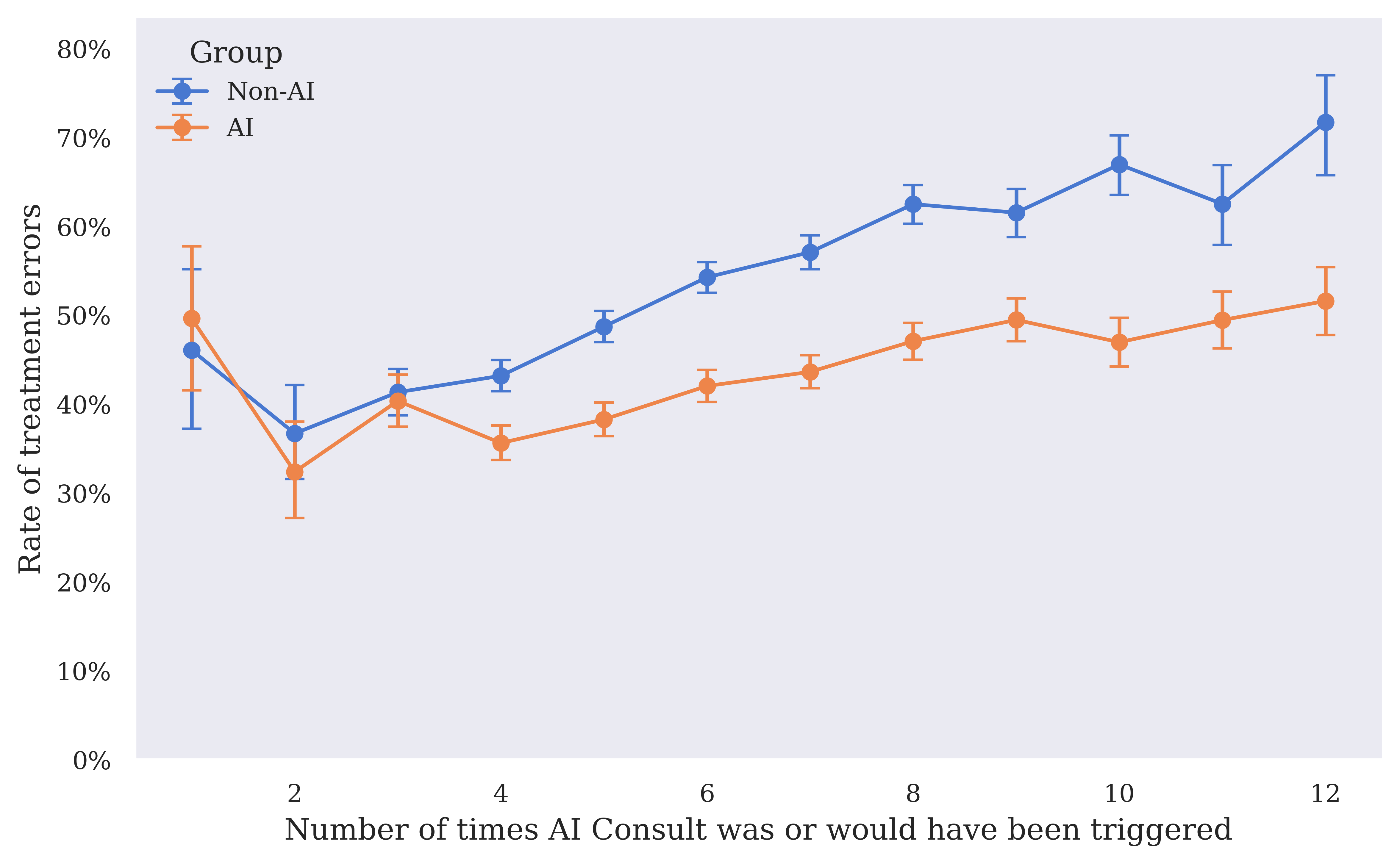

The integration of AI Consult was associated with a substantial reduction in clinical errors: 16% fewer diagnostic errors and 13% fewer treatment errors in the AI group than in the non-AI group. The benefits were especially notable in visits with high-severity ("red") alerts, suggesting that proactive intervention at decision points helps prevent clinical errors.

Figure 3: Clinical error rates for history-taking, investigations, diagnosis, and treatment, comparing the AI group to the non-AI group. Error bars show 95% Wilson confidence intervals.
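For readers unfamiliar with the statistics named in the caption, the sketch below shows one standard way to compute a Wilson score interval for an error rate, along with the relative reduction between two rates. It assumes the reported percentages are relative reductions; the function names and `z=1.96` default are choices made for this example, not details taken from the study.

```python
import math


def wilson_interval(errors, visits, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if visits <= 0:
        raise ValueError("visits must be positive")
    p = errors / visits
    denom = 1 + z**2 / visits
    center = (p + z**2 / (2 * visits)) / denom
    half = z * math.sqrt(p * (1 - p) / visits + z**2 / (4 * visits**2)) / denom
    return center - half, center + half


def relative_reduction(rate_ai, rate_non_ai):
    """Fraction by which the AI-group rate is lower than the non-AI rate."""
    if rate_non_ai <= 0:
        raise ValueError("rate_non_ai must be positive")
    return 1 - rate_ai / rate_non_ai
```

As an illustration with invented rates (not the study's figures), `relative_reduction(0.21, 0.25)` evaluates to about 0.16, meaning a 16% relative reduction.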

The deployment also appeared to encourage learning: AI-assisted clinicians became better at addressing potential errors even before receiving alerts, which points to an educational effect of the CDS design. The tool did not, however, significantly affect patient-reported outcomes, which may reflect the study's limited time frame and the subjective nature of patient-reported metrics.

Use and Usability

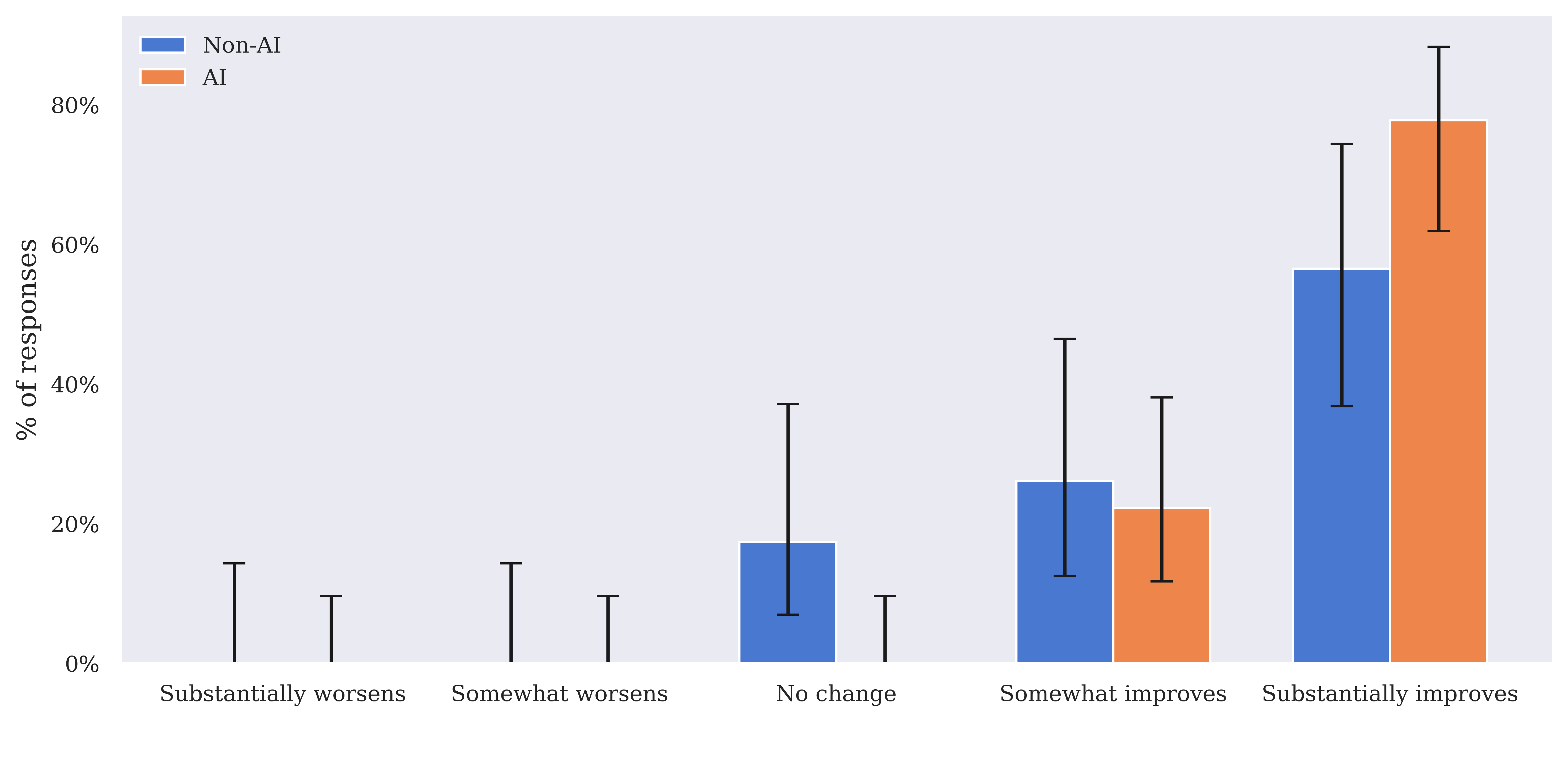

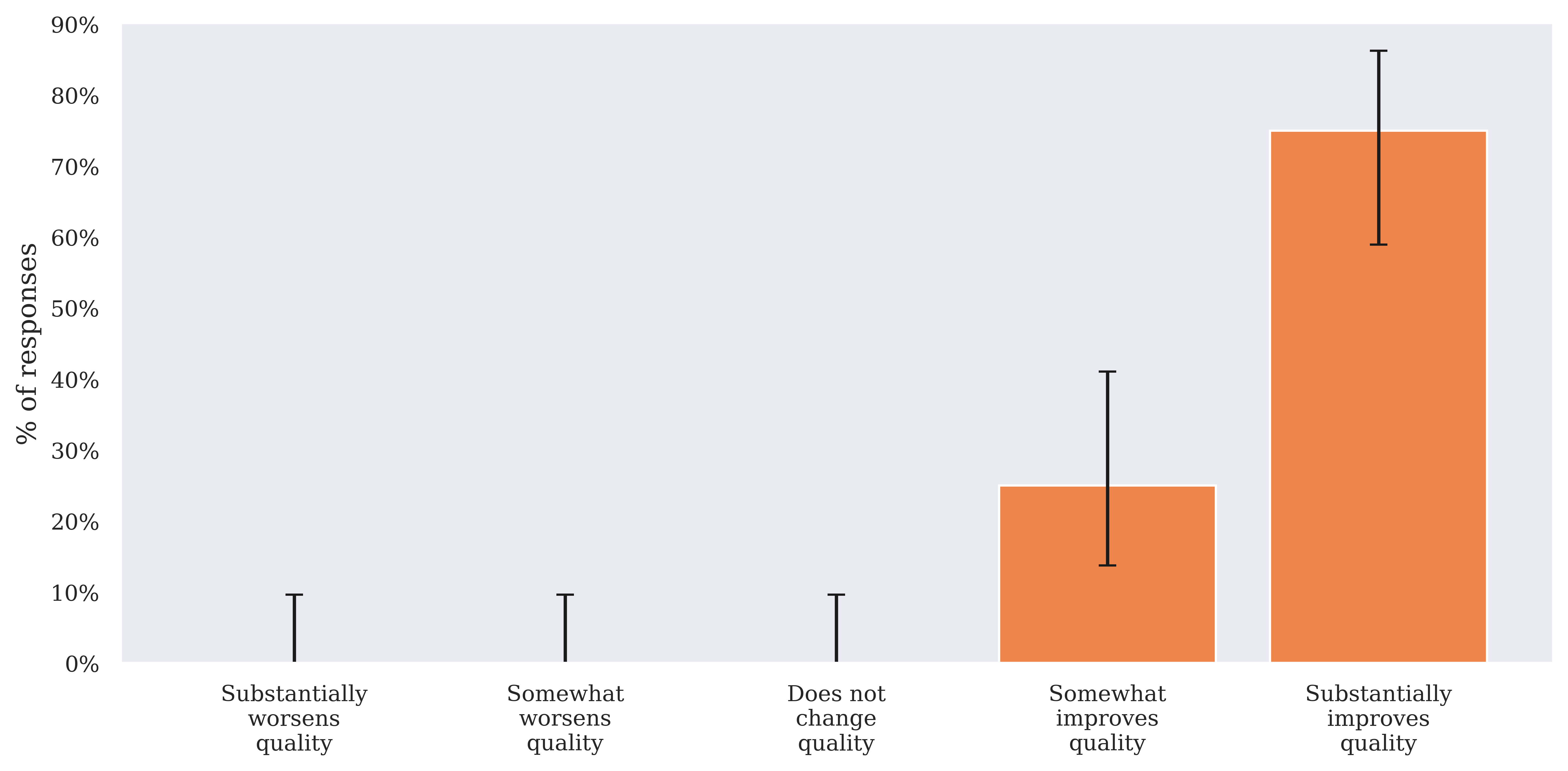

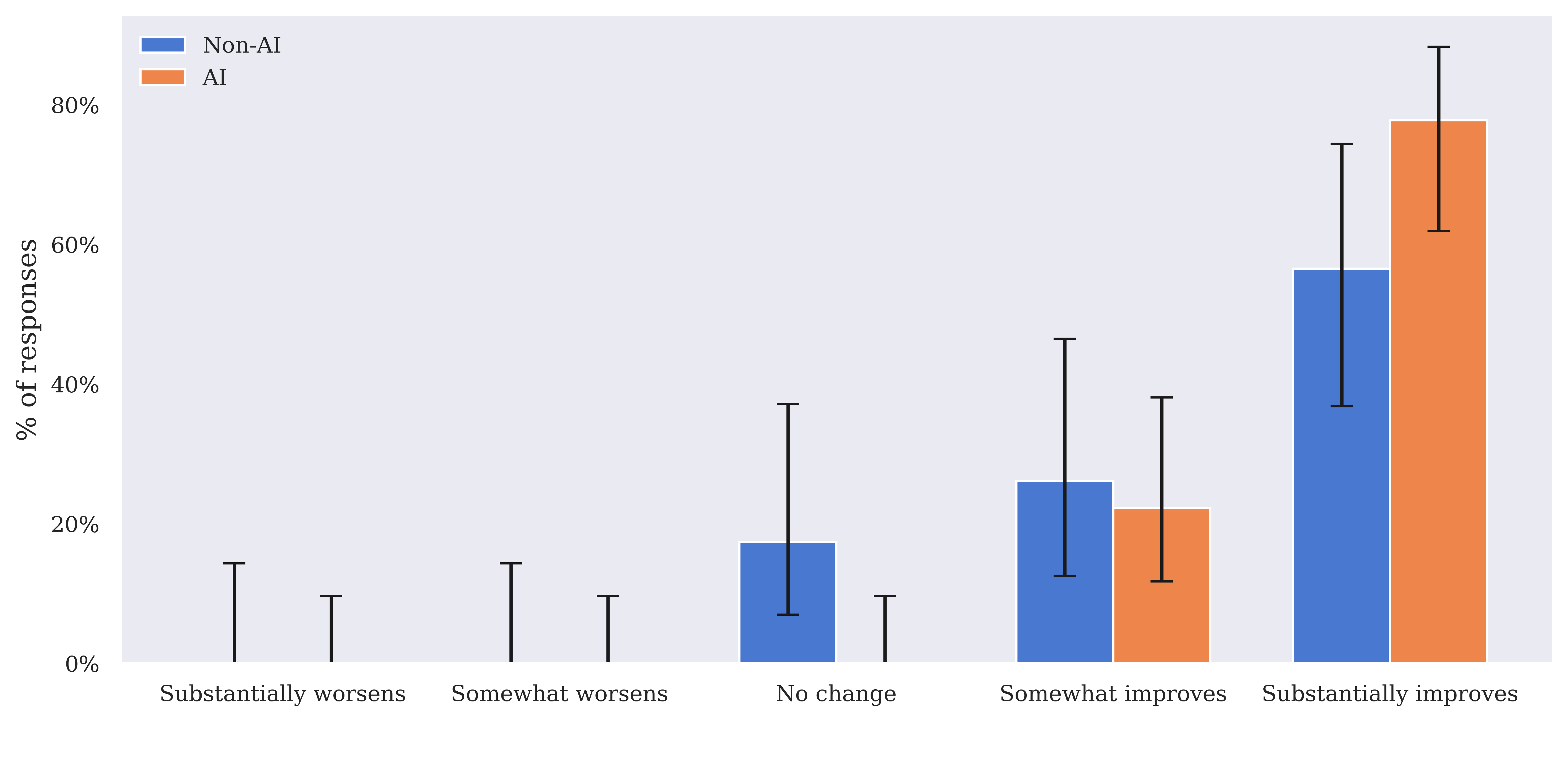

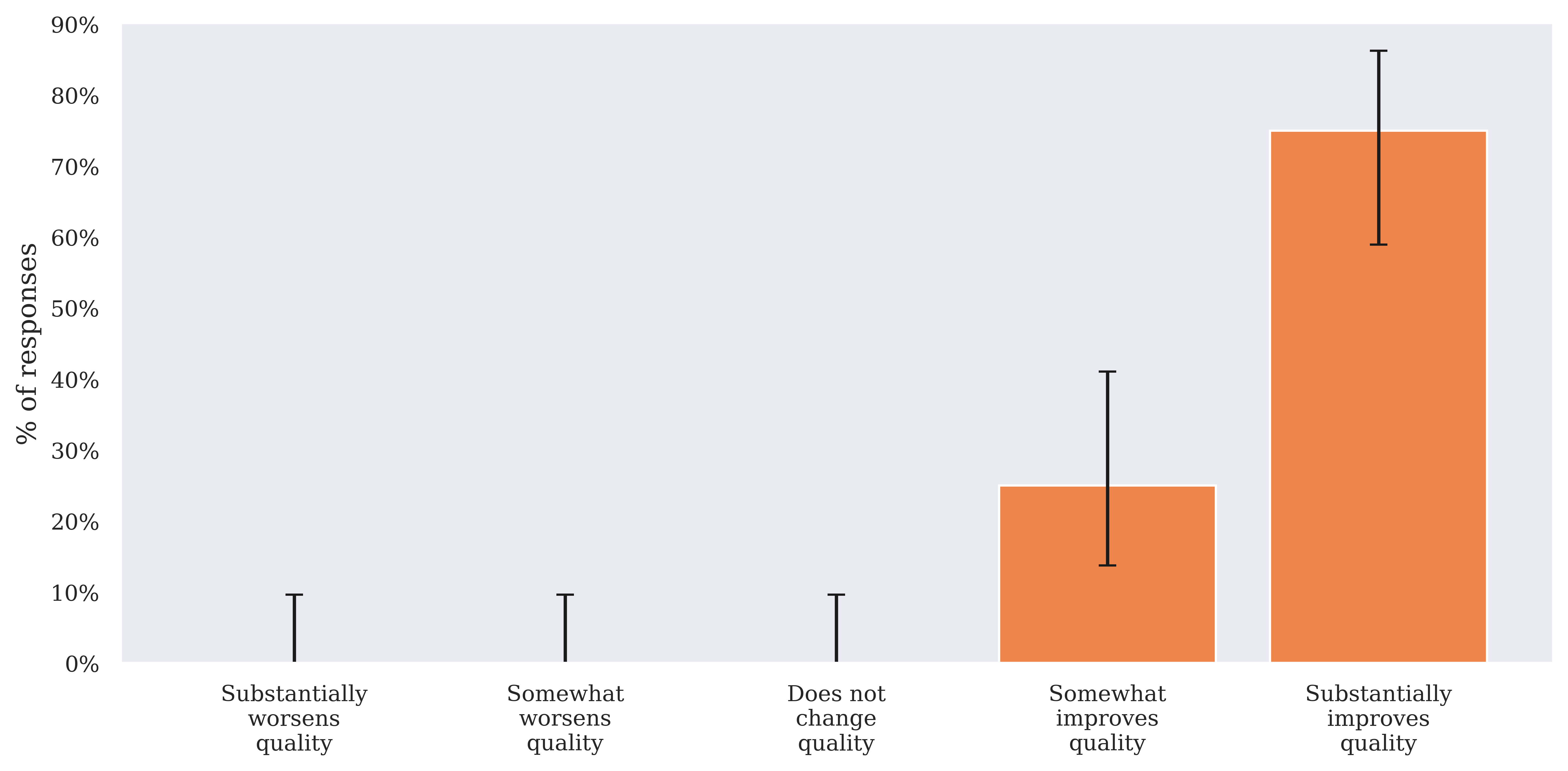

Clinician feedback pointed to improvements in care quality and workflow efficiency. In surveys, clinicians reported that AI Consult enhanced care quality, and many described it as an effective learning tool.

Figure 4: Impact of the EMR (including AI Consult, if present) on quality of care in both the AI and the non-AI group.

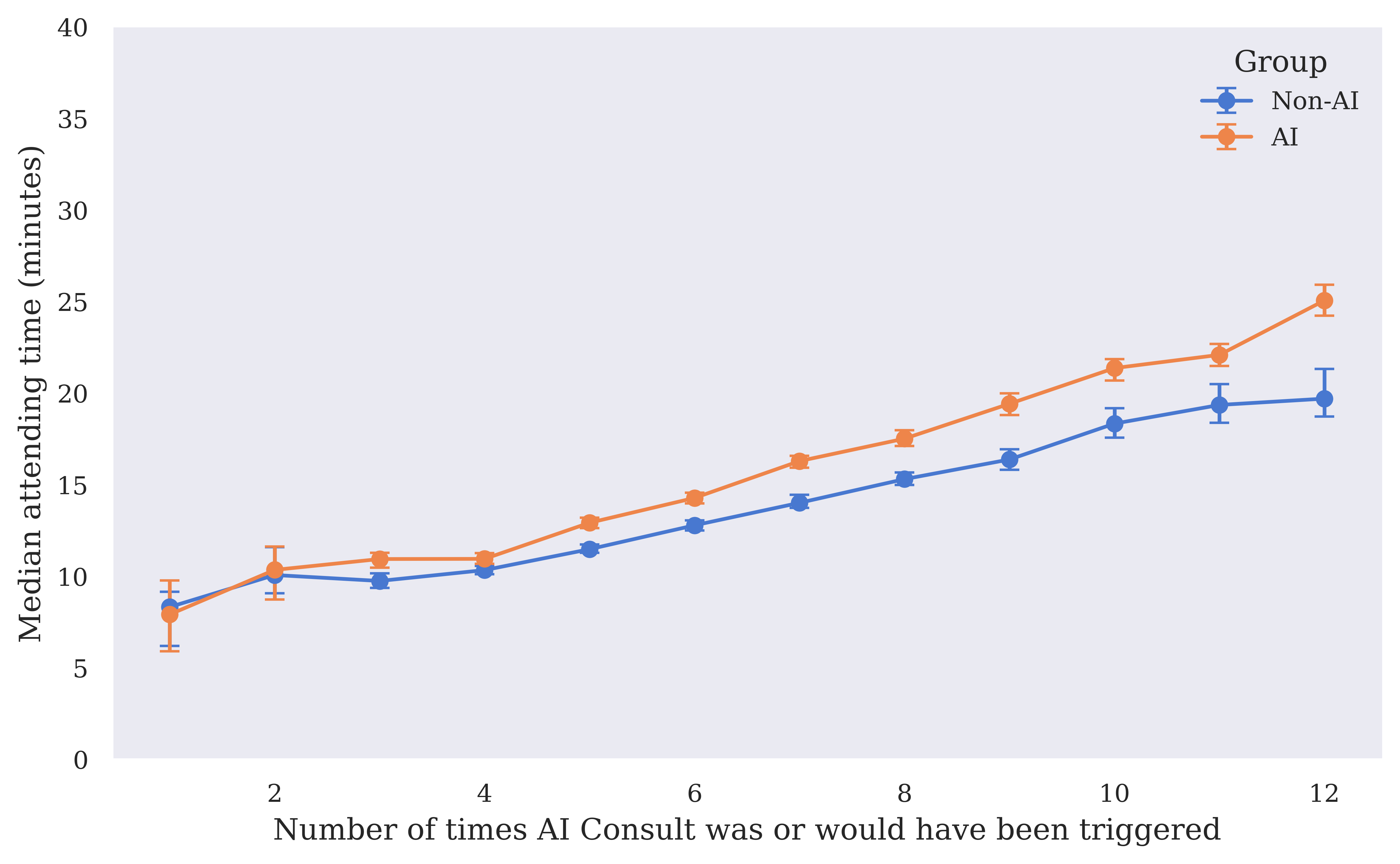

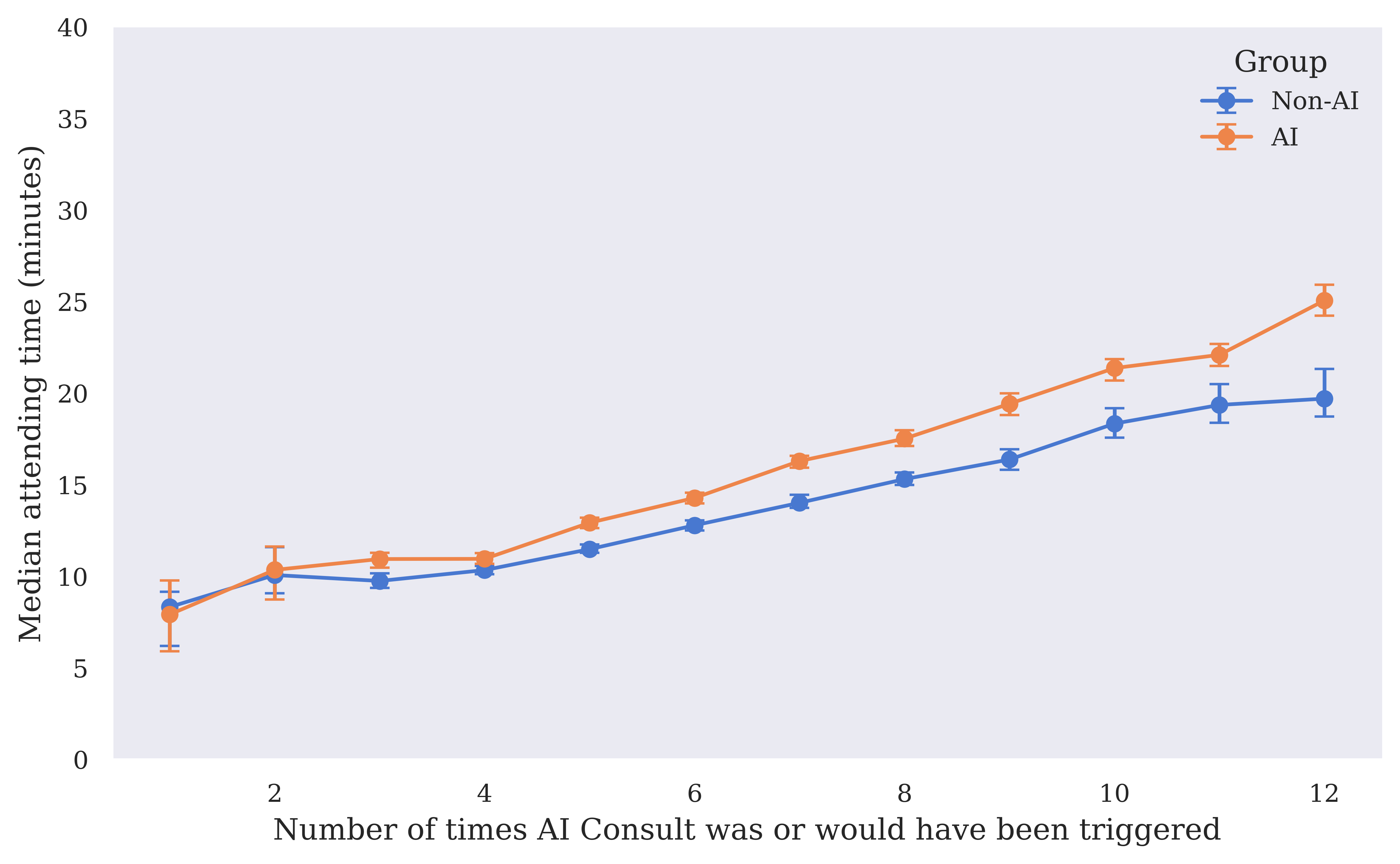

The study noted an increase in median clinician attending time in the AI group for visits with frequent AI Consult triggers, suggesting that clinicians spent additional time reviewing AI feedback, which may in turn improve care decisions.

Figure 5: Median clinician attending time by number of AI Consult triggers in the non-AI and AI groups. 95% CIs calculated with 1000 bootstrap samples. Includes only visits with 12 or fewer AI Consult calls.
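The caption's bootstrap intervals can be illustrated with a short sketch. It assumes a percentile bootstrap, which is one common variant; the source does not say which bootstrap method the authors used. The `bootstrap_median_ci` name and its parameters are hypothetical.

```python
import random
import statistics


def bootstrap_median_ci(values, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the median."""
    if not values:
        raise ValueError("values must be non-empty")
    rng = random.Random(seed)  # fixed seed keeps the result reproducible
    # Resample with replacement n_boot times and record each median.
    medians = sorted(
        statistics.median(rng.choices(values, k=len(values)))
        for _ in range(n_boot)
    )
    lo_idx = int((alpha / 2) * n_boot)
    hi_idx = min(n_boot - 1, int((1 - alpha / 2) * n_boot))
    return medians[lo_idx], medians[hi_idx]
```

Calling this once per trigger count and per group, on the list of attending times for those visits, would yield intervals comparable in kind to the error bars described in the caption.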

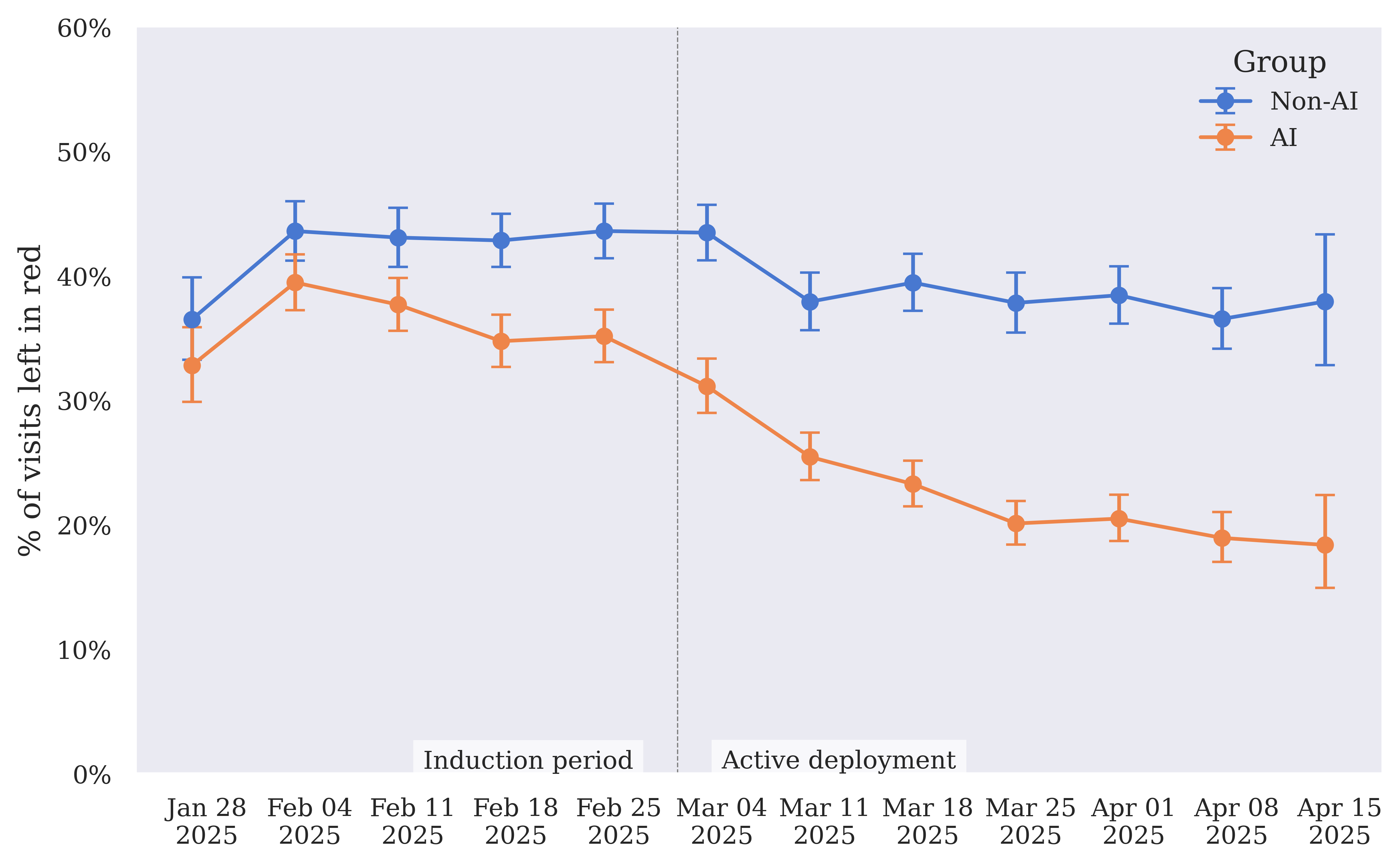

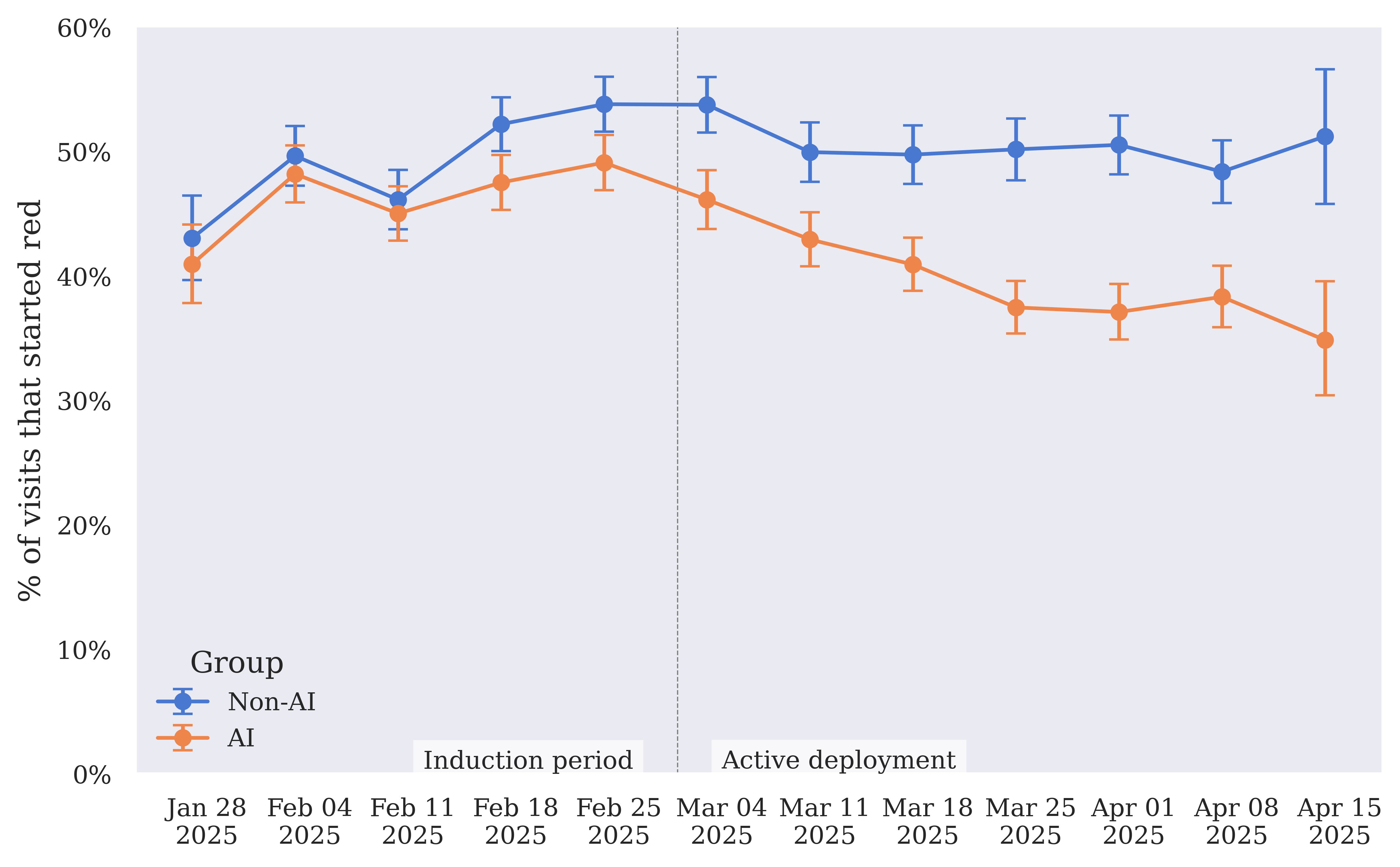

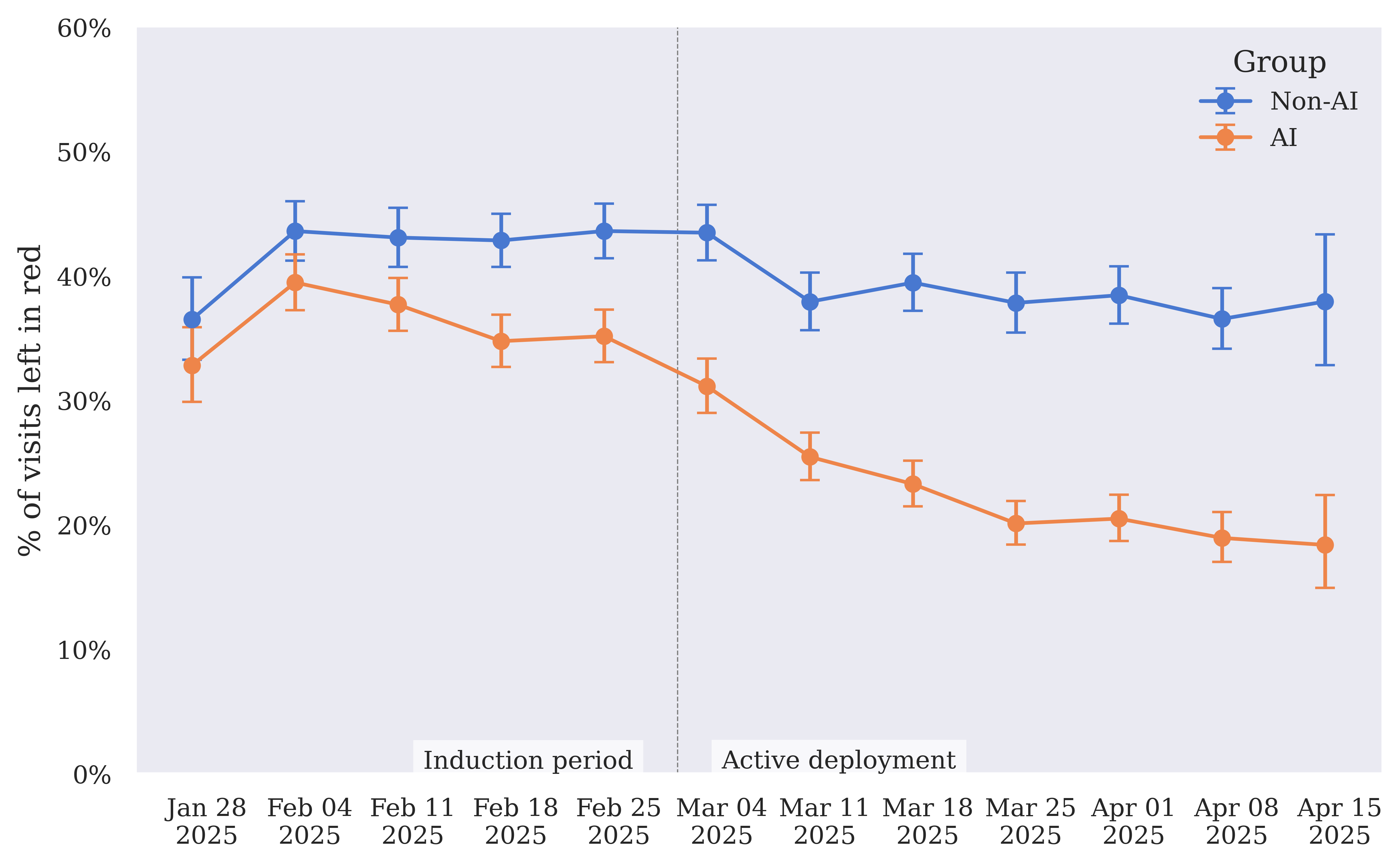

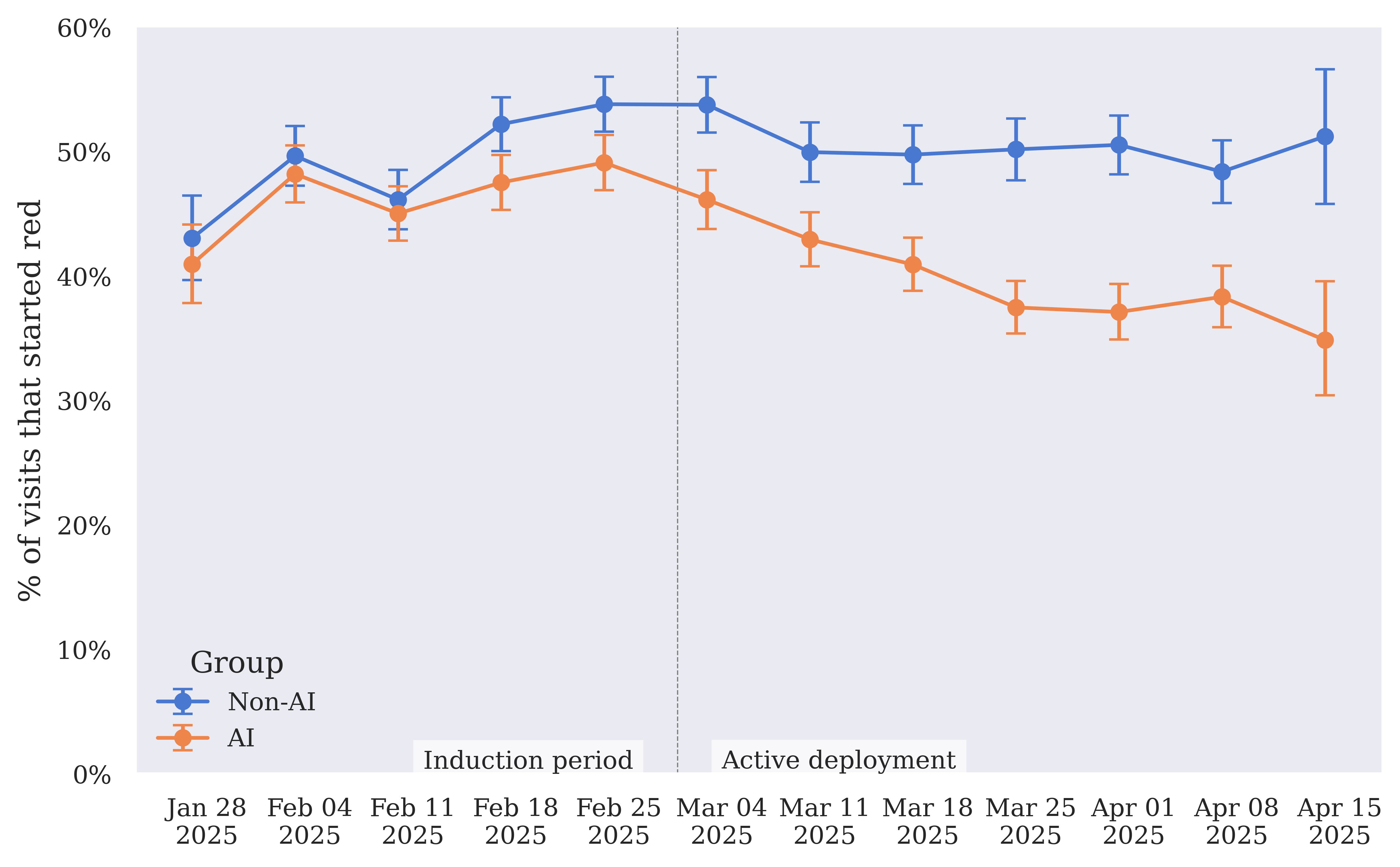

Figure 6: "Left in red" rate, the rate of visits where the final call for any of the AI Consult categories is red, for the AI and non-AI groups over time.
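The "left in red" metric in the caption can be expressed as a small calculation. The sketch below assumes each visit is stored as a mapping from category name to the color of its final call; that data layout and the `left_in_red_rate` name are hypothetical, chosen only to illustrate the definition.

```python
def left_in_red_rate(visits):
    """Share of visits whose final call in any category is red.

    `visits` is a list of dicts mapping category name -> final call color,
    a hypothetical representation chosen for this sketch.
    """
    if not visits:
        raise ValueError("visits must be non-empty")
    red_visits = sum(
        1 for final_calls in visits if "red" in final_calls.values()
    )
    return red_visits / len(visits)
```

Computing this separately for each time window and each group would give the kind of trend lines that the figure compares.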

Additional feedback emphasized challenges related to software localization and alert fatigue, highlighting areas for further improvement.

Patient Safety and Outcomes

The study did not find statistically significant differences in patient-reported outcomes between AI and non-AI groups, but it did observe that the rate of unplanned follow-up visits to other clinics was slightly lower in the AI group. Importantly, no incidents were identified where AI Consult actively caused harm, reinforcing its potential as a safe addition to clinical workflows.

Conclusion

This study provides evidence that LLM-based CDS, when integrated effectively, can help clinicians reduce diagnostic and treatment errors in real time. The research underscores the need for capable models, clinically aligned implementations, and comprehensive deployment strategies to bridge the model-implementation gap in healthcare. The findings make a compelling case for the further development and integration of AI systems like AI Consult in real-world health systems, potentially paving the way toward more reliable and consistent primary care. Further longitudinal studies, particularly those powered to assess patient-reported outcomes, will be necessary to evaluate the broader clinical impact of these AI tools.